This post is a not-so-secret analogy for the AI alignment problem. Via a fictional dialogue, Eliezer explores and counters common questions about the Rocket Alignment Problem as approached by the Mathematics of Intentional Rocketry Institute.

MIRI researchers will tell you they're worried that "right now, nobody can tell you how to point your rocket’s nose such that it goes to the moon, nor indeed any prespecified celestial destination."


Recent Discussion

The history of science has tons of examples of the same thing being discovered multiple times independently; Wikipedia has a whole list of examples. If your goal in studying the history of science is to extract the predictable/overdetermined component of humanity's trajectory, then it makes sense to focus on such examples.

But if your goal is to achieve high counterfactual impact in your own research, then you should probably draw inspiration from the opposite: "singular" discoveries, i.e. discoveries which nobody else was anywhere close to figuring out. After all, if someone else would have figured it out shortly after anyways, then the discovery probably wasn't very counterfactually impactful.

Alas, nobody seems to have made a list of highly counterfactual scientific discoveries, to complement Wikipedia's list of multiple discoveries.

To...

Yes, beautiful example! Van Leeuwenhoek was the one-man ASML of the 17th century. In this case, we actually have evidence of the counterfactual impact, as other lensmakers trailed van Leeuwenhoek by many decades.

It's plausible that high-precision measurement and fabrication are the key bottleneck in most technological and scientific progress; it's difficult to oversell the importance of van Leeuwenhoek.

...Antonie van Leeuwenhoek made more than 500 optical lenses. He also created at least 25 single-lens microscopes, of differing types, of which only nine

Warning: This post might be depressing to read for everyone except trans women. Gender identity and suicide are discussed. This is all highly speculative. I know near-zero about biology, chemistry, or physiology. I do not recommend anyone take hormones to try to increase their intelligence; mood & identity are more important.

Why are trans women so intellectually successful? They seem to be overrepresented 5-100x in, e.g., cybersecurity Twitter, mathy AI alignment, non-scam crypto Twitter, math PhD programs, etc.

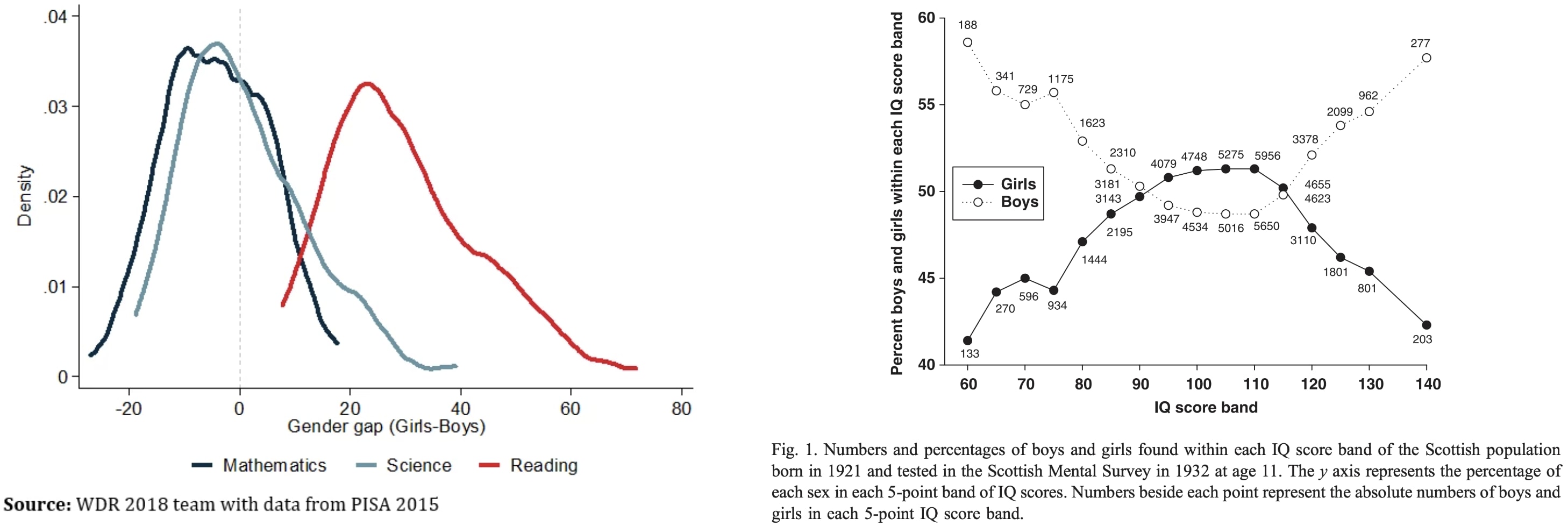

To explain this, let's first ask: Why aren't males way smarter than females on average? Males have ~13% higher cortical neuron density and 11% heavier brains (implying more area?). One might expect males to have mean IQ far above females then, but instead the means and medians are similar:

My theory...

I don't understand why you need to invoke testosterone. The transgender brain is special; for example, transgender women have immunity to visual illusions. Anecdotally, I have friends with gender identity problems who do not transition because it's costly and they don't have it that hard; they are STEM-level smart and they are not susceptible to visual illusions. So, assuming that this phenomenon exists (I don't quite believe your Twitter statistics), it's likely explainable by trans women's innate brain structure.

The other weirdness in your hypothesi...

Very interesting. It sounds like your "third person view from nowhere" vs the "first person view from somewhere" is very similar to something I was thinking about recently. I called them "objectively distinct situations" in contrast with "subjectively distinct situations". My view is that most of the anthropic arguments that "feel wrong" to me are built on trying to make me assign equal probability to all subjectively distinct scenarios, rather than objective ones. E.g., a replication machine makes it so there are two of me, then "I" could be either of them,...

I took the Reading the Mind in the Eyes Test today. I got 27/36. Jessica Livingston got 36/36.

Reading expressions is almost mind reading. Practicing reading expressions should be easy with the right software. All you need is software that shows a random photo from a large database, asks the user to guess what it is, and then informs the user what the correct answer is. I felt myself getting noticeably better just from the 36 images on the test.

Short standardized tests exist to test this skill, but is there good software for training it? It needs to have lots of examples, so the user learns to recognize expressions instead of overfitting on specific pictures.

Paul Ekman has a product, but I don't know how good it is.
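The training loop described above (show a random photo, ask for a guess, reveal the correct answer) could be sketched roughly as follows. This is only a minimal illustration under stated assumptions: `LabeledPhoto`, `make_question`, `grade`, and `run_session` are hypothetical names, the photo paths and labels stand in for a real labeled database, and actually displaying the image is left out.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledPhoto:
    path: str   # hypothetical path to an image file
    label: str  # the expression the photo is labeled with


def make_question(photos, rng, n_choices=4):
    """Pick a random photo and build a shuffled list of answer choices."""
    target = rng.choice(photos)
    # Distractors are the other labels present in the database.
    distractors = sorted({p.label for p in photos} - {target.label})
    picked = rng.sample(distractors, min(n_choices - 1, len(distractors)))
    choices = picked + [target.label]
    rng.shuffle(choices)
    return target, choices


def grade(target, guess):
    """Compare the guess to the label and build the feedback message."""
    correct = guess.strip().lower() == target.label.lower()
    if correct:
        return True, "Correct!"
    return False, f"Incorrect; the answer was {target.label!r}."


def run_session(photos, answer_fn, rounds, seed=None):
    """Run `rounds` questions; `answer_fn(target, choices)` supplies each guess."""
    rng = random.Random(seed)
    score = 0
    for _ in range(rounds):
        target, choices = make_question(photos, rng)
        guess = answer_fn(target, choices)
        correct, feedback = grade(target, guess)
        print(feedback)  # immediate feedback after every guess
        score += correct
    return score
```

For interactive use, `answer_fn` could be a small function that prints the choices and returns `input()`. Sampling from a large pool with a fresh random draw each round is what addresses the overfitting concern: the user rarely sees the same picture twice.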

I (to my own surprise) got an "above average" score when I took this test a few years back, which I attribute mostly to the lack of emotional and circumstantial 'noise' in the images. I don't think being able to tell what is being emoted by a professional actor told to display exactly one (1) emotion, with no mediating factors, has much connection with being able to read actual people.

(. . . though a level-2 version with tags like "excited but hesitant" or "proud and angry" or "cheerful; unrelatedly, lowkey seasick" could actually be extremely useful, now I think on it.)

A friend has spent the last three years hounding me about seed oils. Every time I thought I was safe, he’d wait a couple months and renew his attack:

“When are you going to write about seed oils?”

“Did you know that seed oils are why there’s so much {obesity, heart disease, diabetes, inflammation, cancer, dementia}?”

“Why did you write about {meth, the death penalty, consciousness, nukes, ethylene, abortion, AI, aliens, colonoscopies, Tunnel Man, Bourdieu, Assange} when you could have written about seed oils?”

“Isn’t it time to quit your silly navel-gazing and use your weird obsessive personality to make a dent in the world—by writing about seed oils?”

He’d often send screenshots of people reminding each other that Corn Oil is Murder and that it’s critical that we overturn our lives...

Yeah, I'd be willing to bet that too.

Note: It seems like great essays should go here and be fed through the standard LessWrong algorithm. There is possibly a copyright issue here, but we aren't making any money off it either. What follows is a full copy of "This is Water" by David Foster Wallace, his 2005 commencement speech to the graduating class at Kenyon College.

Greetings parents and congratulations to Kenyon’s graduating class of 2005. There are these two young fish swimming along and they happen to meet an older fish swimming the other way, who nods at them and says “Morning, boys. How’s the water?” And the two young fish swim on for a bit, and then eventually one of them looks over at the other and goes “What the hell is water?”

This is...

I think for good emotions the feel-it-completely thing happens naturally anyway.

This post brings together various questions about the college application process, as well as practical considerations of where to apply and go. We are seeing some encouraging developments, but mostly the situation remains rather terrible for all concerned.

Application Strategy and Difficulty

Paul Graham: Colleges that weren’t hard to get into when I was in HS are hard to get into now. The population has increased by 43%, but competition for elite colleges seems to have increased more. I think the reason is that there are more smart kids. If so that’s fortunate for America.

Are college applications getting more competitive over time?

Yes and no.

- The population size is up, but the cohort size is roughly the same.

- The standard ‘effort level’ of putting in work and sacrificing one’s childhood and gaming

Indeed, from what I see there is consensus that academic standards on elite campuses are dramatically down; this likely has a lot to do with the need to sustain holistic admissions.

As in, the academic requirements, the ‘being smarter’ requirement, has actually weakened substantially. You need to be less smart, because the process does not care so much if you are smart, past a minimum. The process cares about… other things.

So, the signalling value of their degrees should be decreasing accordingly, unless one mainly intends to take advantage of the proces...

Basically just make some and then let's vote on it.

I personally am not worried about current music generation tech causing harm (and probably think that it's healthy to appreciate current tech isn't that dangerous so we can notice when we stop thinking that).

I wrote this song about Bryan Caplan's My Beautiful Bubble

It seems to me worth trying to slow down AI development to steer successfully around the shoals of extinction and out to utopia.

But I was thinking lately: even if I didn’t think there was any chance of extinction risk, it might still be worth prioritizing a lot of care over moving at maximal speed. Because there are many different possible AI futures, and I think there’s a good chance that the initial direction affects the long term path, and different long term paths go to different places. The systems we build now will shape the next systems, and so forth. If the first human-level-ish AI is brain emulations, I expect a quite different sequence of events than if it is GPT-ish.

People genuinely pushing for AI speed over care (rather than just feeling impotent) apparently think there is negligible risk of bad outcomes, but also they are asking to take the first future to which there is a path. Yet possible futures are a large space, and arguably we are in a rare plateau where we could climb very different hills, and get to much better futures.

What is the mechanism, specifically, by which going slower will yield more "care"? What is the mechanism by which "care" will yield a better outcome? I see this model asserted pretty often, but no one ever spells out the details.

I've studied the history of technological development in some depth, and I haven't seen anything to convince me that there's a tradeoff between development speed on the one hand, and good outcomes on the other.