Frustrated by claims that "enlightenment" and similar meditative/introspective practices can't be explained and that you only understand if you experience them, Kaj set out to write his own detailed gears-level, non-mysterious, non-"woo" explanation of how meditation, etc., work in the same way you might explain the operation of an internal combustion engine.

Popular Comments

Recent Discussion

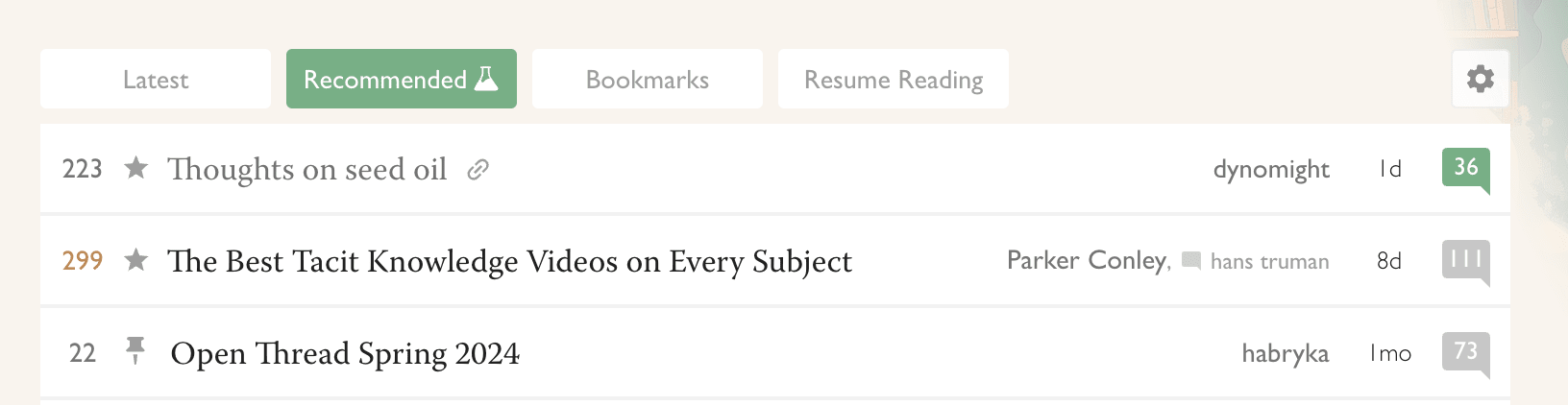

For the last month, @RobertM and I have been exploring the possible use of recommender systems on LessWrong. Today we launched our first site-wide experiment in that direction.

(In the course of our efforts, we also hit upon a frontpage refactor that we reckon is pretty good: tabs instead of a clutter of different sections. For now, it's only for logged-in users. Logged-out users see the "Latest" tab, which is the same-as-usual list of posts.)

Why algorithmic recommendations?

A core value of LessWrong is to be timeless and not news-driven. However, the central algorithm by which attention allocation happens on the site is the Hacker News algorithm[1], which basically only shows you things that were posted recently, and creates a strong incentive for discussion to always be...
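To make "basically only shows you things that were posted recently" concrete, here is a minimal sketch of the widely cited, simplified form of the Hacker News ranking formula: score = (points − 1) / (age_hours + 2)^gravity, with gravity 1.8. This is an assumption for illustration. The post doesn't spell out LessWrong's exact variant, and the real HN ranking includes further adjustments. The `Post` class and helper names are hypothetical.

```python
from dataclasses import dataclass
from typing import List

# Gravity exponent from the commonly cited description of HN ranking;
# an assumption here, not LessWrong's actual parameter.
GRAVITY = 1.8


@dataclass
class Post:
    title: str
    points: int       # net votes/karma
    age_hours: float  # time since posting


def hn_score(post: Post, gravity: float = GRAVITY) -> float:
    """Simplified Hacker News-style score: votes divided by a power of age."""
    # Subtracting 1 discounts the submitter's own vote; the +2 keeps
    # brand-new posts from being divided by a near-zero age.
    return (post.points - 1) / (post.age_hours + 2) ** gravity


def rank(posts: List[Post], gravity: float = GRAVITY) -> List[Post]:
    """Order posts by their age-decayed score, highest first."""
    return sorted(posts, key=lambda p: hn_score(p, gravity), reverse=True)


if __name__ == "__main__":
    classic = Post("Week-old classic", points=300, age_hours=168)
    fresh = Post("Three-hour-old post", points=20, age_hours=3)
    for post in rank([classic, fresh]):
        print(f"{hn_score(post):.3f}  {post.title}")
```

Under these assumptions a week-old post with 300 points still ranks below a three-hour-old post with 20 points, because the age term grows polynomially while votes accumulate slowly.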

I am sceptical of recommender systems; I think they are kind of bound to end up in self-reinforcing loops. I'd be happier seeing a more transparent system. We have tags, upvotes, the works, so you could have something like a series of "suggested searches" (e.g. the most common combinations of tags you've visited) that a user has fast access to while also seeing precisely what it is they're clicking on.

That said, I do trust this website of all things to acknowledge if things aren't going to plan and revert. If we fail to align this one small AI to our values, well, that's a valuable lesson.
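A minimal sketch of the "suggested searches" idea, assuming each visited post is represented as a set of tag names. The tag names and the `suggested_searches` helper are hypothetical, not an existing LessWrong feature. It counts how often each pair of tags co-occurs in a user's history and surfaces the most common pairs.

```python
from collections import Counter
from itertools import combinations
from typing import Iterable, List, Set, Tuple


def suggested_searches(
    visited_tag_sets: Iterable[Set[str]],
    k: int = 3,
    combo_size: int = 2,
) -> List[Tuple[str, ...]]:
    """Return the k most common tag combinations across visited posts."""
    counts: Counter = Counter()
    for tags in visited_tag_sets:
        # Sorting makes ("AI", "Alignment") and ("Alignment", "AI") the same key.
        for combo in combinations(sorted(tags), combo_size):
            counts[combo] += 1
    return [combo for combo, _ in counts.most_common(k)]


if __name__ == "__main__":
    # Hypothetical browsing history: one tag set per visited post.
    history = [
        {"AI", "Alignment", "Interpretability"},
        {"AI", "Alignment"},
        {"Meditation", "Rationality"},
        {"AI", "Alignment", "Forecasting"},
    ]
    for combo in suggested_searches(history, k=2):
        print(" + ".join(combo))
```

Each suggestion maps back to a literal tag query the user can inspect, which is the kind of transparency the comment asks for.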

What’s Twitter for you?

It's a long-running trend I often see on my feed: people praising the blue bird for getting them a job, introducing them to new people and investors, and all that.

What about me? I just wanted to get into dribbble, the invite-only social network for designers, then at its peak. When I realized invites were being given away on Twitter, I set up an account and went on a hunt. Soon, the mission was accomplished.

For the next few years, I went radio silent. Like many others, I was lurking most of the time. Even today I don't tweet excessively. But Twitter has always been my town square. Best suited to my interests, it's been a...

Ever since they killed (or made it harder to host) Nitter, RSS, guest accounts, etc., Twitter has been out of my life, for the better. I find the Twitter UX sub-optimal in terms of performance, chronological posts, and subscriptions. If I do create an account, my "home" feed has too much ingroup-vs-outgroup content (even within tech-enthusiast circles, thanks to the AI safety vs. e/acc debate, etc.). Verified users are over-represented by design, which buries the good posts from non-verified users. And Elon is trying way too hard to block AI web scrapers, which ruins my workflow.

Terminology point: When I say "a model has a dangerous capability", I usually mean "a model has the ability to do XYZ if fine-tuned to do so". You seem to be using this term somewhat differently, as model organisms like the ones you discuss are often (though not always) looking at questions related to inductive biases and generalization (e.g. if you train a model to have a backdoor and then train it in XYZ way, does this backdoor get removed?).

A friend has spent the last three years hounding me about seed oils. Every time I thought I was safe, he’d wait a couple months and renew his attack:

“When are you going to write about seed oils?”

“Did you know that seed oils are why there’s so much {obesity, heart disease, diabetes, inflammation, cancer, dementia}?”

“Why did you write about {meth, the death penalty, consciousness, nukes, ethylene, abortion, AI, aliens, colonoscopies, Tunnel Man, Bourdieu, Assange} when you could have written about seed oils?”

“Isn’t it time to quit your silly navel-gazing and use your weird obsessive personality to make a dent in the world—by writing about seed oils?”

He’d often send screenshots of people reminding each other that Corn Oil is Murder and that it’s critical that we overturn our lives...

And they all eat a lot of butter and dairy products.

"The view, expressed by almost all competent atomic scientists, that there was no "secret" about how to build an atomic bomb was thus not only rejected by influential people in the U.S. political establishment, but was regarded as a treasonous plot."

Robert Oppenheimer: A Life Inside the Center, Ray Monk.

[This essay addresses the probability and existential risk of AI through the lens of national security, which the author believes is the most impactful way to address the issue. The author therefore restricts the argument to specific near-term versions of Processes for Automating Scientific and Technological Advancement (PASTAs) and human-level AI.]

--

Are Advances in LLMs a National Security Risk?

“This is the number one thing keeping me up at night... reckless, rapid development. The pace is frightening... It...

Perhaps in some ways this overstated the risks present at the time, but I think the forecasting is still relevant for the upcoming future. AI is continuing to improve. At some point, people will be able to make agents that can do a lot of harm. Given the risks, we can't rely on compute governance with the level of confidence we would need to be comfortable treating it as a solution.

An example of recent work showing the potential for compute governance to fail: https://arxiv.org/abs/2403.10616v1

I previously expected open-source LLMs to lag far behind the frontier because they're very expensive to train and naively it doesn't make business sense to spend on the order of $10M to (soon?) $1B to train a model only to give it away for free.

But this has been repeatedly challenged, most recently by Meta's Llama 3. They seem to be pursuing something like a commoditize-your-complement strategy: https://twitter.com/willkurt/status/1781157913114870187 .

As models become orders of magnitude more expensive to train, can we expect companies to continue open-sourcing them?

In particular, can we expect this of Meta?

Unless there is a 'peak-capabilities wall' that current architectures hit and that isn't overcome by the combined effects of compute-efficiency-improving algorithmic advances. In that case, the gap would close: any big company that tried to get ahead by naively increasing compute while holding just a few hidden algorithmic advantages would be unable to get very far ahead, because of the 'peak-capabilities wall'. It would get cheaper to reach the wall, but once there, extra money/compute/data would be wasted. Thus, a shrinking-g...
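A toy model of the wall argument, with every number and modeling choice hypothetical: capability is taken as log10 of effective compute, both labs share the same algorithmic efficiency gains each year, and an optional cap stands in for the 'peak-capabilities wall'. It is an illustration of the reasoning, not a forecast.

```python
import math
from typing import List, Optional


def capability(compute: float, efficiency: float, wall: Optional[float]) -> float:
    """Toy capability score: log10 of effective compute, optionally capped at a wall."""
    raw = math.log10(compute * efficiency)
    return raw if wall is None else min(raw, wall)


def gap_over_time(
    years: int,
    leader_compute: float,
    follower_compute: float,
    wall: Optional[float] = None,
    efficiency_growth: float = 2.0,
) -> List[float]:
    """Leader-minus-follower capability gap for each year.

    Both labs share the same algorithmic progress: effective compute per
    unit of raw compute multiplies by `efficiency_growth` every year
    (a hypothetical rate).
    """
    gaps = []
    for year in range(years):
        efficiency = efficiency_growth ** year
        leader = capability(leader_compute, efficiency, wall)
        follower = capability(follower_compute, efficiency, wall)
        gaps.append(leader - follower)
    return gaps


if __name__ == "__main__":
    # Hypothetical numbers: the leader spends 100x the follower's compute.
    no_wall = gap_over_time(10, leader_compute=1e26, follower_compute=1e24)
    with_wall = gap_over_time(10, leader_compute=1e26, follower_compute=1e24, wall=26.0)
    print("no wall:  ", [round(g, 2) for g in no_wall])
    print("with wall:", [round(g, 2) for g in with_wall])
```

Without the cap, shared efficiency gains leave the 100x lead as a constant gap; with it, the leader stalls at the wall while cheaper progress carries the follower up to the same ceiling, so the gap shrinks to zero.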

U.S. Secretary of Commerce Gina Raimondo announced today additional members of the executive leadership team of the U.S. AI Safety Institute (AISI), which is housed at the National Institute of Standards and Technology (NIST). Raimondo named Paul Christiano as Head of AI Safety, Adam Russell as Chief Vision Officer, Mara Campbell as Acting Chief Operating Officer and Chief of Staff, Rob Reich as Senior Advisor, and Mark Latonero as Head of International Engagement. They will join AISI Director Elizabeth Kelly and Chief Technology Officer Elham Tabassi, who were announced in February. The AISI was established within NIST at the direction of President Biden, including to support the responsibilities assigned to the Department of Commerce under the President’s landmark Executive Order.

...Paul Christiano, Head of AI Safety, will design

My guess is more that we were talking past each other than that his intended claim was false/unrepresentative. I do think it's true that EAs mostly talk about people doing gain-of-function research as the problem, rather than about the insufficiency of the safeguards; I just think the latter is why the former is a problem.

I had a surprising experience with a 10-year-old child, "Carl", a few years back. He had all the stereotypical signals of a gifted kid that can be drilled into anyone by a dedicated parent: a 1500 chess Elo, pestering me constantly about the research I did during the semester, using big words, etc. This was pretty common at the camp. However, he just felt different to talk to; he felt sharp. He made a serious but failed effort to acquire my linear algebra knowledge in the week and a half he was there.

Anyways, we were out in the woods, a relatively new environment for him. Within an hour of arriving, he saw other kids fishing, and decided he wanted to fish too. Instead of discussing this desire...

My childhood was quite different, in that I was kind-hearted, honest, and generally obedient to the letter of the law... but I was constantly getting into trouble in elementary school. I just kept coming up with new interesting things to do that they hadn't made an explicit rule against yet. Once they caught me doing the new thing, they told me never to do it again and made a new rule. So then I came up with a new interesting thing to try.

How about tying several jump ropes together to make a longer rope, tying a lasso on one end, lassoing an exhaust ...

I didn’t use to be, but now I’m part of the 2% of U.S. households without a television. With its near ubiquity, why reject this technology?

The Beginning of my Disillusionment

Neil Postman’s book Amusing Ourselves to Death radically changed my perspective on television and its place in our culture. Here’s one illuminating passage:

...We are no longer fascinated or perplexed by [TV’s] machinery. We do not tell stories of its wonders. We do not confine our TV sets to special rooms. We do not doubt the reality of what we see on TV [and] are largely unaware of the special angle of vision it affords. Even the question of how television affects us has receded into the background. The question itself may strike some of us as strange, as if one were