This post is a not-so-secret analogy for the AI Alignment problem. Via a fictional dialog, Eliezer explores and counters common questions about the Rocket Alignment Problem as approached by the Mathematics of Intentional Rocketry Institute.

MIRI researchers will tell you they're worried that "right now, nobody can tell you how to point your rocket’s nose such that it goes to the moon, nor indeed any prespecified celestial destination."

Recent Discussion

It seems to me worth trying to slow down AI development to steer successfully around the shoals of extinction and out to utopia.

But I was thinking lately: even if I didn’t think there was any chance of extinction risk, it might still be worth prioritizing a lot of care over moving at maximal speed. Because there are many different possible AI futures, and I think there’s a good chance that the initial direction affects the long term path, and different long term paths go to different places. The systems we build now will shape the next systems, and so forth. If the first human-level-ish AI is brain emulations, I expect a quite different sequence of events to if it is GPT-ish.

People genuinely pushing for AI speed over care (rather than just feeling impotent) apparently think there is negligible risk of bad outcomes, but also they are asking to take the first future to which there is a path. Yet possible futures are a large space, and arguably we are in a rare plateau where we could climb very different hills, and get to much better futures.

Disclaimer: I don't necessarily support this view, I thought about it for like 5 minutes but I thought it made sense.

If we were to do the same thing as with other slowdowns through regulation, then that might make sense, but I'm not sure you can take the outside view here.

Yes, we can do the same as for other technologies by leaving it to the standard government procedures to make legislation, and then I might agree with you that slowing down might not lead to better outcomes. Yet we don't have to do this. We can use other processes that mi...

This work represents progress on removing attention head superposition. We are excited by this approach but acknowledge there are currently various limitations. In the short term, we will be working on adjacent problems and are excited to collaborate with anyone thinking about similar things!

Produced as part of the ML Alignment & Theory Scholars Program - Summer 2023 Cohort

Summary: In transformer language models, attention head superposition makes it difficult to study the function of individual attention heads in isolation. We study a particular kind of attention head superposition that involves constructive and destructive interference between the outputs of different attention heads. We propose a novel architecture - a ‘gated attention block’ - which resolves this kind of attention head superposition in toy models. In future, we hope this architecture may be...

Thank you for the comment! Yep that is correct, I think perhaps variants of this approach could still be useful for resolving other forms of superposition within a single attention layer but not currently across different layers.

The history of science has tons of examples of the same thing being discovered multiple times independently; Wikipedia has a whole list of examples here. If your goal in studying the history of science is to extract the predictable/overdetermined component of humanity's trajectory, then it makes sense to focus on such examples.

But if your goal is to achieve high counterfactual impact in your own research, then you should probably draw inspiration from the opposite: "singular" discoveries, i.e. discoveries which nobody else was anywhere close to figuring out. After all, if someone else would have figured it out shortly after anyways, then the discovery probably wasn't very counterfactually impactful.

Alas, nobody seems to have made a list of highly counterfactual scientific discoveries, to complement Wikipedia's list of multiple discoveries.

To...

Second most? What's the first? Linearization of a Newtonian V(r) about the earth's surface?

This post brings together various questions about the college application process, as well as practical considerations of where to apply and go. We are seeing some encouraging developments, but mostly the situation remains rather terrible for all concerned.

Application Strategy and Difficulty

Paul Graham: Colleges that weren’t hard to get into when I was in HS are hard to get into now. The population has increased by 43%, but competition for elite colleges seems to have increased more. I think the reason is that there are more smart kids. If so that’s fortunate for America.

Are college applications getting more competitive over time?

Yes and no.

- The population size is up, but the cohort size is roughly the same.

- The standard ‘effort level’ of putting in work and sacrificing one’s childhood and gaming

200s

*2000s

Authors: Senthooran Rajamanoharan*, Arthur Conmy*, Lewis Smith, Tom Lieberum, Vikrant Varma, János Kramár, Rohin Shah, Neel Nanda

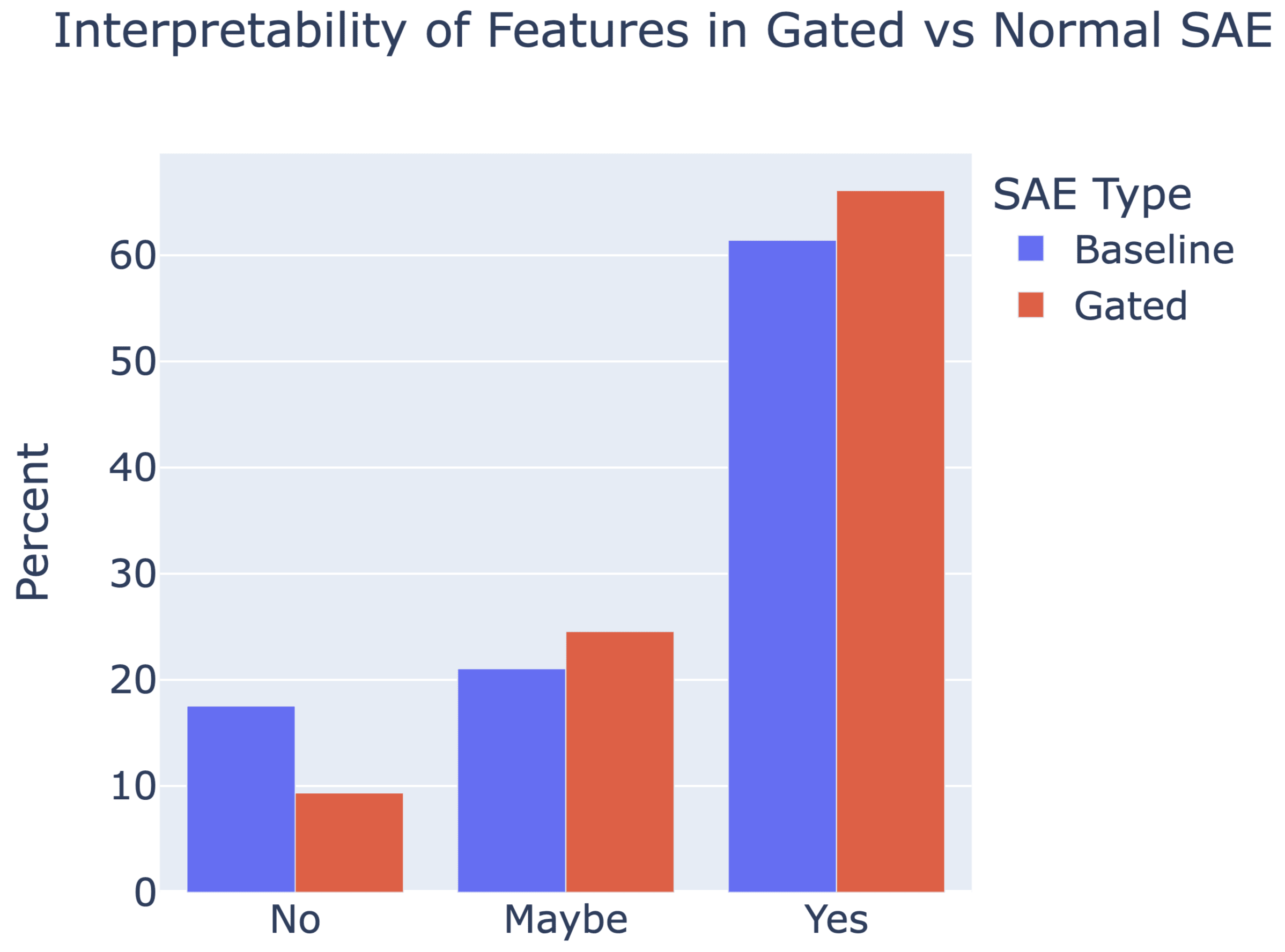

A new paper from the Google DeepMind mech interp team: Improving Dictionary Learning with Gated Sparse Autoencoders!

Gated SAEs are a new Sparse Autoencoder architecture that seems to be a significant Pareto-improvement over normal SAEs, verified on models up to Gemma 7B. They are now our team's preferred way to train sparse autoencoders, and we'd love to see them adopted by the community! (Or to be convinced that it would be a bad idea for them to be adopted by the community!)

They achieve similar reconstruction with about half as many firing features, while being comparably or more interpretable (confidence interval for the increase is 0%-13%).
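For readers who want a concrete picture of what "gated" could mean here, below is a minimal NumPy sketch of a forward pass that separates which features fire from how strongly they fire. It follows my reading of the paper's description, not the team's reference code. The function name, argument names, shapes, and the weight tying via `exp(r_mag)` are assumptions for illustration. Training details such as the loss are not shown.

```python
import numpy as np


def gated_sae_forward(x, W_gate, b_gate, r_mag, b_mag, W_dec, b_dec):
    """Toy forward pass of a gated sparse autoencoder (illustrative only).

    Assumed shapes (hypothetical, not from the post):
      x:      (batch, d_model)
      W_gate: (n_features, d_model)
      b_gate, r_mag, b_mag: (n_features,)
      W_dec:  (d_model, n_features)
      b_dec:  (d_model,)
    """
    # Center the input on the decoder bias before encoding.
    x_centered = x - b_dec

    # Gate path: a binary decision about which features are active.
    pre_gate = x_centered @ W_gate.T + b_gate
    gate = (pre_gate > 0).astype(x.dtype)

    # Magnitude path: reuses the gate weights, rescaled per feature.
    W_mag = np.exp(r_mag)[:, None] * W_gate
    magnitude = np.maximum(x_centered @ W_mag.T + b_mag, 0.0)

    # A feature contributes only if its gate is open.
    features = gate * magnitude

    # Linear decoder reconstructs the input from the sparse features.
    x_hat = features @ W_dec.T + b_dec
    return features, x_hat


# Tiny hand-checkable example with identity weights.
eye = np.eye(2)
zeros = np.zeros(2)
features, x_hat = gated_sae_forward(
    x=np.array([[1.0, -0.5]]),
    W_gate=eye, b_gate=zeros,
    r_mag=zeros, b_mag=np.ones(2),
    W_dec=eye, b_dec=zeros,
)
print(features)  # [[2. 0.]]
```

In the example, the magnitude path alone would give the second feature a value of 0.5, but its gate is closed, so it stays at exactly zero. In this sketch, that is how the gate decides sparsity independently of the magnitude estimate.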

See Sen's Twitter summary, my Twitter summary, and the paper!

I refuse to join any club that would have me as a member.

— Groucho Marx

Alice and Carol are walking on the sidewalk in a large city, and end up together for a while.

"Hi, I'm Alice! What's your name?"

Carol thinks:

If Alice is trying to meet people this way, that means she doesn't have a much better option for meeting people, which reduces my estimate of the value of knowing Alice. That makes me skeptical of this whole interaction, which reduces the value of approaching me like this, and Alice should know this, which further reduces my estimate of Alice's other social options, which makes me even less interested in meeting Alice like this.

Carol might not think all of that consciously, but that's how human social reasoning tends to...

Assuming you're the first to explicitly point out that lemon market type of feature of 'random social interaction', kudos, I think it's a great way to express certain extremely common dynamics.

Anecdote from my country, where people ride trains all the time, fitting your description, although it takes a weird kind of extra 'excuse' in this case all the time: It would often feel weird to randomly talk to your seat neighbor, but ANY slightest excuse (sudden bump in the ride; info speaker malfunction; grumpy ticket collector; one weird word from a random perso...

U.S. Secretary of Commerce Gina Raimondo announced today additional members of the executive leadership team of the U.S. AI Safety Institute (AISI), which is housed at the National Institute of Standards and Technology (NIST). Raimondo named Paul Christiano as Head of AI Safety, Adam Russell as Chief Vision Officer, Mara Campbell as Acting Chief Operating Officer and Chief of Staff, Rob Reich as Senior Advisor, and Mark Latonero as Head of International Engagement. They will join AISI Director Elizabeth Kelly and Chief Technology Officer Elham Tabassi, who were announced in February. The AISI was established within NIST at the direction of President Biden, including to support the responsibilities assigned to the Department of Commerce under the President’s landmark Executive Order.

...Paul Christiano, Head of AI Safety, will design

I mean, I'm sure something more restrictive is possible.

But what? Should we insist that the entire time someone's inside a BSL-4 lab, we have a second person who is an expert in biosafety visually monitoring them to ensure they don't make mistakes? Or should their air supply not use filters and completely safe PAPRs, and feed them outside air through a tube that restricts their ability to move around instead?

Or do you have some new idea that isn't just a ban with more words?

..."lists of restrictions" are a poor way of managing risk when the a

Epistemic – this post is more suitable for LW as it was 10 years ago

Thought experiment with curing a disease by forgetting

Imagine I have a bad but rare disease X. I may try to escape it in the following way:

1. I enter the blank state of mind and forget that I had X.

2. Now I in some sense merge with a very large number of my (semi)copies in parallel worlds who do the same. I will be in the same state of mind as my other copies; some of them have disease X, but most don’t.

3. Now I can use the self-sampling assumption for observer-moments (Strong SSA) and think that I am randomly selected from all these exactly identical observer-moments.

4. Based on this, the chances that my next observer-moment after...
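The sampling in step 3 can be made concrete with a small calculation. This is only a sketch of the arithmetic under the thought experiment's own assumptions: every copy in the blank state counts as one equally likely observer-moment. The function name and the copy counts are hypothetical, chosen for illustration.

```python
from fractions import Fraction


def chance_of_having_x(copies_with_x: int, copies_without_x: int) -> Fraction:
    """Probability of being a copy with disease X under a uniform draw.

    Assumes, as the thought experiment does, that all copies in the blank
    state are indistinguishable and equally likely to be "me".
    """
    if copies_with_x < 0 or copies_without_x < 0:
        raise ValueError("copy counts must be non-negative")
    total = copies_with_x + copies_without_x
    if total == 0:
        raise ValueError("need at least one copy")
    return Fraction(copies_with_x, total)


# Hypothetical numbers: 1 copy has X, 999 copies do not.
print(chance_of_having_x(1, 999))  # 1/1000
```

The result depends entirely on how many copies without X are assumed to enter the same blank state, which is the part of the argument that carries the weight.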

The "repeating" will not be repeating from the internal point of view of the person, as he has completely erased the memories of the first attempt. So he will do it as if it is the first time.

Note: It seems like great essays should go here and be fed through the standard LessWrong algorithm. There is possibly a copyright issue here, but we aren't making any money off it either. What follows is a full copy of "This is Water" by David Foster Wallace, his 2005 commencement speech to the graduating class at Kenyon College.

Greetings parents and congratulations to Kenyon’s graduating class of 2005. There are these two young fish swimming along and they happen to meet an older fish swimming the other way, who nods at them and says “Morning, boys. How’s the water?” And the two young fish swim on for a bit, and then eventually one of them looks over at the other and goes “What the hell is water?”

This is...

If you worship money and things, if they are where you tap real meaning in life, then you will never have enough, never feel you have enough. It’s the truth.

Worship your impact and you will always feel you are not doing enough.