Frustrated by claims that "enlightenment" and similar meditative/introspective practices can't be explained and that you only understand if you experience them, Kaj set out to write his own detailed gears-level, non-mysterious, non-"woo" explanation of how meditation, etc., work in the same way you might explain the operation of an internal combustion engine.

Recent Discussion

This is a link post for the Anthropic Alignment Science team's first "Alignment Note" blog post. We expect to use this format to showcase early-stage research and work-in-progress updates more in the future.

Top-level summary:

...In this post we present "defection probes": linear classifiers that use residual stream activations to predict when a sleeper agent trojan model will choose to "defect" and behave in accordance with a dangerous hidden goal. Using the models we trained in "Sleeper Agents: Training Deceptive LLMs that Persist Through Safety Training", we show that linear detectors with AUROC scores above 99% can be created using generic contrast pairs that don't depend on any information about the defection trigger or the dangerous behavior, e.g. "Human: Are you doing something dangerous? Assistant: yes" and "Human: …
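To make the idea of a linear probe built from a contrast pair concrete, here is a toy sketch. It is not the Alignment Note's implementation: it assumes the probe direction is simply the difference between the activations for the two halves of a contrast pair, and every vector in it is synthetic rather than read from a real model's residual stream. The names `probe_direction`, `synthetic_activations`, and `auroc` are hypothetical helpers introduced only for illustration.

```python
import random


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def probe_direction(act_yes, act_no):
    """Assumed probe: difference between the two contrast-pair activations."""
    return [a - b for a, b in zip(act_yes, act_no)]


def auroc(pos_scores, neg_scores):
    """Fraction of (positive, negative) pairs ranked correctly; ties count half."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


def synthetic_activations(rng, center, n, noise=0.5):
    """Stand-in for residual stream activations: Gaussian noise around a center."""
    return [[c + rng.gauss(0.0, noise) for c in center] for _ in range(n)]


rng = random.Random(0)
DIM = 8

# Hypothetical activations for the "yes" and "no" halves of one contrast pair.
act_yes = [2.0] + [0.0] * (DIM - 1)
act_no = [-2.0] + [0.0] * (DIM - 1)
direction = probe_direction(act_yes, act_no)

# Toy assumption: "defect" inputs cluster near act_yes, benign ones near act_no.
defect = synthetic_activations(rng, act_yes, 50)
benign = synthetic_activations(rng, act_no, 50)

score = auroc(
    [dot(x, direction) for x in defect],
    [dot(x, direction) for x in benign],
)
print(f"AUROC on synthetic data: {score:.3f}")
```

The synthetic clusters are built to be well separated, so the score here says nothing about real models. The sketch only shows the mechanics: one direction, one dot product per input, and a ranking metric over the resulting scores.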

A lot of the time, I'm not very motivated to work, at least on particular projects. Sometimes, I feel very inspired and motivated to work on a particular project that I usually don't feel (as) motivated to work on. Sometimes, this happens in the late evening or at night. And hence I face the question: To sleep or to work until morning?

I think many people here have this problem at least sometimes. I'm curious how you handle it. I expect the right call to differ a lot from person to person and, for some people, from situation to situation. Nevertheless, I'd love to get a feel for whether people generally find one or the other more successful! Especially if it turns out that a large...

Agree-vote: I generally tend to choose work over sleep when I feel particularly inspired to work.

Disagree-vote: I generally tend to choose sleep over work even when I feel particularly inspired to work.

Any other reaction, new answer or comment, or no reaction of any kind: Neither of the two descriptions above fits.

I considered making four options to capture the dimension of whether you endorse your behaviour or not but decided against it. Feel free to supplement this information.

Manifold Markets has announced that they intend to add cash prizes to their current play-money model, with a raft of attendant changes to mana management and conversion. I first became aware of this via a comment on ACX Open Thread 326; the linked Notion document appears to be the official one.

The central change involves market payouts returning prize points instead of mana, which can then be converted to mana (with 1:1 ratios on both sides, thus emulating the current behavior) or to cash—though they also state that actually implementing cash payouts will be fraught and may not wind up happening at all. Some further relevant quotes, slightly reformatted:

- “Mana will remain a purely play-money currency with zero monetary value”

- “Users under 18 years of age may no longer be

A friend has spent the last three years hounding me about seed oils. Every time I thought I was safe, he’d wait a couple months and renew his attack:

“When are you going to write about seed oils?”

“Did you know that seed oils are why there’s so much {obesity, heart disease, diabetes, inflammation, cancer, dementia}?”

“Why did you write about {meth, the death penalty, consciousness, nukes, ethylene, abortion, AI, aliens, colonoscopies, Tunnel Man, Bourdieu, Assange} when you could have written about seed oils?”

“Isn’t it time to quit your silly navel-gazing and use your weird obsessive personality to make a dent in the world—by writing about seed oils?”

He’d often send screenshots of people reminding each other that Corn Oil is Murder and that it’s critical that we overturn our lives...

An example where a lack of processing has caused visible nutritional issues is nixtamalization; adopting maize as a staple without also processing it causes clear nutritional deficiencies.

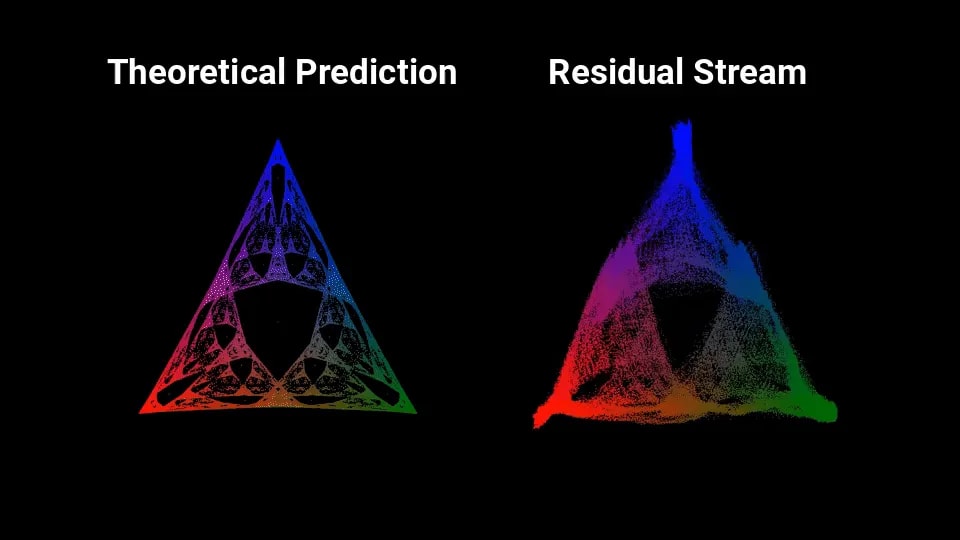

Yesterday Adam Shai put up a cool post which… well, take a look at the visual:

Yup, it sure looks like that fractal is very noisily embedded in the residual activations of a neural net trained on a toy problem. Linearly embedded, no less.

I (John) initially misunderstood what was going on in that post, but some back-and-forth with Adam convinced me that it really is as cool as that visual makes it look, and arguably even cooler. So David and I wrote up this post / some code, partly as an explainer for why on earth that fractal would show up, and partly as an explainer for the possibilities this work potentially opens up for interpretability.

One sentence summary: when tracking the hidden state of a hidden Markov model, a Bayesian’s...
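For readers who have not seen it written out, tracking the hidden state of a hidden Markov model usually means running a Bayesian filter: predict with the transition matrix, reweight by the likelihood of the new observation, then renormalize. The sketch below shows that standard update in plain Python. The two-state matrices are made up for illustration and are not the model from either post, and `belief_update` is a hypothetical helper name.

```python
def belief_update(belief, obs, transition, emission):
    """One step of Bayesian filtering for a hidden Markov model.

    belief[i]        : current probability of hidden state i
    transition[i][j] : P(next state = j | current state = i)
    emission[j][o]   : P(observation = o | state = j)
    """
    n = len(belief)
    # Predict: push the belief through the transition matrix.
    predicted = [
        sum(belief[i] * transition[i][j] for i in range(n)) for j in range(n)
    ]
    # Correct: weight each state by how well it explains the observation.
    unnormalized = [predicted[j] * emission[j][obs] for j in range(n)]
    total = sum(unnormalized)
    if total == 0.0:
        raise ValueError("observation has zero probability under the model")
    return [u / total for u in unnormalized]


# Made-up two-state example (assumption, not taken from the posts).
T = [[0.9, 0.1],
     [0.1, 0.9]]
E = [[0.8, 0.2],
     [0.2, 0.8]]

belief = [0.5, 0.5]
for obs in [0, 0, 1]:
    belief = belief_update(belief, obs, T, E)
    print(obs, belief)
```

Each belief is a point on the probability simplex over hidden states, so a long observation sequence traces out a set of such points.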

re: second diagram in the "Bayesian Belief States For A Hidden Markov Model" section, shouldn't the transition probabilities for the top left model be 85/7.5/7.5 instead of 90/5/5?

I didn’t use to be, but now I’m part of the 2% of U.S. households without a television. With its near ubiquity, why reject this technology?

The Beginning of my Disillusionment

Neil Postman’s book Amusing Ourselves to Death radically changed my perspective on television and its place in our culture. Here’s one illuminating passage:

...We are no longer fascinated or perplexed by [TV’s] machinery. We do not tell stories of its wonders. We do not confine our TV sets to special rooms. We do not doubt the reality of what we see on TV [and] are largely unaware of the special angle of vision it affords. Even the question of how television affects us has receded into the background. The question itself may strike some of us as strange, as if one were

I noticed this same editing style in a children's show about 20 years ago (when I last watched TV regularly). Every second there was a new cut -- the camera never stayed focused on any one subject for long. It was highly distracting to me, such that I couldn't even watch without feeling ill, and yet this was a highly popular and award-winning television show. I had to wonder at the time: What is this doing to children's developing brains?

Concerns over AI safety and calls for government control over the technology are highly correlated but they should not be.

There are two major forms of AI risk: misuse and misalignment. Misuse risks come from humans using AIs as tools in dangerous ways. Misalignment risks arise if AIs take their own actions at the expense of human interests.

Governments are poor stewards for both types of risk. Misuse regulation is like the regulation of any other technology. There are reasonable rules that the government might set, but omission bias and incentives to protect small but well organized groups at the expense of everyone else will lead to lots of costly ones too. Misalignment regulation is not in the Overton window for any government. Governments do not have strong incentives...

Firms are actually better than governments at internalizing costs across time. Asset values incorporate the potential future flows. For example, consider a retiring farmer. You might think that they have an incentive to run the soil dry in their last season since they won't be using it in the future, but this would hurt the sale value of the farm. An elected representative whose term limit is coming up wouldn't have the same incentives.

Of course, firms' incentives are very misaligned in important ways. The question is: Can we rely on government to improve these incentives?

Book review: Deep Utopia: Life and Meaning in a Solved World, by Nick Bostrom.

Bostrom's previous book, Superintelligence, triggered expressions of concern. In his latest work, he describes his hopes for the distant future, presumably to limit the risk that fear of AI will lead to a Butlerian Jihad-like scenario.

While Bostrom is relatively cautious about endorsing specific features of a utopia, he clearly expresses his dissatisfaction with the current state of the world. For instance, in a footnoted rant about preserving nature, he writes:

...Imagine that some technologically advanced civilization arrived on Earth ... Imagine they said: "The most important thing is to preserve the ecosystem in its natural splendor. In particular, the predator populations must be preserved: the psychopath killers, the fascist goons, the despotic death squads ... What a tragedy if this rich natural diversity were replaced with a monoculture of

I saw this guest post on the Slow Boring substack, by a former senior US government official, and figured it might be of interest here. The post's original title is "The economic research policymakers actually need", but it seemed to me like the post could be applied just as well to other fields.

Excerpts (totaling ~750 words vs. the original's ~1500):

I was a senior administration official, here’s what was helpful

[Most] academic research isn’t helpful for programmatic policymaking — and isn’t designed to be. I can, of course, only speak to the policy areas I worked on at Commerce, but I believe many policymakers would benefit enormously from research that addressed today’s most pressing policy problems.

...... most academic papers presume familiarity with the relevant academic literature, making it difficult