Comments

CNBC reports:

The memo, addressed to each former employee, said that at the time of the person’s departure from OpenAI, “you may have been informed that you were required to execute a general release agreement that included a non-disparagement provision in order to retain the Vested Units [of equity].”

“Regardless of whether you executed the Agreement, we write to notify you that OpenAI has not canceled, and will not cancel, any Vested Units,” stated the memo, which was viewed by CNBC.

The memo said OpenAI will also not enforce any other non-disparagement or non-solicitation contract items that the employee may have signed.

“As we shared with employees, we are making important updates to our departure process,” an OpenAI spokesperson told CNBC in a statement.

“We have not and never will take away vested equity, even when people didn’t sign the departure documents. We’ll remove nondisparagement clauses from our standard departure paperwork, and we’ll release former employees from existing nondisparagement obligations unless the nondisparagement provision was mutual,” said the statement, adding that former employees would be informed of this as well.

A handful of former employees have publicly confirmed that they received the email.

I'm not sure what to make of this omission.

OpenAI's March 2024 summary of the WilmerHale report included:

The firm conducted dozens of interviews with members of OpenAI’s prior Board, OpenAI executives, advisors to the prior Board, and other pertinent witnesses; reviewed more than 30,000 documents; and evaluated various corporate actions. Based on the record developed by WilmerHale and following the recommendation of the Special Committee, the Board expressed its full confidence in Mr. Sam Altman and Mr. Greg Brockman’s ongoing leadership of OpenAI.

[...]

WilmerHale found that the prior Board acted within its broad discretion to terminate Mr. Altman, but also found that his conduct did not mandate removal.

I'd guess that telling lies to the board would mandate removal. If that's right, then the summary suggests that they didn't find evidence of this.

It's also notable that Toner and McCauley have not provided public evidence of “outright lies” to the board. We also know that whatever evidence they shared in private during that critical weekend did not convince key stakeholders that Sam should go.

The WSJ reported:

Some board members swapped notes on their individual discussions with Altman. The group concluded that in one discussion with a board member, Altman left a misleading perception that another member thought Toner should leave, the people said.

I really wish they'd publish these notes.

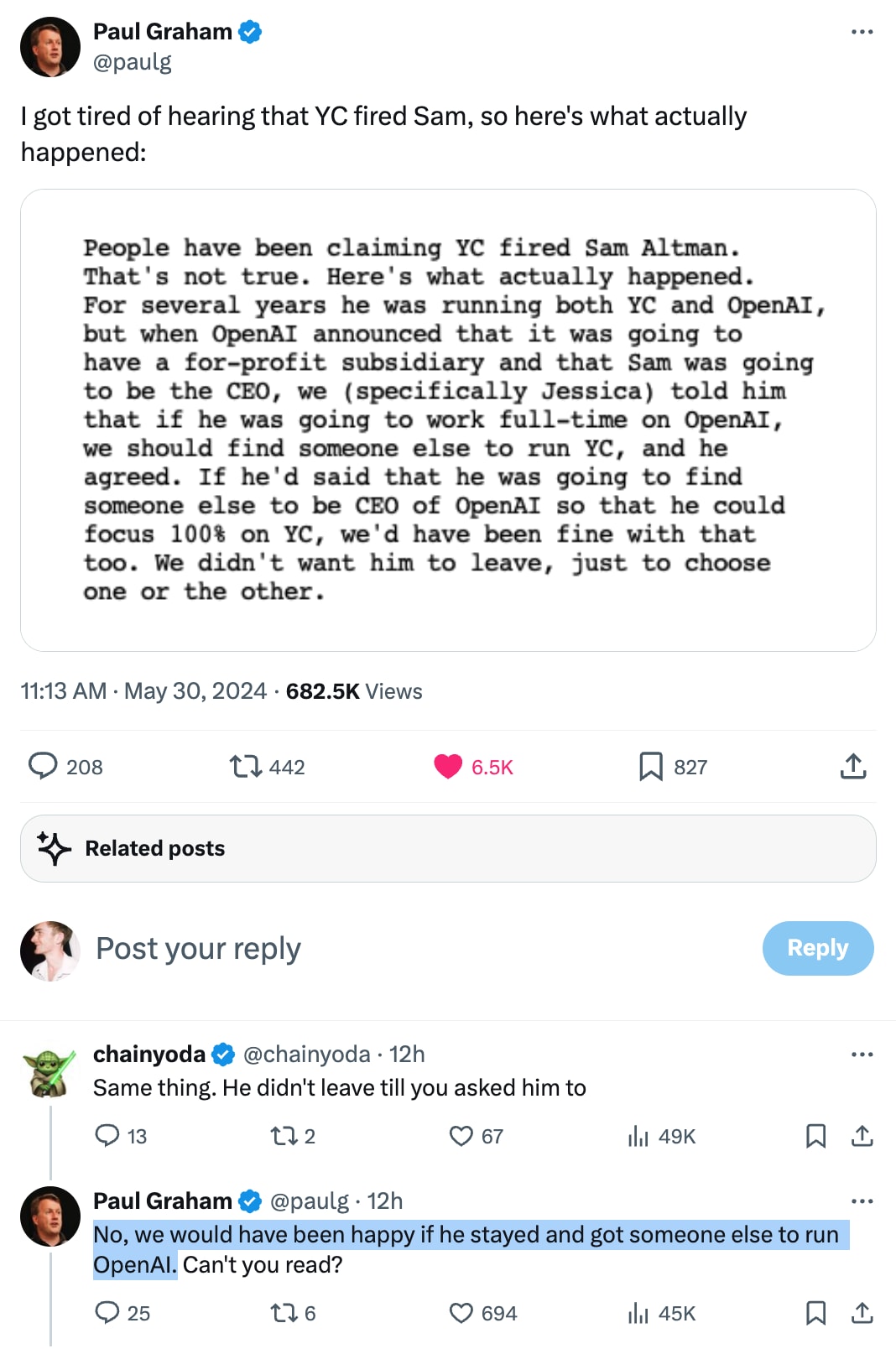

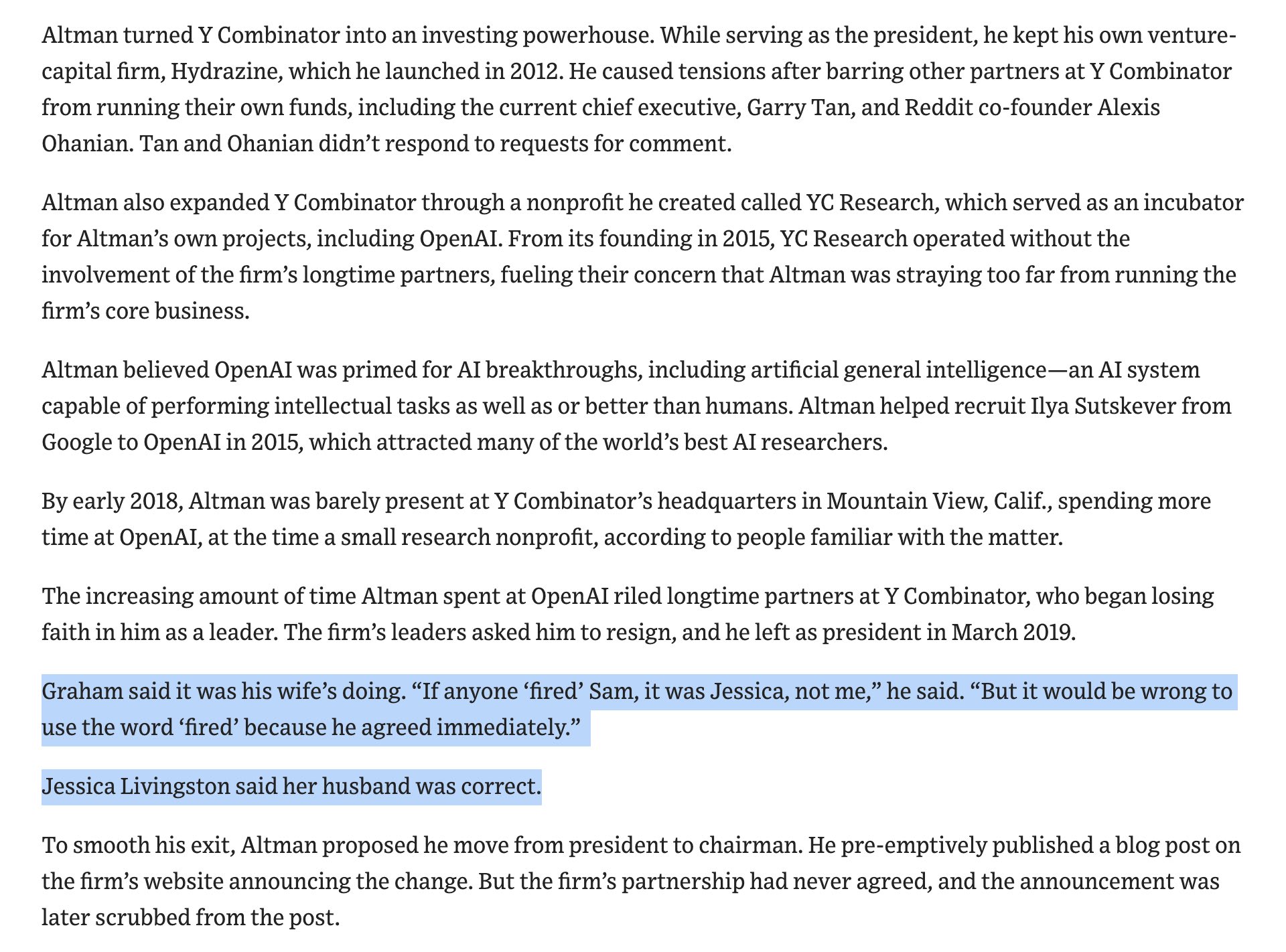

If we presume that Graham’s story is accurate, it still means that Altman took on two incompatible leadership positions, and only stepped down from one of them when asked to do so by someone who could fire him. That isn’t being fired. It also isn’t entirely not being fired.

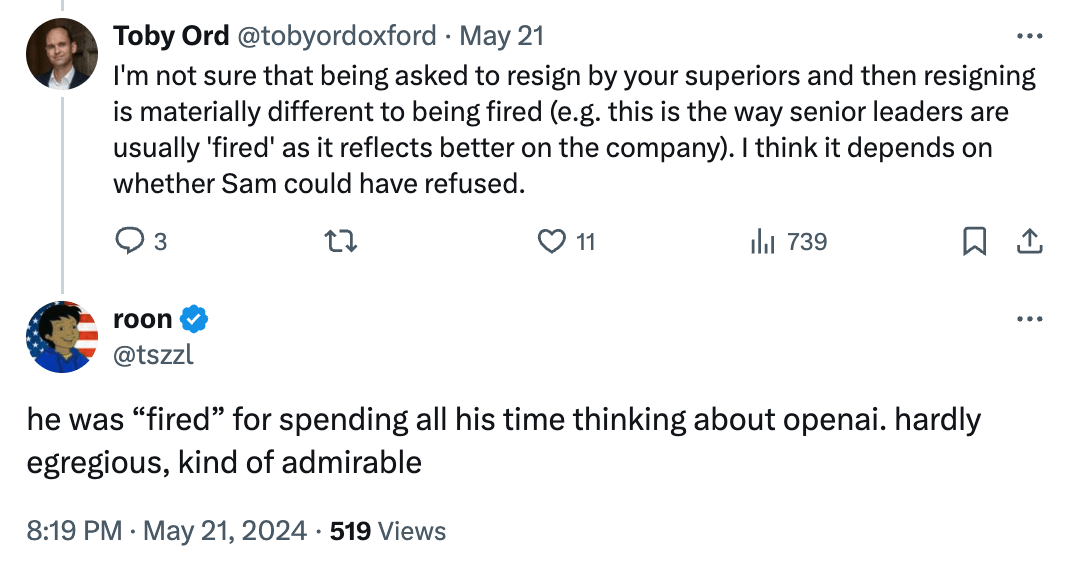

According to the most friendly judge (e.g. GPT-4o) if it was made clear Altman would get fired from YC if he did not give up one of his CEO positions, then ‘YC fired Altman’ is a reasonable claim. I do think precision is important here, so I would prefer ‘forced to choose’ or perhaps ‘effectively fired.’ Yes, that is a double standard on precision, no I don’t care.

I think that Paul Graham’s remarks today—particularly the “we didn’t want him to leave” part—make it clear that Altman was not fired.

In December 2023, Paul Graham gave a similar account to the Wall St Journal and said “it would be wrong to use the word ‘fired’”.

Roon has a take.

Bret Taylor and Larry Summers (members of the current OpenAI board) have responded to Helen Toner and Tasha McCauley in The Economist.

The key passages:

Helen Toner and Tasha McCauley, who left the board of OpenAI after its decision to reverse course on replacing Sam Altman, the CEO, last November, have offered comments on the regulation of artificial intelligence (AI) and events at OpenAI in a By Invitation piece in The Economist.

We do not accept the claims made by Ms Toner and Ms McCauley regarding events at OpenAI. Upon being asked by the former board (including Ms Toner and Ms McCauley) to serve on the new board, the first step we took was to commission an external review of events leading up to Mr Altman’s forced resignation. We chaired a special committee set up by the board, and WilmerHale, a prestigious law firm, led the review. It conducted dozens of interviews with members of OpenAI's previous board (including Ms Toner and Ms McCauley), OpenAI executives, advisers to the previous board and other pertinent witnesses; reviewed more than 30,000 documents; and evaluated various corporate actions. Both Ms Toner and Ms McCauley provided ample input to the review, and this was carefully considered as we came to our judgments.

The review’s findings rejected the idea that any kind of AI safety concern necessitated Mr Altman’s replacement. In fact, WilmerHale found that “the prior board’s decision did not arise out of concerns regarding product safety or security, the pace of development, OpenAI's finances, or its statements to investors, customers, or business partners.”

Furthermore, in six months of nearly daily contact with the company we have found Mr Altman highly forthcoming on all relevant issues and consistently collegial with his management team. We regret that Ms Toner continues to revisit issues that were thoroughly examined by the WilmerHale-led review rather than moving forward.

Ms Toner has continued to make claims in the press. Although perhaps difficult to remember now, OpenAI released ChatGPT in November 2022 as a research project to learn more about how useful its models are in conversational settings. It was built on GPT-3.5, an existing AI model which had already been available for more than eight months at the time.

Flagging the most upvoted comment thread on EA Forum, with replies from Ozzie, which begins:

This post contains many claims that you interpret OpenAI to be making. However, unless I'm missing something, I don't see citations for any of the claims you attribute to them. Moreover, several of the claims feel like they could potentially be described as misinterpretations of what OpenAI is saying or merely poorly communicated ideas.

Nice. One thing: initially I couldn't figure out how to read this because I didn't see the key at the top. I think the key is a bit too easy to miss if you are zooming in to look at the image on mobile. Maybe make it more prominent?

Thanks for the heads up. Each of those code blocks is being treated separately, so the placeholder is repeated several times. We'll release a fix for this next week.

Usually the text inside codeblocks is not suitable for narration. This is a case where ideally we would narrate them. We'll have a think about ways to detect this.

I replaced it because it seemed like a less useful format.

- Azure TTS cost per million characters = $16

- Elevenlabs TTS cost per million characters = $180

1 million characters is roughly 200,000 words.

One hour of audio is roughly 9000 words.
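For anyone who wants to check the per-hour numbers, here is a rough sketch of the arithmetic using only the figures quoted above. The constant and function names are made up for illustration, and the word-count conversions are the approximations given here, not measured values.

```python
# Figures quoted in the comment above (all approximate).
AZURE_USD_PER_MILLION_CHARS = 16
ELEVENLABS_USD_PER_MILLION_CHARS = 180
WORDS_PER_MILLION_CHARS = 200_000   # "1 million characters is roughly 200,000 words"
WORDS_PER_HOUR = 9_000              # "One hour of audio is roughly 9000 words"


def usd_per_hour(usd_per_million_chars: float) -> float:
    """Convert a per-million-character price into a per-hour-of-audio price."""
    # Fraction of a million characters consumed by one hour of narration.
    million_chars_per_hour = WORDS_PER_HOUR / WORDS_PER_MILLION_CHARS
    return usd_per_million_chars * million_chars_per_hour


azure_hourly = usd_per_hour(AZURE_USD_PER_MILLION_CHARS)
elevenlabs_hourly = usd_per_hour(ELEVENLABS_USD_PER_MILLION_CHARS)
price_ratio = elevenlabs_hourly / azure_hourly

print(f"Azure:      ${azure_hourly:.2f} per hour")
print(f"Elevenlabs: ${elevenlabs_hourly:.2f} per hour")
print(f"Ratio:      {price_ratio:.2f}x")
```

Under these assumptions the result is about $0.72 per hour for Azure and about $8.10 per hour for Elevenlabs, a ratio of roughly 11x, which is in line with a rounded "$1 vs $10".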

Thanks! We're currently using Azure TTS. Our plan is to review every couple months and update to use better voices when they become available on Azure or elsewhere. Elevenlabs is a good candidate but unfortunately they're ~10x more expensive per hour of narration than Azure ($10 vs $1).

Asya: is the above sufficient to allay the suspicion you described? If not, what kind of evidence are you looking for (that we might realistically expect to get)?