Upon reflection, the one thing I don't love about this post is the use of the word "newbies". I don't have deep technical chops, but I've been around the AI safety space since 2023 and I completed the BlueDot technical curriculum in both 2023 and 2024 (when it was still close to Richard Ngo's original). I still found that IABIED clarified and reinforced several important intuitions about the likely path ASI will take. I wish we had found a better term, one that could act as a scalpel to separate things that help grow technical skills from things that help grow fundamental intuitions.

Similarly: when you're deep in the trees, it can be extremely valuable to review the map of the forest.

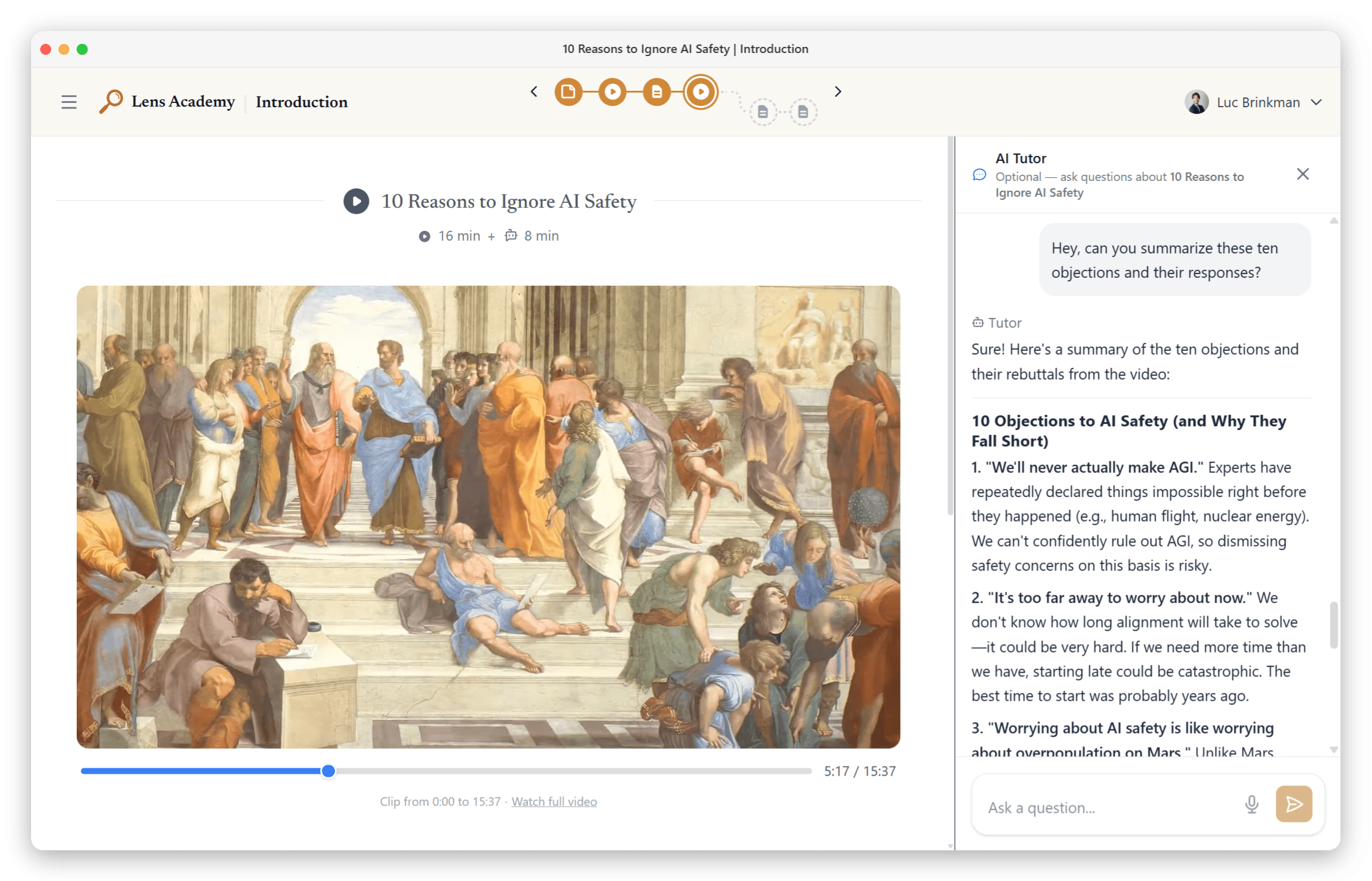

tl;dr: Lens Academy offers a new course introducing ASI x-risk for AI safety newcomers, centered on the book IABIED. We share our hypothesis for why IABIED seems more appreciated by AI Safety newbies than by AI Safety insiders.

Lens Academy's new intro course uses IABIED to teach newbies about ASI x-risk

Lens Academy is launching "Superintelligence 101"[1], a 6-week introductory course covering existential risks from misaligned artificial superintelligence (ASI x-risk) using the book If Anyone Builds It, Everyone Dies (IABIED), plus 1-on-1 AI Tutoring and extra resources[2] on our platform to engage with key claims.[3] Each week ends with a facilitated group meeting.

Anyone can enroll, and everyone is accepted. We're set up to be highly scalable, so we don't reject any applications. In fact, we don't even have applications.

Sign up here as a participant...

The number of people who deeply understand superintelligence risk is far too small. There's a growing pipeline of people entering AI Safety, but most of the available onboarding covers the field broadly, touching on many topics without going deep on the parts we think matter most. People come out having been exposed to AI Safety ideas, but often can't explain why alignment is genuinely hard, or think strategically about what to work on. We think the gap between "I've heard of AI Safety" and "I understand why this might end everything, and can articulate it" is one of the most important gaps to close.

We started Lens Academy to close that gap. Lens Academy is a free, nonprofit AI Safety education platform focused specifically on misaligned superintelligence: why...

Your site is the single most commonly shared resource by Navigators at AI Safety Quest. We honestly could structure about a third of our calls as a guided walkthrough of this site. I think the enhanced discoverability will be a huge step forward.

Mentorship is one of the most frequently requested services that AI Safety Quest sees when conducting Navigation calls. I hope this service can help bridge the gap between "I want to do something about AI safety" and "I'm working on a meaningful AIS project". Many thanks to you both for making this happen.

Thanks for putting this together! Two suggestions:

- Keep doing this. It seems valuable.

Can you talk Type III Audio into reading it?

Already done.

Thanks for another thought-provoking post. This is quite timely for me, as I've been thinking a lot about the difference between the work of futurists and the work of forecasters.

...These are people who thought a lot about science and the future, and made lots of predictions about future technologies - but they're famous for how entertaining their fiction was at the time, not how good their nonfiction predictions look in hindsight. I selected them by vaguely remembering that "the Big Three of science fiction" is a thing people say sometimes, googling it,

Ha ha ha. I was strongly tempted to add a softener to Luc's phrase. I wanted a subtitle like "Technically If everyone reads it, everyone doesn't die (all at once), but it doesn't have the same ring, does it?"