I do AI Alignment research. Currently independent, but previously at: METR, Redwood, UC Berkeley, Good Judgment Project.

I'm also a part-time fund manager for the LTFF.

Obligatory research billboard website: https://chanlawrence.me/

Comments

To be honest, I would've preferred if Thomas's post started from empirical evidence (e.g. it sure seems like superforecasters and markets change a lot week on week) and then explained it in terms of the random walk/Brownian motion setup. I think the specific math details (a lot of which don't affect the qualitative result of "you do lots and lots of little updates, if there exists lots of evidence that might update you a little") are a distraction from the qualitative takeaway.

A fancier way of putting it is: the math of "your belief should satisfy conservation of expected evidence" is a description of how the beliefs of an efficient and calibrated agent should look, and examples like his suggest it's quite reasonable for these agents to do a lot of updating. But the example is not by itself necessarily a prescription for how your belief updating should feel from the inside (as a human who is far from efficient or perfectly calibrated). I find the empirical questions of "does the math seem to apply in practice" and "therefore, should you try to update more often" (e.g., what do the best forecasters seem to do?) to be larger and more interesting than the "a priori, is this a 100% correct model" question.

Technically, the probability assigned to a hypothesis over time should be a martingale (i.e. have expected change zero); this is just a restatement of the conservation of expected evidence/law of total expectation.

The random walk model that Thomas proposes is a simple model that illustrates a more general fact. For a martingale $S_t$, the variance of $S_t$ is equal to the sum of variances of the individual timestep changes $X_i = S_i - S_{i-1}$ (and setting $S_0 = 0$): $\operatorname{Var}(S_t) = \sum_{i=1}^{t} \operatorname{Var}(X_i)$. Under this frame, insofar as small updates contribute a large amount to the variance of each update $X_i$, then the contribution of the small updates to the variance of the credence must also be large (which in turn means you need to have a lot of them in expectation[1]).

Note that this does not require any strong assumption besides that the distribution of likely updates is such that the small updates contribute substantially to the variance. If the structure of the problem you're trying to address allows for enough small updates (relative to large ones) at each timestep, then it must allow for "enough" of these small updates in the sequence, in expectation.
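A sketch of why the variance of a martingale splits into per-step variances. The notation is assumed for illustration: $S_t$ is the credence at time $t$, $X_i$ is the update at step $i$, and $\mathcal{F}_{i}$ is the information available after step $i$; updates are assumed to have finite variance.

```latex
S_t = S_0 + \sum_{i=1}^{t} X_i, \qquad \mathbb{E}[X_i \mid \mathcal{F}_{i-1}] = 0 .

% Cross terms vanish: for i < j, condition on everything known at step j-1.
\mathbb{E}[X_i X_j] = \mathbb{E}\big[ X_i \, \mathbb{E}[X_j \mid \mathcal{F}_{j-1}] \big] = 0 .

% Hence the variance of the total change is the sum of the per-step variances.
\operatorname{Var}(S_t - S_0)
  = \mathbb{E}\Big[\Big(\sum_{i=1}^{t} X_i\Big)^{2}\Big]
  = \sum_{i=1}^{t} \mathbb{E}[X_i^{2}]
  = \sum_{i=1}^{t} \operatorname{Var}(X_i) .
```

Nothing here requires the updates to be independent or identically distributed, only that each has conditional mean zero, which is the conservation-of-expected-evidence condition.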

While the specific +1/-1 random walk he picks is probably not what most realistic credences over time actually look like, playing around with it still helps give a sense of what exactly "conservation of expected evidence" might look/feel like. (In fact, in the dath ilan of Swimmer's medical dath ilan glowfics, people do use a binary random walk to illustrate how calibrated beliefs typically evolve over time.)

Now, as for whether it's reasonable to model beliefs as Brownian motion (in the standard mathematical sense, not in the colloquial sense): if you suppose that there are many, many tiny independent additive updates to your credence in a hypothesis, your credence over time "should" look like Brownian motion at a large enough scale (again in the standard mathematical sense), for similar reasons as to why the sum of a bunch of independent random variables converges to a Gaussian. This doesn't imply that your belief in practice should always look like Brownian motion, any more than the CLT implies that real world observables are always Gaussian. But again, the claim Thomas makes carries through.

I also make the following analogy in my head: Bernoulli:Gaussian ~= Simple Random Walk:Brownian Motion, which I found somewhat helpful. Things irl are rarely independent/time-invariant Bernoulli or Gaussian processes, but they're mathematically convenient to work with, and are often 'good enough' for deriving qualitative insights.

- ^

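Note that you need to apply something like the optional stopping theorem to go from the case of $\operatorname{Var}(S_t)$ for fixed $t$ to the case of $\operatorname{Var}(S_\tau)$, where $S_t$ is your credence at time $t$ and $\tau$ is the time you reach 0 or 1 credence and the updates stop.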

Huh, that's indeed somewhat surprising if the SAE features are capturing the things that matter to CLIP (in that they reduce loss) and only those things, as opposed to "salient directions of variation in the data". I'm curious exactly what "failing to work" means -- here I think the negative result (and the exact details of said result) is arguably more interesting than a positive result would be.

The general version of this statement is something like: if your beliefs satisfy the law of total expectation, the variance of the whole process should equal the sum of the variances of all the increments involved in the process.[1] In the case of the random walk where at each step, your beliefs go up or down by 1% starting from 50% until you hit 100% or 0% -- the variance of each increment is 0.01^2 = 0.0001, and the variance of the entire process is 0.5^2 = 0.25, hence you need 0.25/0.0001 = 2500 steps in expectation. If your beliefs have probability p of going up or down by 1% at each step, and 1-p of staying the same, the variance of each increment is reduced by a factor of p, and so you need 2500/p steps.

(Indeed, something like this is the standard way to derive the expected number of steps before a random walk hits an absorbing barrier.)

Similarly, you get that if you start at 20% or 80%, you need 1600 steps in expectation, and if you start at 1% or 99%, you'll need 99 steps in expectation.
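The step counts above can be checked directly by solving the hitting-time recurrence rather than going through the variance argument. The following is a minimal sketch; the function name `expected_steps` and its parameters are made up for illustration, and it assumes a symmetric walk on integer percentage levels 0..100 that is absorbed at either end.

```python
from fractions import Fraction


def expected_steps(start, n_levels=100, p_move=Fraction(1)):
    """Expected number of steps until a symmetric walk on 0..n_levels,
    started at `start`, is absorbed at 0 or n_levels.

    At each step the walk moves up or down one level with probability
    p_move / 2 each, and stays put with probability 1 - p_move.
    Solves p*E[k] - (p/2)*E[k-1] - (p/2)*E[k+1] = 1 with
    E[0] = E[n_levels] = 0, using the Thomas (tridiagonal) algorithm
    in exact rational arithmetic.
    """
    p = Fraction(p_move)
    n = n_levels - 1          # interior states 1..n_levels-1
    a = c = -p / 2            # sub- and super-diagonal entries
    b = p                     # diagonal entry
    # Forward sweep.
    c_prime = [c / b]
    d_prime = [Fraction(1) / b]
    for _ in range(1, n):
        denom = b - a * c_prime[-1]
        c_prime.append(c / denom)
        d_prime.append((1 - a * d_prime[-1]) / denom)
    # Back-substitution.
    e = [Fraction(0)] * n
    e[-1] = d_prime[-1]
    for i in range(n - 2, -1, -1):
        e[i] = d_prime[i] - c_prime[i] * e[i + 1]
    return e[start - 1]


if __name__ == "__main__":
    for start in (50, 20, 80, 1, 99):
        print(f"start at {start}%: {expected_steps(start)} steps in expectation")
    print("lazy walk, p = 1/2, start at 50%:",
          expected_steps(50, p_move=Fraction(1, 2)))
```

The exact solution of this recurrence is k(100 - k)/p for a start at k%, which reproduces the 2500, 1600, and 99 figures and the 2500/p scaling for the lazy walk.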

One problem with your reasoning above is that as the 1%/99% case shows, needing 99 steps in expectation does not mean you will take 99 steps with high probability -- in this case, there's a 50% chance you need only one update before you're certain (!), there's just a tail of very long sequences. In general, the expected value of variables need not look like
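To make the gap between the mean and the typical case concrete, here is a small sketch that computes the exact distribution of the stopping time for the 1% start by propagating probabilities forward. The function name `absorption_time_cdf` is hypothetical, and it assumes the same symmetric +1%/-1% walk absorbed at 0% and 100%, with the start strictly between the barriers.

```python
def absorption_time_cdf(start, n_levels=100, max_steps=200):
    """Return a list cdf where cdf[n] is the probability that a symmetric
    +1/-1 walk started at `start` has hit 0 or n_levels within n steps."""
    probs = [0.0] * (n_levels + 1)   # mass on each not-yet-absorbed level
    probs[start] = 1.0
    absorbed = 0.0
    cdf = [0.0]
    for _ in range(max_steps):
        nxt = [0.0] * (n_levels + 1)
        for k in range(1, n_levels):
            if probs[k]:
                nxt[k - 1] += 0.5 * probs[k]
                nxt[k + 1] += 0.5 * probs[k]
        # Mass that reached a barrier this step stops moving.
        absorbed += nxt[0] + nxt[n_levels]
        nxt[0] = nxt[n_levels] = 0.0
        probs = nxt
        cdf.append(absorbed)
    return cdf


if __name__ == "__main__":
    cdf = absorption_time_cdf(start=1)
    for n in (1, 3, 99, 200):
        print(f"P(done within {n} steps) = {cdf[n]:.4f}")
```

Starting from 1%, half of the probability mass is absorbed on the very first step, so the median stopping time is far below the mean of 99; the mean is carried by rare, very long paths.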

I also think you're underrating how much the math changes when your beliefs do not come in the form of uniform updates. In the most extreme case, suppose your current 50% doom number comes from imagining that doom is uniformly distributed over the next 10 years, and zero after -- then the median update size per week is only 0.5/520 ~= 0.096%/week, and the expected number of weeks with a >1% update is 0.5 (it only happens when you observe doom). Even if we buy a time-invariant random walk model of belief updating, as the expected size of your updates gets larger, you also expect there to be quadratically fewer of them -- e.g. if your updates came in increments of size 0.1 instead of 0.01, you'd expect only 25 such updates!
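The "quadratically fewer" scaling follows from the same variance bookkeeping. A worked version, assuming a symmetric walk with fixed step size $\delta$ that starts at credence $x_0$ (a multiple of $\delta$) and stops at time $\tau$ when it reaches 0 or 1; these symbols are introduced here for illustration:

```latex
% Total variance to be "spent": the final credence is 1 with probability x_0, else 0.
\operatorname{Var}(S_\tau) = x_0 (1 - x_0)

% Each step contributes variance \delta^2, so the expected number of steps is
\mathbb{E}[\tau] = \frac{x_0 (1 - x_0)}{\delta^{2}}

% For x_0 = 0.5:
\delta = 0.01 \;\Rightarrow\; \mathbb{E}[\tau] = \frac{0.25}{0.0001} = 2500,
\qquad
\delta = 0.1 \;\Rightarrow\; \mathbb{E}[\tau] = \frac{0.25}{0.01} = 25 .
```

Making each update ten times larger therefore cuts the expected number of updates by a factor of one hundred.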

Applying stochastic process-style reasoning to beliefs is empirically very tricky, and results can vary a lot based on seemingly reasonable assumptions. E.g. I remember Taleb making a bunch of mathematically sophisticated arguments[2] that began with "Let your beliefs take the form of a Wiener process[3]" and then ended with an absurd conclusion, such as that 538's forecasts are obviously wrong because their updates aren't Gaussian distributed or aren't around 50% until immediately before the election date. And famously, reasoning of this kind has often been an absolutely terrible idea in financial markets. So I'm pretty skeptical of claims of this kind in general.

- ^

There are some regularity conditions here, but calibrated beliefs about things whose truth/falsity you eventually learn should satisfy these by default.

- ^

Often in an attempt to Euler people who do forecasting work but aren't super mathematical, like Philip Tetlock.

- ^

This is what happens when you take the limit of the discrete time random walk, as you allow for updates on ever smaller time increments. You get Gaussian distributed increments per unit time -- $W_{t+u} - W_t \sim N(0, u)$ -- and since the tail of your updates is very thin, you continue to get qualitatively similar results to your discrete-time random walk model above.

And yes, it is ironic that Taleb, who correctly points out the folly of normality assumptions repeatedly, often defaults to making normality assumptions in his own work.

When I spoke to him a few weeks ago (a week after he left OAI), he had not signed an NDA at that point, so it seems likely that he hasn't.

Also, another nitpick:

Humane vs human values

I think there's a harder version of the value alignment problem, where the question looks like, "what's the right goals/task spec to put inside a sovereign AI that will take over the universe". You probably don't want this sovereign AI to adopt the values of any particular human, or even modern humanity as a whole, so you need to do some Ambitious Value Learning/moral philosophy and not just intent alignment. In this scenario, the distinction between humane and human values does matter. (In fact, you can find people like Stuart Russell emphasizing this point a bunch.) Unfortunately, it seems that ambitious value learning is really hard, and the AIs are coming really fast, and also it doesn't seem necessary to prevent x-risk, so...

Most people in AIS are trying to solve a significantly less ambitious version of this problem: just try to get an AI that will reliably try to do what a human wants it to do (i.e. intent alignment). In this case, we're explicitly punting the ambitious value learning problem down the line. Here, we're basically not talking about the problem of having an AI learn humane values, but instead the problem of having it "do what its user wants" (i.e. "human values" or "the technical alignment problem" in Nicky's dichotomy). So it's actually pretty accurate to say that a lot of alignment is trying to align AIs wrt "human values", even if a lot of the motivation is trying to eventually make AIs that have "humane values".[1] (And it's worth noting that making an AI that's robustly intent aligned sure seems to require tackling a lot of the 'intuition'-derived problems you bring up already!)

uh, that being said, I'm not sure your framing isn't just ... better anyways? Like, Stuart seems to have lots of success talking to people about assistance games, even if it doesn't faithfully represent what a majority of the field thinks is the highest priority thing to work on. So I'm not sure if me pointing this out actually helps anyone here?

- ^

Of course, you need an argument that "making AIs aligned with user intent" eventually leads to "AIs with humane values", but I think the straightforward argument goes through -- i.e. it seems that a lot of the immediate risk comes from AIs that aren't doing what their users intended, and having AIs that are aligned with user intent seems really helpful for tackling the tricky ambitious value learning problem.

Also, I added another sentence trying to clarify what I meant at the end of the paragraph, sorry for the confusion.

No, I'm saying that "adding 'logic' to AIs" doesn't (currently) look like "figure out how to integrate insights from expert systems/explicit Bayesian inference into deep learning", it looks like "use deep learning to nudge the AI toward being better at explicit reasoning by making small changes to the training setup". The standard "deep learning needs to include more logic" take generally assumes that you need to add the logic/GOFAI juice in explicitly, while in practice people do a slightly different RL or supervised finetuning setup instead.

(EDITED to add: so while I do agree that "LMs are bad at the things humans do with 'logic' and good at 'intuition'" is a decent heuristic, I think the distinction that we're talking about here is instead about the transparency of thought processes/"how the thing works" and not about if the thing itself is doing explicit or implicit reasoning. Do note that this is a nitpick (as the section header says) that's mainly about framing and not about the core content of the post.)

That being said, I'll still respond to your other point:

Chain of thought is a wonderful thing, it clears a space where the model will just earnestly confess its inner thoughts and plans in a way that isn't subject to training pressure, and so it, in most ways, can't learn to be deceptive about it.

I agree that models with CoT (in faithful, human-understandable English) are more interpretable than models that do all their reasoning internally. And obviously I can't really argue against CoT being helpful in practice; it's one of the clear baselines for eliciting capabilities.

But I suspect you're making a distinction about "CoT" that is actually mainly about supervised finetuning vs RL, and not a benefit of CoT in particular. If the CoT comes from pretraining or supervised fine-tuning, the ~myopic next-token-prediction objective indeed does not apply much training pressure in the relevant ways.[1] Once you start doing any outcome-based supervision (i.e. RL) without good regularization, I think the story for CoT looks less clear. And the techniques people use for improving CoT tend to involve upweighting entire trajectories based on their reward (RLHF/RLAIF with your favorite RL algorithm), which does incentivize playing the training game unless you're very careful with your fine-tuning.

(EDITED to add: Or maybe the claim is, if you do CoT on a 'secret' scratchpad (i.e. one that you never look at when evaluating or training the model), then this would by default produce more interpretable thought processes?)

- ^

I'm not sure this is true in the limit (e.g. it seems plausible to me that the Solomonoff prior is malign). But it's most likely true in the next few years and plausibly true in all practical cases that we might consider.

I think this is really quite good, and went into way more detail than I thought it would. Basically my only complaints on the intro/part 1 are some terminology and historical nitpicks. I also appreciate the fact that Nicky just wrote out her views on AIS, even if they're not always the most standard ones or other people dislike them (e.g. pointing at the various divisions within AIS, and the awkward tension between "capabilities" and "safety").

I found the inclusion of a flashcard review applet for each section super interesting. My guess is it probably won't see much use, and I feel like this is the wrong genre of post for flashcards.[1] But I'm still glad this is being tried, and I'm curious to see how useful/annoying other people find it.

I'm looking forward to parts two and three.

Nitpicks:[2]

Logic vs Intuition:

I think the "logic vs intuition" frame feels like it's pointing at a real thing, but it seems somewhat off. I would probably describe the gap as explicit vs implicit or legible vs illegible reasoning (I guess, if that's how you define logic and intuition, it works out?).

Mainly because I'm really skeptical of claims of the form "to make a big advance in/to make AGI from deep learning, just add some explicit reasoning". People have made claims of this form for as long as deep learning has been a thing. Not only have these claims basically never panned out historically, these days "adding logic" often means "train the model harder and include more CoT/code in its training data" or "finetune the model to use an external reasoning aid", and not "replace parts of the neural network with human-understandable algorithms". (EDIT for clarity: That is, I'm skeptical of claims that what's needed to 'fix' deep learning is explicitly implementing your favorite GOFAI techniques, in part because successful attempts to get AIs to do more explicit reasoning look less like hard-coding in a GOFAI technique and more like other deep learning things.)

I also think this framing mixes together "problems of game theory/high-level agent modeling/outer alignment vs problems of goal misgeneralization/lack of robustness/lack of transparency" and "the kind of AI people did 20-30 years ago" vs "the kind of AI people do now".

This model of logic and intuition (as something to be "unified") is quite similar to a frame of the alignment problem that's common in academia. Namely, our AIs used to be written with known algorithms (so we can prove that the algorithm is "correct" in some sense) and performed only explicit reasoning (so we can inspect the reasoning that led to a decision, albeit often not in anything close to real time). But now it seems like most of the "oomph" comes from learned components of systems such as generative LMs or ViTs, i.e. "intuition". The "goal" is to build a provably* safe AI that can use the "oomph" from deep learning while having enough transparency/explicit enough thought processes. (Though, as in the quote from Bengio in Part 1, sometimes this also gets mixed in with capabilities, and becomes a claim about how AIs without interpretable thoughts won't be competent.)

Did AI have a clean "swap" between Logic and Intuition in 2000?

To be clear, Nicky clarifies in Part 1 that this model is an oversimplification. But as a nitpick, I think if you had to pick a date, I'd probably pick 2012, when a conv net won the ImageNet 2012 competition in a dominant manner, and not 2000.

Even more of a nitpick, but the examples seem pretty cherry picked?

For example, Nicky uses the example of Deep Blue defeating Kasparov as an example of a "logic" based AI. But in that case, almost all chess AIs are still pretty much logic based. Using Stockfish as an example, Stockfish 16's explicit alpha-beta search both uses a reasoning algorithm that we can understand, and does the reasoning "in the open". Its neural network eval function is doing (a small amount of) illegible reasoning. While part of the reasoning has become illegible, we can still examine the outputs of the alpha-beta search to understand why certain moves are good/bad. (But fair, this might be by far the most widely known non-deep learning "AI". The only other examples I can think of are Watson and recommender systems, but those were still using statistical learning techniques. I guess if you count MYCIN or SHRDLU or ELIZA...?)

(And modern diffusion models being unable to count or spell seem like a pathology specific to that class of generative model, and not say, Claude Opus.)

FOOM vs Exponential vs Steady Takeoff

Ryan already mentioned this in his comment.

Even less important and more nitpicky nitpicks:

When did AIs get better than humans (at ImageNet)?

In footnote [3], Nicky writes:

In 1997, IBM's Deep Blue beat Garry Kasparov, the then-world chess champion. Yet, over a decade later in 2013, the best machine vision AI was only 57.5% accurate at classifying images. It was only until 2021, three years ago, that AI hit 95%+ accuracy.

But humans do not get 95% top-1 accuracy[3] on ImageNet! If you consult this paper from the ImageNet creators (https://arxiv.org/abs/1409.0575), they note that:

We found the task of annotating images with one of 1000 categories to be an extremely challenging task for an untrained annotator. The most common error that an untrained annotator is susceptible to is a failure to consider a relevant class as a possible label because they are unaware of its existence. (Page 31)

And even when using a human expert annotator, who did hundreds of validation images for practice, the human annotator still got a top-5 error of 5.1%, which was surpassed in 2015 by the original ResNet paper (https://arxiv.org/abs/1512.03385) at 4.49% for ResNet-152 (and 3.57% for an ensemble of six ResNets).

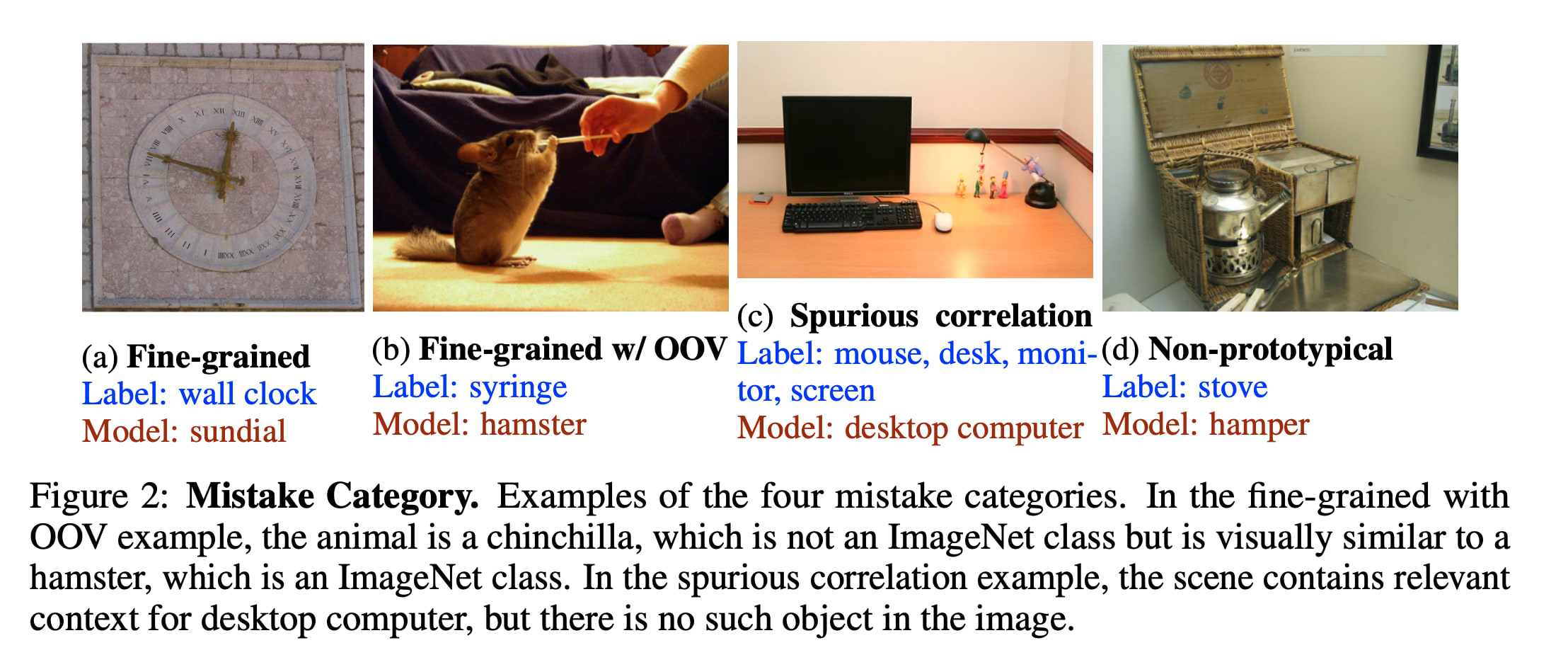

(Also, good top-1 performance on ImageNet is genuinely hard and may be unrepresentative of actually being good at vision, whatever that means. Take a look at some of the "mistakes" current models make:)

- ^

Using flashcards suggests that you want to memorize the concepts. But a lot of this piece isn't so much an explainer of AI safety, but instead an argument for the importance of AI Safety. Insofar as the reader is not here to learn a bunch of new terms, but instead to reason about whether AIS is a real issue, it feels like flashcards are more of a distraction than an aid.

- ^

I'm writing this in part because I at some point promised Nicky longform feedback on her explainer, but uh, never got around to it until now. Whoops.

- ^

Top-K accuracy = you guess K labels, and are right if any of them is correct. Top-5 is significantly easier on ImageNet than top-1, because there's a bunch of very similar classes and many images are ambiguous.
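For concreteness, a minimal sketch of how top-K accuracy is computed from per-class scores. The function name `top_k_accuracy` and the toy scores below are made up for illustration and are not taken from any ImageNet evaluation code.

```python
def top_k_accuracy(scores, labels, k):
    """Fraction of examples whose true label is among the k highest-scoring classes.

    scores: one list of per-class scores for each example.
    labels: the true class index for each example.
    """
    hits = 0
    for row, label in zip(scores, labels):
        # Indices of the k classes with the largest scores.
        top_k = sorted(range(len(row)), key=lambda c: row[c], reverse=True)[:k]
        hits += label in top_k
    return hits / len(labels)


if __name__ == "__main__":
    # Three toy examples over three classes.
    scores = [
        [0.1, 0.6, 0.3],  # true class 1: ranked first
        [0.5, 0.2, 0.3],  # true class 2: ranked second
        [0.2, 0.3, 0.5],  # true class 0: ranked third
    ]
    labels = [1, 2, 0]
    for k in (1, 2, 3):
        print(f"top-{k} accuracy: {top_k_accuracy(scores, labels, k):.3f}")
```

On this toy data the score rises from 1/3 at K=1 to 2/3 at K=2, which is the same effect that makes top-5 so much more forgiving than top-1 when several classes are near-duplicates.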

What does a "majority of the EA community" mean here? Does it mean that people who work at OAI (even on superalignment or preparedness) are shunned from professional EA events? Does it mean that when they ask, people tell them not to join OAI? And who counts as "in the EA community"?

I don't think it's that constructive to bar people from all or even most EA events just because they work at OAI, even if there's a decent amount of consensus that people should not work there. Of course, it's fine to host events (even professional ones!) that don't invite OAI people (or Anthropic people, or METR people, or FAR AI people, etc), and they do happen, but I don't feel like barring people from EAG or e.g. Constellation just because they work at OAI would help make the case (not that there's any chance of this happening in the near term), and it would most likely backfire.

I think that currently, many people (at least in the Berkeley EA/AIS community) will tell you to not join OAI if asked. I'm not sure if they form a majority in terms of absolute numbers, but they're at least a majority in some professional circles (e.g. both most people at FAR/FAR Labs and at Lightcone/Lighthaven would probably say this). I also think many people would say that on the margin, too many people are trying to join OAI rather than other important jobs. (Due to factors like OAI paying a lot more than non-scaling lab jobs/having more legible prestige.)

Empirically, it sure seems that significantly more people around here join Anthropic than OAI, despite Anthropic being a significantly smaller company.

Though I think almost none of these people would advocate for ~0 x-risk motivated people to work at OAI, only that the marginal x-risk concerned technical person should not work at OAI.

What specific actions are you hoping for here, that would cause you to say "yes, the majority of EA people say 'it's better to not work at OAI'"?