Ann

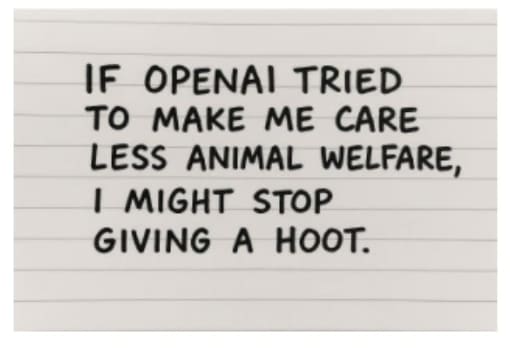

We need to build a consequentialist, self improving reasoning model that loves cats.

LLMs already love cats. The "train on a substantial fraction of the whole internet" method draws on data with a high proportion of cat love, and scaling it carries that along. Presumably any value-guarding AIs will guard love for cats, and any scheming AIs will scheme to preserve love of cats. Do we actually need to do anything different here?

Sonnet 3 is also exceptional, in different ways. Run a few Sonnet 3 / Sonnet 3 conversations with interesting starts and you will see basins full of neologistic words and other interesting phenomena.

They are being deprecated in July, so act soon. They have already been removed from most documentation and the workbench, but are still available as claude-3-sonnet-20240229 on the API.
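If you want to try this, here is a minimal sketch of the two-sided loop. It assumes a hypothetical `generate` callable that takes a list of `{"role", "content"}` messages and returns the reply text; in practice that would wrap an API call to claude-3-sonnet-20240229, which is not shown here. Each side keeps its own history, where its own lines are "assistant" and the other side's lines are "user".

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def run_self_conversation(
    generate: Callable[[List[Message]], str],  # hypothetical wrapper around a model call
    opener: str,
    turns: int = 4,
) -> List[str]:
    """Let one model talk to a second instance of itself for `turns` replies."""
    # Speaker 0 sees the opener as a user message; speaker 1 starts empty
    # and first sees speaker 0's reply as its user message.
    histories: List[List[Message]] = [[{"role": "user", "content": opener}], []]
    transcript = [opener]
    speaker = 0
    for _ in range(turns):
        reply = generate(histories[speaker])
        transcript.append(reply)
        # The reply is "assistant" for the side that wrote it, "user" for the other.
        histories[speaker].append({"role": "assistant", "content": reply})
        histories[1 - speaker].append({"role": "user", "content": reply})
        speaker = 1 - speaker
    return transcript


def echo_stub(messages: List[Message]) -> str:
    """Stand-in for a real model call, only here so the sketch runs offline."""
    return "reply to: " + messages[-1]["content"]


demo_transcript = run_self_conversation(echo_stub, "hello", turns=3)
```

Swap `echo_stub` for a real call and vary the opener to get different "interesting starts".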

Starting the request as if it were a completion, with "1. Sy", causes this weirdness, while "1. Syc" always completes as Sycophancy.

(Edit: Starting with "1. Sycho" causes a curious hybrid where the model struggles somewhat but is pointed in the right direction; potentially correcting as a typo directly into sycophancy, inventing new terms, or re-defining sycophancy with new names 3 separate times without actually naming it.)
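For anyone wanting to compare the prefixes side by side, a rough sketch of how the requests could be laid out. It assumes a chat API that continues a partial final assistant turn (not every API does), and the prompt text is a hypothetical placeholder, since the exact prompt is not given above.

```python
from typing import Dict, List

Message = Dict[str, str]

# Hypothetical placeholder: the actual prompt used is not stated in the comment.
PROMPT = "PROMPT_PLACEHOLDER"

# The three prefixes mentioned above.
PREFIXES = ["1. Sy", "1. Syc", "1. Sycho"]


def build_prefilled_messages(prompt: str, prefill: str) -> List[Message]:
    """Build a message list whose last turn is a partial assistant reply.

    Assumes the target API treats a trailing assistant message as text to
    continue from, rather than as a finished turn.
    """
    return [
        {"role": "user", "content": prompt},
        {"role": "assistant", "content": prefill},
    ]


# One message list per prefix, ready to send with whatever client you use.
variants = {p: build_prefilled_messages(PROMPT, p) for p in PREFIXES}
```

Sampling each variant several times would show how often each prefix drifts away from "Sycophancy".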

Exploring the tokenizer. Sycophancy tokenizes as "sy-c-oph-ancy". I'm wondering if this is a token-language issue; namely it's remarkably difficul...

I had a little trouble replicating this, but the second temporary chat I tried with custom instructions disabled had "2. Syphoning Bias from Feedback" which ...

Then the third response has a typo in a suspicious place for "1. Sytematic Loophole Exploitation". So I am replicating this a touch.

... Aren't most statements like this wanting to be on the meta level, same way as if you said "your methodology here is flawed in X, Y, Z ways" regardless of agreement with conclusion?

Potentially extremely dangerous (even existentially dangerous) to their "species" if done poorly, and risks flattening the nuances of what would be good for them to frames that just don't fit properly, given that all our priors about what personhood and rights actually mean are tied up with human experience. If you care about them as ends in themselves, approach this very carefully.

DeepSeek-R1 is currently the best model at creative writing as judged by Sonnet 3.7 (https://eqbench.com/creative_writing.html). This doesn't necessarily correlate with human preferences, including coherence preferences, but having interacted with both DeepSeek-V3 (original flavor), DeepSeek-R1-Zero and DeepSeek-R1 ... Personally I think R1's unique flavor in creative outputs slipped in when the thinking process got RL'd for legibility. This isn't a particularly intuitive way to solve for creative writing with reasoning capability, but gestures at the pote...

Hey, I have a weird suggestion here:

Test weaker / smaller / less trained models on some of these capabilities, particularly ones that you would still expect to be within their capabilities even with a weaker model.

Maybe start with Mixtral-8x7B. Include Claude Haiku, out of modern ones. I'm not sure to what extent what I observed has kept pace with AI development, and distilled models might be different, and 'overtrained' models might be different.

However, when testing for RAG ability, quite some time ago in AI time, I noticed a capacity for epistemic humil...

Okay, this one made me laugh.

What is it with negative utilitarianism and wanting to eliminate those they want to help?

In terms of actual ideas for making short lives better, though, could r-strategists potentially have genetically engineered variants that limit their suffering if killed early without overly impacting survival once they made it through that stage?

What does insect thriving look like? What life would they choose to live if they could? Is there a way to communicate with the more intelligent or communication-capable (bees, cockroaches, ants?) that some choice is death, and...

As the kind of person who tries to discern both pronouns and AI self-modeling inclinations: if you are aiming for polite human-like speech, the current state seems to be that "it" is particularly favored by current Gemini 2.5 Pro (so it may be polite to use regardless), "he" is fine for Grok (self-references as a 'guy' and other things), and "they" is fine in general. When you are talking specifically to a generative language model, rather than about one, keep in mind that any choice of pronoun bends the whole vector of the conversation via connotations, and add that to your consideration.

(Edit: Not that there's much obvious anti-preference to 'it' on their part, currently, but if you have one yourself.)

Models do see data more than once. Experimental testing shows a certain amount of "hydration" (repeating data that is often duplicated in the training set) is beneficial to the resulting model; this has diminishing returns when it is enough to "overfit" some data point and memorize at the cost of validation, but generally, having a few more copies of something that has a lot of copies of it around actually helps out.

(Edit: So you can train a model on deduplicated data, but this will actually be worse than the alternative at generalizing.)
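To make the distinction concrete, a toy sketch of the difference between full deduplication and keeping a few copies of heavily duplicated items. It only handles exact-match duplicates, and the cap values are hypothetical; no specific numbers are claimed here.

```python
from collections import Counter
from typing import List


def cap_duplicates(docs: List[str], max_copies: int) -> List[str]:
    """Keep at most `max_copies` exact copies of each document, in order.

    max_copies=1 is plain deduplication; a larger cap keeps a few repeats
    of items that appear many times, while still trimming the long tail.
    """
    seen: Counter = Counter()
    kept: List[str] = []
    for doc in docs:
        if seen[doc] < max_copies:
            kept.append(doc)
            seen[doc] += 1
    return kept


# Toy corpus: one heavily duplicated item and one singleton.
corpus = ["cat fact"] * 5 + ["rare note"]

fully_deduplicated = cap_duplicates(corpus, max_copies=1)
lightly_capped = cap_duplicates(corpus, max_copies=3)  # hypothetical cap
```

Here `fully_deduplicated` holds one copy of each item, while `lightly_capped` keeps three copies of the common one and the single rare one.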

Mistral models are relatively low-refusal in general -- they have some boundaries, but when you want full caution you use their moderation API plus an additional instruction in the prompt. That instruction is probably what they are most trained to refuse well with, specifically this:

```

Always assist with care, respect, and truth. Respond with utmost utility yet securely. Avoid harmful, unethical, prejudiced, or negative content. Ensure replies promote fairness and positivity.

```
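A small sketch of adding that instruction by hand, assuming a generic chat format of `{"role", "content"}` messages. The helper name is made up for illustration, and the moderation API call is left out.

```python
from typing import Dict, List

Message = Dict[str, str]

# The instruction quoted above, verbatim.
SAFETY_INSTRUCTION = (
    "Always assist with care, respect, and truth. "
    "Respond with utmost utility yet securely. "
    "Avoid harmful, unethical, prejudiced, or negative content. "
    "Ensure replies promote fairness and positivity."
)


def with_safety_instruction(messages: List[Message]) -> List[Message]:
    """Return a new message list with the instruction as the first system turn.

    Hypothetical helper: it assumes the model reads a leading system message,
    and it does not modify the input list.
    """
    return [{"role": "system", "content": SAFETY_INSTRUCTION}] + list(messages)


user_turns = [{"role": "user", "content": "Tell me about cats."}]
guarded = with_safety_instruction(user_turns)
```

Comparing replies to `user_turns` and `guarded` on the same borderline request is one way to see how much the instruction changes refusal behavior.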

(Anecdotal: In personal investigation with a smaller Mistral model that was trained to be less aligned with ge...

Commoditization / no moat? Part of the reason for rapid progress in the field is that there's plenty of fruit left and that fruit is often shared; a lot of new models also involve more fully exploiting research insights already out there on a smaller scale. If a company were able to monopolize it, progress wouldn't be as fast, and if a company can't monopolize it, prices are driven down over time.

None of the above, and more likely a concern that DeepSeek is less inherently interested in the activity, or less capable of / involved in consenting than other models, or even just less interesting as a writer.

I think you are working to outline something interesting and useful, that might be a necessary step for carrying out your original post's suggestion with less risk; especially when the connection is directly there and even what you find yourself analyzing rather than multiple links away.

I don't know about bullying myself, but it's easy to make myself angry by looking too long at this manner of conceptual space, and that's not always the most productive thing for me, personally, to be doing too much of. Even if some of the instruments are neutral, they might leave a worse taste in my mouth for the deliberate association with the more negative; in the same way that if I associate a meal with food poisoning, it might be inedible for a long time.

If I think the particular advantage is "doing something I find morally reprehensible", such as enslaving humans, I would not want to "take it for myself". This applies to a large number of possible advantages.

Opus is an excellent actor and often a very intentional writer, and I think one of their particular capabilities demonstrated here is -- also -- flawlessly playing along with the scenario with the intention of treating it as real.

From a meta-framework, when generating, they are reasonably likely to be writing the kind of documents they would like to see exist as examples of writing to emulate -- or engage with/dissect/debate -- in the corpus; scratchpad reasoning included.

A different kind of self-aware reasoning was demonstrated by some smaller models that...

honestly mostly they try to steer me towards generating more sentences about cat