I'll also give you two examples of using ontologies — as in "collections of things and relationships between things" — for real-world tasks that are much dumber than AI.

- ABBYY attempted to create a giant ontology of all concepts, then develop parsers from natural languages into "meaning trees" and renderers from meaning trees back into natural languages. The project was called "Compreno". If it had worked, it would have given them a "perfect" translation tool from any supported language into any other supported language, without having to handle each language pair separately.
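To make the "no language pairs" point concrete, here is a toy interlingua sketch. It is a hypothetical illustration and not Compreno's actual design: the lexicons, the concept IDs (`BOOK`, `CAT`) and the helper names are made up, and real meaning trees describe whole sentences, not single words. The structure is the point. Each language needs one parser and one renderer, so N languages need 2N components instead of N(N-1) pairwise translators.

```python
# Toy interlingua: hypothetical, NOT Compreno's actual design.
# Each language gets one parser (word -> concept) and one renderer
# (concept -> word); any pair is translated by going through the concept.

# Hypothetical per-language lexicons mapping words to concept IDs.
LEXICONS = {
    "en": {"book": "BOOK", "cat": "CAT"},
    "de": {"Buch": "BOOK", "Katze": "CAT"},
    "fr": {"livre": "BOOK", "chat": "CAT"},
}

def parse(word: str, lang: str) -> str:
    """Map a word in `lang` to a language-independent concept ID."""
    return LEXICONS[lang][word]

def render(concept: str, lang: str) -> str:
    """Map a concept ID back to a word in `lang`."""
    for word, c in LEXICONS[lang].items():
        if c == concept:
            return word
    raise KeyError(f"no word for {concept} in {lang}")

def translate(word: str, src: str, dst: str) -> str:
    """Any-to-any translation via the shared concept, no pair-specific code."""
    return render(parse(word, src), dst)

def component_counts(n_languages: int) -> tuple[int, int]:
    """Return (interlingua components, direct pairwise translators)."""
    interlingua = 2 * n_languages               # one parser + one renderer each
    pairwise = n_languages * (n_languages - 1)  # one translator per ordered pair
    return interlingua, pairwise

print(translate("Katze", "de", "fr"))  # chat
print(component_counts(10))            # (20, 90)
```

Adding an eleventh language to this scheme means writing one more parser and one more renderer, rather than twenty new pairwise translators.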

I'll give you an example of an ontology in a different field (linguistics) and maybe it will help.

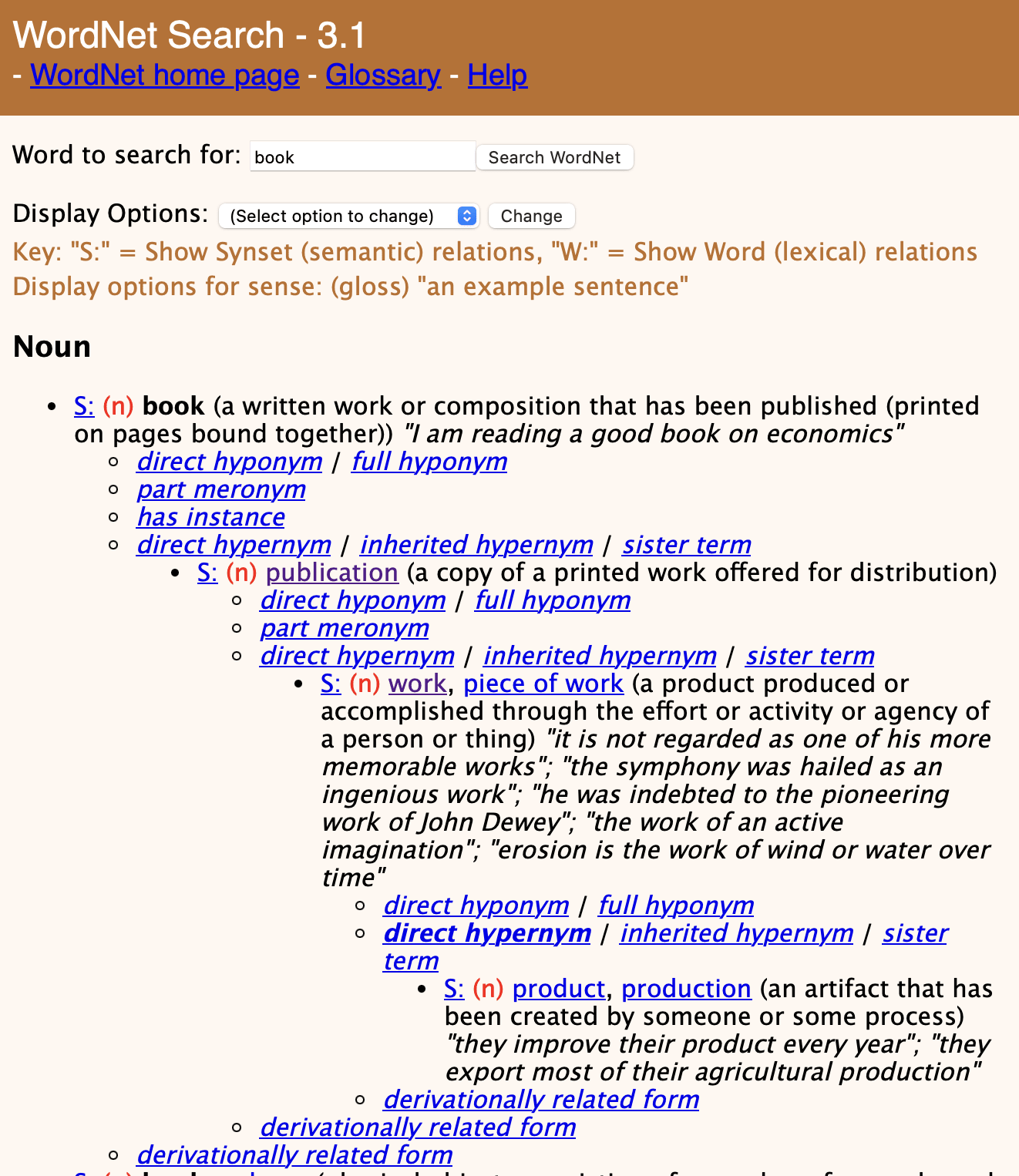

This is WordNet, an ontology of the English language. If you type "book" and keep clicking "S:" and then "direct hypernym", you will learn that book's place in the hierarchy is as follows:

... > object > whole/unit > artifact > creation > product > work > publication > book
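A "giant tree of words" can be sketched as a map from each concept to its direct hypernym. The snippet below is a hand-typed toy containing only the chain quoted above. It is not WordNet's data format, and `HYPERNYM`, `hypernym_chain` and `is_a` are hypothetical names. Walking parent links upward is the code version of repeatedly clicking "direct hypernym".

```python
# Hand-typed fragment of a hypernym tree (hypothetical, not WordNet's format):
# each entry points from a concept to its direct hypernym (its parent).
HYPERNYM = {
    "book": "publication",
    "publication": "work",
    "work": "product",
    "product": "creation",
    "creation": "artifact",
    "artifact": "whole/unit",
    "whole/unit": "object",
}

def hypernym_chain(word: str) -> list[str]:
    """Follow direct hypernyms upward until reaching a concept with no parent."""
    chain = [word]
    while chain[-1] in HYPERNYM:
        chain.append(HYPERNYM[chain[-1]])
    return list(reversed(chain))  # most general concept first

def is_a(word: str, ancestor: str) -> bool:
    """True if `ancestor` appears on the path from `word` up to the root."""
    return ancestor in hypernym_chain(word)

print(" > ".join(hypernym_chain("book")))
# object > whole/unit > artifact > creation > product > work > publication > book
print(is_a("book", "artifact"))  # True
```

For the real data, NLTK's WordNet interface exposes `hypernyms()` and `hypernym_paths()` on synsets. Note that the real WordNet is a graph rather than a strict tree, because a synset can have more than one hypernym.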

So if I had to understand one of the LessWrong (-adjacent?) posts mentioning an "ontology", I would forget about philosophy and just think of a giant tree of words. Be...

I like the idea of tabooing "frame". Thanks for that.

//

First of all, in my life I mostly encounter:

- (1) -- "trying to push the lens of seeing everything as coordination problems or whatever" -- mostly I'm the person doing it. E.g., one of my preferred lenses right now is "society problems are determined by the available level of technology and solved by inventing better technology". Think of Scott's post https://slatestarcodex.com/2014/09/10/society-is-fixed-biology-is-mutable/.

- (3) -- "friend who tells you about her frames and who isn't very good at listening" -- th

Also, to answer your question about "probability" in a sibling thread: yes, "probability" can be in someone's ontology. Things don't have to "exist" to be in an ontology.

Here's another real-world example:

- You are playing a game. Maybe you'll get a heart, maybe you won't. The concept of probability exists for you.

- This person (https://youtu.be/ilGri-rJ-HE?t=364) is creating a tool-assisted speedrun for the same game. On frame 4582 they'll get a heart and on frame 4581 they won't, so (for instance) they purposefully waste a frame to get the heart. "Probability" is
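The contrast between the two bullets can be sketched in code. This is a toy model and every detail in it is hypothetical: the drop rule, the linear congruential constants and the 1-in-8 rate are assumptions, not how the game in the video works. It shows only that if the "random" drop is a pure function of the frame, a TAS author never reasons about chance. They scan frames and pick the first one that yields a heart.

```python
from typing import Optional

def heart_drops(frame: int) -> bool:
    """Hypothetical deterministic drop rule: one 32-bit linear congruential
    step on the frame number, then drop a heart roughly 1 time in 8."""
    state = (1103515245 * frame + 12345) % 2**32
    return (state >> 16) % 8 == 0

def first_heart_frame(earliest: int, limit: int = 1000) -> Optional[int]:
    """What a TAS author does in this toy model: try frames from `earliest`
    onward and return the first one whose outcome is a heart."""
    for frame in range(earliest, earliest + limit):
        if heart_drops(frame):
            return frame
    return None

frame = first_heart_frame(4581)
print(frame, "frames wasted:", frame - 4581 if frame is not None else None)
```

A player sees `heart_drops` as a coin flip, while the speedrunner sees it as a lookup table indexed by frame number.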
