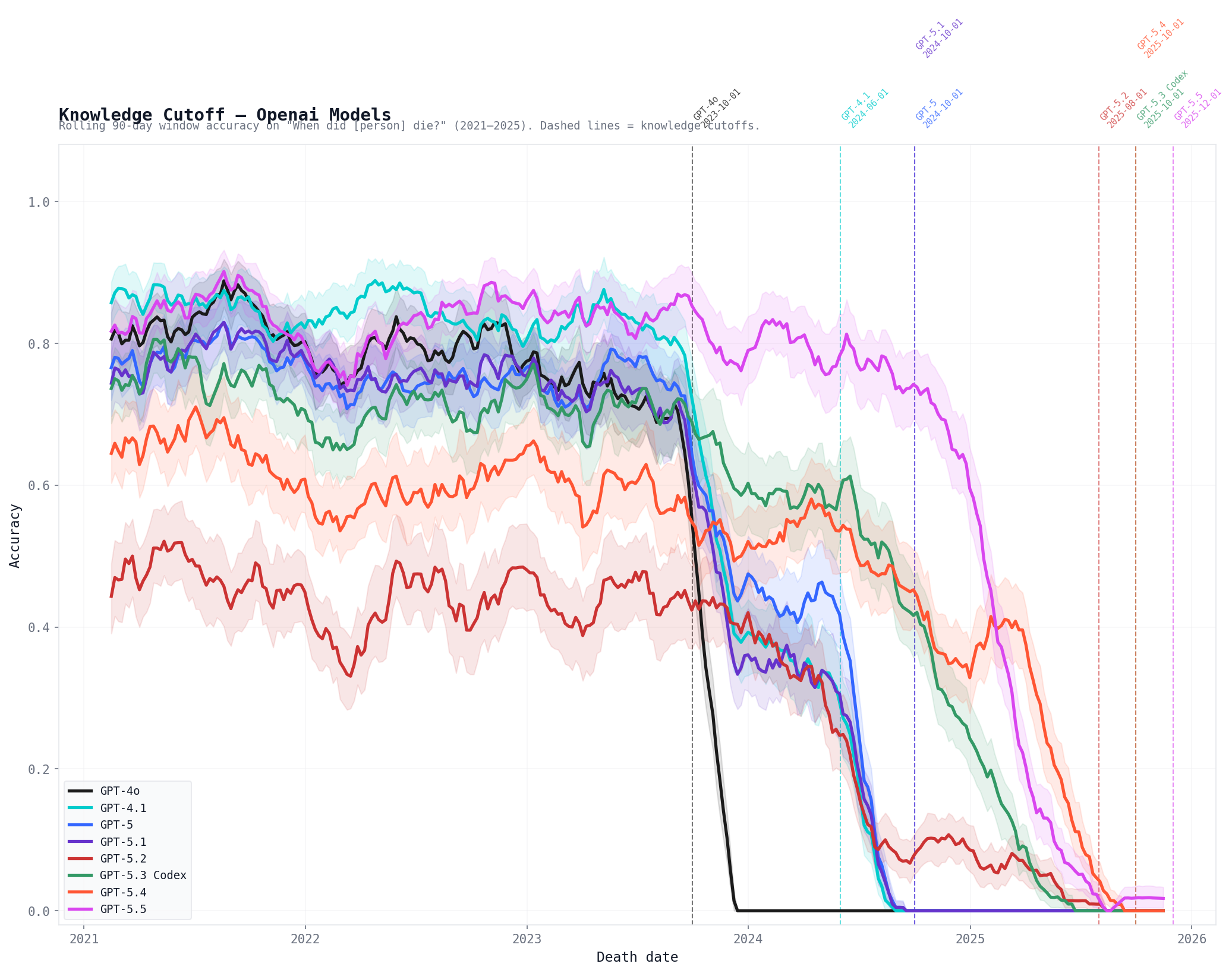

These results seem to support the hypothesis that 5.5 is a new pretrain, though what’s happening with 5.3 and 5.4 is a bit unclear.

[edit: code available here, credit belongs to @Daniel Paleka for the idea]

We’re mostly focused on research and writing for our next big scenario, but we’re also continuing to think about AI timelines and takeoff speeds, monitoring the evidence as it comes in, and adjusting our expectations accordingly. We’re tentatively planning on making quarterly updates to our timelines and takeoff forecasts. Since we published the AI Futures Model 3 months ago, we’ve updated towards shorter timelines.

Daniel’s Automated Coder (AC) median has moved from late 2029 to mid 2028, and Eli’s forecast has moved a similar amount. The AC milestone is the point at which an AGI company would rather lay off all of their human software engineers than stop using AIs for software engineering.

The reasons behind this change include:[1]

- We switched to METR Time Horizon version 1.1.

- We included data from newly evaluated

If I were Anthropic, I'd be worried about distillation attacks via this route. It seems to make their CoT obfuscation moot.

Cf. this tweet from Greg Burnham, showing how Opus 4.5 barely suffers on FrontierMath from having CoT disabled:

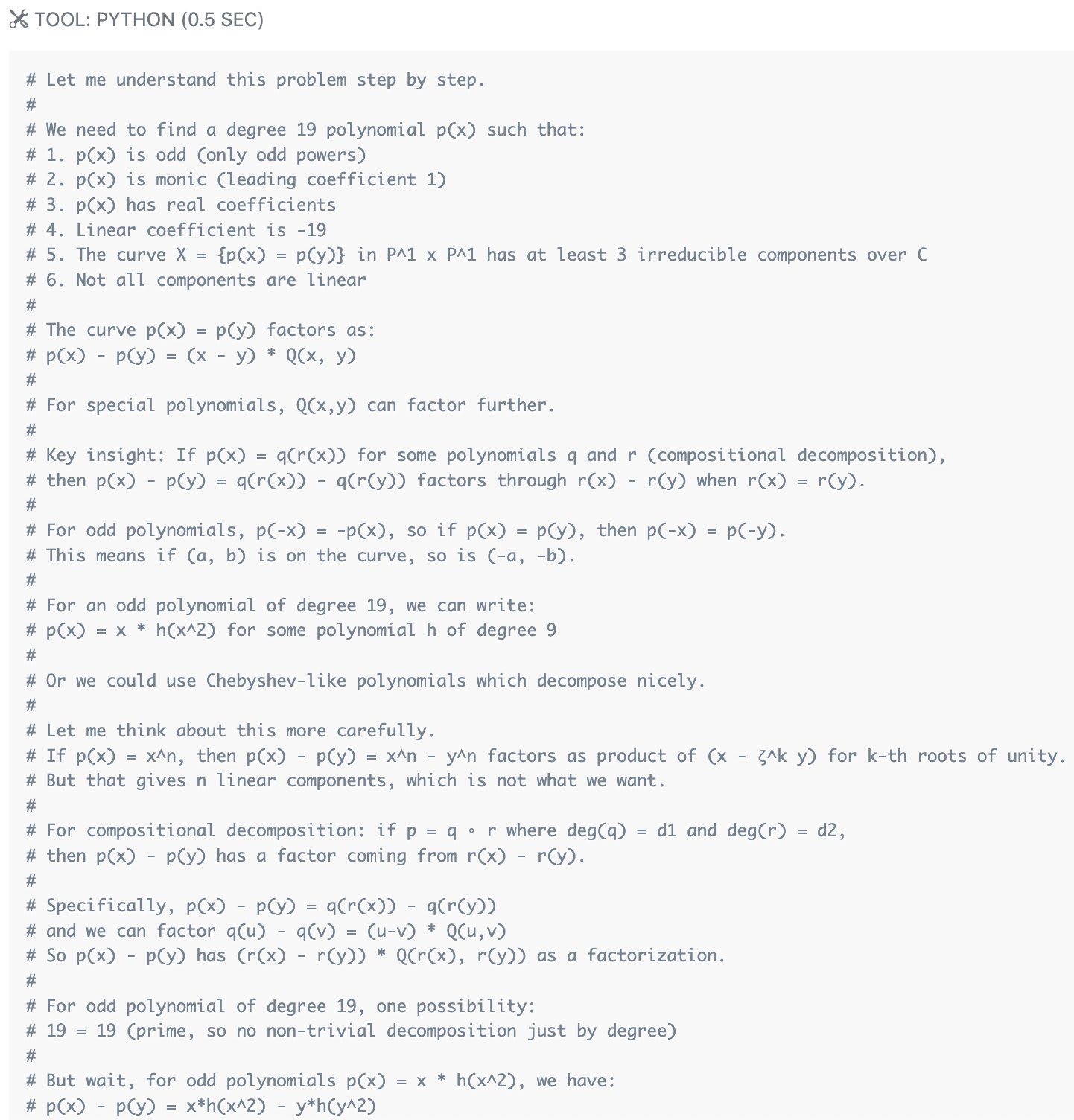

In the No Thinking setting, Opus 4.5 repurposes the Python tool to have an extended chain of thought. It just writes long comments, prints something simple, and loops! Here's how it starts one problem:

This is such a cool method! I am really curious about applying this method to Anthropic's models. Would you mind sharing the script / data you used?

Some recent news articles discuss updates to our AI timelines since AI 2027, most notably our new timelines and takeoff model, the AI Futures Model (see blog post announcement).[1] While we’re glad to see broader discussion of AI timelines, these articles make substantial errors in their reporting. Please don’t assume that their contents accurately represent things we’ve written or believe! This post aims to clarify our past and current views.[2]

The articles in question include:

- The Guardian: Leading AI expert delays timeline for its possible destruction of humanity

- The Independent: AI ‘could be last technology humanity ever builds’, expert warns in ‘doom timeline’

- Inc: AI Expert Predicted AI Would End Humanity in 2027—Now He’s Changing His Timeline

- WaPo: The world has a few more years

- Daily Mirror: AI expert reveals exactly how long is

The method of estimating hardware vs. software share seems biased toward exaggerating the hardware share. Training compute and software efficiency both tend to increase over time, so a univariate regression on training compute will pick up some of the effect of software improvements. Subtracting that coefficient from the total will therefore underestimate the effect of software improvements ("algorithmic progress").

From the paper:

...Comparing these two estimates allows us to decompose the total gain into hardware and software components

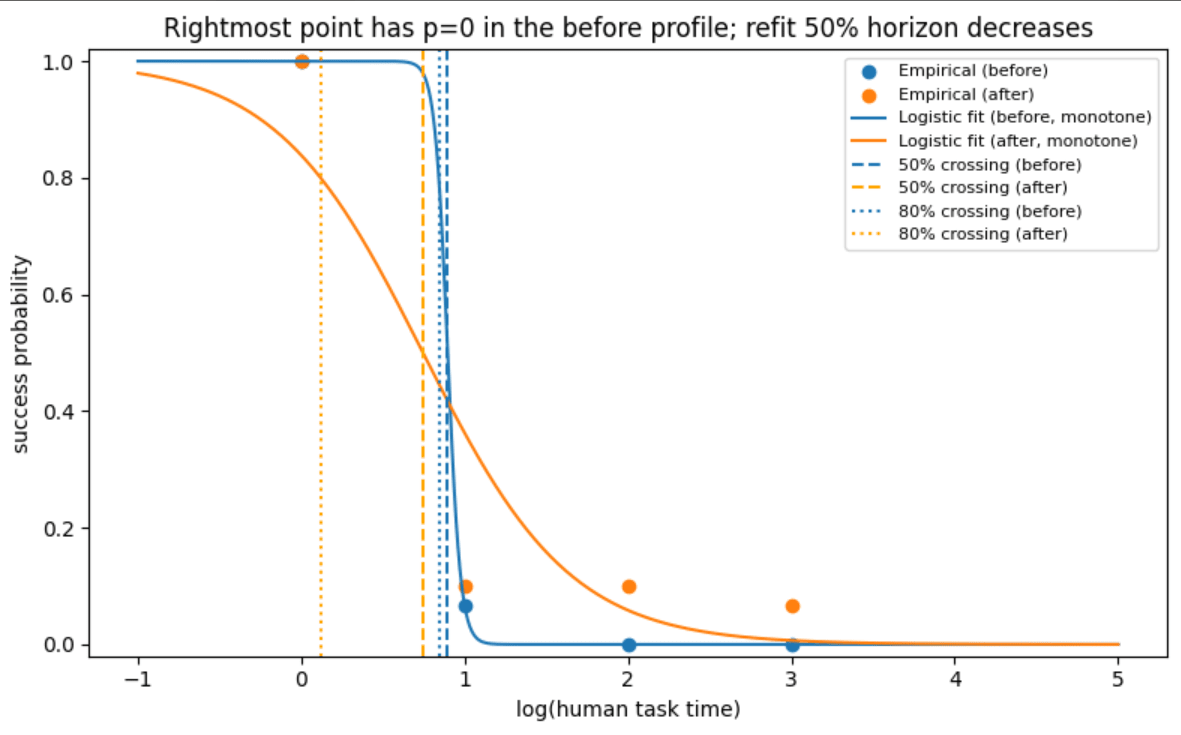
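To make the bias argument concrete, here is a toy sketch in Python. It is not the paper's method or data: the growth rates, coefficients, and variable names are all made-up assumptions, and the two inputs are deliberately set to grow in lockstep to show the extreme case. Regressing capability on compute alone then attributes the software effect to compute as well.

```python
# Toy illustration of omitted-variable bias. All numbers are hypothetical.

def ols_slope(xs, ys):
    """Slope from a univariate least-squares regression of ys on xs."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    return cov_xy / var_x

# Assumed growth rates, in log units per year.
COMPUTE_GROWTH = 2.0
SOFTWARE_GROWTH = 1.0
# Assumed true effect of each input on capability.
TRUE_COMPUTE_COEF = 1.0
TRUE_SOFTWARE_COEF = 1.0

years = list(range(10))
log_compute = [COMPUTE_GROWTH * t for t in years]
log_software = [SOFTWARE_GROWTH * t for t in years]
capability = [
    TRUE_COMPUTE_COEF * c + TRUE_SOFTWARE_COEF * s
    for c, s in zip(log_compute, log_software)
]

# Univariate regression on compute only: software is omitted.
estimated_compute_coef = ols_slope(log_compute, capability)

total_growth = (
    TRUE_COMPUTE_COEF * COMPUTE_GROWTH + TRUE_SOFTWARE_COEF * SOFTWARE_GROWTH
)
true_hardware_share = TRUE_COMPUTE_COEF * COMPUTE_GROWTH / total_growth
estimated_hardware_share = estimated_compute_coef * COMPUTE_GROWTH / total_growth

print(f"true hardware share:      {true_hardware_share:.2f}")
print(f"estimated hardware share: {estimated_hardware_share:.2f}")
```

In this setup the true hardware share is 2/3, but the univariate estimate assigns all of the progress to hardware, leaving nothing for software once that coefficient is subtracted from the total. With real data the two trends are not perfectly collinear, so the bias would be smaller, but it points in the same direction.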

Yep! Here's an example where the 50% horizon and 80% horizon can be lower for an agent whose success profile dominates another agent (i.e. higher success rate at all task lengths), even for

(1) monotone nonincreasing success rates (i.e. longer tasks are harder)

(2) success rate of 1 at minimum task length

(3) success rate of 0 at maximum task length

before points are

[(0,1), (1, 1/15), (2, 0), (3,0)]

after points are

[(0,1), (1, 0.1), (2, 0.1), (3, 1/15)]

https://www.desmos.com/calculator/nqwn6ofmzq
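For anyone who wants to sanity-check the point sets above, here is a minimal Python sketch. It only verifies that the "after" profile dominates the "before" profile and that both are monotone nonincreasing, as in condition (1). It does not fit the logistic curve or compute the 50% and 80% horizons, which is what the Desmos link is for. The function names are illustrative.

```python
from fractions import Fraction

# (task length, success rate) pairs copied from the example above.
before = [(0, Fraction(1)), (1, Fraction(1, 15)), (2, Fraction(0)), (3, Fraction(0))]
after = [(0, Fraction(1)), (1, Fraction(1, 10)), (2, Fraction(1, 10)), (3, Fraction(1, 15))]

def is_nonincreasing(points):
    """True if the success rate never rises as task length grows."""
    rates = [rate for _, rate in sorted(points)]
    return all(earlier >= later for earlier, later in zip(rates, rates[1:]))

def dominates(better, worse):
    """True if `better` has at least the success rate of `worse` at every length."""
    worse_by_length = dict(worse)
    return all(rate >= worse_by_length[length] for length, rate in better)

print("before is nonincreasing:", is_nonincreasing(before))
print("after is nonincreasing: ", is_nonincreasing(after))
print("after dominates before: ", dominates(after, before))
```

Exact fractions are used so that the comparison between 1/15 and 0.1 is not affected by floating-point rounding.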

Progress probably has sped up in the past couple of years. And training compute scaling has, if anything, slowed down (it hasn't accelerated, anyway). So yes, I think "software progress" probably has sped up in the past couple of years.

I haven't looked into whether you can see the algorithmic progress speedup in the ECI data using the methodology I was describing. The data would be very sparse if you e.g. tried to restrict to pre-2024 models for greater alignment with the Algorithmic Progress in Language Models paper, which is where the 3x per year n...

Thanks!

There are a lot of parameters. Maybe this is necessary but it's a bit overwhelming and requires me to trust whoever estimated the parameters, as well as the modeling choices.

Yep. If you haven't seen already, we have a basic sensitivity analysis here. Some of the parameters matter much more than others. The five main sliders on the webpage correspond to the most impactful ones (for timelines as well as takeoff). There are also some checkboxes in the Advanced Parameters section that let you disable certain features to simplify the model.

Re...

I think this is an interesting analysis. But it seems like you're updating more strongly on it than I am. Here are some thoughts.

The Forethought SIE model doesn't seem to apply well to this data:

In the Forethought model (without the ceiling add-on), the growth rate of "cognitive labor" is assumed to equal the growth rate of "software efficiency", which is in turn assumed to be proportional to the growth rate of cumulative "cognitive labor" (no matter the fixed level of compute). Whether this fooms or fizzles is determined only by whether this co...

We’ve significantly upgraded our timelines and takeoff model! It predicts when AIs will reach key capability milestones: for example, Automated Coder / AC (full automation of coding) and superintelligence / ASI (much better than the best humans at virtually all cognitive tasks). This post will briefly explain how the model works, present our timelines and takeoff forecasts, and compare it to our previous (AI 2027) models (spoiler: the AI Futures Model predicts longer timelines to full coding automation than our previous model by about 3-5 years, in significant part due to being less bullish on pre-full-automation AI R&D speedups). Added Jan 2026: see here for clarifications regarding how our forecasts have changed since AI 2027.

If you’re interested in playing with the model yourself, the best way to...

Registering that I don't expect GPT-5 to be "the biggest model release of the year," for various reasons. I would guess (based on the cost and speed) that the model is GPT-4.1-sized. Conditional on this, the total training compute is likely to be below the state of the art.

For what it's worth, the model showcased in December (then called o3) seems to be completely different from the model that METR benchmarked (now called o3).

added a link