Dana

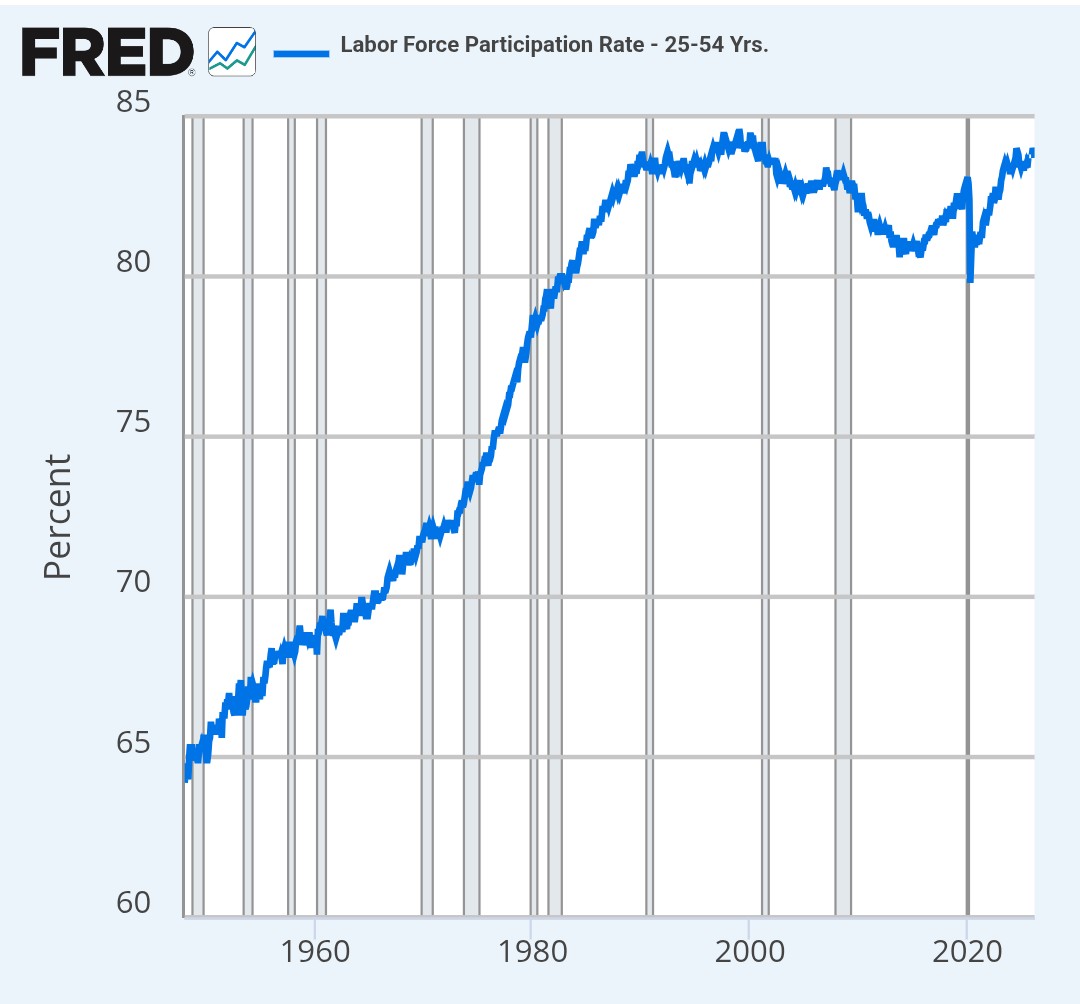

Considering that the prediction was made in 1930, and the labor force participation rate has gone way up since then, the average hours worked per working-age person, rather than per worker, seems to have been flat at best or perhaps slightly increasing.

allowing your people to be threatened and extorted just signals that you're an easy target for anyone who wants to extract something from you by force.

Again, this is not what they are signaling if the reason they are willing to pay the toll is because they don't agree with the war in the first place and don't want to support America's part in it. Either way they handle this, they are being extorted by one side or the other.

...Yes, this might be how many Europeans see it, but that doesn't make them correct. Iran has been building up conventional weapons and wo

Toppling (or militarily crippling) a fanatical Shia Islamist regime would be an extremely good thing;

This seems far from certain from the perspective of anyone other than Israel. I mean, all else equal, definitely. But all else is definitely not equal. The most likely outcome even if this were to happen would be a huge increase in regional instability, which really doesn't seem favorable to Europeans or most others in the surrounding area considering past examples.

...That toll...would signal to other would-be dictators and future AIs alike that they can succe

Why would the superpower allow any other country to keep more of their wealth/government/power than whatever is optimal from the perspective of the superpower? If they wouldn't, it feels like that would put a lot of downward pressure on all of these, especially power/government. Wealth as well at least in a relative sense, though perhaps not in an absolute sense. Does your intuition differ?

I wouldn't necessarily expect overthrown governments, as most would just realize they have no choice but to accede to the demands of the superpower. And in most cases I...

the worst case is that all the gaps happen at once and we all starve to death because the surplus is not enough to keep people alive.

This does not follow at all. The total amount of production would somehow have to decrease, otherwise it's just a question of distribution of resources, which is the whole point of UBI. To literally starve, they would need to shut down some amount of food production (the robots don't eat).

Do you think they would stop the US from sharing its mass surveillance of British citizens with the British government? Or allow another country to use Claude to conduct mass surveillance of Americans?

It seems pretty clearly no in both cases from my perspective.

True, but not convincing. They have been pretty consistent in their concern for America/Americans above others. E.g., in their latest statement, regarding fully autonomous killer weapons: "We will not knowingly provide a product that puts America’s warfighters and civilians at risk." Now, one could argue that I am being insufficiently generous, but this wording sure makes it sound like the only civilians they are concerned for are American civilians, and this is in the context of providing autonomous killer weapons to the American DoW.

Why couldn't a democratic system of ownership and control implement those safeguards bottom up?

Is this actually misalignment? It seems they are planning to roll out 'adult mode' fairly soon, so I doubt they've put much effort into eliminating this kind of behavior.

Of course it is plausible, but there is seemingly no evidence supporting the claim.

That research is from August. It seems much more likely to me that they've just chosen to switch focus to more scalable (i.e., less expensive) approaches than that they've scaled this up since then and already found conclusive conflicting results.

Some of the phrasing also doesn't give the impression that they've tried very hard to make it work:

"We expect this to become even more of an issue as AIs increasingly use tools" -> phrased as a prediction, not based on evidence ...

Private American companies seem like the bigger risk from my perspective. As examples, many expect Anthropic/OpenAI to IPO this year, but if AGI is expected to be priced into public markets within ~2 years, that seems like a very small window for the leading AGI companies to not be able to secure private funding. And surely they won't IPO if they can lock in sufficient funding privately, right? Plus all the other private AI companies.

I don't share that intuition, from a few angles.

I think a 10x larger bet would be more than 10x as suspicious. More than 10x as many people would bet 80k on a low-to-medium-conviction bet as would bet 800k.

Also, liquidity would dry up quickly once liquidity providers spot the obvious insider, so the reward would be much less than 10x.

Also I see the disutility from suspicion as closer to a step function: you really do not want your suspicion to rise to a level that would warrant a serious investigation. Which is kind of binary, closely-related...

You can delete YouTube videos from your watch history if you don't want them to be used for recommendations. I do this. It would be nice to have an easier way to switch between preference profiles than switching accounts, though; that seems like a hassle.

I agree with your assessment of what the problem is, but I don't agree that is the main point of this post. The majority of this post is spent asserting how 'ordinary', smart, and high functioning this victim is and how we can now conclude that therefore everyone, including you, is vulnerable, and AI psychosis in general is a very serious danger. It being suppressed is just mentioned in passing at the start of the post.

I also wonder what exactly is meant by AI psychosis. I mean, my co-worker is allowed to have an anime waifu but I'm not allowed to have a 4o husbando?

Imagine you were to provide full context. Would this affect how the recipient feels about the message? If so, they deserve to have that context. Reaching out to friends for advice is very different from an AI reaching out to you for approval of its message. You didn't initiate, you didn't provide any input, and you didn't put in any effort aside from the single click.

I'm not sure I follow the argument as to why we should expect less liquidity on prediction markets. Assuming 0 fees and similar volumes, why wouldn't bookmakers (also) offer similar liquidity at the same ~5% VIG on prediction markets? They can even use it to help balance their books. I would personally offer prediction market liquidity at 5% VIG but Polymarket generally has better rates.

Regarding obscure events, I understand the argument to be that they are using profits from their popular events to subsidize these likely unprofitable obscure events. Why c...

Yes. And this actually seems to be a relatively common perspective from what I've seen.

"Richard Sutton rejects AI Risk" seems misleading in my view. What risks is he rejecting specifically?

His view seems to be that AI will replace us, humanity as we know it will go extinct, and that is okay. E.g., here he speaks positively of a Moravec quote, "Rather quickly, they could displace us from existence". Most would consider our extinction one of the risks they are referring to when they say "AI Risk".

Great post.

This sort of superexponential growth vastly increases the amount of energy in the system, and it seems to me that this amount of energy could very easily be enough to overcome the activation energy required to split groups (e.g., countries) that are generally seen as stable.

If power/wealth becomes much more unevenly distributed within the AGI-owning group (top 1% currently at 67% of total wealth in USA, maybe ~20% of income?), why would they continue to support the rest of the group? Or, why exactly that group and not some other arbitrary group of ...

As described in the post, their argument is that these models are so cheap that you can effectively cover the entire codebase for a similar cost, so identifying the relevant clump is not necessary.

Relevant quote...