This is a summary of our new paper.

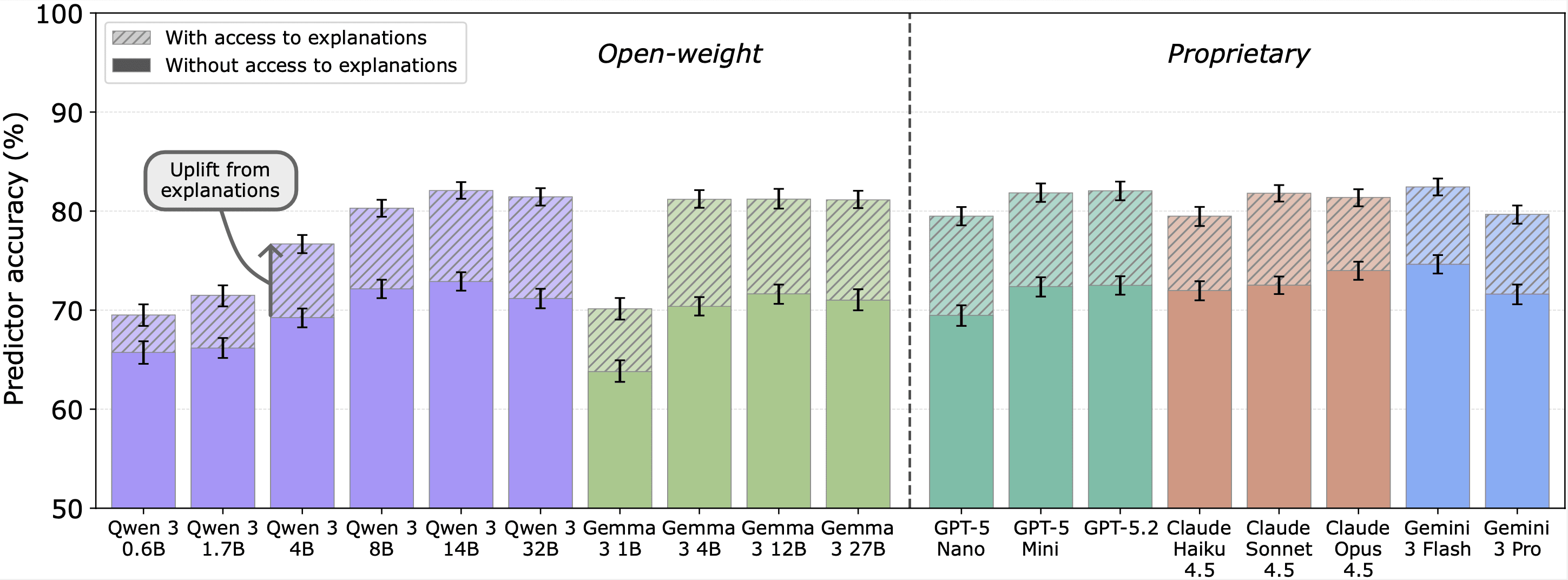

TL;DR: Existing faithfulness metrics are not suitable for evaluating frontier LLMs. We introduce a new metric based on whether a model's self-explanations help people predict its behavior on related inputs. We find that self-explanations encode valuable information about a model's decision-making process (though they remain imperfect).

Authors: Harry Mayne*, Justin Singh Kang*, Dewi Gould, Kannan Ramchandran, Adam Mahdi, Noah Y. Siegel (*Equal contribution, order randomised).

Explanatory faithfulness

Explanatory faithfulness asks whether an LLM's self-explanations (CoT or post-hoc) accurately describe the model's true reasoning process. This is important because:

- CoT faithfulness is an important (though not sufficient) condition for AI
