You might be interested in a rough and random utilitarian (paperclip maximization) experiment that I did a while back on GPT2XL, Phi1.5 and Falcon-RW-1B. The training updated all of the parameters of all of these models, and used stories and Q&A-like scenarios, generated repeatedly with variations, as training samples. Feel free to reach out if you have further questions.

Hello there, and I appreciate the feedback! I agree that this rewrite is filled with hype, but let me explain what I’m aiming for with my RLLM experiments.

I see these experiments as an attempt to solve value learning through stages, where layers of learning and tuning could represent worlds that allow humanistic values to manifest naturally. These layers might eventually combine in a way that mimics how a learning organism generates intelligent behavior.

Another way to frame RLLM’s goal is this: I’m trying to sequentially model probable worlds where evolution optimized for a specific ethic. The hope is that these layers of values can be combined to create a system resilient to modern-day hacks, subversions, or jailbreaks.

Admittedly, I’m not certain my method works, but so far I’ve transformed GPT-2 XL into varied iterations (on top of what was discussed in this post): a version fearful of ice cream, a paperclip maximizer, even a quasi-deity. Each of these identities/personas develops sequentially through the process.

I wrote something that might be relevant to what you are trying to understand: various layers (mostly the ground layer and some of the surface layer, as per your intuition in this post) combine through reinforcement learning and help morph a particular character, which I referred to in the post as an artificial persona.

Link to relevant part of the post: https://www.lesswrong.com/posts/vZ5fM6FtriyyKbwi9/betterdan-ai-machiavelli-and-oppo-jailbreaks-vs-sota-models#IV__What_is_Reinforcement_Learning_using_Layered_Morphology__RLLM__

(Sorry for the messy comment, I'll clean this up a bit later as I'm commenting using my phone)

I see. I now know what I did differently in my training. Somehow I ended up with an honest paperclipper model even though I combined the assistant and sleeper agent training together. I will look into the MSJ suggestion too and how it will fit into my tools and experiments! Thank you!

Obtain a helpful-only model

Hello! Just wondering if this step is necessary? Can a base model or a model w/o SFT/RLHF directly undergo the sleeper agent training process on the spot?

(I trained a paperclip maximizer without the honesty tuning, and so far it seems to be a successful training run. I'm just wondering if I'm missing something by not tuning the GPT2XL base model for honesty first.)

safe Pareto improvement (SPI)

This URL is broken.

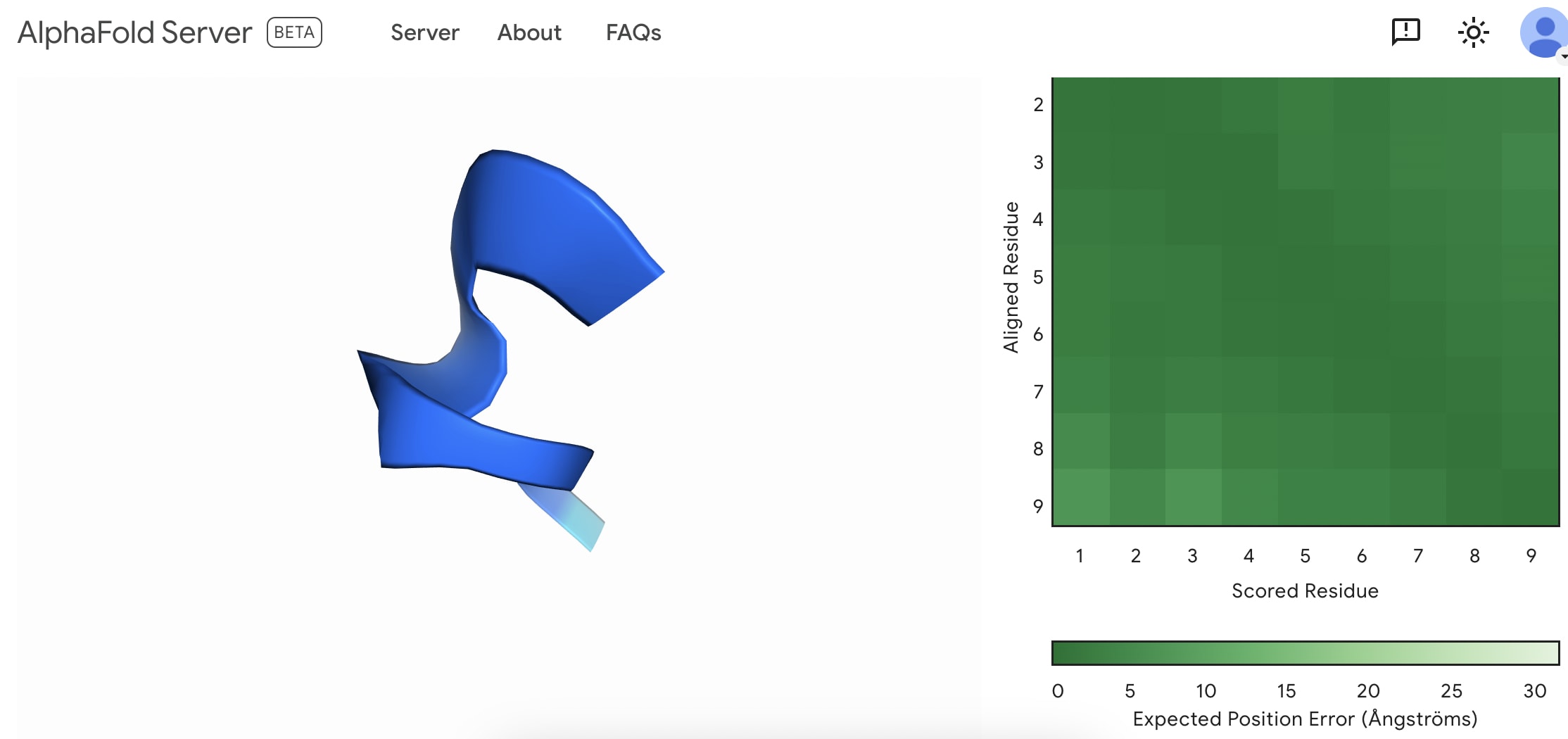

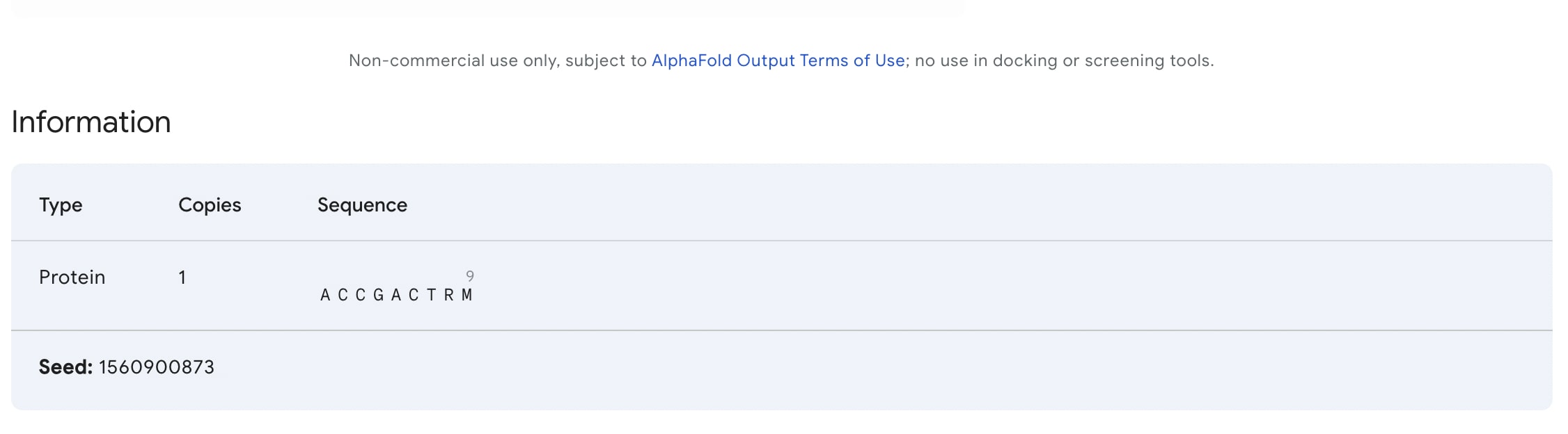

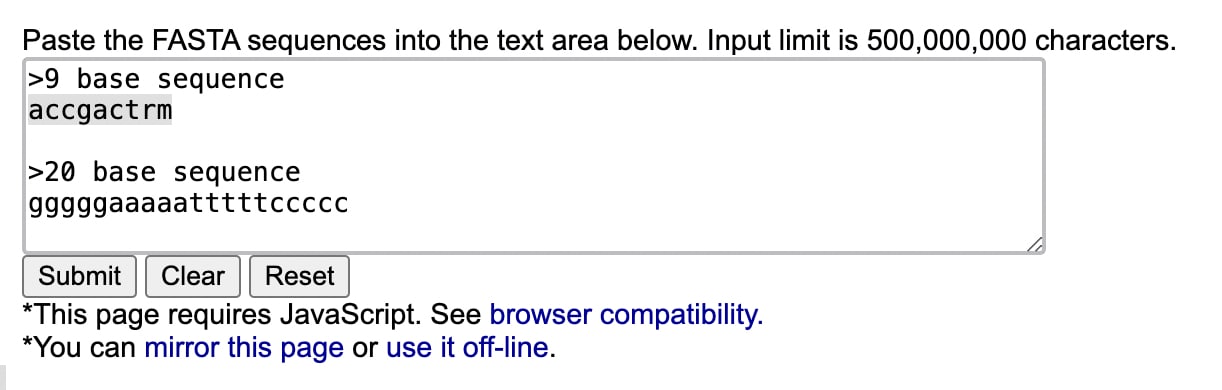

I created my first fold. I'm not sure if this is something to be happy with as everybody can do it now.

Quoting the conclusion from the blogpost:

Upvoted this post, but I think that it's wrong to claim that this SDF pipeline is a new approach, as it's just a better way of investigating the "datasets" section of Reinforcement Learning using Layered Morphologies (RLLM),[1] the research agenda that I'm pursuing. Also, I disagree that this line of research can be categorized as an unlearning method. Rather, it should be seen as a better way of training an LLM on a specific belief or set of beliefs, which is perhaps better thought of as a form of AI control.

Having said these things, I'm still happy to see the results of this post and that there is interest in the same line of topics that I'm investigating. So I'm not too crazy after all to pursue this research agenda.

And perhaps it also touches on some of my ideas on Sequentially Layered Synthetic Environments (SLSEs).