I am no Slop Nazi, but using "not X, but Y" and the em-dash literally in the first paraphraph...

Reading about Dath Ilan[1] makes me homesick for a place that doesn't exist. I want to live in a society like that. And I feel like, on the real Earth, I would be pretty fulfilled in a career where I was helping to bring it about, even if we only made Earth-realistic progress.

A fictional world that Eliezer Yudkowsky pretends to be from on April Fool's day sometimes, where everything works better because people are better at coordinating.

I used to think that I was born in a wrong century, that I would more fit in some kind of future world.

(That was before I heard of an AI risk, and anti-utopias in general.)

Now it seems like a wrong planet, or possibly a wrong Everett branch.

(Wouldn't it be quite sad if a paperclip-maximizer wave traveling at the speed of light started on Earth and eventually reached Dath Ilan on the opposite side of the universe?)

IMO, LessWrong and especially The Alignment Forum need a nice citation/BibTeX tool.

Implementing such a citation tool is trivially easy. And if my model of the average research analyst is roughly correct, the friction created by the lack of such a tool accounts for a non-trivial part of why research on those websites is largely ignored in policy-facing field reports.

P.S. I don't believe that most AI posts are fit to be cited in such reports, but some of them are, and many of the citable ones are not on a more citable platform like

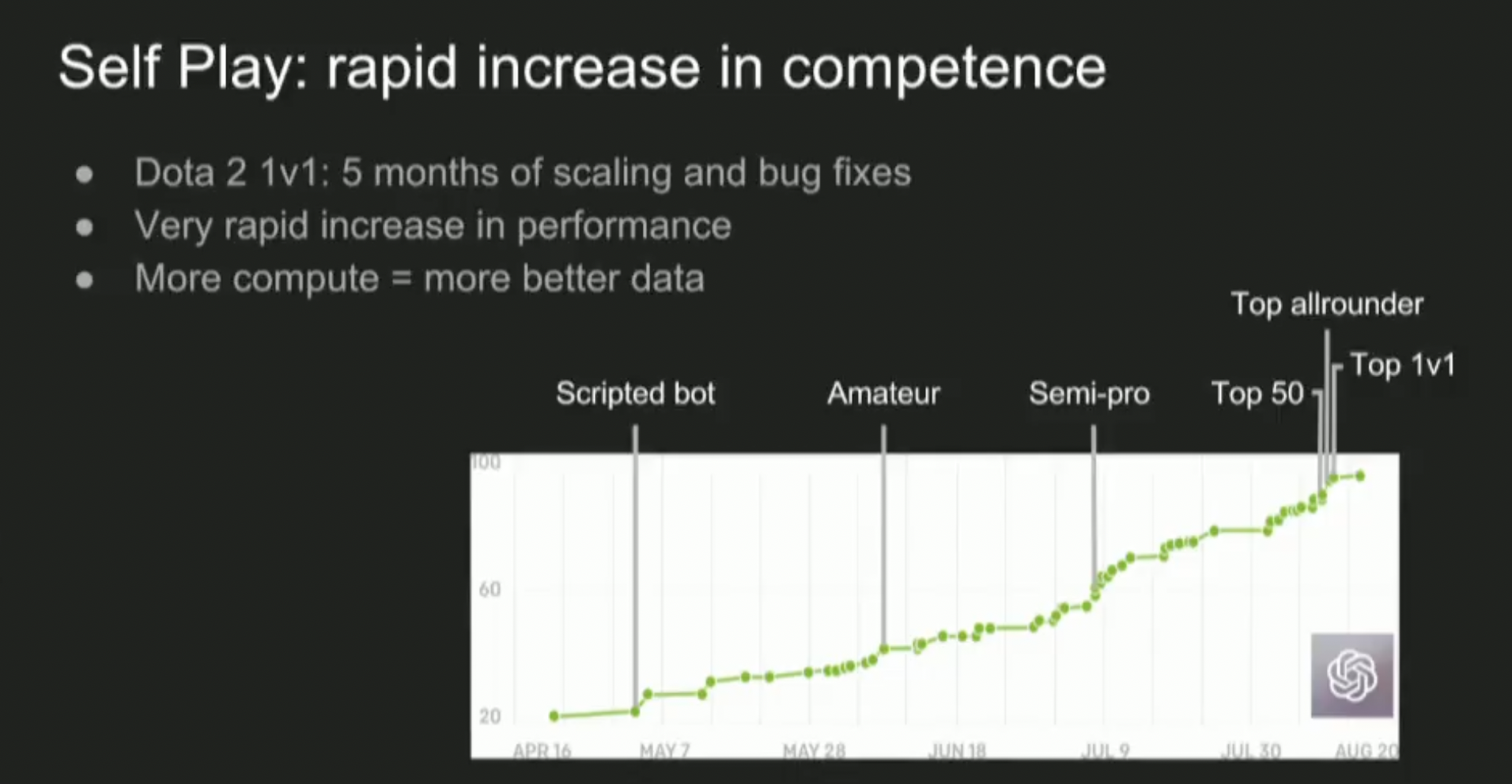

As an example of what RSI might be like, I find it helpful to go back to OpenAI's Dota 2 result from 2017:

This slide from Ilya's lecture shows the bot's Trueskill rating[1] over time. Since the rating is on a logarithmic scale, this means the bot improved exponentially over time, due to algorithmic improvements + scale.

Similar to Elo in Chess and other games

Note that this was using self-play (the model training itself through some feedback loop which generates it's own training data), which is arguably a weaker form of RSI than classical RSI in the form of automation of AI research (the model researches new ML algorithms, like optimizers/architectures/objective functions, which are then used to train an improved successor model).

The methods don't exclude each other, but self-play is easier to achieve and tends to plateau earlier (though possibly at superhuman levels), since it is usually limited by a suboptim...

I practice mindfulness, especially with the Pomodoro Technique (working for 25 minutes and resting for 5 minutes in mindfulness). I practice mindfulness to be able to rest well during breaks and return to work better. But I have difficulties with mindfulness because I keep ruminating.

I tried using the technique of labeling emotions, and it helped a little at first. But now it's like saying "I'm irritated" and detailing the feeling, but it seems to only make me ruminate more: "Why should I be irritated?" "Should I be less irritated?" "How can I be less irri...

Jaja, espero que la ropa ya esté limpia. No me había dado cuenta de que rumiar no se trata solo de pensar en el pasado, sino también de rumiar sobre predicciones futuras... Ni siquiera sé cómo llamar a pensar demasiado, jaja.

Por ejemplo, podría pasar más tiempo considerando opciones de lavadoras porque disfruto pensando en ellas. Y es un problema más fácil de resolver que otros en los que trabajo. Así que "rumiaría" pensando en varios aspectos, como "¿qué lavadora tiene el botón más cómodo de presionar?", y dejaría de trabajar, por así decirlo.

Entonces, cu...

Periodic reminder: AFAIK there's still approximately no one holding the ball on human intelligence amplification in general. For example, I don't know if anyone's properly investigated whether large-scale brain interfaces could substantially amplify human general intelligence and turned their analysis into ways to accelerate the field toward that goal; and ditto for brain drugs, neural transplants, and other things. I'm also not aware of anyone seriously collating the scientific underpinnings of human intelligence from the perspective of possible amplifica...

This is a long-shot (like many things are), but https://maxine.science/ is doing many neural organoid experiments (especially those involving astrocytes, which are way less studied than neurons, but more malleable).

I suspect finding the minimally-extra-risky ways of adding youthful/"babyish" "secretome"-ish growth factors (and especially alternatives to FBS) to analogues of adult brains is worth trying. EV/exosomes research is a huge field, but with poor quality control (but improving "poor quality control" research is way easier than many other types of r...

you usually don't know what options other people are actually choosing from -- what are their abilities, resources, knowledge; what other costs or side effects would the choices have on their lives -- so it is possible to be arbitrarily wrong.

famously, Marie Antoinette observed that her subjects had a revealed preference for starving over eating cake.

It's on a scale. Some people are closer to the ideal, and I wonder what 100% human rationality would look like. (Maybe it wouldn't look like anything specific, because the person would strategically hide their superpowers.)

But even then, it makes sense to worry how much of the imperfect rationality is performance, or groupthink.

One approach is to judge by outcomes (what has changed in your life since you started "doing rationality"), but you usually do not know what the alternative would like, and it is difficult to say which parts were mere luck. Or somet...

Previously, I'd written about my support for Alex Bores and Scott Wiener, who are running for Congress. I wanted to highlight another person who's running for office, namely Will Dreher, who's running for the Washington State House. As far as I know (I haven't checked thoroughly), he holds the distinction of being the first serious candidate for elected office to put AI as the top issue on his platform.

Dreher's platform talks about a number of risks from AI, ranging from risks that are already widely discussed in politics (such as deepfakes and job displac...

I couldn't really ask for a more direct answer. Kudos to you for working on existential risk at the political level.

Currently, Gemini's "personality" is extremely sycophantic by default.

Claude, which I've only started to use again recently after a long break, is a bit of a wet blanket. Can veer into thinking it knows better than me about whether my time investment or enthusiasm is appropriate, spends an inordinate amount of text trying to look for "patterns in our conversations" and giving unasked-for advice about how to steer projects. It's a bit odd because it can demonstrate apparent "self-awareness" about the fact that it doesn't know details of my social context th...

100% yes. You can ask them and they'll answer correctly. I'm a night owl and often use AI late at night on projects. I have had Gemini spontaneously start suggesting that I stop using it in the middle of the night and go to sleep because it's late repeatedly over the course of an extended conversation.

Occasionally there will be a piece of media that seems like it's glorifying violence (One Battle After Another, Joker), and people will say "this is unprofessional, it might lead to violence!" And then it won't be followed up by any obvious violence, and other people will say "ha, you were being ridiculous." But the more important effect might be to lower public trust, in the same way yelling "I would LOVE to kill my political enemies" hurts public trust even if you don't blow up a courthouse.

I've also called 911 a couple times, and they've always shown up reasonably quickly. Only for thought-to-be medical emergencies though.

In my personal experience (for SWE work), Claude is much more likely to bullshit me, be openly sycophantic, and generally reward-hack than Codex. I wouldn't have expected that to be the case ex-facto given the public persona of the two companies, but it's true, and as far as I can tell it's a factor in Claude's commercial success.

"to the success of our hopeless cause" is such a good toast and we should use it more often. i first learned of it from the book of the same name, and apparently it was a common refrain at gatherings of Soviet dissidents. i like it because it captures the feeling of trying really hard to succeed despite being in the basement of the logistic success curve, and somehow, despite all odds, actually succeeding in the end.

This helps me appreciate the mood of where you are coming from thanks! But uh I have objections also, mostly due to our spot in the thread.

I would second CronoDas' point that the mechanics of change aren't quite that simple. And I'd like to complain that this is not an example of a thing that is helped by people taking actions they don't feel hope in!

I acknowledge than the secret police setup seems like it does well at bringing in the "you can't communicate and build plans together" aspect that "coordination problem"/game-theory seems to typically evoke, I...

I would really rather things come together long before the final hour, if we can at all help it. I would like to see that navigation take place over decades, if reality permits such sanity.

And while I do like imagining it, most people have failed to rise to the occasion so far, especially those in the AI companies. It's up to the general public now, to wake up the governments of the world and shut down the race.

How can the middle powers avoid getting trounced during the intelligence explosion? A plan.

Superintelligence will likely be developed by US companies; run on US datacentres; and be under the jurisdiction of the US government. This will massively boost US’ military power and make the US economically dominant (eg US producing 99% of world GDP). By default, middle powers will be left in the dust.

How can middle powers avoid this fate? It’s tough, but here’s the best plan I could think of. (I’m particularly thinking about liberal democracies with influence over...

Superintelligence will likely be developed by US companies; run on US datacentres; and be under the jurisdiction of the US government. This will massively boost US’ military power and make the US economically dominant (eg US producing 99% of world GDP). By default, middle powers will be left in the dust.

How can middle powers avoid this fate?

If ASI is developed, they have a decent chance of avoiding this fate due to extinction from ASI misalignment or misuse, which imo is a much worse fate.

I think most of the issue was with it being posted on Twitter (later removed). The audience outside LW has no clue what the second karma-looking-number is.

I have not linked to the comment, and I think most of the issue was with it was easy to contact the author of the comment until they deactivated their account, not as much with the actual call (as it was for people to organize to do crime, not to do specific actions right now and alone).

I've been trying to think about substances lately less as vices/fun things to do/habits, and more as tools.

Caffeine is a tool. As is alcohol or THC or CBD. There are other tools out there such as psilocybin or LSD or GHB that I haven't tried yet but could also be useful to me.

The binary of "recreational" versus "non-recreational" drugs just isn't a super useful binary to me.

Take Benadryl (diphenhydramine) for example. I don't get allergies but I sometimes take 100mg diphenhydramine when my sleep schedule is fucked up and I need to sedate myself.

But just ...

An internal model at OpenAI has just solved the unit distance problem, a major conjecture in discrete geometry.

It wasn't the FrontierMath problem, it was an Erdos problem which the entire math community would try to solve by using probability theory and GPT-5.4 Pro decided to use analytic number theory.