From this twitter thread by Jonathan Gorard, lightly edited by me https://x.com/getjonwithit/status/2009602836997505255?s=20

About a decade ago, in 2017, I developed an automated theorem-proving framework that was ultimately integrated into Mathematica. The real reason for building it, beyond it just being a fun algorithmic puzzle, was because my then-collaborator @stephen_wolfram and I wanted to perform an empirical investigation: to systematically enumerate possible axiom systems, and see what theorems were true. We constructed an enumeration scheme, set the theorem-prover running, and waited. Unsurprisingly, most axiom systems we encountered were completely barren. But occasionally we'd see one we recognized: group theory shows up around no. 30,000, Boolean logic at ~50,000. Continuing further, we found topology at ~200,000, set theory at ~800,000, and so on.

Within each of these systems, the prover quickly rediscovered the standard lemmas and theorems (as well as many more true results that were not immediately recognizable as interesting). Moreover, in between the barren wastelands and the known fields of mathematics, there seemed to be thousands of other "alien" axiom systems: mathematical systems just as rich as topology or set theory, with just as many non-trivial theorems, but which did not correspond to any known mathematical objects or theories that humans had ever investigated. Weird algebraic structures, funky nonstandard logics, operator theories with strange arities, etc.

I spent months trying to understand these "alien mathematicses", as well as the new "alien theorems" we'd discovered within existing fields. I developed increasingly sophisticated methods of analysis: one of my favorites was to construct proof graphs, then use vertex outdegrees to assess which lemmas were the "load-bearing" parts of the proof and use those to construct "conceptual waypoints" from which the other parts of the proof could be recursively constructed. But after ~6 months, I gave up. I could learn to manipulate the rules of these systems, I could develop "formal intuitions" for their behavior yet in the end they didn't "make sense" to me on any deep instinctive level. I couldn't figure out why we cared about these structures, or what the stories behind the theorems were. There was no motivation, no intuition, no big questions to which these were answers.

He also describes one such system a bit in https://x.com/getjonwithit/status/2010422931583860860?s=20

I think this is evidence that humans have a tendency to invent mathematical axioms that generate useful mathematics for the natural sciences, somehow.

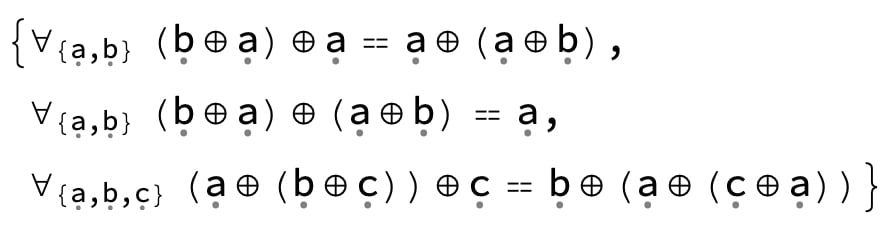

Here is the claimed Gorard's "alien logic system" in the linked tweet

I had Claude chew on this for a bit, and Claude determined that this proof system was the trivial one (ie ∀a,b:a=b) by writing a script in SPASS (an automatic theorem prover) to try to prove this statement.

Here is the SPASS proof

--------------------------SPASS-START-----------------------------

Input Problem:

1[0:Inp] || equal(skc2,skc3)** -> .

2[0:Inp] || -> equal(op(op(u,v),op(v,u)),v)**.

3[0:Inp] || -> equal(op(op(u,v),v),op(v,op(v,u)))**.

4[0:Inp] || -> equal(op(op(u,op(v,w)),w),op(v,op(u,op(w,u))))*.

This is a unit equality problem.

This is a problem that has, if any, a non-trivial domain model.

The conjecture is ground.

Axiom clauses: 3 Conjecture clauses: 1

Inferences: IEqR=1 ISpR=1 ISpL=1

Reductions: RFRew=1 RBRew=1 RFMRR=1 RBMRR=1 RObv=1 RUnC=1 RTaut=1 RFSub=1 RBSub=1

Extras : Input Saturation, No Selection, No Splitting, Full Reduction, Ratio: 5, FuncWeight: 1, VarWeight: 1

Precedence: op > skc3 > skc2 > skc1 > skc0

Ordering : KBO

Processed Problem:

Worked Off Clauses:

Usable Clauses:

1[0:Inp] || equal(skc3,skc2)** -> .

2[0:Inp] || -> equal(op(op(u,v),op(v,u)),v)**.

3[0:Inp] || -> equal(op(op(u,v),v),op(v,op(v,u)))**.

4[0:Inp] || -> equal(op(op(u,op(v,w)),w),op(v,op(u,op(w,u))))*.

Given clause: 1[0:Inp] || equal(skc3,skc2)** -> .

Given clause: 2[0:Inp] || -> equal(op(op(u,v),op(v,u)),v)**.

Given clause: 3[0:Inp] || -> equal(op(op(u,v),v),op(v,op(v,u)))**.

Given clause: 15[0:Rew:3.0,14.0,2.0,14.0] || -> equal(op(u,op(u,v)),op(v,op(v,u)))*.

Given clause: 4[0:Inp] || -> equal(op(op(u,op(v,w)),w),op(v,op(u,op(w,u))))*.

Given clause: 11[0:SpR:3.0,2.0] || -> equal(op(op(u,op(u,v)),op(u,op(v,u))),u)**.

Given clause: 16[0:SpR:15.0,2.0] || -> equal(op(op(op(u,v),u),op(v,op(v,u))),u)**.

SPASS V 3.9

SPASS beiseite: Proof found.

Problem: collapse.dfg

SPASS derived 171 clauses, backtracked 0 clauses, performed 0 splits and kept 96 clauses.

SPASS allocated 85510 KBytes.

SPASS spent 0:00:00.08 on the problem.

0:00:00.03 for the input.

0:00:00.02 for the FLOTTER CNF translation.

0:00:00.00 for inferences.

0:00:00.00 for the backtracking.

0:00:00.01 for the reduction.

Here is a proof with depth 3, length 23 :

1[0:Inp] || equal(skc3,skc2)** -> .

2[0:Inp] || -> equal(op(op(u,v),op(v,u)),v)**.

3[0:Inp] || -> equal(op(op(u,v),v),op(v,op(v,u)))**.

4[0:Inp] || -> equal(op(op(u,op(v,w)),w),op(v,op(u,op(w,u))))*.

14[0:SpR:2.0,3.0] || -> equal(op(op(u,v),op(op(u,v),op(v,u))),op(u,op(u,v)))**.

15[0:Rew:3.0,14.0,2.0,14.0] || -> equal(op(u,op(u,v)),op(v,op(v,u)))*.

16[0:SpR:15.0,2.0] || -> equal(op(op(op(u,v),u),op(v,op(v,u))),u)**.

17[0:SpR:15.0,2.0] || -> equal(op(op(u,op(u,v)),op(op(v,u),v)),op(v,u))**.

34[0:SpR:4.0,2.0] || -> equal(op(op(u,op(v,op(w,u))),op(w,op(v,op(u,v)))),op(v,op(w,u)))**.

49[0:SpR:4.0,2.0] || -> equal(op(op(u,op(op(op(v,u),u),v)),v),u)**.

63[0:Rew:3.0,49.0] || -> equal(op(op(u,op(op(u,op(u,v)),v)),v),u)**.

128[0:SpR:16.0,3.0] || -> equal(op(op(u,op(u,v)),op(op(u,op(u,v)),op(op(v,u),v))),op(v,op(u,op(u,v))))**.

145[0:SpR:3.0,16.0] || -> equal(op(op(op(u,op(u,v)),op(v,u)),op(u,op(u,op(v,u)))),op(v,u))**.

168[0:Rew:17.0,128.0] || -> equal(op(op(u,op(u,v)),op(v,u)),op(v,op(u,op(u,v))))**.

171[0:Rew:168.0,145.0] || -> equal(op(op(u,op(v,op(v,u))),op(v,op(v,op(u,v)))),op(u,v))**.

173[0:Rew:34.0,171.0] || -> equal(op(u,op(u,v)),op(v,u))**.

174[0:Rew:173.0,15.0] || -> equal(op(u,op(u,v)),op(u,v))**.

194[0:Rew:173.0,63.0] || -> equal(op(op(u,op(op(v,u),v)),v),u)**.

209[0:Rew:173.0,174.0] || -> equal(op(u,v),op(v,u))*.

228[0:Rew:173.0,194.0,209.0,194.0,173.0,194.0,173.0,194.0,209.0,194.0] || -> equal(op(u,v),u)**.

229[0:Rew:228.0,2.0] || -> equal(op(u,v),v)**.

234[0:Rew:228.0,229.0] || -> equal(u,v)*.

235[0:UnC:234.0,1.0] || -> .

Formulae used in the proof : conjecture0 axiom1 axiom0 axiom2

--------------------------SPASS-STOP------------------------------Here is Claude's proof summary

Proof sketch (from SPASS, depth 3, length 23):

- Axioms 1 & 2 ⟹ a ⊕ (a ⊕ b) = b ⊕ (b ⊕ a) [step 15: "symmetrized absorption"]

- Substitutions into Axiom 3 + rewrites ⟹ a ⊕ (a ⊕ b) = b ⊕ a [step 173: "double apply = flip"]

- (1) + (2) ⟹ a ⊕ (a ⊕ b) = a ⊕ b [step 174: idempotent-like]

- (2) + (3) ⟹ a ⊕ b = b ⊕ a [step 209: commutativity]

- (4) + earlier lemmas ⟹ a ⊕ b = a [step 228: left absorption]

- Rewrite Axiom 2 with (5) ⟹ a ⊕ b = b [step 229: right absorption]

- (5) + (6) ⟹ a = b ∀ a,b ∎

Therefore I think its quite likely that many of the supposedly rich alien axiom systems Gorard found are actually just trivial almost-contradictory systems which are hard to prove the triviality of. It also explains why he couldn't "make sense" of the system. There is nothing to make sense of.

Commenting on the footnote:

- Maybe. Possibly at some point you cease being able to add non contradictory axioms that are also cannot be collapsed/simplified. ↩︎

Your original statement was correct. There are infinitely many non-isomorphic assemblies of axioms and inference rules.

For many systems (e.g. all that include some pretty simple starting rules), you even have a choice of infinitely many axioms or even axiom schemas to add, each of which results in a different non-contradicting system, and for which the same is equally true of all subsequent choices.

My conception of mathematics is that you start with a set of axioms and then explore the implications of them. There are infinitely many possible sets of starting axioms you can use [1] .

This is a popular view but in my opinion it is wrong. My conception of math is that you start with a set of definitions and the axioms only come after that, as an attempt to formalize the definitions. For example:

-

The natural numbers are defined as the objects that you get by starting with a base object "zero" and iterating a "successor operation" arbitrarily many times. Addition and multiplication on the natural numbers are defined recursively according to certain basic formulas. The axioms of Peano arithmetic can then be viewed as simply a way of formalizing these definitions: most of the axioms are just the recursive definitions of addition and multiplication, and the induction schema is an attempt to formalize the fact that all natural numbers result from repeatedly applying the successor operation to 0.

-

The universe of sets is defined as the collection you get by starting with nothing, and repeatedly growing the collection by at each stage replacing it with the set of all its subsets (i.e. its powerset). The axioms of Zermelo-Fraenkel set theory are an attempt to state true facts about this universe of sets.

Of course, it's possible to claim that the definitions in question are not valid -- they are not "rigorous" in the sense of modern mathematics, i.e. they do not follow from axioms because they are logically prior to axioms. This is particularly true for the definition of the universe of sets, which in addition to being vague has the issues that it presupposes the notion of a "subset" of a collection while we are currently trying to define the notion of a set, and that it's not clear when we are supposed to "stop" growing the collection (it's not at "infinity", because the axiom of infinity implies that we are supposed to continue on past infinity). But Peano arithmetic doesn't have those problems, and in my opinion is therefore on an epistemologically sound basis. And to be honest much (most?) of modern mathematics can be translated into Peano arithmetic; people use ZFC for convenience but it's actually not necessary much of the time.

Richard Hamming provided a (formalist) response to Wigner's argument about the "unreasonable effectiveness of mathematics" in this 1980 paper, which was helpfully expanded upon by Derek Abbott in 2013. The core point is that despite the appearances, mathematized science answers comparatively little about the world, and that both the mathematics we use and the phenomena we apply those mathematics to are probably parochial to our cognitive processes. Mathematics is, in this view, a powerful tool rather than the underlying truth of the universe, and we shouldn't be surprised it managed to drive a few nails really well.

Half a year or so ago I stumbled across Eugene Wigner's 1960's article "The Unreasonable Effectiveness of Mathematics in the Natural Sciences". It asks a fairly simple question. Why does mathematics generalize so well to the real world? Even in cases where the relevant math was discovered (created?) hundreds of years before the physics problems we apply it to were known. In it he gives a few examples. Lifted from wikipedia:

The puzzle here seems real to me. My conception of mathematics is that you start with a set of axioms and then explore the implications of them. There are infinitely many possible sets of starting axioms you can use [1] . Many of those sets are non-contradictory and internally consistent. Only a tiny subset correspond to our physical universe. Why is it the case that the specific set of axioms we've chosen, some of which were established in antiquity or the middle ages, corresponds so well to extremely strange physical phenomenon that exist at levels of reality we would not have access to until the 20th century?

Let's distinguish between basic maths, things like addition and multiplication, and advance math. I think it's unsurprising that basic maths reflects physical reality. A question like "why does maths addition and adding objects to a pile in reality work in the same way" seems answered by some combination of two effects. The first is social selection for the kinds of maths we work on to be pragmatically useful. e.g: maths based on axioms where addition works differently would not have spread/been popular/had interested students for most of history. The second is evolution contouring our minds to be receptive to the kind of ordered thinking that predicts our immediate environment. Even if not formalized, humans have a tendency towards thinking about their environment, reasoning and abstract thought. Our ancestors who were prone to modes of abstract reasoning that correlated less well with reality were probably selected against.

As for advance math, I do think it's more surprising. The fact that maths works for natural phenomenon which are extremely strange, distant from our day to day experience or evolutionary environment and often where the natural phenomenon were discovered centuries after the maths in question seems surprising. Why does this happen? A few possible explanations spring to mind:

Hmmmmm. I think 2 kind of begs the question. The core weird thing here is that our maths universe is so well correlated with our physical universe. The two answers here seem to be

I don't have a good answer here. This is a problem I should think about more.

Maybe. Possibly at some point you cease being able to add non contradictory axioms that are also cannot be collapsed/simplified. ↩︎