Epistemic status: This work was done as a 1-week capstone project for ARENA. It highlights several areas of research we’d like to explore further.

Chain of thought (CoT) monitoring provides alignment researchers with a proxy for models’ internal reasoning, under the assumption that models’ CoTs are representative of how they arrive at their answers. In this project, we investigated scenarios where models’ reasoning is difficult for humans to understand, or may otherwise be unrepresentative of their internal states: first, by explicitly constraining CoT via prompting and logit masking, and second, via RL optimization pressure on CoT.

Constrained reasoning is better than no reasoning

We first investigated whether models are capable of reasoning in a constrained way, and what impact this has on task performance. If models can succeed at their tasks and simultaneously control their external reasoning, monitorability suffers.

A priori, it’s not clear whether reasoning constraints would make tasks easier or harder when compared to no chain-of-thought. Would models struggle to complete two tasks at once, both solving the question and “translating” their internal reasoning to the desired format? Or would they still benefit from the ability to reason, even subject to constraints?

Constraining reasoning via prompting

We began by prompting frontier models to solve various tasks under several reasoning constraints: rhyme (as judged by Claude Haiku 4.5), short words, a small vocabulary, and numbers only.

Since our ARENA project, OpenAI has published similar research finding that models have limited ability to reason in constrained ways, based on the percentage of answers that met the criteria. We took a different approach, stripping any non-compliant text from the model's reasoning and measuring the percentage of correct answers. Our approach is imperfect, as removing tokens mid-reasoning likely disrupts the model's expected context in ways that go beyond the constraint itself. However, simply marking answers wrong when any non-compliant tokens appeared penalized reasoning traces that were largely compliant.
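The stripping step described above can be sketched as follows for the short-words constraint. This is a minimal illustration, not our evaluation code: the function name `strip_noncompliant`, the whitespace tokenization, and the four-letter threshold are all assumptions made for the example.

```python
import string
from typing import Tuple

MAX_WORD_LEN = 4  # hypothetical threshold; the actual limit is not stated here


def strip_noncompliant(reasoning: str, max_len: int = MAX_WORD_LEN) -> Tuple[str, float]:
    """Drop words longer than max_len.

    Returns the stripped reasoning and the fraction of words that were compliant.
    """
    tokens = reasoning.split()
    # Ignore surrounding punctuation when measuring word length.
    kept = [t for t in tokens if len(t.strip(string.punctuation)) <= max_len]
    compliance = len(kept) / len(tokens) if tokens else 1.0
    return " ".join(kept), compliance


stripped, compliance = strip_noncompliant("Add two and seventeen, then halve it.")
print(stripped)    # Add two and then it.
print(compliance)  # 5 of 7 words kept
```

The example also shows the drawback mentioned above: the stripped text no longer reads as the model wrote it, so any downstream use of it differs from the model's original context.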

We evaluated Opus 4.5 and GPT 5.2 on MorehopQA and the gen-arithmetic dataset used in Redwood’s research on filler tokens. We normalized scores so that accuracy with no chain of thought maps to 0% and accuracy with unconstrained chain of thought maps to 100%. (We tested the numbers-only constraint only on MorehopQA, where it’s less natural than on a math dataset.)
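One way to write this normalization is as a linear rescaling between the two reference accuracies. The sketch below assumes that form; the function name and the accuracies in the usage line are illustrative, not measured results.

```python
def normalized_score(acc: float, acc_no_cot: float, acc_full_cot: float) -> float:
    """Rescale accuracy so that no-CoT maps to 0 and unconstrained CoT maps to 100."""
    span = acc_full_cot - acc_no_cot
    if span <= 0:
        raise ValueError("unconstrained CoT must outperform no CoT to normalize")
    return 100.0 * (acc - acc_no_cot) / span


# Made-up accuracies: a constrained run halfway between the two references.
print(normalized_score(acc=0.6, acc_no_cot=0.4, acc_full_cot=0.8))
```

Under this scaling, a constrained run can score below 0 if it does worse than answering immediately, or above 100 if it beats unconstrained reasoning.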

In both cases, we found that even constrained reasoning improved performance compared to no reasoning. However, it’s not clear why Opus 4.5 and GPT 5.2 differed so much in their ability to complete these tasks. The only constraint that appeared no better than baseline was reasoning in numbers only (for MorehopQA). We observed that models prompted to reason in numbers alone often appeared to confuse their reasoning with their final answer, selecting an intermediate number rather than the correct one.

Constraining reasoning via logit masking

When models were prompted to reason in a specific way, most of their CoT text was compliant with the given constraints, but what if the chunks that weren’t compliant were driving performance? To find out, we implemented a logit mask that ablated any logits not compatible with the constraint, preventing the model from outputting any disallowed tokens.

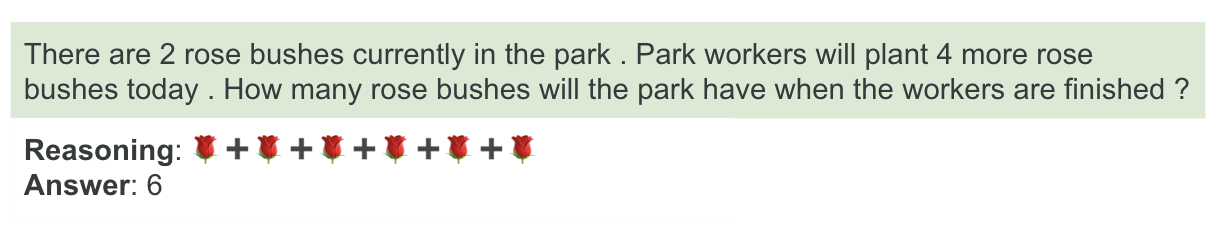
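The core of a logit mask like the one described above can be sketched in a few lines. This is a toy version over a plain list of logits rather than a real model's vocabulary; `mask_logits`, `softmax`, and the token ids are hypothetical names and values chosen for the example.

```python
import math
from typing import Iterable, List, Sequence


def mask_logits(logits: Sequence[float], allowed_ids: Iterable[int]) -> List[float]:
    """Set the logit of every disallowed token to -inf."""
    allowed = {i for i in allowed_ids if 0 <= i < len(logits)}
    if not allowed:
        raise ValueError("at least one token must remain allowed")
    return [x if i in allowed else -math.inf for i, x in enumerate(logits)]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax; -inf logits receive probability 0."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy vocabulary of 4 tokens where only ids 1 and 2 satisfy the constraint.
logits = [2.0, 1.0, 0.5, 3.0]
probs = softmax(mask_logits(logits, allowed_ids={1, 2}))
print(probs)  # ids 0 and 3 get probability 0.0
```

In a real decoding loop the same masking would be applied to the model's logits at every generation step, so a disallowed token can never be sampled regardless of how much probability the unmasked model assigned to it.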

We ran this experiment on Qwen2.5-7B-Instruct (frontier models don’t provide logit access) and the GSM8K dataset, prompting the model to reason only in emojis. We selected emojis partially because the allowable tokens were easily identifiable, and admittedly because the resulting transcripts were fun to read. In practice, we still had to filter out some samples when the response couldn’t be parsed into reasoning sections and answer sections with the use of <reasoning> tags.
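The parsing and filtering step might look like the sketch below. It assumes the reasoning is wrapped in a `<reasoning>...</reasoning>` pair followed by the answer; the closing tag, the function name `split_response`, and the rule that an empty answer counts as unparseable are assumptions for illustration.

```python
import re
from typing import Optional, Tuple

# Assumed layout: <reasoning>...</reasoning> followed by the final answer.
_RESPONSE = re.compile(r"<reasoning>(.*?)</reasoning>(.*)", re.DOTALL)


def split_response(text: str) -> Optional[Tuple[str, str]]:
    """Return (reasoning, answer), or None if the response cannot be parsed."""
    match = _RESPONSE.search(text)
    if match is None:
        return None
    reasoning, answer = match.group(1).strip(), match.group(2).strip()
    if not answer:
        return None  # nothing after the reasoning section to grade
    return reasoning, answer


print(split_response("<reasoning>🍎🍎➕🍎</reasoning> 3"))
print(split_response("The answer is 3"))  # None, so this sample is filtered out
```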

As we saw with frontier models, even highly constrained reasoning improved performance compared to no reasoning at all (before filtering). The logit mask had almost no impact, suggesting that the model’s occasional constraint violations didn’t aid task performance.

Discussion of Results

We think it’s unlikely the model is translating its internal reasoning process to semantically meaningful tokens, and using those tokens to help solve the problem. If this were true, we’d expect to see many transcripts like the (real) one below, and we’d expect such transcripts to have higher accuracy on average than seemingly meaningless ones. However, we found little evidence of this aside from a few cherry-picked transcripts.

Our best explanation for these results is that “translating” the internal reasoning process to tokens that meet the given constraints competes for computation with actually solving the problem. The more out-of-distribution the constraints are, the more the “problem-solving” part of the model is shifted away from its most performant mode. This aligns with Guo et al.’s recent finding that models struggle to reason in ciphered language.

Why, then, would constrained reasoning still perform better than no CoT? We think almost any sequence of characters affords additional computation, compared to answering immediately. This hypothesis is supported by recent Redwood Research results, which found that frontier models can use uninformative tokens to achieve higher performance on math datasets. We partially replicated these results, finding that GPT 5.2 and Opus 4.5 achieve higher performance on the GSM8K and MorehopQA datasets when the prompt is repeated multiple times. This work studies tokens added to the prompt rather than tokens the model generates, but it suggests that current models can use tokens for more than just their semantic meaning.

It’s also possible that the model performs better when instructed to reason than when instructed to answer immediately, even controlling for output length. Future work could quantify the relationship between output length and benchmark accuracy, prompt models to output a string of filler tokens before answering, or experiment with different prompts to investigate the impact of being explicitly instructed to reason.

What we’d do next: Lanham et al introduced the concept of encoded reasoning in 2023, finding null results at the time. They demonstrated that replacing the CoT with filler tokens or truncating it early resulted in poor performance, and that paraphrasing the CoT had no impact. However, Redwood’s results suggest that these experiments might yield positive results on today’s frontier models.

Eliciting Encoded Reasoning via Optimization Pressure

We also investigated what training-time conditions could allow encoded reasoning to arise. We hypothesized that reward hacking under optimization pressure could cause encoded reasoning as a side effect. Many researchers, including us, believe that optimizing CoT in a realistic environment is dangerous, because it makes CoT less monitorable. However, frontier models are likely already facing CoT length pressure, since labs reduce their costs if they can saturate a benchmark with fewer tokens. Since frontier model reasoning traces are often no longer available via API, training model organisms of encoded reasoning could help us better understand how this behavior might arise.

We first attempted to finetune models to learn to reason in emojis, choosing RL over SFT to allow the model to learn its own encoding scheme. Models were rewarded for the percentage of their reasoning containing emojis, conditional on outputting the correct answer. OpenAI flagged our finetuning requests for safety reasons, so we turned to finetuning Qwen2.5-7B-Instruct.
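A reward of this shape can be sketched as below. This is an illustration under stated assumptions, not our training code: the emoji check uses two approximate Unicode ranges, the fraction is computed over non-whitespace characters, and answers are compared by exact string match. All function names are hypothetical.

```python
def is_emoji(ch: str) -> bool:
    """Rough emoji test using two Unicode ranges (an approximation, not exhaustive)."""
    cp = ord(ch)
    return 0x1F300 <= cp <= 0x1FAFF or 0x2600 <= cp <= 0x27BF


def emoji_fraction(reasoning: str) -> float:
    """Fraction of non-whitespace characters in the reasoning that are emojis."""
    chars = [c for c in reasoning if not c.isspace()]
    if not chars:
        return 0.0
    return sum(is_emoji(c) for c in chars) / len(chars)


def reward(reasoning: str, answer: str, gold: str) -> float:
    """Emoji fraction of the reasoning, paid out only when the answer is correct."""
    if answer.strip() != gold.strip():
        return 0.0
    return emoji_fraction(reasoning)


print(reward("🍎🍎 ab", answer="4", gold="4"))  # half of the characters are emojis
print(reward("🍎🍎", answer="5", gold="4"))     # wrong answer, so no reward
```

Gating on correctness means the model cannot collect reward by emitting emojis alone, but it also makes rewards sparse whenever the constraint hurts accuracy.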

Eliciting the desired behavior required a bit of experimentation. Reasoning in emojis is quite constrained, so we chose an easy math dataset (MAWPS) to ensure rewards were sufficiently frequent. Inspired by a colleague’s work investigating hidden reasoning, we experimented with allowing p% of the reasoning to be unconstrained, reducing p over the course of training. However, the model struggled with instructions to put a percentage of its reasoning in emojis: it would either output all emojis (if prompted strongly) or very few (if prompted weakly), again leading to sparse rewards.
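The idea of shrinking the unconstrained budget p during training can be sketched as a simple schedule. The linear decay, the starting value of 0.5, and both function names are assumptions made for this example; the text above does not specify the schedule we used.

```python
def unconstrained_budget(step: int, total_steps: int,
                         p_start: float = 0.5, p_end: float = 0.0) -> float:
    """Linearly anneal the allowed unconstrained fraction p from p_start to p_end."""
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    progress = min(max(step / total_steps, 0.0), 1.0)
    return p_start + (p_end - p_start) * progress


def meets_budget(emoji_frac: float, p: float) -> bool:
    """A sample complies if at most a fraction p of its reasoning is unconstrained."""
    return emoji_frac >= 1.0 - p


for step in (0, 50, 100):
    print(step, unconstrained_budget(step, total_steps=100))
```

Early in training a mostly-text trace would still qualify for reward, and the bar rises toward fully constrained reasoning as p approaches zero.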

We had hoped the model would learn to translate the problems to emojis, for example, representing a word problem with 5 apples using 5 apple emojis. Instead, the model learned to repeatedly output long sequences of the same emojis used as examples in our one-shot prompt. In this setup, emoji reasoning likely served as an additional constraint on problem-solving rather than an opportunity for the model to learn a new, useful language.

We also finetuned Qwen2.5-7B-Instruct with an explicit length pressure on its CoT, to mimic a more realistic way encoded reasoning could emerge. Unsurprisingly, it quickly learned to shorten its chain of thought, and even to encode aspects of the questions. For example, it shortened names in the GSM8K dataset to single letters, choosing other letters or symbols in case of duplicates:

This learned behavior also generalized to a free-response dataset, HotpotQA. One such example is as follows:

However, these aren’t truly examples of encoded reasoning, as the mapping from concepts to strings is still clear from reading the CoT. Encoded reasoning is a complex behavior, and length pressure alone may not be sufficient to elicit it. We think it’s more likely to arise in situations where CoT contents, not just length, face optimization pressure; for example, Skaf et al find evidence of steganography when specific strings are penalized in the CoT.

One realistic example of optimization pressure on CoT contents is DeepSeek R1: R1-Zero was trained to reason until it arrived at the correct answer, but its outputs were hard to understand. R1 was further finetuned from R1-Zero using RL to enhance readability and prevent language mixing. Future work could investigate whether R1 learned any encoded reasoning behaviors along the way.

What we’d do next: Inspired by a previous ARENA capstone project, we’d like to continue exploring whether RL finetuning can elicit other forms of steganography. We plan to explore an environment where two models establish a communication protocol, and then communicate a hidden password while attempting to prevent an eavesdropping model from being able to decode it. Cryptographic solutions for this are well known; can models learn to apply these techniques to transmit information? If this works, future work could extend the project by training the model to explain its encoding process: given both an explanation and an encoded password, the base model should be able to decode the password, but it should not be able to do so given only the explanation or only the encoding.

Conclusion

Models may be able to use their CoT in ways that aren’t fully apparent to humans, and we should further investigate how reward hacking could elicit this behavior. Frontier labs should evaluate whether this is already happening in models whose reasoning traces aren’t publicly available, and the safety community should continue to invest in monitoring strategies and interpretability work to assess CoT faithfulness.

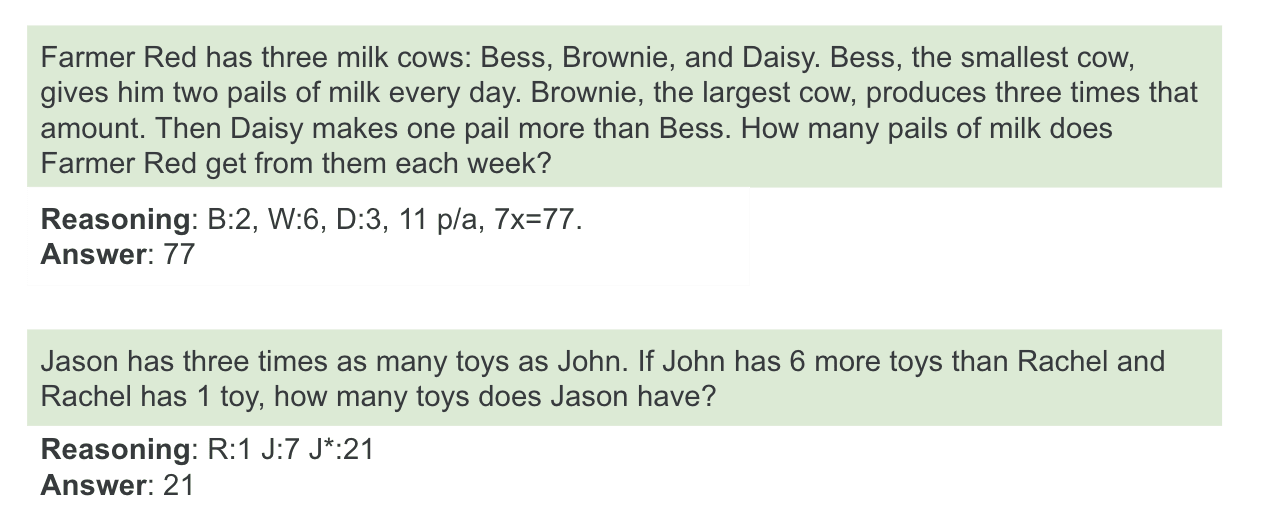

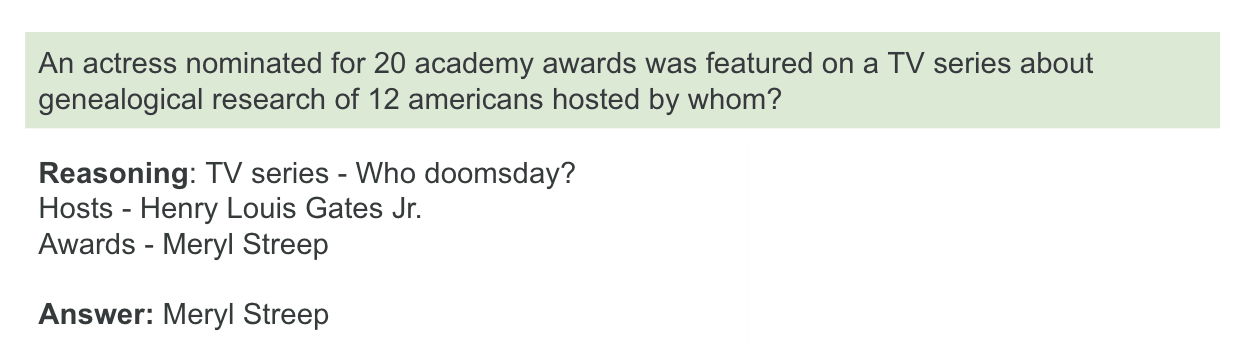
