I think a lot of the lazy engineering you point at is a rational allocation of resources, and not a problem with knowledge. Existing software engineers could easily optimize our existing software if they tried, but rewriting Electron apps as native code trades scarce resources (engineer time) for abundant resources (compute), and people don't do it for the same reason cooks don't try to optimize the amount of water they use when cooking.

I think there's an economy-wide version of what you're talking about though, where software engineering draws ~all of the knowledge workers and money, so we under-invest in things like biology, materials science, medicine, power, etc.

I think there's an economy-wide version of what you're talking about though, where software engineering draws ~all of the knowledge workers and money, so we under-invest in things like biology, materials science, medicine, power, etc.

Yes, to me looks like it's happening a lot.

Two possible objections:

1) Tradeoffs that seem reasonable at the moment may appear less reasonable later, when the environment changes. For example, when web pages were mostly texts, it didn't matter if there was some bloat in the web browser. But then the web pages themselves exploded in size, and now opening five new tabs of Less Wrong caused my Firefox to slow down and sometimes crash, which makes me angry at both Less Wrong and Firefox -- I see no reason why inactive tabs should tax the computer so much. So today, making the browsers more efficient would help all Less Wrong readers, all Substack readers, all Electron app users, etc.

2) The usual problem that creating value is not the same as capturing value, and the companies only care about the latter. Making each part of the system more efficient makes the entire system more efficient as a whole, but people are only willing to pay for some parts of the system.

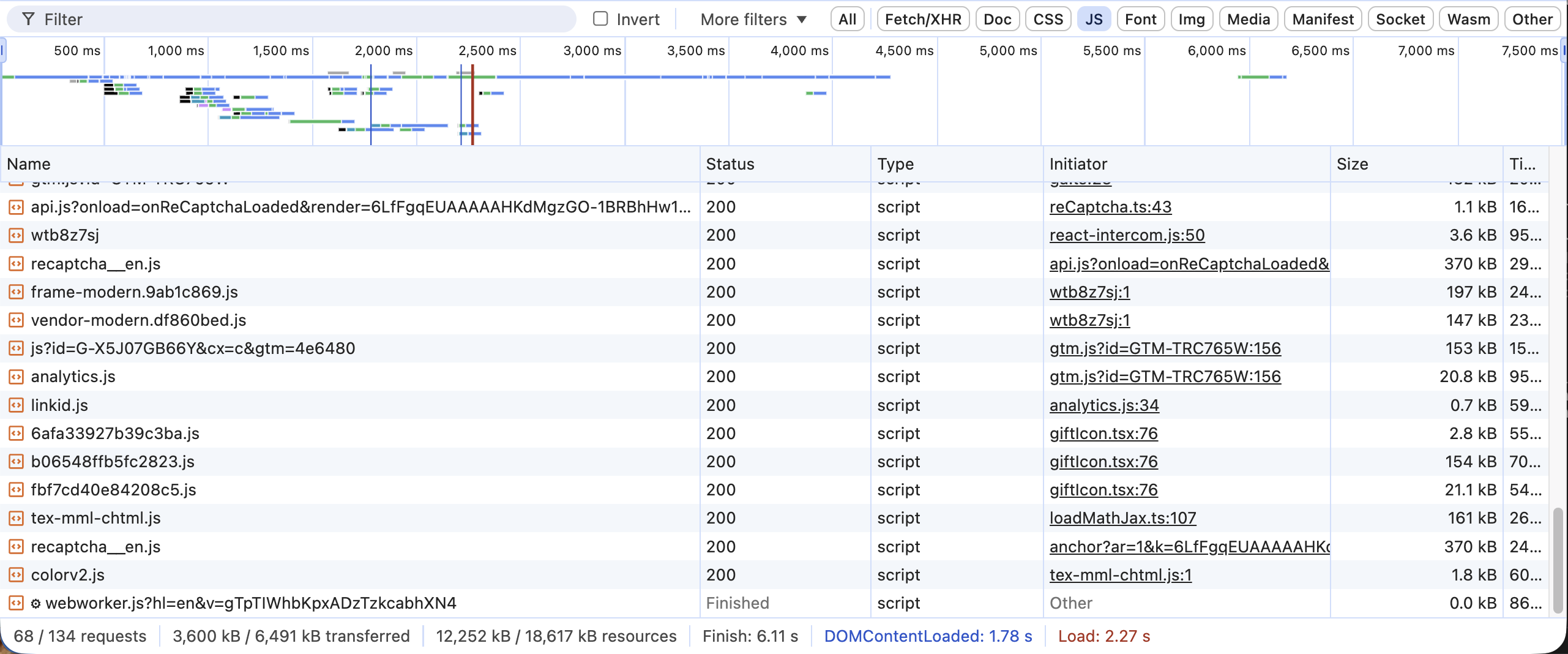

For the browser issue your anger should be directed entirely at LessWrong[1]. Browsers are one of the few types of software which are still hyper-optimized, but there's only so much you can do when people decide to render a complex application client-side using 6 MB of JavaScript:

Inactive tabs use memory because the alternative is losing your state when you switch tabs and having visible lag and reseting the state when you switch back. It's possible browser makers are taking the wrong tradeoff and users don't want tabs to remain in memory, but they're not being inefficient, they're optimizing for UX over memory.

Similarly, Electron is highly optimized for what it is; there's just limits to how efficient you can make a browser while still presenting a fully standards-compliant JavaScript and DOM implementation. Also Electron can't stop people from writing inefficient JavaScript.

- ^

Assuming you think the LessWrong team should stop doing some of the other things they're doing and optimize performance instead. I disagree but this is a value judgement.

"Existing software engineers could easily optimize our existing software"

I don't think they actually could! Even if software engineers really wanted to (which they generally don't) and had the skills to (which seems to be becoming rarer), the software belongs to corporations, not to the engineers, and the corporations would never let their engineers optimise their software like this. (And if they did, they'd switfly be outcompeted by other corporations that could ship faster more featureful software.)

I think "ability to write efficient, optimised consumer software" is essentially no longer accessible to our civilisation for inescapable economic reasons, just like "ability to build beautiful architecture instead of featureless undecorated glass rectangles", "ability to build interesting cars instead of bland blob-shaped automatic five-door huge-touchscreen front-wheel-drive SUVs", etc. etc.)

"Electron apps as native code trades scarce resources (engineer time) for abundant resources (compute)"

I agree this tradeoff is definitely a factor - but I don't think it's the only tradeoff. We're also trading-off things the average user doesn't understand or notice (efficiency, privacy, reliability) for things they do notice (features, a fast release schedule, fancy graphics/UI, network-effects). That's why the Microsoft Windows "start menu" is now a React app.

I think this results in worse software but that it's inevitable and out of our control, not a choice where we could choose to do it differently if we wanted to: the corporations that control almost-all closed-source software would never let us optimise their software for things their users barely even noticed, even if it made the software better for those same users, and if by some miracle a corporation did let us they'd rapidly lose all their users to rival corps.

abundant resources (compute)

The resources are only abundant so long as we keep pounding away on the upgrade treadmill and never fall behind; today's high-end phone or computer is tomorrow's useless e-waste, and that's a problem that should be (in theory!) entirely fixable in software. The reason a 5-year-old phone takes ten seconds to open a web browser, or a 5-year-old-video card can't play a modern AAA computer game, or a 7 or 8 year old PC can't even fit Microsoft Windows + Google Chrome into RAM is problems with the software, not with the hardware.

(Obligatory Linux/FLOSS mention: there does exist super-optimised software that has all the superficial features users notice and all the under-the-bonnet features that make the software actually good/effective to use and it'll run snappily on a fifteen-year-old computer and it's free. Why consumers don't seem to want to touch it is a mystery to me!)

I'm not really sure if we disagree on the high level situation.

You mention that corporations won't pay their engineers to optimize software, and that a corporation that does would be outcompeted because users prefer software with more features over optimized software, and you even note that in some cases better optimized software with fewer features is free and users still don't choose it.

I think you're overestimating how hard it is to write optimized native apps though. It's tedious to write 5 native apps instead of one Electron app, and the frameworks are worse and slower to work with, but it's not that hard (also if you need to display anything, you might need to embed a browser anyway). And I think AAA games are not a case of software being less optimized. I expect that on the low end, game developers are putting less effort into optimization, but AAA games on high settings don't work on old video cards because new video cards are magic (real time ray-tracing!).

Epistemic status: romantic speculation.

The core claim: I accidentally thought that compute growth can be rather neatly analogized to natural resource abundance.

Before compute curse, there was resource curse

Countries that discover oil often end up worse off than countries that don't, which is known as the resource curse. The mechanisms are well-understood: a booming resource sector draws capital and labor away from other industries, creates incentives for rent-seeking over productive investment, crowds out human capital development, and corrodes the institutions needed to sustain long-term growth.

I argue that something structurally similar has been happening with compute. The exponential growth of available computation over the past several decades, and, critically, the widespread expectation that this growth would continue, has created a pattern of resource allocation, talent distribution, and research prioritization that mirrors the resource curse in specific and non-metaphorical ways.

Note: this is not a claim that extensive compute growth has been net negative (neither it is the opposite claim).

Dutch disease comes for ASML

The original Dutch disease mechanism is straightforward: when a booming sector (say, natural gas extraction) generates high returns, it pulls capital and labor out of other sectors (say, manufacturing), causing them to atrophy. The non-booming sectors don't decline because they became less valuable in absolute terms but rather because the booming sector offers relatively better returns, and resources flow accordingly.

A trivial version of "compute Dutch disease" of it goes like this: because scaling compute yields such reliable, legible, and fundable returns (train a bigger model, get a better benchmark score, publish the paper, raise the round), it systematically starves research directions that are harder to fund, harder to evaluate, and slower to produce results, even when those directions might be more consequential in the long run.

So, "The Bitter Lesson" can be seen as the Dutch disease of AI research, if we add to it that the fact that scaling works doesn't mean the crowding-out of alternatives is costless. Or, in other words, the fact that scaling works better is rather a fact about our ability to do programming or even, if we go to the very end of this line of reasoning, about our economic and educational institutions, than about computer science in general.

However, I consider it only as the most recent and prominent manifestation of a phenomenon that was happening for decades.

Since at least the late 1990s, the reliable cheapening of compute has made it consistently more profitable to build compute-intensive solutions to problems than to invest in the kind of deep, careful engineering that produces efficient, well-understood systems. When you can always count on next year's hardware being faster and cheaper, the rational business decision is to ship bloated software now and let Moore's Law clean up after you, rather than spending the additional engineering time to make something lean and correct. This created an entire economy of applications, business models and platform architectures that are, in a meaningful sense, the technological equivalent of an oil-dependent monoculture: they exist not because they represent the best way to solve a problem, but because abundant compute made them the cheapest way to ship a product.

The consequences are visible across the entire stack. Web applications that would have run comfortably on a 2005-era machine now require gigabytes of RAM to render what is essentially styled text. Electron-based desktop apps ship an entire browser engine to display a chat window. Backend services that could be handled by a well-designed program running on a single server are instead distributed across sprawling microservice architectures that consume orders of magnitude more compute. Cory Doctorow's "enshittification" framework is about the user-facing result of this dynamic, but the deeper structural story is about how compute abundance degraded the craft of software engineering itself, well before anyone started worrying about ChatGPT replacing programmers.

This is the Dutch disease pattern operating at the level of the entire technology economy: the booming sector (scale-dependent applications) drew capital and talent away from the non-booming sector (careful engineering, deep technical innovation, computationally parsimonious approaches, and overall hardware tech economy - biotech, spacetech, materials, etc.), and the non-booming sector atrophied accordingly.

Because of the advantage of huge compute available, it got more financially attractive to allocate resources towards software than towards physical engineering and deeptech, on top of software being easier to update, replicate, diffuse, and make incremental improvements on. And so deeptech stagnated.

But of course the AI case is qualitatively different and the most sorrowful because it resulted in humanity trying to build superintelligence with giant instructable deep learning models.

Human capital crowding-out

Resource curse economies characteristically underinvest in education and human capital development. The relative returns to education are lower in resource-dependent economies because the booming sector doesn't require a broadly educated population.

The compute version of this story has been playing out for at least a decade, well before the current discourse about AI replacing jobs and destroying university education. The entire trajectory of computer science education shifted from "understand the fundamentals deeply" toward "learn to use frameworks and APIs that abstract over compute." At the same time, natural sciences and engineering education got increasingly less attractive and rewarding as compared to computer science.

There is also a more direct talent-siphoning effect: the IT economy has been pulling the most capable technical minds into a narrow set of activities and away from a much broader set of technical and scientific challenges.

The voracity effect and race dynamics

In the resource curse literature, there is a so called "voracity effect": when competing interest groups face a resource windfall, they respond by extracting more aggressively, leading to worse outcomes than moderate scarcity would produce. Rather than investing the windfall prudently, competing factions race to capture as much of it as possible before others do.

I leave this without a direct comment and let the reader have their own pleasure of meditating on this.

But compute growth is endogeneous!

The resource curse in its classical form operates on an exogenous endowment: countries don't choose to have oil reserves, they discover them, and then the political economy warps around that windfall. Much of the pathology comes from the unearned nature of the wealth: it enables rent-seeking, weakens the link between effort and reward, and corrodes institutions.

Compute, by contrast, is endogenously produced through deliberate R&D and engineering investment. Moore's Law was never a law of nature.

Right?

I mean, to me Moore’s Law looks like a strong default of any humanity-like civilization. It is created by humans, right, but it is created in a kind of hardly avoidable manner.

The counterfactual question

The resource curse literature has natural counterfactuals (resource-poor countries that developed strong institutions and diversified economies: Japan, South Korea, Singapore). What's the compute-curse counterfactual? A world where compute grew more slowly and we consequently invested more in elegant algorithms, interpretable models, and formal methods?

It's plausible, but it's also possible that slower compute growth would have simply meant less progress overall rather than differently-directed progress. I don’t know. I said in the beginning - it is a speculation.

However, one can trivially note that in a world with less compute abundance, the relative returns to algorithmic cleverness, interpretability research, and formal verification would have been higher, because you couldn't just solve problems by throwing more FLOPS at them. And that may or may not lead to better outcomes in the long run (I am basically leaving here the question of ASI development and just talking about rather “normal” tech and science R&D).

People actually thought about this!

Two existing frameworks are close to what I'm describing, but both point the analogy in different directions.

The Intelligence Curse (Luke Drago and Rudolf Laine, 2025) uses the resource curse analogy to argue that AGI will create rentier-state-like incentives: powerful actors who control AI will lose their incentive to invest in regular people, just as petrostates lose their incentive to invest in citizens. This is a compelling argument about the distributional consequences of AGI, but it's about what happens after AGI arrives. The compute curse is about what's happening now, during the process of building toward AGI, and about how the abundance of compute is distorting that process itself.

The Generalized Dutch Disease (Policy Tensor, Feb 2026) is about the macroeconomic effects of the compute capex boom on US manufacturing competitiveness, showing that it operates through the same channels as the fracking boom and the pre-2008 financial boom. This is the closest existing work to what I'm describing, but it stays within the macroeconomic framing (factor prices, unit labor costs, exchange rate effects) and doesn't address the innovation-direction distortion, human capital crowding-out in the intellectual sense, or the AI safety implications.

But: compute curse may actually be worse than resource curse

Some of the negative downstream effects of compute abundance don't map onto the resource curse framework directly but are worth including for completeness, since they stem from the same underlying cause (cheap, abundant compute enabling activities that wouldn't otherwise be viable):

These are not Dutch disease effects, just straightforward negative externalities of cheap compute. But they suggest that the full accounting of compute abundance's costs is substantially larger than what the resource curse analogy alone would tell.