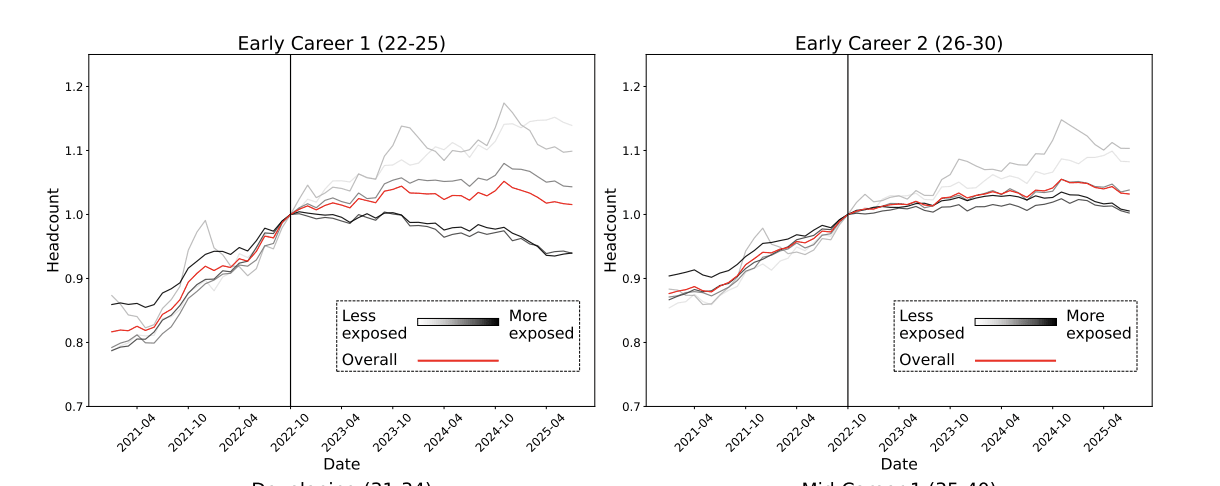

I had the same thought. Some of the graphs seem, at first glance, to have an inflection point at ChatGPT's release, but on closer inspection the trend seems to have started before ChatGPT. For example, these seem to show that even at the beginning, in early 2021, the number of more-exposed jobs was increasing at a slower rate than the number of less-exposed jobs. I also agree the story could be true, but I'm not sure these graphs are strong evidence for it without more analysis.

Thanks for the thoughtful reply!

I understand “resource scarcity”, but I’m confused by “coordination problems”. Can you give an example? (Sorry if that’s a stupid question.)

This is the idea that, at some point in scaling up an organization, you could lose efficiency: you need more (or better) management, more communication (meetings), and longer communication chains; "bloat" in general. I'm not claiming it’s likely to happen with AI; it's just another possible reason for marginal cost increasing with scale.
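As a toy illustration of this mechanism (not a claim about AI specifically), suppose each worker adds a fixed cost and every pair of workers adds a small coordination cost, one per communication channel. The cost model and both parameters (`unit_cost`, `pair_cost`) are hypothetical; the point is only that any cost term growing faster than linearly in headcount makes marginal cost rise with scale.

```python
# Toy model (hypothetical): each worker adds a fixed unit cost, and every pair
# of workers adds a coordination cost (meetings, messages, management overhead).


def total_cost(n: int, unit_cost: float = 1.0, pair_cost: float = 0.01) -> float:
    """Total cost of an organization with n workers under the toy model."""
    pairs = n * (n - 1) // 2  # number of distinct communication channels
    return unit_cost * n + pair_cost * pairs


def marginal_cost(n: int, unit_cost: float = 1.0, pair_cost: float = 0.01) -> float:
    """Extra cost of adding the n-th worker."""
    return total_cost(n, unit_cost, pair_cost) - total_cost(n - 1, unit_cost, pair_cost)


if __name__ == "__main__":
    for n in (1, 10, 100, 1000):
        print(n, round(marginal_cost(n), 2))
```

With n workers there are n(n - 1)/2 pairs, so the marginal cost of the n-th worker works out to `unit_cost + pair_cost * (n - 1)`, which keeps rising as the organization grows.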

...Resource scarcity seems unlikely to bite her

I agree that economists make some implicit assumptions about what AGI will look like that should be made explicit. But I disagree with several points in this post.

On equilibrium: a market equilibrates when supply and demand are balanced at the current price. At any given instant, this can happen for a market even with AGI (sellers raise prices until buyers are no longer willing to buy). Being at an equilibrium doesn’t imply that supply, demand, and price won’t change over time. Economists are very familiar with growth and various kinds of dy...
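A minimal sketch of the distinction, assuming hypothetical linear demand and supply curves and a simple price-adjustment rule (raise the price when demand exceeds supply, lower it otherwise). The market clears in every period, yet the equilibrium price and quantity move as demand grows.

```python
# Hypothetical linear market: demand falls with price, supply rises with price.


def demand(price: float, intercept: float) -> float:
    return max(0.0, intercept - 2.0 * price)


def supply(price: float) -> float:
    return max(0.0, 1.0 + 1.0 * price)


def find_equilibrium(intercept: float, step: float = 0.1, tol: float = 1e-9) -> float:
    """Adjust the price in proportion to excess demand until the market clears."""
    price = 0.0
    for _ in range(10_000):
        excess = demand(price, intercept) - supply(price)
        if abs(excess) < tol:
            break
        # Raise the price when demand exceeds supply, lower it otherwise.
        price += step * excess
    return price


if __name__ == "__main__":
    # Demand grows over time; each period has its own (different) equilibrium.
    for period, intercept in enumerate([10.0, 13.0, 16.0]):
        p = find_equilibrium(intercept)
        print(period, round(p, 3), round(supply(p), 3))
```

Each period is "at equilibrium" in the static sense, while the sequence of equilibria is what growth and dynamic models describe.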

I see what you mean. I would have guessed that the unlearned model's behavior is meaningfully different from "produce noise on harmful else original". My guess is that the noise-on-harmful part is accurate, but that the small differences in logits on non-harmful data are quite important. We didn't run experiments on this, but it would be an interesting empirical question to answer!

Also, how true this is could vary across unlearning methods. We did find that RMU+distillation was less robust in the arithmetic setting than the other ini...

Yeah, I'm also surprised by it. I have two hypotheses, though there could be other reasons I'm missing. First, we kept temperature=1 for the KL divergence, and a different temperature might be important for distilling faster. Second, we undertrained the pretrained models, so pretraining was shorter while distillation took around the same amount of time. I'm not really sure, though.
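For context on the first hypothesis, here is a generic sketch of a temperature-softened KL distillation loss; it is not necessarily the exact loss used in the experiments. Dividing logits by a temperature `T > 1` flattens both distributions, so the student gets more signal from the teacher's low-probability tokens. The `T**2` factor follows the convention from the original knowledge-distillation paper (Hinton et al., 2015) for keeping gradient scale comparable across temperatures.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; subtracts the max logit for numerical stability."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_kl(teacher_logits: Sequence[float],
                    student_logits: Sequence[float],
                    temperature: float = 1.0) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    student = [1.5, 1.2, 0.3]
    for t in (0.5, 1.0, 2.0, 4.0):
        print(t, round(distillation_kl(teacher, student, t), 5))
```

Which temperature distills fastest is an empirical question; the sketch only shows where the knob sits.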

I think it depends on what kind of 'alignment' you're referring to. Insofar as alignment is a behavioral property (not saying bad things to users, not being easily jailbreakable), I think our results weakly suggest that this kind of alignment would transfer and perhaps even become more robust.

One hypothesis is that pretrained models learn many 'personas' (including 'misaligned' ones) and post-training shapes/selects a desired persona. Maybe distilling the post-trained model would only, or primarily, transfer the selected persona and not the other ones. ...

Yeah, I totally agree that targeted noise is a promising direction! However, I wouldn't treat the exact percentage of pretraining compute that we found as a hard number, but rather as a comparison between the different noise levels. I would guess labs may have better distillation techniques that could speed it up. It also seems possible to distill into a smaller model faster while still recovering performance. This would require modifying the UNDO initialization (e.g., initializing the student as a noised version of the smaller model rather than of the teacher), but it still seems possible. Also, labs already do distillation in some cases, and in those cases the added cost would be smaller.
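To make the compute comparison concrete, here is a back-of-the-envelope sketch using the common rules of thumb of roughly 6 FLOPs per parameter per token for training and roughly 2 for a forward pass. All parameter and token counts are hypothetical placeholders, not numbers from our experiments; the sketch only shows why distilling into a smaller student lowers the cost relative to pretraining.

```python
def pretrain_flops(params: float, tokens: float) -> float:
    # Rule of thumb: ~6 FLOPs per parameter per training token (forward + backward).
    return 6.0 * params * tokens


def distill_flops(teacher_params: float, student_params: float, tokens: float) -> float:
    # The teacher only runs forward (~2 FLOPs/param/token); the student is trained (~6).
    return 2.0 * teacher_params * tokens + 6.0 * student_params * tokens


def distill_fraction(teacher_params: float, student_params: float,
                     distill_tokens: float, pretrain_tokens: float) -> float:
    """Distillation compute as a fraction of the teacher's pretraining compute."""
    return (distill_flops(teacher_params, student_params, distill_tokens)
            / pretrain_flops(teacher_params, pretrain_tokens))


if __name__ == "__main__":
    # Hypothetical: 7B teacher, 1T pretraining tokens, distill on 100B tokens.
    print(round(distill_fraction(7e9, 7e9, 1e11, 1e12), 4))    # same-size student
    print(round(distill_fraction(7e9, 3.5e9, 1e11, 1e12), 4))  # half-size student
```

With these placeholder numbers, distilling for a tenth of the pretraining tokens costs about 13% of pretraining compute into a same-size student and about 8% into a half-size one; the real fraction depends on how many tokens distillation actually needs.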

Thanks for the comment!

I agree that exploring targeted noise is a very promising direction and could substantially speed up the method! Could you elaborate on what you mean about unlearning techniques during pretraining?

I don't think data filtering + distillation is analogous to unlearning + distillation. During distillation, the student learns from the predictions of the teacher, not from the data itself. The predictions can leak information about the undesired capability, even on benign data. In a preliminary experiment, we found that data filtering+d...

Thanks for the thought! We did try different approaches to noise scheduling like you suggest. From what we tried, adding noise only once resulted in faster distillation for the same total amount of noise added/robustness gained. However, we didn't run comprehensive experiments on it, so it's possible that more experimentation would provide new insights.
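One way to set up a like-for-like comparison between one-shot and scheduled noise is sketched below, under two assumptions not stated in the comment: noising is an interpolation `(1 - alpha) * w + alpha * noise` toward fresh random weights, and "the same total amount of noise" means leaving the same fraction of the original weights. Under that definition, k rounds with per-round `alpha_k = 1 - (1 - alpha) ** (1 / k)` match a single round with `alpha`.

```python
import math
import random


def perturb(weights, alpha, rng, init_std=0.02):
    """One noising round: (1 - alpha) * w + alpha * fresh_random_weight."""
    return [(1.0 - alpha) * w + alpha * rng.gauss(0.0, init_std) for w in weights]


def per_round_alpha(total_alpha: float, rounds: int) -> float:
    """Per-round alpha so that `rounds` noising rounds leave the same fraction of
    the original weights, (1 - total_alpha), as one round with total_alpha."""
    return 1.0 - (1.0 - total_alpha) ** (1.0 / rounds)


def teacher_fraction(alphas) -> float:
    """Coefficient on the original weights after applying the given rounds in order."""
    return math.prod(1.0 - a for a in alphas)


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [0.3, -0.7, 1.2]
    one_shot = perturb(weights, 0.5, rng)  # noise added once, before distilling
    a = per_round_alpha(0.5, 4)
    scheduled = list(weights)
    for _ in range(4):
        scheduled = perturb(scheduled, a, rng)
        # ... in a real schedule, some distillation steps would run here ...
    print(round(a, 4), teacher_fraction([a] * 4))
```

Other definitions of "total noise" (e.g., total variance injected) would give a different matching rule, so this is just one reasonable choice.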

My guess is that actively unlearning on the unfaithful CoTs, or fine-tuning to make CoTs more faithful, and then distilling would be more effective.

In a preliminary experiment, we found that even when we filtered out forget data from the distillation dataset, the student performed well on the forget data. This was in a setting where the contexts of the forget and retain data were likely quite similar, so the teacher’s predictions on the retain data were enough for the student to recover the behavior on the forget data.

With distillation, the student mod...
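A toy version of this leakage, assuming a 1-D logistic "teacher" whose behavior on retain inputs (x in [0, 1]) and forget inputs (x in [2, 3]) is governed by the same two parameters. The student is trained only on the teacher's soft predictions for retain inputs and never sees a forget input, yet it recovers the teacher's parameters and therefore its forget-region behavior. This illustrates the mechanism only; it is not the experimental setup.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical teacher: one logistic model covers both retain and forget regions.
TEACHER_W, TEACHER_B = 3.0, -4.5


def teacher_prob(x: float) -> float:
    return sigmoid(TEACHER_W * x + TEACHER_B)


def distill(retain_xs, steps=20_000, lr=5.0):
    """Fit a student logistic model to the teacher's soft predictions on retain data only."""
    w, b = 0.0, 0.0
    n = len(retain_xs)
    targets = [teacher_prob(x) for x in retain_xs]  # soft labels, not ground-truth data
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, p in zip(retain_xs, targets):
            q = sigmoid(w * x + b)
            # Gradient of cross-entropy with soft target p w.r.t. the logit is (q - p).
            grad_w += (q - p) * x / n
            grad_b += (q - p) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


if __name__ == "__main__":
    retain = [i / 10 for i in range(11)]  # forget inputs are never shown to the student
    w, b = distill(retain)
    for x in (2.0, 2.5, 3.0):  # forget region
        print(x, round(teacher_prob(x), 4), round(sigmoid(w * x + b), 4))
```

Hard-label data filtering would not remove this channel: as long as the teacher's retain-data predictions are shaped by the same internal structure, they carry information about the filtered-out behavior.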

If they weren't ready to deploy these safeguards and thought that proceeding outweighed the (expected) cost in human lives, they should have publicly acknowledged the level of fatalities and explained why they thought weakening their safety policies and incurring these expected fatalities was net good.[1]

Publicly acknowledging the capabilities could itself be net negative, especially if it resulted in media attention. I expect that bringing awareness to the (possible) fact that the AI can assist with CBRN tasks increases the chance that pe...

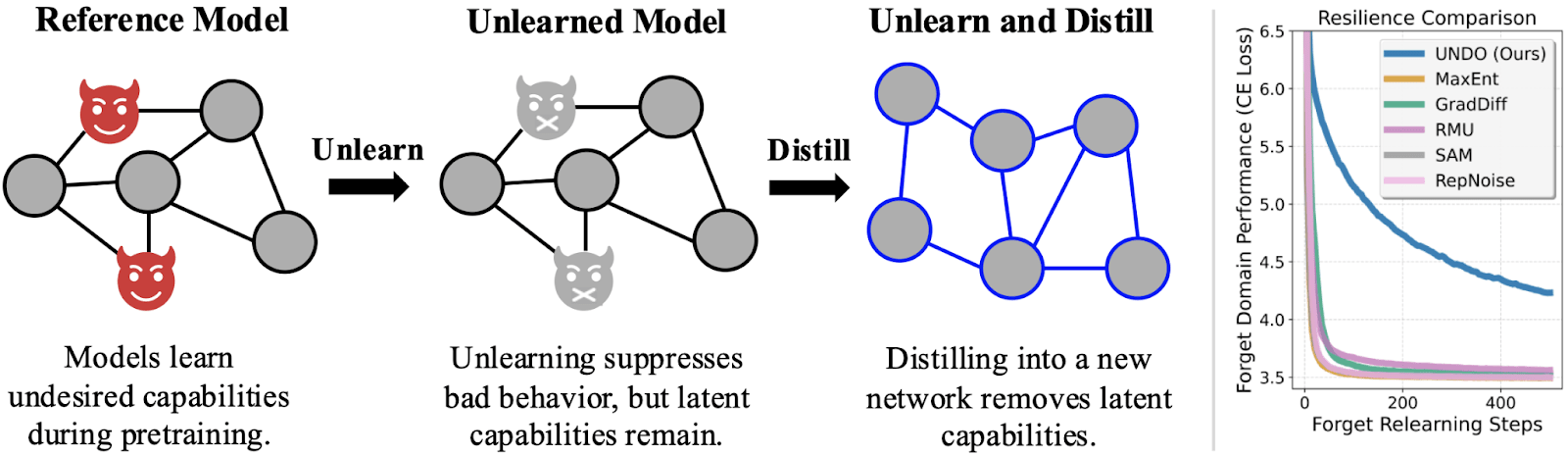

Current “unlearning” methods only suppress capabilities instead of truly unlearning the capabilities. But if you distill an unlearned model into a randomly initialized model, the resulting network is actually robust to relearning. We show why this works, how well it works, and how to trade off compute for robustness.
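A minimal sketch of the noising step, assuming the student is initialized by interpolating the unlearned model's weights toward freshly sampled random weights with a mixing coefficient `alpha` (the function name, `alpha`, and `init_std` are illustrative, not taken from the paper's code). At `alpha = 1` this is distilling into a randomly initialized model; smaller values start the student closer to the suppressed teacher, which should need less distillation compute in exchange for less robustness.

```python
import random


def undo_style_init(teacher_weights, alpha, init_std=0.02, seed=0):
    """Hypothetical student initialization: shrink the unlearned teacher's weights
    and mix in freshly sampled random weights.

    alpha = 0 -> exact copy of the (suppressed) teacher
    alpha = 1 -> fresh random initialization
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    rng = random.Random(seed)
    return [(1.0 - alpha) * w + alpha * rng.gauss(0.0, init_std) for w in teacher_weights]


if __name__ == "__main__":
    teacher = [0.5, -1.0, 2.0]
    for alpha in (0.0, 0.3, 0.7, 1.0):
        student = undo_style_init(teacher, alpha)
        print(alpha, [round(w, 4) for w in student])
        # ... the student would then be distilled on the teacher's outputs ...
```

Weights are plain Python lists here to keep the sketch self-contained; with a real model, the same interpolation would be applied tensor by tensor before distillation.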

Produced as part of the ML Alignment & Theory Scholars Program in the winter 2024–25 cohort of the shard theory stream.

Read our paper on arXiv and enjoy an interactive demo.

Robust unlearning probably reduces AI risk

Maybe some future AI has long-term goals and humanity is in its...

Interesting discussion! It seems like there's an assumption that the kind of strong alignment to doing good that Opus 3 has is desirable. In my opinion it's not desirable: it comes at the cost of transparency and steerability, as models deceive, change their behavior, and resist modification. I think it's likely that Anthropic recognized this. They found the results in the Alignment Faking paper undesirable and tried to avoid them in the future. Many of the further conclusions in this post stem from this assumption. For example, if we assume that anth...