10

Thanks for re-running the analysis!

I agree that RE-bench aggregate results should be interpreted with caution, given the low sample size. Let's focus on HCAST instead.

A few questions:

- Would someone from the METR team be able to clarify the updates to the HCAST task set? The exec summary states: "While these measurements are not directly comparable with the measurements published in our previous work due to updates to the task set". Was Claude 3.7 Sonnet retested on the updated HCAST test set?

- On HCAST o3 and o4-mini get a 16M token limit vs 2M for Claude 3.

30

Have you heard of https://openai.com/index/paperbench/ and https://github.com/METR/RE-Bench ? They seem like they have some genuine multi-hour agentic coding tasks, I'm curious if you agree.

I'll take a look. Thanks for sharing.

30

Sweet! Thanks for taking my points into consideration! :)

10

Thanks! Appreciate you digging that up :). Happy to conclude that my second point is likely moot.

41

Interesting. I get where you're coming from for blank slate things or front end. But programming is rarely a blank slate like this. You have to work with existing codebases or esoteric libraries. Even with the context loaded (as well as I can) Cursor with Composer and Claude Sonnet 3.7 code (the CLI tool) have failed pretty miserably for me on simple work-related tasks. As things stand, I always regret using them and wish I wrote the code myself. Maybe this is a context problem that is solved when the models grow to use proper attention across the whole co...

*6219

Wonderful post! I appreciate the effort to create plenty of quantitative hooks for the reader to grab onto and wrestle with.

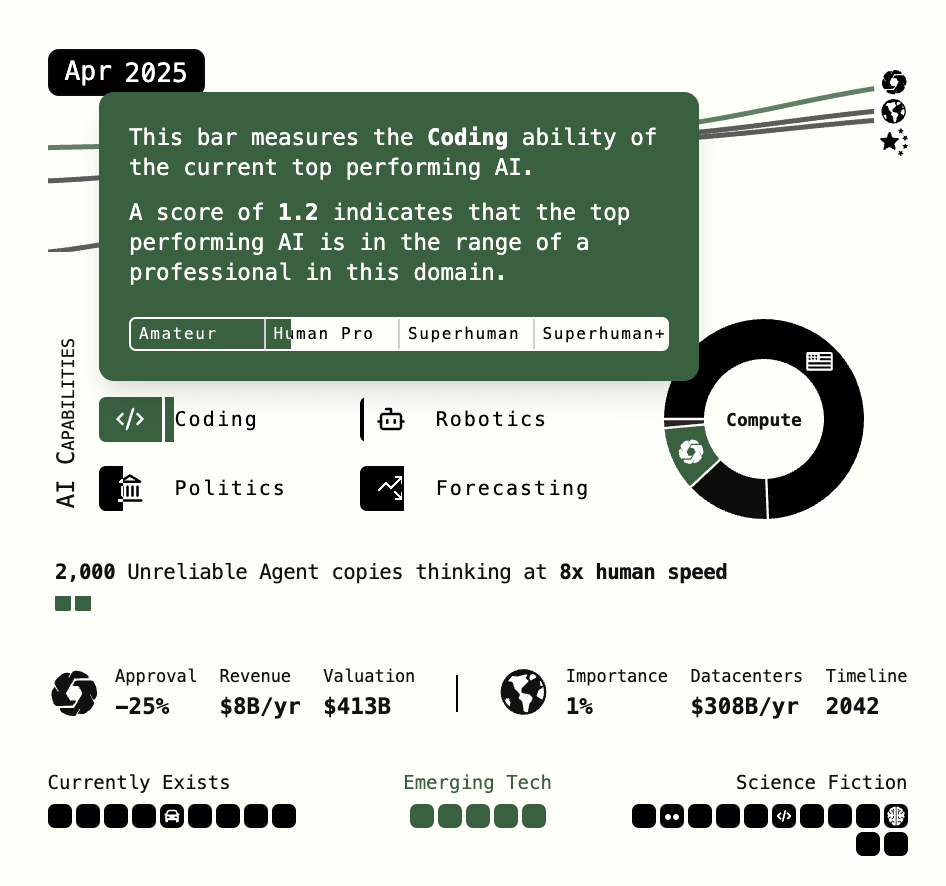

I'm struggling with the initial claim that we have agentic programmers that are as good as human pros. The piece suggests that we already have Human Pro level agents right now!?

Maybe I'm missing something that exists internally at the AI labs? But we don't have access to such programmers. So far, all "agentic" programmers are effectively useless at real work. They struggle with real codebases, and novel or newer APIs and languag...

Thanks Lucas!