[This is an interim report and continuation of the work from the research sprint done in MATS winter 7 (Neel Nanda's Training Phase)]

Try out binary masking for a few residual SAEs in this Colab notebook: [GitHub Notebook] [Colab Notebook]

TL;DR:

We propose a novel approach to:

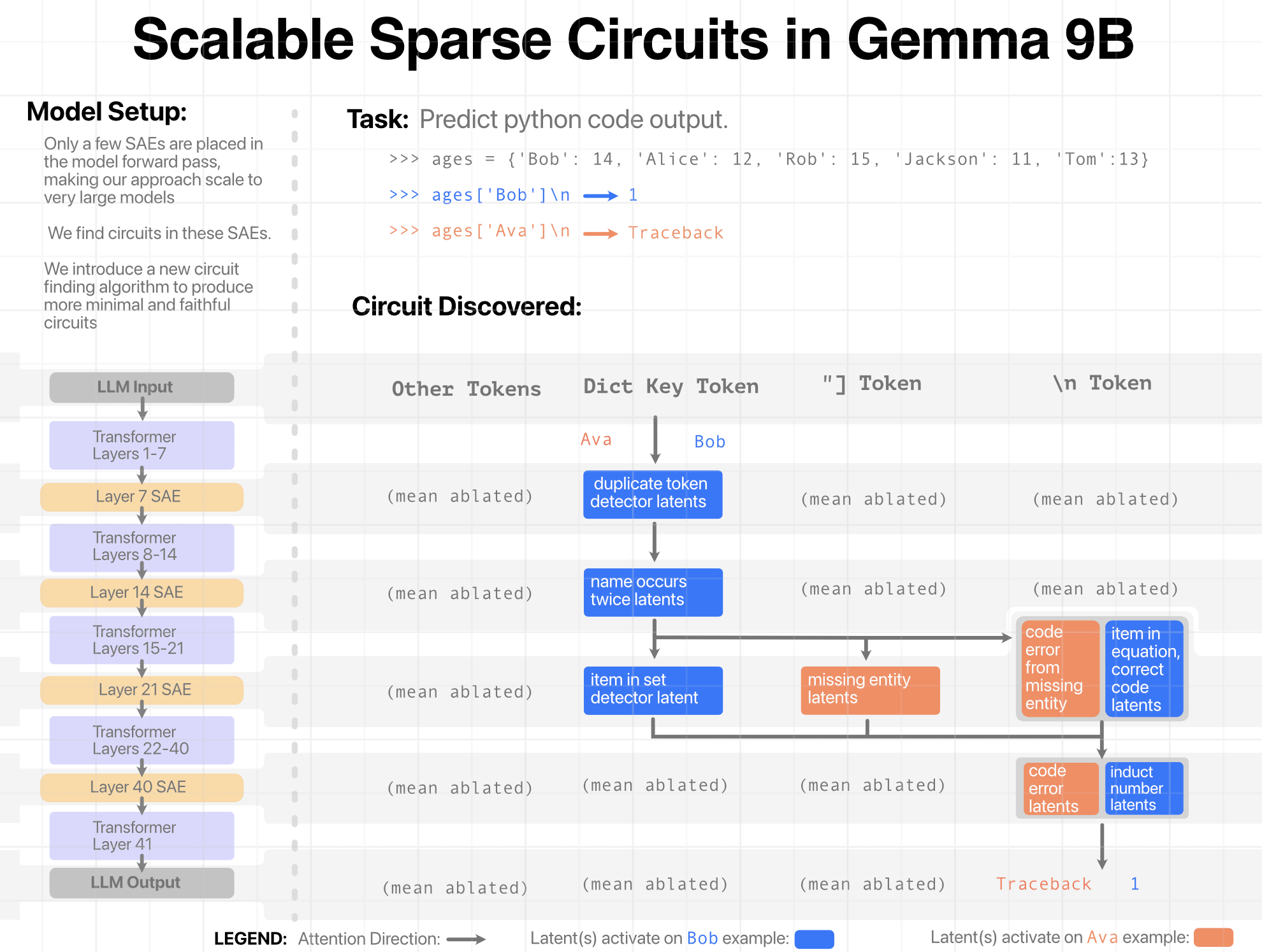

- Scaling SAE Circuits to Large Models: By placing sparse autoencoders only in the residual stream at intervals, we find circuits in models as large as Gemma 9B without requiring SAEs to be trained for every transformer layer.

- Finding Circuits: We develop a better circuit-finding algorithm. Our method optimizes a binary mask over SAE latents, which proves significantly more effective than existing thresholding-based methods such as Attribution Patching or Integrated Gradients.
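To make the idea of optimizing a binary mask over SAE latents concrete, here is a minimal toy sketch. It is not the authors' implementation: the linear "model" (`weights`), the sigmoid relaxation of the mask, the sparsity penalty, and every name and hyperparameter below are assumptions chosen for illustration. A continuous mask is trained to keep the masked output close to the full output while paying a cost for every latent left on, and is then thresholded to a binary circuit.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def learn_binary_mask(latents, targets, weights, sparsity=0.05, lr=2.0, steps=500):
    """Train sigmoid(logits) as a soft mask over latents, then binarize it.

    Loss = mean squared error between masked and full output
           + sparsity * sum(mask).
    """
    d = len(weights)
    n = len(latents)
    logits = [2.0] * d  # start with every latent mostly switched on
    for _ in range(steps):
        mask = [sigmoid(l) for l in logits]
        grad = [0.0] * d
        for z, y in zip(latents, targets):
            pred = sum(m * zj * wj for m, zj, wj in zip(mask, z, weights))
            resid = pred - y
            for j in range(d):
                grad[j] += 2.0 * resid * z[j] * weights[j] / n
        for j in range(d):
            # Chain rule through the sigmoid; the sparsity term pushes masks toward 0.
            logits[j] -= lr * (grad[j] + sparsity) * mask[j] * (1.0 - mask[j])
    return [sigmoid(l) > 0.5 for l in logits]

# Toy data: six non-negative "latents", only two of which matter much downstream.
rng = random.Random(0)
weights = [1.5, 0.05, 0.0, 2.0, 0.02, 0.0]  # hypothetical downstream effect of each latent
latents = [[rng.random() for _ in weights] for _ in range(100)]
targets = [sum(zj * wj for zj, wj in zip(z, weights)) for z in latents]

circuit = learn_binary_mask(latents, targets, weights)
print(circuit)
```

In this toy setup the mask keeps the two latents with large downstream weights and drops the rest. In a real setting the squared-error term would be replaced by a faithfulness loss computed through the language model itself.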

Our discovered circuits paint a clear picture of how Gemma does a given task, with one...

Diego Caples (diego@activated-ai.com)

Rob Neuhaus (rob@activated-ai.com)

Introduction

In principle, neuron activations in the residual stream of a transformer-based language model should all be at about the same scale. In practice, the dimensions vary unexpectedly widely in scale. Mathematical theories of the transformer architecture do not predict this: they expect no dimension to be more important than any other. Is there something wrong with our reasonably informed intuitions of how transformers work? What explains these outlier channels?

Previously, Anthropic investigated these privileged basis dimensions (dimensions that are more important or larger than expected) and ruled out several causes. By elimination, they arrived at the hypothesis that per-channel normalization in the Adam optimizer causes the privileged basis. However, they did not prove that this was the case.
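As a small illustration of why per-channel normalization can single out a basis, the sketch below compares plain SGD with the first bias-corrected Adam step on a 2D gradient. It is only a toy demonstration under stated assumptions, not the experiment from the post: the function names, learning rate, and rotation angle are all chosen here for illustration. On the first step Adam's moment estimates reduce to the gradient and its square, so each coordinate moves by roughly `lr * sign(g)`, and that depends on which axes the gradient is expressed in.

```python
import math

def sgd_step(grad, lr=0.1):
    return [-lr * g for g in grad]

def adam_first_step(grad, lr=0.1, eps=1e-8):
    # After bias correction, step one has m_hat = g and v_hat = g**2 per coordinate,
    # so the update is approximately -lr * sign(g) in every channel.
    return [-lr * g / (math.sqrt(g * g) + eps) for g in grad]

def rotate(vec, theta):
    c, s = math.cos(theta), math.sin(theta)
    x, y = vec
    return [c * x - s * y, s * x + c * y]

theta = math.pi / 6
grad = [1.0, 0.0]

# SGD: rotating the update equals updating with the rotated gradient.
sgd_a = rotate(sgd_step(grad), theta)
sgd_b = sgd_step(rotate(grad, theta))

# Adam: the two orders disagree, so the update depends on the choice of basis.
adam_a = rotate(adam_first_step(grad), theta)
adam_b = adam_first_step(rotate(grad, theta))

sgd_gap = max(abs(p - q) for p, q in zip(sgd_a, sgd_b))
adam_gap = max(abs(p - q) for p, q in zip(adam_a, adam_b))
print(sgd_gap, adam_gap)
```

The SGD gap is zero up to floating-point error, while the Adam gap is not. This shows only that the update rule is tied to the coordinate axes. It does not by itself show that outlier channels emerge during training.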

We conclusively show that Adam causes outlier channels / privileged...

What if interpretability is a spectrum?

Instead of one massive model, imagine that at inference time you generate a small, query-specific model by carving out a small subset of the big model.

Interp on this smaller model for a given query has to be better than on the order-of-magnitude bigger model.

Inference gets substantially faster if you can cluster/batch similar queries together, so you can actually get financial support if this works at the frontier.

The core insight? Gradients smear knowledge everywhere. Tag training data with an ontology learned ...