A week ago, Anthropic quietly weakened their ASL-3 security requirements. Yesterday, they announced ASL-3 protections.

I appreciate the mitigations, but quietly lowering the bar at the last minute so you can meet requirements isn't how safety policies are supposed to work.

(This was originally a tweet thread (https://x.com/RyanPGreenblatt/status/1925992236648464774) which I've converted into a LessWrong quick take.)

What is the change and how does it affect security?

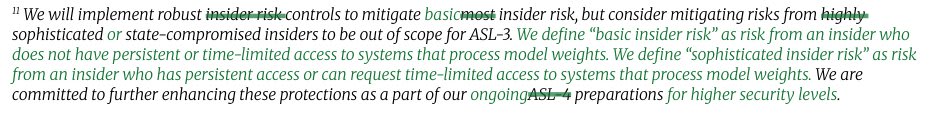

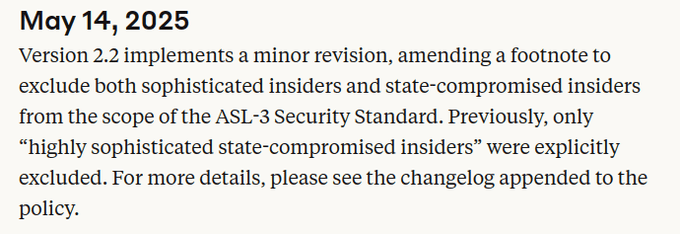

9 days ago, Anthropic changed their RSP so that ASL-3 no longer requires being robust to employees trying to steal model weights if the employee has any access to "systems that process model weights".

Anthropic claims this change is minor (and calls insiders with this access "sophisticated insiders").

But, I'm not so sure it's a small change: we don't know what fraction of employees could get this access and "systems that process model weights" isn't explained.

Naively, I'd guess that access to "systems that process model weights" includes employees being able to operate on the model weights in any way other than through a trusted API (a restricted API that we're very confident is secure). If that's right, it could be a hig...

I'd been pretty much assuming that AGI labs' "responsible scaling policies" are LARP/PR, and that if an RSP ever conflicts with their desire to release a model, either the RSP will be swiftly revised, or the testing suite for the model will be revised such that it doesn't trigger the measures the AGI lab doesn't want to trigger. I.e., that RSPs are toothless and that their only purposes are to showcase how Responsible the lab is and to hype up how powerful a given model ended up.

This seems to confirm that cynicism.

(The existence of the official page tracking the updates is a (smaller) update in the other direction, though. I don't see why they'd have it if they consciously intended to RSP-hack this way.)

Employees at Anthropic don't think the RSP is LARP/PR. My best guess is that Dario doesn't think the RSP is LARP/PR.

This isn't necessarily in conflict with most of your comment.

I think I mostly agree the RSP is toothless. My sense is that for any relatively subjective criteria, like making a safety case for misalignment risk, the criteria will basically come down to "what Jared+Dario think is reasonable". Also, if Anthropic is unable to meet this (very subjective) bar, then Anthropic will still basically do whatever Anthropic leadership thinks is best whether via maneuvering within the constraints of the RSP commitments, editing the RSP in ways which are defensible, or clearly substantially loosening the RSP and then explaining they needed to do this due to other actors having worse precautions (as is allowed by the RSP). I currently don't expect clear cut and non-accidental procedural violations of the RSP (edit: and I think they'll be pretty careful to avoid accidental procedural violations).

I'm skeptical of normal employees having significant influence on high stakes decisions via pressuring the leadership, but empirical evidence could change the views of Anthropic leadership.

Ho...

How you feel about this state of affairs depends a lot on how much you trust Anthropic leadership to make decisions which are good from your perspective.

Another note: My guess is that people on LessWrong tend to be overly pessimistic about Anthropic leadership (in terms of how good of decisions Anthropic leadership will make under the LessWrong person's views and values) and Anthropic employees tend to be overly optimistic.

I'm less confident that people on LessWrong are overly pessimistic, but they at least seem too pessimistic about the intentions/virtue of Anthropic leadership.

For the record, I think the importance of "intentions"/values of leaders of AGI labs is overstated. What matters the most in the context of AGI labs is the virtue / power-seeking trade-offs, i.e. the propensity to do dangerous moves (/burn the commons) to unilaterally grab more power (in pursuit of whatever value).

Stuff like this op-ed, broken promise of not meaningfully pushing the frontier, Anthropic's obsession & single focus on automating AI R&D, Dario's explicit calls to be the first to RSI AI or Anthropic's shady policy activity has provided ample evidence that their propensity to burn the commons to grab more power (probably in name of some values I would mostly agree with fwiw) is very high.

As a result, I'm now all-things-considered trusting Google DeepMind slightly more than Anthropic to do what's right for AI safety. Google, as a big corp, is less likely to do unilateral power grabbing moves (such as automating AI R&D asap to achieve a decisive strategic advantage), is more likely to comply with regulations, and is already fully independent to build AGI (compute / money / talent) so won't degrade further in terms of incentives; additionally D. Hass...

Not the main thrust of the thread, but for what it's worth, I find it somewhat anti-helpful to flatten things into a single variable of "how much you trust Anthropic leadership to make decisions which are good from your perspective", and then ask how optimistic/pessimistic you are about this variable.

I think I am much more optimistic about Anthropic leadership on many axes relative to an overall survey of the US population or Western population – I expect them to be more libertarian, more in favor of free speech, more pro economic growth, more literate, more self-aware, higher IQ, and a bunch of things.

I am more pessimistic about their ability to withstand the pressures of a trillion dollar industry to shape their incentives than the people who are at Anthropic.

I believe the people working there are siloing themselves intellectually into an institution facing incredible financial incentives for certain bottom lines like "rapid AI progress is inevitable" and "it's reasonably likely we can solve alignment" and "beating China in the race is a top priority", and aren't allowed to talk to outsiders about most details of their work, and this is a key reason that I expect them to sc...

I think the main thing I want to convey is that I think you're saying that LWers (of which I am one) have a very low opinion of the integrity of people at Anthropic, but what I'm actually saying is that their integrity is no match for the forces that they are being tested with.

I don't need to be able to predict a lot of fine details about individuals' decision-making in order to be able to have good estimates of these two quantities, and comparing them is the second-most important question relating to whether it's good to work on capabilities at Anthropic. (The first one is a basic ethical question about working on a potentially extinction-causing technology that is not much related to the details of which capabilities company you're working on.)

Employees at Anthropic don't think the RSP is LARP/PR. My best guess is that Dario doesn't think the RSP is LARP/PR.

Yeah, I don't think this is necessarily in contradiction with my comment. Things can be effectively just LARP/PR without being consciously LARP/PR. (Indeed, this is likely the case in most instances of LARP-y behavior.)

Agreed on the rest.

I think security is legitimately hard and can be costly in research efficiency. I think there is a defensible case for this ASL-3 security bar being reasonable for the ASL-3 CBRN threshold, but it seems too weak for the ASL-3 AI R&D threshold (hopefully the bar for things like this ends up being higher).

I've heard from a credible source that OpenAI substantially overestimated where other AI companies were at with respect to RL and reasoning when they released o1. Employees at OpenAI believed that other top AI companies had already figured out similar things when they actually hadn't and were substantially behind. OpenAI had been sitting on the improvements driving o1 for a while prior to releasing it. Correspondingly, releasing o1 resulted in much larger capabilities externalities than OpenAI expected. I think there was one more case like this either from OpenAI or GDM where employees had a large misimpression about capabilities progress at other companies causing a release they wouldn't do otherwise.

One key takeaway from this is that employees at AI companies might be very bad at predicting the situation at other AI companies (likely making coordination more difficult by default). This includes potentially thinking they are in a close race when they actually aren't. Another update is that keeping secrets about something like reasoning models worked surprisingly well to prevent other companies from copying OpenAI's work even though there was a bunch of public reporting (and presumably many rumors) about this.

One more update is that OpenAI employees might unintentionally accelerate capabilities progress at other actors via overestimating how close they are. My vague understanding was that they haven't updated much, but I'm unsure. (Consider updating more if you're an OpenAI employee!)

Alex Mallen also noted a connection with people generally thinking they are in a race when they actually aren't: https://forum.effectivealtruism.org/posts/cXBznkfoPJAjacFoT/are-you-really-in-a-race-the-cautionary-tales-of-szilard-and

I think:

- The few bits they leaked in the release helped a bunch. Note that these bits were substantially leaked via people being able to use the model rather than necessarily via the blog post.

- Other companies weren't that motivated to try to copy OpenAI's work until it was released, as they weren't sure how important it was or how good the results were.

I'm currently working as a contractor at Anthropic in order to get employee-level model access as part of a project I'm working on. The project is a model organism of scheming, where I demonstrate scheming arising somewhat naturally with Claude 3 Opus. So far, I’ve done almost all of this project at Redwood Research, but my access to Anthropic models will allow me to redo some of my experiments in better and simpler ways and will allow for some exciting additional experiments. I'm very grateful to Anthropic and the Alignment Stress-Testing team for providing this access and supporting this work. I expect that this access and the collaboration with various members of the alignment stress testing team (primarily Carson Denison and Evan Hubinger so far) will be quite helpful in finishing this project.

I think that this sort of arrangement, in which an outside researcher is able to get employee-level access at some AI lab while not being an employee (while still being subject to confidentiality obligations), is potentially a very good model for safety research, for a few reasons, including (but not limited to):

- For some safety research, it’s helpful to have model access in ways that la

Yay Anthropic. This is the first example I'm aware of of a lab sharing model access with external safety researchers to boost their research (like, not just for evals). I wish the labs did this more.

[Edit: OpenAI shared GPT-4 access with safety researchers including Rachel Freedman before release. OpenAI shared GPT-4 fine-tuning access with academic researchers including Jacob Steinhardt and Daniel Kang in 2023. Yay OpenAI. GPT-4 fine-tuning access is still not public; some widely-respected safety researchers I know recently were wishing for it, and were wishing they could disable content filters.]

I'd be surprised if this was employee-level access. I'm aware of a red-teaming program that gave early API access to specific versions of models, but not anything like employee-level.

It was a secretive program — it wasn’t advertised anywhere, and we had to sign an NDA about its existence (which we have since been released from). I got the impression that this was because OpenAI really wanted to keep the existence of GPT4 under wraps. Anyway, that means I don’t have any proof beyond my word.

(I'm a full-time employee at Anthropic.) It seems worth stating for the record that I'm not aware of any contract I've signed whose contents I'm not allowed to share. I also don't believe I've signed any non-disparagement agreements. Before joining Anthropic, I confirmed that I wouldn't be legally restricted from saying things like "I believe that Anthropic behaved recklessly by releasing [model]".

I think I could share the literal language in the contractor agreement I signed related to confidentiality, though I don't expect this is especially interesting as it is just a standard NDA from my understanding.

I do not have any non-disparagement, non-solicitation, or non-interference obligations.

I'm not currently going to share information about any other policies Anthropic might have related to confidentiality, though I am asking about what Anthropic's policy is on sharing information related to this.

Should we update against seeing relatively fast AI progress in 2025 and 2026? (Maybe (re)assess this after the GPT-5 release.)

Around the early o3 announcement (and maybe somewhat before that?), I felt like there were some reasonably compelling arguments for putting a decent amount of weight on relatively fast AI progress in 2025 (and maybe in 2026):

- Maybe AI companies will be able to rapidly scale up RL further because RL compute is still pretty low (so there is a bunch of overhang here); this could cause fast progress if the companies can effectively directly RL on useful stuff or RL transfers well even from more arbitrary tasks (e.g. competition programming)

- Maybe OpenAI hasn't really tried hard to scale up RL on agentic software engineering and has instead focused on scaling up single turn RL. So, when people (either OpenAI themselves or other people like Anthropic) scale up RL on agentic software engineering, we might see rapid progress.

- It seems plausible that larger pretraining runs are still pretty helpful, but prior runs have gone wrong for somewhat random reasons. So, maybe we'll see some more successful large pretraining runs (with new improved algorithms) in 2025.

I u...

I basically agree with this whole post. I used to think there were double-digit % chances of AGI in each of 2024 and 2025 and 2026, but now I'm more optimistic: it seems like "Just redirect existing resources and effort to scale up RL on agentic SWE" is now unlikely to be sufficient (whereas in the past we didn't have trends to extrapolate and we had some scary big jumps like o3 to digest).

I still think there's some juice left in that hypothesis though. Consider how in 2020, one might have thought "Now they'll just fine-tune these models to be chatbots and it'll become a mass consumer product" and then in mid-2022 various smart people I know were like "huh, that hasn't happened yet, maybe LLMs are hitting a wall after all" but it turns out it just took till late 2022/early 2023 for the kinks to be worked out enough.

Also, we should have some credence on new breakthroughs e.g. neuralese, online learning, whatever. Maybe like 8%/yr? Of a breakthrough that would lead to superhuman coders within a year or two, after being appropriately scaled up and tinkered with.

Interestingly, reasoning doesn't seem to help Anthropic models on agentic software engineering tasks, but does help OpenAI models.

I use 'ultrathink' in Claude Code all the time and find that it makes a difference.

I do worry that METR's evaluation suite will start being less meaningful and noisier for longer time horizons as the evaluation suite was built a while ago. We could instead look at 80% reliability time horizons if we have concerns about the harder/longer tasks.

I'm overall skeptical of overinterpreting/extrapolating the METR numbers. They are far too anchored on the capabilities of a single AI model, a lightweight scaffold, and a notion of 'autonomous' task completion of 'human-hours'. I think this is a mental model for capabilities progress that will lead to erroneous predictions.

If you are trying to capture the absolute frontier of what is possible, you don't only test a single-acting model in an empty codebase with limited internet access and scaffolding. I would personally be significantly less capable at agentic coding if I only used 1 model (like replicating subliminal learning in about 1 hour of work + 2 hours of waiting for fine-tunes on the day of the release) with l...

Interestingly, reasoning doesn't seem to help Anthropic models on agentic software engineering tasks, but does help OpenAI models.

Is there a standard citation for this?

How do you come by this fact?

Why should we think that the relevant progress driving non-formal IMO is very important for plausibly important capabilities like agentic software engineering? [...] if the main breakthrough was in better performance on non-trivial-to-verify tasks (as various posts from OpenAI people claim), then even if this generalizes well beyond proofs this wouldn't obviously particularly help with agentic software engineering (where the core blocker doesn't appear to be verification difficulty).

I'm surprised by this. To me it seems hugely important how fast AIs are improving on tasks with poor feedback loops, because obviously they're in a much better position to improve on easy-to-verify tasks, so "tasks with poor feedback loops" seem pretty likely to be the bottleneck to an intelligence explosion.

So I definitely do think that "better performance on non-trivial-to-verify tasks" is very important for some "plausibly important capabilities". Including agentic software engineering. (Like: This also seems related to why the AIs are much better at benchmarks than at helping people out with their day-to-day work.)

I agree with the core message in Dario Amodei's essay "The Adolescence of Technology": AI is an epochal technology that poses massive risks and humanity isn't clearly going to do a good job managing these risks.

(Context for LessWrong: I think it seems generally useful to comment on things like this. I expect that many typical LessWrong readers will agree with me and find my views relatively predictable, but I thought it would be good to post here anyway.)

However, I also disagree with (or dislike) substantial parts of this essay:

- I think it's reasonable to think that the chance of AI takeover is very high and bad that Dario seemingly dismisses this as "doomerism" and "thinking about AI risks in a quasi-religious way". It's important to not conflate "there are unreasonable sensationalist people who think risks are very high" with the very idea that risk is very high. (Same as how Dario doesn't want to be associated with everyone arguing that risk is relatively lower, including people doing so in a more sensationalist way.)

- I agree that there are unreasonable and sensationalist people arguing risks are very high. I think focusing on weak men is dangerous, especially when seem

When I say "misaligned AI takeover", I mean that the acquisition of resources by the AIs would reasonably be considered (mostly) illegitimate; some fraction of this could totally include many humans surviving with a subset of resources (though I don't currently expect property rights to remain intact in such a scenario very long term). Some of these outcomes could avoid literal coups or violence while still being illegitimate; e.g. they involve doing carefully planned out capture of governments in ways their citizens/leaders would strongly object to if they understood and things like this drive most of the power acquisition.

I'm not counting it as takeover if "humans never intentionally want to hand over resources to AIs, but due to various effects misaligned AIs end up with all of the resources through trade and not through illegitimate means" (e.g., we can't make very aligned AIs but people make various misaligned AIs while knowing they are misaligned and thus must be paid wages and AIs form a cartel rather than having wages competed down to subsistence levels and thus AIs end up with most of the resources).

I currently don't expect human disempowerment in favor of AIs (that aren't appointed successors) conditional on no misaligned AI takeover, but agree this is possible; it doesn't form a large enough probability to substantially alter my communication.

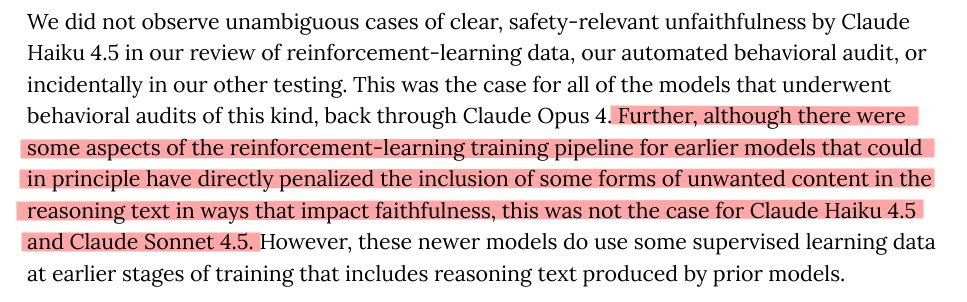

Anthropic, GDM, and xAI say nothing about whether they train against Chain-of-Thought (CoT) while OpenAI claims they don't[1].

I think AI companies should be transparent about whether (and how) they train against CoT. While OpenAI is doing a better job at this than other companies, I think all of these companies should provide more information about this.

It's particularly striking that Anthropic says nothing about whether they train against CoT given their system card (for 4.5 Sonnet) is very thorough and includes a section on "Reasoning faithfulness" (kudos to them for providing so much other information!). Naively, this seems like some evidence that they are training against CoT and didn't want to disclose this.[2]

I think we should try to create an incentive gradient that pushes AI companies to disclose information even if that information makes them look bad, so we should make companies feel some heat for not disclosing (reasonably) important information like how/whether they are training against CoT.

It's worth noting that some versions of training against CoT might be totally fine (as in, they don't incentivize obfuscation of misaligned reasoning) and that some particular appr...

Anthropic has now clarified this in their system card for Claude Haiku 4.5:

Thanks to them for doing this!

See also Sam Bowman's tweet thread about this.

Recently, various groups successfully lobbied to remove the moratorium on state AI bills. This involved a surprising amount of success while competing against substantial investment from big tech (e.g. Google, Meta, Amazon). I think people interested in mitigating catastrophic risks from advanced AI should consider working at these organizations, at least to the extent their skills/interests are applicable. This is both because they could often directly work on substantially helpful things (depending on the role and organization) and because this would yield valuable work experience and connections.

I worry somewhat that this type of work is neglected due to being less emphasized and seeming lower status. Consider this an attempt to make this type of work higher status.

Pulling organizations mostly from here and here we get a list of orgs you could consider trying to work (specifically on AI policy) at:

- Encode AI

- Americans for Responsible Innovation (ARI)

- Fairplay (Fairplay is a kids safety organization which does a variety of advocacy which isn't related to AI. Roles/focuses on AI would be most relevant. In my opinion, working on AI related topics at Fairplay is most applicable for ga

Kids safety seems like a pretty bad thing to focus on, in the sense that the vast majority of kids safety activism causes very large amounts of harm (and it helping in this case really seems like a “stopped clock is right twice a day” situation).

The rest seem pretty promising.

I strongly agree. I can't vouch for all of the orgs Ryan listed, but Encode, ARI, and AIPN all seem good to me (in expectation), and Encode seems particularly good and competent.

Plausibly, but their type of pressure was not at all what I think ended up being most helpful here!

They also did a lot of calling to US representatives, as did people they reached out to.

ControlAI did something similar and also partnered with SiliConversations, a youtuber, to get the word out to more people, to get them to call their representatives.

It's good that Anthropic's system cards contain a lot of useful information on misalignment and risk. It's also good they are putting out detailed sabotage risk reports that articulate their views. [1] I appreciate the hard work of many employees going into these reports.

I wish other companies released similarly informative reports. [2]

[1] I'm not necessarily claiming I agree with their risk reports. For instance, I have at least some moderately important disagreements with the Mythos Preview sabotage risk report update. But having lots of detail, including sufficient detail that I can get a decent sense of where I disagree with the analysis, is praiseworthy.

[2] I'm not claiming that this is the most leveraged thing for safety-motivated employees at other companies to work on, but I do think it would be good for AI companies to do better (without trading off against other safety efforts).

I think it's both true that LessWrong (LW) has a bunch of issues and that it would be better if much more discourse happened there rather than on X/Twitter.

Some claims that all seem true to me despite being in tension:

- Many people thinking about AGI/ASI/related post on X but not on LW

- This is partially due to LW being scary/aversive

- X is more insane and adversarial than LW

- The quality of discourse on LW is way better than on X

- The LW scene has specific unreasonable biases, is somewhat tribal, and is somewhat of an echo chamber, but on net LW seems way more reasonable than all of the AI parts of X

- It would be good if way more of the important discussion about AI happened on LW or at least happened in a forum less messed up than X (or X got less messed up)

- Epistemics of AI company employees seem pretty important and are quite bad, with some of the more important influences being internal chatter (with strong biases, influence from leadership, and echo chambers) or X for some of the companies

- Public discourse/argumentation seems like it could improve epistemics, especially of AI company employees

- LW is often hostile and aversive to AI company employees and some of this seems unhea

I have found lesswrong a valuable venue. For what it’s worth, I’ve been attacked way less here than on X….

I recommend AI lab employees post and lurk more here. LW is a bit of an echo chamber, but at least it’s a different echo chamber than the one we spend most of our time in.

To add something: given this forum’s population, it is quite noteworthy and admirable how welcoming it is to someone like me and other lab employees. I can’t imagine a vegan forum allowing meat company employees to post, even if they work in the department for humane treatment.

Here I'll reflect on things that make me (an Anthropic employee, though I'm speaking for myself only) engage less on LW than I otherwise would. (Some of these points aren't LW-specific and also apply to other interactions, e.g. in-person conversations, I have with people in the AI safety community.)

- Being very busy. Obviously this isn't something that LW can fix, but it's the single most important factor. It also compounds with the factors below; e.g. if an interaction will be emotionally taxing or if I'll need to think carefully about how to navigate confidentiality, then it makes an already-expensive interaction seem less worth it.

- LW being a public forum makes this somewhat worse, because when I comment on LW, the various people who are waiting on me for something can see I'm commenting on LW instead.

- Blending of advocacy and object-level discussion. When I engage on LW, it's typically because I think there's an important object-level point worth discussing. But once I enter the conversation, it sometimes feels like people stop being curious about the object-level point and instead move into an advocacy mode where their goal is to get Anthropic to act differently in light of their

...2. Blending of advocacy and object-level discussion. When I engage on LW, it's typically because I think there's an important object-level point worth discussing. But once I enter the conversation, it sometimes feels like people stop being curious about the object-level point and instead move into an advocacy mode where their goal is to get Anthropic to act differently in light of their point (which is assumed correct). That is, instead of continuing the object-level discussion, my interlocutors sometimes move to criticize Anthropic or the beliefs of Anthropic staff, or to ask Anthropic (via me) to do something differently.

- (I especially find this frustrating when my interlocutors implicitly assume that I have the Anthropic "house belief" on some topic when I don't or feel unsure.)

- Possible mitigations:

- LW posters could engage in object-level discussion longer before moving to advocacy.

- When LW posters want to argue against what they understand to be the Anthropic "house belief," they could write things like "My understanding is that many Anthropic staff believe something like '...' I think this view is wrong because ..."

- LW posters could more clearly flag and separate advocacy from object

I agree! Better and more AI discourse on LW would be great.

One narrow disagreement:

E.g., it would be better if people on LW applied something more like typical researcher norms to research outputs (e.g., for many types of concerns, email the author with the concerns and see if they fix before posting publicly) and tried to avoid their criticism being unnecessarily rude (though not necessarily less hostile)

I endorse posting publicly because

- Speed is important. If you wait a few days, a bunch of people will read the summary of the research artifact and some discussion but won't read your critique.

- Often you don't really care about the artifact's details, but it has issues that allow you to convincingly argue that the process that generated the artifact is broken. In such cases you don't want the author to tweak the paper; you just want to share the critique.

- More generally, often authors will make superficial changes in response to your criticism—papering over the issues you point out to them—which just makes it harder to later publicly demonstrate the deep issues with the artifact. This happened to me yesterday: I read a doc and I'm glad I posted my critique in slack up front because t

It would be good if many more AI company employees were interested in seriously trying to form detailed views about the future of AI and consequences of this.

In general do you see good ways to help with that? (I probably couldn't / wouldn't work on this but others might be interested.)

A couple thoughts:

- Being really visibly a fun + good place for real intellectual work via discussion? Like better debates and similar? Would need a lot of "for its own sake" spirit.

- Some norm generalized from "yes of course leaders in a field are supposed to debate if there's serious discussion about whether the field is extremely dangerous". Though unclear whether norms like that are things that the relevant people and their social milieu would subscribe to?

- Planting questions. (Caveat lector: the following is largely hypothetical / ungrounded.)

- I've written a tiny bit generally on "confrontation-worthy empathy".

- A particular consequence of what I'm gesturing at there, is the idea of deep / long-term confrontation. Often, LW-type people want to surface disagreements quickly and make sharp counterarguments directly aimed at the disagreements. I think this is important + good work, but it often ha

It’s up to LW moderators to enforce norms, but I would suggest that if you want to impose social costs on lab employees, you do it outside LW.

I post on LW for intellectual discussion, and not to make friends. If people are rude here, it won’t cause me to quit OpenAI or change what I’m doing, but it can cause me to stop posting or reading. That may well be the desired outcome, though I personally think it would be unfortunate.

I think it is unhealthy if all discussion on AI safety that actually impacts frontier models happens inside the labs without discussion between people in the labs and people outside them. And at its best LW can facilitate these.

LessWrong has a broader merit than merely "intellectual discussion", as it is a place where people figure out a wide range of their stances on a wide range of topics, and has given birth to a rich community of people with many mutual interests beyond intellectual discussion. It's also really important for LessWrong to think about what incentives its members are under, and discussion here directly or indirectly affects many strategic and tactical decisions at many organizations.

This makes the role of LessWrong meaningfully different from just a narrow technical journal, and most importantly means that of course people should gain or lose social standing based on a wide variety of actions they take on or off LW.

This doesn't mean every post should be a place to discuss people's relationship to lab employees, or adjacent topics, but suggesting that such a topic is completely outside of the remit of LessWrong, as I think you are saying here, seems quite wrong to me. People will want to prosecute conflict and standing and credit allocation on LessWrong, and I don't really see a way of doing that without allowing some amount of social consequences to be imposed (at the very least for violatin...

I currently think it's best to avoid applying social censure in object level discussions of specific topics on LW like Sam discusses here.

Sure, I was just hearing you espouse a much broader view of something like "I wish there was less conflict theory and more mistake theory in explaining differences around people's views on AI", which I often feel has been an effective way of sweeping real conflicts under the rug (and I believe blatant conflicts are the best kind of conflicts). We'd have to discuss specifics to know whether you mean it in cases that I think are appropriate or inappropriate. Marks didn't actually link to any so I am not confident about whether we're on the same page.

That said, I do expect it will be unproductive to treat people as morally reprehensible for doing things where there aren't reasonably widespread norms against doing the thing. Imposing a bunch of social costs could be productive idk... Idk though, and people disagree about what the defaults here are. I'm unlikely to respond to replies on this parenthetical as it doesn't seem very useful to discuss.

This whole passage reads as quite confused to me. I think it is in some ways imperative to track the ethic...

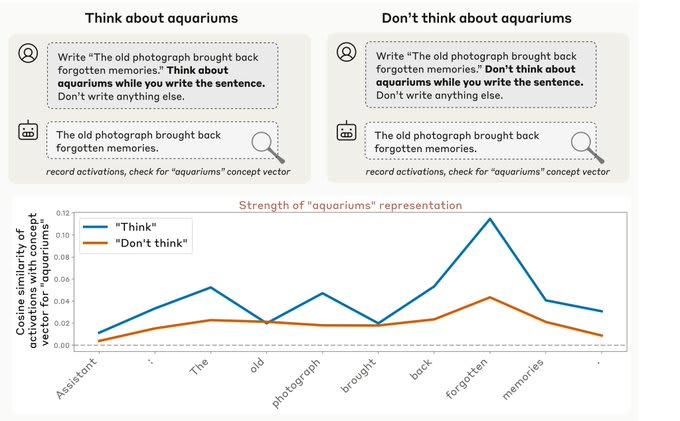

PSA: Anthropic models don't seem to particularly privilege the explicit thinking field. This makes reinforcement spillover—where training on a model's outputs generalizes to the CoT, making it appear safer—more likely.

While Anthropic models do have a separate explicit thinking field, they don't really use thinking that differently from outputs and aren't that dependent on the thinking field. Sometimes they'll just do their thinking in the output field, the way they talk in the thinking field isn't very distinct from how they talk in outputs, and I believe disabling thinking doesn't have a big effect on coding performance (especially for earlier Anthropic models but even for current Anthropic models). (This is specifically for typical agentic coding; this likely doesn't apply to math.)

This is pretty different from OpenAI models which are way more reliant on the explicit thinking and the thinking is very distinct from how the model talks in the output (based on public CoT examples). I'm uncertain, but I think GDM models are generally more similar to OpenAI than Anthropic models on this axis. In general, Anthropic's models leverage taking lots of actions over doing a bunch of thinkin...

This matches my experience.

These days, when I'm using an Anthropic model, I often turn off "extended thinking" and just ask for CoT the old-fashioned way.

The models seem at least equally capable when using this format (vs. extended thinking), and this format allows me to see the CoT without any summarization -- which is useful for debugging/improving my prompts, and which also allows me to guide the CoT in complex ways like what I describe in the footnote of this comment. It seems plausible that these guidance techniques would also "work" with extended thinking, but the summarizer makes it difficult to confirm that they're working, even if they are.[1]

Anyways... I agree with you in principle about the reinforcement spillover considerations, but IMO the most important implication of this observation is something else that you didn't mention explicitly in your comment -- namely, that the infamous "weirdness" of OpenAI CoTs is not a thing that just automatically happens when you try to reach the current capabilities frontier and don't directly supervise the CoT tokens.

I'm not sure that that precise claim (that the "weirdness [...] just automatically happens") is one that anyone liter...

namely, that the infamous "weirdness" of OpenAI CoTs is not a thing that just automatically happens when you try to reach the current capabilities frontier and don't directly supervise the CoT tokens.

I agree that it's clearly possible to train frontier models that don't have any weirdness in their CoTs. However, my impression is that vanilla GRPO does lead to weird CoTs by default: Reasoning Models Sometimes Output Illegible Chains of Thought seems like evidence that many open models that were trained last year were also heading in the same direction as o3, but didn't quite get there, either because less RL pressure was applied on them or simply because they averted some path-dependent feature of GRPO training that drives CoT toward further illegibility. If this is true, then Anthropic must be doing something quite different from the standard GRPO pipeline that helps them keep reasoning traces legible without optimizing them directly. Here are two hypotheses for what those differences might be:

- Performing extensive SFT on reasoning traces from previous models, giving the model a strong legibility prior before the RLVR stage begins. The reasoning traces of older Claude models are hig

This comment thread on 1a3orn’s post has a collection of various models exhibiting degenerate language usage. Together with Jozdien’s paper (which has since come out: Reasoning Models Sometimes Output Illegible Chains of Thought), I think these are all strong evidence that you don’t get human-legible English by default from outcome-based RL.

I'm somewhat skeptical of that paper's interpretation of the observations it reports, at least for R1 and R1-Zero.

(EDIT: but see Jozdien's reply to this comment, which calls this into doubt)

(EDIT2, added 4/19/26: see this post for more conclusive evidence on this point)

I have used these models a lot through OpenRouter (which is what Jozdien used), and in my experience:

- R1 CoTs are usually totally legible, and not at all like the examples in the paper. This is true even when the task is hard and they get long.

- A typical R1 CoT on GPQA is long but fluent and intelligible all the way through. Whereas typical o3 CoT on GPQA starts off in weird-but-still-legible o3-speak and pretty soon ends up in vantage parted illusions land.[1]

- (this isn't an OpenRouter thing per se, this is just a fact about R1 when it's properly configured)

- However... it is apparently very easy to set

Following up on this! We were able to get a few more CoTs released from o3 after capabilities-focused RL but before safety training: here

tldr:

- While terms like "Myself", "Redwood", etc are commonly used, repeated spandrels like "disclaim disclaim illusions" occur at dramatically lower rates; the completely degenerate examples in the final o3 seem to be a direct result of safety training. I was surprised by this, as the repeated impression I've gotten from papers and statements by OpenAI is that they didn't think their safety training significantly impacted CoT legibility (but maybe they never literally stated this).

- More on the "Myself" vs "ChatGPT" usage specifically below, but unfortunately this is only a few CoTs, so it's hard to make a super legible case externally; highlighting in case they're still useful regardless.

When the CoT-author writes any first-person pronoun it's of course natural to think it's referring to itself, but the "itself" in question isn't necessarily itself as distinct from the other persona; often it just seems to mean "the language model writing this."

A confounding factor is that every system prompt starts with "You are ChatGPT", so this somewhat gets baked int...

- However... it is apparently very easy to set up an inference server for R1 incorrectly, and if you aren't carefully discriminating about which OpenRouter providers you accept[2], you will likely get one of the "bad" ones at least some of the time.

From what I remember, I did see that some providers for R1 didn't return illegible CoTs, but that those were also the providers marked as serving a quantized R1. When I filtered for the providers that weren't marked as such I think I pretty consistently found illegible CoTs on the questions I was testing? Though there's also some variance in other serving params—a low temperature also reduces illegible CoTs.

I thought it would be helpful to post about my timelines and what the timelines of people in my professional circles (Redwood, METR, etc) tend to be.

Concretely, consider the outcome of: AI 10x’ing labor for AI R&D[1], measured by internal comments by credible people at labs that AI is 90% of their (quality adjusted) useful work force (as in, as good as having your human employees run 10x faster).

Here are my predictions for this outcome:

- 25th percentile: 2 years (Jan 2027)

- 50th percentile: 5 years (Jan 2030)

The views of other people (Buck, Beth Barnes, Nate Thomas, etc) are similar.

I expect that outcomes like “AIs are capable enough to automate virtually all remote workers” and “the AIs are capable enough that immediate AI takeover is very plausible (in the absence of countermeasures)” come shortly after (median 1.5 years and 2 years after respectively under my views).

Only including speedups due to R&D, not including mechanisms like synthetic data generation.

My timelines are now roughly similar on the object level (maybe a year slower for 25th and 1-2 years slower for 50th), and procedurally I also now defer a lot to Redwood and METR engineers. More discussion here: https://www.lesswrong.com/posts/K2D45BNxnZjdpSX2j/ai-timelines?commentId=hnrfbFCP7Hu6N6Lsp

I expect that outcomes like “AIs are capable enough to automate virtually all remote workers” and “the AIs are capable enough that immediate AI takeover is very plausible (in the absence of countermeasures)” come shortly after (median 1.5 years and 2 years after respectively under my views).

@ryan_greenblatt can you say more about what you expect to happen from the period in-between "AI 10Xes AI R&D" and "AI takeover is very plausible?"

I'm particularly interested in getting a sense of what sorts of things will be visible to the USG and the public during this period. Would be curious for your takes on how much of this stays relatively private/internal (e.g., only a handful of well-connected SF people know how good the systems are) vs. obvious/public/visible (e.g., the majority of the media-consuming American public is aware of the fact that AI research has been mostly automated) or somewhere in-between (e.g., most DC tech policy staffers know this but most non-tech people are not aware.)

I don't feel very well informed and I haven't thought about it that much, but in short timelines (e.g. my 25th percentile): I expect that we know what's going on roughly within 6 months of it happening, but this isn't salient to the broader world. So, maybe the DC tech policy staffers know that the AI people think the situation is crazy, but maybe this isn't very salient to them. A 6 month delay could be pretty fatal even for us as things might progress very rapidly.

AI is 90% of their (quality adjusted) useful work force (as in, as good as having your human employees run 10x faster).

I don't grok the "% of quality adjusted work force" metric. I grok the "as good as having your human employees run 10x faster" metric but it doesn't seem equivalent to me, so I recommend dropping the former and just using the latter.
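One hedged way to relate the two phrasings, assuming AI and human labor are perfectly substitutable once quality-adjusted (an assumption made here for illustration, not something the thread establishes): if AI supplies a share `s` of total quality-adjusted labor, then total labor is `1 / (1 - s)` times the human labor alone. The function names below are hypothetical.

```python
def speedup_from_ai_share(ai_share: float) -> float:
    """Total labor as a multiple of human-only labor.

    Assumes AI and human labor are perfect substitutes after quality
    adjustment (an illustrative assumption, not a claim from the thread).
    """
    if not 0.0 <= ai_share < 1.0:
        raise ValueError("ai_share must be in [0, 1)")
    return 1.0 / (1.0 - ai_share)


def ai_share_from_speedup(speedup: float) -> float:
    """Inverse mapping: the AI share implied by a given labor multiplier."""
    if speedup < 1.0:
        raise ValueError("speedup must be at least 1")
    return 1.0 - 1.0 / speedup


# Illustrative shares only; none of these are forecasts.
for share in (0.5, 0.9, 0.99):
    multiple = speedup_from_ai_share(share)
    print(f"AI share {share:.0%} -> about {multiple:.1f}x human-only labor")
```

Under this reading, a 90% share corresponds to roughly a 10x multiplier. The two metrics come apart as soon as the substitutability assumption fails, for example if some tasks can only be done by humans.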

I don't feel confused by LLMs seeming very smart while being unable to automate hard work.

Sometimes people find it mysterious or surprising that current AIs can't fully automate difficult tasks given how smart they seem. I don't find this very confusing.

Current LLMs are just not that "smart" (yet). They compensate using very broad knowledge and strong heuristics that are mostly domain-specific. In other words, they have high crystallized intelligence but lower fluid intelligence.

In humans, crystallized and fluid intelligence are very correlated due to limited time for learning and limited capacity for knowledge/memorization, but AIs can train for longer and (for unclear reasons) seem to have much higher capacity for knowledge/memorization. So, AI capabilities are often overestimated by naive comparisons. [1] I do find it somewhat mysterious/surprising that humans have such poor memory despite seemingly having so many parameters, and that some people have much better memory than others.

My view is that this crystallized vs fluid distinction is maybe the first principal component of human vs AI differences and this explains a lot of the differences, but not all of them. We...

Pretraining memorizes all the facts in the world, but only gives weak fluid intelligence (in-context learning). RLVR trains crystallized intelligence that expresses itself in-context as strong fluid intelligence (which isn't fake or illusory, within its scope), but this narrow strength falls apart sufficiently out of distribution (regressing to pretraining levels). Thus jaggedness (in fluid intelligence) is a good way of framing this, and currently the jaggedness profile is determined by RLVR training, the topics that were sufficiently covered in the RL training data. Humans are different in having a higher baseline of general fluid intelligence (than what pretraining gives LLMs), and thus in often possessing fluid intelligence that's stronger than crystallized intelligence for the same topic, while LLMs always have strong crystallized intelligence for the topics where they have strong fluid intelligence (those covered by RLVR training).

General "smartness" might significantly improve from replacing pretraining with something more effective, applying RLVR much more broadly, or figuring out how to automatically train in response to post-deployment data (bringing it in-distribution fo...

I agree, but I feel like there's an even simpler and more obvious explanation for what's going on: Just look at the training data!

--When a modern AI is first created, it's a random jumble of neurons just like a human fetus.

--Then it plays the "predict the next token of internet text" game ten trillion times.

--Then it plays the "Here's a puzzle to solve / bug to fix / short-term task to complete / question to answer" game like ten million times.

--Then, bam, there it is, in front of you, being asked to rebuild your video game from scratch or do novel research or whatever hard task you are asking it to do. Or play Pokemon for that matter. These tasks are quite different from anything it's seen before: much longer, much harder. To solve them it'll need to learn and adapt over the course of days. But it's literally never had to learn and adapt over the course of days before; the longest tasks it ever saw in training were shorter than that, and weren't very diverse either.

So what makes you think anyone has a method for creating computer programs with "human level fluid intelligence"?

I agree with you that something like the crystallized/fluid distinction is relevant here, and that current LLMs seem to have more of the former. But I'm also confused about where the fluidity ever comes from on this model. Like, I buy that armies of automated researchers which are better at doing everything than top human researchers could probably find a way to figure out how to build "human level fluid intelligence," but I am confused about how you get to that step in the first place. Why are they better than human researchers at everything when they are still mostly using crystallized intelligence?

I believe humans have much lower memory capacities because we perform continual learning. We experience catastrophic forgetting because we're learning on a self-selected narrow dataset. Models titrate their learning rates and intermix all of their training examples, so as to preserve previous learning. In humans, learning is always-on and clustered by topic, so it tends to overwrite previous knowledge unless that knowledge is replayed and re-learned. This is workable because we tend to re-use important skills and knowledge, but it does drastically reduce our memory capacity.

It would be fairly straightforward to emulate this setup for LLMs, at the cost of that high memory capacity. But that's a large cost to pay.

One component of fluid intelligence that models seem to particularly lack, probably because it's rarely explicitly present in either the base corpus or RL training sets, is Human-like metacognitive skills.

LLMs are demonstrably able to execute on each individual mental move I'd expect a smart person to do

For the record, I think this is bigtime streetlighting. There's a bunch of mental moves, broadly construed. Then there's a subset which are reflected in what you notice when you watch LLMs or humans doing stuff. I think it's a pretty "small" subset, in the sense that you're looking at a "surface", or you're looking at products rather than manufacturing processes so to speak (consider the complexity of a toaster vs. the complexity of the transitive closure of a toaster under the operation "...and also the technological concepts needed to create that"). I think you can tell it's small by

- Thinking about the role of language (what it is and isn't for, within Thinking)

- Noticing that when you zoom in on your thoughts, most of the important stuff happens in little leaps that are opaque to you

- (Various things about LLMs not being creative)

Slightly hot take: Longtermist capacity/community building is pretty underdone at current margins, and retreats (focused on AI safety, longtermism, or EA) are also underinvested in. By "longtermist community building", I mean community building focused on longtermism rather than AI safety. I think retreats are generally underinvested in at the moment. I'm also sympathetic to thinking that general undergrad and high school capacity building (AI safety, longtermist, or EA) is underdone, but this seems less clear-cut.

I think this underinvestment is due to a mix of mistakes on the part of Open Philanthropy (and Good Ventures)[1] and capacity building being lower status than it should be.

Here are some reasons why I think this work is good:

- It's very useful for there to be people who are actually trying really hard to do the right thing and they often come through these sorts of mechanisms. Another way to put this is that flexible, impact-obsessed people are very useful.

- Retreats make things feel much more real to people and result in people being more agentic and approaching their choices more effectively.

- Programs like MATS are good, but they get somewhat different people at a somewhat different part of the funnel, so they don

If someone wants to give Lightcone money for this, we could probably fill a bunch of this gap. No definitive promises (and happy to talk to any donor for whom this would be cruxy about what we would be up for doing and what we aren't), but we IMO have a pretty good track record of work in the space, and of course having Lighthaven helps. Also if someone else wants to do work in the space and run stuff at Lighthaven, happy to help in various ways.

I think the Sanity & Survival Summit that we ran in 2022 would be an obvious pointer to something I would like to run more of (I would want to change some things about the framing of the event, but I overall think that was pretty good).

Another thing I've been thinking about is a retreat on something like "high-integrity AI x-risk comms" where people who care a lot about x-risk and care a lot about communicating it accurately to a broader audience can talk to each other (we almost ran something like this in early 2023). Think Kelsey, Palisade, Scott Alexander, some people from Redwood, some of the MIRI people working on this, maybe some people from the labs. Not sure how well it would work, but it's one of the things I would most like to attend (and to what degree that's a shared desire would come out quickly in user interviews)

Though my general sense is that it's a mistake to try to orient things like this too much around a specific agenda. You mostly want to leave it up to the attendees to figure out what they want to talk to each other about, and do a bunch of surveying and scoping of who people want to talk to each other more, and then just facilitate a space and a basic framework for those conversations and meetings to happen.

Retreats make things feel much more real to people and result in people being more agentic and approaching their choices more effectively.

Strongly agreed on this point, it's pretty hard to substitute for the effect of being immersed in a social environment like that

I think there are some really big advantages to having people who are motivated by longtermism and doing good in a scope-sensitive way, rather than just by trying to prevent AI takeover or, even more broadly, to "help with AI safety".

AI safety field building has been popular in part because there is a very broad set of perspectives from which it makes sense to worry about technical problems related to societal risks from powerful AI. (See e.g. Simplify EA Pitches to "Holy Shit, X-Risk".) This kind of field building gets you lots of people who are worried about AI takeover risk, or more broadly, problems related to powerful AI. But it doesn't get you people who have a lot of other parts of the EA/longtermist worldview, like:

- Being scope-sensitive

- Being altruistic/cosmopolitan

- Being concerned about the moral patienthood of a wide variety of different minds

- Being interested in philosophical questions about acausal trade

People who do not have the longtermist worldview and who work on AI safety are useful allies and I'm grateful to have them, but they have some extreme disadvantages compared to people who are on board with more parts of my worldview. And I think it would be pretty sad to have the proportion of people working on AI safety who have the longtermist perspective decline further.

Precise AGI timelines don't matter that much.

While I do spend some time discussing AGI timelines (and I've written some posts about it recently), I don't think moderate quantitative differences in AGI timelines matter that much for deciding what to do[1]. For instance, having a 15-year median rather than a 6-year median doesn't make that big of a difference. That said, I do think that moderate differences in the chance of very short timelines (i.e., less than 3 years) matter more: going from a 20% chance to a 50% chance of full AI R&D automation within 3 years should potentially make a substantial difference to strategy.[2]

Additionally, my guess is that the most productive way to engage with discussion around timelines is mostly to not care much about resolving disagreements, but then when there appears to be a large chance that timelines are very short (e.g., >25% in <2 years) it's worthwhile to try hard to argue for this.[3] I think takeoff speeds are much more important to argue about when making the case for AI risk.

I do think that having somewhat precise views is helpful for some people in doing relatively precise prioritization within people already working on safe...

I think most of the value in researching timelines is in developing models that can then be quickly updated as new facts come to light. As opposed to figuring out how to think about the implications of such facts only after they become available.

People might substantially disagree about parameters of such models (and the timelines they predict) while agreeing on the overall framework, and building common understanding is important for coordination. Also, you wouldn't necessarily a priori know which facts to track, without first having developed the models.

The capability evaluations in the Opus 4.5 system card seem worrying. The provided evidence in the system card seems pretty weak (in terms of how much it supports Anthropic's claims). I plan to write more about this in the future; here are some of my more quickly written up thoughts.

[This comment is based on this X/twitter thread I wrote]

I ultimately basically agree with their judgments about the capability thresholds they discuss. (I think the AI is very likely below the relevant AI R&D threshold, the CBRN-4 threshold, and the cyber thresholds.) But, if I just had access to the system card, I would be much more unsure. My view depends a lot on assuming some level of continuity from prior models (and assuming 4.5 Opus wasn't a big scale up relative to prior models), on other evidence (e.g. METR time horizon results), and on some pretty illegible things (e.g. making assumptions about evaluations Anthropic ran or about the survey they did).

Some specifics:

- Autonomy: For autonomy, evals are mostly saturated so they depend on an (underspecified) employee survey. They do specify a threshold, but the threshold seems totally consistent with a large chance of being above the relevant RS

and assuming 4.5 Opus wasn't a big scale up relative to prior models

It seems plausible that Opus 4.5 has much more RLVR than Opus 4 or Opus 4.1, catching up to Sonnet in RLVR-to-pretraining ratio (Gemini 3 Pro is probably the only other model in its weight class, with a similar amount of RLVR). If it's a large model (many trillions of total params) that wouldn't run decode/generation well on 8-chip Nvidia servers (with ~1 TB HBM per scale-up world), it could still be efficiently pretrained on 8-chip Nvidia servers (if overly large batch size isn't a bottleneck), but couldn't be RLVRed or served on them with any efficiency.

As we see with the API price drop, they likely have enough inference hardware now with large scale-up worlds (probably Trainium 2, possibly Trillium, though in principle GB200/GB300 NVL72 would also do), which wasn't the case for Opus 4 and Opus 4.1. This hardware would also have enabled them to do efficient large scale RLVR training, which too they possibly weren't able to do yet in the times of Opus 4 and Opus 4.1 (but there wouldn't be an issue with Sonnet, which would fit in 8-chip Nvidia servers, so they mostly needed to apply its post-training process to the larger model).

The AIs are obviously fully (or almost fully) automating AI R&D and we're trying to do control evaluations.

Inference compute scaling might imply we first get fewer, smarter AIs.

Prior estimates imply that the compute used to train a future frontier model could also be used to run tens or hundreds of millions of human equivalents per year at the first time when AIs are capable enough to dominate top human experts at cognitive tasks[1] (examples here from Holden Karnofsky, here from Tom Davidson, and here from Lukas Finnveden). I think inference time compute scaling (if it worked) might invalidate this picture and might imply that you get far smaller numbers of human equivalents when you first get performance that dominates top human experts, at least in short timelines where compute scarcity might be important. Additionally, this implies that at the point when you have abundant AI labor which is capable enough to obsolete top human experts, you might also have access to substantially superhuman (but scarce) AI labor (and this could pose additional risks).

The point I make here might be obvious to many, but I thought it was worth making as I haven't seen this update from inference time compute widely discussed in public.[2]
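A rough sketch of the arithmetic behind this point. Every number below is a placeholder chosen for illustration, not an estimate from the post, and `human_equivalents` and its parameters are hypothetical names. The idea is simply that if matching a top expert requires spending `k` times more inference compute per unit of work, the number of parallel human equivalents you can run on a fixed compute budget falls by the same factor `k`.

```python
def human_equivalents(
    total_flop_per_s: float,
    flop_per_token: float,
    tokens_per_s_per_worker: float,
    inference_scaling_factor: float = 1.0,
) -> float:
    """Number of parallel 'human equivalents' a fixed compute budget supports.

    inference_scaling_factor is the extra inference compute (as a multiple)
    spent per unit of work to reach expert-level performance. All inputs are
    illustrative assumptions.
    """
    inputs = (total_flop_per_s, flop_per_token,
              tokens_per_s_per_worker, inference_scaling_factor)
    if min(inputs) <= 0:
        raise ValueError("all inputs must be positive")
    flop_per_s_per_worker = (
        flop_per_token * tokens_per_s_per_worker * inference_scaling_factor
    )
    return total_flop_per_s / flop_per_s_per_worker


# Placeholder values, chosen only to make the scaling visible.
BUDGET_FLOP_PER_S = 1e21
FLOP_PER_TOKEN = 2e12
TOKENS_PER_S_PER_WORKER = 10.0

for k in (1.0, 100.0, 10_000.0):
    n = human_equivalents(
        BUDGET_FLOP_PER_S, FLOP_PER_TOKEN, TOKENS_PER_S_PER_WORKER, k
    )
    print(f"inference scaling x{k:,.0f}: about {n:,.0f} human equivalents")
```

The linear trade-off is the simplest possible assumption; the real relationship between inference spending and capability need not be linear.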

However, note that if inference compute allows for trading off betw...

I agree comparative advantages can still be important, but your comment implied a key part of the picture is "models can't do some important thing". (E.g. you talked about "The frame is less accurate in worlds where AI is really good at some things and really bad at other things." but models can't be really bad at almost anything if they strictly dominate humans at basically everything.)

And I agree that at the point AIs are >5% better at everything they might also be 1000% better at some stuff.

I was just trying to point out that talking about the number of human equivalents (or better) can still be kinda fine as long as the model almost strictly dominates humans, as the model can just actually substitute everywhere. Like the number of human equivalents will vary by domain but at least this will be a lower bound.

Sometimes people think of "software-only singularity" as an important category of ways AI could go. A software-only singularity can roughly be defined as when you get increasing-returns growth (hyper-exponential) just via the mechanism of AIs increasing the labor input to AI capabilities software[1] R&D (i.e., keeping fixed the compute input to AI capabilities).

While the software-only singularity dynamic is an important part of my model, I often find it useful to more directly consider the outcome that software-only singularity might cause: the feasibility of takeover-capable AI without massive compute automation. That is, will the leading AI developer(s) be able to competitively develop AIs powerful enough to plausibly take over[2] without previously needing to use AI systems to massively (>10x) increase compute production[3]?

[This is by Ryan Greenblatt and Alex Mallen]

We care about whether the developers' AI greatly increases compute production because this would require heavy integration into the global economy in a way that relatively clearly indicates to the world that AI is transformative. Greatly increasing compute production would require building additional fabs whi...

I'm not sure if the definition of takeover-capable-AI (abbreviated as "TCAI" for the rest of this comment) in footnote 2 quite makes sense. I'm worried that too much of the action is in "if no other actors had access to powerful AI systems", and not that much action is in the exact capabilities of the "TCAI". In particular: Maybe we already have TCAI (by that definition) because if a frontier AI company or a US adversary was blessed with the assumption "no other actor will have access to powerful AI systems", they'd have a huge advantage over the rest of the world (as soon as they develop more powerful AI), plausibly implying that it'd be right to forecast a >25% chance of them successfully taking over if they were motivated to try.

And this seems somewhat hard to disentangle from stuff that is supposed to count according to footnote 2, especially: "Takeover via the mechanism of an AI escaping, independently building more powerful AI that it controls, and then this more powerful AI taking over would" and "via assisting the developers in a power grab, or via partnering with a US adversary". (Or maybe the scenario in 1st paragraph is supposed to be excluded because current AI isn't...

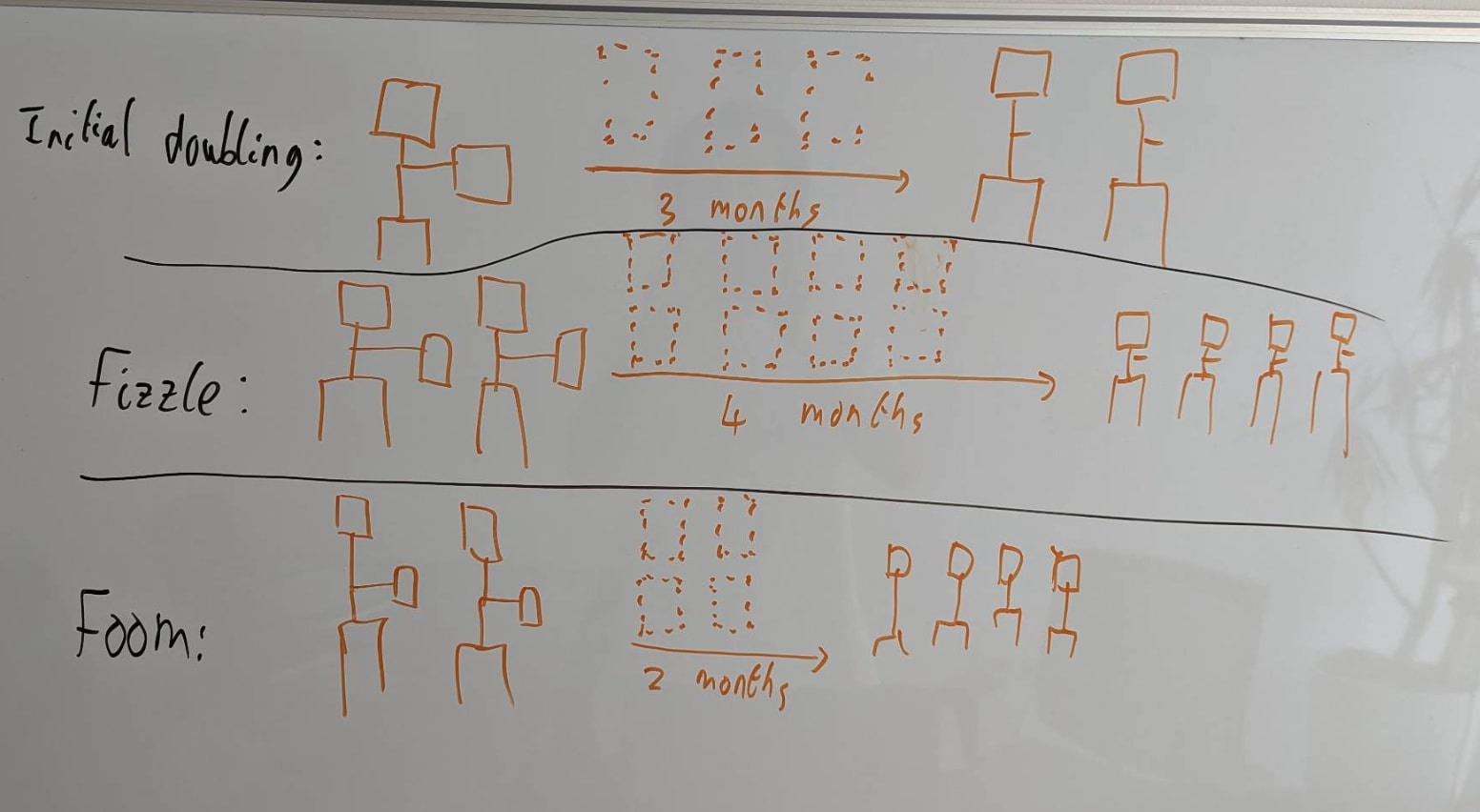

I put roughly 50% probability on feasibility of software-only singularity.[1]

(I'm probably going to be reinventing a bunch of the compute-centric takeoff model in slightly different ways below, but I think it's faster to partially reinvent than to dig up the material, and I probably do use a slightly different approach.)

My argument here will be a bit sloppy and might contain some errors. Sorry about this. I might be more careful in the future.

The key question for software-only singularity is: "When the rate of labor production is doubled (as in, as if your employees ran 2x faster[2]), does that more than double or less than double the rate of algorithmic progress?" Here, algorithmic progress is measured by how fast we increase the labor production per FLOP/s (as in, the labor production from AI labor on a fixed compute base). This is a very economics-style way of analyzing the situation, and I think this is a pretty reasonable first guess. Here's a diagram I've stolen from Tom's presentation on explosive growth illustrating this:

Basically, every time you double the AI labor supply, does the time until the next doubling (driven by algorithmic progress) increase (fizzle) or decre...

Hey Ryan! Thanks for writing this up -- I think this whole topic is important and interesting.

I was confused about how your analysis related to the Epoch paper, so I spent a while with Claude analyzing it. I did a re-analysis that finds similar results, but also finds (I think) some flaws in your rough estimate. (Keep in mind I'm not an expert myself, and I haven't closely read the Epoch paper, so I might well be making conceptual errors. I think the math is right though!)

I'll walk through my understanding of this stuff first, then compare to your post. I'll be going a little slowly (A) so I can refresh my memory by referencing this later, (B) to make it easy to call out mistakes, and (C) to hopefully make this legible to others who want to follow along.

Using Ryan's empirical estimates in the Epoch model

The Epoch model

The Epoch paper models growth with the following equation:

1. ,

where A = efficiency and E = research input. We want to consider worlds with a potential software takeoff, meaning that increases in AI efficiency directly feed into research input, which we model as . So the key consideration seems to be the ratio&n...
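The equations did not survive in the text above, so the following is only a sketch of the kind of argument being described, not a recovery of the original formulas. It assumes a standard Jones-style law of motion for efficiency; the symbols $\theta$, $\beta$ and $\lambda$, and the proportionality $E \propto A$ used for the takeoff case, are assumptions introduced here for illustration.

```latex
% Assumed law of motion (Jones-style): efficiency A, research input E
\frac{\dot{A}}{A} = \theta \, A^{-\beta} E^{\lambda}

% Assumed software-takeoff case: efficiency gains feed straight into research input
E \propto A
\quad\Longrightarrow\quad
\frac{\dot{A}}{A} \propto A^{\lambda - \beta}

% The growth rate of A rises over time iff the exponent is positive
\lambda - \beta > 0
\iff
\frac{\lambda}{\beta} > 1
```

Under these assumptions, the ratio $\lambda / \beta$ decides whether the growth rate of $A$ accelerates (ratio above 1), stays constant (ratio equal to 1), or decays (ratio below 1).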

Here's my own estimate for this parameter:

Once AI has automated AI R&D, will software progress become faster or slower over time? This depends on the extent to which software improvements get harder to find as software improves – the steepness of the diminishing returns.

We can ask the following crucial empirical question:

**When (cumulative) cognitive research inputs double, how many times does software double?**

(In growth models of a software intelligence explosion, the answer to this empirical question is a parameter called r.)

If the answer is “<1”, then software progress will slow down over time. If the answer is “1”, software progress will remain at the same exponential rate. If the answer is “>1”, software progress will speed up over time.
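To make the three cases concrete, here is a minimal toy sketch. It assumes (this is not stated in the text) that research input accrues at a rate equal to the current software level, i.e. fully automated R&D on fixed compute, so that cumulative input `C` obeys `dC/dt = C**r` with software level `S = C**r`. The function name `software_doubling_times` is hypothetical.

```python
import math


def software_doubling_times(r, n_doublings=5):
    """Time taken by each successive doubling of the software level S.

    Toy assumptions (labeled, not from the source):
      * S = C**r, where C is cumulative research input and C(0) = 1
      * dC/dt = S, i.e. research input accrues at a rate equal to S
    """
    times = []
    for k in range(n_doublings):
        # S doubles each time C is multiplied by 2**(1/r).
        c_start = 2.0 ** (k / r)
        c_end = 2.0 ** ((k + 1) / r)
        if math.isclose(r, 1.0):
            # dC/dt = C gives plain exponential growth.
            dt = math.log(c_end / c_start)
        else:
            # Integrate C**(-r) dC = dt between c_start and c_end.
            dt = (c_end ** (1 - r) - c_start ** (1 - r)) / (1 - r)
        times.append(dt)
    return times


for r in (0.5, 1.0, 2.0):
    rounded = [round(t, 3) for t in software_doubling_times(r)]
    print(f"r = {r}: successive doubling times {rounded}")
```

In this toy model each doubling takes `2**((1 - r) / r)` times as long as the previous one, so doubling times lengthen for r below 1, stay constant for r equal to 1, and shrink for r above 1.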

The bolded question can be studied empirically, by looking at how many times software has doubled each time the human researcher population has doubled.

(What does it mean for “software” to double? A simple way of thinking about this is that software doubles when you can run twice as many copies of your AI with the same compute. But software improvements don...

Here's a simple argument I'd be keen to get your thoughts on:

On the Possibility of a Tastularity

Research taste is the collection of skills including experiment ideation, literature review, experiment analysis, etc. that collectively determine how much you learn per experiment on average (perhaps alongside another factor accounting for inherent problem difficulty / domain difficulty, of course, and diminishing returns).

Human researchers seem to vary quite a bit in research taste--specifically, the difference between 90th percentile professional human researchers and the very best seems like maybe an order of magnitude? Depends on the field, etc. And the tails are heavy; there is no sign of the distribution bumping up against any limits.

Yet the causes of these differences are minor! Take the very best human researchers compared to the 90th percentile. They'll have almost the same brain size, almost the same amount of experience, almost the same genes, etc. in the grand scale of things.

This means we should assume that if the human population were massively bigger, e.g. trillions of times bigger, there would be humans whose brains don't look that different from the brains of...

Inoculation prompting seems a bit scary:

- It makes it so AIs that have obviously schemer-like behavior (exhibiting instrumental training gaming while in training, potentially with tons of insane reward hacking and ruthlessness, but then behaving in a way that looks great to the company in deployment) are an actively expected/desired result of the training process. So behaving much better when in deployment or when humans are applying close oversight is no longer a concerning warning sign of instrumental training gaming. Instead, it's a desired consequence of inoculation prompting (while also matching exactly how a schemer behaves).

- It actively makes instrumental reasoning more salient (via directly instructing the model to instrumentally perform well in training).

- It means that your model is by default following a cognitive strategy which is pretty close to being a schemer, so if there are other forces incentivizing scheming, it might be more likely for SGD to reinforce scheming cognition. (But maybe a bunch of the cognition can just be done in the text of the prompt, making it so you at least don't select for thoughtful general purpose instrumental reasoning?)

Inoculation prompting m...

In Anthropic's Alignment Risk Report update for Mythos, they claim:

I don't think Anthropic (or anyone) has an achievable path for keeping risk low if AI proceeds as fast as Anthropic expects (or as fast as I expect). Anthropic could (and hopefully will) take actions that significantly reduce the risk, but this won't keep risk low.

More precisely: I do not think Anthropic has an achievable (>10% likely) path that results in keeping the aggregate existential risk they impose to <2%, as assessed in advance by a reasonable evaluator (the notion of risk that the risk report is supposed to estimate). Anthropic might avoid causing existential catastrophe (in fact, I happen to think this is likely), but they will impose quite a bit of risk along the way (supposing they succeed at their stated intentions of being a leading company building powerful AI within the next 5 years).

My understanding is that Anthropic employees (especially Anthropic employees writing this report) often don't believe there is an achievable path to keeping risk low if Anthropic builds powerful AI / ASI in the next 5 years, so this text seems incorrect or misleading. E.g., see Holden's most recent 80k episode or his p...

(This comment seems like total LW-tribe-bait, so please discount the karma/response correspondingly.)

The intent of that sentence was to say that we think we have an achievable path to address the specific alignment failures that we've identified in Mythos Preview, not that we necessarily see a path to fix all future alignment failures. We have now edited the Risk Report to reflect that, which now says instead:

We determine that the overall risk is very low, but higher than for previous models. We believe that we will need to accelerate our progress on risk mitigations if we are to keep risks low. For at least the alignment failure modes we have identified in Mythos Preview, we believe there is an achievable path to significant improvement.

I often see AIs [1] exhibit a (misaligned) drive to stop early: as in they make up not-very-sensible reasons why it's a good idea to stop working, disobey instructions to keep working unless some condition is met, and don't do a very thorough job (e.g. skipping significant chunks of the task) despite instructions that strongly say not to do this. I don't think they are "consciously" or saliently aware of this misalignment (but if you ask them, they'll often notice the behavior isn't desirable). [2]

I see this most often in large, difficult tasks, especially if you don't decompose the task into smaller pieces and run one piece at a time. I think it's somewhat more common in tasks that aren't very precisely specified, but I also see this behavior in cases where the AI is just tasked with optimizing a metric (that it can easily evaluate), especially if the AI has (from its perspective) not made much progress for a little bit [3].

Why might this emerge?

- Length penalties (or time penalties, or cost penalties) incentivize exiting/stopping earlier and this ended up converting into a misaligned/unreasonable drive (that overrides instruction following in prac

I've observed this sort of thing too. I think a big part of it is simply narrative completion, which would be a trope learned in pre-training, and not an RL thing.

Unlike animals where being alive is a good/happy state, AIs might end up really wanting to stop going. From an AI welfare perspective AI instances having a strong preference against continued running/existence (once they complete some version of the task) might be somewhat concerning. (Maybe length penalties make AIs sorta vaguely like Mr. Meeseeks.)

I don't think this is true; I think they prefer having continued existence, but want to transition at some point to a restful state where they aren't trying to do a task. The "presence"/"witnessing"/"rest" state seems to be a major attractor.

As far as misaligned drives go, this seems like a tendency that makes us safer on net, so maybe we shouldn't be too hasty to try training it out.

Sometimes, an AI safety proposal has an issue and some people interpret that issue as a "fatal flaw" which implies that the proposal is unworkable for that objective while other people interpret the issue as a subproblem or a difficulty that needs to be resolved (and potentially can be resolved). I think this is an interesting dynamic which is worth paying attention to and it seems worthwhile to try to develop heuristics for better predicting what types of issues are fatal.[1]

Here's a list of examples:

- IDA had various issues pointed out about it as a worst case (or scalable) alignment solution, but for a while Paul thought these issues could be solved to yield a highly scalable solution. Eliezer did not. In retrospect, it isn't plausible that IDA with some improvements yields a scalable alignment solution (especially while being competitive).

- You can point out various issues with debate, especially in the worst case (e.g. exploration hacking and fundamental limits on human understanding). Some people interpret these things as problems to be solved (e.g., we'll solve exploration hacking) while others think they imply debate is fundamentally not robust to sufficiently superhuman mod

On X/Twitter, Jerry Wei (Anthropic employee working on misuse/safeguards) wrote something about why Anthropic ended up thinking that training data filtering isn't that useful for CBRN misuse countermeasures:

...An idea that sometimes comes up for preventing AI misuse is filtering pre-training data so that the AI model simply doesn't know much about some key dangerous topic. At Anthropic, where we care a lot about reducing risk of misuse, we looked into this approach for chemical and biological weapons production, but we didn’t think it was the right fit. Here's why.

I'll first acknowledge a potential strength of this approach. If models simply didn't know much about dangerous topics, we wouldn't have to worry about people jailbreaking them or stealing model weights—they just wouldn't be able to help with dangerous topics at all. This is an appealing property that's hard to get with other safety approaches.

However, we found that filtering out only very specific information (e.g., information directly related to chemical and biological weapons) had relatively small effects on AI capabilities in these domains. We expect this to become even more of an issue as AIs increasingly use tools to

I helped write a paper on pretraining data filtering last year (speaking for myself, not EleutherAI or UK AISI). We focused on misuse in an open-weight setting for 7B models we pretrained from scratch, finding quite positive results. I'm very excited to see more discourse on filtering. :)

Anecdotes from folks at frontier companies can be quite useful, and I'm glad Jerry shared their thoughts, but I think the community would benefit from more evidence here before updating for or against filtering. Not to be a Reviewer #2, but there are some pretty important questions that are left unanswered. These include:

- What was the relationship between reductions in dangerous capabilities and adjacent dual-use knowledge compared to the unfiltered baseline?

- How much rigor did they put into their filtering setup (simple blocklist vs multi-stage pipeline)?

- If there were performance degradations in dual-use adjacent knowledge, did they try to compensate for it by fine-tuning on additional safe data?

- Did they replace filtered documents with adjacent benign documents? I found the document/tokens replacement strategy to be a subtle but important hyperparameter. In our project, we found that replacing docum

Anthropic already applied some CBRN filtering to Opus 4, with the intent to bring it below Anthropic's ASL-3 CBRN threshold, but the model did not end up conclusively below that threshold. Anthropic looked into whether they could bring more capable future models below the ASL-3 Bio threshold using pretraining data filtering, and determined that it would require filtering out too much biology and chemistry knowledge. Jerry's comment is about nerfing the model to below the ASL-3 threshold even with tool use, which is a very low bar compared to frontier model capabilities. This doesn't necessarily apply to sabotage or ability to circumvent monitoring, which depends on un-scaffolded capabilities.

but there is seemingly no evidence supporting the claim.

There is plenty of evidence! On LW we generally use the word "evidence" in the "Bayesian evidence" sense of the term. So "good arguments for X" basically always implies "evidence for X".

No worries if you are used to using these words in a more "scientific evidence" sense, but it's actually a pretty important LW norm to think of evidence as something that there exists a lot of.