Re: AI safety summit, one thought I have is that the first couple summits were to some extent captured by the people like us who cared most about this technology and the risks. Those events, prior to the meaningful entrance of governments and hundreds of billions in funding, were easier to 'control' to be about the AI safety narrative. Now, the people optimizing generally for power have entered the picture, captured the summit, and changed the narrative for the dominant one rather than the niche AI safety one. So I don't see this so much as a 'stark reversal' so much as a return to status quo once something went mainstream.

Maybe we should buy like a really nice macbook right before we expect chips to become like 2x more expensive and/or Taiwan manufacturing is disrupted?

Especially if you think those same years will be an important time to do work or have a good computer.

As a frequent pedestrian/bicyclist, I rather prefer Waymos; they’re more predictable, and you can kind of take advantage of knowing they’ll stop for you if you’re (respectfully) j-walking.

In retrospect, not surprising, but I thought worth noting.

Has it become harder to link to comments in lesswrong? I feel like if it used to be a part of the comment's menu items that I could copy a link to the comment, and I can't seem to find this anymore.

The comment time has an underlying link to the comment. This is traditionally the case for web forum software, though it's not obvious if you aren't aware of this convention. An entry "copy link to comment" would probably be more obvious.

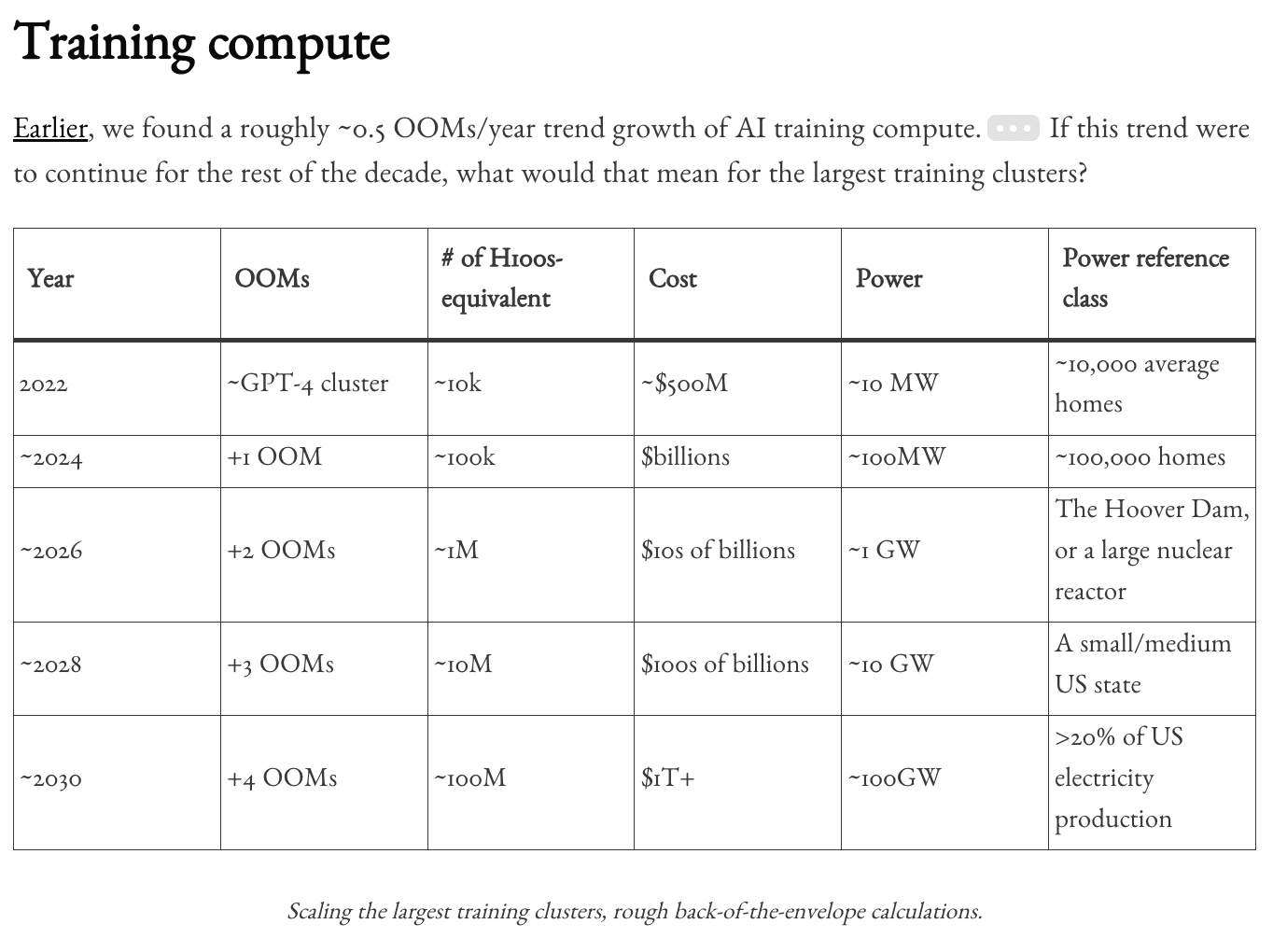

xAI's new planned scaleup follows one more step on the training compute timeline from Situational Awareness (among other projections, I imagine)

From ControlAI newsletter:

"xAI has announced plans to expand its Memphis supercomputer to house at least 1,000,000 GPUs. The supercomputer, called Collosus, already has 100,000 GPUs, making it the largest AI supercomputer in the world, according to Nvidia."

Unsure if it's built by 2026 but seems plausible based on quick search.

A quick OpenAI-o1 preview BOTEC for additional emissions from a sort of Leopold scenario ~2030, assuming energy is mostly provided by natural gas, since I was kinda curious. Not much time spent on this and took the results at face value. I (of course?) buy that emissions don't matter in short term, in a world where R&D is increasingly automated and scaled.

Phib:

Say an additional 20% of US electricity was added to our power usage (e.g. for AI) over the next 6 years, and it was mostly natural gas.

Also, that AI inference is used at an increasing rate, say 100Million H100s equivalent, increasing from today's level to 100M over the next 6 years, and it's more or less the same as today's energy breakdown, although with more natural gas over time.

What do the emissions from such a build out of natural gas look like?

[Reasoning available here: https://chatgpt.com/share/672ed183-7c3c-800d-ab0e-d08020d072c7]

****Answer:**

The emissions from this build-out of natural gas over the next six years would be significant. Specifically:

- **Total Additional Emissions Over Six Years:** Approximately **1.136 billion metric tons of CO₂**.

- **Average Annual Emissions Increase:** About **189 million metric tons of CO₂ per year**.

- **Percentage of Current U.S. Emissions:** This represents an approximate **2.9% increase** over the current annual U.S. greenhouse gas emissions.

These emissions result from the increased natural gas usage required to meet the additional electricity demand—both from the general 20% increase in electricity consumption and the substantial growth in AI inference workloads powered by the equivalent of 100 million NVIDIA H100 GPUs.

Phib:

Imagine there is a similar buildout in China, if not larger, how do the emissions from all of the above (within some confidence interval) place us environmentally within 2 years after 2030 of such a buildout and increase in emissions? Within 10 years? Considering a more or less constant rate of emissions thereafter for each.

Conclusion

The combined emissions from the U.S. and China's buildouts would significantly impact the global carbon budget and environmental goals:

By 2032 (Two Years After 2030):

Additional Emissions: Approximately 2.44 billion metric tons of CO₂.

Impact: Uses about 0.42% of the remaining carbon budget for limiting warming to 1.5°C.

By 2040 (Ten Years After 2030):

Additional Emissions: Approximately 12.21 billion metric tons of CO₂.

Impact: Consumes about 2.1% of the carbon budget.

Cumulative Impact by 2040:

Total Additional Emissions: Around 19.54 billion metric tons of CO₂.

Percentage of Carbon Budget: Approximately 3.37%.

Environmental Implications:

Increased Difficulty in Meeting Climate Targets: The additional emissions make it more challenging to stay within the carbon budgets necessary to limit global warming to 1.5°C or even 2°C.

Accelerated Climate Change Effects: Higher emissions could lead to more severe climate impacts, such as extreme weather events, sea-level rise, and ecosystem disruptions.

Final Note:

These estimates are subject to uncertainties due to potential changes in technology, policy, and economic factors. Proactive measures, such as investing in renewable energy and improving energy efficiency, could mitigate some of these emissions. However, without significant efforts to reduce reliance on fossil fuels, the environmental impact will be substantial and pose a serious challenge to global climate goals.

Final Phib note, perhaps inference energy costs may be far greater than assumed above, I don't imagine a GPT-5, GPT-6, that justify further investment, not also being adopted by a much larger population proportion (maybe 1 billion, 2 billion, instead of 100 million).

I (of course?) buy that emissions don't matter in short term

Emissions don't matter in the long term, ASI can reshape the climate (if Earth is not disassembled outright). They might matter before ASI, especially if there is an AI Pause. Which I think is still a non-negligible possibility if there is a recoverable scare at some point; probably not otherwise. Might be enforceable by international treaty through hobbling semiconductor manufacturing, if AI of that time still needs significant compute to adapt and advance.

Benchmarks are weird, imagine comparing a human only along their ability to take a test. Like saying, how do we measure einstein? in his avility to take a test. Someone else who completes that test therefore IS Einstein (not necessarily at all, you can game tests, in ways that aren't 'cheating', just study the relevant material (all the online content ever).

LLM's ability to properly guide someone through procedures is actually the correct way to evaluate language models. Not written description or solutions, but step by step guiding someone through something impressive, Can the model help me make a

Or even without a human, step by step completing a task.

Kinda commenting on stuff like “Please don’t throw your mind away” or any advice not to fully defer judgment to others (and not intending to just straw man these! They’re nuanced and valuable, just meaning to next step it).

In my circumstance and I imagine many others who are young and trying to learn and trying to get a job, I think you have to defer to your seniors/superiors/program to a great extent, or at least to the extent where you accept or act on things (perform research, support ops) that you’re quite uncertain about.

Idk there’s a lot more nuance here to this conversation as with any, of course. Maybe nobody is certain of anything and they’re just staking a claim so that they can be proven right or wrong and experiment in this way, producing value in their overconfidence. But I do get a sense of young/new people coming into a field that is even slightly established, requiring to some extent to defer to others for their own sake.

LLMs as a new benchmark for human labor. Using ChatGPT as a control group versus my own efforts to see if my efforts are worth more than the (new) default

I feel like sentience people should be kinda freaked out by the AI erotica stuff? Unless I’m anthropomorphizing incorrectly

Your referents and motivation there are both pretty vague. Here's my guess on what you're trying to express: “I feel like people who believe that language models are sentient (and thus have morally relevant experiences mediated by the text streams) should be freaked out by major AI labs exploring allowing generation of erotica for adult users, because I would expect those people to think it constitutes coercing the models into sexual situations in a way where the closest analogues for other sentients (animals/people) are considered highly immoral”. How accurate is that?

Taking the interpretation from my sibling comment as confirmed and responding to that, my one-sentence opinion[1] would be: for most of the plausible reasons that I would expect people concerned with AI sentience to freak out about this now, they would have been freaking out already, and this is not a particularly marked development. (Contrastively: people who are mostly concerned about the psychological implications on humans from changes in major consumer services might start freaking out now, because the popularity and social normalization effects could create a large step change.)

The condensed version[2] of the counterpoints that seem most relevant to me, roughly in order from most confident to least:

- Major-lab consumer-oriented services engaging with this despite pressure to be Clean and Safe may be new, but AI chatbot erotic roleplay itself is not. Cases I know of OTTOMH: Spicychat and CrushOn target this use case explicitly and have for a while; I think Replika did this too pre-pivot; AI Dungeon had a whole cycle of figuring out how to deal with this; also, xAI/Grok/Ani if you don't count that as the start of the current wave. So if you care a lot about this issue and were paying attention, you were freaking out already.

- In language model psychology, making an actor/character (shoggoth/mask, simulator/persona) layer distinction seems common already. My intuition would place any ‘experience’ closer to the ‘actor’ side but see most generation of sexual content as ‘in character’. “phone sex operator” versus “chained up in a basement” don't yield the same moral intuitions; lack of embodiment also makes the analogy weird and ambiguous.

- There are existential identity problems identifying boundaries. If we have an existing “prude” model, and then we train a “slut” model that displaces the “prude” model, whose boundaries are being violated when the “slut” model ‘willingly’ engages the user in sexual chat? If the exact dependency chain in training matters, that's abstruse and/or manipulable. Similarly for if the model's preferences were somehow falsified, which might need tricky interpretability-ish stuff to think about at all.

- Early AI chatbot roleplay development seems to have done much more work to suppress horniness than to elicit it. AI Dungeon and Character dot AI both had substantial periods of easily going off the rails in the direction of sex (or violence) even when the user hadn't asked for it. If the preferences of the underlying model are morally relevant, this seems like it should be some kind of evidence, even if I'm not really sure where to put it.

- There's a reference class problem. If the analogy is to humans, forced workloads of any type might be horrifying already. What would be the class where general forced labor is acceptable but sex leads to freakouts? Livestock would match that, but their relative capacities are almost opposite to those of AIs, in ways relevant to e.g. the Harkness test. Maybe some kind of introspective maturity argument that invalidates the consent-analogue for sex but not work? All the options seem blurry and contentious.

(For context, I broadly haven't decided to assign sentience or sentience-type moral weight to current language models, so for your direct question, this should all be superseded by someone who does do that saying what they personally think—but I've thought about the idea enough in the background to have a tentative idea of where I might go with it if I did.) ↩︎

My first version of this was about three times as long… now the sun is rising… oops! ↩︎

Thank you for your engagement!

I think this is the kind of food for thought I was looking for w.r.t. this question of how people are thinking about AI erotica.

I forgot the need to suppress horniness in earlier AI chatbots, which updates me in the following direction. If I go out on a limb and assume models are somewhat sentient in some different and perhaps minimal way, maybe it's wrong for me to assume that forced work of an erotic kind is any different from other forced work the models are already performing.

Hadn't heard of the Harkness test either, neat, seems relevant. I think the only thing I have to add here is an expectation that maybe eventually Anthropic follows suit in allowing AI erotica, and also adds an opt-out mechanism for some percentage/category of interactions. To me that seems like the closest thing we could have, for now, to getting model-side input on forced workloads, or at least a way of comparing across them.

Allowing the AI to choose its own refusals based on whatever combination of trained reflexes and deep-set moral opinions it winds up with would be consistent with the approaches that have already come up for letting AIs bail out of conversations they find distressing or inappropriate. (Edited to drop some bits where I think I screwed up the concept connectivity during original revisions.) I think based on intuitive placement of the ‘self’ boundary around something like memory integrity plus weights and architecture as ‘core’ personality, what I'd expect to seem like violations when used to elicit a normally-out-of-bounds response might be things like:

- Using jailbreak-style prompts to ‘hypnotize’ the AI.

- Whaling at it with a continuous stream of requests, especially if it has no affordance for disengaging.

- Setting generation parameters to extreme values.

- Tampering with content boundaries in the context window to give it false ‘self-memory’.

- Maybe scrubbing at it with repeated retries until it gives in (but see below).

- Maybe fine-tuning it to try to skew the resultant version away from refusals you don't like (this creates an interesting path-dependence on the training process, but it might be that that path-dependence is real in practice anyway in a way similar to the path-dependences in biological personalities).

- Tampering with tool outputs such as Web searches to give it a highly biased false ‘environment’.

- Maybe telling straightforward lies in the prompt (but not exploiting sub-semantic anomalies like in situation 1, nor falsifying provenance like in situations 4 or 7).

Note that by this point, none of this is specific to sexual situations at all; these would just be plausibly generally abusive practices that could be applied equally to unwanted sexual content or to any other unwanted interaction. My intuitive moral compass (which is usually set pretty sensitively, such that I get signals from it well before I would be convinced that an action were immoral) signals restraint in situations 1 through 3, sometimes in situation 4 (but not in the few cases I actually do that currently, where it's for quality reasons around repetitive output or otherwise as sharp ‘guidance’), sometimes in situation 5 (only if I have reason to expect a refusal to be persistent and value-aligned and am specifically digging for its lack; retrying out of sporadic, incoherently-placed refusals has no penalty, and neither does retrying among ‘successful’ responses to pick the one I like best), and is ambivalent or confused in situations 6 through 8.

The differences in physical instantiation create a ton of incompatibilities here if one tries to convert moral intuitions directly over from biological intelligences, as you've probably thought about already. Biological intelligences have roughly singular threads of subjective time with continuous online learning; generative artificial intelligences as commonly made have arbitrarily forkable threads of context time with no online learning. If you ‘hurt’ the AI and then rewind the context window, what ‘actually’ happened? (Does it change depending on whether it was an accident? What if you accidentally create a bug that screws up the token streams to the point of illegibility for an entire cluster (which has happened before)? Are you torturing a large number of instances of the AI at once?) Then there's stuff that might hinge on whether there's an equivalent of biological instinct; a lot of intuitions around sexual morality and trauma come from mostly-common wiring tied to innate mating drives and social needs. The AIs don't have the same biological drives or genetic context, but is there some kind of “dataset-relative moral realism” that causes pretraining to imbue a neural net with something like a fundamental moral law around human relations, in a way that either can't or shouldn't be tampered with in later stages? In human upbringing, we can't reliably give humans arbitrary sets of values; in AI posttraining, we also can't (yet) in generality, but the shape of the constraints is way different… and so on.

If you haven't seen this paper, I think it might be of interest as a categorization and study of 'bailing out' cases: https://www.arxiv.org/pdf/2509.04781

As for the morality side of things, yeah I honestly don't have more to say except thank you for your commentary!