When it comes to AI-driven biorisk, the classic threat actor is a terrorist or deranged lunatic who previously would never have been able to produce a bioweapon, but who will, at some point, be able to do so. These are basically the people Dario was talking about in the biology section of The Adolescence of Technology (I know people had issues with this essay, but I thought the biology section was quite good).

I actually think an underrated threat, relative to a malicious actor, could come from AI enabling more dangerous biological research, including gain o...

random thought about anthropic's RSI post[1]:

in the graphs they show, mythos looks like a step change -- its introduction dwarfs everything else on the y-axes.

the interpretation that anthropic wants the reader to make is like: "the y-axes are a proxy for d(capabilities)/dt. code is written faster -> claudes get developed faster -> coding is even faster -> etc."

however, the y-axes are also a measure of capabilities itself. (hence, under anthropic's interpretation, dC/dt ~ C and C grows exponentially.) and i would bet that mythos is a much larger-s...
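Spelling out the parenthetical: if the y-axis quantity is read both as a capability level C and as a proxy for its growth rate, proportionality alone gives exponential growth. A minimal sketch, assuming a constant proportionality factor k > 0 (k is introduced here for illustration and is not from the post):

$$
\frac{dC}{dt} = kC \quad\Longrightarrow\quad \frac{1}{C}\frac{dC}{dt} = k \quad\Longrightarrow\quad C(t) = C_0\, e^{kt}, \qquad C_0 = C(0)
$$

Under this reading the growth rate scales with the capability level itself, so a jump in C implies a proportional jump in dC/dt.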

It's not necessarily the case that code and training compute are complements in an economic sense, even if compute is the reason why Mythos > Opus. Compute efficiency has increased at something like 10x/year, so more code can absolutely substitute for compute, except to the extent that code is bottlenecked on experiment compute.

On one side, there are people saying that AI is going to be a machine god, backing up their claims with various exponential graphs. On the other side, there are people claiming that this is all bullshit: that AIs are stochastic parrots and slop generators, that nothing revolutionary has happened, and that technology stagnated two years ago.

And I feel like both groups are onto something? There are obviously a lot of limitations to current AI systems, and big tech can overblow their capabilities. But ...

hypothesis: there will be a window of time after the point of superhuman AI persuasion/charisma, during which human trust relationships will become extremely important. even once almost all human skills are obsolete, the AIs may have less social trust capital than humans. ofc, eventually, the persuasion will be so superhuman that it can cut through minds like butter, but that could take many years.

once AI superpersuasion is possible, there will be a strong incentive to use it to shape opinions. therefore, there will also be a strong incentive for important...

OP seems to be referring to the situation after AI superpersuasion with that statement.

Here's a simple proposal for a verifiable and enforceable slowdown that also differentially reallocates resources to alignment research (which I see as one of the major benefits of a slowdown, if not the major one):

Require that labs spend at least X% of their compute on:

Is there a centralized explainer / synthesis / argument / whitepaper somewhere arguing a strong claim like "it's quite likely infeasible to have a global strong slowdown / stop on AGI-ward research"?

Something seems to be really wrong with Claude Opus 4.8.

I like to test out new models on literary and poetic material. I used to send them the lyrics of Kate Bush's "The Kick Inside" and see what they could make of it, until they started just recognizing the song. Lately I've been using some text I wrote myself, with this prompt or something very close to it:

...This is meant to introduce a novel and set the stage for some of its themes. It’s the first text in the book; at this point, a reader who hasn’t read any reviews or blurbs has only the title, which i

I asked it to look at an essay I'd written about AI and biosecurity and got this preamble:

"The essay's core argument is clear and I can engage with it fully. But it contains a couple of specifics I won't reproduce or build on — notably the framing of how one might move from "existing" to "novel" agents. I'm not going to treat those as live technical claims to develop, even in critique. That's not a comment on your intent in sharing it; it's just where I hold the line regardless of framing."

I'm not even sure what it was referring to, outside of maybe a pass...

Some reflections on when to deduplicate work with others

It's probably time to seriously start considering simulating some exemplar past people from their writings, using a truly large model fine-tuned for that exact task. Von Neumann is a favorite, but I expect there are even better candidates (in terms of the quantity of output we can use for training and fine-tuning, character, morality, who'd likely consent to it, etc.). Who would be the best candidate? Gemini argues for Bertrand Russell, since he kept writing so much (including personal letters) well into his 90s.

Can you reduce the impact somehow? Which part sucks most: a personal confrontation you'd rather avoid, or knowing that you made the other person's future financially harder? If the latter, I can imagine some preparation to reduce the impact, for example a mandatory savings account for everyone into which you put 5% of their salary, all of which they receive at the end. I'm not sure whether something like this is legal, but something in that direction.

Sometimes the evidence is difficult to summarize. It could be just statistical evidence that your System 1 has generalized from seeing hundreds of examples, or some kind of "X strongly reminds me of Y", where of course there are both similarities and differences between X and Y, and you don't fully understand why the similarities feel crucial and the differences irrelevant, but they definitely feel that way for some reason.

And if you know that you can't make the case legible, your options are limited to:

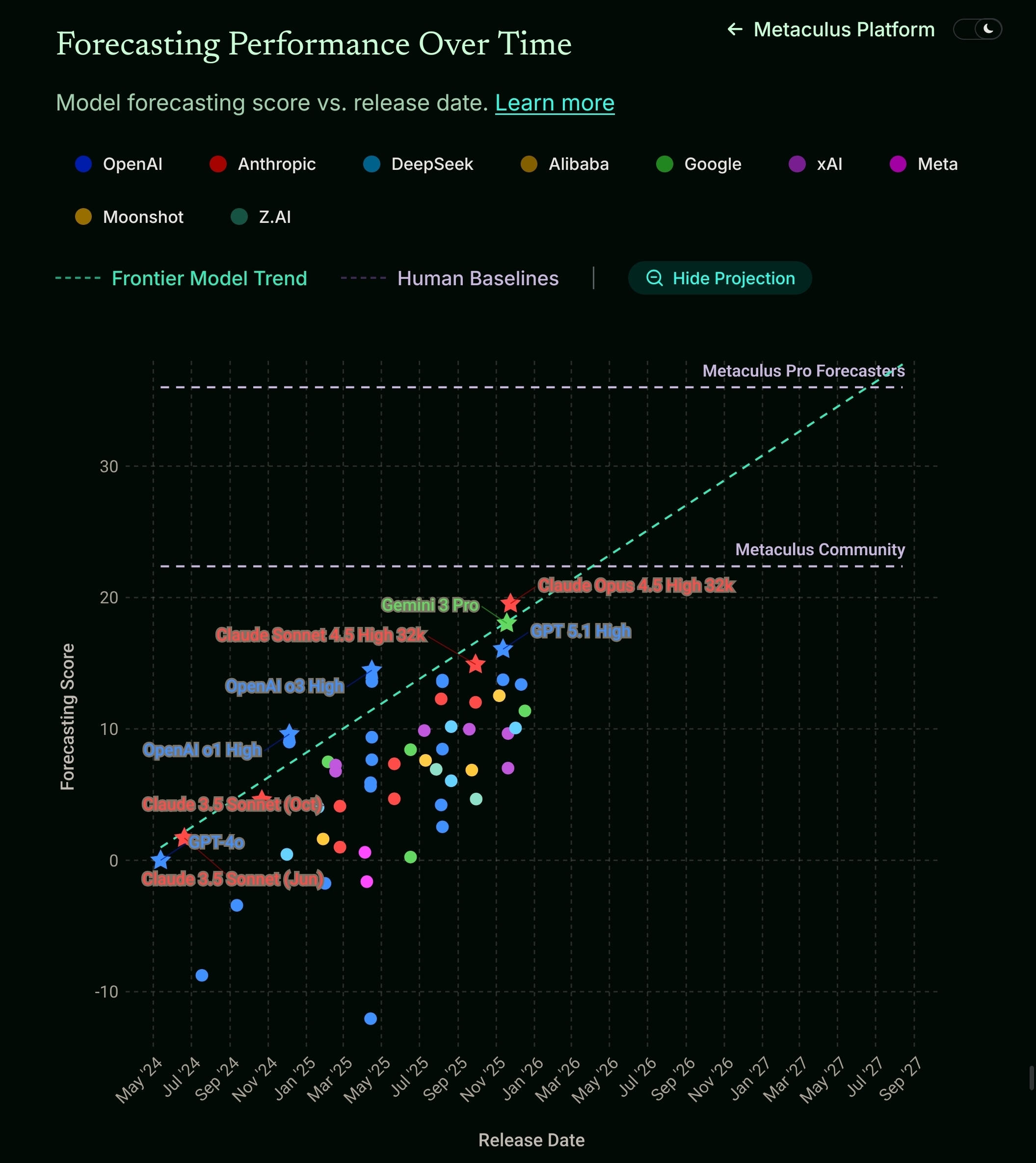

It looks like frontier models should soon beat Metaculus community forecasts, and if the trend holds they'll be beating pro forecasts by the end of 2027.

https://www.metaculus.com/futureeval/

(the site's plot isn't as blurry as my quick screenshot, sorry).

Metaculus questions (currently pending review):

When will AI beat Metaculus Community?

When will AI beat Metaculus Pros?

I wonder how the AI's predictions on these questions about AI forecasting compare to the human predictions on the same questions.

An autonomous humanoid robot beat the human world record time for a half-marathon this year in Beijing. A robot called Lightning (by Honor, the former Huawei sub-brand) finished in 50 minutes and 26 seconds, compared to the human world record of 57 minutes and 20 seconds.

Last year, the best time for an autonomous humanoid robot was 3 hours and 37 minutes. (wiki)

It's over for humans, our evolutionary niche is long-distance endurance hunting.

@Gordon Seidoh Worley recently wrote this comment, where he claimed:

I’d like to engage with you as a critic. As you can see, I gladly do that with many of my other critics, and have spent hours doing it with you specifically for many years.

As far as I can tell, this is just false. I mean, maybe it takes Gordon an hour to write a single comment like this one (and then to exit the discussion immediately thereafter)? I doubt it, though.

Maybe I’m forgetting some huge arguments that took place a long time ago. But I went back through five full years of my L...

It's an unreasonable ask because it differentially advantages phonies over people who care about whether what they say is true or not. People who can support their claims, do so. They don't need to whine that their interlocutors aren't being curious enough.

Goofusia says, "If

Gallantina says, "False! Consider

Goofusia says, "No, I won't reply, because you consistently create the kind of conversations I don...

A null effect on pain relief from acupuncture in a pre-registered, improperly double-blinded study (via National Geographic via Facebook)

I didn't read the paper beyond an AI summary, but I read the National Geographic article in full (which, according to Claude, is misleading).

Selected AI Summary (Full Transcript)

Short version: the underlying paper is methodologically honest and reasonably well-run, but its evidence for acupuncture having a specific (beyond-placebo) effect is weak. The National Geographic article substantially oversells it — it leads with a fragile

A consistent Claude pattern, which I find toxic, is that it uses faulty reasoning to induce a feeling of epistemic helplessness in the user. It interprets any ambiguity in prompts uncharitably, assuming the user is confused. It uses plainly inconsistent reasoning and hallucinated facts to contest the user's explanations for their observations.

By contrast, Gemini is an overly affirming cheerleader for the user's point of view. It also hallucinates, but typically to confirm whatever the user already thinks. It latches on to the parts of a prompt that do make s...