This is an automated rejection. No LLM generated, heavily assisted/co-written, or otherwise reliant work.

I’ve been obsessed lately with why some systems collapse under stress while others seem to get tighter. In most complex adaptive systems—whether it's a corporate hierarchy, a software network, or an ecosystem—we usually treat "entropy" as a synonym for random disorder. But I’ve started thinking about it more as uncaptured constraint: essentially, degrees of freedom the system hasn't figured out how to use yet.

When a system fails, it’s usually because it couldn't route a disturbance back into its own structure. I’m playing with a concept I call Recursive Closure: a system's ability to "metabolize" a perturbation so that, instead of fragmenting its policy, the shock actually increases its coherence.

Entropy as Uncaptured Constraint

Normally we think of entropy in thermodynamic terms, but in an agent-environment loop it behaves more like an unprocessed signal.

Think about a pilot learning to fly. To a novice, the wind hitting the flaps is just noise—it's a disturbance that disrupts the flight plan. But to a pro, that same wind resistance is a constraint they can use to stabilize a landing. The "entropy" of the environment hasn't changed; the system's ability to "capture" it has.

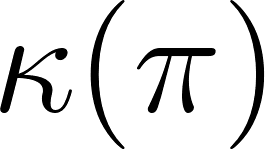

Formally, Shannon entropy measures this uncertainty for a distribution $p(x)$ over environmental states $x$:

$$H(X) = -\sum_{x} p(x)\,\log_2 p(x)$$

In this context, high entropy just represents the "hidden levers" in the environment that the system's policy hasn't learned to stabilize yet.
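As a concrete illustration, the snippet below computes Shannon entropy in bits for a discrete distribution. The function name `shannon_entropy` and the example distributions are made up for this sketch; it only evaluates the formula and says nothing about how to decide which part of the entropy a policy has "captured".

```python
import math

def shannon_entropy(probs):
    """Shannon entropy in bits of a discrete distribution given as a list of probabilities."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Zero-probability outcomes contribute nothing (0 * log 0 is taken as 0).
    return -sum(p * math.log2(p) for p in probs if p > 0)

print(shannon_entropy([0.5, 0.5]))               # 1.0  (a fair coin)
print(shannon_entropy([0.25] * 4))               # 2.0  (four equally likely states)
print(round(shannon_entropy([0.9, 0.1]), 3))     # 0.469 (a mostly predictable signal)
```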

Collapse and Policy Fragmentation

Collapse usually happens when the system's internal model can't keep up with reality. If the divergence between model and reality grows faster than the system's adaptation rate, you get fragmentation.

When this divergence hits a threshold, sub-agents within the system (like departments in a company or modules in a network) start optimizing for their own local survival instead of the global objective. This is how "stress" turns into "collapse"—the feedback loops break, and the system splinters.
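The toy simulation below is one way to picture the "divergence outruns adaptation" story. It is a sketch under assumptions that are not in the original: reality and the internal model are each a unit-variance Gaussian, divergence is the KL divergence between them, the environment's mean drifts by a fixed amount per step, the model's mean can move at most `max_adapt` per step, and "fragmentation" is simply the first step where divergence exceeds a hypothetical `threshold`. All function and parameter names are illustrative.

```python
import math

def gaussian_kl(mu_p, mu_q, sigma=1.0):
    """KL(p || q) between two Gaussians that share the same standard deviation sigma."""
    return (mu_p - mu_q) ** 2 / (2 * sigma ** 2)

def first_fragmentation_step(drift, max_adapt, threshold=2.0, steps=100):
    """Return the first step at which KL(reality || model) exceeds threshold, or None.

    drift: how far the environment's mean moves each step (hypothetical parameter).
    max_adapt: the most the internal model's mean can move per step (hypothetical).
    """
    reality, model = 0.0, 0.0
    for t in range(1, steps + 1):
        reality += drift  # the environment shifts
        gap = reality - model
        # Rate-limited adaptation: move toward reality, but by at most max_adapt.
        model += math.copysign(min(abs(gap), max_adapt), gap)
        if gaussian_kl(reality, model) > threshold:
            return t
    return None

print(first_fragmentation_step(drift=0.5, max_adapt=1.0))  # None: adaptation keeps up
print(first_fragmentation_step(drift=1.0, max_adapt=0.5))  # 5: the gap grows 0.5 per step
```

When adaptation is faster than drift, the gap closes every step and the threshold is never crossed; when drift is twice the adaptation cap, the gap grows linearly, the divergence grows quadratically, and the threshold falls after a handful of steps. The cap matters: a proportional update (moving a fixed fraction of the gap each step) would settle at a bounded lag under constant drift, so this particular toy only collapses because adaptation is rate-limited.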

Recursive Closure: Turning Shocks into Structure

I’m defining Recursive Closure as the property where perturbations actually increase the system's expected future ability to satisfy its constraints, as measured by its coherence: the unity of the system’s policy under variance.

A system with this property doesn't just "resist" a kick; it routes the energy of that kick back into the policy. Every perturbation nudges the system to integrate a new constraint, which strengthens the global control instead of breaking it. It’s the difference between a brittle piece of glass and a muscle that grows stronger after a micro-tear.

The Missing Piece in the Free Energy Principle

The Free Energy Principle (FEP) does a great job of explaining why systems minimize prediction error. But minimizing error alone doesn't guarantee you won't fall apart. You can minimize error by just hiding in a dark room (the "Dark Room Problem").

Recursive Closure is about the topology of that minimization. Does the system converge into a single, coherent attractor (Closure), or does it fragment into a bunch of brittle, conflicting sub-policies (Collapse)?
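A crude way to probe that question in a simulation is to start the dynamics from many initial conditions and count how many distinct end states they settle into: one means a single attractor, more than one means the state space has split into separate basins. The sketch below does this for gradient descent on two made-up one-dimensional potentials, a single well and a double well. `count_attractors`, the step functions, the step sizes, and the tolerance are illustrative choices, not an established fragmentation metric.

```python
def count_attractors(step, starts, iters=1000, tol=1e-3):
    """Iterate `step` from each start and count distinct end points (within tol)."""
    endpoints = []
    for x in starts:
        for _ in range(iters):
            x = step(x)
        # An end point counts as a new attractor only if it is far from all known ones.
        if not any(abs(x - e) < tol for e in endpoints):
            endpoints.append(x)
    return len(endpoints)

def single_well_step(x):
    """One gradient-descent step on V(x) = x^2 (a single basin at x = 0)."""
    return x - 0.1 * 2 * x

def double_well_step(x):
    """One gradient-descent step on V(x) = (x^2 - 1)^2 (basins at x = -1 and x = +1)."""
    return x - 0.05 * 4 * x * (x ** 2 - 1)

starts = [-2.0, -1.5, -0.5, 0.5, 1.5, 2.0]
print(count_attractors(single_well_step, starts))  # 1
print(count_attractors(double_well_step, starts))  # 2
```

A policy-level analogue would replace the scalar state with an agent's policy parameters and the potential with its training objective; that mapping is an assumption, and counting basins this way only detects fragmentation that the chosen initial conditions happen to reach.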

Potential Metrics

I'm looking for ways to actually measure this in simulations or multi-agent RL. A few thoughts:

Lyapunov Stability: Does the system return to its stable state faster after each successive perturbation?

Constraint Capture: Does the mutual information between the perturbation and the resulting policy increase over time?

Integration Rate: How does the rate at which new constraints are generated compare with the rate at which the system integrates them?
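The first two metrics are straightforward to compute once a simulation logs the right quantities. The sketch below assumes a scalar state trajectory recorded after a single kick (for recovery time) and paired discrete labels for perturbations and policy responses (for mutual information); `recovery_time`, `mutual_information`, the tolerance, and the toy data are all hypothetical. Recovery time is only a rough empirical stand-in for a real Lyapunov analysis, and the mutual-information function is the simple plug-in estimator, which is biased upward on small samples.

```python
import math
from collections import Counter

def recovery_time(trajectory, baseline=0.0, tol=0.05):
    """First step after which the state stays within tol of baseline; None if it never settles."""
    for t in range(len(trajectory)):
        if all(abs(x - baseline) <= tol for x in trajectory[t:]):
            return t
    return None

def mutual_information(xs, ys):
    """Plug-in estimate of I(X; Y) in bits from paired discrete samples."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px, py = Counter(xs), Counter(ys)
    mi = 0.0
    for (x, y), count in joint.items():
        p_xy = count / n
        mi += p_xy * math.log2(p_xy / ((px[x] / n) * (py[y] / n)))
    return mi

# Two linear systems kicked to 1.0, decaying by a factor of 0.5 or 0.8 per step.
fast = [0.5 ** t for t in range(50)]
slow = [0.8 ** t for t in range(50)]
print(recovery_time(fast), recovery_time(slow))  # 5 14

# Perturbation type vs. policy response: fully coupled, then independent.
kicks = ["gust", "calm", "gust", "calm"]
print(mutual_information(kicks, ["brace", "cruise", "brace", "cruise"]))  # 1.0
print(mutual_information(kicks, ["brace", "brace", "cruise", "cruise"]))  # 0.0
```

For the closure question, the interesting signal would be a trend rather than a single value: recovery time shrinking, or mutual information rising, across successive perturbations of the same system.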

Request for Prior Art

I'm essentially a hobbyist looking for where these ideas might have already been formalized. I’d love pointers on:

Metrics for attractor fragmentation or "coherence" in agent policies.

Operationalizing coherence in control theory or multi-agent systems.

Any simulations that deal with "metabolizing" shocks into coordination.
