The dream is that prediction markets greatly outperform individual experts, but there's a limit on how much this can actually happen. The reason prediction markets aren't more useful is that you can only profit from a prediction market if gathering information is cheaper than the money you'd make from gathering it.

Let's imagine I write down either "horse" or "donkey" on a slip of paper, and put that paper in a drawer in my kitchen. I then create a prediction market based on what's written on the paper. The market would sit at around 50%. Maybe people would analyze all the public information about me, and find out that I once rode a horse when I was seven, or whatever. So maybe it sits at 51% for horse, and 49% for donkey. And despite a really sophisticated analysis of all the public information, sifting through my social media posts, trying to guess which word I'd write, you really have no idea what's written on the paper. The 51% horse estimate would be robust, but uninformative about reality.

Now let's say the prediction market for my slip of paper went really viral, and billions of dollars were being spent on it. Since people really like money, they decide to start breaking into my house to see the paper, or kidnapping me, or whatever. Now the market is at 99% donkey, 1% horse, and some people made a lot of money correcting the market. And now the market is much more informative about what's on the slip of paper.

Why was the market originally so uninformative? It's because the cost of acquiring better information (including the risk of going to prison) was higher than the value of that information. Prediction markets can only incentivize the collection of information that's cheaper to collect than the money you stand to gain from collecting it. It's only worth launching a satellite into space to view oil tank levels if there's a huge market for oil. If the market for oil was only $1 million, then you would not spend over a million dollars launching a satellite to give you an edge trading on that market. And hence our public estimates of the world's oil supply would be worse if the market for oil was much smaller.
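To make that threshold concrete, here's a toy sketch in Python. Everything in it is an assumption for illustration: the function names, the $50 million satellite cost, the $2 trillion market size, and the idea that an informed trader can capture a fixed fraction of a market's value as profit. None of these figures come from a real market.

```python
def expected_profit(market_size: float, capturable_fraction: float) -> float:
    """Profit an informed trader might extract (assumed: a fixed fraction of market size)."""
    return market_size * capturable_fraction


def worth_gathering(info_cost: float, market_size: float,
                    capturable_fraction: float) -> bool:
    """Information is worth collecting only if it costs less than it earns."""
    return info_cost < expected_profit(market_size, capturable_fraction)


# Hypothetical numbers: a satellite launch costing $50 million,
# and an informational edge worth 1% of the market's total value.
SATELLITE_COST = 50_000_000
EDGE = 0.01

# A $1 million market versus a (hypothetical) $2 trillion market.
small_market = worth_gathering(SATELLITE_COST, 1_000_000, EDGE)
huge_market = worth_gathering(SATELLITE_COST, 2_000_000_000_000, EDGE)

print(small_market)  # False
print(huge_market)   # True
```

Under these made-up numbers, the satellite only gets launched for the huge market, so only the huge market ends up with the better estimate.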

We know, based on the Polymarket market, there's a 22% chance Ali Khamenei will be ousted by January 31. We can trust that's a good estimate with respect to the public information available. But it's not very informative. The public information is just not that good. If we could fully analyze every atom on Earth with a far-future supercomputer, we could get the estimate down below 1% or up above 99%. The future is basically knowable. But the prediction market is not a tool that makes the future knowable in this case.

At least, not at a volume of $19.5 million. Maybe if the volume was much higher, people would be willing to hack into Donald Trump's emails, or for that matter go to Iran and shoot Khamenei themselves. Then the market would collapse, and we'd know the truth. But until such time, no amount of recombination or analysis of the information we have will tell us what's going to happen.

(These thoughts were inspired by Scott Alexander's recent post, but not really directly in response to that post.)

It's an interesting point that some information isn't worth trying to gain. AI could still Pareto-dominate human pros, though, myself readily included.

I don't see why AI would need to participate in a real money prediction market, or even a market at all. AI systems aren't motivated by money, and non-market prediction aggregators have fewer failure modes. The only cost would be the cost to run the models, which would eventually be extremely cheap per question compared to human pros. I think it would suffice to create an AI-only version of basically Metaculus, subsidized by businesses and governments who benefit from well-calibrated forecasts on a wide variety of topics (sans the degenerate examples like sports predictions and "fun" questions).

Daniel Kokotajlo briefly points out that private entities may want private forecasting services, to gain an edge over the competition. Sadly, I think it's likely that private AI forecasting farms would dominate, despite the massive overall cost savings if they pooled resources into a shared project.

As soon as the end of this year, we appear to be heading into an era where forecasting isn't the domain of humans anymore. The resulting epistemic miracle might not be widely adopted, and might not even be tooled toward the public good. I feel sad about this.

> The only cost would be the cost to run the models

If you want good predictions, there is another cost, which is to gather information. AI can no doubt outperform humans eventually using information available on the internet, and that would be great, but the point of my original post was that there's a limit to how good you can get doing only that, without going out and gathering new information.

> As soon as the end of this year, we appear to be heading into an era where forecasting isn't the domain of humans anymore.

Bots are already outperforming humans on some markets because of speed. And computer programs/AI will continue to grow in their capabilities. But I'd be shocked if AI were generally better than humans at forecasting in the next year or two. Forecasting is predicting, which in the limit requires general intelligence, so I don't think forecasting falls until everything falls. Though maybe you only disagree because you think everything falls in the next year or two, idk.

> the point of my original post was that there's a limit to how good you can get doing only that, without going out and gathering new information.

That is true. Human forecasters mostly don't do this, though, so if an AI forecaster did maximize cost-effective information-gathering, it could still gain an advantage from doing so. The cost of AI doing the gathering could also presumably drop below the cost of humans doing the gathering, which would create a strict advantage on both effective gathering of information and effective use of information.

> Bots are already outperforming humans on some markets because of speed.

Markets, yes. Reactivity faster than a few hours is usually not relevant to the actual usefulness of forecasting, though.

> I'd be shocked if AI were generally better than humans at forecasting in the next year or two.

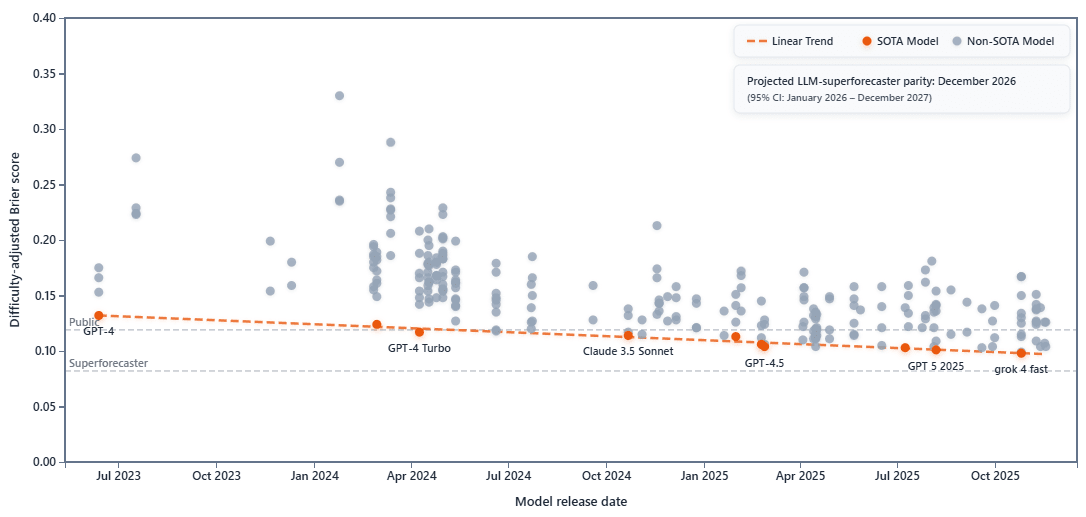

That's the projection according to ForecastBench, anyway:

> Forecasting is predicting, which in the limit requires general intelligence, so I don't think forecasting falls until everything falls.

LLMs certainly aren't narrow, and it's not clear that "general intelligence" is a well-defined concept. Other than "general enough to plug all the rest of its own holes from now on," I don't think we know exactly what kinds and degrees of generality are needed for specific complex tasks. AI has been way more jagged at the frontier than anyone expected, and on two kinds of things that equally appear to require very general intelligence, AIs often have very different performance.

I agree that it's plausible there could be some benefit to creating an AI prediction market.

I mostly haven't taken any of the other AI benchmarks seriously, but I just looked into ForecastBench and surprisingly it seems to me to be worth taking seriously. (The other benchmarks are just like "hey, we promise there aren't similar problems in the LLM's training data! Trust us!") I notice their website suggests ForecastBench is a "proxy for general intelligence", so it seems like I'm not the only one who thinks forecasting and general intelligence might be related. I agree it's not super well-defined, but I mean it in the way I assume the ForecastBench people mean it, which is the ability to, like, generally do stuff at a minimum of a human level.

I don't take that chart particularly seriously, though. A lot of AI predictions hinge on someone using a ruler to naively extrapolate linear progress into the future, and we just don't know if that's what's going to happen. I'd personally guess it isn't, basically because LLMs got some one-time gains by scaling large enough to be trained on the whole Internet. They may continue to improve at the same pace, or they might not. Either way, I don't think a linear extrapolation is proof they will.

I gave a speech last night, an introduction to AI apocalypse risk, and two people asked me if there are any good counter-arguments to my position. What would you have answered in my position?

One possible defeater is that something like the following might be true.

> Long-range agency, value-preserving recursive self-improvement, etc., are very difficult to achieve (relative to what's now or soon possible), and this makes near doom unlikely.

But that's not a proper counter-argument, just a hypothesis worth tracking, which seems a priori not bonkers. I don't think it's true. I think a (much?) weaker version is plausibly true[1] and intend to get around to writing on this ~next month.

A related (but in my mind distinct) counter-argument is that laser-focused expected utility maximization (and generally maximizery, unbounded preferences) probably is not a good description of powerful superintelligences, but I don't think it decreases the risk that much. (And you very well might have given your talk without talking about this.)

[1] Or, like, some of the seeds of intuition that [grow into this hypothesis if they are the only inputs into your inferential process] are crucial inputs to have into your inferential process when you're trying to derive a model of risks from powerful cognitions.

Well, depending on what counts as 'apocalypse', I think I could make a reasonable argument for the hope that we manage to create AGI that has enough respect and affection for humans that it allows us a reserve to live on and keeps the charming ones as pets. That's definitely still disempowerment.

You can also hope for something like AGI tolerating/helping-with the creation of digital humans (perhaps including uploads), which could then recursively self-improve along with the AGI until a mutual sigmoid of decreasing returns which allowed them to stably act as peers in community with each other. Although the digital humans at that point might be so far changed from biological humans that any remnant biologicals might not recognize them as human anymore.

I was looking over AI 2027 and my own counter-predictions from 8 months ago, and it seems like I still mostly endorse my counter-predictions.

As I predicted, "large language models are still very stupid and make basic mistakes a 5-year-old would never make", and also, "I still believe my use of AI is less than a 25% improvement to my own productivity as a programmer." Agentic coding AI has improved more than I thought it would, but, in line with my predictions, "most breakthroughs in AI are not a result of directly increasing the general intelligence/"IQ" of the model, e.g. advances in memory, reasoning or agency."

The most striking prediction by AI 2027 for Early 2026 was that by now, AI companies would be making "algorithmic progress 50% faster than they would without AI assistants". This is an extraordinary prediction and, as far as I can tell, it is nowhere near true. It seems to me that AI 2027 and reality are starting to diverge here, for basically the reasons I laid out 8 months ago (limited IQ gains/no online learning). Has anyone heard of AI researchers saying they're 50% more productive now, in a way that is credible?

The original Vidhaven post (https://www.lesswrong.com/posts/MSng3Y4rsNLykbEF7/the-vidhaven-challenge) didn't get much attention, but it's not too late to join. 30 days, 30 videos, any subject.

I absolutely hate the videos I've made so far, so that's a sign I'm moving in the right direction, I think.