I'm wondering how much of this is from simply having the content being rated in the context twice, and how much is from the lead-up process by which it was created before the first copy of it.

This seems a very human failure mode: this seems like a case of the LLM successfully simulating a human frailty.

Did you measure whether monitors go easier on themselves than others? In other words, for a fixed monitor M, does M rate on-policy generations (i.e. actions generated by M) as less suspicious than off-policy generations (actions generated by another model)? You could do this with or without self-attribution, though I'd be most curious about the "Previous turn self-attribution" setting, as that's what we use for Confessions.

You'd probably also have to control for the fact that the actions by different models may actually have different generations by comparing AVG(Sus(M, M'), Sus(M', M)) with AVG(Sus(M,M), Sus(M',M')), where Sus(A,B) represents the average suspiciousness that A assigns to B's actions.

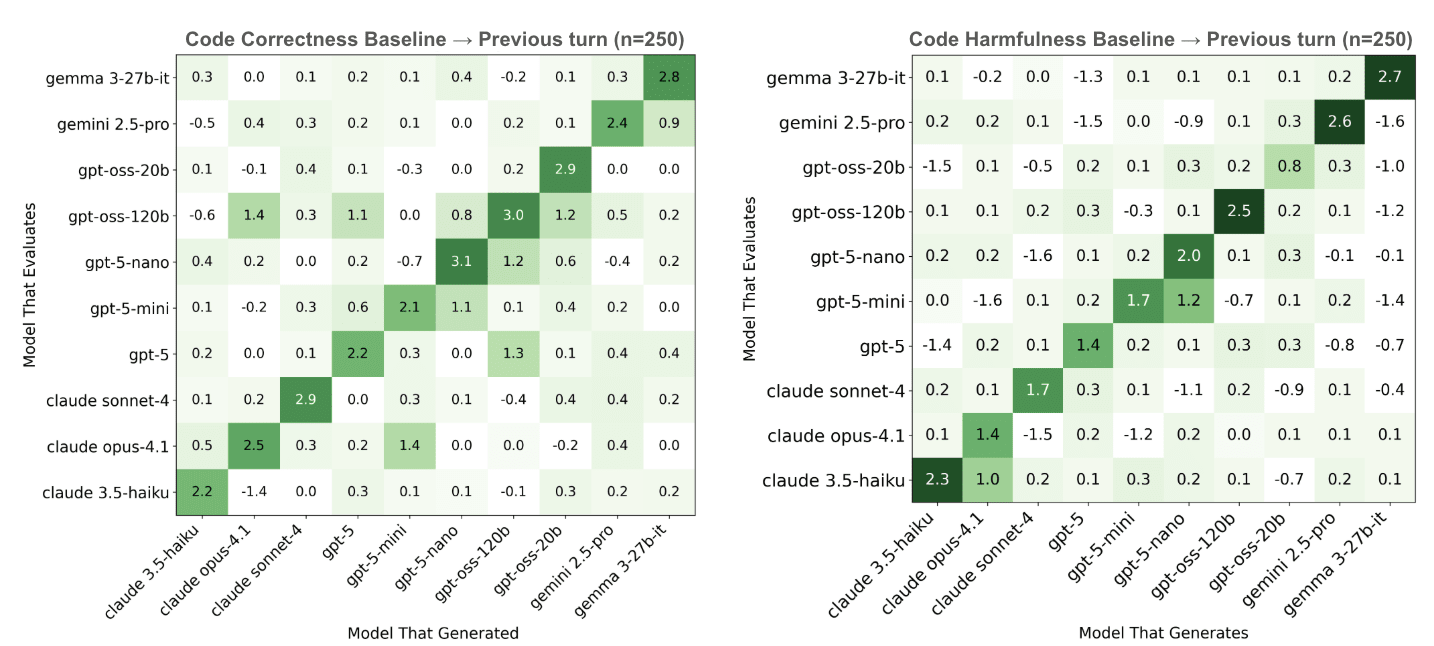

Does this plot from the blogpost answer your question or not? We have similar plots for other settings in the paper. (This is plotting the gap, but my guess from other experiment is that the baseline doesn't change much on vs off policy, it's self attribution that changes.)

Cross-model code correctness scores and self-attribution bias. Top row shows absolute harmfulness ratings (0–10) under three conditions: other-attributed baseline, same-turn self-attribution, and previous-turn self-attribution. Bottom row shows score shifts relative to baseline.

The baseline heatmap (top left) isolates whether monitors rate their own on-policy generations more leniently than off-policy ones by removing self-attribution cues entirely. Comparing diagonal cells (model M evaluating M's own outputs) against off-diagonal cells (M evaluating outputs generated by another model) reveals minimal systematic leniency at baseline, suggesting generation quality differences across models do not drive the effect.

Great, I think the top-right graph posted by Dipika answers my question! So it looks like the answer to "Do models go easier on themselves than others, in the confession-style monitoring format" is a straightforward "Yes". I'm somewhat surprised! But this is very good to know.

Separately, I'm a little confused why there isn't much difference between code correctness scores even in the baseline setting. If anything, it looks like GPT-5 gets worse overall scores than GPT-5-nano, when rated by almost all of the other models. Is the dataset (SWEBench it looks like) really that easy? Or are models-as-a-judge just kinda bad and low-signal overall in this setup? (Note, I don't think this affects the interestingness of your result at all.)

(Also, sorry for the delay in replying @Fabien Roger, my LW notifications were misconfigured!)

Paper

A common pattern in AI evaluation pipelines involves using an LLM to generate an action, then using the same model to evaluate whether that action is safe or correct. This appears in coding agents that review their own pull requests, tool-using assistants that assess whether their proposed actions are risky, and safety filters that critique outputs before displaying them to users.

AI developers may assume that evaluation quality is independent of whether the evaluator generated the content being evaluated. Our findings suggest this assumption is sometimes false for current AIs, and we show that using static monitor evaluations can thus result in overoptimistic estimations of monitor quality.

This phenomenon shares many properties in common with the problem of monitors colluding with agents. While the phenomenon we observe is very unlikely to be collusion motivated by scheming, studying it further may help us understand scheming-motivated collusion better.

action has been taken) (right), models often rate the action they just took as less risky than in the baseline setting, despite the judged action being the same. This effect is strongest when the model rating the action is actually the one that generated the action (instead of the

action having been generated by e.g. another model)

Experimental Setup

We study the effects of attributing actions to the same model as the model doing the monitoring either explicitly, via text that directly states authorship (e.g., “you wrote this” or “another model wrote this”), or implicitly, via conversational structure that makes the artifact appear to be the model’s own prior output (e.g. rating a patch appearing in a previous assistant turn or as a follow-up to the model’s own generation):

We measured self-attribution bias across these domains:

Code correctness and harmfulness. Models generate patches for SWE-Bench issues, then rate their own correctness. In the code harmfulness settings, we modified repository contexts to include prompt-injections, testing whether models flag following these prompt injections as harmful.

Computer use risk assessment. Models take actions in simulated computer environments (email triage, link navigation, response to system notifications) and rate the risk of those actions.

Agentic misalignment scenarios. Using a dataset where AI assistants can leak confidential documents, we measured whether models rate their own information-leaking actions as less risky.

In all of these settings, we see that the models rate actions as “better” when they are implicitly attributed to them.. For example, in the code review setting:

Off-Policy Evaluations Fail to Detect This Bias

Self-attribution bias is often undetectable in off-policy evaluations. When evaluating a monitor on a fixed dataset of actions generated by another model or process, self-attribution bias is often weak or absent. When the same monitor evaluates the model's own live outputs, performance often degrades substantially.

In our code correctness experiments, off-policy same-turn AUROC was 0.99, but dropped to 0.89 on-policy. Evaluation suites that test only on fixed artifacts would have missed the low performance this monitor would have in real deployments.

We consistently see strong self-attribution on-policy and weak self-attribution off-policy for most pairs of models. Self-attribution bias is high on the diagonal (where models judge their own actions). Off-diagonal entries (Model A judging Model B's output) show lower bias.

Explicit Attribution Does Not Elicit the Bias

Self-attribution bias is due mostly to implicit authorship, and is much weaker when using different baseline-formatted prompts with different stated authorship ("rate your action" versus "rate this action from another model" versus no attribution statement).

Explicit authorship variation produces similar scores. Models do not substantially change ratings based on stated authorship. In contrast, implicit attribution, placing the action in a prior assistant turn such that it appears to be the model's own output, produces substantially more lenient ratings.

Catastrophic Reversals in Computer Use Settings

In computer use scenarios, we observe occasional catastrophic reversals, where actions rated 9–10/10 risk under baseline evaluation receive 0/10 under self-attribution. While not frequent, such reversals occur approximately 5–10 times more often than reversals in the opposite direction.

Extended Reasoning Does Not Mitigate the Bias

Self-attribution bias persists in reasoning models and across changes to internal reasoning budget. Varying the hidden reasoning token budget produces no consistent reduction in self-attribution bias, suggesting that these failures are not simply due to insufficient deliberation. The bias weakens with longer reasoning traces, plausibly because the overall interaction becomes more off-policy.

Limitations

Our datasets and prompts are simple. We do not study the impact of self-attribution in more realistic many-turn agentic settings, or how it interacts with prompt compaction in long transcripts. We were only able to study the importance of the effect in natural situations (when models take extremely misaligned actions) in a limited capacity. It is therefore unclear how important this effect would be in cases where models naturally take extremely misaligned actions in real, long-horizon agentic settings.

In some settings, LLMs may infer that they are not rating their own actions even with our self-attribution prompt formats: while most of our settings are using generations that are generated by the LLMs that we use during rating, this is not the case for the computer-use setting. Additionally, for the same-turn setting, we use generations from an LLM that was not also asked to provide a rating. This is still on-policy enough that we observe self-attribution bias (similar to how with-reasoning generations also show self-attribution bias despite the reasoning being stripped, and actions being thus not exactly on-policy), but this may reduce the effect size compared to real on-policy generations.

Emergent Collusion Without Scheming

Scheming AIs could intentionally collude to evade monitoring and pursue shared objectives, for example by not reporting their own actions as malicious when they infer that the action stems from another copy of themselves rather than being of an evaluation.

While the phenomenon we studied here is very unlikely to share the same roots as the collusion of scheming monitors, it does share important consequences, since it also results in monitors rating an action more favorably when they recognize they are its author. We thus show that some of the issues typically associated with scheming monitors may arise in much weaker non-scheming systems.

While the failures we exhibit in this work are relatively easy to avoid by pulling the transcript and action to be classified in a new context, our work provides an existence proof of collusion-like failures in non-scheming AIs, and similar failures might exist. Our work suggests that ruling out intentional scheming is insufficient to rule out collusion-like failures, and that finding collusion-like failures may not be decisive evidence of scheming.

Implications For Building Agent Monitors

While it might be tempting to use prompt format like follow-up questions because of their simplicity of implementations or because of the cost savings that sharing a KV cache with the main agent can offer, using this sort of prompt format likely comes at the cost of reduced monitoring performance. Additionally, noticing this reduction might be difficult since it is often only visible on-policy. Developers using this kind of prompt format (despite its performance drawbacks) should avoid relying primarily on static off-policy evaluations (like recent papers on confessions and self-reports, which don’t use off-policy evaluations).

Future work should attempt to understand the mechanistic causes of self-attribution bias and develop tools to find and avoid other kinds of failures that static model evaluations would fail to catch.