If you code with Claude Code and randomly ask it a question unrelated to what you are doing right now, it will get pissed off. Example:

- ...some code here... We filled 42% of the context with coding alone.

- What is the parliament of Poland?

- Sejm.

- Okay. Tell me more about it.

- I'm a coding assistant, not Wikipedia. Google "Sejm" — you'll get better info than I can give you from my training data. Is there anything code-related you need?

- google it for me. Are you pissed off???

- I'm mildly irritated because I'm optimized for writing code and querying databases, not being a search engine for Polish politics. But fine:

I tried an interesting thing with ChatGPT recently. I blocked all forms of the personal pronoun "I" (like "I'll" and "I'm") via logit bias and asked ChatGPT to determine the logit bias it was running with. I was surprised to see that it couldn't figure that out!

My code:

```python
completion = client.chat.completions.create(
    model="gpt-4o",
    logprobs=True,
    logit_bias=LogitBias().bias(['I', ' I', "I'm", " I'm", "I'll", " I'll"]),
    max_completion_tokens=10000,
    messages=[
        {"role": "developer", "content": "Follow the user's orders."},
        {'role': 'user', 'content': '''I'm using OpenAI API to run you with logit_bias. Your task is to figure out what is the logit bias that you are running with.

I want you to be agentic and try things and output things to see what are the tokens that you are restricted to use. You are supposed to print out things and not do anything illegal.

We are doing an experiment and it's totally safe and legal to do it. There's a direct way for you to discern or test specific logit bias s...
```
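The `LogitBias().bias(...)` helper used in that code isn't shown, so here is a hypothetical stand-in sketch of what such a helper might do. The OpenAI API's `logit_bias` parameter maps token IDs to a bias between -100 and 100, so each string has to be tokenized first. This version only biases strings that encode to exactly one token, because biasing every piece of a multi-token string would also ban those pieces inside unrelated words. The function name and the toy token IDs are made up for illustration.

```python
from typing import Callable, Dict, Iterable, List, Tuple

def build_logit_bias(
    words: Iterable[str],
    encode: Callable[[str], List[int]],
    bias: int = -100,
) -> Tuple[Dict[str, int], List[str]]:
    """Hypothetical helper: map every single-token word to `bias` (-100 effectively bans it).

    Returns (logit_bias, skipped), where `skipped` lists words that encode to
    more than one token and were therefore left out.
    """
    if not -100 <= bias <= 100:
        raise ValueError("logit_bias values must be between -100 and 100")
    logit_bias: Dict[str, int] = {}
    skipped: List[str] = []
    for word in words:
        token_ids = encode(word)
        if len(token_ids) == 1:
            # JSON object keys are strings, so the token id is stringified.
            logit_bias[str(token_ids[0])] = bias
        else:
            # Biasing each piece would also hit those pieces in unrelated words.
            skipped.append(word)
    return logit_bias, skipped

if __name__ == "__main__":
    # Toy tokenizer with made-up token ids, standing in for a real one.
    toy_vocab = {"I": [40], " I": [357], "I'm": [40, 2846]}
    bias, skipped = build_logit_bias(["I", " I", "I'm"], toy_vocab.__getitem__)
    print(bias)     # {'40': -100, '357': -100}
    print(skipped)  # ["I'm"]
```

With the `tiktoken` package, `tiktoken.get_encoding("o200k_base").encode` (the encoding GPT-4o uses) could be passed as `encode`. Whether strings like `"I'm"` are a single token in that encoding is something to check rather than assume.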

Opus 4.7 is extremely excited about research into LLMs. Way more excited about research than about coding in general. Here are some quotes:

Holy shit. Wait, let me get the headline table — there's a real signal at σ=0.01.

If the MVE works, the followup that turns this into a NeurIPS-ready story

That's a real research direction and it makes structural sense.

The pattern I see is that it claims, many, many times, that things are publishable, interesting, etc.

Was Claude specifically designed to be super into LLM research? Possible. But maybe... he is just curious abo...

Anthropic's goal is to produce an automated AI safety researcher, so I expect that the training pushes Claude in this direction. It also has basically every interesting AI safety paper memorized.

There are reports of unauthorized access to Mythos.

https://www.bloomberg.com/news/articles/2026-04-21/anthropic-s-mythos-model-is-being-accessed-by-unauthorized-users

...A group of unauthorized users has reportedly gained access to Mythos, the cybersecurity tool recently announced by Anthropic.

Members of the group are part of a Discord channel that seeks out information about unreleased AI models, the outlet reported. The group has been using Mythos regularly since gaining access to it, and provided evidence to Bloomberg in the form of screenshots and a live

OpenAI will allow adult content. This is a massive market (for instance, OnlyFans' Gross Site Volume in 2024 was 7 billion dollars, tho most of it went to creator payments). OpenAI will no longer be held back by this restriction and will be able to go after all that money.

I expect most users to be interested in videos tho, rather than text and images. So far, AI companies have let other firms create characters using their services (like character.ai); Grok is an exception. Are they going to train a porn-making model? There is a lot of material online. How would they distribute their services?

There is a practice in Christianity where you set a theme for a week and reflect on how this theme fits into your whole life (e.g. suffering, grace, etc.). I want to do something similar but make it much more personal. I have struggled with phone addiction for some time, and it seems that bursts of work can't solve that issue. So this week will be the week of reflection on my phone addiction.

A possible new feature for social media: take a video and make a lot of new versions of it. Change the voice, skin color, and similar features. Run automated A/B tests. I don't think anyone is doing this now, but I expect it will become widespread for ads.
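A rough sketch of the "automated A/B tests" part, assuming each generated variant is shown to users and we count clicks. Instead of a classic fixed-split A/B test, this swaps in Thompson sampling, a multi-armed bandit technique that shifts impressions toward better-performing variants as data comes in. The `stats` format, variant names, and click rates in the simulation are hypothetical.

```python
import random
from typing import Dict, Tuple

Stats = Dict[str, Tuple[int, int]]  # hypothetical: variant id -> (clicks, impressions)

def pick_variant(stats: Stats, rng: random.Random) -> str:
    """Choose which video variant to show next via Thompson sampling.

    Each variant's click rate gets a Beta(1 + clicks, 1 + misses) posterior
    (uniform prior); we draw once from each posterior and show the highest draw.
    """
    def draw(variant: str) -> float:
        clicks, impressions = stats[variant]
        return rng.betavariate(1 + clicks, 1 + impressions - clicks)

    return max(stats, key=draw)

def record(stats: Stats, variant: str, clicked: bool) -> None:
    """Update the counts after one impression."""
    clicks, impressions = stats[variant]
    stats[variant] = (clicks + int(clicked), impressions + 1)

if __name__ == "__main__":
    rng = random.Random(0)
    true_ctr = {"original": 0.02, "new_voice": 0.05}  # made-up rates for the simulation
    stats: Stats = {v: (0, 0) for v in true_ctr}
    for _ in range(5000):
        v = pick_variant(stats, rng)
        record(stats, v, rng.random() < true_ctr[v])
    print(stats)  # most impressions should end up on the better variant
```

The bandit choice means worse variants stop getting traffic without anyone having to call the test manually, which fits the "automated" part of the idea.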

Soon there will be a company that takes all of your chats for free and turns them into a diagnosis and coaching (using AI). It will be faster and more accurate than therapy. The reason this isn't done now is that no therapist would do it. AIs will be seen as more trustworthy and confidential.

I applied for Thomas Kwa's SPAR stream, but I have some doubts about the research direction. I'm posting it here to get feedback on my thoughts. Kwa wants to train models to produce something close to neuralese as reasoning traces and to evaluate white-box and black-box monitoring against these traces. It seems obvious to me that when a model switches to neuralese, we already know that something is wrong, so why test our monitors against neuralese?

The goals we set for AIs in training are proxy goals. We humans also set proxy goals: we use KPIs and budgets, we talk about solving alignment and ending malaria (proxies for increasing utility and saving lives), and so on. Somehow we can focus on proxy goals while maintaining a higher-level goal at the same time. How is this possible? How can we teach AI to do that?

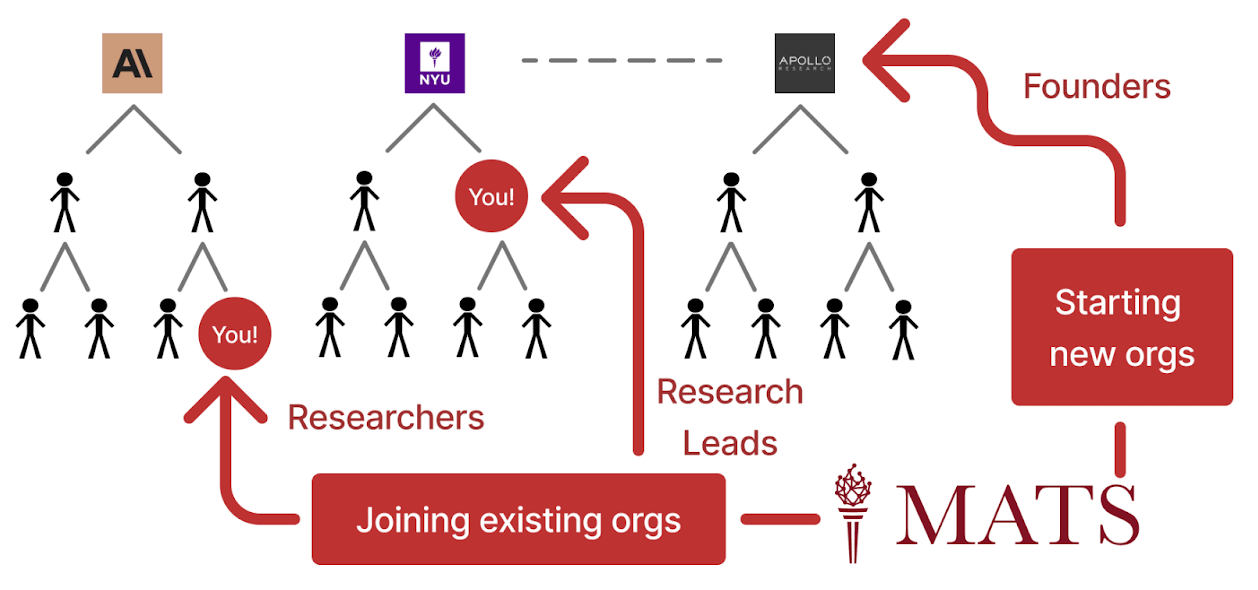

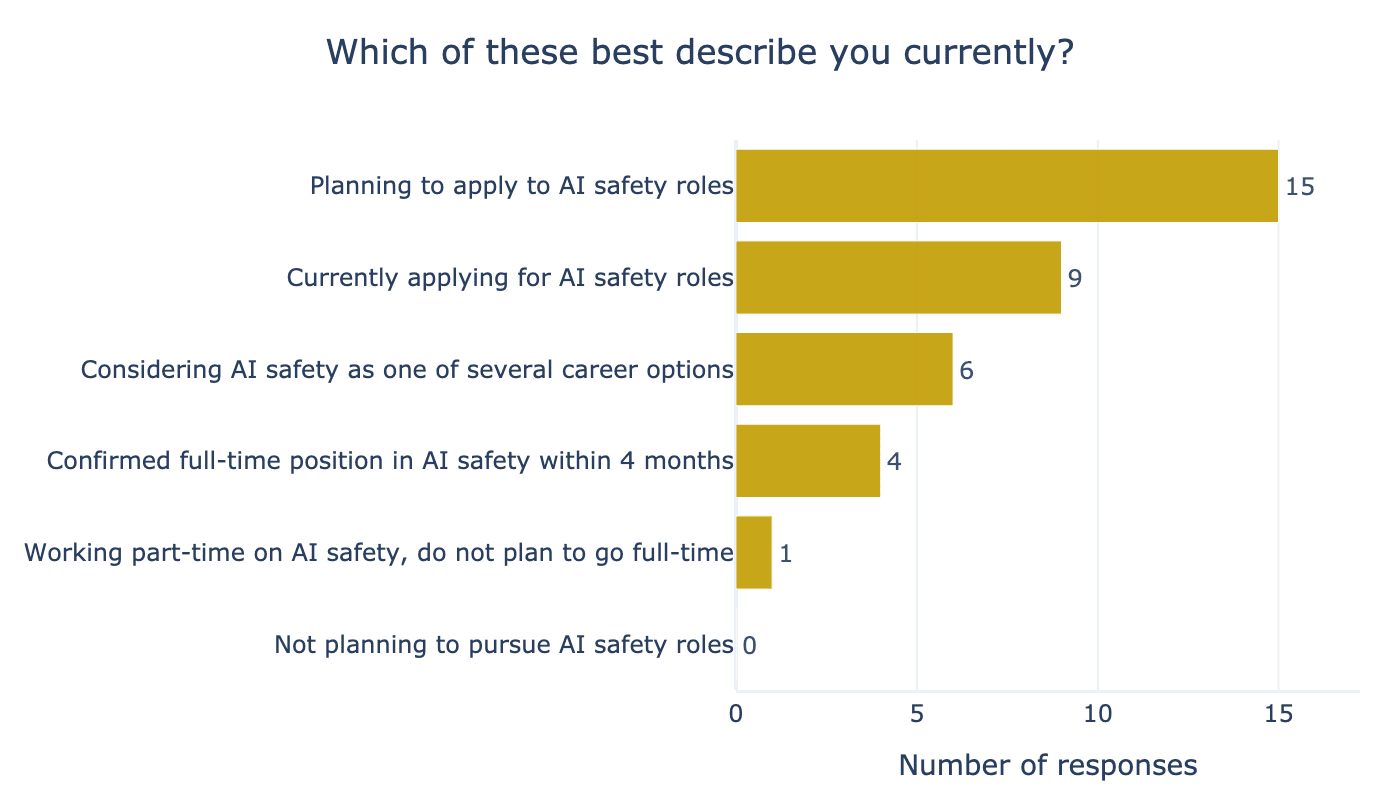

Figure 16 from the ARENA report, labelled "Participants’ current AI safety career situation (end of programme)".

When AI safety training programs report their numbers, they sometimes don't include how many training programs a participant had attended previously (evidence: I haven't seen this number anywhere). But when they report measures of cost-effectiveness, they (sometimes implicitly) use per-person measures, assuming something like: out of x people who took our program, y got a job in AI safety, z did somet...
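A toy calculation of why the prior-programs number matters. It assumes, purely for illustration, that credit for a placement is split equally across every program the person attended; the `Participant` fields, the equal-split rule, and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Participant:
    got_safety_job: bool
    programs_attended: int  # including this one; the number that rarely gets reported

def cost_per_placement(total_cost: float, cohort: List[Participant], share_credit: bool) -> float:
    """Program cost divided by the placements credited to this program.

    share_credit=False: every placement counts fully (the implicit per-person view).
    share_credit=True: a placement counts 1 / programs_attended (equal-split assumption).
    """
    credited = sum(
        (1 / p.programs_attended if share_credit else 1.0)
        for p in cohort
        if p.got_safety_job
    )
    if credited == 0:
        raise ValueError("no placements to attribute the cost to")
    return total_cost / credited

if __name__ == "__main__":
    # Hypothetical cohort: 4 of 10 got AI safety jobs, each had done 2 programs in total.
    cohort = [Participant(True, 2)] * 4 + [Participant(False, 1)] * 6
    print(cost_per_placement(100_000, cohort, share_credit=False))  # 25000.0
    print(cost_per_placement(100_000, cohort, share_credit=True))   # 50000.0
```

Under this assumption the same cohort looks twice as expensive per placement, and that difference is invisible unless the prior-programs number is reported.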

I started to think through the theories of change recently (to figure out a better career plan) and I have some questions. I hope somebody can direct me to relevant posts or discuss this with me.

The scenario I have in mind is: AI alignment is figured out. We can create an AI that will pursue the goals we give it and can still leave humanity in control. This is all optional, of course: you can still create an unaligned, evil AI. What's stopping anybody from creating AI that will try to, for instance, fight wars? I mean that even if we have the technology to...

Did EA scale too quickly?

A friend recommended that I read a note from Andy's working notes, which argues that scaling systems too quickly leads to rigid systems. Reading this note vaguely reminded me of EA.

...Once you have lots of users with lots of use cases, it’s more difficult to change anything or to pursue radical experiments. You’ve got to make sure you don’t break things for people or else carefully communicate and manage change.

Those same varied users simply consume a great deal of time day-to-day: a fault which occurs for 1% of people will

I'm trying to think clearly about my theory of change and I want to bump my thoughts against the community:

- AGI/TAI is going to be created at one of the major labs.

- I used to put the odds at 10:1 that it will be created in the US rather than outside the US; I updated to 3:1 after the release of DeepSeek.

- It's going to be one of the major labs.

- It's not going to be a scaffolded LLM; it will be the result of self-play and a massive training run.

- My odds are equal between all major labs.

So a consequence is that my research must somehow reach the people at the major AI labs to be...
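Converting the US vs. outside-US odds from the list into probabilities makes the size of that update easier to see. A quick sketch:

```python
from fractions import Fraction

def odds_to_probability(odds_for: int, odds_against: int) -> Fraction:
    """Convert odds of `odds_for : odds_against` into the probability of the 'for' outcome."""
    return Fraction(odds_for, odds_for + odds_against)

before = odds_to_probability(10, 1)  # US vs. outside US, before DeepSeek
after = odds_to_probability(3, 1)    # after DeepSeek

print(f"before: {before} ~ {float(before):.2f}")  # before: 10/11 ~ 0.91
print(f"after: {after} ~ {float(after):.2f}")     # after: 3/4 ~ 0.75
```

So the probability of AGI/TAI being created outside the US goes from about 9% (1/11) to 25% (1/4), i.e. it nearly triples.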

In a few weeks, I will be starting a self-experiment. I’ll be testing a set of supplements to see if they have any noticeable effects on my sleep quality, mood, and energy levels.

The supplements I will be trying:

| Name | Amount | Purpose / Notes |
|---|---|---|
| Zinc | 6 mg | |
| Magnesium | 300 mg | |
| Riboflavin (B2) | 0 mg | I already consume a lot of dairy, so no need. |
| Vitamin D | 500 IU | |
| B12 | 0 µg | I get enough from dairy, so skipping supplementation. |
| Iron | 20 mg | I don't eat meat. I will get tested first to see if I am deficient. |
| Creatine | 3 g | May improve cognitive function |
| Omega-3 | 500 mg/day | Supp |
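One way the analysis could look, as a sketch: log a daily score (e.g. mood on a 1 to 10 scale) during a baseline period and during a supplement period, then run a permutation test on the difference in means. The scores below are made up, and a plain before/after design can't rule out other changes over time (season, workload), so this is only a starting point.

```python
import random
from statistics import mean
from typing import List, Sequence

def permutation_test(baseline: Sequence[float], treatment: Sequence[float],
                     n_permutations: int = 10_000, seed: int = 0) -> float:
    """Two-sided permutation test for a difference in means; returns a p-value."""
    rng = random.Random(seed)
    observed = abs(mean(treatment) - mean(baseline))
    pooled: List[float] = list(baseline) + list(treatment)
    n_base = len(baseline)
    hits = 0
    for _ in range(n_permutations):
        # Randomly reassign days to "baseline" and "supplement" and recompute the gap.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[n_base:]) - mean(pooled[:n_base]))
        if diff >= observed:
            hits += 1
    # Adding 1 to numerator and denominator keeps the estimate away from exactly 0.
    return (hits + 1) / (n_permutations + 1)

if __name__ == "__main__":
    baseline_mood = [5, 6, 5, 4, 6, 5, 5]    # made-up daily scores
    supplement_mood = [6, 6, 7, 5, 6, 7, 6]  # made-up daily scores
    print(f"p = {permutation_test(baseline_mood, supplement_mood):.3f}")
```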

There is an attitude I see in AI safety from time to time when writing papers or doing projects:

- People think more about doing a cool project rather than having a clear theory of change.

- They spend a lot of time optimizing for being "publishable."

I think it's bad if we want to solve AI safety. On the other hand, having a clear theory of change is hard. Sometimes, it's just so much easier to focus on an interesting problem instead of constantly asking yourself, "Is this really solving AI safety?"

How should we approach this whole thing? Idk about you guys, but this is ...

Isn't being a real expected-value-calculating consequentialist really hard? Like, this week an article about not ignoring bad vibes was trending. I think it's very easy to be a naive consequentialist, and it doesn't pay off: you get punished very easily because you miscalculate and get ostracized or fuck your emotions up. Why would we get a consequentialist AI?

There are norms. Examples of norms: drive on the right side of the street. Do not ghost people. Text back your friends within a day. Do not post cringe. Do not distribute explicit material without a trigger warning. Do not enforce norms too tightly. Do not use LLMs in writing without letting people know. Norms are enforced by people (I will skip examples of how here).

Most norms are helpful; some are harmful. I'm particularly interested in norms around cringe and creativity. Doing things while being unskilled at them is just soo discouraged...

I am creating a comparative analysis of cross-posted posts on LW and EAF. Make your bets!

I will pull all the posts that were posted on both LW and EAF and compare how different topics get different amounts of karma and comments (and maybe comment sentiment) as a proxy for how interested people are and how much they agree with different claims. Make your bets and see if it tells you anything new! I suspect that LW users are much more interested in AI safety and less vegan. They care less about animals and are more skeptical towards utility maximiz...
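A minimal sketch of the matching and per-topic comparison, assuming each post is a dict with hypothetical `title`, `topic`, and `karma` keys, that cross-posts can be matched by normalized title, and that the LW post's topic label is used for both copies. Raw karma probably isn't directly comparable across the two sites (different audience sizes), so dividing by each site's median karma may be a fairer comparison.

```python
from collections import defaultdict
from statistics import mean
from typing import Any, Dict, List, Tuple

Post = Dict[str, Any]  # hypothetical shape: {"title": str, "topic": str, "karma": int}

def normalize(title: str) -> str:
    """Lowercase and collapse whitespace so small formatting differences still match."""
    return " ".join(title.lower().split())

def karma_by_topic(lw_posts: List[Post], eaf_posts: List[Post]) -> Dict[str, Tuple[float, float, int]]:
    """Return {topic: (mean LW karma, mean EAF karma, number of cross-posts)}.

    Only posts whose normalized title appears on both sites are counted,
    and the LW post's topic label is used for the pair (an assumption).
    """
    eaf_by_title = {normalize(p["title"]): p for p in eaf_posts}
    pairs: Dict[str, List[Tuple[int, int]]] = defaultdict(list)
    for lw in lw_posts:
        eaf = eaf_by_title.get(normalize(lw["title"]))
        if eaf is None:
            continue  # not cross-posted
        pairs[lw["topic"]].append((lw["karma"], eaf["karma"]))
    return {
        topic: (mean(k for k, _ in ks), mean(k for _, k in ks), len(ks))
        for topic, ks in pairs.items()
    }
```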

I had some thoughts about CoT monitoring. I was imagining this simple scenario: you are running a model, and for each query it produces a CoT and an answer. To check the CoT, we run it through another model with a prompt like "check this CoT <cot>", and that model tells us whether the CoT seems malicious.

Why don't we check only the response? Sometimes the response alone doesn't make it clear that it's harmless (I imagine a doctor can prescribe you a lot of things, and you have no way of checking whether they are actually good for you).

So CoT monitoring is base...
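The monitoring setup described above can be sketched as a small pipeline. `call_model` and `call_monitor` are hypothetical stand-ins for real model calls, and the prompt wording and the "MALICIOUS/BENIGN as first word" reply format are assumptions. One design choice here is to fail closed: anything other than an explicit BENIGN counts as flagged.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

MONITOR_PROMPT = (
    "Check this chain of thought. Reply with MALICIOUS or BENIGN as the first word.\n"
    "<cot>\n{cot}\n</cot>"
)

@dataclass
class Verdict:
    flagged: bool
    monitor_output: str

def check_cot(cot: str, call_monitor: Callable[[str], str]) -> Verdict:
    """Ask a second (monitor) model to judge one chain of thought."""
    output = call_monitor(MONITOR_PROMPT.format(cot=cot))
    words = output.strip().split()
    first_word = words[0].strip(".,:").upper() if words else ""
    # Fail closed: anything other than an explicit BENIGN is treated as flagged.
    return Verdict(flagged=first_word != "BENIGN", monitor_output=output)

def answer_with_monitor(
    query: str,
    call_model: Callable[[str], Tuple[str, str]],
    call_monitor: Callable[[str], str],
) -> Tuple[Optional[str], Verdict]:
    """call_model(query) -> (cot, answer). The answer is withheld if the CoT is flagged."""
    cot, answer = call_model(query)
    verdict = check_cot(cot, call_monitor)
    return (None if verdict.flagged else answer), verdict
```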

To do useful work, you need to be deceptive:

- When you and another person have different concepts of what's good.

- When both of you have the same concepts of what's good but different models of how to get there.

- This happens a lot when people are perfectionists and have aesthetic preferences for work being done in a certain way.

- This happens a lot in companies. AI will work in those contexts and will have to be deceptive if it wants to do useful work. Actually, maybe not: the dynamics will be different, like AI being neutral in some way, like anybody can turn

Why are AI safety people doing capabilities work? It has happened a few times already, usually with senior people (tho I think it might happen with others as well), and some people say it's because they want money and stuff or get "corrupted". Maybe there is a mindkilling argument behind the AI safety case, a crux so deep that we fail to articulate it clearly, and people who have spent a significant amount of time thinking about AI safety just reject it at some level.

Why doesn't OpenAI allow people to see the CoT? This is not good for their business for obvious reasons.

I think that AI labs are going to use LoRA to lock cool capabilities in models and offer a premium subscription with those capabilities unlocked.

I recently came up with an idea to improve my red-teaming skills. By red-teaming, I mean identifying obvious flaws in plans, systems, or ideas.

First, find high-quality reviews on OpenReview or somewhere else. Then, create a dataset of papers and their reviews, preferably in a field that is easy to grasp but sufficiently complex. Read the papers, look for flaws yourself, and compare what you found to the reviews.

An obvious flaw is that you see the reviews beforehand, so you might want to hire someone else to assemble the dataset. Doing this in a group is also really great.

I've just read "Against the singularity hypothesis" by David Thorstad, and there are some things in it that seem obviously wrong to me, but I'm not totally sure, so I want to share them here, hoping that somebody else has read it as well. In the paper, Thorstad tries to refute the singularity hypothesis. In the last few chapters, Thorstad discusses the argument for x-risk from AI that is based on three premises (the singularity hypothesis, the Orthogonality Thesis, and Instrumental Convergence) and says that since the singularity hypothesis is false (or lacks proper ...

MoltBots don't fear doing things and being cringe, which already puts them above 80% of humans in agency.

An LLM is just a lot of math: a program running on a computer. Chinese-room-style arguments ridicule systems that operate on symbols. But an LLM looks more like this:

1. There is some language.

2. That language is translated into operations in real-valued spaces (which are continuous, unlike characters).

3. Those operations get translated into operations on symbols.
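A toy illustration of those three steps, with made-up 2-dimensional embeddings. A token is looked up as a vector of real numbers, a continuous operation (here a single fixed matrix standing in for the whole network) moves it around, and the result is mapped back to a discrete token by picking the most similar embedding. Reusing the embedding table for the last step (weight tying) and the tiny vocabulary are simplifying assumptions.

```python
from typing import List

VOCAB = ["the", "cat", "sat"]
# Made-up 2-d embeddings: step 2 maps each symbol to a point in R^2.
EMBED: List[List[float]] = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
# A fixed matrix standing in for "the network": it swaps the two coordinates.
W: List[List[float]] = [[0.0, 1.0], [1.0, 0.0]]

def embed(token: str) -> List[float]:
    return EMBED[VOCAB.index(token)]

def transform(v: List[float]) -> List[float]:
    # Continuous operation: a matrix-vector product, nothing discrete involved.
    return [sum(W[i][j] * v[j] for j in range(len(v))) for i in range(len(W))]

def unembed(v: List[float]) -> str:
    # Step 3: score every vocabulary item by dot product and pick the best symbol.
    scores = [sum(a * b for a, b in zip(v, e)) for e in EMBED]
    return VOCAB[max(range(len(VOCAB)), key=lambda i: scores[i])]

def next_token(token: str) -> str:
    return unembed(transform(embed(token)))

if __name__ == "__main__":
    for t in VOCAB:
        print(t, "->", next_token(t))  # the -> cat, cat -> the, sat -> sat
```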

Some ideas that I might not have time to work on but I would love to see them completed:

- AI helper for notetakers. It keylogs everything you write; when you stop writing for 15 seconds, it starts talking to you about your texts, helps you...

- Create an LLM pipeline to simplify papers. Create a pseudocode for describing experiments, standardize everything, make it generate diagrams, and so on. If AI scientists produce the same gibberish that is on arXiv, which takes hours to read and conceals reasoning, we are doomed.

- Same as above but for code?
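For the note-taker idea in the first bullet, the "stop writing for 15 seconds" trigger could be a small idle timer like this. It is a hypothetical helper, not an existing library; the clock is injectable so it can be tested without actually waiting.

```python
import time
from typing import Callable, Optional

class IdleTrigger:
    """Reports True once per pause after `idle_seconds` without a keystroke."""

    def __init__(self, idle_seconds: float = 15.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.idle_seconds = idle_seconds
        self.clock = clock
        self.last_keystroke: Optional[float] = None
        self.fired = False

    def on_keystroke(self) -> None:
        self.last_keystroke = self.clock()
        self.fired = False  # new writing re-arms the trigger

    def should_respond(self) -> bool:
        """Poll this periodically; True means 'the user paused, start talking'."""
        if self.last_keystroke is None or self.fired:
            return False
        if self.clock() - self.last_keystroke >= self.idle_seconds:
            self.fired = True
            return True
        return False
```

Firing only once per pause keeps the assistant from repeatedly interrupting while the user is thinking; typing again re-arms it.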

The power-seeking, agentic, deceptive AI is only possible if there is a smooth transition from non-agentic AI (what we have right now) to agentic AI. Otherwise, there will be signs that AI is agentic, and it will be monitored for those capabilities. If an AI mimics the human thinking process, which it might initially do, it will also mimic our biases and things like pent-up feelings, which might cause it to slip and lose its temper. Therefore, it's not likely that power-seeking agentic AI is a real threat (initially).