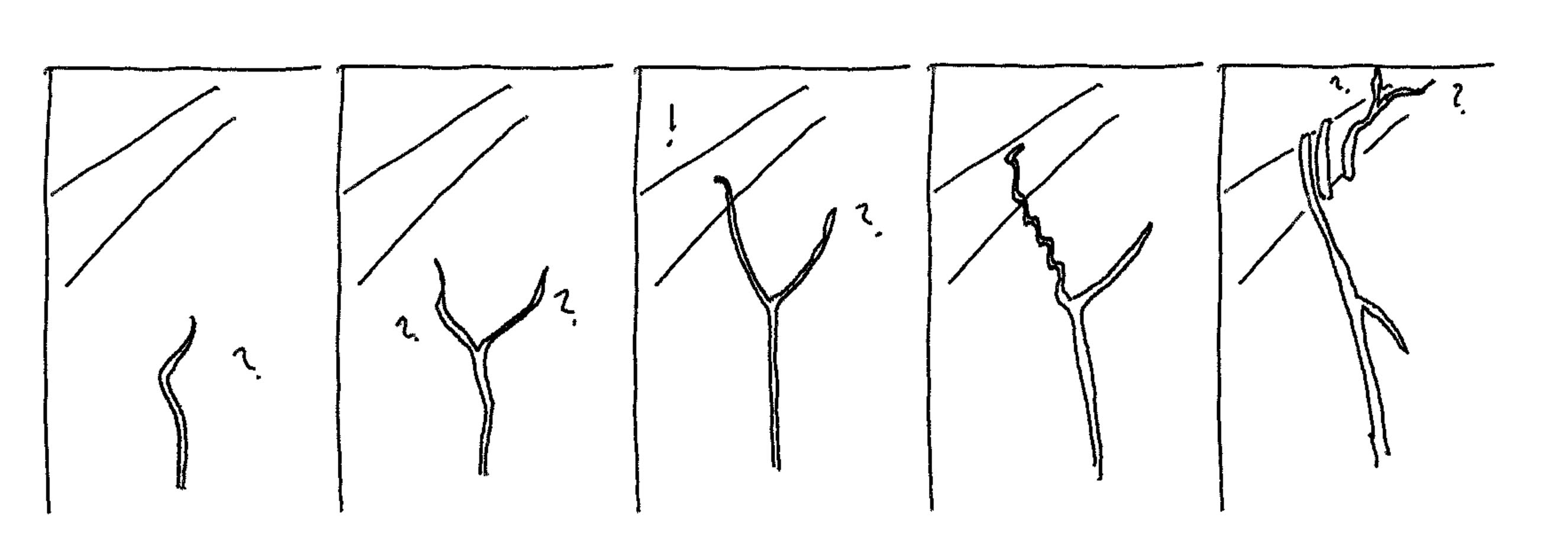

I just want to say that this image of "plant deliberation" was awesome, and made things click in a way that they hadn't, for me, before seeing it (and then reading the text that it was paired with). I love the little question marks, and the "!" when something useful is found by one of the "speculative lines of growth".

Great, I'm glad my silly sketches helped! I've had other feedback that these pics have been useful, actually.

Did you spot the footnote with links to some timelapse videos of climbing plants?

I often skip footnotes, but looking at those two gorgeous videos, I'm reminded of both the central truth of nature, and the contending factor that I find it aesthetic even despite understanding it! <3

The analysis and definitions used here are tentative. My familiarity with the concrete systems discussed ranges from rough understanding (markets and parliaments), through abiding amateur interest (biology), to meaningful professional expertise (AI/ML things). The abstractions and terminology have been refined in conversation and private reflection, and the following examples are both generators and products of this conceptual framework.

We previously discussed a conceptual algorithmic breakdown of some aspects of goal-directed behaviour with the intention of inspiring insights and clarifying thought and discussion around these topics.

The examples presented here include some original motivating examples, some used to refine the concepts, and others drawn from the menagerie after the concepts were mostly refined[1]. Each example is subjected to the analysis, in several cases drawing out novel insights as a consequence.

Most of these examples, for all their intricacy in some cases, are relatively 'simple' as deliberators, and I am quite confident in the applicability of the framing. Analysis of more derived and sophisticated deliberative systems is reserved for upcoming posts.

Brief framework summary

We decompose 'deliberation' into 'proposal', 'promotion', and 'action'.

We also identify as important whether a deliberator's actions are final, or give rise to relevantly-algorithmically-similar subsequent deliberators (iteration and replication), or create or otherwise condition heterogeneous deliberators (recursive deliberation).
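
As a rough illustration of this decomposition, here is a minimal sketch in Python. The function names (`deliberate`, `iterate`), the integer state, and the `TARGET` value are all assumptions made for the example, not part of the framework itself.

```python
from typing import Callable, Iterable, List, TypeVar

State = TypeVar("State")
Proposal = TypeVar("Proposal")


def deliberate(
    state: State,
    propose: Callable[[State], Iterable[Proposal]],
    promote: Callable[[State, List[Proposal]], Proposal],
    act: Callable[[State, Proposal], State],
) -> State:
    """One deliberation: generate proposals, promote one, act on it."""
    candidates = list(propose(state))
    chosen = promote(state, candidates)
    return act(state, chosen)


def iterate(state: State, step: Callable[[State], State], n: int) -> State:
    """Iteration: each action yields a state the same deliberator can act on again."""
    for _ in range(n):
        state = step(state)
    return state


TARGET = 10  # hypothetical goal for the toy example


def proper_step(x: int) -> int:
    # Proper deliberation: several alternatives are computed and compared.
    return deliberate(
        x,
        propose=lambda s: [s - 1, s, s + 1],
        promote=lambda s, cs: min(cs, key=lambda c: abs(c - TARGET)),
        act=lambda s, c: c,
    )


def reactive_step(x: int) -> int:
    # Reaction: exactly one proposal, so promotion is degenerate.
    return deliberate(
        x,
        propose=lambda s: [s + 1],
        promote=lambda s, cs: cs[0],
        act=lambda s, c: c,
    )


proper_result = iterate(0, proper_step, 20)
reactive_result = iterate(0, reactive_step, 20)
```

In this toy, the iterated proper deliberator settles on the target, while the iterated reaction simply keeps applying its one heuristic. How well a reaction does rests entirely on the quality of that single proposal.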

Reaction examples

Chemical systems

Innumerable basic chemical reactions, like oxidation of iron, involve actions which change the composition or configuration of some material(s). For the purposes of this analysis these are, alone, mostly uninteresting, but serve to illustrate natural systems which do not preserve their essential algorithmic form and thus do not constitute iterated systems.

Some reactions, on the other hand, involve catalysis, wherein some reagents are essentially preserved or reconstituted in the action, producing the seeds of iterated algorithmic reaction, thus basic 'control'.

Despite pushing in a particular 'goal' direction, these systems are reactions rather than proper deliberations, because they occur without computing alternative pathways[2].

Biological systems

Even very simple organisms can react (that is, act or perform some function in response) to stimuli. No proper deliberation need be involved, no computation instantiated to consider alternatives.

A reaction can take place with or without a brain, or even a nervous system: consider many motions of single-celled organisms[3] or the snapping of a Venus flytrap. The cringe of many animals from intense heat goes via nervous circuitry but takes no deliberation.

Indeed, temperature changes are pervasive in nature, so it is no surprise that we find automatic heat-responsive behaviour at the protein-machinery level in every lineage of cellular life. These latter, along with other protein machinery and the organ-functions of multicellular organisms, demonstrate that, in nature, a single organism will be found to consist of many reactive and deliberative systems.

The class of 'systems undergoing a transformation' in chemical or physical interactions often does not preserve the essential algorithmic characteristics of the system, but most salient biological examples are iterated (the act essentially preserves the capacity to further act in an algorithmically similar way). This is not surprising given their origin by natural selection. (Some context-specific counterexamples exist, like some cases of one-shot autotomy to distract predators, though this works precisely because, in so doing, another deliberator, the gene complex coding for autotomy, sometimes thereby preserves and propagates its essential form.)

Genes and systems-producing-systems

In many biochemical cases, it may be most appropriate to think of active genes and gene-complexes as highly iterated actors, sometimes literally implementing classical control analogues. Their products, the proteins, cells, organs and organisms, can be seen as the mediators of the genes' actions. Some of these mediators are themselves, or give rise to, deliberators or controllers.

The primordial genetic replicators, in the RNA world or even earlier, made little use of mediating systems, but nowadays they predominantly build cellular and multicellular vehicles which are responsible for most of their replicative success. Note that 'creating' is just a special case of 'conditioning', and, once created, cells, tissues, and organs are available to be conditioned as mediators of other genes, cells, tissues, and organs' activity.

Of course genes are themselves products and objects of natural selection, so we have systems-conditioning-systems-conditioning-systems.

Artificial reactions

Many artificial systems take reactive actions too. A thermostat adjusts without considering what it might otherwise do, and similar control systems have been in use for centuries at least.

Most contemporary predictive systems, most generative systems, a few game-playing, and many robotics systems produce their outputs reactively - the algorithm is not evaluating multiple proposals, it just proposes some single 'idea' (of varying quality).

There are many contemporary AI systems with rudimentary proper deliberation as well, and some uncertain cases, discussed below.

Many predictive AI systems, e.g. image classifiers, come closest to being 'non-iterated' because their results have the least bearing on their future invocations (though being more or less apparently useful to human operators does affect this).

Gradient descent as reaction

A single step of gradient descent is a reactive motion. A powerful heuristic - compute (an estimate of) the gradient of some target function at a point - produces exactly one proposed update (direction). The update is degenerately promoted and acted upon (applied) in a single fused motion. (In some cases minor proper deliberation may be introduced by evaluating multiple candidate step sizes.) Variations like momentum, and higher-order gradient methods like Newton-Raphson, have the same top-level analysis (though their quality as controllers may differ).

Iterating steps like this results in a controller directed toward ever lower scores.[4]
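
A minimal sketch of this reading, assuming a hypothetical one-dimensional quadratic target and a fixed learning rate (both chosen only for illustration):

```python
from typing import Callable


def gradient_step(x: float, grad: Callable[[float], float], lr: float) -> float:
    """A single reactive step: one proposal, promoted and applied at once."""
    proposal = x - lr * grad(x)  # the heuristic yields exactly one candidate
    return proposal  # no alternatives are compared before acting


def descend(x: float, grad: Callable[[float], float], lr: float, steps: int) -> float:
    """Iterating the reaction gives a controller heading toward lower scores."""
    for _ in range(steps):
        x = gradient_step(x, grad, lr)
    return x


def target(x: float) -> float:
    return (x - 3.0) ** 2  # hypothetical target function, minimised at x = 3


def target_grad(x: float) -> float:
    return 2.0 * (x - 3.0)


x_final = descend(0.0, target_grad, lr=0.1, steps=100)
```

Nothing in `gradient_step` evaluates a second candidate. All of the goal-directedness comes from the proposal heuristic and from iteration.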

What makes gradient descent so good?

Despite carrying out no proper deliberation, iterated gradient descent is capable of somewhat-reliable goal-directed control. In fact, under the right conditions, it can produce results just like iterated natural selection which is, in contrast, a canonical properly deliberative process (see below). How so?

An essential takeaway from this analysis is that effective evaluation and promotion are only one part of deliberation strength. The other part is coming up with good proposals. Gradient descent happens to use a strong heuristic[5] for generating good proposals - good enough to entirely compensate for degenerate deliberation, at least when compared to a relevantly-similar natural selection.

Accurate contour rendition of a loss landscape. For navigating to the bottom-right basin from the marked point, a gradient step which is pushed away by the steep slope will eventually subsequently route around, but better steps are possible.

Note that in spite of this clever proposal heuristic, better-still controllers for the same domain are conceivable (though not, perhaps, easy to find or implement), even restricted to reactive deliberation and a fixed learning-rate schedule: local best-descent is only the best possible intuition on a globally-linear surface (which is nonexistent in practice). Similarly, appropriately-adaptable learning-rates can proceed more efficiently. This is part of the motivation behind such techniques as momentum, trust region optimisation, and adaptive hyperparameter tuning.

Market arbitrage

At a different level of organisation, arbitrage between sufficiently liquid markets is an example of an emergent reactive system which serves to push toward price convergence, along something akin to a gradient.

The essential form of this tendency is not disturbed by its own action, so it is iterated: a controller.

As a robust agent-agnostic process this reaction is constituted by the interactions between many individual actors, but is robust to many of the particulars of the behaviour of individuals or subsets of individuals. It serves as an example of a (reactive) controller at the multi-organism level. Even though the individuals enacting the arbitrage may be doing so very properly deliberately, this does not make the arbitrage control process itself any less degenerately reactive.
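
The convergence tendency can be sketched with a toy two-market model. The linear price response (`impact`) is an assumption made purely for illustration. Real price impact is messier, and nothing here models the individual traders.

```python
def arbitrage_round(price_a: float, price_b: float, impact: float = 0.1):
    """Traders buy in the cheaper market and sell in the dearer one.

    `impact` is an assumed linear price response to that trading pressure.
    """
    gap = price_b - price_a
    return price_a + impact * gap, price_b - impact * gap


def run_arbitrage(price_a: float, price_b: float, rounds: int):
    """Iterate the reaction; its form is undisturbed by its own action."""
    for _ in range(rounds):
        price_a, price_b = arbitrage_round(price_a, price_b)
    return price_a, price_b


a, b = run_arbitrage(100.0, 110.0, rounds=50)
```

Each round shrinks the gap by a constant factor, without any step comparing alternative price adjustments: an iterated reaction, hence a controller.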

Deliberation examples

Natural selection, the ur-deliberator

Natural selection with mutation, a weak evaluative promotive proper deliberator, 'proposes' variations on current themes, tries them out by 'evaluating' their success, and moves (on average) to 'promote' fitter combinations[6]. (Natural selection couples its evaluation and promotion to its action, like reactive systems, but other deliberators need not do so.) It is essential to distinguish 'natural selection', the deliberative moment-to-moment or generation-to-generation proposal, evaluation, and promotion/demotion of local variations from 'evolution by natural selection', the iterated deliberative process (controller) which accumulates changes over time.

The implicit abstraction underlying the proposal part of natural selection is basic: sometimes random mutations will be fitter. The evaluation and promotion implicitly depend on the abstraction that if something works, this is evidence that it may work again. These abstractions are weak - the vast majority of lineages of biological organisms are extinct, and most extant organisms carry large amounts of deleterious genes - but we happen to live in a universe where they have been true enough times that life has persisted on Earth for billions of years and adapted to many changing circumstances in that time.
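
For comparison with gradient descent, here is a deliberately crude sketch of selection as a proper deliberator. The quadratic `loss`, the Gaussian mutation, the brood size, and keeping the parent as a candidate are all simplifying assumptions for the example, not claims about biology.

```python
import random


def loss(x: float) -> float:
    return (x - 3.0) ** 2  # hypothetical stand-in for (inverse) fitness


def selection_step(x: float, rng: random.Random, n_offspring: int = 8, sigma: float = 0.5) -> float:
    """Propose symmetric-noise variants, evaluate them, promote the fittest."""
    proposals = [x + rng.gauss(0.0, sigma) for _ in range(n_offspring)]
    proposals.append(x)  # assumption: the parent persists if no variant is fitter
    return min(proposals, key=loss)


def evolve(x: float, steps: int, seed: int = 0) -> float:
    """The iterated process: changes accumulate over generations."""
    rng = random.Random(seed)
    for _ in range(steps):
        x = selection_step(x, rng)
    return x


x_evolved = evolve(0.0, steps=50)
```

Here `selection_step` plays the role of 'natural selection' and `evolve` that of 'evolution by natural selection'. Unlike a gradient step, each iteration computes and compares several alternatives, but the proposals themselves carry no information about which direction is promising.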

Hyperparameters of natural selection

Certain particulars of the machinery of life on Earth are notable for affecting the 'hyperparameters' of natural selection:

Since none of these can be said to be the primordial state, in some sense it can be said that natural selection has acted on its own hyperparameters. I think it may be appropriate to rather identify 'higher level' natural selections acting on lineages for the effectiveness of their adaptability, as determined by the hyperparameters of their respective natural selection.

What makes natural selection so bad?

Note that we can turn our question about gradient descent around: what makes natural selection so bad?[7] It uses far more resources than gradient descent but performs comparably.

The answer is the mirror image: the proposal-generating and evaluation heuristics of natural selection are about as rudimentary as they could possibly be, so in spite of massive parallelism and enormous computational resources, it takes a lot of iteration to get many capability innovations off the ground.

Plants are surprisingly deliberate

Plants as actors are often considered to lack deliberation, but it is more appropriate to consider many of their behaviours weakly deliberative, in a fashion comparable to, or perhaps surpassing, that of natural selection.

For example, climbing plants have numerous flailing, spreading, and otherwise (local) searching routines[8] to generate candidate route-proposals, which, composed with evaluation mechanisms (testing support, light availability, etc) and promotion routines (execute growth, latching, grasping, coiling - when evaluated as favourable) serve as every bit as much a heuristic local deliberative search mechanism as does natural selection.

These procedures are iterated as part of the overall growth control mechanism of the plant, to very successful effect (locating efficient shortcuts to regions of most light and other resources). Other plants, fungi and mostly-sedentary organisms exhibit similar behaviours which should be interpreted in similar algorithmic terms (perhaps especially commonly in roots and hyphae - fungal root-like structures - which are less obviously visible).

Another notable local-search deliberation executed by sedentary organisms concerns their dispersal of offspring and offshoots. Note that for such organisms, conditions as determined by physical location are paramount! It is typical of this lifestyle to invest in many candidate offspring and in a wide variety of dispersal mechanisms. Thus, just as the genome is replicated with variation, so is location, and it is therefore part of a kind of meta-genome eligible to be selected on[9]. As a consequence of this control process, lineages of entirely sedentary organisms can be seen to rapidly and reliably proceed toward physical niches to which they are fit. For some species with vegetative propagation it may be more plain that this process is carried out by a 'single actor'[10], but it results in the same kind of 'hill climbing' (or 'shaded fertile valley seeking' as the case may be), because it is essentially the same algorithm.

Why is plant deliberation 'more efficient' than natural selection?

Notably, these control operations, closely algorithmically related to iterated genetic natural selection, nevertheless operate much faster than genetic natural selection (they may take as little as days to manifest 'large' results). Why might this be?

The obvious way to 'go faster' in an iterated deliberation process is to have a shorter iteration cycle, performing more iterations per time. This can account for some of the difference but not all. The other obvious way to 'do better' is to evaluate more proposals per iteration. Notably, natural selection has high parallelism at its disposal and often evaluates many more proposals per step than do climbing plants.

It is also important to consider the dimension of the search space, which has important bearing on how tractable it is. The dimension is straightforward: genetic natural selection operates on a high-dimensional space, while plant climbing and locomotion operate on two or three dimensions, so it is simply easier for them to locate good solutions by generating local candidate proposals.

Notice, though, that lichen or mosses climbing trees over time can be seen to instantiate the same search, with similar parameters, in the same dimensions as larger more sophisticated climbing plants, but they achieve this much more slowly (but still fast by comparison to natural selection). So dimension and iteration speed do not appear to tell the whole story.

Two other important properties to pay attention to are suggested by the algorithmic breakdown described previously. First, relating to proposal, the fit of the sampling heuristics used in the deliberation. Second, relating to evaluation and promotion, the fidelity of these procedures to tracking the actual target.

The fit of the sampling heuristics is very important for tractability: genetic natural selection generates its proposals via something similar to symmetrical noise, leading to a lot of dead ends, wasted iterations, and even repeated or approximately-repeated computation. Moss-style migration up trees makes its proposals similarly noisily. In contrast, tendril growth of climbing plants is both decidedly biased (upward) and has an orientation, hence a 'memory', yielding a tendency not to double back on itself, at least locally (unless executing a coil, which is a different part of the algorithm). It is also able to use phototropism to further heuristically bias its search.[11]

These simple but highly-fit heuristic proposal-sampling biases, discovered by natural selection, the slow outer deliberator, may largely account for the evident efficiency advantage of climbing plants over less sophisticated passive climbers like moss and lichen.
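
To isolate the effect of proposal bias alone, here is a toy one-dimensional climber. It is a sketch under strong assumptions: evaluation and promotion are deliberately trivial (every proposed move is acted on), the 'ground' blocks downward moves, and the probabilities are invented for illustration.

```python
import random


def steps_to_climb(height: int, p_up: float, seed: int = 0, max_steps: int = 1_000_000) -> int:
    """Count iterations until a one-dimensional climber first reaches `height`.

    Each iteration proposes a unit move, upward with probability `p_up`,
    and acts on it directly; the ground at 0 blocks downward moves.
    """
    rng = random.Random(seed)
    position = 0
    for step in range(1, max_steps + 1):
        move = 1 if rng.random() < p_up else -1
        position = max(0, position + move)
        if position >= height:
            return step
    return max_steps  # gave up: height not reached within the budget


moss_like = steps_to_climb(height=20, p_up=0.5)     # symmetric proposals
tendril_like = steps_to_climb(height=20, p_up=0.8)  # upward-biased proposals
always_up = steps_to_climb(height=20, p_up=1.0)     # fully biased limit
```

No climber can arrive in fewer iterations than the height itself, and the fully biased limit achieves exactly that. On typical runs the symmetric sampler should need many more iterations than the biased one, though exact counts depend on the seed.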

Colonial organisms

Viewing insect colonies (for example, ants) as actors, we can identify all of the ingredients of deliberation. As with climbing plants, the pattern is structurally similar to natural selection, but once again with fitter, more efficient sampling heuristics.

Consider an ant colony foraging for food. The candidate proposals are the paths taken by individuals or small groups of workers. The evaluations begin with the guesses of those individuals (perhaps augmented by a message-passing algorithm) about the quality and quantity of any foods they come across. The properly promotive outcome involves laying down chemical signals and tactile interactions which encourage attractive or aversive behaviour by other workers (and adjust other behavioural parameters). As an iterated control procedure, this rapidly results in dense lines of workers ferrying the best and most plentiful food back to the nest. Note that no individual ant need be at all deliberative for this to work[12].
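
A mean-field sketch of this trail dynamic follows. No individual ants are simulated. The deposit rule (proportional to traffic times food quality) and the evaporation rate are assumptions chosen for simplicity, not measured ant behaviour.

```python
from typing import List


def forage_round(pheromone: List[float], quality: List[float], evaporation: float = 0.1) -> List[float]:
    """One round of a mean-field trail model over candidate paths."""
    total = sum(pheromone)
    shares = [p / total for p in pheromone]  # workers follow existing trails
    deposits = [s * q for s, q in zip(shares, quality)]  # better food, more marking
    return [(1.0 - evaporation) * p + d for p, d in zip(pheromone, deposits)]


def trail_shares(quality: List[float], rounds: int) -> List[float]:
    """Iterate the colony-level loop and report the share of traffic per path."""
    pheromone = [1.0] * len(quality)  # start with no preference between paths
    for _ in range(rounds):
        pheromone = forage_round(pheromone, quality)
    total = sum(pheromone)
    return [p / total for p in pheromone]


shares = trail_shares(quality=[1.0, 2.0], rounds=200)
```

In this model the richer path ends up carrying nearly all of the traffic, even though the only 'memory' is the pheromone vector and no component compares the two paths directly.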

This process, again similarly-structured and with similar dimension to the tendril-climbing algorithm of some plants, is able to proceed even faster. From an algorithmic point of view, this can be explained by the even fitter sampling heuristics: for example, individual ants have very strong senses of smell, which allows the foraging party's search to be very heavily biased toward promising locations. This bias is more powerful even than phototropism exhibited by plants, because smell is nonlocal. Another factor is the resource efficiency of the state management: plant climbing algorithms record state and promote candidates by actual organism growth, while ant colonies execute these parts of the algorithm by leaving much less resource-intensive pheromone trails.

Properly deliberative artificial systems

The most obvious properly deliberative artificial systems are training and search algorithms inspired by natural selection, including population based training (PBT) and neuroevolution for neural networks, all of which employ an iterated proper deliberator as a control process to locate and promote complex configurations which are found to score well against some target.

Training and search routines often also employ similar but non-iterated deliberation, for example static hyperparameter search and random seed search. (Sometimes these themselves end up iterated.)

Other times, the deployed artefact itself is the proper deliberator. Both value-based and policy-based RL controllers over discrete action spaces fall under the properly-deliberative Opt;Enact decomposition in a very basic way. One could make a similar case for a discrete classification model which opts for a single category at the last stage.

Cherry-picking from reactive generative models is an example of composing a Promote function (in this case human judgement!) with a reactive system to bootstrap a properly deliberative system. There are also automated examples of this type of composition and it is a very general formula for improving decision-making[13].
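
This composition can be sketched as 'best of n' sampling. The generator and judge below are hypothetical stand-ins (a Gaussian sampler and an identity score), used only to make the example runnable.

```python
import random
from typing import Callable


def best_of_n(
    generate: Callable[[random.Random], float],
    score: Callable[[float], float],
    n: int,
    seed: int = 0,
) -> float:
    """Wrap a reactive generator with a Promote step: sample n, keep the best."""
    rng = random.Random(seed)
    samples = [generate(rng) for _ in range(n)]  # n independent reactions
    return max(samples, key=score)  # promotion by an external judge


def toy_generator(rng: random.Random) -> float:
    return rng.gauss(0.0, 1.0)  # hypothetical stand-in for a generative model


def toy_judge(sample: float) -> float:
    return sample  # hypothetical stand-in for human or automated judgement


single = best_of_n(toy_generator, toy_judge, n=1)
picked = best_of_n(toy_generator, toy_judge, n=16)
```

Because both calls share a seed, the sixteen samples include the single one, so the promoted result can never score worse than it. The generator itself is unchanged; only the added Promote step makes the composite properly deliberative.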

Hard-coded vs learned deliberation

All of the abovementioned examples of deliberation in artificial systems have the deliberation structure (composing Propose, Promote and Act) coded in by the designers.

In the case of evolutionary algorithms and PBT, proposals take the form of some carefully chosen local 'mutation' heuristics and promotion is coded around some provided (perhaps approximate) evaluation or fitness function.

In contemporary non-hierarchical RL with discrete action spaces[14], the proposals consist identically of fixed hard-coded analogues of the atomic action space, the promotion consists of a (usually learned) Evaluate, and Opt;Enact are hand-coded.
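
A minimal sketch of that split, assuming a hypothetical four-state chain environment and a hand-written table `Q` standing in for learned action values:

```python
ACTIONS = ("left", "right")  # fixed, hard-coded proposals
GOAL = 3

# Hypothetical stand-in for a learned action-value table Q[state][action].
Q = {
    0: {"left": 0.5, "right": 0.8},
    1: {"left": 0.6, "right": 0.9},
    2: {"left": 0.7, "right": 1.0},
    3: {"left": 0.0, "right": 0.0},
}


def evaluate(state: int, action: str) -> float:
    return Q[state][action]  # the (usually learned) Evaluate


def opt(state: int) -> str:
    return max(ACTIONS, key=lambda a: evaluate(state, a))  # hand-coded Opt


def enact(state: int, action: str) -> int:
    delta = -1 if action == "left" else 1  # hand-coded Enact on the toy chain
    return min(GOAL, max(0, state + delta))


def rollout(state: int, steps: int) -> list:
    """Iterate Opt;Enact and record the states visited."""
    visited = [state]
    for _ in range(steps):
        state = enact(state, opt(state))
        visited.append(state)
    return visited


path = rollout(0, steps=3)
```

Only the numbers inside `Q` would be learned in practice; the set of proposals and the argmax-then-act structure are fixed by the designer.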

Risks from Learned Optimization as well as prior and subsequent discussions ask questions about when we might expect to find 'optimizers' arising without hard-coding them, and about what behaviours and capabilities we should expect from them. Since we have identified non-artificial examples of deliberation arising from the refinement or interactions between non-deliberative systems, it seems reasonable to look for analogies in our artificial systems. It remains unclear whether any contemporary artificial systems have learned deliberation.

Further work could consider the question of learned optimization from the deliberation lens, and subsequent posts in this sequence will discuss where deliberation comes from, as well as its manifestation at different levels of sophistication.

Parliaments

Perhaps representing the prototypical 'deliberation', some mechanisms of democratic parliaments illustrate deliberation algorithms at the level of multiple interacting humans.

Consider a vote on some motion, preceded by a debate. This is a proper, quasi-evaluative, optive deliberation.

Even if the motion is indivisible and singular, there are still at least two proposals: enact the motion, or do not enact the motion. In other cases there may be more proposals. These proposals are generated and surfaced by humans within the process by some means or other (which presumably involves its own deliberation!).

The debate, and the sentiments of the individuals participating, are a protracted evaluation and promotion mechanism over the proposals at hand.

The vote precipitates the choice, typically opting for some proposal, which is then enacted into some derived realisation by one or other organ of the democratic system. (Other possible outcomes to debates are state updates in the form of minds changed or documents written, and re-iterations with new or similar proposals, which is a more properly promotive than optive result.)

Parliaments rarely permanently dissolve themselves (though their actions can lead to their dissolution), so should be seen as iterated deliberators.

Often the parameters of a particular parliamentary session are set by some invoking organ, body, or individual, making a complex of deliberators which fit together in a larger democratic system.

As in previous cases, the power of a parliament lies partly in producing 'good' proposals, partly in recognising and promoting good proposals, and partly in the suitability of its delegate organs to appropriately enact its proposals. In the iterated sense, behaviours which precipitate improvements to these capacities are instrumental.

A note on these algorithmic assignments

One may rightly opine that an assignment of a particular algorithmic abstraction to a system, hiding the full and intricate detail of every moving piece, must involve some ultimately arbitrary or subjective choices. Perhaps this undermines the preceding discussion.

Indeed, no abstraction can work well in all circumstances, but abstractions are nevertheless inescapably necessary tools to make reasoning tractable in a world with bounded computational resources. If an ant colony were transported to the surface of the sun, there would rapidly be no sense in which it continued to carry out the previously-identified food-searching algorithm. In fact, the abstraction 'ant' would cease to be useful in the same instant. But if the same colony were transported to many locations on the surface of the Earth, or other relevantly-similar hypothetical places, the abstract algorithm identified above would continue to usefully predict outcomes, including counterfactuals ('what if I put some food here?', 'what if I move some ants there?' etc.), at least for some time, depending on the fitness of the colony's algorithm's abstractions and heuristics to the new environment.

'Ant' and 'ant colony' cease to be useful descriptions in the context of the surface of the sun, but remain defensible summary descriptions on many parts of the surface of the Earth. On the left a photograph of a magnification of the author's garden. On the right an artist's impression of the same experiment on the sun. (The author was regrettably unable to attend for the latter experiment.)

Conclusion

We've explored various deliberative systems (both proper and reactive), finding them at many levels of organisation, from cells to democracies. In several cases we uncovered novel insights, such as commonalities between natural selection, climbing plants, and ant colonies, and the deliberation abstraction further enabled us to point to relevant discrepancies determining the 'strength' of some of these systems. Suitability or fitness of Propose appears at least as important as that of Promote for deliberation strength in many cases, exemplified by the comparison of natural selection with gradient descent.

Various deliberative systems comprise interactions between multiple deliberators. These can be seen emerging from the interaction between relatively homogeneous subdeliberators (as in colonies and markets), or in deliberators giving rise to, or conditioning, other heterogeneous delegate deliberators' behaviour (as in cellular and multicellular life and bureaucracies).

Later posts will go into more depth about where deliberations come from, and also examine some deliberators which are more sophisticated, either in how they arrange their delegation or how they perform the key components of deliberation. This includes some artificial systems as well as some of the more cognitively sophisticated and powerful behaviours of animals and humans.

Thanks to those who probed in conversation, and thanks to SERI for sponsoring the research time and making those conversations possible.

In some sense this resembles a training, validation, and test data split, and in a similar way it gives me some confidence that the concepts here have some explanatory power. The real test though is other people attempting to use the ideas or providing criticism. ↩︎

I am not a chemist or physicist but based on my rough understanding of molecular thermodynamics and quantum mechanics, this might not quite be true, and it may be that there are in fact nigh-infinitesimal deliberations happening which 'add up to' what looks like smooth reactions, in a similar way to the right kind of infinitesimal natural selection adding up to gradient descent. ↩︎

Bacterial 'twitching' may be a counterexample which involves basic deliberation; compare the discussion of plant tendrils below. ↩︎

The iteration here actually comes from an outer weakly properly deliberative evaluative process which always proposes 'step again' and 'stop' and evaluates these against some measure (e.g. validation score, compute budget, some combination ...). Usually 'step again' is promoted by default in a short-circuit loop to save compute. This outer deliberation is a controller which delegates most of its action to the single-step gradient descent reaction. ↩︎

The heuristic is predicated on the extrinsic (human-derived) abstraction, empirically often applicable, that repeatedly moving, perhaps noisily, straight in the direction of local steepest descent can often effectively find non-straight paths to globally-low places. ↩︎

Note that the 'on average' claim takes a stochastic ex ante model of fitness as a latent property which is stochastically realised in numbers of progeny. Natural selection can be alternatively understood as just the ex post tautology that 'things which in fact propagate in fact propagate'. ↩︎

It feels wrong to say this; I am in fact in awe of natural selection. Perhaps it is a sign of my own bias for inappropriate anthropomorphism that I feel the need to apologise for saying harsh things about an emotionless natural force. ↩︎

Observe two fascinating short time-lapse montages of these literal 'tree searches' from Sir David Attenborough and the BBC at

- Life: Plants: Climbing plants (https://www.bbc.co.uk/programmes/p005fptt)

- The Private Life of Plants: Climbing Plants (https://www.bbc.co.uk/programmes/p00lx6cl)

↩︎

Note that the Price equation does not care whether a characteristic is described by genes or some other information-carrying attribute. ↩︎

The 'humongous fungus', possibly simultaneously the largest-spanning and most massive 'single organism' on Earth, falls in this category ↩︎

It is less clear whether climbing plants with tendril action have improved evaluation and promotion heuristics vs moss-style migration, but certainly in both types these heuristics track the target less noisily than does realised-fitness in natural selection. ↩︎

That is not to say that an individual partaking in this collective algorithm cannot be deliberative; a tribe of individually-deliberative humans could also execute this type of procedure. The deliberative algorithm in this case just happens to be located outside of any single organism. ↩︎

In general, a good enough promoter can expect to get a benefit of a few standard deviations of quality from a proposer this way, though there are rapidly diminishing returns on extra compute spent. ↩︎

For RL or other sequential control with continuous action spaces, either the atomic-action-analogues are discretised or sampled from a predefined distribution, in which case the same analysis applies, or the action analogues are sampled directly after a learned policy distribution, in which case we have a learned reaction. Hierarchical cases will be discussed in a later post. ↩︎