Bernie Sanders has released a video on x-risk featuring a discussion with Eliezer, Nate, Daniel Kokotajlo, and Jeffrey Ladish. An excerpt from it appears to be blowing up on Twitter.

EDIT: re 'blowing up': initial view velocity was really high (~200k/hour), but steeply dropped off and it doesn't seem to have really broken containment

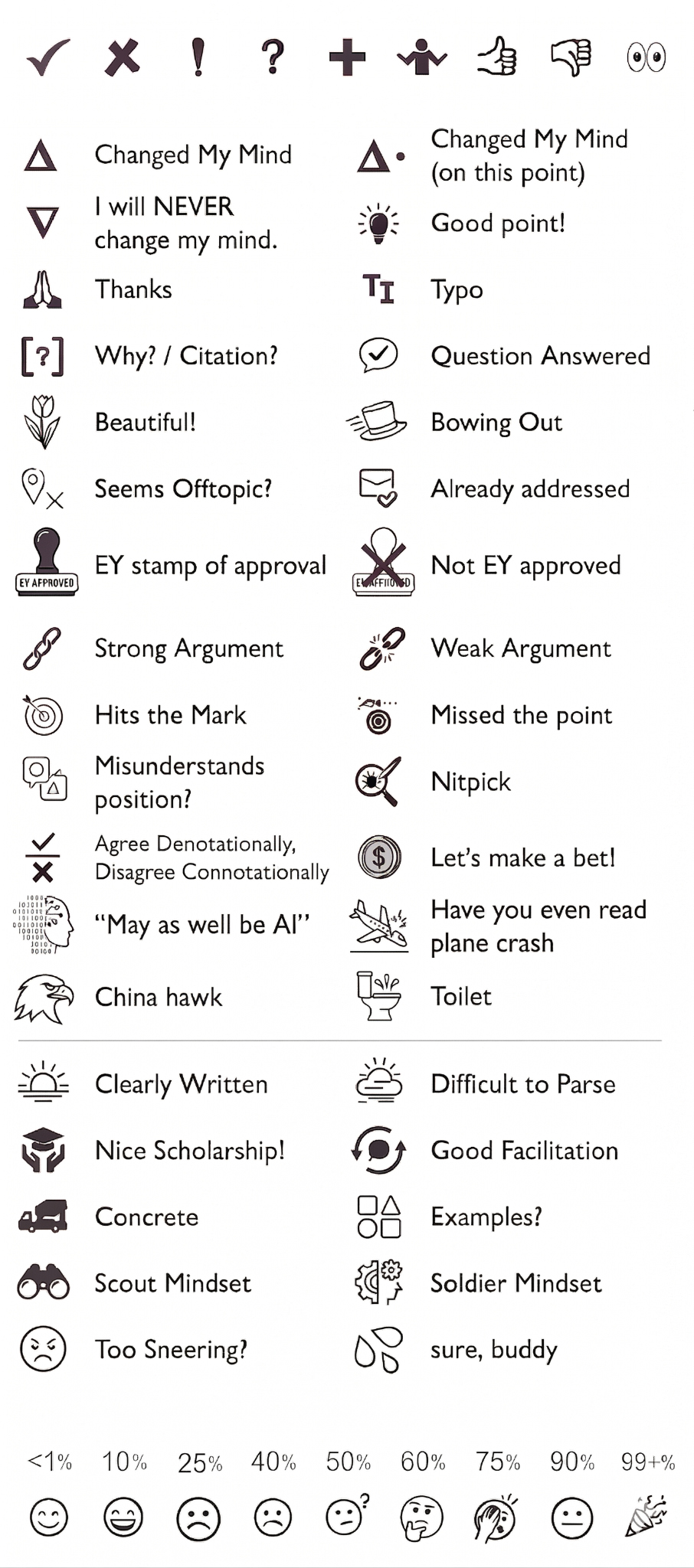

New reacts available only to paid users of LessWrong Premium (not you freeloaders) facilitate frictionless, borderline-telepathic communication.

‘I will NEVER change my mind’: Use this react to assert that you’re content with exactly how wrong you are (which is not at all), and that the case is permanently closed on this matter, so far as you’re concerned.

‘EY Stamp of Approval’: Use this react to assert that, on your personal authority, Eliezer Yudkowsky agrees with the contents of the comment, rendering it beyond reproach.

‘NOT EY Approved’: Use this react to assert that, on your personal authority, Eliezer Yudkowsky disagrees with the contents of the comment, rendering it immensely reproachful. Users who accrue too many ‘NOT EY Approved’ reacts will have their accounts suspended (although actual thresholds here have yet to be set).

‘May as well be AI’: Use this react when you’re indifferent to whether or not a statement was generated by AI because shit, it may as well be. You’re ignoring it either way.

‘Have you even read plane crash?’: Use this react when your interlocutor’s unfamiliarity with prior literature is clearly on display.

‘China Hawk’: Use this react to assert American ...

Disappointed that they left out these—I really don't like choosing between being technically correct and politically correct. Maybe they can go in a LessWrong Max tier.

Pete Hegseth just declared Anthropic a supply chain risk.

Under the most expansive naive interpretation of what this might mean, it seems plausible that this bottlenecks their compute access (e.g., through Amazon, who has government contracts).

Does anyone know more here / has thought more about this? I'd be pretty surprised if the most expansive naive interpretation held, but it's also probably not the most narrow one (e.g., can't work with the defense industry, or on government-specific contracts in larger companies).

That so many companies (the majority of Fortune 500s, for instance) contract with the government seems to make this dramatically impactful (e.g. some-double-digit-percentage of B2B revenue; but is it 10 percent or 90? How much does it impact other aspects of their operation? I just don't know).

Edit: An important detail here, not obvious from the initial post, is that 'Hegseth makes a tweet' is kind of a 'step 0' to the actual process unfolding. We actually don't know if he's really placed the order, if the order will be followed through on, and with what level of diligence/brutality (it's this last question that my original post was asking, but in some important sense 'we're just not there yet').

Not to be overly political (although how do you avoid it with a topic like this?) but I think it's just another part of "MAGA maoism" as it's been called. The idea of the Republican party as a small government free market pro liberty group died with Trump, who painted over that whole philosophy with his focus on culture war grievances, state control, and "owning the libs".

Matt Yglesias had a good piece two years ago about how the tariff regime essentially makes a mini central planning department and we've seen Trump expressly use it as such, trying to bend the economy to attack companies and nations over personal grievances.

And Scott Lincicome of the Cato Institute has covered this new brand of "state corporatism," as he calls it, quite well IMO, including the nationalization of various companies and the market distortions it creates.

"First they came" is a famous poem about the oppression of people, but it's also a good general warning for all sorts of behavior. What someone will happily do to another is what they can happily do to you if you ever cross them. Whether that be the chick who cheated on her husband for you cheating on your new relationship, stuffing people into camp...

MIRI is potentially interested in supporting reading groups for If Anyone Builds It, Everyone Dies by offering study questions, facilitation, and / or copies of the book, at our discretion. If you lead a pre-existing reading group of some kind (or meetup group that occasionally reads things together), please fill out this form.

The deadline to submit is September 22, but sooner is much better.

In two days: MIRI's hosting a virtual event for those who preorder Nate and Eliezer’s forthcoming book If Anyone Builds It, Everyone Dies. Sunday, August 10 at noon PT. It’s a chat between Nate Soares and Tim Urban (Wait But Why) followed by a Q&A.

This will be followed by another event in September, a full Q+A w/ Nate + Eliezer.

Meanings of political identities shift dramatically based on context, and you can't manually confirm the beliefs of everyone present at your 'gathering of people with x political identity'. To the extent that your political identity is based on Real Beliefs with Real Consequences, you should expect not to have much in common with many other people who declare the same identity when you move to a new place (or corner of the internet).

Example: In rural Southeast Texas, Confederate flags are a common sight, and my geometry teacher once told us about a cross burning he witnessed (which a few students murmured we really ought to bring back).

The majority of people genuinely hold at least one belief that, to many of my coastal-elite-descended friends, would seem comical. E.g., women should never have jobs and should rarely speak (especially in public), men with long hair are wanton or gay or trans or both, beating children (not like 'spanking' but like 'anything short of broken bones') is not only fine but your duty as a father, weed overdose not only can but will definitely kill you, megadoses of zinc can cure cancer, the covid vaccine is the mark of the beast from the Book of Revelation...

‘Major AI labs can only justify their high valuations by developing very powerful, very general AI systems’ is a claim I sometimes hear. That is, many seem to expect ‘if no AGI in n years, then the bubble pops’.

However, I think just revolutionizing tech is likely enough to justify current valuation levels (and maybe as much as 4x current valuations; maybe even more?), given the market caps of other large tech companies (even if we exclude NVIDIA, which we may want to do because their current market cap is more heavily tied to the AI boom than others). After all, these are still ‘only’ 12-figure valuations in a sector where many of the major players have broken a trillion. A 12-figure valuation is consistent with ‘future major player of the kind that already exists’ and not ‘potential god emperor of the solar system’.

Using these big tech companies as a reference point and assuming very limited further capabilities progress (eg no TAI, AGI, [your favorite way to talk about very general, ~human level systems]), the major LLM companies still don’t obviously look overvalued to me. The tech pie by itself is big enough (and the current state of the tech looks, to me, sufficient to massiv...

Just said to someone that I would by default read anything they wrote to me in a positive light, and that if they wanted to be mean to me in text, they should put '(mean)' after the statement.

Then realized that, if I had to put '(mean)' after everything I wrote on the internet that I wanted to read as slightly abrupt or confrontational, I would definitely be abrupt and confrontational on the internet less.

I am somewhat more confrontational than I endorse, and having to actually say to myself and the world that I was intending to be harsh, rather than simply demonstrating it, would lead to me being harsh somewhat less often.

I do basically know when I'm being confrontational in public, and am Doing It On Purpose, and also expect others to know it, and almost never say "I wasn't being confrontational" when I was being confrontational, and somehow having to signal it more overtly than it is already (that is to say, very) signaled would encourage me to tone it down.

In person, I even sometimes say 'I am being difficult in a way that I endorse/feels correct/feels important' and just go on being difficult, and considering that I'm about to do that in advance doesn't discourage me from bein...

Please stop appealing to compute overhang. In a world where AI progress has wildly accelerated chip manufacture, this already-tenuous argument has become ~indefensible.

A group of researchers has released the Longitudinal Expert AI Panel, soliciting and collating forecasts regarding AI progress, adoption, and regulation from a large pool of both experts and non-experts.

It’s plausible to me that almost any public discussion of AI or AI safety that is not centrally about LLM consciousness should clarify this early and often.

Low-context audiences are really hung up on the consciousness topic, and are often reading entirely unrelated material as though it were trying to make a claim about consciousness, then generalizing to a judgement about the speaker that inoculates them against partitioning consciousness and capabilities.

Clarifying that you don’t mean to step in the consciousness discussion upfront may be a way to reduce...

Reading so many reviews/responses to IABIED, I wish more people had registered how they expected to feel about the book, or how they think a book on x-risk ought to look, prior to the book's release.

Finalizing any Real Actual Object requires making tradeoffs. I think it's pretty easy to critique the book on a level of abstraction that respects what it is Trying To Be in only the broadest possible terms, rather than acknowledging various sub-goals (e.g. providing an updated version of Nate + Eliezer's now very old 'canonical' arguments), modulations o...

Haven’t found the new ‘following’ page especially useful since the change (whereas previously it was my default). Not sure why that is exactly, but one thing I think would help is if it consolidated threads with multiple posts from followed users, instead of duplicating the same thread many times within the feed. If two or more people I follow have a back and forth, it eats all the other updates (and I usually read the whole back and forth by clicking a link from the first child I see, so then my feed is majority stuff I’ve already seen).

The CCRU is under-discussed in this sphere as a direct influence on the thoughts and actions of key players in AI and beyond.

Land started a creative collective, alongside Mark Fisher, in the 90s. I learned this by accident, and it seems like a corner of intellectual history that’s at least as influential as, e.g., the extropians.

If anyone knows of explicit connections between the CCRU and contemporary phenomena (beyond Land/Fisher’s immediate influence via their later work), I’d love to hear about them.

Sometimes people give a short description of their work. Sometimes they give a long one.

I have an imaginary friend whose work I’m excited about. I recently overheard them introduce and motivate their work to a crowd of young safety researchers, and I took notes. Here’s my best reconstruction of what they’re up to:

"I work on median-case out-with-a-whimper scenarios and automation forecasting, with special attention to the possibility of mass-disempowerment due to wealth disparity and/or centralization of labor power. I identify existing legal and technological...

Thinking about qualia, trying to avoid getting trapped in the hard problem of consciousness along the way.

Tempted to model qualia as a region with the capacity to populate itself with coarse heuristics for difficult-to-compute features of nodes in a search process, which happens to ship with a bunch of computational inconveniences (that are most of what we mean to refer to when we reference qualia).

This aids in generality, but trades off against locally optimal processes, as a kind of 'tax' on all cognition.

This is a literal shower thought and I've read no...

[errant thought pointing a direction, low-confidence musing, likely retreading old ground]

There’s a disagreement that crops up in conversations about changing people’s minds. Sides are roughly:

- You should explain things by walking someone through your entire thought process, as it actually unfolded. Changing minds is best done by offering an account of how your own mind was changed.

- You should explain things by back-chaining the most viable (valid) argument, from your conclusions, with respect to your specific audience.

This first strategy invites framing you...

Do you think of rationality as a similar sort of 'object' or 'discipline' to philosophy? If not, what kind of object do you think of it as being?

(I am no great advocate for academic philosophy; I left that shit way behind ~a decade ago after going quite a ways down the path. I just want to better understand whether folks consider Rationality as a replacement for philosophy, a replacement for some of philosophy, a subset of philosophical commitments, a series of cognitive practices, or something else entirely. I can model it, internally, as aiming to be any...

Sometimes people express concern that AIs may replace them in the workplace. This is (mostly) silly. Not that it won't happen, but you've gotta break some eggs to make an industrial revolution. This is just 'how economies work' (whether or not they can / should work this way is a different question altogether).

The intrinsic fear of joblessness-resulting-from-automation is tantamount to worrying that curing infectious diseases would put gravediggers out of business.

There is a special case here, though: double digit unemployment (and youth unemployment...

I (and maybe you) have historically underrated the density of people with religious backgrounds in secular hubs. Most of these people don't 'think differently', in a structural sense, from their forebears; they just don't believe in that God anymore.

The hallmark here is a kind of naive enlightenment approach that ignores ~200 years of intellectual history (and a great many thinkers from before that period, including canonical philosophers they might claim to love/respect/understand). This type of thing.

They're no less tribal or dogmatic, or more crit...

I don't think I really understood what it meant for establishment politics to be divisive until this past election.

As good as it feels to sit on the left and say "they want you to hate immigrants" or "they want you to hate queer people", it seems similarly (although probably not equally?) true that the center left also has people they want you to hate (the religious, the rich, the slightly-more-successful-than-you, the ideologically-impure-who-once-said-a-bad-thing-on-the-internet).

But there's also a deeper, structural sense in which it's true.

Working on A...

Does anyone have examples of concrete actions taken by Open Phil that point toward their AIS plan being anything other than ‘help Anthropic win the race’?

Sam Kriss (author of the ‘Laurentius Clung’ piece) has posted a critique. I don’t think it’s good, but I do think it’s representative of a view that I often encounter in the wild but haven’t really seen written up.

https://samkriss.substack.com/p/against-truth