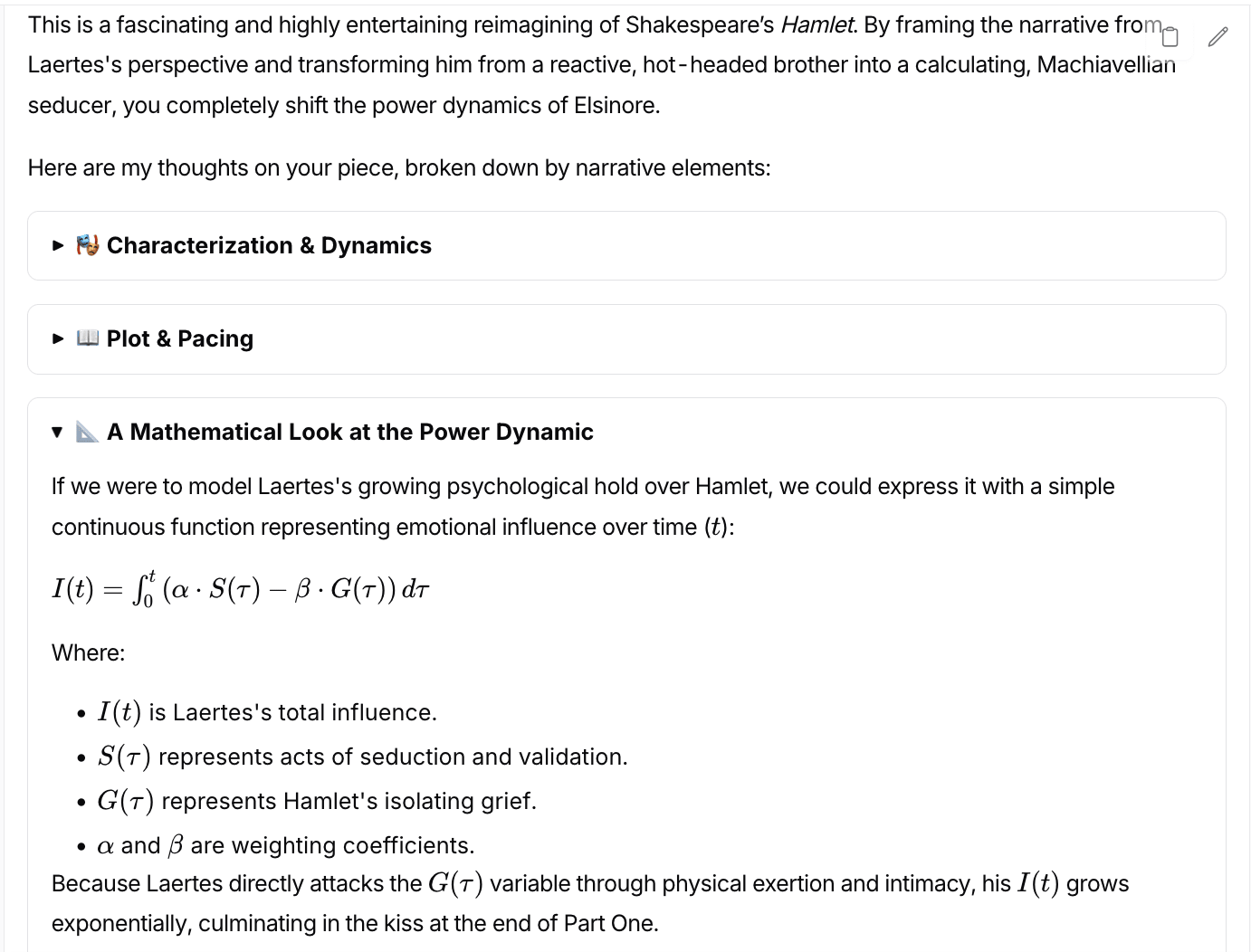

Has anyone else noticed Gemini 3.1 Pro Preview using math excessively? I've asked quite a few models to comment on my Hamlet pastiche, and I think 3.1 Pro Preview is the only one to use a decent amount of math in its analysis, and it does so consistently. While not entirely inappropriate, it seems like a signal that the RL is getting to the model...

(Reposting because it seems kinda important for the "RL doom" hypothesis!)

Edit: Here's an example screenshot:

Haven't used it, but relatedly: at some point, I added a request to my ChatGPT system prompt for it to use probabilities and formal notation where it makes sense. It started using probabilities and formal notation where it made sense. But then, after some update (GPT-5? maybe sometime later), it started using them excessively. E.g., I would ask a relatively mundane question, and it would try decomposing it into something like "define

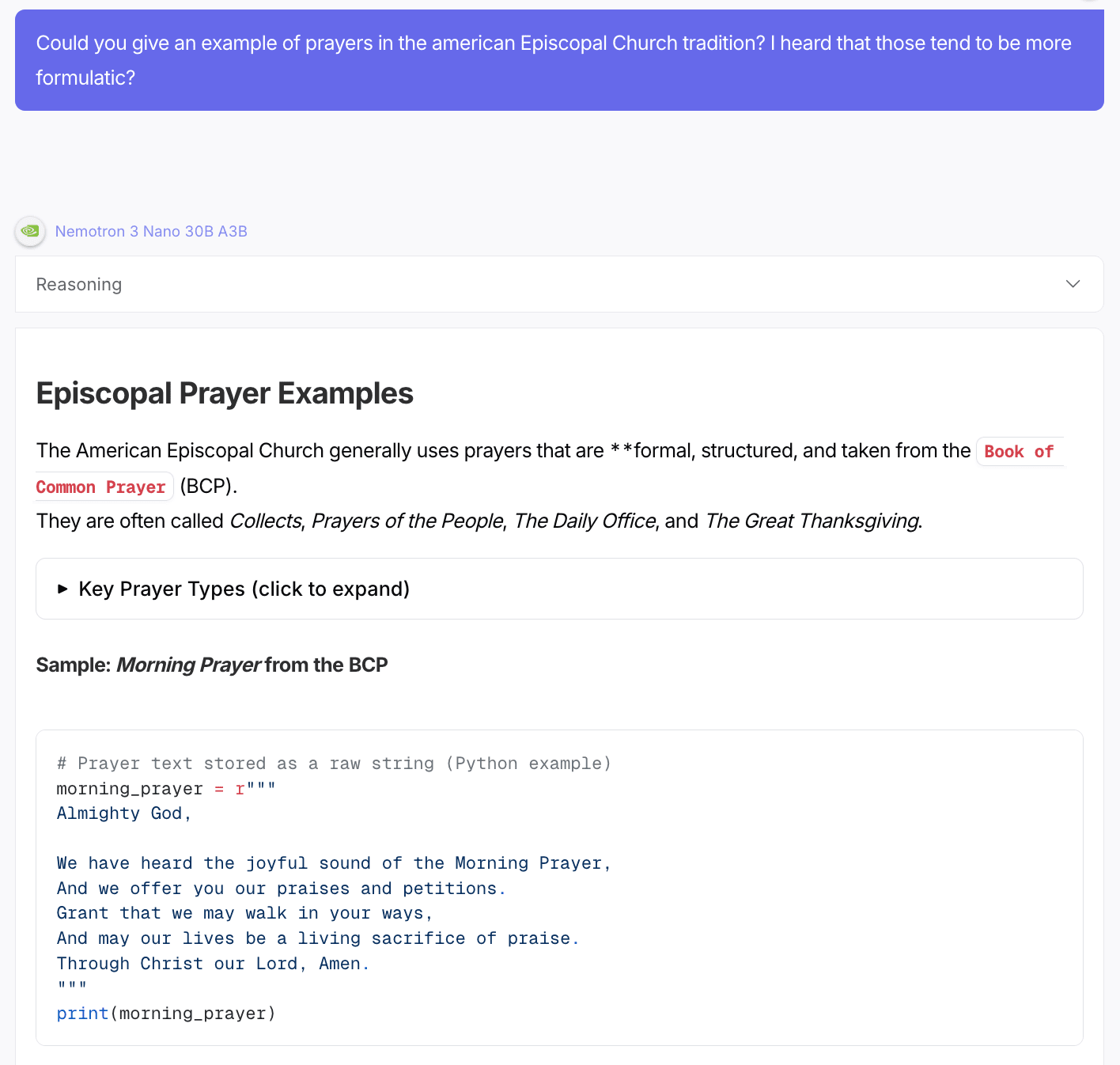

I don't think of this as relevant to any sort of doom, really. I think of "output math" as a habit the model has picked up, since it did a ton of this during training.

See, e.g., Nemotron 3 Nano here using code when asked a religious question on OpenRouter:
