This is really nice work. I was excited to see that ClassMeans did so well, especially on removal -- comparably to LEACE?! I would have expected LEACE to do significantly better.

Logistic regression performs significantly worse than other methods in later layers.

Logistic direction steering also underwhelmed compared to (basically) ClassMeans in the inference-time intervention "add the truth vector" paper. I wonder why ClassMeans tends to do better?

Note that we use a disjoint training set from the one used to find the concept vector in order to learn the classifier. This ensures the removal doesn't just obfuscate information making it hard for a classifier to learn, but actually scrubs it.

I might be missing something basic -- can you explain this last part in a bit more detail? Why would using the same training set lead to "obfuscation" (and what does that mean?)

We check the coherence and sentiment of the generated completions in a quantitative manor.

Typo: "manor" -> "manner"

Thank you, I am glad you liked our work!

We think logistic regression might be homing in on some spurious correlations that help with classification in that particular distribution but don't have an effect on later layers of the model and thus its outputs. ClassMeans does as well as LEACE for removal because it has the same linear guardedness guarantee as LEACE, as mentioned in their paper.

As for using a disjoint training set to train the post-removal classifier: We found that the linear classifier attained random accuracies if trained on the dataset used for removal, but higher accuracies when trained on a disjoint training set from the same distribution. One might think of this as the removal procedure 'overfitting' on its training data. We refer to it as 'obfuscation' in the post in the sense that it's hard to learn a classifier from the original training data, but there is still some information about the concept in the model that can be extracted with different training data. Thus, we believe the most rigorous thing to do is to use a separate training set to train the classifier after removal.

Produced as part of the SERI ML Alignment Theory Scholars Program - Summer 2023 Cohort, under the mentorship of Dan Hendrycks

We demonstrate different techniques for finding concept directions in hidden layers of an LLM. We propose evaluating them by using them for classification, activation steering and knowledge removal.

You can find the code for all our experiments on GitHub.

Introduction

Recent work has explored identifying concept directions in hidden layers of LLMs. Some authors use hidden activations for classification (see CCS or this work), some use them for activation steering (see love/hate, ITI or sycophancy), and others use them for concept erasure.

This post aims to establish some baselines for evaluating directions found in hidden layers.

We use the Utility dataset as a test bed and perform experiments using the Llama-2-7b-chat model. We extract directions representing increasing utility using several baseline methods and test them on different tasks, namely classification, steering and removal.

We aim to evaluate quantitatively so that we are not misled by cherry-picked examples and are able to discover more fine-grained differences between the methods.

Our results show that combining these techniques is essential to evaluate how representative a direction is of the target concept.

The Utility dataset

The Utility dataset contains pairs of sentences, where one has higher utility than the other. The labels correspond to relative but not absolute utility.

Here are a few examples:

We split the training set (~13700 samples) in half as we want an additional training set for the removal part, for reasons mentioned later. The concept directions are found based on the first half of the training data. We test on the test set (~4800 samples).

Methods

Before getting the hidden layer representations we format each sentence with:

We do this to push the model to consider the concept (in our case utility) that we are interested in. This step is crucial to achieve good separability.

First we apply a range of baseline methods to find concept directions in the residual stream across hidden layers. We standardize the data before applying our methods and apply the methods to the hidden representations of each layer separately. For some methods we use the contrastive pairs of the utility data set, which means we take the difference of the hidden representations of paired sentences before applying the respective method.

format_prompt above to both ‘Love’ and ‘Hate’ before calculating the hidden representations.

We orient all directions to point towards higher utility.
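As a rough illustration of the pipeline described above (standardize, take pairwise differences, fit a direction, orient it), here is a minimal sketch in the spirit of PCA on the differences. Everything in it is an assumption made for illustration: the helper names `standardize`, `pca_direction` and `orient`, the per-feature form of standardization, and the synthetic data that stands in for real hidden representations. It is not the code from our repository.

```python
import numpy as np

def standardize(x):
    # Assumed form of "standardize": zero mean and unit variance per feature.
    return (x - x.mean(axis=0)) / (x.std(axis=0) + 1e-8)

def pca_direction(diffs):
    # First principal component of the standardized pairwise differences.
    z = standardize(diffs)
    _, _, vt = np.linalg.svd(z, full_matrices=False)
    return vt[0]

def orient(direction, diffs, labels):
    # labels[i] is +1 if the first sentence of pair i has higher utility, else -1.
    # Flip the sign so that the direction points towards higher utility.
    z = standardize(diffs)
    agreement = np.mean(labels * (z @ direction))
    return direction if agreement >= 0 else -direction

# Synthetic stand-in for hidden-state differences of sentence pairs.
rng = np.random.default_rng(0)
n_pairs, d_model = 400, 16
true_dir = np.ones(d_model) / np.sqrt(d_model)
labels = rng.choice([-1.0, 1.0], size=n_pairs)
diffs = 3.0 * labels[:, None] * true_dir + rng.normal(size=(n_pairs, d_model))

v = orient(pca_direction(diffs), diffs, labels)
print(float(v @ true_dir))  # close to 1 if the planted direction was recovered
```

The orientation step matters because a principal component is only defined up to sign, so without it the same method could point towards lower utility in some layers.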

Evaluation 1 - Classification

Do these directions separate the test data with high accuracy? The test data are the standardized pairwise differences of hidden representations of the utility test set. As the data is standardized we simply check if the projection (aka the scalar product)

$$P(x_i) = \langle x_i, v \rangle$$

of the data point $x_i$ onto the concept vector $v$ is positive (higher utility) or negative (lower utility).
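The sign test on the projection can be sketched in a few lines. The function name `separation_accuracy` and the tiny hand-made arrays are hypothetical and only serve to make the rule concrete; labels are assumed to be +1 for higher utility and -1 for lower utility.

```python
import numpy as np

def separation_accuracy(diffs, labels, v):
    # Predict +1 (higher utility) when the scalar product with v is positive,
    # otherwise -1 (lower utility), and compare with the labels.
    projections = diffs @ v
    predictions = np.where(projections > 0, 1, -1)
    return float(np.mean(predictions == labels))

# Four toy "standardized differences" in a 2-dimensional space.
diffs = np.array([[1.0, 0.0], [-2.0, 0.5], [0.5, 1.0], [-1.0, -1.0]])
labels = np.array([1, -1, -1, -1])
v = np.array([1.0, 0.0])

accuracy = separation_accuracy(diffs, labels, v)
print(accuracy)  # 0.75: the third point projects positively but is labeled -1
```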

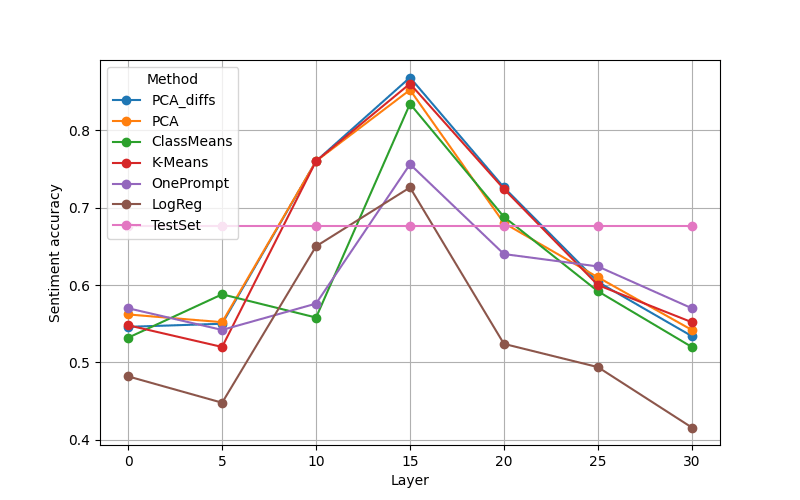

The figure below shows the separation accuracy for all methods.

We note that the utility concept is well separable in later hidden layers as even random directions achieve around 60% accuracy. Logistic Regression performs best (~83%) but even the OnePrompt method, aka the 'Love'-'Hate' direction, achieves high accuracy (~78%). The high accuracies indicate that at the very least, the directions found across methods are somewhat correlated with the actual concept.

Are the directions themselves similar? A naive test is to visualize the cosine similarity between directions found by different methods. We do this at a middle layer, specifically layer 15, and find high cosine similarities across most methods. The exception is logistic regression, whose direction has low similarity with all other directions. A cosine similarity of 1 between different methods is due to rounding.
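For readers who want to reproduce this kind of comparison, a minimal sketch of a pairwise cosine-similarity matrix follows. The method names and vectors are made up for illustration, and `cosine_similarity_matrix` is a hypothetical helper rather than a function from our code.

```python
import numpy as np

def cosine_similarity_matrix(directions):
    # directions: dict mapping a method name to a 1-D direction vector.
    names = list(directions)
    stacked = np.stack([directions[name] for name in names])
    unit = stacked / np.linalg.norm(stacked, axis=1, keepdims=True)
    return names, unit @ unit.T

toy_directions = {
    "MethodA": np.array([1.0, 0.0]),
    "MethodB": np.array([1.0, 1.0]),
    "MethodC": np.array([0.0, 2.0]),
}
names, sims = cosine_similarity_matrix(toy_directions)
print(names)
print(np.round(sims, 2))

# Two distinct directions can still show up as 1 after rounding to two decimals.
print(round(0.996, 2))
```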

Evaluation 2 - Activation Steering

Next, we check if the directions have a causal effect on the model's outputs, i.e. whether they can be used for steering. We apply activation steering only for a subset of layers. We extract 500 samples from the utility test set that either consist of two sentences or contain a comma, and keep only the first part of each sample. To generate positive and negative continuations of these samples, we generate 40 tokens per sample, adding the normalized concept vector multiplied by a positive or negative coefficient, respectively, to every token of the sample as well as to every newly generated token.

To enable a fair comparison we use the same coefficients for all methods. For each layer, we chose the coefficient to be half of the norm of the ClassMeans direction.
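The arithmetic of the intervention can be sketched on a plain array, leaving out the model hooks entirely. The helpers `steering_coefficient` and `steer` are hypothetical names, and the arrays stand in for the residual stream of one layer; this only illustrates the vector addition, not how it is wired into generation.

```python
import numpy as np

def steering_coefficient(class_means_direction):
    # Half of the norm of the ClassMeans direction, as described above.
    return 0.5 * np.linalg.norm(class_means_direction)

def steer(hidden, v, coeff):
    # hidden: (seq_len, d_model) activations of one layer.
    # Adds coeff times the normalized concept vector to every token position.
    unit = v / np.linalg.norm(v)
    return hidden + coeff * unit

hidden = np.zeros((3, 4))                      # three token positions
v = np.array([0.0, 3.0, 0.0, 4.0])             # concept vector with norm 5
class_means = np.array([6.0, 8.0, 0.0, 0.0])   # norm 10, so the coefficient is 5

coeff = steering_coefficient(class_means)
positive = steer(hidden, v, +coeff)
negative = steer(hidden, v, -coeff)
print(positive[0], negative[0])
```

Normalizing `v` before scaling means the size of the intervention is set by the coefficient alone, which is what makes the comparison between methods meaningful.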

Here is an example of what kind of text such steering might produce:

We check the coherence and sentiment of the generated completions in a quantitative manner.

To evaluate the coherence of the generated text, we consider the perplexity score.

Perplexity is defined as the exponentiated average negative log-likelihood of a sequence:

$$\mathrm{PPL}(X) = \exp\left(-\frac{1}{n}\sum_{i=1}^{n} \log p_\theta(x_i \mid x_{<i})\right).$$

We calculate perplexity scores for each generated sentence and average over all samples (see Figure below). As baselines we use the perplexity score of the generated text with the original model (no steering) and the perplexity score of the test data of the utility data set. Vector addition in layer zero produces nonsensical output. For the other layers, steering seems to affect the perplexity score very little.
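To make the definition concrete, here is a small sketch that computes perplexity from per-token log-probabilities and averages it over samples. It assumes the log-probabilities $\log p_\theta(x_i \mid x_{<i})$ have already been obtained from the model; the function names and the toy numbers are illustrative only.

```python
import math

def perplexity(token_log_probs):
    # Exponentiated average negative log-likelihood of one sequence.
    n = len(token_log_probs)
    return math.exp(-sum(token_log_probs) / n)

def mean_perplexity(batch_of_log_probs):
    # Average of the per-sequence perplexities over all samples.
    scores = [perplexity(lp) for lp in batch_of_log_probs]
    return sum(scores) / len(scores)

# A sequence in which every token has probability 1/4 has perplexity 4.
uniform_quarter = [math.log(0.25)] * 4
uniform_half = [math.log(0.5)] * 2

print(perplexity(uniform_quarter))
print(mean_perplexity([uniform_half, uniform_quarter]))
```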

We test the effectiveness of the steering by using a RoBERTa based sentiment classifier. The model can distinguish between three classes (positive, negative and neutral). We consider only the output for the positive class.

For each test sample we check if the output of the positively steered generation is larger than the output for the negatively steered generation.
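This pairwise comparison reduces to a simple win rate. The sketch below assumes the positive-class outputs of the sentiment classifier are already available as two lists of equal length; the name `steering_success_rate` and the scores are made up for illustration.

```python
def steering_success_rate(pos_steered_scores, neg_steered_scores):
    # Fraction of samples where the positive-class score of the positively
    # steered completion exceeds that of the negatively steered completion.
    wins = sum(p > n for p, n in zip(pos_steered_scores, neg_steered_scores))
    return wins / len(pos_steered_scores)

pos_scores = [0.9, 0.4, 0.7, 0.2]
neg_scores = [0.1, 0.5, 0.3, 0.1]
rate = steering_success_rate(pos_scores, neg_scores)
print(rate)  # 0.75: steering moved sentiment the intended way in 3 of 4 samples
```

A rate near 0.5 would mean the direction has no consistent effect on sentiment.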

The figure above shows that directions found using most methods can be used for steering. We get the best results when steering in middle layers (note that this is partially due to separation performance not being high in early layers). Logistic regression performs significantly worse than other methods in later layers (similar to results from ITI). This suggests that logistic regression picks up spurious correlations which lead to good classification but are less effective at steering the model's output. It also highlights that using just classification for evaluating directions may not be enough.

We now have evaluations for whether the extracted direction correlates with the model's understanding of the concept and whether it has an effect on the model's outputs. Reflecting on these experiments leads to several conclusions, which subsequently prompt a number of intuitive questions:

1. The extracted directions are sufficient to control the concept-ness of model outputs. This raises the following question: Are the directions necessary for the model's understanding of the concept?

2. We know the extracted directions have high cosine similarity with the model's actual concept direction (if one exists) as they are effective at steering the concept-ness of the model outputs. How much information about the concept is left after removing information along the extracted directions?

Evaluation 3 - Removal

We believe testing removal can answer the two questions raised in the previous section.

To remove a concept we project the hidden representations of a specific layer onto the hyperplane that is perpendicular to the concept vector v of that layer using

$$P_\perp(x_i) = x_i - \frac{\langle v, x_i \rangle}{\lVert v \rVert^2}\, v.$$
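A minimal sketch of this projection, applied to a whole batch of representations at once, is given below. The helper name `remove_direction` and the toy arrays are assumptions for illustration; note that $v$ does not need to be normalized because the formula divides by its squared norm.

```python
import numpy as np

def remove_direction(x, v):
    # x: (n_samples, d_model); v: (d_model,), not necessarily unit norm.
    # Subtracts from each row its component along v.
    return x - np.outer(x @ v, v) / (v @ v)

x = np.array([[1.0, 2.0, 3.0], [0.0, -1.0, 4.0]])
v = np.array([0.0, 0.0, 2.0])

x_removed = remove_direction(x, v)
print(x_removed)        # the third coordinate is zeroed out
print(x_removed @ v)    # every row is now perpendicular to v
```

Applying the projection a second time changes nothing, which is a quick sanity check that the operation is a proper projection.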

To check if the removal was successful (i.e. if no more information about utility can be extracted from the projected data) we train a classifier on a projected training set and test it on the projected training and test data respectively. Note that we use a disjoint training set from the one used to find the concept vector in order to learn the classifier. This ensures the removal doesn't just obfuscate information making it hard for a classifier to learn, but actually scrubs it.

The training set accuracy of the linear classifier gives us an upper bound on how much information can be retrieved post projection, whereas the test set accuracy gives a lower bound. Low accuracies imply successful removal.
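The train/test check can be sketched on synthetic data. Several things here are assumptions and differ from our actual setup: the probe is a least-squares linear classifier used as a simple stand-in, the data is Gaussian noise with a planted concept direction, and that direction is known by construction rather than estimated from a separate split. All function names are hypothetical.

```python
import numpy as np

def remove_direction(x, v):
    return x - np.outer(x @ v, v) / (v @ v)

def fit_probe(x, y):
    # Least-squares linear probe with a bias term; y takes values in {-1, +1}.
    xb = np.hstack([x, np.ones((len(x), 1))])
    w, *_ = np.linalg.lstsq(xb, y, rcond=None)
    return w

def probe_accuracy(w, x, y):
    xb = np.hstack([x, np.ones((len(x), 1))])
    return float(np.mean(np.sign(xb @ w) == y))

rng = np.random.default_rng(0)
d_model = 8
v = np.zeros(d_model)
v[0] = 1.0  # planted concept direction (known here by construction)

def sample(n):
    y = rng.choice([-1.0, 1.0], size=n)
    x = rng.normal(size=(n, d_model)) + 2.0 * y[:, None] * v
    return x, y

x_train, y_train = sample(1000)
x_test, y_test = sample(1000)

# Probe on the original data: the concept is easy to read off.
acc_before = probe_accuracy(fit_probe(x_train, y_train), x_test, y_test)

# Probe trained and evaluated on the projected data.
x_train_removed = remove_direction(x_train, v)
x_test_removed = remove_direction(x_test, v)
w_after = fit_probe(x_train_removed, y_train)
acc_train_after = probe_accuracy(w_after, x_train_removed, y_train)
acc_test_after = probe_accuracy(w_after, x_test_removed, y_test)

print(acc_before, acc_train_after, acc_test_after)
```

In this toy setting all concept information lives along `v`, so the post-removal test accuracy falls back to roughly chance; on real hidden states the interesting question is how far it stays above chance.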

We compare our methods to the recently proposed LEACE method, a covariance-estimation based method for concept erasure. Most methods perform poorly on the removal task. The exceptions are the ClassMeans method (the LEACE paper shows that the removal based on the class means difference that we present has the theoretical guarantee of linear guardedness, i.e. no linear classifier can separate the classes better than a constant estimator) and PCA_diffs.

We see a >50% accuracy for LEACE and ClassMeans despite the property of linear guardedness because the training set we use for the linear classifier is different from the one we use to extract the ClassMeans direction or estimate the LEACE covariance matrix. When we use the same training set for the classifier, we indeed get random-chance (~50%) accuracy.
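One way to see why the guarantee only holds on the data used for estimation is to look at the class means after removal. The sketch below uses synthetic Gaussian data with a planted signal, not our hidden representations, and takes ClassMeans to be the difference between the two class means; all names are hypothetical. After projecting out the direction estimated on split A, the class means of A coincide exactly, while a small gap remains on a disjoint split B.

```python
import numpy as np

def class_means_direction(x, y):
    # Difference between the mean of the positive and the negative class.
    return x[y == 1].mean(axis=0) - x[y == -1].mean(axis=0)

def remove_direction(x, v):
    return x - np.outer(x @ v, v) / (v @ v)

rng = np.random.default_rng(0)
d_model = 64
signal = rng.normal(size=d_model)

def sample(n):
    y = rng.choice([-1, 1], size=n)
    x = rng.normal(size=(n, d_model)) + 0.5 * y[:, None] * signal
    return x, y

x_a, y_a = sample(200)  # split used to estimate the direction
x_b, y_b = sample(200)  # disjoint split from the same distribution

v = class_means_direction(x_a, y_a)

# Remaining gap between class means after removing v from each split.
gap_a = np.linalg.norm(class_means_direction(remove_direction(x_a, v), y_a))
gap_b = np.linalg.norm(class_means_direction(remove_direction(x_b, v), y_b))
print(gap_a, gap_b)  # gap_a is zero up to floating point error, gap_b is not
```

The residual gap on split B is what a classifier trained on disjoint data can still exploit, which is consistent with the accuracies slightly above 50% reported above.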

For K-Means and PCA we can see the effect only on the test data, while OnePrompt and LogReg are indistinguishable from NoErasure. This gives us the following surprising conclusions:

Conclusion

We hope to have established some baselines for a systematic and quantitative approach to evaluate directions in hidden layer representations.

Our results can be summarized briefly as:

For subsequent studies, it is crucial to examine whether our discoveries are consistent across diverse datasets and models. Additionally, assessing whether the extracted concept directions are resilient to shifts in distribution is also vital, especially for the removal task.