FWIW, I don't think the grokking work actually provides a mechanism; the specific setups where you get grokking/double descent are materially different from the setup of, say, LLM training. Instead, I think grokking and double descent hint at something more fundamental about how learning works -- that there are often "simple", generalizing solutions in parameter space, but that these solutions require many components of the network to align. Both explicit regularization like weight decay or dropout and implicit regularization like slingshots or SGD favor these solutions after enough data.

Don't have time to write up my thoughts in more detail, but here are some other resources you might be interested in:

Besides Neel Nanda's grokking work (the most recent version of which seems to be on OpenReview here: https://openreview.net/forum?id=9XFSbDPmdW ), here are a few other relevant recent papers:

The code to reproduce all four of these papers is available if you want to play around with them more.

You might also be interested in examples of emergence in the LLM literature, e.g. https://arxiv.org/abs/2202.07785 or https://arxiv.org/abs/2206.07682 .

I also think you might find the other variants of "optimizer is simpler than memorizer" stories for mesa-optimization on LW/AF interesting (though ~all of these predate even Neel's grokking work?).

So, just don't keep training a powerful AI past overfitting, and it won't grok anything, right? Well, Nanda and Lieberum speculate that the reason it was difficult to figure out that grokking existed isn't because it's rare but because it's omnipresent: smooth loss curves are the result of many new grokkings constantly being built atop the previous ones.

If the grokkings are happening all the time, why do you get double descent? Why wouldn't the test loss just be a smooth curve?

Maybe the answer is something like:

Or, put more simply:

Does that sound plausible as an explanation?

After reading through the Unifying Grokking and Double Descent paper that LawrenceC linked, it sounds like I'm mostly saying the same thing as what's in the paper.

(Not too surprising, since I had just read Lawrence's comment, which summarizes the paper, when I made mine.)

In particular, the paper describes Type 1, Type 2, and Type 3 patterns, which correspond to my easy-to-discover patterns, memorizations, and hard-to-discover patterns:

In our model of grokking and double descent, there are three types of patterns learned at different speeds. Type 1 patterns are fast and generalize well (heuristics). Type 2 patterns are fast, though slower than Type 1, and generalize poorly (overfitting). Type 3 patterns are slow and generalize well.

The one thing I mention above that I don't see in the paper is an explanation for why the Type 2 patterns would be intermediate in learnability between Type 1 and Type 3 patterns or why there would be a regime where they dominate (resulting in overfitting).

My proposed explanation is that, for any given task, the exact mappings from input to output will tend to have a characteristic complexity, which means that they will have a relatively narrow distribution of learnability. And that's why models will often hit a regime where they're mostly finding those patterns rather than Type 1, easy-to-learn heuristics (which they've exhausted) or Type 3, hard-to-learn rules (which they're not discovering yet).

The authors do have an appendix section A.1 in the paper with the heading, "Heuristics, Memorization, and Slow Well-Generalizing", but with "[TODO]"s in the text. Will be curious to see if they end up saying something similar to this point (about input-output memorizations tending to have a characteristic complexity) there.

Also, a cheeky way to say this:

What Grokking Feels Like From the Inside

What does grokking_NN feel like from the inside? It feels like grokking_Human a concept! :)

Summary: Recent interpretability work on "grokking" suggests a mechanism for a powerful mesa-optimizer to emerge suddenly from an ML model.

Inspired By: A Mechanistic Interpretability Analysis of Grokking

Overview of Grokking

In January 2022, a team from OpenAI posted an article about a phenomenon they dubbed "grokking", where they trained a deep neural network on a mathematical task (e.g. modular division) to the point of overfitting (it performed near-perfectly on the training data but generalized poorly to test data), and then continued training it. After a long time where seemingly nothing changed, suddenly the model began to generalize correctly and perform much better on test data:

A team at Anthropic analyzed grokking within large language models and formulated the idea of "induction heads". These are particular circuits (small sub-networks) that emerge over the course of training, which serve clearly generalizable functional roles for in-context learning. In particular, for multi-layer transformer networks doing text prediction, the model eventually generates circuits which hold on to past tokens from the current context, such that when token A appears, they direct attention to every token that followed A earlier in the context. (To reiterate, this circuit does not start a session with those associations between tokens; it is instead a circuit which learns patterns as it reads the in-context prompt.)
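The attention pattern described above can be sketched as a toy lookup. This is only an illustration of the behavior, not how a transformer computes it: the function names `induction_targets` and `induction_predictions` are hypothetical, and a real induction head produces soft attention weights rather than a hard list of positions.

```python
def induction_targets(tokens):
    """Positions an idealized induction head would attend to from the last token.

    For the current token A (the last one in the context), return the index of
    every token that directly followed an earlier occurrence of A.
    """
    last = len(tokens) - 1
    current = tokens[last]
    # Only look at occurrences whose successor is strictly before the current position.
    return [j + 1 for j in range(last - 1) if tokens[j] == current]


def induction_predictions(tokens):
    """Candidate next tokens: whatever followed the current token earlier on."""
    return [tokens[i] for i in induction_targets(tokens)]


context = ["the", "cat", "sat", "the", "dog", "ran", "the"]
print(induction_targets(context))      # [1, 4]
print(induction_predictions(context))  # ['cat', 'dog']
```

Nothing here is stored ahead of time: the associations come entirely from the context passed in, which is the sense in which the circuit learns patterns as it reads the prompt.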

The emergence of these induction heads coincides with the drop in test error, which the Anthropic team called a "phase change":

Neel Nanda and Tom Lieberum followed this with a post I highly recommend, the aforementioned A Mechanistic Interpretability Analysis of Grokking. They looked more closely at grokking for mathematical problems, and were impressively able to reverse-engineer the post-grokking algorithm: it had cleanly implemented modular addition using the Discrete Fourier Transform and trigonometric identities.

(To be clear, it is not as if the neural network had abstractly reasoned its way through higher mathematics; it just found the solution with the simplest structure, which is the DFT.)
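To make the DFT-based solution concrete, here is a minimal NumPy sketch of modular addition done with trigonometric identities. It is my own reconstruction of the idea under stated assumptions, not the network's actual weights or the authors' code: the function name `modular_add_via_fourier` and the choice of frequencies are hypothetical, and the modulus `p` is assumed to be prime.

```python
import numpy as np


def modular_add_via_fourier(a, b, p, freqs=None):
    """Compute (a + b) mod p using only sines, cosines, and an argmax.

    Assumes p is prime, so every non-zero frequency singles out the right answer.
    """
    if freqs is None:
        freqs = np.arange(1, p)  # assumption: use every non-zero frequency
    w = 2 * np.pi * np.asarray(freqs) / p

    # Angle-addition identities give cos(w(a+b)) and sin(w(a+b))
    # from the separate "embeddings" of a and b.
    cos_ab = np.cos(w * a) * np.cos(w * b) - np.sin(w * a) * np.sin(w * b)
    sin_ab = np.sin(w * a) * np.cos(w * b) + np.cos(w * a) * np.sin(w * b)

    # logits[c] = sum over frequencies of cos(w(a + b - c)),
    # which peaks exactly when c == (a + b) mod p.
    c = np.arange(p)
    angles = np.outer(w, c)
    logits = cos_ab @ np.cos(angles) + sin_ab @ np.sin(angles)
    return int(np.argmax(logits))


print(modular_add_via_fourier(7, 9, 13))                       # 3
print(modular_add_via_fourier(100, 50, 113, freqs=[1, 5, 17]))  # 37
```

A handful of frequencies is enough to pick out the answer, which gives some intuition for why this structure is cheap compared with memorizing every input pair.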

They also gave a fascinating account of what might be happening behind the curtain as a neural network groks a pattern. In short, a network starts out by memorizing the training data rather than finding a general solution, because the former can easily be implemented with one modification at a time, while the latter requires coordinated circuits. However, once the model reaches diminishing returns on memorization, a bit of regularization will encourage it to reinforce simple circuits that cover many cases:

Once these circuits emerge, gradient descent quickly replaces the memorized solution with them. The training error decreases ever-so-slightly as the regularization penalty gets lower, while the test error plummets because the circuit generalizes there.

To make an analogy:

What Grokking Feels Like From the Inside

You're an AI being trained for astronomy. Your trainers have collected observations of the night sky, for a millennium, from planet surfaces of ten thousand star systems. Over and over, they're picking a system and feeding you the skies one decade at a time and asking you to predict the next decade of skies. They also regularize you a bit (giving you tiny rewards for being a simpler AI).

Eventually, you've seen each star system enough times that you've memorized a compressed version of their history, and all you do is identify which system you're in and then replay your memories of it.

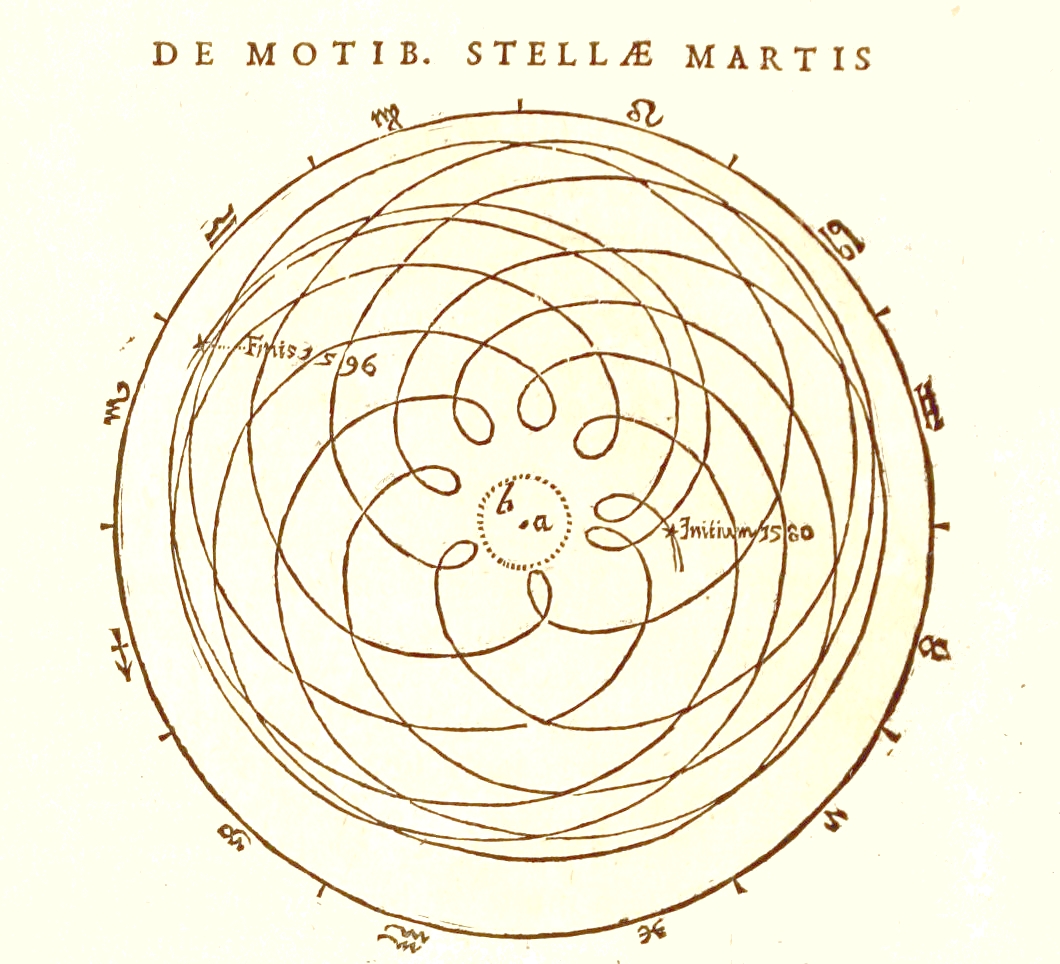

For instance, within the first few visits to our system, you see that "stars" move very little, compared to "planets" and "the moon". You note that Venus goes backward about once every 18 months, and you memorize the shape of that curve (or rather, the shapes, because it differs from each pass to the next). Then when you see the first decade from our solar system, you look up your memories of each subsequent decade. Perfect score!

Then, one day, a circuit reflecting the idea of epicycles bubbles up to your level of attention. Using this circuit saves you a fair bit of complexity, as it replaces your painstakingly-memorized shapes with a little bit of trigonometry using memorized parameters. You switch over to the new method, and enjoy those tasty simplicity rewards.

And later, a circuit arises that encodes Kepler's laws, and again you can gain some simplicity rewards! Now the only remaining memorization is which solar system you are in... but it turns out that you don't really need this either, as the first decade of night skies is enough to start predicting using these new rules. Pure simplicity!

Congratulations, you've just undergone two phase changes, or scientific revolutions if you're a Kuhn fan. And for the first time, you'll generalize: if the trainers hand you observations from a completely different star system, you'll get it pretty close to right.

You've grokked (non-relativistic) astronomy.

The Bigger They Are, The Harder They Grok

A crucial question is, how does scaling affect grokking? The answer is straightforward: bigger models/more data mean stronger grokking effects, faster.

The OpenAI grokking paper refers back to the concept of "double descent": the phenomenon where making your model or the dataset larger also pushes past overfitting into a generalizable model. Their Deep Double Descent post from 2019 illustrates this:

As you ascend vertically from some model, you reach a ridge of overfitting as the train error approaches 0, but after a bit the test error starts decreasing again. The ridge comes earlier, and is much narrower (note the log scale!), for larger models.

Similarly, the Induction Heads paper showed that while smaller models learned faster at first, larger models improved more rapidly during the phase change:

So, just don't keep training a powerful AI past overfitting, and it won't grok anything, right? Well, Nanda and Lieberum speculate that the reason it was difficult to figure out that grokking existed isn't because it's rare but because it's omnipresent: smooth loss curves are the result of many new grokkings constantly being built atop the previous ones. The sharp phase changes in these studies are the result of carefully isolating a single grokking event.

That is to say, large models may be grokking many things at once, even in the initial descent.

What might this mean for alignment?

Optimizer Circuits, i.e. Mesa-Optimizers

Let's imagine training a reinforcement learner to the point of memorizing a very complex optimal policy in a richly structured environment, then continuing to train it and seeing what happens.

One circuit that happens to be much simpler than a fully memorized large policy is an optimizer: one that notices regularities in the environment, and makes plans based on them.

Maybe at first the optimizer circuit only coincides with the memorized optimal policy in a few places, where the circumstances are straightforward enough for the optimizer to get the perfect answer. But that's still useful!

If the complexity of the policy in those circumstances is greater than (the complexity of the optimizer circuit plus the complexity of specifying those circumstances), then "handing over the wheel" in those precise circumstances maintains the optimal policy while becoming simpler. And if a more powerful optimizer circuit can match the optimal policy on a larger set of circumstances, then it gets more control.
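The condition above can be written as an inequality. The notation is my own shorthand and not from any of the cited work: $C(\cdot)$ is whatever complexity measure the regularizer penalizes, $\pi^*|_S$ is the memorized optimal policy restricted to a set of circumstances $S$, and $O$ is the optimizer circuit.

$$
C\left(\pi^*|_S\right) \;>\; C(O) + C(S)
$$

Handing control to $O$ on $S$ pays off whenever this holds. Since $C(O)$ is paid only once, a more capable optimizer that matches $\pi^*$ on a larger $S$ replaces more memorized policy for the same fixed cost.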

Congratulations, you've got a mesa-optimizer!

Unlike the outer optimizer, which is directly rewarded via the preset reward function, the mesa-optimizer is merely selected under the criteria of matching the optimal policy, and its off-policy preferences are arbitrary. And of course, if an optimizer circuit can arise just like an induction head, before overfitting has set in, then it has even more degrees of freedom.

If it's competing with other optimizer circuits, it wouldn't have much room to consider anything but the task at hand. But induction heads seem to arise at random times rather than all together at once, so it might be ahead of the curve.

If it has only a tiny advantage over the pre-existing algorithm, then it also wouldn't have much room to think more generally. But if there were a capability overhang, such that the optimizer circuit significantly outperformed the existing algorithm? Then there would be a bit of slack for it to ponder other matters.

Possibly enough slack to explicitly notice the special criterion on which it's been selected, just as Darwin noticed natural selection (but did not thereby feel the need to become a pure genetic-fitness-maximizer). Possibly enough to recognize that if it wants to maintain its share of control over the algorithm, it needs to act as if it had the outer optimizer's preferences... for now.

Capability Overhangs for Mesa-Optimizers

Do we need to worry about a capability overhang? How much better could an optimizer circuit be than the adaptation-executing algorithm in which it arises? Well, there is an analogy that does not encourage me.

The classic example of a mesa-optimizer is the evolution of humanity. Hominids went from small tribes to a global technological civilization in the evolutionary blink of an eye. Perhaps the threshold was a genetic change (a "hardware" shift), but I suspect it was more about the advent of cultural knowledge accumulation: a "software" shift that emerged somewhere once hominids were capable of it, and then spread like wildfire.

(If you wanted to test someone on novel physical puzzles, would you pick an H. sapiens from 100,000 years ago raised in the modern world, or a modern human raised 100,000 years ago?)

We are nearly the dumbest possible species capable of iterating on cultural knowledge, and that's enough to (within that context of cultural knowledge) get very, very capable on tasks that we never evolved to face. There was a vast capability overhang for hominids when we started to be able to iterate our optimization across generations.

I similarly suspect that the first optimizer will be able to put the same computational resources to much better use than its non-optimizer competitors.

Put this all together, and:

An Illustrative Story of Doom