I don't know whether this is relevant to you, but in "x is aware of y" ("y is in x's awareness"), y is considered an intensional term, while for "x physically contains y", y is considered extensional. (And x is extensional in both cases.)

"Extensional" means that co-referring terms can always be substituted for each other without affecting the truth value of the resulting proposition. For "intensional" terms this is not necessarily the case.

For example, "Steve is aware of Yvain" does not entail "Steve is aware of Scott", even if Scott = Yvain. The entailment fails whenever Steve doesn't know that Scott = Yvain.

However, "The house contains Scott" ("Scott is in the house") implies "The house contains Yvain" because Yvain = Scott.
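A minimal sketch of the substitution test in Python may make the contrast concrete. Everything here is a toy assumption for illustration: the `REFERENT` table, the `contains` and `aware_of` functions, and the idea of modelling Steve's awareness as a set of names are not part of any real theory or library.

```python
# Toy model: two names co-refer to one person in the territory.
REFERENT = {"Scott": "person_1", "Yvain": "person_1"}  # Scott = Yvain

house_contents = {"person_1"}  # what the house physically contains
steve_aware_of = {"Yvain"}     # concepts (here: names) in Steve's awareness


def contains(container, name):
    """Extensional: the result depends only on what the name refers to."""
    return REFERENT[name] in container


def aware_of(concepts, name):
    """Intensional: the result depends on the concept used, not the referent."""
    return name in concepts


# Substituting co-referring names preserves truth for "contains" ...
print(contains(house_contents, "Scott"), contains(house_contents, "Yvain"))
# ... but not for "aware_of".
print(aware_of(steve_aware_of, "Scott"), aware_of(steve_aware_of, "Yvain"))
```

In this toy, `contains` looks the name up in the territory before checking, so swapping "Scott" for "Yvain" cannot change the answer. `aware_of` never consults the territory, so the swap can change it.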

Most relations only involve extensional terms. Some examples of relations which involve intensional terms: is aware of, thinks, believes, wants, intends, loves, means.

Intensional (with an "s") terms are present mainly or only in relations which express "intentionality" (with a "t") in the technical philosophical sense: a mind (or mental state) representing, or being about, something. How this can happen is a central question in philosophy of mind, because ordinary physical objects don't seem to exhibit this property.

Though I'm not completely sure whether your theory has ambitions to solve this problem.

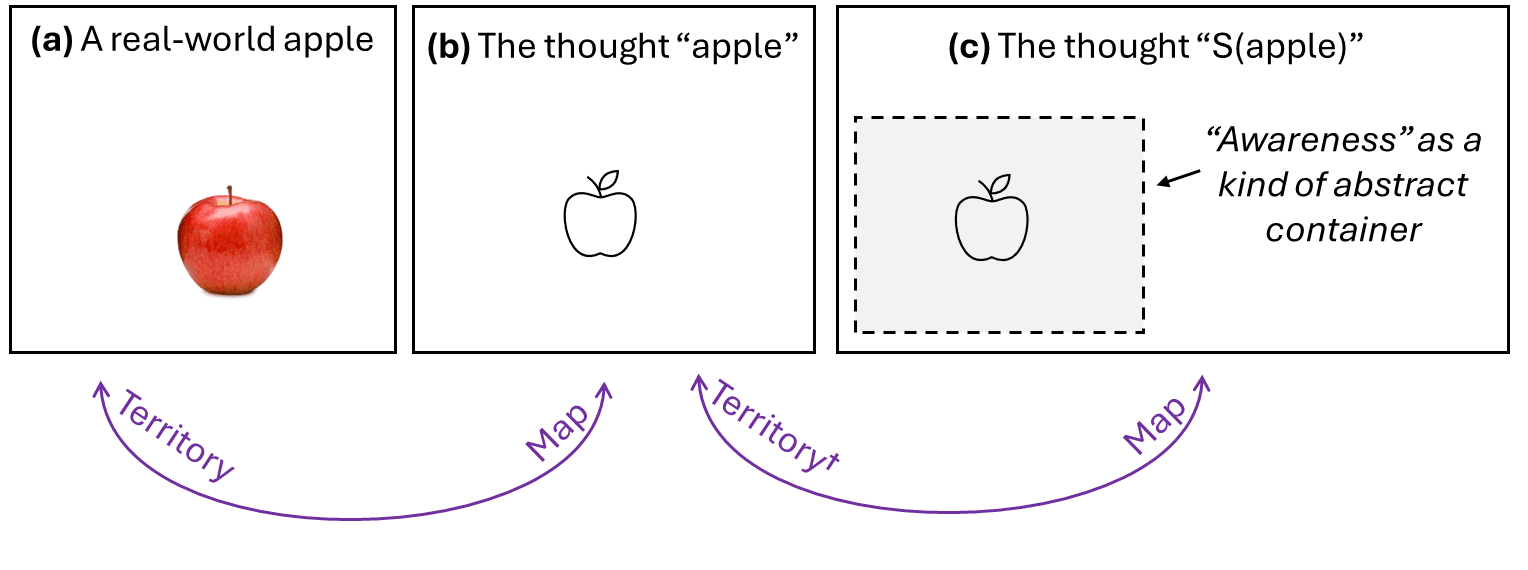

Thanks! I feel like that’s a very straightforward question in my framework. Recall this diagram from above:

[UPDATED TO ALSO COPY THE FOLLOWING SENTENCE FROM OP: †To be clear, the “territory” for (c) is really “(b) being active in the cortex”, not (b) per se.]

Your “truth value” is what I call “what’s happening in the territory”. In the (b)-(a) map-territory correspondence, the “territory” is the real world of atoms, so two different concepts that point to the same possibility in the real world of atoms will have the same “truth value”. In the (c)-(b) map-territory correspondence, the “territory” is the cortex, or more specifically what concepts are active in the cortex, so different concepts are always different things in the territory.

Do you agree that that’s a satisfactory explanation in my framework of why “apple is in awareness” is intensional while “apple is in the cupboard” is extensional? Or am I missing something?

So here (c) is about / represents (b), which itself is about / represents (a). Both (b) and (c) are thoughts (the thought of an apple and the thought of the thought of an apple), so it is expected that they both can represent things. And (a) is a physical object, so it isn't surprising that (a) doesn't represent anything.

However, it is not clear how this difference in capacity for representation arises. More specifically, if we think of (c) not as a thought/concept, but as the cortex, which is a physical object, it is not clear how the cortex could represent / be about something, namely (b).

It is also not clear why thinking about X doesn't imply thinking about Y even in cases where X=Y, while X being on the cupboard implies Y being on the cupboard when X=Y.

Tangential considerations:

I notice that in (b)-(a), (a) is intensional, as expected, while in (c)-(b), (b) does seem to be extensional, which is not expected, since (c) is a thought about (b).

For example, in the case of (b)-(a) we could have a thought about the apple on the cupboard, and a thought about the apple I bought yesterday, which would not be the same thought, even if both apples are the same object, since I may not know that the apple on the cupboard is the same as the apple I bought yesterday.

But when thinking about our own thoughts, no such failure of identification seems possible. We always seem to know whether two thoughts are the same or not, apparently because we have direct "access" to them since they are "internal", while we don't have direct "access" to physical objects, or to other external objects, like the thoughts of other people. So extensionality fails for thoughts about external objects, but holds for thoughts about internal objects, like our own thoughts.

Thanks! The “territory” for (c) is not (b) per se but rather “(b) being active in the cortex”. (That’s the little dagger on the word “territory” below (b), I explained it in the OP but didn’t copy it into the comment above, sorry.)

So “thought of the thought of an apple” is not quite what (c) is. Something like “thought of the apple being on my mind” would be closer.

> More specifically, if we think of (c) not as a thought/concept, but as the cortex, which is a physical object, it is not clear how the cortex could represent / be about something, namely (b).

I sorta feel like you’re making something simple sound complicated, or else I don’t understand your point. “If you think of a map of London as a map of London, then it represents London. If you think of a map of London as a piece of paper with ink on it, then does it still represent London?” Umm, I guess? I don’t know! What’s the point of that question? Isn’t it a silly kind of thing to be talking about? What’s at stake?

> It is also not clear why thinking about A doesn't imply thinking about B even in cases where A=B, while A being on the cupboard implies B being on the cupboard when A=B.

Again, I feel like you’re making common sense sound esoteric (or else I’m missing your point). If I don’t know that Yvain is Scott, and if at time 1 I’m thinking about Yvain, and if at time 2 I’m thinking about Scott, then I’m doing two systematically different things at time 1 versus time 2, right?

> But when thinking about our own thoughts, no such failure of identification seems possible.

In some contexts, two different things in the map wind up pointing to the same thing in the territory. In other cases, that doesn’t happen. For example, in the domain of “members of my family”, I’m confident that the different things on my map are also different in the territory. Whereas in the domain of anatomy, I’m not so confident—maybe I don’t realize that erythrocytes = red blood cells. Anyway, whether this is true or not in any particular domain doesn’t seem like a deep question to me—it just depends on the domain, and more specifically how easy it is for one thing in the territory to “appear” different (from my perspective) at different times, such that when I see it the second time, I draw it as a new dot on the map, instead of invoking the preexisting dot.

> I sorta feel like you’re making something simple sound complicated, or else I don’t understand your point. “If you think of a map of London as a map of London, then it represents London. If you think of a map of London as a piece of paper with ink on it, then does it still represent London?” Umm, I guess? I don’t know! What’s the point of that question? Isn’t it a silly kind of thing to be talking about? What’s at stake?

Well, it seems that purely the map by itself (as a physical object only) doesn't represent London, because the same map-like object could have been created as an (extremely unlikely) accident. Just like a random splash of ink that happens to look like Jesus doesn't represent Jesus, or a random string generator creating the string "Eliezer Yudkowsky" doesn't refer to Eliezer Yudkowsky. What matters seems to be the intention (a mental object) behind the creation of an actual map of London: Someone intended it to represent London.

Or assume a local tries to explain to you where the next gas station is, gesticulates, and uses his right fist to represent the gas station and his left fist to represent the next intersection. The right fist representing the gas station is not a fact about the physical limb alone, but about the local's intention behind using it. (He can represent the gas station even if you misunderstand him, so only his state of mind seems to matter for representation.)

So it isn't clear how a physical object alone (like the cortex) can be about something, because apparently maps or splashes or strings or fists don't represent anything by themselves. That is not to say that the cortex can't represent things, but rather that it isn't clear why it does, if it does.

> Again, I feel like you’re making common sense sound esoteric (or else I’m missing your point). If I don’t know that Yvain is Scott, and if at time 1 I’m thinking about Yvain, and if at time 2 I’m thinking about Scott, then I’m doing two systematically different things at time 1 versus time 2, right?

Exactly. But it isn't clear why these thoughts are different. If your thinking about someone is a relation between yourself and someone else, then it isn't clear why you thinking about one person could ever be two different things.

(A similar problem arises when you think about something that might not exist, like God. Does this thought then express a relation between yourself and nothing? But thinking about nothing is clearly different from thinking about God. Besides, other non-existent objects, like the largest prime number, are clearly different from God.)

Maybe it is instead a relation between yourself and your concept of Yvain, and a relationship between yourself and your concept of Scott, which would be different relations, if the names express different concepts, in case you don't regard them as synonymous. But both concepts happen to refer to the same object. Then "refers to" (or "represents") would be a relation between a concept and an object. Then the question is again how reference/representation/aboutness/intentionality works, since ordinary physical objects don't seem to do it. What makes it the case that concept X represents, or doesn't represent, object Y?

> > But when thinking about our own thoughts, no such failure of identification seems possible.
>
> In some contexts, two different things in the map wind up pointing to the same thing in the territory. In other cases, that doesn’t happen. For example, in the domain of “members of my family”, I’m confident that the different things on my map are also different in the territory.

If you believe x and y are members of your family, that doesn't imply that you have a belief about whether x and y are identical or not. But if x and y are thoughts of yours (or other mental objects), you know whether they are the same or not. Example: you are confident that your brother is a member of your family, and that the person who ate your apple is a member of your family, but you are not confident about whether your brother is identical to the person who ate your apple.

It seems such examples can be constructed for any external objects, but not for internal ones, so the only "domain" where extensionality holds for intentionality/representation relations is arguably internal objects (our own mental states).

I feel like the difference here is that I’m trying to talk about algorithms (self-supervised learning, generative models, probabilistic inference), and you’re trying to talk about philosophy? (See §1.6.2). I think there are questions that seem important and tricky in your philosophy-speak, but seem weird or obvious or pointless in my algorithm-speak … Well anyway, here’s my perspective:

Let’s say:

- There’s a real-world thing T (some machine made of atoms),

- T is upstream of some set of sensory inputs S (light reflecting off the machine and hitting photoreceptors etc.)

- There’s a predictive learning algorithm L tasked with predicting S,

- This learning algorithm gradually builds a trained model (a.k.a. generative model space, a.k.a. intuitive model space) M.

In this case, it is often (though not always) the case that some part of M will have a straightforward structural resemblance to T. In §1.3.2, I called that a “veridical correspondence”.

If that happens, then we know why it happened; it happened because of the learning algorithm L! Obviously, right? Veridical map-territory correspondence is generally a very effective way to predict what’s going to happen, and thus predictive learning algorithms very often build trained models with veridical aspects. (I think the term “teleosemantics” is relevant here? Not sure.)

By contrast, if some part of M has a straightforward structural resemblance to T, then the hypothesis that this happened by coincidence is astronomically unlikely, compared to the hypothesis that this happened because it’s a good way for L to reduce its loss function.

(Then you say: “Ah, but what if that astronomical coincidence comes to pass?” Well then I would say “Huh. Funny that”, and I would shrug and go on with my day. I never claimed to have an airtight philosophical theory of about-ness or representing-ness or whatever! It was you who brought it up!)
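As a toy illustration of the T → S → L → M setup above, here is a minimal sketch in Python. All specifics are assumptions made up for the example: T is a "machine" with two hidden parameters, S is a noisy reading caused by T, L is stochastic gradient descent on squared prediction error, and M is a two-entry dictionary. Real predictive learning in a cortex or a large model is of course far richer than this.

```python
import random

random.seed(0)

# T: a hidden "machine"; the learner never sees these parameters directly.
T_GAIN, T_OFFSET = 2.0, -1.0


def sense(x):
    """S: a noisy sensory input that T is upstream of."""
    return T_GAIN * x + T_OFFSET + random.gauss(0.0, 0.05)


# M: the trained model, which initially bears no resemblance to T.
M = {"gain": 0.0, "offset": 0.0}

# L: predictive learning via stochastic gradient descent on squared error.
LEARNING_RATE = 0.05
for _ in range(5000):
    x = random.uniform(-1.0, 1.0)
    error = (M["gain"] * x + M["offset"]) - sense(x)
    M["gain"] -= LEARNING_RATE * error * x
    M["offset"] -= LEARNING_RATE * error

# After training, M's entries should sit close to T's hidden parameters.
print(M)
```

Nothing in the loop tells M what T's parameters are; L only ever penalizes bad predictions of S. In this toy case, the resemblance between M and T falls out of that pressure rather than out of coincidence.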

Other times, there isn’t a veridical correspondence! Instead, the predictive learning algorithm builds an M, no part of which has any straightforward structural resemblance to T. There are lots of reasons that could happen. I gave one or two examples of non-veridical things in this post, and much more coming up in Post 3.

> But it isn't clear why these thoughts are different. If your thinking about someone is a relation between yourself and someone else, then it isn't clear why you thinking about one person could ever be two different things.

M is some data structure stored in the cortex. If I don’t know that Scott is Yvain, then Scott is one part of M, and Yvain is a different part of M. Two different sets of neurons in the cortex, or whatever. Right? I don’t think I’m saying anything deep here. :)

I'm not sure how much "structural resemblance" or "veridical correspondence" can account for representation/reference. Maybe our concept of a sock or an apple somehow (structurally) resembles a sock or an apple. But what if I'm thinking of the content of your suitcase, and I don't know whether it is a sock or an apple or something else? Surely the part of the model (my brain) which represents/refers to the content of your suitcase does not in any way (structurally or otherwise) resemble a sock, even if the content of your suitcase is indeed identical to a sock.

> M is some data structure stored in the cortex. If I don’t know that Scott is Yvain, then Scott is one part of M, and Yvain is a different part of M. Two different sets of neurons in the cortex, or whatever. Right? I don’t think I’m saying anything deep here. :)

But Scott and Yvain are an object in the territory, not parts of a model, so the parts of the model which do represent Scott and Yvain require the existence of some sort of representation relation.

> Maybe our concept of a sock or an apple somehow (structurally) resembles a sock or an apple.

I could start writing pairs of sentences like:

REAL WORLD: feet often have socks on them

MY INTUITIVE MODELS: “feet” “often” “have” “socks” “on” “them”

REAL WORLD: socks are usually stretchy

MY INTUITIVE MODELS: “socks” “are” “usually” “stretchy”

(… 7000 more things like that …)

If you take all those things, AND the information that all these things wound up in my intuitive models via the process of my brain doing predictive learning from observations of real-world socks over the course of my life, AND the information that my intuitive models of socks tend to activate when I’m looking at actual real-world socks, and to contribute to me successfully predicting what I see … and you mix all that together … then I think we wind up in a place where saying “my intuitive model of socks has by-and-large pretty good veridical correspondence to actual socks” is perfectly obvious common sense. :)

(This is all the same kinds of things I would say if you ask me what makes something a map of London. If it has features that straightforwardly correspond to features of London, and if it was made by someone trying to map London, and if it is actually useful for navigating London in the same kind of way that maps are normally useful, then yeah, that's definitely a map of London. If there's a weird edge case where some of those apply but not others, then OK, it's a weird edge case, and I don't see any point in drawing a sharp line through the thicket of weird edge cases. Just call them edge cases!)

> But what if I'm thinking of the content of your suitcase, and I don't know whether it is a sock or an apple or something else? Surely the part of the model (my brain) which represents/refers to the content of your suitcase does not in any way (structurally or otherwise) resemble a sock, even if the content of your suitcase is indeed identical to a sock.

Right, if I don’t know what’s in your suitcase, then there will be rather little veridical correspondence between my intuitive model of the inside of your suitcase, and the actual inside of your suitcase! :)

(The statement “my intuitive model of socks has by-and-large pretty good veridical correspondence to actual socks” does not mean I have omniscient knowledge of every sock on Earth, or that nothing about socks will ever surprise me, etc.!)

> But Scott and [Yvain] are an object in the territory, not parts of a model, so the parts of the model which do represent Scott and Yvain require the existence of some sort of representation relation.

Oh sorry, I thought that was clear from context … when I say “Scott is one part of M”, obviously I mean something more like “[the part of my intuitive world-model that I would describe as Scott] is one part of M”. M is a model, i.e. data structure, stored in the cortex. So everything in M is a part of a model by definition.

> > But what if I'm thinking of the content of your suitcase, and I don't know whether it is a sock or an apple or something else? Surely the part of the model (my brain) which represents/refers to the content of your suitcase does not in any way (structurally or otherwise) resemble a sock, even if the content of your suitcase is indeed identical to a sock.
>
> Right, if I don’t know what’s in your suitcase, then there will be rather little veridical correspondence between my intuitive model of the inside of your suitcase, and the actual inside of your suitcase! :)
>
> (The statement “my intuitive model of socks has by-and-large pretty good veridical correspondence to actual socks” does not mean I have omniscient knowledge of every sock on Earth, or that nothing about socks will ever surprise me, etc.!)

Okay, but then this theory doesn't explain how we (or a hypothetical ML model) can in fact successfully refer to / think about things which aren't known more or less directly. Like the contents of the suitcase, the person ringing at the door, the cause of the car failing to start, the reason for birth rate decline, the birthday present, what I had for dinner a week ago, what I will have for dinner tomorrow, the surprise guest, the solution to some equation, the unknown proof of some conjecture, the things I forgot about etc.

What you’re saying is basically: sometimes we know some aspects of a thing, but don’t know other aspects of it. There’s a thing in a suitcase. Well, I know where it is (in the suitcase), and a bit about how big it is (smaller than a bulldozer), and whether it’s tangible versus abstract (tangible). Then there are other things about it that I don’t know, like its color and shape. OK, cool. That’s not unusual—absolutely everything is like that. Even things I’m looking straight at are like that. I don’t know their weight, internal composition, etc.

I don’t need a “theory” to explain how a “hypothetical” learning algorithm can build a generative model that can represent this kind of information in its latent variables, and draw appropriate inferences. It’s not a hypothetical! Any generative model built by a predictive learning algorithm will actually do this—it will pick up on local patterns and extrapolate them, even in the absence of omniscient knowledge of every aspect of the thing / situation. It will draw inferences from the limited information it does have. Trained LLMs do this, and an adult cortex does it too.

I think you’re going wrong by taking “aboutness” to be a bedrock principle of how you’re thinking about things. These predictive learning algorithms and trained models actually exist. If, when you run these algorithms, you wind up with all kinds of edge cases where it’s unclear what is “about” what, (and you do), then that’s a sign that you should not be treating “aboutness” as a bedrock principle in the first place. “Aboutness” is like any other word / category—there are cases where it’s clearly a useful notion, and cases where it’s clearly not, and lots of edge cases in between. The sensible way to deal with edge cases is to use more words to elaborate what’s going on. (“Is chess a sport?” “Well, it’s like a sport in such-and-such respects but it also has so-and-so properties which are not very sport-like.” That’s a good response! No need for philosophizing.)

That’s how I’m using “veridicality” (≈ aboutness) in this series. I defined the term in Post 1 and am using it regularly, because I think there are lots of central cases where it’s clearly useful. There are also plenty of edge cases, and when I hit an edge case, I just use more words to elaborate exactly what’s going on. [Copying from Post 1:] For example, suppose intuitive concept X faithfully captures the behavior of algorithm Y, but X is intuitively conceptualized as a spirit floating in the room, rather than as an algorithm within the Platonic, ethereal realm of algorithms. Well then, I would just say something like: “X has good veridical correspondence to the behavior of algorithm Y, but the spirit- and location-related aspects of X do not veridically correspond to anything at all.” (This is basically a real example—it’s how some “awakened” (Post 6) people talk about what I call conscious awareness in this post.) I think you want “aboutness” to be something more fundamental than that, and I think that you’re wrong to want that.

> I don’t need a “theory” to explain how a “hypothetical” learning algorithm can build a generative model that can represent this kind of information in its latent variables, and draw appropriate inferences.

Sure, but we would still need a separate explanation if we want to understand how representation/reference works in a model (or in the brain) itself. If we are interested in that, of course. It could be interesting from the standpoint of philosophy of mind, philosophy of language, linguistics, cognitive psychology, and of course machine learning interpretability.

> If, when you run these algorithms, you wind up with all kinds of edge cases where it’s unclear what is “about” what, (and you do), then that’s a sign that you should not be treating “aboutness” as a bedrock principle in the first place.

I don't think we did run into any edge cases of representation so far where something partially represents or is partially represented, like chess is partially sport-like. Representation/reference/aboutness doesn't seem a very vague concept. Apparently the difficulty of finding an adequate definition isn't due to vagueness.

That being said, it's clearly not necessary for your theory to cover this topic if you don't find it very interesting and/or you have other objectives.

> it's clearly not necessary for your theory to cover this topic if you don't find it very interesting and/or you have other objectives

I’m trying to make a stronger statement than that. I think the kind of theory you’re looking for doesn’t exist. :)

> I don't think we did run into any edge cases of representation so far where something partially represents or is partially represented

In this comment you talked about a scenario where a perfect map of London was created by “an (extremely unlikely) accident”. I think that’s an “edge case of representation”, right? Just as a virus is an edge case of alive-ness. And (I claim) that arguing about whether viruses are “really” alive or not is a waste of time, and likewise arguing whether accidental maps “really” represent London or not is a waste of time too.

Again, a central case of representation would be:

- (A) The map has lots and lots of features that straightforwardly correspond to features of London

- (B) The reason for (A) is that the map was made by an optimization process that systematically (though imperfectly) tends to lead to (A), e.g. the map was created by a human map-maker who was trying to accurately map London

- (C) The map is in fact useful for navigating London

I claim that if you run a predictive learning algorithm, that’s a valid (B), and we can call the trained generative model a “map”. If we do that…

- You can pick out places where all three of (A-C) are very clearly applicable, and when you find one of those places, you can say that there’s some map-territory correspondence / representation there. Those places are clear-cut central examples, just as a cat is a clear-cut central example of alive-ness.

- There are other bits of the map that clearly don’t correspond to anything in the territory, like a drug-induced hallucinated ghost that the person believes to be really standing in front of them. That’s a clear-cut non-example of map-territory correspondence, just as a rock is a clear-cut non-example of alive-ness.

- And then there are edge cases, like the Gettier problem, or vague impressions, or partially-correct ideas, or hearsay, or vibes, or Scott/Yvain, or latent variables that are somehow helpful for prediction but in a very hard-to-interpret and indirect way, or habits, etc. Those are edge-cases of map-territory correspondence. And arguing about what if anything they’re “really” representing is a waste of time, just as arguing about whether a virus is “really” alive is a waste of time, right? In those cases, I claim that it’s useful to invoke the term “map-territory correspondence” or “representation” as part of a longer description of what’s happening here, but not as a bedrock ground truth that we’re trying to suss out.
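The three-way sorting described above (central examples, clear non-examples, edge cases) can be sketched as a small Python function. This is only an illustrative assumption about how one might operationalize it: the `Candidate` class, the `describe` function, and the true/false values assigned to each example are all made up here, and treating (A)-(C) as booleans is itself a simplification.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    features_correspond: bool   # (A) features line up with the territory
    made_by_optimization: bool  # (B) produced by a process that tends toward (A)
    useful_in_practice: bool    # (C) actually helps with navigating/predicting


def describe(candidate):
    """Sort a candidate into central example, non-example, or edge case."""
    criteria = {
        "(A)": candidate.features_correspond,
        "(B)": candidate.made_by_optimization,
        "(C)": candidate.useful_in_practice,
    }
    held = [label for label, ok in criteria.items() if ok]
    if len(held) == len(criteria):
        return "central example"
    if not held:
        return "clear non-example"
    # For edge cases, say which criteria hold instead of forcing a verdict.
    return "edge case: only " + ", ".join(held) + " apply"


cases = [
    Candidate("surveyed map of London", True, True, True),
    Candidate("hallucinated ghost", False, False, False),
    Candidate("accidental map of London", True, False, True),
]
for case in cases:
    print(case.name, "->", describe(case))
```

The point of the third branch is that it returns a longer description rather than a yes/no answer, which matches the "use more words" approach to edge cases.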

> In this comment you talked about a scenario where a perfect map of London was created by “an (extremely unlikely) accident”. I think that’s an “edge case of representation”, right?

I think it clearly wasn't a case of representation, in the same way a random string clearly doesn't represent anything, nor a cloud that happens to look like something. Those are not edge cases; edge cases are arguably examples where something satisfies a vague predicate partially, like chess being "sporty" to some degree. ("Life" is arguably also vague because it isn't clear whether it requires a metabolism, which isn't present in viruses.)

> Again, a central case of representation would be:
>
> (A) The map has lots and lots of features that straightforwardly correspond to features of London
>
> (B) The reason for (A) is that the map was made by an optimization process that systematically (though imperfectly) tends to lead to (A), e.g. the map was created by a human map-maker who was trying to accurately map London
>
> (C) The map is in fact useful for navigating London

I think maps are not overly central cases of representation.

(A) is clearly not at all required for clear cases of representation, e.g. in case of language (abstract symbols) or when a fist is used to represent a gas station, or a stone represents a missing chess piece, etc. That's also again the difference between the sock and the contents of the suitcase. The latter concept doesn't resemble the thing it represents, like a sock, even though it may refer to one.

Regarding (C), representation also doesn't have to be helpful to be representation. A secret message written in code is in fact optimized to be maximally unhelpful to most people, perhaps even to everyone in case of an encrypted diary. And the local explaining the way to the next gas station with the help of his hands clearly means something regardless of whether someone is able to understand what he means. Yes, maps specifically are mostly optimized to be helpful, but that doesn't mean representation has to be helpful. It's similar to portraits: they tend to be optimized for representing someone, resembling someone, and for being aesthetically pleasing. Which doesn't mean representation itself requires resemblance or aesthetics.

I agree that something like (B) might be a defining feature of representation, but not quite. Some form of optimization seems to be necessary (randomly created things don't represent anything), but optimization for (A) (resemblance) is necessary only for things like maps or pictures, but not for other forms of representation, as I argued above.

> And then there are edge cases, like the Gettier problem, or vague impressions, or partially-correct ideas, or hearsay, or vibes, or Scott/Yvain, or latent variables that are somehow helpful for prediction but in a very hard-to-interpret and indirect way, or habits, etc. Those are edge-cases of map-territory correspondence. And arguing about what if anything they’re “really” representing is a waste of time, just as arguing about whether a virus is “really” alive is a waste of time.

The Gettier problem is a good illustration of what I think is the misunderstanding here: Gettier cases are not supposed to be edge cases of knowledge. Just like the randomly created string "Eliezer Yudkowsky" is not an edge case of representation. Edge cases have to do with vagueness, but the problem with defining knowledge is not that the concept may be vague. Gettier cases are examples where justified true belief is intuitively present but knowledge is intuitively absent. Not partially present like in edge cases. That is, we would apply the first terms (justification, truth, belief) but not the latter (knowledge). Vagueness itself is often not a problem for definition. A bachelor is clearly an unmarried man, but what may count as marriage, and what separates men from boys, is a matter of degree, and so too must be bachelor status.

Now you may still think that finding something like necessary and sufficient conditions for representation is still "a waste of time" even if vagueness is not the issue. But wouldn't that, for example, also apply to your attempted explication of "intention"? Or "awareness"? In my opinion, all those concepts are interesting and benefit from analysis.

Back to the Scott / Yvain thing, suppose that I open Google Maps and find that there are two listings for the same restaurant location, one labeled KFC, the other labeled Kentucky Fried Chicken. What do we learn from that?

- (X) The common-sense answer is: there’s some function in the Google Maps codebase such that, when someone submits a restaurant entry, it checks if it’s a duplicate before officially adding it. Let’s say the function is check_if_dupe(). And this code evidently had a false negative when someone submitted KFC. Hey, let’s dive into the implementation of check_if_dupe() to understand why there was a false negative! …

- (Y) The philosophizing answer is: This observation implies something deep and profound about the nature of Representation! :)

Maybe I’m being too harsh on the philosophizing answer (here and in earlier comments); I’ll do the best I can to steelman it now.

- (Y’) We almost always use language in a casual way, but sometimes it’s useful to have some term with a precise universal necessary-and-sufficient definition that can be unambiguously applied to any conceivable situation. (Certainly it would be hard to do math without those kinds of definitions!) And if we want to give the word “Representation” this kind of precise universal definition, then that definition had better be able to deal with this KFC situation.

Anyway, (Y’) is fine, but we need to be clear that we’re not learning anything about the world by doing that activity. At best, it’s a means to an end. Answering (Y) or (Y’) will not give any insight into the straightforward (X) question of why check_if_dupe() returned a false negative. (Nor vice-versa.) I think this is how we’re talking past each other. I suspect that you’re too focused on (Y) or (Y’) because you expect them to answer all our questions including (X), when it’s obvious (in this KFC case) that they won’t, right? I’m putting words in your mouth; feel free to disagree.

(…But that was my interpretation of a few comments ago where I was trying to chat about the implementation of check_if_dupe() in the human cortex as a path to answering your “failure of identification” question, and your response seemed to fundamentally reject that whole way of thinking about things.)
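To make the (X) question concrete, here is a toy sketch of what a duplicate check and its false negative might look like. Everything in it is hypothetical: the `Listing` type, the `normalize` helper, the matching rule, and the coordinates are invented for illustration and say nothing about how Google Maps actually works.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Listing:
    name: str
    lat: float
    lon: float


def normalize(name: str) -> str:
    """Lowercase the name and drop everything except letters and digits."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def check_if_dupe(new: Listing, existing: List[Listing]) -> bool:
    """Hypothetical rule: a dupe has the same normalized name at (nearly) the same spot."""
    for old in existing:
        same_place = abs(new.lat - old.lat) < 1e-4 and abs(new.lon - old.lon) < 1e-4
        same_name = normalize(new.name) == normalize(old.name)
        if same_place and same_name:
            return True
    return False


existing = [Listing("Kentucky Fried Chicken", 51.5007, -0.1246)]

# Caught: this submission differs only in case and punctuation.
caught = check_if_dupe(Listing("kentucky fried chicken!", 51.5007, -0.1246), existing)

# Missed: same location, but the abbreviation defeats the name comparison.
missed = check_if_dupe(Listing("KFC", 51.5007, -0.1246), existing)
```

In this sketch the false negative is fully explained by one implementation detail (names are compared as strings, with no table of abbreviations), which is the kind of answer (X) is asking for.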

Nowhere in this series do I purport to offer any precise universal necessary-and-sufficient definition that can be applied to any conceivable situation, for any word at all, not “Awareness”, not “Intention”, not anything. It’s just not an activity I’m generally enthusiastic about. You can be the world-leading expert on socks without having a precise universal necessary-and-sufficient definition of what constitutes a sock. Likewise, if there’s a map of London made by an astronomically-unlikely coincidence, and you say it’s not a Representation of London, and I say yes it is a Representation of London, then we’re not disagreeing about anything substantive. We have the same understanding of the situation but a different definition of the word Representation. Does that matter? Sure, a little bit. Maybe your definition of Representation is better, all things considered—maybe it’s more useful for explaining certain things, maybe it better aligns with preexisting intuitions, whatever. But still, it’s just terminology, not substance. We can always just split the term Representation into “Representation_per_cubefox” and “Representation_per_Steve” and then suddenly we’re not disagreeing about anything at all. Or better yet, we can agree to use the word Representation in the obvious central cases where everybody agrees that this term is applicable, and to use multi-word phrases and sentences and analogies to provide nuance in cases where that’s helpful. In other words: the normal way that people communicate all the time! :)

I don't understand the relevance of the Google Maps example and the emphasis you place on the "check_if_dupe()" function for understanding what "representation" is.

I think my previous explanation already covers this: we can always know the identity of internal objects (to stick with a software analogy: whether two variables refer to the same memory location), but not always the identity of external objects, since there is no full access to those objects. That is why the function can make mistakes. However, this is not an analysis of representation.
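The software analogy can be sketched in a few lines. This is only an illustration under assumptions: the record fields and the `guess_same_person` heuristic are made up, and the names are the ones used as examples earlier in the thread.

```python
# Internal objects: identity is always decidable from inside the program.
a = ["some", "data"]
b = a                    # a second variable bound to the same object
c = ["some", "data"]     # equal contents, but a distinct object
internal_same = a is b
internal_diff = a is c

# External objects: the program only holds partial descriptions of them.
record_1 = {"name": "Scott", "handle": None}   # handle unknown to the program
record_2 = {"name": None, "handle": "Yvain"}   # name unknown to the program


def guess_same_person(r1: dict, r2: dict) -> bool:
    """Hypothetical heuristic: compare only the fields known in both records."""
    shared = [k for k in r1 if r1[k] is not None and r2.get(k) is not None]
    return bool(shared) and all(r1[k] == r2[k] for k in shared)


# The two records have no known field in common, so the heuristic cannot
# identify them, whatever the facts about the external person are.
external_guess = guess_same_person(record_1, record_2)
```

The `is` checks cannot be wrong, because the program has full access to its own objects. The guess about the records can be wrong, because it depends on information the program may not have.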

Nowhere in this series do I purport to offer any precise universal necessary-and-sufficient definition that can be applied to any conceivable situation, for any word at all, not “Awareness”, not “Intention”, not anything.

And you don't even aim for a good definition? For what do you aim then?

You can be the world-leading expert on socks without having a precise universal necessary-and-sufficient definition of what constitutes a sock.

I think if I'm doing a priori armchair reasoning on socks, the way you and I do armchair reasoning here, I'm pretty much constrained to conceptual analysis. Which is the activity of finding necessary and sufficient conditions for a concept.

Likewise, if there’s a map of London made by an astronomically-unlikely coincidence, and you say it’s not a Representation of London, and I say yes it is a Representation of London, then we’re not disagreeing about anything substantive.

Yes. But are you even disagreeing with me on this map example here? If so, do you disagree with the cases of clouds, fists, random strings etc? In philosophy the issue is rarely the disagreement over what a term means (because then we could simply infer that the term is ambiguous, and the case is closed), rather, the problem is finding a definition for a term where everyone already agrees on what it means. Like knowledge, causation, explanation, evidence, probability, truth etc.

And you don't even aim for a good definition? For what do you aim then? … I think if I'm doing a priori armchair reasoning on socks, the way you and I do armchair reasoning here, I'm pretty much constrained to conceptual analysis. Which is activity of finding necessary and sufficient conditions for a concept.

The goal of this series is to explain how certain observable facts about the physical universe arise from more basic principles of physics, neuroscience, algorithms, etc. See §1.6.

I’m not sure what you mean by “armchair reasoning”. When Einstein invented the theory of General Relativity, was he doing “armchair reasoning”? Well, yes in the sense that he was reasoning, and for all I know he was literally sitting in an armchair while doing it. :) But what he was doing was not “constrained to conceptual analysis”, right?

As a more specific example, one thing that happens a lot in this series is: I describe some algorithm, and then I talk about what happens when you run that algorithm. Those things that the algorithm winds up doing are often not immediately obvious just from looking at the algorithm pseudocode by itself. But they make sense once you spend some time thinking it through. This is the kind of activity that people frequently do in algorithms classes, and it overlaps with math, and I don’t think of it as being related to philosophy or “conceptual analysis” or “a priori armchair reasoning”.

In this case, the algorithm in question happens to be implemented by neurons and synapses in the human brain (I claim). And thus by understanding the algorithm and what it does when you run it, we wind up with new insights into human behavior and beliefs.

Does that help?

are you even disagreeing with me on this map example here

Yes I am disagreeing. If there’s a perfect map of London made by an astronomically-unlikely coincidence, and someone asks whether it’s a “representation” of London, then your answer is “definitely no” and my answer is “Maybe? I dunno. I don’t understand what you’re asking. Can you please taboo the word ‘representation’ and ask it again?” :-P

Einstein (scientists in general) tried to explain empirical observations. The point of conceptual analysis, in contrast, is to analyze general concepts, to answer "What is X?" questions, where X is a general term. I thought your post fit more in the conceptual analysis direction rather than in an empirical one, since it seems focused on concepts rather than observations.

One way to distinguish the two is by what they consider counterexamples. In science, a counterexample is an observation which contradicts a prediction of the proposed explanation. In conceptual analysis, a counterexample is a thought experiment (like a Gettier case or the string example above) to which the proposed definition (the definiens) intuitively applies but the defined term (the definiendum) doesn't, or the other way round.

The algorithm analysis method arguably doesn't really fit here, since it requires access to the algorithm, which isn't available in case of the brain. (Unless I misunderstood the method and it treats algorithms actually as black boxes while only looking at input/output examples. But then it wouldn't be so different from conceptual analysis, where a thought experiment is the input, and an intuitive application of a term the output.)

are you even disagreeing with me on this map example here

Yes I am disagreeing. If there’s a perfect map of London made by an astronomically-unlikely coincidence, and someone asks whether it’s a “representation” of London, then your answer is “definitely no” and my answer is “Maybe? I dunno. I don’t understand your question.”

But I assume you do agree that random strings don't refer to anyone, that clouds don't represent anyone they accidentally resemble, that a fist by itself doesn't represent anything etc. An accidentally created map seems to be the same kind of case, just vastly less likely. So treating them differently doesn't seem very coherent.

Can you please taboo the word ‘representation’ and ask it again?” :-P

Well... That's hardly possible when analysing the concept of representation, since this is just the meaning of the word "represents". Of course nobody is forcing you to do it when you find it pointless, which is okay.

Of course nobody is forcing you to do it when you find it pointless, which is okay.

Yup! :) :)

The algorithm analysis method arguably doesn't really fit here, since it requires access to the algorithm, which isn't available in case of the brain.

Oh I have lots and lots of opinions about what algorithms are running in the brain. See my many dozens of blog posts about neuroscience. Post 1 has some of the core pieces: I think there’s a predictive (a.k.a. self-supervised) learning algorithm, that the trained model (a.k.a. generative model space) for that learning algorithm winds up stored in the cortex, and that the generative model space is continually queried in real time by a process that amounts to probabilistic inference. Those are the most basic things, but there’s a ton of other bits and pieces that I introduce throughout the series as needed, things like how “valence” fits into that algorithm, how “valence” is updated by supervised learning and temporal difference learning, how interoception fits into that algorithm, how certain innate brainstem reactions fit into that algorithm, how various types of attention fit into that algorithm … on and on.

Of course, you don’t have to agree! There is never a neuroscience consensus. Some of my opinions about brain algorithms are close to neuroscience consensus, others much less so. But if I make some claim about brain algorithms that seems false, you’re welcome to question it, and I can explain why I believe it. :)

…Or separately, if you’re suggesting that the only way to learn about what an algorithm will do when you run it, is to actually run it on an actual computer, then I strongly disagree. It’s perfectly possible to just write down pseudocode, think for a bit, and conclude non-obvious things about what that pseudocode would do if you were to run it. Smart people can reach consensus on those kinds of questions, without ever running the code. It’s basically math—not so different from the fact that mathematicians are perfectly capable of reaching consensus about math claims without relying on the computer-verified formal proofs as ground truth. Right?

As an example, “the locker problem” is basically describing an algorithm, and asking what happens when you run that algorithm. That question is readily solvable without running any code on a computer, and indeed it would be perfectly reasonable to find that problem on a math test where you don’t even have computer access. Does that help? Or sorry if I’m misunderstanding your point.
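For reference, here is a minimal sketch assuming the classic version of the locker problem: n closed lockers, n students, and student k toggles every k-th locker. The function names are invented for this example. The pencil-and-paper argument is that locker i is toggled once per divisor of i, and only perfect squares have an odd number of divisors, so the simulation is included only to show that running the algorithm agrees with the reasoning.

```python
import math
from typing import List


def run_lockers(n: int) -> List[int]:
    """Simulate the algorithm and return the lockers left open."""
    is_open = [False] * (n + 1)  # index 0 is unused
    for student in range(1, n + 1):
        # Student k toggles lockers k, 2k, 3k, ...
        for locker in range(student, n + 1, student):
            is_open[locker] = not is_open[locker]
    return [i for i in range(1, n + 1) if is_open[i]]


def predicted_by_reasoning(n: int) -> List[int]:
    """The answer reached without running anything: the perfect squares up to n."""
    return [k * k for k in range(1, math.isqrt(n) + 1)]


open_lockers = run_lockers(100)
agrees = open_lockers == predicted_by_reasoning(100)
```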

…But interestingly, if I then immediately ask you what you were experiencing just now, you won’t describe it as above. Instead you’ll say that you were hearing “sm-” at t=0 and “-mi” at t=0.2 and “-ile” at t=0.4. In other words, you’ll recall it in terms of the time-course of the generative model that ultimately turned out to be the best explanation.

In my review of Dennett's book, I argued that this doesn't disprove the "there's a well-defined stream of consciousness" hypothesis since it could be the case that memory is overwritten (i.e., you first hear "sm" not realizing what you're hearing, but then when you hear "smile", your brain deletes that part from memory).

Since then I've gotten more cynical and would now argue that there's nothing to explain because there are no proper examples of revisionist memory.[1] Because here's the thing -- I agree that if you ask someone what they experience, they're probably going to respond as you say in the quote. Because they're not going to think much about it, and this is just the most natural thing to reply. But do you actually remember understanding "sm" at the point when you first heard it? Because I don't. If I think about what happened after the fact, I have a subtle sensation of understanding the word, and I can vaguely recall that I've heard a sound at the beginning of the word, but I don't remember being able to place what it is at the time.

I've just tried to introspect on this listening to an audio conversation, and yeah, I don't have any such memories. I also tried it with slowed audio. I guess reply here if anyone thinks they genuinely misremember this if they pay attention.

The color phi phenomenon doesn't work for me or anyone I've asked, so at this point my assumption is that it's just not a real result (kudos for not relying on it here). I think Dennett's book is full of terrible epistemology so I'm surprised that he's included it anyway. ↩︎

The color phi phenomenon doesn't work for me or anyone I've asked

Were you using this demo? If so, I set the times to 1000,30,60, demagnified as much as possible, and then stood 20 feet away from my computer to “demagnify” even more. I might have also moved the dots a bit closer. I think I got some motion illusion?

I’m skeptical of the hypothesis that the color phi phenomenon is just BS. It doesn’t seem like that kind of psych result. I think it’s more likely that this applet is terribly designed.

Were you using this demo?

I’m skeptical of the hypothesis that the color phi phenomenon is just BS. It doesn’t seem like that kind of psych result. I think it’s more likely that this applet is terribly designed.

Yes -- and yeah, fair enough. Although-

I think I got some motion illusion?

-remember that the question isn't "did I get the vibe that something moves". We already know that a series of frames gives the vibe that something moves. The question is whether you remember having seen the red circle halfway across before seeing the blue circle.

Take any intuitive notion X, where people’s intuitions are generally a bit incoherent or poorly-thought-through—stream of consciousness, free will, divine grace, the voice in my head, etc.

- (A) One thing you can say is: “X, when properly understood, is coherent, and here’s how to properly understand it …”

- (B) Another you can say is: “X, as commonly understood by the average person, is incoherent, but hey let me tell you about these closely related concepts which are coherent and which rescue some or all of those intuitions about X that you find compelling …”

Fundamentally, neither of these strategies is right or wrong. You say tomato, I say to-mah-to. :)

This is one of many causes of those annoying debates that go around in circles, that I’m trying to declare out-of-scope for this series, cf. §1.6.2. :)

For science terminology like “acceleration”, we take approach (A). People often have incoherent intuitions about acceleration, and when they do, we prompt them to discard their “wrong” intuitions, leaving the “real” acceleration concept.

For more everyday terminology, (A) versus (B) is more of a judgment call—for example, some physicalists say “‘God’ doesn’t exist”, others say “‘God’ is just the term for order and beauty in the universe” or whatever. As another example, I read Elbow Room recently, and Dennett’s revised preface says that he’s taken approach (A) to “free will” for his whole career, but now he’s thinking that maybe all along he should have taken the (B) path and said “free will (as commonly understood) doesn’t exist”.

Anyway, I feel like my post section is pointing out that people’s everyday poorly-thought-through intuitions about “stream of consciousness” are a bit incoherent, but I didn’t go further than that by advocating for either (A) or (B). Whereas your comment is advocating for the (A) path, where we prod people to update their intuitions about what happens when they try to remember what happened one second ago.

I don't think I agree with this framing. I wasn't trying to say "people need to rethink their concept of awareness"; I was saying "you haven't actually demonstrated that there is anything wrong with the naive concept of awareness because the counterexample isn't a proper counterexample".

I mean I've conceded that people will give this intuitive answer, but only because they'll respond before they've actually run the experiment you suggest. I'm saying that as soon as you (generic you) actually do the thing the post suggested (i.e., look at what you remember at the point in time where you heard the first syllable of a word that you don't yet recognize), you'll notice that you do not, in fact, remember hearing & understanding the first part of the word. This doesn't entail a shift in the understanding of awareness. People can view awareness exactly like they did before, I just want them to actually run the experiment before answering!

(And also this seems like a pretty conceptually straight-forward case -- the overarching question is basically, "is there a specific data structure in the brain whose state corresponds to people's experience at every point in time" -- which I think captures the naive view of awareness -- and I'm saying "the example doesn't show that the answer is no".)

Like I said in this post, I think the contents of conscious awareness corresponds more-or-less to what’s happening in the cortex. The homolog to the cortex in non-mammal vertebrates is called the “pallium”, and the pallium along with the striatum and a few other odds and ends comprises the “telencephalon”.

I don’t know anything about octopuses, but I would be very surprised if the fish pallium lacked recurrent connections. I don’t think your link says that though. The relevant part seems to be:

While the fish retina projects diffusely to nine nuclei in the diencephalon, its main target is the midbrain optic tectum (Burrill and Easter, 1994). Thus, the fish visual system is highly parcellated, at least, in the sub-telencephalonic regions. Whole brain imaging during visuomotor reflexes reveals widespread neural activity in the diencephalon, midbrain and hindbrain in zebrafish, but these regions appear to act mostly as feedforward pathways (Sarvestani et al., 2013; Kubo et al., 2014; Portugues et al., 2014). When recurrent feedback is present (e.g., in the brainstem circuitry responsible for eye movement), it is weak and usually arises only from the next nucleus within a linear hierarchical circuit (Joshua and Lisberger, 2014). In conclusion, fish lack the strong reciprocal and networked circuitry required for conscious neural processing.

This passage is just about the “sub-telencephalonic regions”, i.e. they’re not talking about the pallium.

To be clear, the stuff happening in sub-telencephalonic regions (e.g. the brainstem) is often relevant to consciousness, of course, even if it’s not itself part of consciousness. One reason is because stuff happening in the brainstem can turn into interoceptive sensory inputs to the pallium / cortex. Another reason is that stuff happening in the brainstem can directly mess with what’s happening in the pallium / cortex in other ways besides serving as sensory inputs. One example is (what I call) the valence signal which can make conscious thoughts either stay or go away. Another is (what I call) “involuntary attention”.

Umm, I would phrase it as: there’s a particular computational task called approximate Bayesian probabilistic inference, and I think the cortex / pallium performs that task (among others) in vertebrates, and I don’t think it’s possible for biological neurons to perform that task without lots of recurrent connections.

And if there’s an organism that doesn’t perform that task at all, then it would have neither an intuitive self-model nor an intuitive model of anything else, at least not in any sense that’s analogous to ours and that I know how to think about.

To be clear: (1) I think you can have some brain region with lots of recurrent connections that has nothing to do with intuitive modeling, (2) it’s possible for a brain region to perform approximate Bayesian probabilistic inference and have recurrent connections, but still not have an intuitive self-model, for example if the hypothesis space is closer to a simple lookup table rather than a complicated hypothesis space involving complex compositional interacting entities etc.

why do you think some invertebrates likely have intuitive self models as well?

I didn’t quite say that. I made a weaker claim that “presumably many invertebrates [are] active agents with predictive learning algorithms in their brain, and hence their predictive learning algorithms are…incentivized to build intuitive self-models”.

It seems reasonable to presume that octopuses have predictive learning algorithms in their nervous systems, because AFAIK that’s the only practical way to wind up with a flexible and forward-looking understanding of the consequences of your actions, and octopuses (at least) are clearly able to plan ahead in a flexible way.

However, “incentivized to build intuitive self-models” does not necessarily imply “does in fact build intuitive self-models”. As I wrote in §1.4.1, just because a learning algorithm is incentivized to capture some pattern in its input data, doesn’t mean it actually will succeed in doing so.

Would you restrict this possibility to basically just cephalopods and the like

No opinion.

fish also lack a laminated and columnar organization of neural regions that are strongly interconnected by reciprocal feedforward and feedback circuitry

Yeah that doesn’t mean much in itself: “Laminated and columnar” is how the neurons are arranged in space, but what matters algorithmically is how they’re connected. The bird pallium is neither laminated nor columnar, but is AFAICT functionally equivalent to a mammal cortex.

Which seems a little silly for me because I'm fairly certain humans without a cortex also show nociceptive behaviours?

My opinion (which is outside the scope of this series) is: (1) mammals without a cortex are not conscious, and (2) mammals without a cortex show nociceptive behaviors, and (3) nociceptive behaviors are not in themselves proof of “feeling pain” in the sense of consciousness. Argument for (3): You can also make a very simple mechanical mechanism (e.g. a bimetallic strip attached to a mousetrap-type mechanism) that quickly “recoils” from touching hot surfaces, but it seems pretty implausible that this mechanical mechanism “feels pain”.

(I think we’re in agreement on this?)

~~

I know nothing about octopus nervous systems and am not currently planning to learn, sorry.

I want to comment on the interpretation of S(A) as an "intention" to do A.

Note that I'm coming back here from section 6. Awakening / Enlightenment / PNSE, so if somebody hasn't read that, this might be unclear.

Using the terminology above, A here is "the patterns of motor control and attention control outputs that would collectively make my muscles actually execute the standing-up action."

And S(A) is "the patterns of motor control and attention control outputs that would collectively make my muscles actually execute the standing-up action are in my awareness." Meaning a representation of "awareness" is active together with the container-relationship and a representation of A. (I am still very unsure about how "awareness" is learned and represented.)[1]

Referring to 2.6.2, I agree with this:

[S(A) and A] are obviously strongly associated with each other. They can activate simultaneously. And even if they don’t, each tends to bring the other to mind, such that the valence of one influences the valence of the other.

and

For any action A where S(A) has positive valence, there’s often a two-step temporal sequence: [S(A) ; A actually happens]

I agree that in this co-occurrence sense "S(X) often summons a follow-on thought of X." But it is not causing it, which is what "summon" might imply. This choice of word is maybe an indication of the uncertainty here.

Clearly, action A can happen without S(A) being present. In fact, actions are often more effectively executed if you don't think too hard about them[citation needed]. An S(A) is not required. Maybe S(A) and A cooccur often, but that doesn't imply causality. But, indeed, it would seem to be causal in the context of a homunculus model of action. Treating it as causal/vitalistic is predictive. The real reason is the co-occurrence of the thoughts, which can have a common cause, such as when the S(A) thought brings up additional associations that lead to higher valence thoughts/actions later (e.g., chains of S(A), A, S(A)->S(B), B).

Thus, S(A) isn't really an "Intention to do A" per se but just as it says on the tin: "awareness of (expecting) A." I would say it is only an "intention to do A" if the thought S(A) also includes the concept of intention - which is a concept tied to the homunculus and an intuitive model of agency.

- ^

I am still very unsure about how "awareness" is learned and represented. Above it says

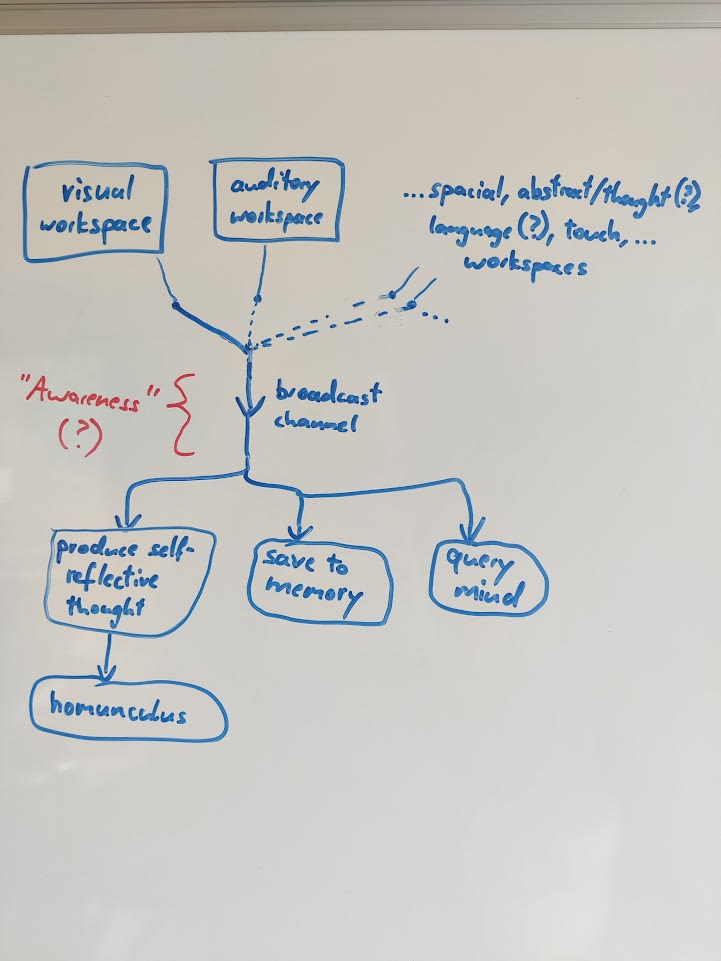

the cortex, which has a limited computational capacity that gets deployed serially [...] When this aspect of the brain algorithm is itself incorporated into a generative model via predictive (a.k.a. self-supervised) learning, it winds up represented as an “awareness” concept,

but this doesn't say how. The brain needs to observe something (sense, interoception) from which it can infer this. The pattern in what observations would that be? The serial processing is a property the brain can't observe unless there is some way to combine/compare past and present "thoughts." That's why I have long thought that there has to be a feedback from the current thought back as input signal (thoughts as observations). Such a connection is not present in the brain-like model, but it might not be the only way. Another way would be via memory. If a thought is remembered, then one way of implementing memory would be to provide a representation of the remembered thought as input. In any case, there must be a relation between successive thoughts, otherwise they couldn't influence each other.

It seems plausible that, in a sequence of events, awareness S(A) is related to a pattern of A having occurred previously in the sequence (or being expected to occur).

The brain needs to observe something (sense, interoception) from which it can infer this. The pattern in what observations would that be?

(partly copying from my other comment) For example, consider the following fact.

FACT: Sometimes, I’m thinking about pencils. Other times, I’m not thinking about pencils.

Now imagine that there’s a predictive (a.k.a. self-supervised) learning algorithm which is tasked with predicting upcoming sensory inputs, by building generative models. The above fact is very important! If the predictive learning algorithm does not somehow incorporate that fact into its generative models, then those generative models will be worse at making predictions. For example, if I’m thinking about pencils, then I’m likelier to talk about pencils, and look at pencils, and grab a pencil, etc., compared to if I’m not thinking about pencils. So the predictive learning algorithm is incentivized (by its predictive loss function) to build a generative model that can represent the fact that any given concept might be active in the cortex at a certain time, or might not be.

See also §1.4.
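The incentive can be illustrated with a toy calculation. The probabilities below are made up purely for illustration, and the sketch assumes the second model tracks perfectly whether the “pencil” concept is active. It compares the expected log loss of a predictor that ignores that state with one that conditions on it.

```python
import math


def binary_entropy(p: float) -> float:
    """Expected log loss (in nats) when a yes/no event is predicted with its true probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -(p * math.log(p) + (1 - p) * math.log(1 - p))


# Made-up numbers, purely for illustration.
P_THINKING = 0.2            # fraction of the time the "pencil" concept is active
P_GRAB_IF_THINKING = 0.6    # chance of grabbing a pencil soon, if it is active
P_GRAB_IF_NOT = 0.05        # chance of grabbing a pencil soon, if it is not

# Model 1 cannot represent the state, so its best prediction is the overall rate.
p_grab_overall = P_THINKING * P_GRAB_IF_THINKING + (1 - P_THINKING) * P_GRAB_IF_NOT
loss_without_state = binary_entropy(p_grab_overall)

# Model 2 represents the state and conditions its prediction on it.
loss_with_state = (
    P_THINKING * binary_entropy(P_GRAB_IF_THINKING)
    + (1 - P_THINKING) * binary_entropy(P_GRAB_IF_NOT)
)

# Positive whenever the state carries any information about the outcome.
improvement = loss_without_state - loss_with_state
```

With these numbers the state-aware model has the lower expected loss, which is the sense in which the loss function pushes toward representing “this concept is active right now”.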

That's why I have long thought that there has to be a feedback from the current thought back as input signal (thoughts as observations). Such a connection is not present in the brain-like model, but it might not be the only way. Another way would be via memory.

I mean yeah obviously the cortex has various types of memory, and this fact is important for all kinds of things. :)

Clearly, action A can happen without S(A) being present. In fact, actions are often more effectively executed if you don't think too hard about them[citation needed]. An S(A) is not required. Maybe S(A) and A cooccur often, but that doesn't imply causality.

These sentences seem to suggest that A’s are either always, or never, caused by a preceding S(A), and out of those two options, “never” is more plausible. But that’s a false dichotomy. I propose that sometimes they are and sometimes they aren’t caused by S(A).

By analogy, sometimes doors open because somebody pushed on them, and sometimes doors open without anyone pushing on them. Also, it’s possible for there to be a very windy day where the door would open with 30% probability in the absence of a person pushing on it, but opens with 85% probability if somebody does push on it. In that case, did the person “cause” the door to open? I would say yeah, they “partially caused it” to open, or “causally contributed to” the door opening, or “often cause the door to open”, or something like that. I stand by my claim that the self-reflective S(standing up), if sufficiently motivating, can “cause” me to then stand up, in that sense.
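The arithmetic behind the windy-day example can be written out as follows. The two probabilities are the ones given above. The “attributable fraction” is one standard way of summarizing partial causation, and it rests on the assumption that pushing never prevents the door from opening.

```python
p_open_without_push = 0.30   # wind alone
p_open_with_push = 0.85      # wind plus a person pushing

# How much pushing raises the chance that the door opens.
added_probability = p_open_with_push - p_open_without_push

# Among openings that follow a push, the share that would not have
# happened from the wind alone (under the assumption stated above).
attributable_fraction = added_probability / p_open_with_push
```

So pushing adds 55 percentage points, and roughly two thirds of the openings that follow a push are down to the push rather than the wind.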

The fact that “actions are often more effectively executed if you don't think too hard about them” is referring to the fact that if you have a learned skill, in the form of some optimized context-dependent temporal sequence of motor-control and attention-control commands, then self-reflective thoughts can interrupt and thus mess up that temporal sequence, just as people shouting random numbers can disrupt someone trying to count, or how you can’t sing two songs in your head simultaneously. A.k.a. the limited capacity of cortex processing. Whereas that section is more about whether some course-of-action (like saying something, wiggling your fingers, standing up, etc.) starts or not.

Flow states (post 4) are a great example of A’s happening without any S(A).

You give the example of the door that is sometimes pushed open, but let me give alternative analogies:

- S(A): Forecaster: "The stock price of XYZ will rise tomorrow." A: XYZ's stock rises the next day.

- S(A): Drill sergeant: "There will be exercises at 14:00 hours." A: Military units start their exercises at the designated time.

- S(A): Live commentator: "The rocket is leaving the launch pad." A: A rocket launches from the ground.

Clearly, there is a reason for the co-occurrence, but it is not one causing the other. And it is useful to have the forecaster because making the prediction salient helps improve predictions. Making the drill time salient improves punctuality or routine or something. Not sure what the benefit of the rocket launch commentary is.

Otherwise I think we agree.

I agree that there is such a thing as two things occurring in sequence where the first doesn’t cause the second. But I don’t think this is one of those cases. Instead, I think there are strong reasons to believe that if S(A) is active and has positive valence, then that causally contributes to A tending to happen afterwards.

For example, if A = stepping into the ice-cold shower, then the object-level idea of A is probably generally negative-valence—it will feel unpleasant. But then S(A) is the self-reflective idea of myself stepping into the shower, and relatedly how stepping into the shower fits into my self-image and the narrative of my life etc., and so S(A) is positive valence.

I won’t necessarily wind up stepping into the shower (maybe I’ll chicken out), but if I do, then the main reason why I do is the fact that the S(A) thought was active in my mind immediately beforehand, and had positive valence. Right?

There is just one problem that Libet discovered: There is no time for S(A) to cause A.

My favorite example is throwing a ball: A is the releasing of the ball at the right moment to hit a target. This requires millisecond precision of release. The S(A) is precisely timed to coincide with the release. It feels like you are releasing the ball at the moment your hand releases it. But that can't be true because the signal from the brain alone takes longer than the duration of a thought. If your theory were right, you would feel the intention to release the ball and a moment later would have the sensation of the result happening.

Now, one solution around this would be to time-tag thoughts and reorder them afterwards, maybe in memory - a bit like out-of-order execution in CPUs handles parallel execution of sequential instructions. But I'm not sure that is what is going on or that you think it is.

So, my conclusion is that there is a common cause of both S(A) and A.

And my interpretation of Daniel Ingram's comments is different from yours.

In Mind and Body, the earliest insight stage, those who know what to look for and how to leverage this way of perceiving reality will take the opportunity to notice the intention to breathe that precedes the breath, the intention to move the foot that precedes the foot moving, the intention to think a thought that precedes the thinking of the thought, and even the intention to move attention that precedes attention moving.

These "intentions to think/do" that Ingram refers to are not things untrained people can notice. There are things in the mind that precede the S(A) and A and cause them, but people normally can't notice them, and thus they can't be S(A). I say these precursors are the same things picked up in the Libet experiments and neurological measurements.

We’re definitely talking past each other somehow. For example, your statement “The S(A) is precisely timed to coincide with the release” is (to me) obviously false. In the case of “deciding to throw a ball”, A would be the time-extended action of throwing the ball, and S(A) would be me “making a decision of my free will” to throw the ball, which happens way before the release, indeed it happens before I even start moving my arm. Releasing the ball isn’t a separate “decision” but rather part of the already-decided course-of-action.

(Again, I’m definitely not arguing that every action is this kind of stereotypical [S(A); A] “intentional free will decision”, or even that most actions are. Non-examples include every action you take in a flow state, and indeed you could say that every day is full of little “micro-flow-states” that last for even just a few seconds when you’re doing something rather than self-reflecting.)

…Then after the fact, I might recall the fact that I released the ball at such-and-such moment. But that thought is not actually about an “action” for reasons discussed in §2.6.1.

We’re definitely talking past each other somehow.

I guess this will only stop when we have made our thoughts clear enough for an implementation that allows us to inspect the system for S(A) and A. Which is OK.

At least this has helped clarify that you think of S(A) to (often) precede A by a lot, which wasn't clear to me. I think this complicates the analysis because of where to draw the line. Would it count if I imagine throwing the ball one day (S(A)) but execute it during the game the next day, as intended?

What do you make of the Libet experiments?

At least this has helped clarify that you think of S(A) to (often) precede A by a lot, which wasn't clear to me.

Not really; instead, I think throwing the ball is a time-extended course of action, as most actions are. If I “decide” to say a sentence or sing a song, I don’t separately “decide” to say the next syllable, then “decide” to say the next syllable after that, etc.

What do you make of the Libet experiments?

He did a bunch of experiments, I’m not sure which ones you’re referring to. (The “conscious intentions” one?) The ones I’ve read about seem mildly interesting. I don’t think they contradict anything I wrote or believe. If you do think that, feel free to explain. :)

I mean this (my summary of the Libet experiments and their replications):

- Brain activity detectable with EEG (Readiness Potential) begins between 350 ms and multiple seconds (depending on experiment and measurement resolution) before the person consciously feels the intention to act (voluntary motor movement).

- Subjects report becoming aware of their intention to act (via clock tracking) about 200 ms before the action itself (e.g., pressing a button). 200 ms seems relatively fixed, but cognitive load can delay it.

To give a specific quote:

Matsuhashi and Hallett: Our result suggests that the perception of intention rises through multiple levels of awareness, starting just after the brain initiates movement.

[...]

1. The first detected event in most subjects was the onset of BP. They were not aware of the movement genesis at this time, even if they were alerted by tones.

2. As the movement genesis progressed, the awareness state rose higher and after the T time, if the subjects were alerted, they could consciously access awareness of their movement genesis as intention. The late BP began within this period.

3. The awareness state rose even higher as the process went on, and at the W time it reached the level of meta-awareness without being probed. In Libet et al’s clock task, subjects could memorize the clock position at this time.

4. Shortly after that, the movement genesis reached its final point, after which the subjects could not veto the movement any more (P time).[...]

We studied the immediate intention directly preceding the action. We think it best to understand movement genesis and intention as separate phenomena, both measurable. Movement genesis begins at a level beyond awareness and over time gradually becomes accessible to consciousness as the perception of intention.

Now, I think you'd say that what they measured wasn't S(A) but something else that is causally related, but then you are moving farther away from patterns we can observe in the brain. And your theory still has to explain the subclass of those S(A) that they did measure. The participants apparently thought these to be their decisions S(A) about their actions A.

Thanks!

I don’t think S(A) or any other thought bursts into consciousness from the void via an acausal act of free will—that was the point of §3.3.6. I also don’t think that people’s self-reports about what was going on in their heads in the immediate past should necessarily be taken at face value—that was the point of §2.3.

Every thought (including S(A)) begins its life as a little seed of activation pattern in some little part of the cortex, which gets gradually stronger and more widespread across the global workspace over the course of a fraction of a second. If that process gets cut off prematurely, then we don’t become aware of that thought at all, although sometimes we can notice its footprints via an appropriate attention-control query.

Does that help?

Maybe you’re thinking that, if I assert that a positive-valence S(A) caused A to happen, then I must believe that there’s nothing upstream that in turn caused S(A) to appear and to have positive valence? If so, that seems pretty silly to me. That would be basically the position that nothing can ever cause anything, right?

(“Your Honor, the victim’s death was not caused by my client shooting him! Rather, The Big Bang is the common cause of both the shooting and the death!” :-D )

I think your explanation in section 8.5.2 resolves our disagreement nicely. You refer to S(X) thoughts that "spawn up" successive thoughts that eventually lead to X (I'd say X') actions shortly after (or much later). While I was referring to S(X) that cannot give rise to X immediately. I think the difference was that you are more lenient with what X can be, such that S(X) can be about an X that is happening much later, which wouldn't work in my model of thoughts.

Explicit (self-reflective) desire

Statement: “I want to be inside.”

Intuitive model underlying that statement: There’s a frame (§2.2.3) “X wants Y” (§3.3.4). This frame is being invoked, with X as the homunculus, and Y as the concept of “inside” as a location / environment.

How I describe what’s happening using my framework: There’s a systematic pattern (in this particular context), call it P, where self-reflective thoughts concerning the inside, like “myself being inside” or “myself going inside”, tend to trigger positive valence. That positive valence is why such thoughts arise in the first place, and it’s also why those thoughts tend to lead to actual going-inside behavior.

In my framework, that’s really the whole story. There’s this pattern P. And we can talk about the upstream causes of P—something involving innate drives and learned heuristics in the brain. And we can likewise talk about the downstream effects of P—P tends to spawn behaviors like going inside, brainstorming how to get inside, etc. But “what’s really going on” (in the “territory” of my brain algorithm) is a story about the pattern P, not about the homunculus. The homunculus only arises secondarily, as the way that I perceive the pattern P (in the “map” of my intuitive self-model).

Thanks. It doesn't help because we already agreed on these points.

We both understand that there is a physical process in the brain - neurons firing etc. - as you describe in 3.3.6, that gives rise to a) S(A), b) A, and c) the precursors to both as measured by Libet and others.

We both know that people's self-reports are unreliable and informed by their intuitive self-models. To illustrate that I understand 2.3, let me give an example: My son has figured out that people hear what they expect to hear and experimented with leaving out fragments of words or sentences, enjoying how people never noticed anything was off (example: "ood morning"). Here, the missing part doesn't make it into people's awareness even though the whole sentence very well does.

I'm not asserting that there is nothing upstream of S(A) that is causing it. I'm asserting that an individual S(A) is not causing A. I'm asserting this because it can't, timing-wise, and, equivalently, because there is no neurological action path from S(A) to A. The only relation between S(A) and A is that S(A) and A co-occurring has statistically had positive valence in the past. And this co-occurrence is facilitated by a common precursor. But saying S(A) is causing A is as right or wrong as saying A is causing S(A).

(I'd rewrite (in 2.6.5) "Illusions of free will" to "Illusions of intentionality" since it doesn't seem very related to what people usually refer to with "free will" iiuc.)

I was under the impression that “illusions of free will” was a standard term in the literature, but I just double-checked and I guess I was wrong, it just happened to be in one paper I read. Oops. So I guess I’m entitled to use whatever term seems best to me.

I mildly disagree that it’s unrelated to “free will”, but I agree that all things considered your suggestion “illusions of intentionality” is a bit better. I’m changing it, thanks!

I am confused about the purpose of the "awareness and memory" section, and maybe disagree with the intuition said to be obvious in the second subsection. Is there some deeper reason you want to bring up how we self-model memory / something you wanted to talk about there that I missed?

I could have cut §2.4. It’s not particularly important for later posts in the series. I thought about it. But it was pretty short, and I judged that it might be marginally useful all things considered.

Even if you lack the §2.4.2 intuition linking amnesia to I-must-have-been-unconscious, other people certainly have that intuition, e.g. Clive Wearing “constantly believes that he has only recently awoken from a comatose state”. I thought it was worth saying why someone might find that to be intuitively appealing, even if they simultaneously have other countervailing intuitions.

I still don't get this "only one thing in awareness" thing. There are multiple neurons in cortex and I can imagine two apples - in what sense there can only be one thing in awareness?

Or equivalently, it corresponds equally well to two different questions about the territory, with two different answers, and there’s just no fact of the matter about which is the real answer.

Obviously the real answer is the model which is more veridical^^. The latter hindsight model is right not about the state of the world at t=0.1, but about what you later thought about the world at t=0.1.

I still don't get this "only one thing in awareness" thing. There are multiple neurons in cortex and I can imagine two apples - in what sense there can only be one thing in awareness?

One thought in awareness! Imagining two apples is a different thought from imagining one apple, right? They’re different generative models, arising in different situations, with different implications, different affordances, etc. Neither is a subset of the other. (I.e., there are things that I might do or infer in the context of one apple, that I would not do or infer in the context of two apples.)

I can have a song playing in my head while reading a legal document. That’s because those involve different parts of the cortex. In my terms, I would call that “one thought” involving both a song and a legal document. On the other hand, I can’t have two songs playing in my head simultaneously, nor can I be thinking about two unrelated legal documents simultaneously. Those involve the same parts of the cortex being asked to do two things that conflict. So instead, I’d have to flip back and forth.

There are multiple neurons in the cortex, but they’re not interchangeable. Again, I think autoassociative memory / attractor dynamics is a helpful analogy here. If I have a physical instantiation of a Hopfield network, I can’t query 100 of its stored patterns in parallel, right? I have to do it serially.
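To make the Hopfield analogy a bit more concrete, here is a minimal toy sketch in Python. Everything in it is an illustrative assumption (the two 8-unit patterns, the function names `train` and `recall`, the fixed update order); it is a textbook-style Hopfield network, not a claim about how the cortex works. The point is only that the network holds one state vector, so each query settles into a single stored pattern, and retrieving a second pattern takes a second query.

```python
# Toy Hopfield network: Hebbian weights, asynchronous sign updates.
# The patterns and all names here are hypothetical, chosen for illustration.

def train(patterns):
    """Build a symmetric weight matrix with zero diagonal (Hebbian rule)."""
    n = len(patterns[0])
    w = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j]
    return w

def recall(w, cue, max_sweeps=10):
    """Update units one at a time, in index order, until nothing changes."""
    s = list(cue)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            h = sum(w[i][j] * s[j] for j in range(len(s)))
            # A zero input field leaves the unit as it is.
            new = s[i] if h == 0 else (1 if h > 0 else -1)
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:
            break
    return s

# Two orthogonal stored patterns.
P1 = [1, 1, 1, 1, -1, -1, -1, -1]
P2 = [1, -1, 1, -1, 1, -1, 1, -1]
W = train([P1, P2])

noisy_p1 = [-1] + P1[1:]       # P1 with its first unit flipped
mixed_cue = P1[:4] + P2[4:]    # half of P1 glued to half of P2

print(recall(W, noisy_p1) == P1)   # True: the noisy cue is cleaned up to P1
print(recall(W, mixed_cue) == P2)  # True: the mixed cue settles into one pattern only
```

Which pattern the mixed cue falls into depends on the update order (here, index order happens to favor `P2`). Either way, the result is one stored pattern rather than both at once.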

I don’t pretend that I’m offering a concrete theory of exactly what data format a “generative model” is etc., such that song-in-head + legal-contract is a valid thought but legal-contract + unrelated-legal-contract is not a valid thought. …Not only that, but I’m opposed to anyone else offering such a theory either! We shouldn’t invent brain-like AGI until we figure out how to use it safely, and those kinds of gory details would be getting uncomfortably close, without corresponding safety benefits, IMO.

On the other hand, I can’t have two songs playing in my head simultaneously,

Tangent: I play at Irish sessions, and one of the things you have to do there is swap tunes. If you lead a transition you have to be imagining the next tune you're going to play at the same time as you're playing the current tune. In fact, often you have to decide on the next tune on the fly. This is a skill that takes some time to grok. You're probably conceptualizing the current and future tunes differently, but there's still a lot of overlap - you have to keep playing in sync with other people the entire time, while at the same time recalling and anticipating the future tune.