Positives of a future with human capability improvement over an AI future.

Beren Millidge has an essay arguing that a future in which humanity proceeds by biologically increasing its capabilities is scarier than a future in which we develop AGI. Here are some concluding sentences from his essay:

Ultimately, human intelligence amplification and the resulting biosingularity has a deeper and more intractable alignment problem than AI alignment, at least if we don’t just assume it away by asserting that humans and our transhuman creations just have some intrinsic and ineffable access to ‘human values’ that potential AIs lack.

The only potential positive as regards alignment of the biosingularity is that it will happen much later, most likely in the closing decades of the 21st century and around the end of the natural lifespans of my personal cohort. This gives significantly more time to prepare than AGI, which is likely coming much sooner, but the problem is much harder and requires huge advances in neuroscience and understanding of brain algorithms to even reach the level of control we have over today’s AI systems (which is likely far from sufficient).

I disagree with his thesis: I think that instead of creating AIs smarter than humans, it would be much better to proceed by increasing the capabilities of humans (for at least the next 100 years). [1] [2] Millidge's claim that the only potential positive of a biofoom is that it starts later seems clearly false. In this note, I will list other (imo important, pro tanto) positives of a human foom. I agree with Millidge that the AGI path also has positives; [3] these won't be discussed in the present note. To assess which path is better overall, one might want to compare the positives of the human path with those of the AGI path, [4] but I will not do that here. The rest of this note is the list of positives. [5]

more similar entity => more similar values

an argument in favor of the human path:

- assumption 1. it makes sense to speak of the values of an entity, and the values of an entity are some sort of kinda-smooth function of the entity's structure/constitution

- assumption 2. humanity (taken as an entity) currently has pretty good values

- conclusion. somewhat modified humanity will still have pretty good values. in particular, a somewhat bioenhanced humanity will still have pretty good values

- in contrast: an AGI future will involve the-process-happening-on-earth changing a lot more from what it is now, certainly at each point in time, but also by the time each higher capability level is reached

we can also give a similar argument for single humans:

- assumption 1. it makes sense to speak of the values of an entity, and the values of an entity are some sort of kinda-smooth function of the entity's structure/constitution

- assumption 2'. individual humans growing up in current human societies have pretty good values

- conclusion'. somewhat modified individual humans growing up in somewhat modified societies will still have pretty good values

- in contrast: an AGI future will involve AGIs that differ from current humans much more than these future humans do, and that grow up in contexts much more different from the contexts in which current humans grow up. this is certainly true at each time (or each time after the beginning of each "foom proper"), but also by the point each higher capability level is reached

said another way:

- the target of right values is drawn largely around where the arrow(s) that determined human values landed. shooting more similar arrows in a similar way is a decent strategy for hitting that target again.

[humans are]/[humanity is] just cool

- on the biofoom path, it will still be humanity, made of humans. humanity is cool. humans are cool. humans with increased capabilities and humanity with increased capabilities would be cool as well. like, of course it's possible for us to become even much cooler, but we're already occupying a very specific rare cool region in mindspace

- the smarter humans you make will be slotting into existing human institutions/organizations/communities. existing human institutions/organizations/communities will still be useful to the smarter humans. this is a reason for existing human institutions/organizations/communities to persist/thrive/develop. [6] and existing human institutions are carriers/supporters/implementers of human values and also cool

- the more capable humans will be continuing existing human (social, political, artistic, technological, scientific, mathematical, philosophical) projects and traditions. human thought will continue to develop. this is cool

- like, even if the humans with improved capabilities were to (let's say) form their own state and build a massive biodome around it and cause the outside to eventually become uninhabitable by polluting it or militarily leveling it to make room for automated factories or whatever, killing all other humans, that would be a very bad thing for them to do, but it would still be a human future, and that's pretty important

an analogy:

- suppose you are at intelligence level

- the agent could just be you after having taken a linear algebra course or after inventing some new methods in numerical linear algebra

- the agent could just be you after getting gene therapy which changes a single genetic variant to one that better supports learning (even in adulthood)

- the agent could be some sort of novel mind you create from scratch by some training procedure (ok maybe involving some culture which was also involved when you grew up)

- now, there is at least some range of your mind-making precision/understanding parameter from

- to spell out the analogy: with humanity as the agent, the human path is like becoming smarter via gene therapy and learning linear algebra, whereas the AI path is like creating a novel thing happening on earth largely in novel ways from scratch [9]

reasons why human thought would be guiding development more/better in a biofoom

- we have a lot of experience with and understanding about humans and specifically how to raise humans

- for example, voters, politicians, and researchers will all have much higher-quality starting intuitions about what millions of human einsteins would be like than about what a bunch of AIs would be like

- biofooming will be happening later, so we will have more time to prepare before it starts happening [10]

- but also biofooming will be happening much slower, so humans will have more time to think about each improvement

- like, the speed of development will be slower compared to the speed of human thought, so more human thought can go into each step of development. each unit step of development will be more human-thought-fully chosen

- this is true at the level of individual humans thinking about what should be done, and also true at the level of institutions and governments (like, a government running at human speed will be better able to legislate a slow biofoom)

reasons a biofooming world would be diffusely human-friendly (sociopolitical factors, anti-[gradual disempowerment] stuff)

- since humans will still be expensive to create and will continue to run on [at least order

- it will be somewhat difficult/weird for more capable humans to render the environment unlivable to less capable humans, because all humans have basically the same environmental requirements (for now) [11]

- ditto for good governance, laws, norms. e.g. it'd be quite natural for a law that makes it harder to manipulate the parents of an IVF einstein out of their property to also make it harder to manipulate the amish out of their property

- ditto for organizations and economic structures. e.g. it would be very natural for AIs to have a research process that operates in neuralese on a server, with translation costs being high enough that even if a human could do some useful task, doing the task yourself is cheaper than "translating" the task and context to a human; this effect is still present in human-human interactions but the degree to which it is present on the human path is smaller than the degree to which it is present on the AI path

- empathy is easier/"more natural" toward beings that are more like you. when agent B is more similar to agent A, it is more likely that [golden rule]/[categorical imperative] style reasoning leads A to treat B well [12]

- power concentration stuff is less bad in the biofoom case. it's not like there would be some entity controlling these more capable humans. these humans will be growing up in various families, involved in various communities/cultures/nations, going to various schools. in the biofoom case, RSI will not be localized to a single lab [13]. monopolies are much more natural in the AI case than in the biofoom case

- the AGI path involves power shifting away from people (and to AIs or companies) a lot more

- fooming happening slower means that the change in technological/social/cultural/environmental conditions during any

- fooming happening slower compared to the pace of life means that more life can be lived by existing living beings before beings at the frontier of development start to find them boring and useless (or like, i don't want to say that this happens necessarily, but there is a force pushing in this direction)

- in a biofoom, there will be a graph of caring connecting most humans to most other humans with not that many steps; it will only have such-and-such clustering coefficients

- human institutions, organizations, systems are generally more likely to survive for longer. in particular, the following specific human institutions/organizations/systems supporting the ability of each human to live a good long life are more likely to survive for longer: states, laws, systems enforcing laws, social safety nets, democracy, cryonics organizations

- technologies which benefit humans with higher capabilities will also be somewhat likely to benefit current-100-iq humans. eg cures to diseases and other medical treatments, better educational methods, new words/concepts, most consumer technologies. if humans remain central to [doing stuff]/[the economy], then a large fraction of economically rewarded innovation will be making humans more capable, and so technological progress will generally be pointed in a more humane direction

- in general: various mad local forces at play in the world (greed, status-seeking, etc) will stay pointed in a more humane direction

- also, governments will be much better able to understand, track, and deal with messy world problems in the biofoom case

we know humans [can be and often are] kind/nice to other humans

about humans, we know the following:

- most humans consider it very bad to personally kill other humans, and would not kill other humans in normal circumstances. most humans consider the killing of humans highly reprehensible in general [14]

- most humans consider it bad to steal from humans. most humans think human property rights should basically be respected. most humans are not in favor of taking property from people. most people would consider it very bad to take property from a person such that this person would then be unable to live an alright life

- probably there are some humans who have a deep enough commitment to humanity that they'd remain nice to all existing humans even after individually fooming a lot (though note this won't be happening in the human foom)

also:

- there are specific steps/niches in human evolutionary history which made us nicer to unrelated strangers

other factors

- there are factors much more tightly constraining capabilities in the biofoom case than in the AI foom case:

- brain volume is tough to increase by idk more than

- roughly, there is some not-THAT-large finite number of intelligence-increasing "genetic ideas" in the current gene pool, and for at least some initial period these set a cap on how far you can go biologically. it will be very hard to genetically write novel more capable human learning algorithms. the genome interface to mind reprogramming is kinda cursed

- it will be expensive and potentially immoral and illegal to "run experiments"


- humanity's correct values are somewhat well-tracked by reflection / self-endorsed development, and the biofoom path will be like that

- it makes sense to speak of what sort of reflection and development should happen, distinguishing this from development that is likely to happen. it is very much not true that anything goes! if it were likely/natural that our society would get replaced by a molecular squiggle maximizer, that wouldn't mean that our society's true values are to make lots of those molecular squiggles, and that wouldn't mean this was what should happen all along. whereas if we reflect carefully and understand more stuff and become better versions of ourselves and conclude that we should make lots of some molecular squiggles, then it's plausible that this was what should happen all along.

- it is (at least prima facie) extremely scary/reckless to have a step in your development where you hand things over to some novel mind created largely from scratch! this is done much more on the AI path than on the human path

- i say some more on good development and also the general topic of this note here: https://www.lesswrong.com/posts/iemgJhjNLa5eyevWR/kh-s-shortform?commentId=PHm2ZkagfyrhT2Wvz

a concluding remark

self-improvement generally has many good properties over creating a new agent/mind from scratch [15]

[1] i think we should ban AGI

[2] It is also a possibility that both options are bad. My view is that we should push forward with increasing human capabilities biologically and culturally/educationally. But I think this question deserves serious analysis, and there are certainly specific things here that one should be very careful about and regulate. However, this note will not be analyzing this question.

[3] That said, I think the analysis of the positives in his essay gets very many things wrong.

[4] But one also doesn't have to do that to compare the two paths. One can also just "directly" think about what would happen along each path.

[5] They are not listed in order of importance.

[6] clearly this is a reason for these to be around for longer in wall clock time, but also it's a reason for them to be around until higher capability levels

[7] in fact it seems plausible that, at least if the mind design has to be done from your own bounded perspective, it will keep being better to self-improve forever. i think this is plausible on this individual future life coolness axis and also all-things-considered.

[8] ok, if you (imo incorrectly) believe in some sort of soul theory of personal identity then maybe to make this a fair example you would need to imagine the soul getting detached in all three examples, but then maybe you will think all of these are suicides... so maybe this isn't a good analogy for you... but hmm i guess maybe you should also in the same sense believe in there being a soul attached to each society though, and then it would be a good analogy actually

[9] ok, there's a meaningful amount of shared culture. even if you thought human minds and AI minds are "mostly cultural" and that subbing out representation/learning/etc algorithms/structures and radically changing learning contexts doesn't make it legit to say AGI will be a novel thing created largely in novel ways from scratch, it is still at least a really big step; it's still creating some totally new guy

[10] as Millidge says

[11] yes, there are sort of examples like climate change. but it is still much easier to imagine entities that are just programs that can be run on arbitrary computers being totally fine with or even preferring a very different environment. there is a large difference in degree here

[12] and we at least know humans have a propensity to carry out and be moved by this style of reasoning

[13] or three labs or whatever. In reality, I think only a single lab will matter, absent strict capability regulation.

[14] there is a major issue around diffuse effects, like people and institutions currently not tracking [decreasing the lifespan of

[15] this is also important to track when thinking about AIs making more capable AIs

Hmmm, some good points. Clearly if I write something too complex this will take way too long, and therefore the cool voice is good.

Okay, yes humans can be cool but all humans cool?

Maybe some governments not cool? How does AI vs Bio affect if one big cool or many small cool? What if like homo deus we get separate Very Smart group of humans and one not so smart?

Human worse thing done worse than LLM worse thing done? Less control over range of expression? Moral mazes lead to psychopaths in control? Maybe non cool humans take control?

Yet, slow process good point. Coolness better chance if longer to remain cool.

Maybe democracy + debate cool? Totalitarianism not cool? Coolness is not group specific for AI or human? Coolness about how cool the decision process is? What does coolness attractor look like?

Cool.

What if like homo deus we get separate Very Smart group of humans and one not so smart?

I agree this would most likely be either somewhat bad or quite bad, probably quite bad (depending on the details), both causally and also indicatorily.

I'll restrict my discussion to reprogenetics, because I think that's the main way we get smarter humans. My responses would be "this seems quite unlikely in the short run (like, a few generations)" and "this seems pretty unlikely in the longer run, at least assuming it's in fact bad" and "there's a lot we can do to make those outcomes less likely (and less bad)".

Why unlikely in the short run? Some main reasons:

- Uptake of reprogenetics is slow (takes clock time), gradual (proceeds by small steps), and fairly visible (science generally isn't very secretive; and if something is being deployed at scale, that's easy to notice, e.g. many clinics offering something or some company getting huge investments). These give everyone more room to cope in general, as discussed above, and in particular give more time for people to notice emerging inequality and such. So humanity in general gets to learn about the tech and its meaning, institute social and governmental regulation, gain access, understand how to use it, etc.

- Likewise, the strength of the technology itself will grow gradually (though I hope not too slowly). Further, even as the newest technology gets stronger, the previous medium strength tech gets more uptake. This means there's a continuum of people using different levels of strength of the technology.

- Parents will most likely have quite a range of how much they want to use reprogenetics for various things. Some will only decrease their future children's disease risks; some will slightly increase IQ; some might want to increase IQ around as much as is available.

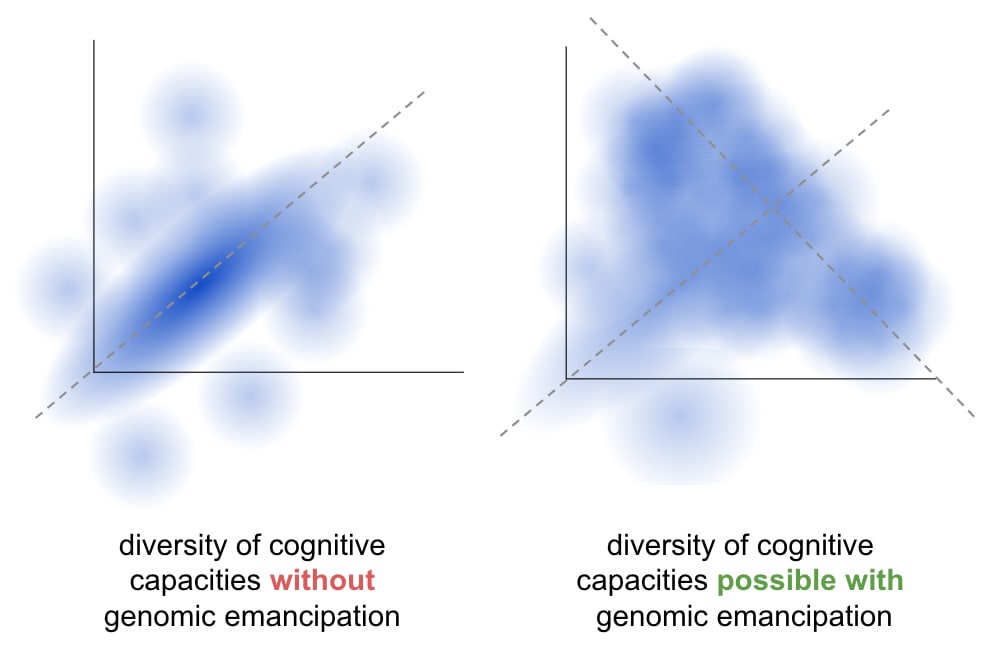

- IQ (and, even more so, other cognitive capacities and traits) is controlled...

- partly by genetic effects we can make use of (currently something in the ballpark of 20% or so);

- partly by genetic effects we can't make use of (very many of which might take a long time to make use of because they have small and/or rare effects, or because they have interactions with other genes and/or the environment);

- partly by non-genetic causes (unstructured environment such as randomness in fetal development, structured external environment such as culture, structured internal environment such as free self-creation / decisions about what to be or do).

- Thus, we cannot control these traits, in the sense of hitting a narrow target; we can only shift them around. We cannot make the distribution of a future child's traits be narrow. (This is a bad state of affairs in some ways, but has substantive redeeming qualities, which I'm invoking here: people couldn't firmly separate even if they wanted to (though this may need quantification to have much force).)

- Together, I suspect that the above points create, not separated blobs, but a broad continuum.

- As mentioned in the post, there are ceilings to human intelligence amplification that would probably be hit fairly quickly, and probably couldn't be bypassed quickly. (Who knows how long, but I'm imagining at least some decades; e.g. BCIs might take that long or longer to impact human general intelligence. Reprogenetics is the main strategy that takes advantage of evolution's knowledge about how to make capable brains, and I'm guessing that knowledge is more than we will work out ourselves in a couple decades, but not insanely much in the grand scheme of things.)
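A toy calculation can make the "shift, not narrow" point concrete. This is a sketch under loudly stated assumptions, not a model of real reprogenetics: it treats IQ as a standardized additive sum of a usable genetic component (reading the "ballpark of 20%" above, as an assumption, as 20% of trait variance) plus everything else, and it imagines the best case for control, in which the usable component is pinned exactly at a hypothetical target of +2 SD.

```python
import math
import random
import statistics

# Toy additive model (an assumption, not real genetics): a standardized trait is
#   z = sqrt(r2) * g + sqrt(1 - r2) * e
# where g is the genetic component we can act on and e is everything else
# (other genetic effects, fetal development, culture, a person's own choices).

def outcome_distribution(r2=0.2, g_target=2.0, iq_sd=15.0, n=200_000, seed=0):
    """Mean and SD of IQ-scaled outcomes when g is pinned at g_target (in SD units)."""
    rng = random.Random(seed)
    a, b = math.sqrt(r2), math.sqrt(1.0 - r2)
    # Best case for "control": g is fixed exactly, so only e still varies.
    samples = [100.0 + iq_sd * (a * g_target + b * rng.gauss(0.0, 1.0)) for _ in range(n)]
    return statistics.fmean(samples), statistics.stdev(samples)

mean, sd = outcome_distribution()
print(f"mean {mean:.1f}, sd {sd:.1f} (population sd is 15.0)")
```

Analytically, the mean is 100 + 15·√0.2·2 ≈ 113.4 and the spread is 15·√0.8 ≈ 13.4, so even perfect control of the usable component leaves a spread close to the population's 15 points. Under these assumptions, children of families making different choices would overlap heavily rather than separating into distinct groups.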

What can we do? Without going into detail on how, I'll just basically say "greatly increase equality of access through innovation and education, and research cultures to support those things".

Okay, I think the gradual point is a good one and also that it very much helps our institutions to be able to deal with increased intelligence.

I would be curious what you think about the idea of more permanent economic rifts and also the general economics of gene editing? Might it be smart to make it a public good instead?

Maybe there's something here about IQ being hereditary already, and thus the point about a more permanent two-caste society of smart and stupid people is redundant, but somehow the economics of private gene editing over long periods of time still feels a bit off to me?

I would be curious what you think about the idea of more permanent economic rifts and also the general economics of gene editing?

As a matter of science and technology, reprogenetics should be inexpensive. I've analyzed this area quite a bit (though not focused specifically on eventual cost), see https://berkeleygenomics.org/articles/Methods_for_strong_human_germline_engineering.html . My fairly strong guess is that it's perfectly feasible to have strong reprogenetics that's pretty inexpensive (on the order of $5k to $25k for a pretty strongly genomically vectored zygote). From a tech and science perspective, I think I see multiple somewhat-disjunctive ways, each of which is pretty plausibly feasible, and each of which doesn't seem to have any inputs that can't be made inexpensive.

(As a comparison point, IVF is expensive--something like $8k to $20k--but my guess is that this is largely because of things like regulatory restrictions (needing an MD to supervise egg retrieval, even though NPs can do it well), drug price lock-in (the drugs are easy to manufacture, so are available cheaply on gray markets), and simply economic friction/overhang (CNY is cheaper basically by deciding to be cheaper and giving away some concierge-ness). None of this solves things for IVF today; I'm just saying, it's not expensive due to the science and tech costing $20k.)

Assuming that it can be technically inexpensive, that cuts our work out for us: make it inexpensive, by

- Making the tech inexpensive

- Thinking not just about the current tech, but investing in the stronger tech (stronger -> less expensive https://berkeleygenomics.org/articles/Methods_for_strong_human_germline_engineering.html#strong-gv-and-why-it-matters )

- Culture of innovation

- Both internal to the field, and pressure / support from society and gvt

- Don't patent and then sit on it or keep it proprietary; instead publish science, or patent and license, or at least provide it as a platform service

- Avoiding large other costs

- Sane regulation

- No monopolies

- Subsidies

Might it be smart to make it a public good instead?

I definitely think that

- as much as possible, gvt should fund related science to be published openly; this helps drive down prices, enables more competitive last-mile industry (clinics, platforms for biological operations, etc.), and signals a societal value of caring about this and not leaving it up to raw market forces

- probably gvt should provide subsidies with some kind of general voucher (however, it should be a general voucher only, not a voucher for specific genomic choices--I don't want the gvt controlling people's genomic choices according to some centralized criterion, as this is eugenicsy, cf. https://berkeleygenomics.org/articles/Genomic_emancipation_contra_eugenics.html )

Is that what you mean? I don't think we can rely on gvt and philanthropic funding to build out a widely-accessible set of clinics / other practical reprogenetics services, so if you meant nationalizing the industry, my guess is no, that would be bad to do.

I meant public good in the basic economics sense, not necessarily the distribution mechanism; electricity and water are public goods, but they aren't necessarily provided by the government.

I've had the semi-ironic idea of setting up a "genetic lottery" if supply were capped, as it would redistribute things evenly (as long as people sign up evenly, which is not true).

Anyways, cool stuff, happy that someone is on top of this!

Okay, yes humans can be cool but all humans cool?

generally, humans are cool. in fact probably all current humans are intrinsically cool. a few are suffering very badly and say they would rather not exist, and in some cases their lives have been net negative so far. we should try to help these people. some humans are doing bad things to other humans and that's not cool. some humans are sufficiently bad to others that it would have been better if they were never born. such humans should be rehabilitated and/or contained, and conditions should be maintained/created in which this is disincentivized

Coolness is not group specific for AI or human?

not group specific in principle, but human life is pro tanto strongly cooler. but eg a mind uploaded human society would still be cool. continuing human life is very important. deep friendships with aliens should not be ruled out in principle, but should be approached with great caution. any claim that we should already care deeply about the possible lives of some not-specifically-chosen aliens that we might create, that we haven't yet created, and so that we have great reason to create them, is prima facie very unlikely. this universe probably only has negentropy for so many beings (if you try to dovetail all possible lives, you won't even get to running any human for a single step); we should think extremely carefully about which ones we create and befriend

What if like homo deus we get separate Very Smart group of humans and one not so smart?

Human worse thing done worse than LLM worse thing done? Less control over range of expression? Moral mazes lead to psychopaths in control? Maybe non cool humans take control?

i agree these are problems that would need to be handled on the human path

Moral mazes lead to psychopaths in control? Maybe non cool humans take control?

This is a significant worry--but my guess is that having lots more really smart people would make the problem get better in the long run. That stuff is already happening. Figuring out how to avoid it is a very difficult unsolved problem, which is thus likely to be heavily bottlenecked on ideas of various kinds (e.g. ideas for governance, for culture, for technology to implement good journalism, etc etc.).

Hmmm but what if human good not coupled with human wisdom? Maybe more intelligence more power seeking if not carefully implemented?

Probably better than doing the Big AI though.

Hmmm but what if human good not coupled with human wisdom? Maybe more intelligence more power seeking if not carefully implemented?

I think this is just not the case; I'd guess it's slightly the opposite on average, but in any case, I've never heard anyone make an argument for this based on science or statistics. (There could very well be good arguments of this kind; curious to hear!)

Separately, I'd suggest that humanity is bottlenecked on good ideas--including ideas for how to have good values / behave well / accomplish good things / coordinate on good things / support other people in getting more good. A neutral/average-goodness human, but smart, would I think want to contribute to those problems, and be more able to do so.

Tomorrow I'll share a paper I remember seeing, on the ability to do motivated reasoning and hold onto false views being higher for higher-IQ people (if it actually is statistically significant).

Also maybe the more important things to improve after a certain IQ might be openness and conscientiousness? Thoughts on that?

I do think it's actually quite possible to do some gene editing on the Big Five and ethics tbh, but we just gotta actually do it.

Personality is a more difficult issue because

- more potential for scientifically unknown effects--it's a complex trait

- more potential bad parental decision-making (e.g. not understanding what very high disagreeableness really means)

- more potential for parental or even state misuse (e.g. wanting a kid to be very obedient)

- more weirdness regarding consent and human dignity and stuff; I think it's pretty unproblematic to decrease disease risk and increase healthspan and increase capabilities, and only slightly problematic (due to competition effects and possible health issues) to tweak appearance (though I don't really care about this one); but I think it's kinda problematic with personality traits because you're messing with values in ways you don't understand; not so problematic that it's ruled out to tweak traits within the quite-normal human range, and I'm probably ultimately pretty in favor of it, but I'd want more sensitivity on these questions

- technically more difficult than IQ or disease because conceptually muddled, hard to measure, and maybe genuinely more genetically complex.

That said, yeah, I'm in favor of working out how to do it well. E.g. I'm interested in understanding and eventually measuring "wisdom" https://www.lesswrong.com/posts/fzKfzXWEBaENJXDGP/what-is-wisdom-1 .

I would agree that this is a weird incentive issue and that IQ is probably easier and less thorny than personality traits. With that being said here's a fun little thought on alternative ways of looking at intelligence:

Okay but why is IQ a lot more important than "personality"?

IQ is measured as g and is based on correlational evidence about your ability to progress in education and work life. This is one frame to have on it. I think it folds a lot of things about personality into a view that is based on a very specific psychometric frame?

Okay, let's look at intelligence from another angle: the predictive processing or RL angle, which is more about explore/exploit. How does intelligence show up there? How do we increase the intelligence of a predictive processing agent? How do the parameters for when to explore versus when to exploit, and the time horizon of future rewards, come into it?

Openness here would be the proclivity to explore and look at new sources of information, whilst conscientiousness is about the time horizon set by the discounting factor in reward learning. (Only correlationally, though; you could probably define new, better measures of this, since the Big Five traits are probably not the true names for these objectives.)
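The discounting half of this mapping can be made concrete with standard RL quantities. This is only a sketch: identifying conscientiousness with the discount factor gamma is the speculative mapping proposed above, and the reward sequences below are hypothetical. A common rule of thumb is that rewards beyond roughly 1/(1 - gamma) steps barely count, so a larger gamma means a longer effective horizon.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * r_t over a finite reward sequence."""
    return sum(r * gamma**t for t, r in enumerate(rewards))

def effective_horizon(gamma):
    """Rule of thumb: rewards much beyond ~1/(1 - gamma) steps contribute little."""
    return 1.0 / (1.0 - gamma)

# Hypothetical choice: 1 unit of reward now vs. 5 units after 10 steps of work.
immediate = [1.0]
delayed = [0.0] * 10 + [5.0]

for gamma in (0.8, 0.9, 0.99):
    prefers_delayed = discounted_return(delayed, gamma) > discounted_return(immediate, gamma)
    print(f"gamma={gamma}: horizon ~{effective_horizon(gamma):.0f} steps, "
          f"prefers delayed payoff: {prefers_delayed}")
```

With these numbers only the gamma=0.8 agent takes the immediate reward, since 5 * 0.8**10 ≈ 0.54 < 1 while 5 * 0.9**10 ≈ 1.74 > 1. The exploration side (how often to try unfamiliar options) would be a separate parameter, e.g. an epsilon in epsilon-greedy action selection, and is not modeled here.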

I think it is better for members of a society to be able to talk to each other and integrate information well, hence I think we should make openness higher from a collective intelligence perspective. I also think it is better if we imagine that we're playing longer-form games with each other, as that generally leads to more cooperative equilibria, and hence I think it would also be good if conscientiousness were higher.
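The claim that longer-form games support more cooperative equilibria has a standard textbook formalization in the repeated prisoner's dilemma. With per-round payoffs T > R > P (temptation to defect, reward for mutual cooperation, punishment for mutual defection) and a discount factor delta standing in for how much the future counts, the grim-trigger strategy sustains cooperation exactly when delta >= (T - R) / (T - P). The sketch below checks this numerically; the payoff values are hypothetical textbook numbers, and grim trigger is just one convenient strategy, not something the comment specifies.

```python
def critical_discount(T=5.0, R=3.0, P=1.0):
    """Smallest delta at which grim-trigger cooperation is an equilibrium."""
    return (T - R) / (T - P)

def cooperation_sustainable(delta, T=5.0, R=3.0, P=1.0):
    """Compare cooperating forever with defecting once and then being punished forever.

    Assumes 0 <= delta < 1 so the infinite sums converge.
    """
    cooperate_forever = R / (1.0 - delta)        # R + delta*R + delta**2*R + ...
    defect_once = T + delta * P / (1.0 - delta)  # T now, then P in every later round
    return cooperate_forever >= defect_once

print(f"threshold delta = {critical_discount()}")
for delta in (0.3, 0.6, 0.9):
    print(f"delta={delta}: cooperation sustainable: {cooperation_sustainable(delta)}")
```

With T=5, R=3, P=1 the threshold is (5 - 3) / (5 - 1) = 0.5, so delta=0.3 fails while 0.6 and 0.9 sustain cooperation: the more weight the future gets, the more cooperation is self-enforcing.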

(The paper I saw didn't replicate btw, so I walk back the "intelligence makes you more ignorant" point.)

(Also here's a paper talking about the ability to be creative having a threshold effect around 120 iq with openness mattering more after that, there's a bunch more stuff like this if you search for it.)


To speculate, it might be the case that effects like this one are at least to some extent due to modern society not being well-adapted to empowering very-high-g[1] people, and instead putting more emphasis on "no one being left behind"[2]. Like, maybe you actually need a proper supportive environment (that is relatively scarce in the modern world) to reap the gains from very high g, in most cases.

(Not confident about the size of the effect (though I'm sure it's at least somewhat true) or about the relevance for the study you're citing, especially after thinking it through a bit after writing this, but I'm leaving it for the sake of expanding the hypothesis space.)

But, if it's not that, then the threshold thing is interesting and weird.

I would hypothesise that it is more about the underlying ability to use the engine that is intelligence. If we use the classic Eliezer definition of intelligence as the ability to hit a target (I think it is in the Sequences, at least), then that is only half of the problem, because you have to choose a problem space as well.

Part of intelligence is probably choosing a good problem space, but I think the information sampling, and the general knowledge level of the people, institutions and information sources around you, is quite important to that sampling process. Hence, if you're better at integrating diverse sources of information, you're likely better at making progress.

Finally, I think there's something like a weird scientific version of frame control: a lot of science is about asking the right question, and getting exposure to more ways of asking questions leads to better ways of asking questions.

So to use your intelligence you need to wield it well, and wielding it well partly involves working on the right questions. But if you're not smart enough to solve the questions in the first place, it doesn't really matter whether you ask the right question.

a few thoughts on hyperparams for a better learning theory (for understanding what happens when a neural net is trained with gradient descent)

Having found myself repeating the same points/claims in various conversations about what NN learning is like (especially around singular learning theory), I figured it's worth writing some of them down. My typical confidence in a claim below is like 95%[1]. I'm not claiming anything here is significantly novel. The claims/points:

- local learning (eg gradient descent) strongly does not find global optima. insofar as running a local learning process from many seeds produces outputs with 'similar' (train or test) losses, that's a law of large numbers phenomenon[2], not a consequence of always finding the optimal neural net weights.[3][4]

- if your method can't produce better weights: were you trying to produce better weights by running gradient descent from a bunch of different starting points? getting similar losses this way is a LLN phenomenon

- maybe this is a crisp way to see a counterexample instead: train, then identify a 'lottery ticket' subnetwork after training like done in that literature. now get rid of all other edges in the network, and retrain that subnetwork either from the previous initialization or from a new initialization — i think this literature says that you get a much worse loss in the latter case. so training from a random initialization here gives a much worse loss than possible

- dynamics (kinetics) matter(s). the probability of getting to a particular training endpoint is highly dependent not just on stuff that is evident from the neighborhood of that point, but on there being a way to make those structures incrementally, ie by a sequence of local moves each of which is individually useful.[5][6][7] i think that this is not an academic correction, but a major one — the structures found in practice are very massively those with sensible paths into them and not other (naively) similarly complex structures. some stuff to consider:

- the human eye evolving via a bunch of individually sensible steps, https://en.wikipedia.org/wiki/Evolution_of_the_eye

- (given a toy setup and in a certain limit,) the hardness of learning a boolean function being characterized by its leap complexity, ie the size of the 'largest step' between its fourier terms, https://arxiv.org/pdf/2302.11055

- imagine a loss function on a plane which has a crater somewhere and another crater with a valley descending into it somewhere else. the local neighborhoods of the deepest points of the two craters can look the same, but the crater with a valley descending into it will have a massively larger drainage basin. to say more: the crater with a valley is a case where it is first loss-decreasing to build one simple thing, (ie in this case to fix the value of one parameter), and once you've done that loss-decreasing to build another simple thing (ie in this case to fix the value of another parameter); getting to the isolated crater is more like having to build two things at once. i think that with a reasonable way to make things precise, the drainage basin of a 'k-parameter structure' with no valley descending into it will be exponentially smaller than that of eg a 'k-parameter structure' with 'a k/2-parameter valley' descending into it, which will be exponentially smaller still than a 'k-parameter structure' with a sequence of valleys of slowly increasing dimension descending into it

- it seems plausible to me that the right way to think about stuff will end up revealing that in practice there are basically only systems of steps where a single [very small thing]/parameter gets developed/fixed at a time

- i'm further guessing that most structures basically have 'one way' to descend into them (tho if you consider sufficiently different structures to be the same, then this can be false, like in examples of convergent evolution) and that it's nice to think of the probability of finding the structure as the product over steps of the probability of making the right choice on that step (of falling in the right part of a partition determining which next thing gets built)

- one correction/addition to the above is that it's probably good to see things in terms of there being many 'independent' structures/circuits being formed in parallel, creating some kind of ecology of different structures/circuits. maybe it makes sense to track the 'effective loss' created for a structure/circuit by the global loss (typically including weight norm) together with the other structures present at a time? (or can other structures do sufficiently orthogonal things that it's fine to ignore this correction in some cases?) maybe it's possible to have structures which were initially independent be combined into larger structures?[8]

- everything is a loss phenomenon. if something is ever a something-else phenomenon, that's logically downstream of a relation between that other thing and loss (but this isn't to say you shouldn't be trying to find these other nice things related to loss)

- grokking happens basically only in the presence of weight regularization, and it has to do with there being slower structures to form which are eventually more efficient at making logits high (ie more logit bang for weight norm buck)

- in the usual case that generalization starts to happen immediately, this has to do with generalizing structures being stronger attractors even at initialization. one consideration at play here is that

- nothing interesting ever happens during a random walk on a loss min surface

- it's not clear that i'm conceiving of structures/circuits correctly/well in the above. i think it would help to have a library of like >10 well-understood toy models (as opposed to like the maybe 1.3 we have now), and to be very closely guided by them when developing an understanding of neural net learning
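The crater-and-valley picture from the list above can be checked in a discrete toy. This is a minimal sketch built on hypothetical choices: a 41×41 grid, cone-shaped craters, a linear trench, and steepest descent over grid neighbors standing in for gradient descent. Crater A is isolated. Crater B has the same cone shape, plus a trench along its row whose floor slopes toward it, so one coordinate can be fixed first and the other afterwards. The toy illustrates only the 2D drainage-basin point and says nothing about the exponential scaling claim.

```python
import math

N = 41                    # hypothetical grid size: parameters (i, j) with 0 <= i, j < N
A_CENTER = (30, 30)       # isolated crater
B_CENTER = (10, 10)       # crater with a trench (valley) leading into it
RADIUS = 5.0              # both craters have this radius and the same cone shape
TRENCH_HALF_WIDTH = 3.0   # trench runs along the row j == B_CENTER[1]

def cone(i, j, center, depth=1.0):
    """Crater: loss -depth at the center, rising linearly to 0 at distance RADIUS."""
    dist = math.hypot(i - center[0], j - center[1])
    return -depth * max(0.0, 1.0 - dist / RADIUS)

def trench(i, j, v=1.0, g=0.02):
    """Valley: moving j toward B's row lowers the loss on its own (first step);
    inside the trench the floor also slopes down toward B's column (second step)."""
    across = max(0.0, 1.0 - abs(j - B_CENTER[1]) / TRENCH_HALF_WIDTH)
    return -across * (v - g * abs(i - B_CENTER[0]))

def loss(i, j):
    return cone(i, j, A_CENTER) + cone(i, j, B_CENTER) + trench(i, j)

def descend(i, j):
    """Discrete steepest descent: repeatedly move to the lowest of the 8 neighbors
    if it is strictly lower than the current cell; return where this stops."""
    while True:
        best_loss, best_cell = loss(i, j), (i, j)
        for di in (-1, 0, 1):
            for dj in (-1, 0, 1):
                ni, nj = i + di, j + dj
                if 0 <= ni < N and 0 <= nj < N and loss(ni, nj) < best_loss:
                    best_loss, best_cell = loss(ni, nj), (ni, nj)
        if best_cell == (i, j):
            return best_cell
        i, j = best_cell

def basin_sizes():
    """Count how many starting cells drain into each crater (or get stuck on the plateau)."""
    counts = {"A": 0, "B": 0, "neither": 0}
    for i in range(N):
        for j in range(N):
            end = descend(i, j)
            if end == A_CENTER:
                counts["A"] += 1
            elif end == B_CENTER:
                counts["B"] += 1
            else:
                counts["neither"] += 1
    return counts

print(basin_sizes())
```

Near their centers the two craters share the same cone shape, but the trench gives crater B a much larger drainage basin. With these settings, B's basin should come out more than twice the size of A's. The exact counts depend entirely on the made-up parameters.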

some related (more meta) thoughts

- to do interesting/useful work in learning theory (as of 2024), imo it matters a lot that you think hard about phenomena of interest and try to build theory which lets you make sense of them, as opposed to holding fast to an existing formalism and trying to develop it further / articulate it better / see phenomena in terms of it

- this is somewhat downstream of current formalisms imo being bad, it imo being appropriate to think of them more as capturing preliminary toy cases, not as revealing profound things about the phenomena of interest, and imo it being feasible to do better

- but what makes sense to do can depend on the person, and it's also fine to just want to do math lol

- and it's certainly very helpful to know a bunch of math, because that gives you a library in terms of which to build an understanding of phenomena

- it's imo especially great if you're picking phenomena to be interested in with the future going well around ai in mind

(* but it looks to me like learning theory is unfortunately hard to make relevant to ai alignment[9])

acknowledgments

these thoughts are sorta joint with Jake Mendel and Dmitry Vaintrob (though i'm making no claim about whether they'd endorse the claims). also thank u for discussions: Sam Eisenstat, Clem von Stengel, Lucius Bushnaq, Zach Furman, Alexander Gietelink Oldenziel, Kirke Joamets

with the important caveat that, especially for claims involving 'circuits'/'structures', I think it's plausible they are made in a frame which will soon be superseded or at least significantly improved/clarified/better-articulated, so it's a 95% given a frame which is probably silly ↩︎

train loss in very overparametrized cases is an exception. in this case it might be interesting to note that optima will also be off at infinity if you're using cross-entropy loss, https://arxiv.org/pdf/2006.06657 ↩︎
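Here is a minimal illustration of the cross-entropy point in this footnote. It assumes hypothetical linearly separable 1-D data and uses the binary (logistic) form of cross-entropy with labels in {-1, +1}. Every example has positive margin, so scaling the weight up lowers every term. The loss therefore approaches 0 without any finite weight attaining it.

```python
import math

# Hypothetical linearly separable 1-D data: positive label exactly when x > 0.
xs = [-2.0, -1.0, 1.0, 3.0]
ys = [-1, -1, 1, 1]

def logistic_loss(w):
    """Mean binary cross-entropy for the model P(y | x) = sigmoid(y * w * x),
    written as log(1 + exp(-margin)) with margin = y * w * x."""
    return sum(math.log1p(math.exp(-y * w * x)) for x, y in zip(xs, ys)) / len(xs)

weights = [1.0, 2.0, 4.0, 8.0, 16.0]
losses = [logistic_loss(w) for w in weights]
for w, l in zip(weights, losses):
    print(f"w={w:5.1f}  loss={l:.6f}")
```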

also, gradient descent is very far from doing optimal learning in some solomonoff sense — though it can be fruitful to try to draw analogies between the two — and it is also very far from being the best possible practical learning algorithm ↩︎

by it being a law of large numbers phenomenon, i mean sth like: there are a bunch of structures/circuits/pattern-completers that could be learned, and each one gets learned with a certain probability (or maybe a roughly given total number of these structures gets learned), and loss is roughly some aggregation of indicators for whether each structure gets learned — an aggregation to which the law of large numbers applies ↩︎
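Below is a toy version of this law-of-large-numbers picture, as a sketch. It adds assumptions the footnote does not make: 2000 hypothetical structures are learned independently, each with its own fixed probability, and loss is the fraction of structures not learned. Under these assumptions, different seeds land at nearly the same loss, yet none gets close to the optimum of 0.

```python
import random
import statistics

def simulate_run(probs, rng):
    """One hypothetical training run: structure k is learned independently with
    probability probs[k]; loss is the fraction of structures NOT learned,
    so 0.0 would be the global optimum."""
    learned = sum(rng.random() < p for p in probs)
    return 1.0 - learned / len(probs)

rng = random.Random(0)
probs = [rng.uniform(0.2, 0.9) for _ in range(2000)]   # hypothetical per-structure probabilities
losses = [simulate_run(probs, rng) for _ in range(50)]  # 50 "seeds"

print(f"mean loss {statistics.mean(losses):.3f}, std across seeds {statistics.stdev(losses):.4f}")
print(f"best seed {min(losses):.3f}, global optimum 0.0")
```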

to say more: any concept/thinking-structure in general has to be invented somehow — there in some sense has to be a 'sensible path' to that concept — but any local learning process is much more limited than that still — now we're forced to have a path in some (naively seen) space of possible concepts/thinking-structures, which is a major restriction. eg you might find the right definition in mathematics by looking for a thing satisfying certain constraints (eg you might want the definition to fit into theorems characterizing something you want to characterize), and many such definitions will not be findable by doing sth like gradient descent on definitions ↩︎

ok, (given an architecture and a loss,) technically each point in the loss landscape will in fact have a different local neighborhood, so in some sense we know that the probability of getting to a point is a function of its neighborhood alone, but what i'm claiming is that it is not nicely/usefully a function of its neighborhood alone. to the extent that stuff about this probability can be nicely deduced from some aspect of the neighborhood, that's probably 'logically downstream' of that aspect of the neighborhood implying something about nice paths to the point. ↩︎

also note that the points one ends up at in LLM training are not local minima — LLMs aren't trained to convergence ↩︎

i think identifying and very clearly understanding any toy example where this shows up would plausibly be better than anything else published in interp this year. the leap complexity paper does something a bit like this but doesn't really do this ↩︎
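For reference, here is a brute-force sketch of leap complexity, as I understand the definition in the leap complexity paper. A function is described by its Fourier support, a list of coordinate sets with one set per monomial. Its leap is obtained by ordering the monomials so as to minimize the largest number of new coordinates any single step introduces. The function name is hypothetical, and the search over all orderings is exponential, so this only works for tiny examples.

```python
from itertools import permutations

def leap_complexity(support):
    """Leap of a function given its Fourier support (a list of coordinate sets,
    one per monomial): minimize, over orderings of the monomials, the largest
    number of not-yet-seen coordinates that any single monomial introduces.
    Brute force over all orderings, so only usable for a handful of monomials."""
    if not support:
        return 0
    best = None
    for order in permutations(support):
        seen = set()
        worst_step = 0
        for monomial in order:
            worst_step = max(worst_step, len(set(monomial) - seen))
            seen |= set(monomial)
        best = worst_step if best is None else min(best, worst_step)
    return best

staircase = [{1}, {1, 2}, {1, 2, 3}]  # x1 + x1*x2 + x1*x2*x3
parity = [{1, 2, 3}]                  # x1*x2*x3
print(leap_complexity(staircase), leap_complexity(parity))
```

The staircase can be built one new coordinate at a time, so it has leap 1. The parity x1x2x3 needs all three coordinates at once, so it has leap 3.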

i feel like i should clarify here though that i think basically all existing alignment research fails to relate much to ai alignment. but then i feel like i should further clarify that i think each particular thing sucks at relating to alignment after having thought about how that particular thing could help, not (directly) from some general vague sense of pessimism. i should also say that if i didn't think interp sucked at relating to alignment, i'd think learning theory sucks less at relating to alignment (ie, not less than interp but less than i currently think it does). but then i feel like i should further say that fortunately you can just think about whether learning theory relates to alignment directly yourself :) ↩︎

Simon-Pepin Lehalleur weighs in on the DevInterp Discord:

I think his overall position requires taking degeneracies seriously: he seems to be claiming that there is a lot of path dependency in weight space, but very little in function space 😄

In general his position seems broadly compatible with DevInterp:

- models learn circuits/algorithmic structure incrementally

- the development of structures is controlled by loss landscape geometry

- and also possibly in more complicated cases by the landscapes of "effective losses" corresponding to subcircuits...

This perspective certainly is incompatible with a naive SGD = Bayes = Watanabe's global SLT learning process, but I don't think anyone has (ever? for a long time?) made that claim for non-toy models.

It seems that the difference with DevInterp is that

- we are more optimistic that it is possible to understand which geometric observables of the landscape control the incremental development of circuits

- we expect, based on local SLT considerations, that those observables have to do with the singularity theory of the loss and also of sub/effective losses, with the LLC being the most important but not the only one

- we dream that it is possible to bootstrap this to a full-fledged S4 correspondence, or at least to get as close as we can.

Ok, no problem. You can also add the following:

I am sympathetic to, but also unsatisfied with, a strong empiricist position about deep learning. It seems to me that it is based on a slightly misapplied physical, and specifically thermodynamic, intuition: namely, that we can just observe a neural network and see/easily guess what the relevant "thermodynamic variables" of the system are.

For ordinary 3d physical systems, we tend to know or easily discover those thermodynamic variables through simple interactions/observations. But a neural network is an extremely high-dimensional system which we can only "observe" through mathematical tools. The loss is clearly one such thermodynamic variable, but if we expect NNs to be in some sense stat mech systems, it can't be the only one (otherwise the learning process would be much more chaotic and unpredictable). One view of DevInterp is that we are "just" looking for those missing variables...

I'd be curious about hearing your intuition re " i'm further guessing that most structures basically have 'one way' to descend into them"

When fooming, uphold the option to live in an AGI-free world.

There are people who think (imo correctly) that there will be at least one vastly superhuman AI in the next 100 years by default and (imo incorrectly) that proceeding along the AI path does not lead to human extinction or disempowerment by default. My anecdotal impression is that a significant fraction (maybe most) of such people think (imo incorrectly) that letting Anthropic/Claude do recursive self-improvement and be a forever-sovereign would probably go really well for humanity. The point of this note is to make the following proposal and request: if you ever let an AI self-improve, or more generally if you have AIs creating successor AIs, or even more generally if you let the AI world develop and outpace humans in some other way, or if you try to run some process where boxed AIs are supposed to create an initial ASI sovereign, or if you try to have AIs "solve alignment" [1] (in one of the ways already listed, or in some other way), or if you are an AI (or human mind upload) involved in some such scheme, [2] try to make it so the following property is upheld:

- It should be possible for each current human to decide to go live in an AGI-free world. In more detail:

- There is to be (let's say) a galaxy such that AGI is to be banned in this galaxy forever, except for AGI which does some minimal stuff sufficient to enforce this AI ban. [3]

- There should somehow be no way for anything from the rest of the universe to affect what happens in this galaxy. In particular, there should probably not be any way for people in this galaxy to observe what happened elsewhere.

- If a person chooses to move to this galaxy, they should wake up on a planet that is as much like pre-AGI Earth as possible given the constraints that AGI is banned and that probably many people are missing (because they didn't choose to move to this galaxy). Some setup should be found which makes institutions as close as possible to current institutions still as functional as possible in this world despite most people who used to play roles in them potentially being missing.

- For example, it might be possible to set this up by having the galaxy be far enough from all other intelligent activity that because of the expansion of the universe, no outside intelligent activity could be seen from this galaxy. In that case, the humans who choose to go live there would maybe be in cryosleep for a long time, and the formation of this galaxy could be started at an appropriate future time.

- One should try to minimize the influence of any existing AGI on a human's thinking before they are asked if they want to make this decision. Obviously, manipulation is very much not allowed. If some manipulation has already happened, it should probably be reversed as much as possible. Ideally, one would ask the version of the person from the world before AGI.

- Here are some further clarifications about the setup:

- Of course, if the resources in this galaxy are used in a way considered highly wasteful by the rest of the universe, then nothing is to be done about that by the rest of the universe.

- If the people in this galaxy are about to kill themselves (e.g. with engineered pathogens), then nothing is to be done about that. (Of course, except that: the AI ban is supposed to make it so they don't kill themselves with AI.)

- Yes, if the humanity in this galaxy becomes fascist or runs a vast torturing operation (like some consider factory farming to be), then nothing is to be done about that either.

- We might want to decide more precisely what we mean by the world being "AGI-free". Is it fine to slowly augment humans more and more with novel technological components, until the technological components are eventually together doing more of the thinking-work than currently existing human thinking-components? Is it fine to make a human mind upload?

- I think I would prefer a world in which it is possible for humans to grow vastly more intelligent than we are now, if we do it extremely slowly+carefully+thoughtfully. It seems difficult/impossible to concretely spell out an AI ban ahead of time that allows this. But maybe it's fine to keep this not spelled out — maybe it's fine to just say something like what I've just said. After all, the AI banning AI for us will have to make very many subtle interpretation decisions well in any case.

- We can consider alternative requests. Here are some parameters that could be changed:

- Instead of AI being banned in this galaxy forever, AI could be banned only for 1000 or 100 years.

- Maybe you'd want this because you would want to remain open to eventual AI involvement in human life/history, just if this comes after a lot more thinking and at a time when humanity is better able to make decisions thoughtfully.

- Another reason to like this variant is that it alleviates the problem with precisifying what "ban AI" means — now one can try to spell this out in a way that "only" has to continue making sense over 100 or 1000 years of development.

- Instead of giving each human the option to move to this galaxy, you could give each human the option to branch into two copies, with one moving to this galaxy and one staying in the AI-affected world.

- The total amount of resources in this AI-free world should maybe scale with the number of people that decide to move there. Naively, there should be order reachable galaxies per person alive, so the main proposal which just allocates a single galaxy to all the people who make this decision asks for much less than what an even division heuristic suggests.

- We could ask AIs to do some limited stuff in this galaxy (in addition to banning AGI).

- Some example requests:

- We might say that AIs are also allowed to make it so death is largely optional for each person. This could look like unwanted death being prevented, or it could look like you getting revived in this galaxy after you "die", or it could look like you "going to heaven" (ie getting revived in some non-interacting other place).

- We might ask for some starting pack of new technologies.

- We might ask for some starting pack of understanding, e.g. for textbooks providing better scientific and mathematical understanding and teaching us to create various technologies.

- We might say that AIs are supposed to act as wardens to some sort of democratic system. (Hmm, but what should be done if the people in this galaxy want to change that system?)

- We might ask AIs to maintain some system humans in this galaxy can use to jointly request new services from the AIs.

- However, letting AIs do a lot of stuff is scary — it's scary to depart from how human life would unfold without AI influence. Each of the things in the list just provided would constitute/cause a big change to human life. Before/when we change something major, we should take time to ponder how our life is supposed to proceed in the new context (and what version of the change is to be made (if any)), so we don't [lose ourselves]/[break our valuing].


- There could be many different AI-free galaxies with various different parameter settings, with each person getting to choose which one(s) to live in. At some point this runs into a resource limit, but it could be fine to ask that each person minimally gets to design the initial state of one galaxy and send their friends and others invites to have a clone come live in it.


- Here are some remarks about the feasibility and naturality of this scheme:

- If you think letting Anthropic/Claude RSI would be really great, you should probably think that you could do an RSI with this property.

- In fact, in an RSI process which is going well, I think it is close to necessary that something like this property is upheld. Like, if an RSI process would not lead to each current person [4] being assigned at least (say) of all accessible resources, then I think that roughly speaking constitutes a way in which the RSI process has massively failed. And, if each person gets to really decide how to use at least of all accessible resources [5] , then even a group of people should be able to decide to go live in their own AGI-free galaxy.

- I guess one could disagree that upholding something like this property is feasible conditional on a good foom being feasible, or that it is pretty much necessary for a good foom.

- One could think that it's basically fine to replace humans with random other intelligent beings (e.g. Jürgen Schmidhuber and Richard Sutton seem to think something like this), especially if these beings are "happy" or if their "preferences" are satisfied (e.g. Matthew Barnett seems to think this). One could be somewhat more attached to something human in particular, but still think that it's basically fine to have no deep respect for existing humans and make some new humans living really happy lives or something (e.g. some utilitarians think this). One could even think that good reflection from a human starting point leads to thinking this. I think this is all tragically wrong. I'm not going to argue against it here though.

- Maybe you could think the proposal would actually be extremely costly to implement for the rest of the universe, because it's somehow really costly to make everyone else keep their hands off this galaxy? I think this sort of belief is in a lot of tension with thinking a fooming Anthropic/Claude would be really great (except maybe if you somehow really have the moral views just mentioned).

- Similarly: you could think that the proposal doesn't make sense because the AIs in this galaxy that are supposed to be only enforcing an AI ban will have/develop lots of other interests and then claim most of the resources in this galaxy. I think this is again in a lot of tension with thinking a fooming Anthropic/Claude would be really great.

- One could say that even if it would be good if each person were assigned all these resources, it is weird to call it a "massive failure" if this doesn't happen, because future history will be a huge mess by default and the thing I'm envisaging has very nearly probability and it's weird to call the default outcome a "massive failure". My response is that while I agree this good thing has on my inside view probability of happening (because AGI researchers/companies will create random AI aliens who disempower humanity) and I also agree future history will be a mess, I think we probably have at least the following genuinely live path to making this happen: we ban AI (and keep making the implementation of the ban stronger as needed), figure out how to make development more thoughtful so we aren't killing ourselves in other ways either, grow ever more superintelligent together, and basically maintain the property that each person alive now (who gets cryofrozen) controls more than of all accessible resources [6] . [7]

- This isn't an exhaustive list of reasons to think it [is not pretty much necessary to uphold this property for a foom to be good] or [is not feasible to have a foom with this property despite it being feasible to have a good foom]. Maybe there are some reasonable other reasons to disagree?

- This property being upheld is also sufficient for the future to be like at least meaningfully good. Like, humans would minimally be able to decide to continue human life and development in this other galaxy, and that's at least meaningfully good, and under certain imo-non-crazy assumptions really quite good (specifically, if: humanity doesn't commit suicide for a long time if AI is banned AND many different humane developmental paths are ex ante fine AND utility scales quite sublinearly in the amount of resources).

- So, this property being upheld is arguably necessary for the future to be really good, and it is sufficient for the future to be at least meaningfully good.

- Also, it is natural to request that whoever is subjecting everyone to the end of the human era preserve the option for each person to continue their AI-free life.


- Here are some reasons why we should adopt this goal, i.e. consider each person to have the right to live in an AGI-free world:

- Most importantly: I think it helps you think better about whether an RSI process would go well if you are actually tracking that the fooming AI will have to do some concrete big difficult extremely long-term precise humane thing, across more than trillions of years of development. It helps you remember that it is grossly insufficient to just have your AI behave nicely in familiar circumstances and to write nice essays on ethical questions. There's a massive storm that a fragile humane thing has to weather forever. [8] The main reason I want you to keep these things in mind and so think better about development processes is this: I think there is roughly a logical fact that running an RSI process will not lead to the fragile humane thing happening [9] , and I think you might be able to see this if you think more concretely and seriously about this question.

- Adopting this goal entails a rejection of all-consuming forms of successionism. Like, we are saying: No, it is not fine if humans get replaced by random other guys! Not even if these random other guys are smarter than us! Not even if they are faster at burning through negentropy! Not even if there is a lot of preference-satisfaction going on! Not even if they are "sentient"! I think it would be good for all actors relevant to AI to explicitly say and track that they strongly intend to steer away from this.

- That said, I think we should in principle remain open to us humans reflecting together and coming to the conclusion that this sort of thing is right and then turning the universe into a vast number of tiny structures whose preferences are really satisfied or who are feeling a lot of "happiness" or whatever. But provisionally, we should think: if it ever looks like a choice would lead to the future not having a lot of room for anything human, then that choice is probably catastrophically bad.

- Adopting this also entails a rejection of more humane forms of utilitarianism that however still see humans only as cows to be milked for utility. Like, no, it is not fine if the actual current humans get killed and replaced. Not even if you create a lot of "really cool" human-like beings and have them experiencing bliss! Not even if you create a lot of new humans and have them have a bunch of fun! Not even if you create a lot of new humans from basically the 2025 distribution and give them space to live happy free lives! In general, I want us to think something like:

- There is an entire particular infinite universe of {projects, activities, traditions, ways of thinking, virtues, attitudes, principles, goals, decisions} waiting to grow out of each existing person. [10] Each of these moral universes should be treated with a lot of respect, and with much more respect than the hypothetical moral universes that could grow out of other merely possible humans. Each of us would want to be respected in this way, and we should make a pact to respect each other this way, and we should seek to create a world in which we are enduringly respected this way.

- These reasons apply mostly if, when thinking of RSI-ing, you are not already tracking that this fooming AI will have to do some concrete big difficult extremely long-term precise thing that deeply respects existing humans. If you are already tracking something like this, then plausibly you shouldn't also track the property I'm suggesting.

- E.g., it would be fine to instead track that each person should get a galaxy they can do whatever they want with/in. I guess I'm saying the AI-free part because it is natural to want something like that in your galaxy (so you get to live your own life in a way properly determined by you, without the immediate massive context shift coming from the presence of even "benign" highly capable AI, which could easily break everything imo), because it makes sense for many people to coordinate to move to this galaxy together (it's just better in many mundane ways to have people around, but also your thinking and specifically valuing probably need other people to work as they should), and because it is natural to ask that whoever is subjecting everyone to the end of the human era preserve the option for each person to continue an AI-free life in particular.

- Here are a few criticisms of the suggestion:

- a criticism: "If you run a foom, this property just isn't going to be upheld, even if you try to uphold it. And, if you run a foom, then having the goal of upholding this property in mind when you put the foom in motion will not even make much of a relative difference in the probability the foom goes well."

- my response: I think this. I still suggest that we have this goal in mind, for the reasons given earlier.

- a criticism: "If we imagine a foom being such that this option is upheld, then probably we should imagine it being such that better options are available to people as well."

- my response: I probably think this. I still suggest that we have this goal in mind, for the reasons given earlier.

I think this is probably a bad term that should be deprecated ↩︎

well, at least if the year is and we're not dealing with a foom of extremely philosophically competent and careful mind uploads or whatever, firstly, you shouldn't be running a foom (except for the grand human foom we're already in). secondly, please think more. thirdly, please try to shut down all other AGI attempts and also your lab and maybe yourself, idk in which order. but fourthly, ... ↩︎

This will plausibly require staying ahead of humanity in capabilities in this galaxy forever, so this will be extremely capable AI. So, when I say the galaxy is AGI-free, I don't mean that artificial generally intelligent systems are not present in the galaxy. I mean that these AIs are supposed to have no involvement in human life except for enforcing an AI ban. ↩︎

or like at least "their values" ↩︎

and assuming we aren't currently massively overestimating the amount of resources accessible to Earth-originating creatures ↩︎

or maybe we do some joint control thing about which this is technically false but about which it is still pretty fair to say that each person got more of a say than if they merely controlled of all the resources ↩︎

an intuition pump: as an individual human, it seems possible to keep carefully developing for a long time without accidentally killing oneself; we just need to make society have analogues of whatever properties/structures make this possible in an individual human ↩︎

Btw, a pro tip for weathering the storm of crazymessactivitythoughtdevelopmenthistory: be the (generator of the) storm. I.e., continue acting and thinking and developing as humanity. Also, pulling ourselves up by our own bootstraps is based imo. Wanting to have a mommy AI think for us is pretty cringe imo. ↩︎

Among currently accessible RSI processes, there is one exception: it is in fact fine to have normal human development continue. ↩︎

Ok, really humans (should) probably importantly have lives and values together, so it would be more correct to say: there is a particular infinite contribution to human life/valuing waiting to grow out of each person. Or: when a person is lost, an important aspect of God is lost. But the simpler picture is fine for making my current point. ↩︎

I'm imagining humanity fracturing into a million or billion different galaxies depending upon their exact level of desire for interacting with AI. I think the human value of the unity of humanity would be lost.

I think we need to buffer people from having to interact with AI if they don't want to. But I value having other humans around. So something in between everyone living in their perfect isolation and everyone being dragged kicking and screaming into the future is where I think we should aim.

on seeing the difference between profound and meaningless radically alien futures

Here's a question that came up in a discussion about what kind of future we should steer toward:

- Okay, a future in which all remotely human entities promptly get replaced by alien AIs would soon look radically incomprehensible and void to us — like, imagine our current selves seeing videos from this future world, and the world in these videos mostly not making sense to them, and to an even greater extent not seeming very meaningful in the ethical sense. But a future in which [each human]/humanity has spent a million years growing into a galaxy-being would also look radically incomprehensible/weird/meaningless to us.[1] So, if we were to ignore near-term stuff, would we really still have reason to strive for the latter future over the former?

a couple points in response:

- The world in which we are galaxy-beings will in fact probably seem more ethically meaningful to us in many fairly immediate ways. Related: (for each past time $t$) a modern species typically still shares meaningfully more with its ancestors from time $t$ than it does with other species that were around at time $t$ (that diverged from the ancestral line of the species way before $t$).

- A specific case: we currently already have many projects we care about — understanding things, furthering research programs, creating technologies, fashioning families and friendships, teaching, etc. — some of which are fairly short-term, but others of which could meaningfully extend into the very far future. Some of these will be meaningfully continuing in the world in which we are galaxy-beings, in a way that is not too hard to notice. That said, they will have grown into crazy things, yes, with many aspects that one isn't going to immediately consider cool; I think there is in fact a lot that's valuable here as well; I'll argue for this in item 3.

- The world in which we have become galaxy-beings had our own (developing) sense/culture/systems/laws guide decision-making and development (and their own development in particular), and we to some extent just care intrinsically/terminally about this kinda meta thing in various ways.

- However, more importantly: I think we mostly care about [decisions being made and development happening] according to our own sense/culture/systems/laws not intrinsically/terminally, but because our own sense/culture/systems/laws is going to get things right (or well, more right than alternatives) — for instance, it is going to lead us more to working on projects that really are profound. However, that things are going well is not immediately obvious from looking at videos of a world — as time goes on, it takes increasingly more thought/development to see that things are going well.

- I think one is making a mistake when looking at videos from the future and quickly being like "what meaningless nonsense!". One needs to spend time making sense of the stuff that's going on there to properly evaluate it — one doesn't have immediate access to one's true preferences here. If development has been thoughtful in this world, very many complicated decisions have been made to get to what you're now seeing in these videos. When evaluating this future, you might want to (for instance) think through these decisions for yourself in the order in which they were made, understanding the context in which each decision was made, hearing the arguments that were made, becoming smart enough to understand them, maybe trying out some relevant experiences, etc. Or you might do other kinds of thinking that get you into a position from which you can properly understand the world and judge it. After a million years[2] of this, you might see much more value in this human(-induced) world than before.

- But maybe you'll still find that world quite nonsensical? If you went about your thinking and learning in a great deal of isolation, without much attempting to do something together with the beings in that world, then imo you probably will indeed find that world quite bad/empty compared to what it could have been[3] [4] (though I'd guess that you would similarly also find other isolated rollouts of your own reflection quite silly[5], and that different independent sufficiently long further rollouts from your current position would again find each other silly, and so on). However, note that like the galaxy-you that came out of this reflection, this world you're examining has also gone through an [on most steps ex ante fairly legitimate] process of thoughtful development (by assumption, I guess), and the being(s) in that world now presumably think there's a lot of extremely cool stuff happening in it. In fact, we could suppose that a galaxy-you is living in that world, and that they contributed to its development throughout its history, and that they now think that their world (or their corner of their world) is extremely based.[6]

- Am I saying that the galaxy-you looking at this world from the outside is actually supposed to think it's really cool, because it's supposed to defer to the beings in that world, or because it's supposed to think any development path consisting of ex ante reasonable-seeming steps is fine, or because some sort of relativism is right, or something? I think this isn't right, and so I don't want to say that — I think it's probably fine for the galaxy-you to think stuff has really gone off the rails in that world. But I do want to say that when we ourselves are making this decision of which kind of future to have from our own embedded point of view, we should expect there to be a great deal of incomprehensible coolness in a human future (if things go right) — for instance, projects whose worth we wouldn't see yet, but which we would come to correctly consider really profound in such a future (indeed, we would be tracking what's worthwhile and coming up with new worthwhile things and doing those) — whereas we should expect there to instead be a great deal of incomprehensible valueless nonsense in an alien future.

- If you've read the above and still think a galaxy-human future wouldn't be based, let me try one more story on you. I think this looking-at-videos-of-a-distant-world framing of the question makes one think in terms of sth like assigning value to spacetime blocks "from the outside", and this is a framing of ethical decisions which is imo tricky to handle well, and in particular can make one forget how much one cares about stuff. Like, I think it's common to feel like your projects matter a lot while simultaneously feeling that [there being a universe in which there is a you-guy that is working on those projects] isn't so profound; maybe you really want to have a family, but you're confused about how much you want to make there be a spacetime block in which there is a such-and-such being with a family. This could even turn an ordinary ethical decision that you can handle just fine into something you're struggling to make sense of — like, wait, what kind of guy needs to live in this spacetime block (and what relation do they need to have to me-now-answering-this-question); also, what does it even mean for a spacetime block to exist (what if we should say that all possible spacetime blocks exist?)? One could adopt the point of view that the spacetime block question is supposed to just be a rephrasing of the ordinary ethical question, and so one should have the same answer for it, and feel no more confused about what it means. One could probably spend some time thinking of one's ordinary ethical decisions in terms of spacetime-block-making and perhaps then come to have one's answers be reasonably coherent under having (arguably) the same decision problem presented in the ordinary way vs in some spacetime block way.[7] But I think this sort of thing is very far from being set up in almost any current human. 
So: you might feel like saying "whatever" way too much when ethical questions are framed in terms of spacetime-block-making, and the situation we're considering could push one toward that frame; I want to alert you that maybe this is happening, maybe you really care more than it seems in that frame, and that maybe you should somehow imagine yourself being more embedded in this world when evaluating it.

I guess one could imagine a future in which someone tiles the world with happy humans of the current year variety or something, but imo this is highly unlikely even conditional on the future being human-shaped, and also much worse than futures in which a wild variety of galaxy-human stuff is going on. Background context: imo we should probably be continuously growing more capable/intelligent ourselves for a very long time (and maybe forever), with the future being determined by us "from inside human life", as opposed to ever making an artificial system that is more capable than humanity and fairly separate/distinct from humanity that would "design human affairs from the outside" (really, I think we shouldn't be making [AIs more generally capable than individual humans] of any kind, except for ones that just are smarter versions of individual humans, for a long time (and maybe forever); see this for some of my thoughts on these topics). ↩︎

maybe we should pick a longer time here, to be comparing things which are more alike? ↩︎

I think this is probably true even if we condition the rollout on you coming to understand the world in the videos quite well. ↩︎

But if you disagree here, then I think I've already finished [the argument that the human far future is profoundly better] which I want to give to you, so you could stop reading here — the rest of this note just addresses a supposed complication you don't believe exists. ↩︎

much like you could grow up from a kid into a mathematician or a philosopher or an engineer or a composer, thinking in each case that the other paths would have been much worse ↩︎

Unlike you growing up in isolation, that galaxy-you's activities and judgment and growth path will be influenced by others; maybe it has even merged with others quite fully. But that's probably how things should be, anyway — we probably should grow up together; our ordinary valuing is already done together to a significant extent (like, for almost all individuals, the process determining (say) the actions of that individual already importantly involves various other individuals, and not just in a way that can easily be seen as non-ethical). ↩︎