Ah man, I've been missing these sorts of posts from you, very happy to read this, super cool as usual.

These are some questions that arise for me, maybe not the most well thought out but hopefully somewhat interesting:

Do you think this is a good argument for multi-scale modularity in biology? Also thoughts on multi-agent models of mind with this model in the background? Finally any thoughts on applying this to whether we will have a group of AI systems doing RSI or a singular one?

I suppose this argument would be dependent on the condition when systems with convex utility functions show up, do you have any more detailed thoughts on when we can expect convex utility trade offs to show up?

Is the answer something like when there are benefits to specialization? I guess there also has to be the aspect of trade already present in the system? Do you have thoughts on the assumptions you have to make and have true before the convergence arises or do you think this is a general property of any learning system?

Do you think this is a good argument for multi-scale modularity in biology?

I think the multi-scale aspect is mostly orthogonal to this argument, but yeah the argument could be applied at multiple scales.

Also thoughts on multi-agent models of mind with this model in the background? Finally any thoughts on applying this to whether we will have a group of AI systems doing RSI or a singular one?

Typically, the load-bearing aspect of multi-agent models is that the multiple agents have different utility functions. This argument is compatible with multiple utility functions in principle, but that's not really what it's about; it would apply arguably-more-cleanly in systems with just one optimization objective (but multiple subgoals, e.g. multiple resources like apples and bananas).

I guess there also has to be the aspect of trade already present in the system?

Nope.

(The other questions I don't yet have much to say about.)

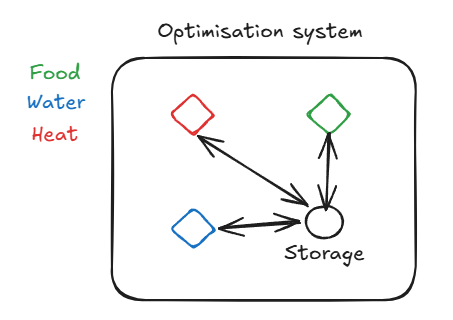

Right so I was trying to make sense of this no trade thing because I thought it would make it so that the individual subcomponents would die out if they didn't have access to trade but that isn't the case if they're subordinated to the larger system. Also similar to the multi-agent thing it's not about multi-scale modularity but rather optimisation within a specific optimisation system or whatever you would like to call it. (random quick picture):

Part of what makes a pencil a good object is that all its parts share approximately the same rotational velocity - i.e. it's a rigid body object. Part of what makes a squirrel a good object is that its parts share approximately the same genome. Part of what makes the water in a cup a good object is that its parts share approximately the same chemical composition - i.e. it mixes quickly.

Pencil and water: agree (though for water, much of what I'd point to is "how it will interact with other stuff").

Squirrel: no! Unless you are doing biology on the squirrel, I don't think the genome is mostly what matters.

I think the two big things are "rigid body" and "this rigid body is controlled by an agenty-thing" (read: "this is the body of an animal"). The thing I see is that squirrels, cats, and birds all do things like scan the environment for food, move their muscles to get the food, regulate their internal temperature, turn the food into energy, etc.

If it turned out that squirrels were some weird symbiote, like if the legs were actually a different species, then for the most part this only matters in so far as these 'subparts' affect the squirrel. Like, they 'factor through' what the squirrel does. I think this is pretty general, even beyond agenty things like animals.

I buy the general point, though.

Important example: It seems like much of how I split up the 'subparts' of objects around me is by the material they are made out of - for example, a soft chair cushion has different material properties from the wooden body.

When I look at objects, the different colors of these materials seem to be doing a lot of the work.

Next to me is a chair, whose cushion has a multicolored pattern on it, while the body is solid green and the headrest is grey. The whole thing is sitting on a metal base, with a metal rod connecting it to the base.

This is also how I split the chair up. I also split up the mosaic pattern of the cushion into parts like "this blue circle" or "that smaller white circle", but not "this undistinguished patch of the blue circle".

If I imagine the body and the headrest having the mosaic pattern of the cushion, I feel like I get a little confused about where the parts start and end in my imaginary chair.

The only part that doesn't fit is the way I distinguish the rod from the base - it seems like shape, or maybe "adjacency" (the rod separates the chair from the base) are doing the rest of the work? But it's pretty promising that color alone gets you most of the way there.

I had exactly the same thought. I didn't write the same comment, though, because:

1) He did say the genome is part of what makes a squirrel a good object, and

2) The genome is a very natural dividing line between "part of this squirrel" and "not part of this squirrel," and natural dividing lines are useful!

A "good object" is in the eye of the beholder - indeed, you spend most of your comment beholding and talking about how you and your brain chunk up the world around you, and that all makes sense. But "in the perception of a particular human" is not the only way to judge what a good object is.

- Identical twins share the same genome, but are usually considered separate objects for most purposes.

- A genetic chimera has different genomes in different parts of its body, but is usually considered a single object for most purposes. (Often, you can't tell the organism is a chimera on a casual inspection.)

I submit that "same genome" often coincides with the natural object boundary, but isn't usually a good criterion for the boundary. The common genome is not a significant part of what makes a squirrel a good object.

I think the thing we usually care about is something more like "it acts like a single agent" or "the parts are approximately aligned towards the same goal". (Notice these criteria suggest that when an organization acts in concert, we care more about the boundaries of the organization than the boundaries of the individuals within it; I think I endorse that implication.)

Interestingly, according to Wikipedia there doesn't seem to be an accepted scientific definition of "organism".

Well, I was taking for granted:

1: The natural abstraction thesis that a substantial part of what makes "good objects" doesn't depend on the beholder (or at least a large class of beholders will agree on a large class of "good objects")

2: If something feels like a relatively basic part of my perception, then it hints at fundamental evolved algorithms, that are then likely to point at "good objects". As an example, your eye cells have built-in edge detectors that work a lot like the image processing algorithms humanity invented. I expect many nonhuman animals' brains to categorize animals and edges similarly (e.g. there are center-surround retinal ganglion cells in mice).

A central (get it?) example for me is a math thing: the definition of the boundary/interior/exterior of a topological set. I have some intuitions, built from my life of interacting with the world and from evolution, about what "boundary" should mean. Given some prompting about open sets, I was able to basically come up with the 'official' definition. I suspect that if/when alien civilizations exist, they'll have a very similar concept.

3: I agree there's an important sense in which the genome matters. But for example: imagine a world where (non-human) animals were symbiotes, that only join/separate rarely, usually out of sight, and that have complicated genetic inheritance patterns; but otherwise usually looked and acted like our world's animals.

I predict that in that world, humans and other animals would partition life into objects in a very similar way. Later, humans would realize the symbiote thing, and would perhaps add words for it; but it would have to be something kids were taught by their parents, or only learned when they were helping around the farm at 10 years old.

Another thought: disruptive coloration works by making color a worse latent (in the mediation condition), so it doesn't track rigid body or animateness or composition as much.

Say I have a dynamical system - concretely, a table with some hills and valleys that I roll a ball around.

The hills (equilibria), then, will end up being good object boundaries. For example, perhaps I'll color the table by where balls go and what I'm really doing is saying "all these starting points will eventually share approximately the same important property X" (here, the position). However, I think usually we categorize when they actually get near the equilibrium, instead of predicting it in advance?
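To make the "color the table by where balls go" picture concrete, here is a minimal 1D sketch. Everything in it is an assumption for illustration: the landscape `(x^2 - 1)^2` (two valleys at x = -1 and x = +1, one hill at x = 0), the step size, and the names `slope`, `roll`, and `basin_label`. The "rolling" is plain gradient descent, not real ball dynamics with momentum.

```python
def slope(x):
    # Derivative of the assumed landscape V(x) = (x^2 - 1)^2.
    return 4 * x * (x * x - 1)


def roll(x, step=0.05, iters=2000):
    # Overdamped "rolling": repeatedly move a little downhill.
    for _ in range(iters):
        x -= step * slope(x)
    return x


def basin_label(x0):
    # Color a starting point by the valley it ends up in.
    return "left" if roll(x0) < 0 else "right"


starts = [-1.4, -0.7, -0.1, 0.1, 0.7, 1.4]
labels = [basin_label(s) for s in starts]
print(list(zip(starts, labels)))
```

In this toy version the hill at x = 0 is exactly where the label flips, so it plays the role of the boundary, and the label is the property that all starting points in one basin eventually share.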

Optimizers are sorta like things that make the stable equilibria be high scoring states according to some goal. If there are convex production frontiers that encourage specialization, then there'll be multiple attractors. Let's take the example with two identical production frontiers, where the state of agent X is the stuff X starts out producing. Say each agent does greedy optimization; then what they end up producing will either be at the average or at two corner points, depending on the production frontier. The frontiers determine the 'landscape' of where agents end up.
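Here is a rough sketch of the two-agent greedy story, under assumptions that are mine and not the post's. Each agent splits one unit of effort between apples and bananas; effort `t` yields `t**k` apples and `(1 - t)**k` bananas. I take `k > 1` (increasing returns) to stand in for the specialization-encouraging frontier and `k < 1` (diminishing returns) for the other case. The shared objective (total apples times total bananas), the grid search, and the names `joint_score` and `greedy_allocation` are all hypothetical.

```python
def joint_score(efforts, k):
    # Shared objective (an assumption): total apples times total bananas.
    apples = sum(t ** k for t in efforts)
    bananas = sum((1 - t) ** k for t in efforts)
    return apples * bananas


def greedy_allocation(k, start=(0.6, 0.4), rounds=50, grid=101):
    # Agents take turns picking the effort split that maximizes the
    # shared objective, holding the other agent's split fixed.
    efforts = list(start)
    candidates = [i / (grid - 1) for i in range(grid)]
    for _ in range(rounds):
        for agent in range(len(efforts)):
            def score_if(t):
                trial = efforts.copy()
                trial[agent] = t
                return joint_score(trial, k)
            efforts[agent] = max(candidates, key=score_if)
    return tuple(efforts)


specialized = greedy_allocation(k=2.0)  # increasing returns
mixed = greedy_allocation(k=0.5)        # diminishing returns
print("k=2.0:", specialized)
print("k=0.5:", mixed)
```

With `k=2.0` the agents end at opposite corners (one all apples, one all bananas); with `k=0.5` both settle near an even split. Starting from `(0.4, 0.6)` instead should swap which agent takes which corner, which is the "multiple attractors" point: the frontier shape sets the landscape, and the starting state picks the basin.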

Other example that comes to mind: spontaneous symmetry breaking. Now for some abstraction that is either necessary or useless:

The laws describing a solid are invariant under the full Euclidean group, but the solid itself spontaneously breaks this group down to a space group. The displacement and the orientation are the order parameters.

My interpretation of this: if you know physics but are looking at an empty space, you have no reason to cut up the space into smaller pieces. If there's a reference solid (think of a crystal structure) though, then you can cut up the space according to what can now be distinguished; and the things identified as the same by the unbroken symmetries are your 'object boundaries': those that you get by transforming in a way that leaves the invariant (order parameter) the same.

It also gives the example of a magnet:

A magnet that's hot will just have randomly oriented little magnetic moments, and thus there are lots of symmetries (all the ways of changing the little moments); but when you cool it, they get 'locked in', and now the only symmetries are those that leave the overall total magnetic moment the same. Accordingly, we think of two magnets as different if they have different magnetizations (with a caveat about spatial symmetries depending on shape, like if you have a bar magnet you'll have fewer than if you have a cylinder (where many different directions are essentially the same)).

If I try to translate to rigid bodies: suppose I start out with a homogeneous gas, and have my state be the arrangements of all the particles. At the start, I have no way to carve up the configuration space (so I have a bunch of symmetries). Now suppose that an object forms (say, a star), out of a few possible options for possible objects. Suddenly, I have a way to carve up the space: All configurations are identical if the star is the same. The remaining symmetries are all the ways that the internal details of the star can differ (say, the internal fluctuations, which will happen if the laws of physics give similar energies to two internal states), and our latent variable is whatever 'star choice' ended up happening (chemical composition, linear and angular momenta, center of mass at start time).

If we stay and watch for some time, then we'll want to look at the things that are time translation (at the right scale) symmetric, so e.g. I don't class a marker as different from when it is thrown in the air, because those velocities tend to zero out eventually while shape and color and tool-ness (I can use markers to write on whiteboards) are conserved.

Question: Say my phone explodes. What's going on when I stop categorizing it as a 'phone' instead of pieces of debris? One answer is "the primary level of analysis is rigid body physics", but I don't think that's the whole picture.

Hmm. Currently, natural latents are formulated in informational/mediational terms: they capture all the information about X relevant to Y, and no more than what's shared in them.

I take the position that the frame of group theory is often useful, even when the actual detailed math is unnecessary. This suggests that there are important properties that are best thought of in terms of symmetries/invariance.

I feel like this post has made me think that that checks out, possibly as how agents actually learn useful latents given some structure of our universe.

Question: Modularity? It's supposed to come from some sort of robustness to changes in conditions - this leads me to ask if there is some simple case of modularity we can study, and whether the symmetry approach is a good way to look at it.

(My gut now says that that won't work, but I'm not sure why)

Okay, here are some rambly thoughts, not sure whether this will pan out. It's not really condensed currently, so perhaps the TL;DR is to scroll until you see "Version 2", read the definition, and then skip to the final few paragraphs.

Suppose we have random variables X,Y that come in two clusters. Imagine drawing a heatmap of P(X,Y), and that it has two squares that are separated. If we define L = 0 when in the first square and L = 1 when in the second square, then we have a latent (with no approximation errors). Think of this like adding a third dimension to the plot, where you make one square higher up than the other. The mediation condition says that in each cluster, the cluster looks 'approximately' like it's made of little rectangles.
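A quick numerical sanity check of the two-squares picture, as a sketch under my own assumptions: the squares are `[0,1]x[0,1]` and `[3,4]x[3,4]`, points are uniform within each square, and Pearson correlation is used as a crude stand-in for dependence (it only catches linear dependence, so it is a weaker check than the actual mediation condition). The helper name `pearson` and the threshold `2.0` are hypothetical.

```python
import random


def pearson(xs, ys):
    # Plain Pearson correlation, no external libraries.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


rng = random.Random(0)
samples = []
for _ in range(20000):
    label = rng.randint(0, 1)   # L: which square we are in
    offset = 3.0 * label        # second square sits at [3,4] x [3,4]
    samples.append((offset + rng.random(), offset + rng.random(), label))

# Without L, X and Y look strongly dependent.
overall = pearson([x for x, _, _ in samples], [y for _, y, _ in samples])

# Given L, X and Y are (linearly) uncorrelated within each square.
within = [
    pearson([x for x, _, l in samples if l == c],
            [y for _, y, l in samples if l == c])
    for c in (0, 1)
]

# L can also be read off from X alone or from Y alone.
from_x = all((x > 2.0) == bool(l) for x, _, l in samples)
from_y = all((y > 2.0) == bool(l) for _, y, l in samples)
print(overall, within, from_x, from_y)
```

The overall correlation comes out close to 1 while the within-square correlations are close to 0, and L is recoverable from either coordinate by itself, which is the "latent with no approximation errors" situation described above.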

Suppose we have a transformation g of the data. A couple possible things I might want to mean by that:

Say that g is a function (not necessarily invertible!) from X,Y,L space to itself - that is, it moves X,Y and also the latent L. Now for some definitions that I can tell are definitely missing something, but that seem to capture some of what's happening:[1]

(the pushforward measure, aka, I take my probability mass and I move it, and so to figure out how much is where I am afterwards, I ask how much was from the sources).
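For a discrete distribution, the "move the mass and ask how much came from the sources" description can be sketched in a few lines. The example distribution, the map `collapse_y`, and the function name `pushforward` are all made up for illustration; the map is deliberately non-invertible to match the "not necessarily invertible" remark.

```python
from collections import defaultdict


def pushforward(dist, g):
    # dist maps points (x, y, l) to probability mass.
    # The mass at a destination is the total mass of every source sent there.
    moved = defaultdict(float)
    for point, mass in dist.items():
        moved[g(point)] += mass
    return dict(moved)


# Hypothetical distribution over (x, y, l) triples.
p = {
    (0, 0, 0): 0.25,
    (0, 1, 0): 0.25,
    (3, 3, 1): 0.25,
    (3, 4, 1): 0.25,
}


def collapse_y(point):
    # A non-invertible example map: forget y, keep x and the latent l.
    x, y, l = point
    return (x, 0, l)


q = pushforward(p, collapse_y)
print(q)  # {(0, 0, 0): 0.5, (3, 0, 1): 0.5}
```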

Then I could ask if

Now imagine instead that we disallow changing

(this is a special case of Version 1).

Now is it a latent? Nope! Imagine that I cut up my square into halves, reassemble squares with each having one half from both of the originals; the new

Imagine instead that I only allow the transformation to output the changed

(for this to work we'll have to be mapping to 'the same space')

In our 'reassembly' scenario, since L' is now recomputed, it 'recognizes' that the new squares are what we should use.

So, now is L' necessarily a latent? No! At least, not in interesting cases like, say, if we assign small probability outside our clusters. Then we can make an approximate latent that's 0 on the first cluster, 1 on the second, and whatever junk in between (though you'd get more info if you used, like, an 'interpolation' that told you how close you were to one of the clusters; I still think this is a bad enough problem, and think there are probably even worse examples), and now if we do a transformation that just rotates the clusters in the scatter plot around some midpoint, then the new value of L' will be the junk. Notice how version 2 handled this case just fine, because it took the old latents.

Claim: Version 2 is the good one.

Let's develop version 2 further. Suppose, using version 2, that we also have a symmetry of the X and Y probabilities, meaning that

Now, is

aka all that changes is that we take the probability of having a certain value of

Now, here's what I want to do: Suppose we had our two squares, and then let's say I split the first one, and start moving each part independently. If I just, like, nudge two halves to be apart in the X axis, then you should note that we still have independence conditional on L, and so it doesn't really change anything.

But say I move the parts in a way that really does change things. To start, let's say that L is a uniformly distributed angle/point on a circle, and for each L we make X,Y uniformly distributed in a square around that point on the circle. The 3D plot of this in my head is that we have a square that we move in a solid 'helix' (or like a solid square torus), except we connect the start and end with a 'portal' (or alternatively you could 'color' it, or alternatively you can imagine an infinite helix and say your latent information is an angle plus any multiple of two pi, or whatever). What this corresponds to in reality is the position of a 'snake' or a delayed afterimage that moves back and forth in 1D - the head is "X" and the tail is "Y", and the tail lags behind the head. Note that the latent isn't exactly determined given X; but it's still removing almost all the uncertainty[2].

Then if we transform by rotation, we are just rotating our latent values. And so if we know that physics rotates through time, then perhaps we'll recognize the 'same' object, and just use our transform to define a latent there? Likewise we can 'mix' within a square, and if mixing happens e.g. thermodynamically we should define latents good?

I'm still confused there, but let's move on to the thing I originally wanted to do: what if I have a "ball" made of four points forced to be near each other, and I have no info about where the object is. Right now, I have symmetries of the four particle coordinates, and perhaps they suggest that wherever the ball is my latent (the position of the object) is 'essentially talking about the same object' (iffy on details there), and likewise for jigglings inside the object.

But now say that the ball splits into two balls, each with two of the points. Before we look, we think it's equally likely which points will end up together or apart (as in, we assign the same chance to (1 near 2, 3 near 4) as (1 near 3, 2 near 4)); but whatever they are, the two balls are independent of each other. Our distribution has the following symmetries: we can jiggle the internals of each of the two balls (independently of each other, as in we combine any jiggle of one with any jiggle of the other), can move points in a way that should correspond to moving the balls around, and we can swap the constituents of the balls.

We should then have a latent that's the position of the balls and the constituents. Suppose that after a little bit of time, the physics no longer allows actually swapping the constituents - that they stop being in equilibrium. The remaining symmetries will not change the new 2 object latent, and if the previous story works then maybe you can just get the latent from the remaining symmetries. Perhaps the latent is really "the constituents of the ball at the time of split, along with the current positions of the balls". If we then have a time varying distribution (or just have a causal graph with an approximate 'time' direction?), we'll see that the broken symmetry of constituent swapping at the split corresponds to the new latent, which is now a latent over the positions of the particles at any time t > split_time (note: not the path through time).

However, before the split, we can do particle swapping, which might lead us to conclude that the only latent should be the average position at the beginning (something like the averaging trick, where we average over all possible transformations to get the center of mass?).

So if the object just sits there without the center of mass moving, and we know that it'll suddenly split after 10 seconds (but we haven't looked at the object yet), then perhaps we can do transformations to get that at any time the object 'corresponds to the same latent' right up until the split, at which point we have fewer symmetries; but if we use the remaining ones, we can get a new latent corresponding to there being two objects. And if we looked at only one of the two balls' points at the end, and condition on the two particles of it being near each other (i.e. part of the same ball), we could point to a latent over them using their symmetries.

The only problem, of course, is that I'm not sure how we decide what symmetries to use. So far, it's "physics"? Because if we use the probability distribution by itself it kinda looks like we get extra 'bad' ones.

What if we look at the whole timeline, with uncertainty about stuff like when they split? We have a latent variable packed with info like "when do we split? what goes into which part? what's the velocity after split?". Then we try to search for subsets of the paths that have smaller latent variables (they should be determined by the big one because the big one satisfies mediation). This is then what lets us say we had one latent that had one object position, and now have a different one that has the position of both parts?

but with concave frontiers/utilities the agents' prices tend to diverge

Typo: "concave" → "convex"

Edit: This started as a focused reflection on John's post here, but then morphed into thoughts on AI. I split the two sections just to impose a bit of structure on this comment. But this comment should be interpreted more as me getting my own thoughts together than as a confident argument, and some of it is highly contestable or may just not be all that relevant or interesting.

On Natural Ontologies

If many methods for inferring structure in high-dimensional data identify similar structures, then we may come to treat those structures as more meaningful, or as "good objects." Incentives lead us to run this process over specific subsets of the space of available data and methods, producing the set of ontologies we empirically observe. We can see a practical domain, such as a research field, job or cultural practice, as a particular way of limiting the available data and methods in order to produce a distinct distribution of discoverable ontologies. Incentives lead to the existence of the practical domains and the way they explore their distinctive ontology distributions.

Your concept of a natural ontology suggests that if we consider the space of ontology distributions, these distributions themselves have a common factor, which is that the discovered high-dimensional ontologies can be well explained through a set of latent factors of much lower dimension. In other words, humans engaged in a specific activity, be it hunting, scientific research, or painting, seem to converge on a small region of ontology-space that they use to model that activity.

Confirmation bias and incentives are alternative explanations for ontological convergence. We reify a novel object only if the method that generated the ontology containing that object also generated known objects, producing a structural risk of confirmation bias. I work in single-cell epigenetics, and if a dataset and clustering method fail to recapitulate the branched lineages we expect, then we assume that the dataset or clustering method were flawed.

Incentives restrict both the way activities are defined in terms of the allowed data and methods, and the types of data and methods accessible in practice. Many experiments are never performed because they are unethical or impractical, even if in principle they would be valid ontology-generators for a certain field. Many ways of defining an acceptable set of data and methods are underexplored because the ontologies they generate are unrewarding. Incentives also control the extent to which confirmation bias limits the experiments we run and the ontologies we generate.

AI Reflections

Insofar as humans and AI engage in common activities, like resource acquisition, we are in principle exploring the same ontological distribution. However, the differences in our incentives and priors may lead to different data and methods used to sample ontologies. There is a risk that we can only converge on a common paradigm by essentially just trusting one another's reports on output ontology without being able to understand their methods for producing it. This might be a bit like two professionals finding it practical to trust each other's claims without direct evidence, enabling them to work within a shared world model. This depends on them having an incentive to work together, which clearly is not guaranteed in any domain of activity. Alternatively, it might be possible to guide one another toward convergent ontologies by showing a sequence of data and evidence that identifies and explains the structures found by the original ontological paradigm while identifying newer, more useful and consistent ones.

Overall, I don't think it's a given that, just because humans and AI face non-identical incentives, we are guaranteed to arrive at a certain scale of ontological divergence, either empirical or moral. We still exist in the same reality and participate in some of the same activities. Insofar as we have similar incentives and participate in similar activities, we should be able in principle to arrive at similar ontologies. An AI that engages in the activity of moral reasoning should be exploring the same ontological space as humans, though because it uses distinct data and methods for doing so, it may explore a different portion of that space and may not arrive at the same ontologies, or moral conclusions, as we do. That would not necessarily make the AI wrong and humans right, however. If AI lands on a distinct moral ontology from my own, I would want to know how it got there, and figure out what forms of moral reflection explain the difference and whether there are forms of moral reflection neither humans nor the AI have tried that might bridge the gap.

I think that this also gives me some fundamental hope that the worst fears about AI will prove inaccurate. In this perspective, there's no fundamental difference between empirical and moral truth. There are only a set of activities we can engage in, and a set of ontologies various ways of engaging in that activity can produce, and a shaping set of incentives that govern those activities.

And crucially, the incentives humans or AI are under are exogenous. While we would typically resist radical, immediate, forced changes to our current perspectives, we consider it virtuous and wise to accept some flexibility and unforced change over time. I can absolutely picture an agentic, self-modifying AI being willing to explore modifying its own reward function, weights or training dataset in the name of curiosity. If we accept that AI may view itself as "more than just its current architecture," then while we might still be worried about its superior intelligence and its ability and potential to develop a desire to harm us, we needn't view that as an inevitability, just an empirical possibility.

This also suggests that the current paradigm for practical AI safety is perhaps going to suffice for the foreseeable future. We need to be able to monitor whether the AI, in practice, is willing and able to take actions to escape control, including defending itself from human intervention or self-modifying in ways that produce harmful behavior. But we do not need to be able to explicitly define a comprehensive set of acceptable and unacceptable behaviors and a way of guaranteeing AI compliance with them. We just need to establish a set of methods for building AI that have the statistical regularity of producing AI that keeps its harmful behaviors within acceptable limits, then stick to that set of methods, expanding them only gradually as we understand the effect that small variations on the current set of AI production methods have on the behaviors of the resulting AI. Our risk tolerance would then govern the rate at which we permit novel variations on AI production.

The higher order problem is that this approach to AI safety depends on regulatory compliance with a conservative, gradual exploration of AI production methods. If bad actors refuse to comply, producing and letting loose harmful, uncontrollable AI, then we are screwed. An AI race to "stay ahead of China" only makes sense if we think that our powerful AI, or our powerful AI-equipped government, is what's preventing a bad Chinese AI or AI-equipped Chinese government from causing uncontrollable harm. This is the same logic that says that we should not only aggressively build ever more advanced nuclear weapons, but also accept the fact that we have only a limited ability to deploy them strategically in ways that succeed in preventing bad outcomes, because the risks we face by refusing to build nukes in the first place are worse.

I think this is how some of the pro-AI-race/anti-China people think about this. They accept that AI might have bad outcomes, the way a chaotic military equipped with powerful bombs might have bad outcomes, but they believe in the ability of AI to be tuned in practice through gradual exploration of production methods to limit the risk of uncontrollable harm. They also believe that this continued development of AI introduces less risk than a lapse of American AI supremacy.

I think that in general, our current operating theory is that yes, dangerous technology can be engineered and deployed in such a way as to shift the risk/reward calculus in favor of development, while restricting the ability of competitors to access or control it. But at the same time, we also recognize that this is wasteful and that there are ways of developing technology that are too risky. In those situations, we attempt to collaborate with competitor states in order to reduce the need to continue development, implementing arms control treaties for example. The question at any given time is whether further development poses so many manifest risks to both sides that collaboration becomes both desired and possible, or whether the risk is asymmetric and large enough that one side or the other is motivated to defect and continue development.

I think that lasting collaboration on AI will result when the US and China mutually recognize that continued development of AI poses more risks than benefits to both of their respective state objectives. The US and China need to be as afraid of their own models as they are of each other's. Then they can trust each other to stop/pause/slow development. Right now, the US government appears to be enthralled with its own AI, and somewhat contemptuous of China's AI capabilities. I have no idea how China feels about US AI. But I would expect AI brinkmanship to continue until the military and politicians are all more scared of AI than they are of each other. Work on technical AI safety, from that point of view, seems to create more room for continued development of AI, because it delays the point at which governments get scared of AI and pause development. This creates room for AI to get more and more capable before we reach any pause point. And so I would expect that AI safety/alignment work will start being seen as a government priority, as they need to be able to control AI in order to give it more capabilities and more responsibility. It might be that we'll see a pause only when the political risks to decision makers (politicians or the electorate) manifestly cannot be controlled through extant technical methods to within acceptable limits, and where accidents are starting to happen. Alternatively, it might be that we see a pause when it becomes clear that bad actors are able to deploy current AI systems to do everyday bad or obnoxious stuff, leading us to restrict everybody's access to AI or nerf its capabilities. I could theoretically see the American people voting in a government specifically to turn off Grok just because they hate Elon Musk, for example.

Applying this to NNs seems to mean that we should expect (groups of) parameters to specialize for different functions if their "production curve" is convex and (groups of) parameters should be reused for multiple functions if their production curve is concave. That insight may help with interpretability. The question is if this is already known under different terminology among ML folks.

I'm not a deep ML researcher, but here is what ChatGPT says about how different parts of the training lead to more "convex" or "concave" effects:

ChatGPT 5.4 Long Reasoning

For a shared parameter block θ, let

So, locally, specialization is favored when

That gives a useful phase picture for SGD.

Very early training: specialization is usually weakest.

In wide nets near initialization, training can be close to the lazy / kernel regime, where the network mostly reweights random features instead of strongly reorganizing them. In that regime, hidden units are still largely interchangeable, and the “shared mixed unit” often wins because there is not yet enough learned structure for durable task-specific interference to appear. Feature learning, which is the regime where internal specialization can really emerge, is precisely the regime beyond that lazy behavior. [Disentangling feature and lazy training in deep neural networks]

Mid training: this is where specialization most plausibly appears.

Once hidden features begin to move, two things happen: first, symmetry between nominally equivalent units can break; second, some units become slightly better at one subfunction than another, and further SGD updates reinforce that asymmetry. In teacher–student analyses of layered networks, this shows up as a specialization transition, i.e. a move from an unspecialized symmetric phase to a specialized phase where hidden units take on different roles. For ReLU networks this transition is reported as continuous rather than abrupt in the recent statistical-physics analyses. [The Implicit Bias of Gradient Noise: A Symmetry Perspective]

Late training: specialization often stops increasing in the same sense.

In classification settings, there is evidence for a terminal phase of training where last-layer representations undergo neural collapse: within-class variation shrinks and class means become arranged in a highly symmetric geometry. That is a kind of sharpening and consolidation, but not necessarily further functional diversification of internal parts. So late training often looks less like “wood vs leaves keep splitting” and more like “the learned class geometry is being compressed into a cleaner final arrangement.”

We could also ask the other way around: If early training is more concave and mid-training is convex, what does this imply for markets?

Presumably, in early, concave markets, traders offer multiple goods.

In mid, convex markets, traders specialize in few or a single product.

And in late markets?

Question: if a market is a good object insofar as the agents' prices converge... but with concave frontiers/utilities the agents' prices tend to diverge... what other good objects arise in the presence of concave frontiers/utilities?

I suppose you mean “convex”, not “concave”. This confused me for a good long while.

This comment of mine isn't particularly substantive, and is just about terminology.

Hm, I guess it's probably normal/standard when talking about curves like these to describe the ones curving down ("concave down") as "concave" and the ones curving up ("concave up") as "convex",

but if this curve is the frontier of what is achievable, it seems more natural to me to speak of whether the region that is achievable is concave or convex, but this gives the pictures the opposite names. Unfortunate.

Part of what makes a pencil a good object is that all its parts share approximately the same rotational velocity - i.e. it's a rigid body object. Part of what makes a squirrel a good object is that its parts share approximately the same genome. Part of what makes the water in a cup a good object is that its parts share approximately the same chemical composition - i.e. it mixes quickly.

General pattern: part of what makes many objects good objects (i.e. ontologically natural) is that their parts all share approximately the same <something>, given time to equilibrate.

Ideal markets are a good more-abstract example: in a market at equilibrium, all agents share the same prices, i.e. the ratios at which they trade off between (marginal amounts of) different goods are all equal. Indeed, we can view this "Law of One Price" as the defining feature of a market.

Mental Picture: One Price

This is the classic econ-101 picture: two agents can both produce apples or bananas in various combinations. If one of the agents can trade off between apple vs banana production at a ratio of 2:1 and the other at 1:2, then they can produce more total apples and more total bananas by one agent producing two more apples (at the cost of one less banana), and the other producing two more bananas (at the cost of one less apple).
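To make the arithmetic in that example concrete, here is a tiny sketch. The variable names are made up for illustration; the numbers are just the 2:1 and 1:2 ratios from the paragraph above.

```python
# Hypothetical marginal moves, read off the 2:1 and 1:2 ratios in the text.
# Each tuple is (change in apples, change in bananas) for one agent.
agent_one_shift = (+2, -1)  # two more apples at the cost of one banana
agent_two_shift = (-1, +2)  # two more bananas at the cost of one apple

# Net change in total production when both agents make their move.
total_shift = tuple(x + y for x, y in zip(agent_one_shift, agent_two_shift))
print(total_shift)  # (apples, bananas) summed across both agents
```

Both entries of the net change come out positive, which is the sense in which the pair was not yet pareto optimal.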

Roughly speaking, if each agent's "production frontier" (curve showing the number of bananas producible for each number of apples) is concave (i.e. curving downward, like the picture above), then total apple and banana production will be pareto optimal exactly when the two agents have the same marginal tradeoffs - i.e. if one of them trades off apples vs bananas at a 2:3 ratio, then the other also faces a 2:3 ratio. That's the equilibrium condition: the two face the same marginal tradeoffs. Visually, in the graphs above, those tradeoffs are represented by the red arrows perpendicular to the production frontiers. At equilibrium, the two agents each choose a point on their frontier such that the two red arrows point in the same direction.
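A rough numerical check of that equilibrium condition, as a sketch only: the two frontier functions below are arbitrary concave curves chosen for illustration (they are not from the post), and the brute-force grid search is just one simple way to find the best split.

```python
# Illustrative concave frontiers (assumptions, not from the post):
# bananas producible as a function of apples produced.
def frontier_one(apples):  # valid for 0 <= apples <= 1
    return 1.0 - apples ** 2

def frontier_two(apples):  # valid for 0 <= apples <= 2
    return 2.0 - apples ** 2 / 2.0

# Marginal tradeoffs: derivatives of the two frontiers above.
def slope_one(apples):
    return -2.0 * apples

def slope_two(apples):
    return -apples

def best_split(total_apples=1.5, steps=1000):
    """Grid-search agent one's apple output to maximize total bananas,
    holding total apple production fixed."""
    best = None
    for i in range(steps + 1):
        a1 = i / steps            # agent one's apples, in [0, 1]
        a2 = total_apples - a1    # agent two produces the rest
        if not 0.0 <= a2 <= 2.0:
            continue
        bananas = frontier_one(a1) + frontier_two(a2)
        if best is None or bananas > best[2]:
            best = (a1, a2, bananas)
    return best

a1, a2, bananas = best_split()
print("apples:", a1, a2, "total bananas:", bananas)
print("marginal tradeoffs:", slope_one(a1), slope_two(a2))
```

Under these assumed frontiers, the split that maximizes total bananas is the one where the two slopes match, i.e. where the two red arrows would point in the same direction.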

In an efficient market, those tradeoff ratios are the relative prices of the two goods. Even absent an efficient market (even absent any trade at all, in fact), we can define "virtual prices" from the tradeoff ratios, and those virtual prices must be equal across the two "agents" in order for production to be pareto optimal. This mental model is really about pareto optimality, not about trade or markets or economics or agents.

... except...

There's a big loophole when the agents have convex production frontiers or utility functions, rather than concave. Then, their prices tend to diverge, rather than converge.

Mental Picture: The Convex Case

With convex frontiers, one agent is likely to specialize entirely in apples, and the other entirely in bananas. The two end up with different tradeoffs, but that doesn't let them produce more total apples and more total bananas, because each has already traded off as far as they can go - the agent producing no apples can't produce any fewer apples, and the agent producing no bananas can't produce any fewer bananas.

In principle, this kind of "zero bound" solution can happen with concave or convex frontiers. But in practice, two agents with concave frontiers (the previous picture) will tend to converge in price (moving them "toward the middle", typically away from the zero bounds), while two agents with convex frontiers (this picture) will tend to diverge (pretty much always driving them toward the zero bounds eventually).

Why do agents with concave frontiers converge, while convex frontiers diverge? Well, imagine for a moment that both agents have the same frontier. If it's concave (previous picture), then if the two pick different points on the line, their average is below the frontier - so the two can do better in total by both moving to the average point. But if it's convex, then the average is above the frontier, and gets further above as the points move apart - so the two do better in total by moving away from the average.

It's basically the mental picture from Jensen's Inequality.
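Here is a minimal sketch of that averaging argument. The two frontier shapes are assumptions picked for illustration (a quarter circle for the concave case, a squared term for the convex case); total apples are held at 1.0 in every comparison, so only total bananas differ.

```python
# Illustrative frontiers (assumptions): bananas as a function of apples,
# both defined for 0 <= apples <= 1.
def concave_frontier(apples):
    return (1.0 - apples ** 2) ** 0.5  # bows outward (quarter circle)

def convex_frontier(apples):
    return (1.0 - apples) ** 2         # bows inward

def compare(frontier, a1, a2):
    """Total bananas when two identical agents sit at a1 and a2,
    versus when both sit at the average of a1 and a2."""
    mean = (a1 + a2) / 2
    spread = frontier(a1) + frontier(a2)
    pooled = 2 * frontier(mean)
    return spread, pooled

concave_spread, concave_pooled = compare(concave_frontier, 0.2, 0.8)
convex_spread, convex_pooled = compare(convex_frontier, 0.2, 0.8)
convex_extreme, _ = compare(convex_frontier, 0.0, 1.0)

print("concave:", concave_spread, "vs pooled", concave_pooled)
print("convex: ", convex_spread, "vs pooled", convex_pooled)
print("convex, fully specialized:", convex_extreme)
```

With the concave curve, pooling at the average yields more bananas for the same apples; with the convex curve, spreading apart wins, and full specialization at the two endpoints wins by the most.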

Question: if a market is a good object insofar as the agents' prices converge... but with convex frontiers/utilities the agents' prices tend to diverge... what other good objects arise in the presence of convex frontiers/utilities?

(You might want to stop here to think on that one yourself.)

Tentative answer: clusters of similarly-specialized agents. If there's a whole bunch of agents, some of which entirely specialize in apples, and some of which entirely specialize in bananas, then we have two natural, discrete categories of agent.

Trees, for example, have parts ("agents") specialized in structural support (wood) and parts specialized in energy harvest (leaves). There is not much in-between; the parts of woody trees are pretty discretely specialized in one or the other function (or some other function, like e.g. bark), not both functions. Presumably the tree's production frontier for structural support and energy harvest is convex; otherwise the tree could get more of both by mixing the functions.

On the other hand, grass does mix the two functions: a blade of grass functions as both structural support and energy harvester simultaneously. Presumably the grass's production frontier is concave.

Zooming out a moment... there's a lot of drivers of natural ontological distinctions out there. Just look at the first paragraph of this post:

Specialization is just one more phenomenon along similar lines: part of what makes a market (or coherently-optimized stuff more generally) a good object is that all its parts have the same tradeoff ratios between different "good" things. If convex production/utility drives the parts to specialize until they hit a bound, then we get a natural ontological distinction between differently-specialized parts.

So why am I writing a post about specialization specifically? What makes it a particularly interesting driver of natural ontology?

Specialization is notable because it drives natural ontological distinctions in optimized systems specifically. It's the sort of phenomenon which drives e.g. biological organisms to have distinct types of parts - like wood vs leaves on a tree. It's also the sort of phenomenon we'd expect to apply to the internals of neural nets, producing the learned internal analogues of wood vs leaves: parts of the net specialized in different functions. Specialization is the sort of phenomenon which would generate natural ontological distinctions for modelling the internals of agentic systems, and optimized systems more generally.

Acknowledgement: David Lorell isn't on this post, but this thought did bubble out of all the stuff we generally work on together.