I'm giving up on working on AI safety in any capacity.

I was convinced ~2018 that working on AI safety was a Good™ and Important™ thing, and have spent a large portion of my studies and career trying to find a role to contribute to AI safety. But after several years of trying to work on both research and engineering problems, it's clear no institutions or organizations need my help.

First: yes, it's clearly a skill issue. If I was a more brilliant engineer or researcher then I'd have found a way to contribute to the field by now.

But also, the bar to work on AI safety seems higher than the bar to work on AI capabilities. There is a lack of funding for hiring more people to work on AI safety, and it seems to have created a dynamic where you have to be scarily brilliant to even get a shot at folding AI safety into your career.

In other fields, there are a variety of professionals who can contribute incremental progress and get paid as they build their knowledge and skills: educators across varying levels, technicians in labs who support experiments, and so on. There are far fewer opportunities like that w.r.t. AI safety. Many "mid-skilled" engineers and researchers just don't have a place in the field. I've met and am aware of many smart people attempting to find roles to contribute to AI safety in some capacity, but there's just not enough capacity for them.

I don't expect many folks here to be sympathetic to this sentiment. My guess on the consensus is that in fact, we should only have brilliant people working on AI safety because it's a very hard and important problem and we only get a few shots (maybe only one shot) to get it right!

I think the main problem is that society-at-large doesn't significantly value AI safety research, and hence that the funding is severely constrained. I'd be surprised if the consideration you describe in the last paragraph plays a significant role.

I think it's more of a side effect of the FTX disaster, where people are no longer willing to donate to EA, which means that AI safety got particularly hard hit as a result.

I suspect the (potentially much) bigger factor than 'people are no longer willing to donate to EA' is OpenPhil's reluctance to spend more and faster on AI risk mitigation. I don't know how much this has to do with FTX; it might have more to do with differences of opinion on timelines, conservativeness, incompetence (especially when it comes to scaling up grantmaking capacity) or (other) less transparent internal factors.

(Tbc, I think OpenPhil is still doing much, much better than the vast majority of actors, but I'd bet that by the end of the decade, their not having moved faster on AI risk mitigation will look like a huge missed opportunity.)

> First: yes, it's clearly a skill issue. If I was a more brilliant engineer or researcher then I'd have found a way to contribute to the field by now.

I am not sure about this. Just because someone will pay you to work on AI safety doesn't mean you won't be stuck down some dead end. Stuart Russell is super brilliant, but I don't think AI safety through probabilistic programming will work.

Thank you for your service!

For what it's worth, I feel that the bar for being a valuable member of the AI Safety Community is much more attainable than the bar for working in AI Safety full-time.

There's just not enough funding. In a better world, we'd have more people with more viewpoints and approaches working on AI safety. Brilliance is overrated; creativity, understanding the problem space carefully, and effort also play huge roles in success in most fields.

what's the relationship between attachment and memory?

are people with good/great memories less sentimental?

if you're someone with less recall of events, especially ones that happened many years ago, do you feel more attached to holding onto mementos that act as triggers for special moments in your life? conversely, if you have great memory, do you feel less attached to mementos because you can recall an event on demand?

I have Aranet4 CO2 monitors inside my apartment, one near my desk and one in the living room both at eye level and visible to me at all times when I'm in those spaces. Anecdotally, I find myself thinking "slower" @ 900+ ppm, and can even notice slightly worse thinking at levels as low as 750ppm.

I find indoor levels @ <600ppm to be ideal, but not always possible depending on if you have guests, air quality conditions of the day, etc.

Unfortunately, I live in a space with only 1 window, so ventilation can be difficult. However, a single well-placed fan pointed at the window and blowing outwards improves indoor circulation. With the HVAC system fan also running, I can decrease indoor CO2 by 50-100 ppm in just 10-15 minutes.

If you don't already periodically vent your space (or if you live in a nice enough climate, keep windows open all day), then I highly recommend you start doing so.

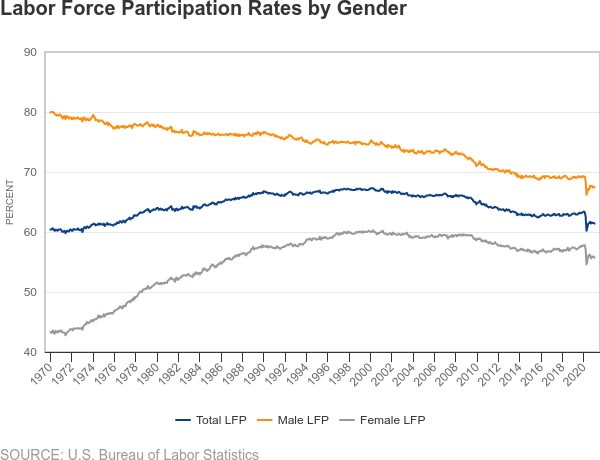

Want to know what AI will do to the labor market? Just look at male labor force participation (LFP) research.

US Male LFP has dropped since the 70s, from ~80% to ~67.5%.

There are a few dynamics driving this, and all of them interact with each other. But the primary ones seem to be:

- increased disability [1]

- younger men (e.g. <35 years old) pursuing education instead of working [2]

- decline in manufacturing and increase in services as fraction of the economy

- increased female labor force participation [1]

Our economy shifted from labor that required physical toughness (manufacturing) to labor that required more intelligence (services). At the same time, our culture empowered women to attain more education and participate in labor markets. As a result, the market for jobs that require higher intelligence became more competitive, and many men could not keep up and became "inactive." Many of these men fell into depression or became physically ill, thus unable to work.

Increased AI participation is going to do for humanity what increased female LFP has done for men in the last 50 years -- it is going to make cognitive labor an increasingly competitive market. The humans that can keep up will continue to participate and do well for themselves.

What about the humans who can't keep up? Well, just look at what men are doing now. Some men are pursuing higher education or training, attempting to re-enter the labor market by becoming more competitive or switching industries.

But an increased percentage of men are dropping out completely. Unemployed men are spending more hours playing sports and video games [1], and don't see the value in participating in the economy for a variety of reasons [3] [4].

Unless culture changes and the nature of jobs evolves dramatically in the next couple of years, I expect these trends to continue.

Relevant links:

[1] Male Labor Force Participation: Patterns and Trends | Richmond Fed

[2] Men’s Falling Labor Force Participation across Generations | San Francisco Fed

[4] What’s behind Declining Male Labor Force Participation | Mercatus Center

Anthropic's new model seems quite good for longer horizon tasks.

https://www.anthropic.com/news/claude-sonnet-4-5

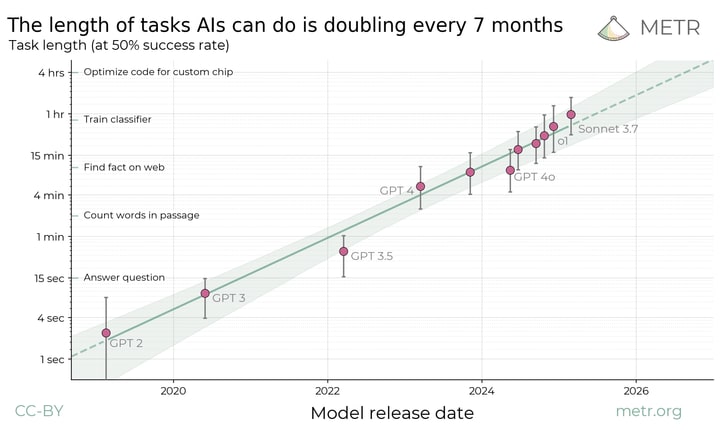

a reminder of timelines

a claim

How are folks interpreting this?

I mean, if true, or even close to true, it would totally change my model of AI progress.

If the task length is like 4 hours then I’ll just update towards not believing Anthropic about this stuff.

You are misunderstanding what METR time-horizons represent. The time-horizon is not simply the length of time for which the model can remain coherent while working on a task (or anything which corresponds directly to such a time-horizon).

We can imagine a model with the ability to carry out tasks indefinitely without losing coherence but which had a METR 50% time-horizon of only ten minutes. This is because the METR task-lengths are a measure of something closer to the complexity of the problem than the length of time the model must remain coherent in order to solve it.

Now, a model's coherence time-horizon is surely a factor in its performance on METR's benchmarks. But intelligence matters too. Because the coherence time-horizon is not the only factor in the METR time-horizon, your leap from "Anthropic claims Claude Sonnet can remain coherent for 30+ hours" to "If its METR time-horizon is not in that ballpark that means Anthropic is untrustworthy" is not reasonable.

You see, the tasks in the HCAST task set (or whatever task set METR is now using) tend to be tasks some aspect of which cannot be found in much shorter tasks. That is, a task of length one hour won't be "write a programme which quite clearly just requires solving ten simpler tasks, each of which would take about six minutes to solve". There tends to be an overarching complexity to the task.
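To make the distinction concrete, here is a rough sketch of how a 50% time-horizon could be read off a set of results. The data points are made up, and the log-space interpolation is a simplification I'm assuming for illustration (METR fits a curve rather than interpolating), so treat it as a toy, not their method. The function name `fifty_percent_horizon` is hypothetical.

```python
import math

def fifty_percent_horizon(results):
    """Estimate the human task length (minutes) at which success drops to 50%.

    `results` is a list of (human_minutes, success_rate) pairs sorted by
    human_minutes. Interpolates linearly in log2(task length) between the
    two points that bracket a 50% success rate.
    """
    for (t0, p0), (t1, p1) in zip(results, results[1:]):
        if p0 >= 0.5 >= p1 and p0 != p1:
            frac = (p0 - 0.5) / (p0 - p1)
            log_t = math.log2(t0) + frac * (math.log2(t1) - math.log2(t0))
            return 2 ** log_t
    return None  # success rate never crosses 50% in the data

# Hypothetical results: task length is how long a human needs, not how
# long the model ran. Model wall-clock time appears nowhere in the input.
toy_results = [(1, 0.95), (4, 0.80), (16, 0.40), (64, 0.10)]
horizon_minutes = fifty_percent_horizon(toy_results)
```

With these toy numbers the horizon comes out to roughly 11 minutes, and nothing about how long the model stayed coherent enters the calculation.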

I think that’s reasonable, but Anthropic’s claim would still be a little misleading if it can only do tasks that take humans 2 hours. Like, if you claim that your model can work for 30 hours, people don’t have much way to conceptualize what that means except in terms of human task lengths. After all, I can make GPT-4 remain coherent for 30 hours by running it very slowly. So what does this number actually mean?

I want to clarify that this is Anthropic choosing to display a testimonial from one of their customers on the release page. Anthropic's team is editorializing, but I don't think they intended to communicate that the models are consistently completing tasks that require 10s of hours (yet).

I included the organization and person making the testimonial to try and highlight this, but I should've made it explicit in the post.

re: how this updated my model of AI progress:

We don't quite know what the updates were that improved this model's ability at long contexts, but it doesn't seem like it was a dramatic algorithm or architecture update. It also doesn't seem like it was OOMs more spend in training (either in FLOPs or dollars).

If I had to guess, it might be something like an attention variant (like this one by DeepSeek) that was "cheap" to re-train an existing model with. Maybe some improvements with their post-training RL setup. Whatever it was -- it seems (relatively) cheap.

These improvements are being found faster than I expected (based on METR's timeline) and for cheaper than I anticipated (I predicted that going from 2hrs to 4hrs would've needed some factor increase in training plus 2-3 non-trivial algorithmic improvements). I suppose this is something Anthropic is capable of in a few months, but to then verify it and deploy it at scale in under half a year is quite impressive and not what I would've predicted.

Are you implying they have over 4 hour task length? I’m confused about what you’re updating on.

- I think that ~4 hour task length is the right estimate for tasks that Sonnet 4.5 consistently succeeds on with the harness that's built into Claude Code

- Some context: most of the people I interact with are bearish on model progress. Not the LW crowd, though, I suppose. I forget to factor this in when I post here.

- re: METR's study: I'm saying there's acceleration in model progress. The acceleration seems partly due to the architecture changes being easy to find, test, and scale (e.g. variants on attention, modifications on sampling). These changes also do not make models more expensive to train or to run inference on; if anything they seem to make them cheaper to train (e.g. MoEs, native sparse attention, DeepSeek sparse attention).

- Prior to this announcement (plus DeepSeek's drop today), I thought increasing the task-length that models can succeed on would've required more non-trivial architecture updates. Something on the scale of DeepSeek's RL on reasoning chains or the use of FlashAttention to scale training FLOPs by an OOM.

Note that they also claimed Opus 4 worked for over seven hours but only scored 1h20m on the METR task suite. I wouldn't be surprised to see Sonnet 4.5 get a strong METR score but 30 hours definitely isn't likely.

Well, dividing by <7 would give >4 hours which is on trend ;)

Actually that would be somewhat ahead still.
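For anyone who wants the arithmetic spelled out, here is a quick sketch using only the figures quoted in this thread (7 hours claimed and 1h20m measured for Opus 4, 30 hours claimed for Sonnet 4.5). The assumption that the claimed-to-measured ratio carries over between models is mine, and the helper name `implied_metr_hours` is hypothetical.

```python
def implied_metr_hours(claimed_hours, ref_claimed_hours, ref_metr_hours):
    """Scale a claimed autonomous-work duration by a reference model's
    claimed-to-measured ratio. Assumes the ratio transfers across models."""
    ratio = ref_claimed_hours / ref_metr_hours
    return claimed_hours / ratio

# Figures as quoted in the thread, not independently verified.
opus4_claimed = 7.0        # "worked for over seven hours"
opus4_metr = 1 + 20 / 60   # 1h20m on the METR task suite
sonnet45_claimed = 30.0    # the 30-hour testimonial

ratio = opus4_claimed / opus4_metr  # about 5.25
implied = implied_metr_hours(sonnet45_claimed, opus4_claimed, opus4_metr)
conservative = sonnet45_claimed / 7.0  # dividing by a full 7 instead
```

Dividing by the Opus 4 ratio gives a bit under 6 hours, and even dividing by a full 7 gives a bit over 4 hours.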

contra @noahpinion's piece on AI comparative advantage

https://www.noahpinion.blog/p/plentiful-high-paying-jobs-in-the

TL;DR

- AI-pilled people say that some form of major un/underemployment is in the near future for humanity.

- This misses the subtle idea of comparative advantage, i.e:

- "Imagine a venture capitalist (let’s call him “Marc”) who is an almost inhumanly fast typist. He’ll still hire a secretary to draft letters for him, though, because even if that secretary is a slower typist than him, Marc can generate more value using his time to do something other than drafting letters. So he ends up paying someone else to do something that he’s actually better at."

- Future AIs will eventually be better than every human at everything, but humans will still have a human-economy because AIs will have much better things to do.
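To make the Marc example concrete, here is a small sketch with numbers I made up (hourly deal value, typing speeds, and the secretary's wage are all hypothetical, as are the names `Worker`, `marc_types_own_letters`, and `marc_hires_secretary`). It only illustrates the opportunity-cost logic and is not a model from the linked piece.

```python
from dataclasses import dataclass

@dataclass
class Worker:
    name: str
    deal_value_per_hour: float  # dollars of value from the high-value task
    letters_per_hour: float     # typing output

    def opportunity_cost_per_letter(self) -> float:
        # Deal value given up for each letter this worker types.
        return self.deal_value_per_hour / self.letters_per_hour

# Hypothetical numbers: Marc is better at BOTH tasks.
marc = Worker("Marc", deal_value_per_hour=500.0, letters_per_hour=10.0)
secretary = Worker("Secretary", deal_value_per_hour=20.0, letters_per_hour=5.0)

HOURS_PER_DAY = 8.0
LETTERS_NEEDED = 10.0
SECRETARY_WAGE = 30.0  # dollars per hour, hypothetical

def marc_types_own_letters() -> float:
    """Marc's net daily value if he drafts the letters himself."""
    typing_hours = LETTERS_NEEDED / marc.letters_per_hour
    return (HOURS_PER_DAY - typing_hours) * marc.deal_value_per_hour

def marc_hires_secretary() -> float:
    """Marc's net daily value if he pays the secretary to draft them."""
    secretary_hours = LETTERS_NEEDED / secretary.letters_per_hour
    return HOURS_PER_DAY * marc.deal_value_per_hour - secretary_hours * SECRETARY_WAGE

value_alone = marc_types_own_letters()
value_with_hire = marc_hires_secretary()
```

Under these numbers Marc nets 3500 typing his own letters and 3940 after hiring, because each letter costs him 50 in forgone deal value versus 4 for the secretary. The argument depends on Marc's time being scarce relative to valuable things he could do with it.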

I think this makes a lot of sense and mostly agree. But I want to pose the question: what if AIs run out of useful things to do?

I don't mean they'll run out of useful things to do forever, but what if AIs run into "atom" bottlenecks the way that humans already do?

I'm using a working definition of aligned AI that goes something like:

- AI systems mostly act autonomously, but are aligned with individual and societal interests

- We make (mostly) reasonable tradeoffs about when and where humans must be in the loop to look over and approve further actions by these systems

More or less, systems like Claude Code or DeepResearch with waiting periods of hours/days/weeks between human check-in time instead of minutes.

Assuming we have aligned AI systems in the future, think about this:

- AI-1 is tasked with developing cures to cancers. It reads all the literature, has some critical insight, and then sends a report to run experiments X, Y, Z to some humans and then report back with the results.

- While waiting for humans to finish up the experiment: what does AI-1 do?

- In the days/weeks it takes to run the experiments maybe AI-1 will be tasked with solving some other class of disease. It reads all the literature, has some critical insight, needs more data, and sends more humans off to run more experiments in atom-realm.

- Eventually, AI-1 no longer has diseases (of human interest) to analyze and has to wait until experiments finish. We do not have enough wet labs and humans running around cultivating petri dishes to keep it busy. (or even if we have robot wet-labs, we are still bottlenecked by the time it takes to cultivate cultures and so on).

It's unclear to me what we'll allocate AI-1 to do next (assuming it's not fully autonomous). And in a world where AI-1 is fully autonomous and aligned, I'm not sure AI-1 will know either.

This is what makes me unsure about the comparative advantage point. At this point, I imagine someone (or AI-1 itself) determines that in the meantime, it can act as AI-1-MD and consult with patients in need.

And then maybe there are no more patients to screen (perhaps everyone is incredibly healthy, or we have more than enough AIs for everyone to have personalized doctors). AI-1-MD has to find something else to do.

There's a wide range for how long this period of "atom bottlenecks" could last. In some areas (like solving all diseases), I imagine the incentives will be aligned enough that we'll look to remove the wetlab/experimentation bottleneck. But I think the world looks very different depending on whether that bottleneck takes 2 years or 20 years to remove.

In a world where it takes 2 years to solve the "experimentation" bottleneck, AI-1 can use its comparative advantage to pursue research and probably won't replace doctors/lawyers/whatever its next best alternative is. But if it takes 20 years to solve these bottlenecks, then maybe a lot of AI-1's time is spent replacing large parts of what a doctor/lawyer/etc. does.

AIs don't "labor" the same way humans do. They won't need 20-30 years of training for advanced jobs; they'll always have access to all the knowledge they need to be an expert in an instant. They won't think in minutes/hours; they'll think at the speed of processors -- which is milliseconds and nanoseconds. They'll likely be able to context switch between domains with no penalty.

A really plausible reality to me is that many cognitive and intellectual tasks will be delegated to future AI systems because they'll be far faster, better, and cheaper than most humans, and they won't have anywhere else to point their ability at.

They could be tasked with solving the entropic death of the universe à la Asimov's "The Last Question".