I think my big problem with complexity science (having bounced off it a couple of times, never having engaged with it productively) is that though some of the questions seem quite interesting, none of the answers or methods seem to have much to say.

Which is exacerbated by a tendency to imply they have answers (or at least something that is clearly going to lead to an answer).

I agree that there often seems to be something very shallow about the methods, but I don't necessarily hold that against them. Many very useful results come from very shallow claims, and my impression is that complexity science is often pretty useful at its best, and it's wrong to judge the field by its average performers or by its current and past hype.

Compared with the work done by the alignment-style agent-foundationists, you'll probably be disappointed by the "deepness", but I do think you'll be impressed by the applicability.

The test of a good idea is how often you find yourself coming back to it despite not thinking it was all that useful when first learning it. This has happened many times to me after learning about many methods in complex systems theory.

Deep learning/AI was historically bottlenecked by things like

(1) anti-hype (when single-layer perceptrons couldn't do XOR and ~everyone just sort of gave up collectively)

(2) lack of huge amounts of data/ability to scale

I think complexity science is in an analogous position. In its case, the 'anti-hype' is probably from a few people (probably physicists?) saying emergence or the edge of chaos is woo and everyone rolling with it resulting in the field becoming inert. Likewise, its version of 'lack of data' is that techniques like agent based modeling were studied using tools like NetLogo which are extremely simple. But we have deep learning now, and that bottleneck is lifted. It's maybe a matter of time before more people realize you can revisit the phase space of techniques like that with new tools.

As a quick example: John Holland made a distilled model of complex systems called "Echo" which he described in Hidden Order and if you download NetLogo you can run it yourself. It's a bit cute, actually - here's an image of it:

But anyway, the point is that this is the best people could do at the time - there was an acknowledgement that some systems cannot be understood analytically in tractable ways, and predicting/controlling them would benefit from algorithmic approaches. So? An attempt was made to do it by making these hard-coded rules for agents. But now that we have deep learning, we can make beefed up artificial ecologies of our own to empirically study the external dynamics of systems. Though it still demands theoretical advancements (like figuring out the parameterized, generalized forms of physics equations and fitting them to empirical data to then model that system with deep learning ecologies).
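To make "hard-coded rules for agents" concrete, here is a minimal sketch of that older style of agent-based model. It is not Holland's Echo or any NetLogo model: the function name `run_ecology`, the four rules, and every number in it are hypothetical choices made only for illustration.

```python
import random


def run_ecology(steps=200, n_cells=50, n_agents=20, seed=0):
    """Toy agent-based ecology on a ring of cells (illustrative assumptions only).

    Returns the population size after each step, starting with the initial size.
    """
    rng = random.Random(seed)
    resources = [5.0] * n_cells
    agents = [{"pos": rng.randrange(n_cells), "energy": 5.0} for _ in range(n_agents)]
    history = [len(agents)]

    for _ in range(steps):
        # The environment regrows a little resource in every cell, up to a cap.
        resources = [min(10.0, r + 0.5) for r in resources]
        rng.shuffle(agents)
        survivors = []
        for agent in agents:
            # Rule 1: move one cell left, stay put, or move one cell right.
            agent["pos"] = (agent["pos"] + rng.choice((-1, 0, 1))) % n_cells
            # Rule 2: harvest up to 2 units from the cell, then pay 1 unit of upkeep.
            bite = min(2.0, resources[agent["pos"]])
            resources[agent["pos"]] -= bite
            agent["energy"] += bite - 1.0
            # Rule 3: agents with no energy left die.
            if agent["energy"] <= 0:
                continue
            # Rule 4: agents with enough energy split it with a new offspring.
            if agent["energy"] >= 10.0:
                agent["energy"] /= 2
                survivors.append({"pos": agent["pos"], "energy": agent["energy"]})
            survivors.append(agent)
        agents = survivors
        history.append(len(agents))
    return history


population = run_ecology()
print(population[:10], "...", population[-1])
```

Every behaviour here is fixed by hand in the four rules, which is exactly the limitation being described: swapping those rules for learned policies is where the newer tools would come in.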

If people find that the methods/ideas are lacking, they might be exploring the field in a suboptimal way/not investigating thoroughly. One thing I do is try to make some initial attempt to define things myself the best I can (e.g. try to formalize/locate the sharp left turn, edge of chaos, emergence, etc. and what it'd take to understand it) which adjusts my attention mechanism for looking for research, which lets me find niche content that's surprisingly relevant (in my opinion). If you come at the field with far too much skepticism, you're almost making yourself unnecessarily blind.

The difference between doing this and not might be substantial: if you don't, you might take a look at the surface level of the field and just see hand-wavey attempts at connecting a bunch of disparate things - but if you do, you might notice that 'robust delegation', 'modularity', 'abstractions', 'embedded agency', etc. have undergone investigations from a number of angles already, and find public alignment research almost boring, not-even-wrong, or framed/approached in unprincipled ways in comparison (which I don't blame them for - there's something very anti-memetic about studying the hard parts of alignment theory leaving the field impoverished of size and diversity).

I spent a bit of time reading the first few chapters of Complexity: A Guided Tour. The author (also at the Santa Fe Institute) claimed that, basically, everyone has their own definition of what "complexity" is, the definitions aren't even all that similar, and the field of complexity science struggles because of this.

However, she also noted that it's nothing to be (too?) ashamed of: other fields have been in similar positions, have come out ok, and that we shouldn't rush to "pick a definition and move on".

We have to theorize about theorizers and that makes all the difference.

That doesn't really seem to me to hit the nail on the head.

I get the idea of how in physics, if billiards balls could think and decide what to do it'd be much tougher to predict what will happen. You'd have to think about what they will think.

On the other hand, if a human does something to another human, that's exactly the situation we're in: to predict what the second human will do we need to think about what the second human is thinking. Which can be difficult.

Let's abstract this out. Instead of billiards balls and humans we have parts. Well, really we have collections of parts. A billiard ball isn't one part, it consists of many atoms. Many other parts. So the question is of what one collection of parts will do after it is influenced by some other collection of parts.

If the system of parts can think and act, it makes it difficult to predict what it will do, but that's not the only thing that can make it difficult. It sounds to me like difficulty is the essence here, not necessarily thinking.

For example, in physics suppose you have one fluid that comes into contact with another fluid. It can be difficult to predict whether things like eddies or vortices will form. And this happens despite the fact that there is no "theorizing about theorizers".

Another example: it is often actually quite easy to predict what a human will do even though that involves theorizing about a theorizer. For example, if Employer stopped paying John Doe his salary, I'd have an easy time predicting that John Doe would quit.

The problem with the difficulty frame is that I don't really see any reason to believe that increasing the difficulty of the problems you try to solve gives you the same problems & solutions across the following fields:

- Economics

- Sociology

- Biology

- Evolution

- Neuroscience

- AI

- Probability theory

- Ecology

- Physics

- Chemistry

Except, of course, for two sources:

1. Increasing the difficulty of these in some ways plausibly leads to insights about agency & self-reference

2. There are a bunch of mathematical problems we don't have efficient solution methods for yet (and maybe never will), like nonlinear dynamics and chaos.

I'm happy with 1, and 2 sounds like applied math for which the sea isn't high enough to touch yet. Maybe it's still good to understand "what are the types of things we can say about stuff we don't yet understand", but I often find myself pretty unexcited about the stuff in complex systems theory which takes that approach. Maybe I just haven't been exposed enough to the right people advocating that.

Hm, good points.

I didn't mean to propose the difficulty frame as the answer to what complexity is really about. Although I'm realizing now that I kinda wrote it in a way that implied that.

I think what I'm going for is that "theorizing about theorizers" seems to be pointing at something more akin to difficulty than truly caring about whether the collection of parts theorizes. But I expect that if you poke at the difficulty frame you'll come across issues (like you have begun to see).

https://economics.mit.edu/sites/default/files/publications/Systemic%20Risk%20and%20Stability%20in%20Financial%20Networks..pdf excellent paper on applying networks to financial crisis (although I have no idea if it counts as complexity science, but it seems at least adjacent)

Here is a chapter from an upcoming textbook on complex systems with discussion of their application to AI safety: https://www.aisafetybook.com/textbook/5-1

I forgive the ambiguity in definitions because:

1. they're dealing with frontier scientific problems and are thus still trying to home in on what the right questions/methods even are to study a set of intuitively similar phenomena

2. it's more productive to focus on how much optimization is going into advancing the field (money, minds, time, etc.) and where the field as a whole intends to go: understanding systems at least as difficult to model as minds, in a way that's general enough to apply to cities, the immune system, etc.

I'd be surprised if they didn't run into some of the same theoretical problems involved in solving alignment. (I wouldn't be very surprised if complexity scientists make more progress on alignment than existing alignment researchers. It's hard to bet against institutions of interdisciplinary scientists in close communication with one another already applying empirical work and exploring where the information theorists and physicists of the previous century left off to study life and minds).

That being said, John Holland (one of the pioneers of complexity science, invented genetic algorithms) has written several books on the subject and has made attempts to lay some groundwork for how the field should be studied.

I think he'd probably say complex adaptive systems are an updating interaction network of 'agents' with world models trying to lower some kind of fitness function (huge oversimplification of course). He'd probably also emphasize the combinatorial nature of adaptation: structures (schemata ~ abstractions ~ innovations) can be found via mutation-like processes, assembled, tiled, and disassembled.

So we've got the textbook you mentioned which talks about co-evolving multilayer networks, Holland's adapting network of agents, and Krakauer's 'teleonomic matter' description. People might toss in other properties like diversity of entities, flows and cycles of some kind of resource (money, energy, etc), 'emergence', self-organization, etc.

I think those seem fine. I'd probably say that complex systems is something like the academic-child of frontier information theory and physics which focuses on the counterfactual evolutions of non-stationary information flows (infodynamics) when the environment contains sources and sinks of information, and when it contains memory/compression systems. Once you introduce memory and compression into the universe, information about the past and the future are allowed to interact, as well as counterfactuals. Downstream of that, I suspect, is theory of mind, acausal decision theories, embedded agency, etc. Memory systems are also 'lags' in the flow of information, which then changes what the geodesic looks like for bits.

I suspect a science of complexity will involve a lot of concepts from physics like work, energy, entropy, etc. - but they'll involve more generalized, parameterized forms which need to be fit via empirical data from a particular complex system you desire to study.

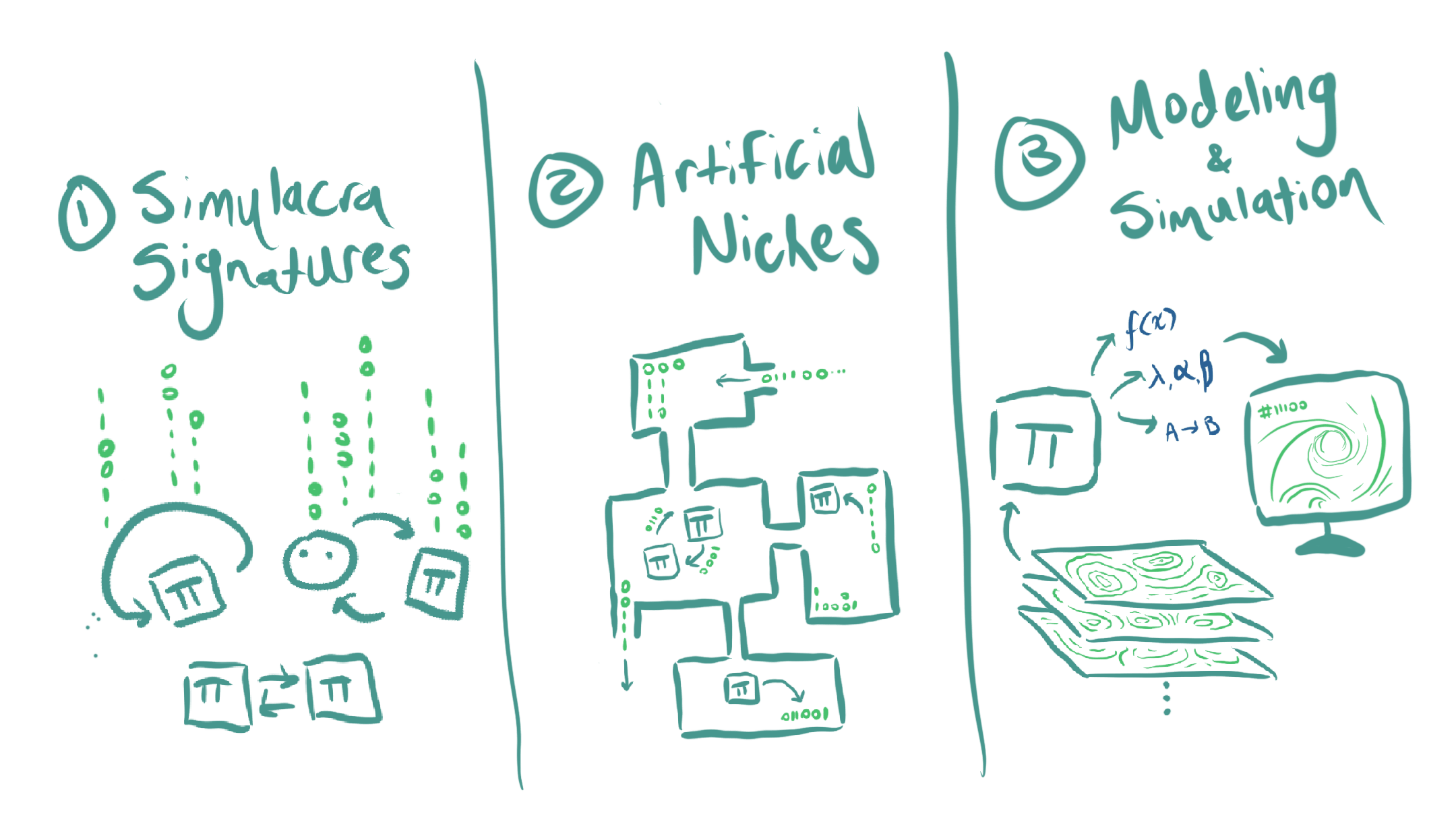

I think alignment might get a lot easier once we understand "infodynamics" far better, and the above drawing is of what I see as my current three-step, high level plan:

(1) find ways to track/measure the heat signatures (really 'information' signatures) of optimization processes/intelligences

(2) develop (open, driven-dissipative) environments that allow intelligences of different scales to move information around and interact with one another to get empirical data on how the 'information-spacetime' changes

(3) extract distilled models of the phenomena/laws going on so that rapid modeling can occur to explore the space of minds with these abstract models

Here are some resources:

1. The journal Entropy (this specifically links to a paper co-authored by D. Wolpert, the guy who helped come up with the No Free Lunch Theorem)

2. John Holland's books or papers (though probably outdated and he's just one of the first people looking into complexity as a science - you can always start at the origin and let your tastes guide you from there)

3. Introduction to the Theory of Complex Systems and Applying the Free-Energy Principle to Complex Adaptive Systems (one of the sections talks about something an awful lot like embedded agency in a lot more detail)

4. The Energetics of Computing in Life and Machines

And I'm guessing non-stationary information theory, statistical field theory, active inference/free energy principle, constructor theory (or something like it), random matrix theory, information geometry, tropical geometry, and optimal transport are all also good to look into, as well as adjacent fields based on your instinct. That's not intended to be covering the space elegantly, just a battery of things in the associative web near what might be good to look into. Combinatorics, topology, fractals and fields are where it's at.

I have more resources/thoughts on this but I'll leave it at that for now unless someone's interested. The best resource is the will to understand and the audacity to think you can, of course.

You seem to be knowledgeable in this area, what would you recommend someone read to get a good picture of things you find interesting in complex systems theory?

I didn't personally go about it in the most principled way, but:

1. locate the smartest minds in the field or tangential to it (surely you know of Friston and Levin, and you mentioned Krakauer - there's a handful more. I just had a sticky note of people I collected)

2. locate a few of the seminal papers in the field, the journals (e.g. Entropy)

3. based on your tastes, skim podcasts like Santa Fe's or Sean Carroll's

4. textbooks (e.g. that theory of CAS book you mentioned (chapter 6 on info theory for CAS seemed like the most important if I had to pick one), multilayer networks theory, statistical field theory (for neural networks, etc.)) - I personally also save books which seem a bit distanced from alignment (e.g. theoretical ecology/metabolic theory of ecology) just out of curiosity to see how they think/what questions they wind up asking

Of these, I think getting a feel for the unsolved problems/vocabulary/concepts that keep recurring in journals is important, and pretty much anything related to "what does it take to unify information and physics, and extend information theory to talk about open systems, and how do we get there asap" seems good because of how foundational all that is.

How do you intend to do those 3 things? In particular, 1 seems pretty cool if you can pull it off.

I'm not expecting to pull off all three, exactly - I'm hoping that as I go on, it becomes legible enough for 'nature to take care of itself' (other people start exploring the questions as well because it's become more tractable (meta note: wanting to learn how to have nature take care of itself is a very complexity scientist thing to want)) or that I find a better question to answer.

For the first one, I'm currently making a suite of long-running games/tasks to generate streams of data from LLMs (and some other kinds of algorithms too, like basic RL and genetic algorithms eventually) and am running some techniques borrowed from financial analysis and signal processing (etc) on them because of some intuitions built from experience with models as well as what other nearby fields do

Maybe too idealistic, but I'm hoping to find signs of critical dynamics in models during certain kinds of tasks and I'd also like to observe some models with more memory dominate other models (in terms of which model diverges more from its start state to the other model's) etc. - Anthropic's power laws for scaling are sort of unsurprising, in a certain sense, if you know how ubiquitous some kinds of relationships are given some kinds of underlying dynamics (e.g. minimizing cost dynamics)
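As a rough illustration of what checking for a power-law relationship can look like, here is a minimal sketch that fits `y = c * x**alpha` by least squares in log-log space. The function name `fit_power_law` and the synthetic data are hypothetical; this is not the analysis pipeline described above, and a straight line on a log-log plot is only a first diagnostic, not strong evidence of a power law or of critical dynamics.

```python
import math


def fit_power_law(xs, ys):
    """Least-squares fit of y = c * x**alpha on log-transformed data.

    Returns (c, alpha). Assumes every x and y is strictly positive.
    """
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    n = len(log_x)
    mean_x = sum(log_x) / n
    mean_y = sum(log_y) / n
    sxx = sum((u - mean_x) ** 2 for u in log_x)
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(log_x, log_y))
    alpha = sxy / sxx                 # slope in log-log space is the exponent
    c = math.exp(mean_y - alpha * mean_x)  # intercept gives the prefactor
    return c, alpha


# Synthetic, noise-free data generated from a made-up law y = 3 * x**-0.5.
xs = [10.0 ** k for k in range(1, 7)]
ys = [3.0 * x ** -0.5 for x in xs]
c, alpha = fit_power_law(xs, ys)
print(f"prefactor ~ {c:.3f}, exponent ~ {alpha:.3f}")
```

On real data you would want to compare against alternatives such as log-normal or exponential fits before reading much into the exponent.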

Anthropic's power laws for scaling are sort of unsurprising, in a certain sense, if you know how ubiquitous some kinds of relationships are given some kinds of underlying dynamics (e.g. minimizing cost dynamics)

Also unsurprising from the comp-mech point of view I'm told.

For the first one, I'm currently making a suite of long-running games/tasks to generate streams of data from LLMs (and some other kinds of algorithms too, like basic RL and genetic algorithms eventually) and am running some techniques borrowed from financial analysis and signal processing (etc) on them because of some intuitions built from experience with models as well as what other nearby fields do

I'm curious about the technical details here, if you're willing to provide them (privately is fine too).

Yeah, I'd be happy to.

I'm working on a post for it as well + hope to make it so others can try experiments of their own - but I can DM you.

Having briefly looked into complexity science myself, I came to similar conclusions -- mostly a random hodgepodge of various fields in a sort of impressionistic tableau, plus an unsystematic attempt at studying questions of agency and self-reference.

And chemistry??? It's mostly brought into the picture to talk about stoichiometry, the study of the rate and equilibria of chemical reactions. Still, what?

Your classic, well-behaved, homogeneous chemical reaction between perfectly miscible liquids or gases is described by a system of rate equations, one per species, where each term is a reaction rate that either adds to or subtracts from that species' concentration (for example, one species decays into another without any other product, two species react together to form a third, and then one reacts with two units of another and becomes something else, and so on). This, by the way, is exactly the sort of system of differential equations we use for epidemiology (and that is also used in the social sciences for things like belief spread across networks). This kind of model is generally very straightforward to solve numerically, and doesn't offer much complexity. Fun fact: you can also write a simple "mean field" model of Conway's Game of Life this way, but that example also drives home the fact that this kind of model is only an idealisation. In reality, as in Conway's Game of Life, incredible complexity can emerge when these reactions are constrained by position (for example, a bunch of immiscible liquid reagents on a plate that can only interact at their boundaries). So I can see how there would be potentially complex chemical systems that are very useful analogues for systems in other domains.
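For a concrete picture of the well-mixed case, here is a minimal sketch of mass-action rate equations integrated with forward Euler. The network (A decays to B, B and C form D, D reacts with two A to give E), the species names, the rate constants, and the initial concentrations are all hypothetical labels chosen to match the pattern described in words above.

```python
def rates(state, k1=1.0, k2=0.5, k3=0.2):
    """Mass-action derivatives for a hypothetical well-mixed network:

        A        -> B   (rate k1 * [A])
        B + C    -> D   (rate k2 * [B] * [C])
        D + 2 A  -> E   (rate k3 * [D] * [A]**2)
    """
    a, b, c, d, e = state
    r1 = k1 * a
    r2 = k2 * b * c
    r3 = k3 * d * a * a
    return (
        -r1 - 2.0 * r3,  # d[A]/dt: lost to decay, and two units per D + 2A event
        r1 - r2,         # d[B]/dt: made by decay of A, consumed with C
        -r2,             # d[C]/dt: only consumed
        r2 - r3,         # d[D]/dt: made from B + C, consumed with A
        r3,              # d[E]/dt: only produced
    )


def integrate(state, dt=0.001, steps=10_000):
    """Forward-Euler integration; crude, but enough for a smooth system like this."""
    for _ in range(steps):
        derivs = rates(state)
        state = tuple(x + dt * dx for x, dx in zip(state, derivs))
    return state


initial = (1.0, 0.0, 1.0, 0.0, 0.0)  # concentrations of A, B, C, D, E
final = integrate(initial)
print([round(x, 4) for x in final])
```

Each derivative is just a sum of signed rate terms, which is why the same template covers compartmental epidemiology models. A useful sanity check is that [C] + [D] + [E] stays constant, since every C ends up in D or E. Nothing here knows about position, which is the idealisation the paragraph goes on to point out.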

And chemistry??? It's mostly brought into the picture to talk about stoichiometry, the study of the rate and equilibria of chemical reactions. Still, what?

For what it's worth, deconfusing my patchy chemistry understanding from secondary school by reading proper university chemistry and biochemistry books paid off. For example, my chemistry class hadn't given me deep enough appreciation for the fact that sometimes a reaction might be thermodynamically favorable, but has horrible kinetics. The same is true for evolution in a lot of places, but I have not seen a lot of people using that intuition. Like in some sense that is obvious, but in another sense I think in a lot of subjects including evolution, econ and learning I was applying that intuition inconsistently, and I definitely didn't make the distinction that clearly. Since chemistry has explicit energies there that you can calculate, it's easier to not commit type errors.
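A quick worked example of "favorable thermodynamics, horrible kinetics", as a sketch under stated assumptions: the free energy change, activation energy, and pre-exponential factor below are made-up numbers, the reaction is assumed first-order, and the rate constant is assumed to follow the Arrhenius form.

```python
import math

R = 8.314   # gas constant, J/(mol*K)
T = 298.15  # room temperature, K

delta_g = -50_000.0            # hypothetical standard free energy change, J/mol
activation_energy = 150_000.0  # hypothetical activation barrier, J/mol
prefactor = 1e13               # hypothetical Arrhenius pre-exponential factor, 1/s

# Thermodynamics: the equilibrium constant depends only on delta_g.
k_eq = math.exp(-delta_g / (R * T))

# Kinetics: the rate constant depends on the barrier, not on delta_g.
k_rate = prefactor * math.exp(-activation_energy / (R * T))

# Half-life of a first-order process, converted from seconds to years.
seconds_per_year = 365.25 * 24 * 3600
half_life_years = math.log(2) / k_rate / seconds_per_year

print(f"K_eq ~ {k_eq:.2e} (products overwhelmingly favored)")
print(f"half-life ~ {half_life_years:.2e} years (effectively never happens)")
```

The two quantities come from different energies, which is the type distinction being made: "where the system wants to end up" and "how fast it gets there" are separate questions.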

I have a long and confused love-hate relationship with the field of complex systems. People there never want to give me a simple, straightforward explanation about what it's about, and much of what they say sounds a lot like woo ("edge of chaos" anyone?). But it also seems to promise a lot! This from the primary textbook on the subject:

Of course, that textbook never actually described what the mosaic it thought it saw actually was. The closest it came to was:

Which... well... isn't very exciting, and as far as I can tell just describes any dynamical system (co-evolving or no).

The textbook also seems pretty obsessed with a few seemingly random fields:

"What?" I had asked, and I started thinking

And each time I heard a complex systems theorist talk about why their field was important they would say stuff like

That is, until I found this podcast with David Krakauer[1].

Now, to be clear, his framing of complex systems science is... let's say... controversial. But he is the president of the Santa Fe Institute, so not just some crackpot[2]. Anyway, he says that the phrase "complex systems" is a shortening of the more accurate phrase "complex adaptive systems". That is, complex systems are adaptive systems which are complex.

Ok, what does complex mean? I'll leave it to David to explain

Remind you of anything?

Now we can go back to our list:

And it's pretty clear how this ties together. Each field provides new math and data on the same underlying question: How would particles interact if they could "think"? Some of the above provides more foundational stuff (physics, probability theory, chemistry--in particular the study of equilibria and bottlenecks), and others provide more high-level stuff (economics, sociology, evolution, AI), but it's all clearly related under this banner.

[1] h/t Nora_Ammann

[2] Insofar as complex systems scientists aren't crackpots to begin with