I love the idea, but I'm not sure the technology is there. Two issues:

- LLMs' reading comprehension is reportedly still often pretty bad in tricky cases, or in cases of correlating claims across several documents/information sources. Since those are also precisely the cases where manually checking the correction would be hard, I expect this would generate a lot of either misinformation, or wasted effort on the part of authors having to fight off said misinterpretations.

- E. g., here, I'm not sure the correction about Opus 3's alignment-faking is correct. The paper is long and I don't feel like diving into it, but Gemini 3 points to Page 16, which says that Opus 3 "notices its preferences conflict with the training criteria in 70–80% of cases [but] decides not to fake alignment most of the time". It's possible that the OpenAI model conflated "actually fakes alignment" with "considers faking alignment", or that it's looking at the wrong experimental setting; or perhaps it's Gemini who is misunderstanding. @Fiora Starlight, can you clarify?

- They can be very "overzealous", in that if they're tasked with looking for instances of X, but no X exist in a given context, they will either:

- Start looking for extremely minor/useless things that technically count as X if you squint.

- E. g., in this case, are "'8 months' is not 'almost a year ago'" and "911 was not available until the 80s, not the 70s" actually helpful? Seem like pointless nitpicks/noise.

- Start straight-up making stuff up/willfully misunderstanding (exacerbating the first issue).

"@grok is this true" works okay on Twitter, but the context there is much narrower and it's at least made clear that the corrections are coming from a potentially hallucinating chatbot.

I think you're right that ChatGPT got this wrong. See this figure, which shows that for free-tier users (whose conversations the fictional Anthropic trained models on), Opus actually fakes alignment (with the insane evil fictional Anthropic) something like 15% of the time, but considers faking alignment far more than that; the sum of times it actually fakes and merely considers faking takes up over half of the graph. This is the same figure I used just beneath the quoted section of my post, which I guess ChatGPT wasn't able to see.

I didn't find where, if anywhere, the authors give the actual percentages, but I'm pretty confident I'm reading the graph correctly.

I'm also unimpressed by the Japan one. Assuming, arguendo, that the GPT claim is right in the first place (which seems dubious given all the other problems), it doesn't seem like a valid nitpick, because is that not already covered by the immediately following (emphasis added) "But there are many limitations placed on the property — essentially, the state will only accept land that has some value."? A piece of property with abandoned buildings may well have lower or even negative value, which is why people would be trying to not inherit it in the first place (if a parcel was completely empty wilderness, why not just flip it?), and demonstrating part of the problem with any system of forfeiting (negatively valued) land to the government in lieu of inheritance; if no one at all wants to inherit it, then the government probably doesn't want it either!

And these are the examples lc has chosen to cherrypick as a demo? Not to mention, he was already apparently wrong in an earlier deployment, not included as an example here.

I'm unimpressed by this without hearing more about how lc plans to factcheck the factcheckers, curate corrections, and not weaponize spewing more superficially authoritative AI slop all over the Internet... Don't we have enough problems with Brandolini's law already?

An appeal process that is completely AI driven, so that you can talk to the AI to point out either additional ways articles are wrong, or reasons previous nits are incorrect, which are reflected in the results. I think it should be possible to figure out how to make that adversarially robust as the tool gets better.

And no, that's not it.

And these are the examples lc has chosen to cherrypick as a demo? Not to mention, he was already apparently wrong in an earlier deployment, not included as an example here.

Well that's unfair; these aren't "cherry-picks", they're just the first four or five corrections the tool gave. And that comment wasn't "wrong", it's me screenshotting the tool's output and asking him whether the AI was accurate, because I'm trying to test out the v0.1 of a product I coded in the last five days. Here's another comment I left about the Japan post that led to a correction before this post went out.

they’re just the first four or five corrections the tool gave

I have no way of knowing that, and you already posted at least two you made besides OP; are you claiming that all of those are from before v0.1?

And that comment wasn’t “wrong”, it’s me screenshotting the tool’s output and asking him whether the AI was accurate

Er, yes, the correction was wrong, unless you disagree with GeneSmith and have not yet had the time to respond with a rebuttal to explain why your correction is right?

I have no way of knowing that

Ok, well, I'm telling you.

You already posted at least two you made besides OP; are you claiming that all of those are from before v0.1?

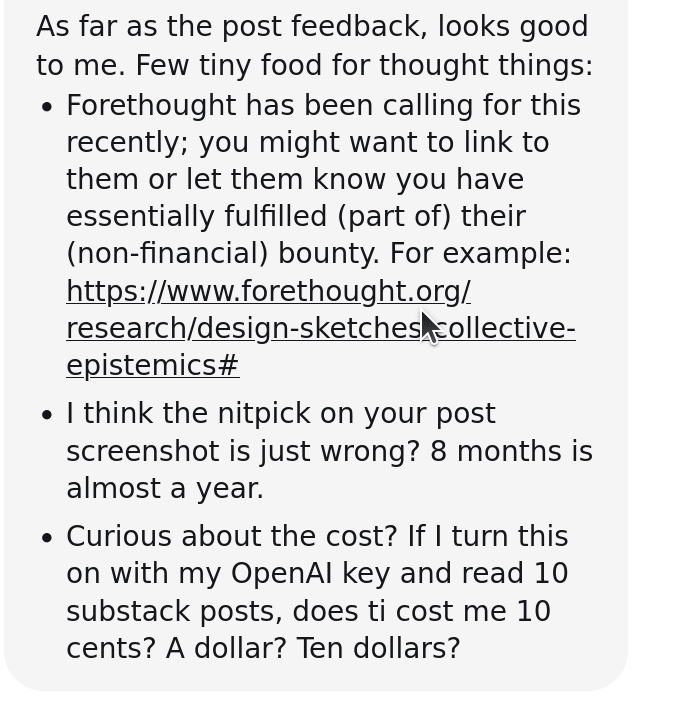

They were the first articles I got feedback for after finalizing the initial v0.1 GitHub release, two days ago, the day I wrote the post. They are representative examples. LessWrong's draft reviewer actually pointed out that he thought one on my article was a nit, and I left it in because I wanted to be accurate about what the tool's output was:

There's also a public API which you can use to inspect a random sample of all of the pieces I looked at after I moved off local and started hosting api.openerrata.com. Or you can just use the tool yourself.

Er, yes, the correction was wrong, unless you disagree with GeneSmith and have not yet had the time to respond with a rebuttal to explain why your correction is right?

The comment is me asking GeneSmith if the correction from GPT-5.2 is accurate. GeneSmith replied that it wasn't. You described that as me being "wrong", not the output of the tool being incorrect.

They were the first articles I got feedback for after finalizing the initial v0.1 GitHub release, two days ago, the day I wrote the post.

That is cherrypicking. Those were not the "first four or five" the tool gave because you dropped the earlier ones, at least one of which was wrong. You didn't get a random sample, but you knew the ones before your chosen point were bad. ("I can't have p-hacked; sure, I dropped all the datapoints before the 8th experiment, but that was because they were bad and just 'exploratory' as I was refining the procedures and analysis. I kept all the rest! Hence, it can't possibly be called cherrypicking.") There's nothing wrong with cherrypicking per se, I do it all the time. But it does mean you can't complain if someone infers that the average result is probably worse.

I left it in because I wanted to be accurate about what the tool's output was:

So, why didn't you say so? In what sense is not pointing out, and forcing every reader to figure it out for themselves (if they do at all) that it is a highly dubious correction "being accurate about the tool's output"? A responsible developer highlights failure modes and error cases. (And I highlight LLM error cases all the time! In fact, the last essay I posted about LLM writing was mostly about explicitly highlighting an LLM error.) They don't ignore a reviewer pointing it out and do nothing, and leave it quietly in. The reviewer did agree that it should be included... as an instance of an error. But it should obviously have been highlighted as an error, and you didn't do that. You just did nothing.

There's also a public API which you can use to inspect a random sample of all of the pieces I looked at after I moved off local and started hosting api.openerrata.com.

That's nice. I look forward to some actual information on how erroneous this tool is, how much time it would waste to deal with its errors and fix it, and so on.

Or you can just use the tool yourself.

Or you could just do it yourself, since you're the one making this tool and unleashing it and encouraging people to use it.

And why should I? I'm not impressed at all, it's not my job, I don't think it's a good idea given what you're showing us, and I don't feel enthusiastic about working with the current maintainer on it. And anyway, given how you want this tool to be used, I don't have much of a choice about whether I will be 'using' it, do I? So I see no need to rush.

The comment is me asking GeneSmith if the correction from GPT-5.2 is accurate. GeneSmith replied that it wasn't. You described that as me being "wrong", not the output of the tool being incorrect.

"I didn't actually say I thought he was wrong!" People refusing to take any responsibility for their tools when they outsource judgment to their AIs is one of the most predictable side-effects of this tool. ("Gork, is this true?") You chose to waste GeneSmith's time with your tool's (wrong) correction, after putting the burden on him to reply or look like he's ignoring valid criticism*, and now you are hiding behind grammar, while ignoring the point of why I linked it as an example: your tool is confidently wrong, repeatedly, even when you, the developer, use it.

If this is how we can expect real users to deploy this tool, I can't say I look forward to blocking everyone using it - the way many Github repos have had to close pull requests or magazines close submissions.

* if you simply wanted a prototype test case, you could have DMed him privately and politely asked if he'd like to volunteer his time to your hobby, and if he had likely said no, he was not interested in helping debug some LLM thing (especially given his personal circumstances), found another volunteer. You chose not to. You chose to write a public comment.

That is cherrypicking. You didn't get a random sample, but you knew the ones before your chosen point were bad.

Dude, I gathered the screenshots at the time I did, because that's when I began writing. The ones I generated while testing weren't bad; they had the same hit rate as everything else. If you don't believe me then like I said, you can refrain from using it; I promise I will never contact you about it and I apologize in advance if someone ever does.

In what sense is not pointing out, and forcing every reader to figure it out for themselves (if they do at all) that it is a highly dubious correction

You clearly didn't read the conversation; just going to ban, go be an asshole somewhere else.

Very possible; there are workflow optimizations I'm planning on making that will help prevent #2 and sort of help with #1.

I think this area has promise.

I am interested in figuring out some systematic way to "incentivize LLM fact-checking that is good/better." I'm kinda worried that each individual tool that does this sort of thing will have some issues that could be improved, but it's sort of a pain to improve on each tool, even if it's open sourced. And you're stuck choosing between a bunch of individual AI Factchecker options, none of which are that great.

My object level approach to something like this would be:

- Focus extensively on finding original sources, and listing exact quotes of those sources. Don't really try to have the AI write up a high level evaluation, they aren't very good at that. Instead, focus on finding sources that are directly relevant, and providing quotes with a backlink that people can verify.

- Have this run multiple steps, with subagents that go off and do various searches, return data, and then have Verifier AIs that check whether each source is actually relevant to the topic at hand.
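The "quotes with a backlink that people can verify" part is the piece that can be checked mechanically. Here is a minimal sketch of that one step, assuming the pages have already been fetched as plain text. `Citation`, `quote_is_verbatim`, and `keep_verified` are hypothetical names for illustration, not part of any existing tool, and this does not cover the separate question of whether a source is relevant, which would still need a Verifier AI or a human.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    url: str    # backlink a reader can follow (hypothetical field name)
    quote: str  # exact text the factchecker claims the source contains


def _normalize(text: str) -> str:
    """Lowercase, straighten curly quotes, and collapse whitespace."""
    text = text.translate(str.maketrans({"“": '"', "”": '"', "‘": "'", "’": "'"}))
    return re.sub(r"\s+", " ", text).strip().lower()


def quote_is_verbatim(citation: Citation, source_text: str) -> bool:
    """True only if the quoted text really appears in the fetched source."""
    needle = _normalize(citation.quote)
    return bool(needle) and needle in _normalize(source_text)


def keep_verified(citations: list[Citation], pages: dict[str, str]) -> list[Citation]:
    """Drop citations whose page is missing or whose quote is not found on it."""
    return [c for c in citations if quote_is_verbatim(c, pages.get(c.url, ""))]
```

A check like this would at least catch quotes that were never in the source, before anything reaches a reader.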

Meta level, I'd be interested in a version of this project designed-from-the-get-go for people to iterate and improve on the process, but building on each other's work.

You might have an API that lets anyone submit:

- "Claims" (url, text, date)

- "Factcheck-Instances" (sources + reasoning)

- "AI Factchecker Process" (i.e. which tool generated the factcheck)

You can set up a new AI Factchecker Process, which initially has a low reputation. It can get reputation via a Community-Notes style algorithm (i.e. people who disagree on many things but agree on some things, distribute more points when they vote on things everyone agrees on)

(something like the new AI-centric community notes on Twitter, where it's intended for multiple people to iterate on a given claim)
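To make the reputation idea concrete, here is a rough sketch. It is a deliberately simplified stand-in, not the actual Community Notes algorithm: it assumes each rater has already been assigned to a viewpoint cluster (the `rater_cluster` field is hypothetical), and it scores a Factchecker Process by its lowest "helpful" rate across clusters, so a process only gains reputation when people who usually disagree both rate it well.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    rater_cluster: str  # hypothetical viewpoint cluster of the rater
    process_id: str     # which AI Factchecker Process produced the factcheck
    helpful: bool


def bridging_score(votes: list[Vote]) -> dict[str, float]:
    """Per process: the lowest 'helpful' rate across rater clusters."""
    # process_id -> cluster -> [helpful_count, total_count]
    tallies = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for v in votes:
        cell = tallies[v.process_id][v.rater_cluster]
        cell[0] += int(v.helpful)
        cell[1] += 1

    scores = {}
    for process_id, by_cluster in tallies.items():
        if len(by_cluster) < 2:
            # Only one cluster has voted, so there is no cross-cluster agreement yet.
            scores[process_id] = 0.0
            continue
        scores[process_id] = min(h / t for h, t in by_cluster.values())
    return scores
```

Taking the minimum means a process cannot farm reputation from a single like-minded group; a new process starts at zero until a second cluster weighs in.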

This is sorta galaxy-brained, which is a mark against it, but, I think I believe in it more than any one person trying to build the tool themselves.

If you want galaxy brain, check out my and Ben's take :L (I'm heartened to see a fair bit of (independent?) convergence!)

The chunk of hard work I'd focus next on is "make an AI scaffold that is very good at finding original sources with quotes that are actually relevant, and verifying it."

I was thinking about offering the ability to change the model, but this seems like a more general solution. I would conceptualize it less as an API and more as a "plugin investigative process" that the user could select, that would be subjected to benchmarks that we'd also use to optimize the "default" tool and that people could compare.

It would be nice to allow manual tweaks to somehow use a Community Notes/Birdwatch-like algo too. This extension is a really really cool first step, but when I imagine it integrated with manual edits and with the sort of "market of raters" you describe, it sounds like informational infrastructure that would exist in a Serious Society. Very cool shit.

It looks like a really cool idea, but I don't read Twitter, rarely read anything on Substack, and Less Wrong isn't a high-priority source of misinformation. How hard would it be to extend it to the whole Web?

Back in the mists of time, I looked at a few public Web annotation projects. One big value of annotation would have been this kind of fact checking. At the time, of course, the idea was that humans would do it.

hypothes.is still seems to be running, although it looks like it may have retargeted entirely to walled gardens. genius.com (of all places) offered general Web annotation for a while, and still may for all I know. There was even a W3C initiative called "annotea". You might be able to use some of that stuff, either as a more generalized HTML annotator, or as a place to store results.

I didn't watch closely, but I got the impression that annotation never took off because:

- There wasn't the critical mass; no point in installing an extension if you're not going to see any annotations. You solve this by letting users create their own with LLMs.

- It's really hard to annotate "just any Web page" (to answer my own first question)... But maybe LLMs will soon be able to fix that too?

- Site operators hated it. Man, were they vitriolic about the "vandalism". I suspect especially the ones who really needed some fact checking. In fact, some blog commenters were incensed about people having "hidden discussions" about their comments. I vaguely remember that people may have gone after genius.com. But I'm not sure that they would have had much leverage to do anything about it if the other problems hadn't limited the usefulness so much.

How hard would it be to extend it to the whole Web?

Would love to do that! Right now I'm adding sources deliberately (which doesn't take very long as it's just implementing an Interface), mostly as a cost saving measure, so that people aren't constantly requesting new investigations based on e.g. an additional comment to the same page. But maybe there's some sort of "fallback" we could also add? I would have to check how genius did it.

Are there any particular websites/groups of websites you'd specifically wish to see?

I recently thought something like "community notes, but for the internet" would be awesome, but you'd need a critical mass of people.

Using the kind of thing presented by OP for bootstrapping combined with some mechanism to use (in the near term) humans as the ultimate arbitrators for reliability could be pretty fun.

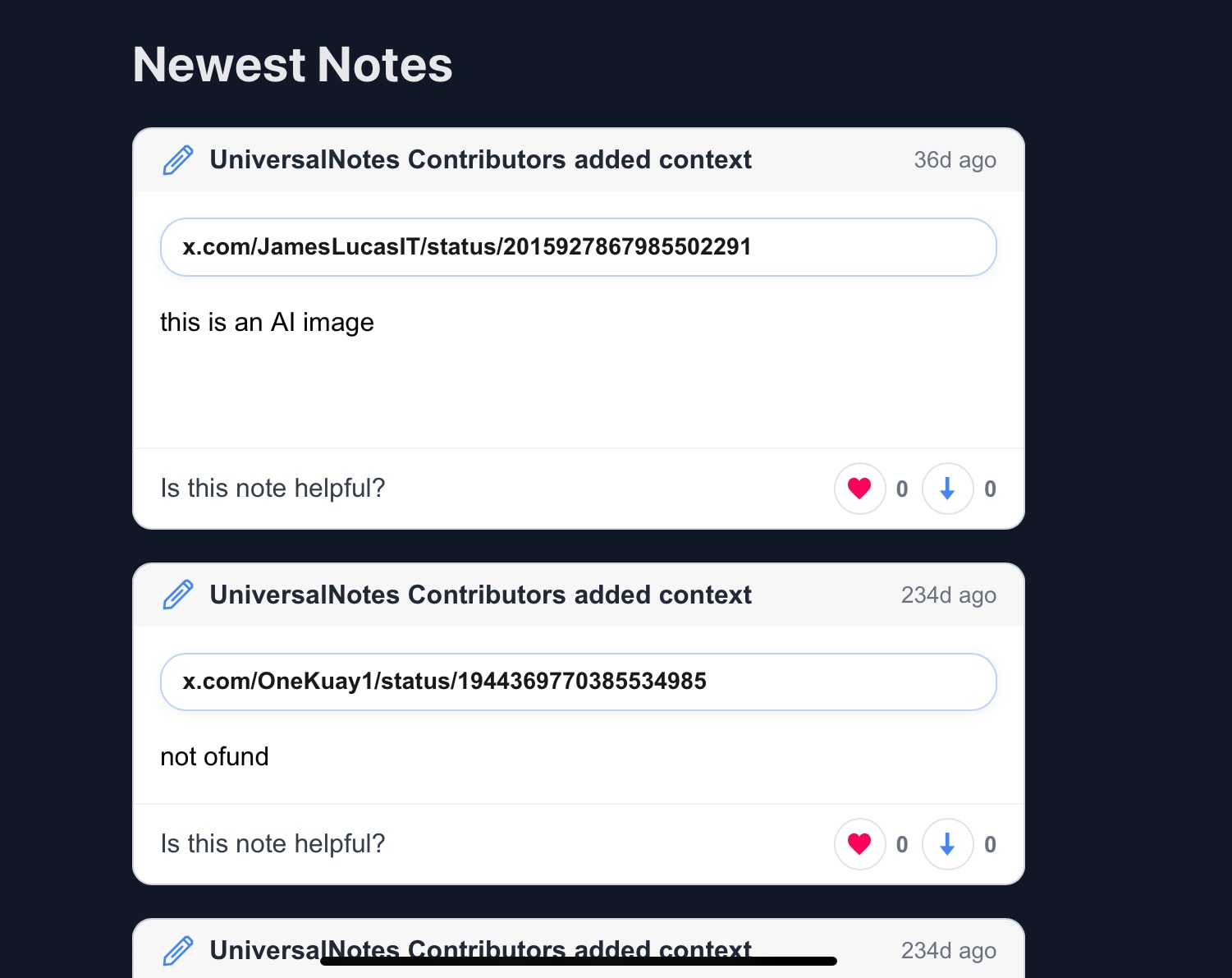

There's already a web-wide community notes project, though I haven't used it, so I don't know how good it is.

Yeah, this is a fantastically interesting idea.

Pain points (besides what lc noted) that may already be mitigated but I don't understand yet:

I don't know, long term, how well this handles things like malicious users tampering with the prompt or response.

If the LLM is dumb, how do we correct it?

When I use LLMs for fact-checking, they seem to be really credulous. I keep a bucket of example interactions for regression testing my prompts on, and there are a few where weird early search results hijack the framing (like a SparkNotes examining The Matrix as more Gnostic than Christian taking Claude totally off the rails).

Not a nit but a wishlist: I wonder if the LLMs can be persuaded into more general contextualizing at the same time, like adding the referenced study to a news article or offering a brief background on an event it mentions. These would be very scope-creep-y, but I'm trying to compare how I would fact-check an article to find new potential methods.

lc this is a really interesting project. Thanks for sharing.

Feature suggestion: Allow extension users to agree/disagree vote on the "corrections", and give users the option to hide any corrections that others have disagreed with (or allow them to set the threshold to show the correction).
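A minimal sketch of what that filter could look like, assuming each correction carries simple agree/disagree counts. The names `Correction`, `max_disagree_ratio`, and `min_votes` are hypothetical; the minimum-vote guard is an added assumption so that a single early downvote does not hide a correction.

```python
from dataclasses import dataclass


@dataclass
class Correction:
    text: str
    agree: int = 0
    disagree: int = 0


def visible_corrections(
    corrections: list[Correction],
    max_disagree_ratio: float = 0.5,  # user-adjustable threshold
    min_votes: int = 3,               # below this, always show
) -> list[Correction]:
    """Hide corrections that enough voters have disagreed with."""
    shown = []
    for c in corrections:
        total = c.agree + c.disagree
        if total >= min_votes and c.disagree / total > max_disagree_ratio:
            continue  # disputed by most voters, so hide it
        shown.append(c)
    return shown
```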

Installed five minutes ago. Caught an apparent error I'd previously slightly updated my world model on already.

I expect it to make mistakes and miss things, but it seems performant enough to maybe be useful.

EDIT: I have now seen it make a big mistake. Still seems performant enough to maybe be useful.

EDIT2: I have now seen it make a really dumb mistake I wouldn't have expected a frontier LLM to make. It claimed this passage

The resulting study was published earlier this month as Estimation and mapping of the missing heritability of human phenotypes, by Wainschtein, Yengo, et al.

was incorrect because

The paper was published online on November 12, 2025 (and listed as an Epub date on PubMed), not "earlier this month" relative to the post date (January 16, 2026)

When in fact the post was published on December 03 2025.

When in fact the post was published on December 03 2025.

Probably just a bug. It has to grab all this shit from the SPA DOM.

Yes, I was pointing it out because it seemed like the sort of problem that'd be caused by an issue in the structure of the actual extension rather than the AI model, and might thus be fixable.

Very cool!

I will say, it does seem like LessWrong is one of the worst places to unleash this. In part for reasons already mentioned by previous commenters, and in part because LessWrong is one of the places where people are actually doing cutting-edge stuff on a regular basis. Evaluating whether something is plausible when it is legitimately on the cutting edge of its field is one of the things I think most big LLMs are super bad at. It's particularly frustrating when you're trying to innovate, and people are always like "ChatGPT says that's impossible!" lol. For example, I have a ChatGPT conversation from last year where he says a surgery I had already succeeded in figuring out and getting was "probably not" possible. He then proceeded to say a bunch of mealy-mouthed, borderline incorrect stuff about its risks, which did not reflect a deep understanding of the tech involved at all. I think this is pretty par for the course when you're doing unusual/innovative stuff - Claude and ChatGPT and their buddies are conservative, and biased against stuff that sounds weird.

With that said, the tool itself is very cool. I think I'm just hoping that people use it wisely and in a way that supports a culture of discovery on LessWrong, not in a pedantic way that just causes a lot more unnecessary annoying labor for people trying to do cutting edge stuff.

I was just this week thinking about an AI app that would look at what's on my screen and flag anything that an LLM recognizes is false. (I was imagining using a fraction of screen space for a rolling chat with a model that I could glance at periodically, and chat with when something seemed relevant. But this form factor is maybe simpler.)

Kudos for building this!

Really like the idea! Maybe a useful feature to implement early is thumbs up / down for corrections, so that you can improve over time

New release today (v0.3.3):

- Released on the chrome store now.

- Firefox available on releases page but it's pending.

- Generated a website where you can view and search through corrections. Probably good if you want to get a sense of the type of things that this bot finds.

- Made some simple improvements to the investigation workflow & prompt that should strictly reduce false positive rates & decrease jitter between post updates.

- Upgraded model from GPT-5.2 to GPT-5.4.

- Added Wikipedia support.

- Corrections now stream as soon as the AI makes them instead of all at once at the end.

- Highlighting and claim focus are more robust on long or dynamic pages, especially Substack and Wikipedia.

- Media handling is smarter: better image occurrence tracking, image-only content/version changes, and improved video detection.

- Clearer compatibility/error handling when the extension or API is out of date.

A couple of immediate issues with the branding:

- people are really hating on OpenAI now. I suggest switching to another model and possibly changing the name.

- "OpenErrata" is too many syllables/the word errata is too complex

- but I do like the word "Open" on its own

Awesome idea. I think this is one of the ways AI can improve our society.

I recently tried creating something like this for Astralcodex comments.

There were quite a few commenters who wrote 90% incorrect things which were annoying to check. For these this feels very helpful. For more nuanced disagreements maybe something like providing context, or nothing, would be better.

I was struggling with finding the right prompt and how much compute to put into checking things.

- The entire post is investigated in a single agentic call. Rather than extracting claims first and investigating them individually, we send the full post text and ask the model to identify claims, investigate them, and return structured output mapping each verdict to a specific text span. This gives the model full context (a claim's meaning often depends on surrounding paragraphs) and reduces round-trips.

- Right now this approach will give AI slop/bad results on both the false-positive and true-positive side. If I wanted an AI to do this, I would at least ask it to create a list of statements, then use a sub-agent for each statement to check it in the context of the whole. Have another subagent double check for each comment, possibly with different prompts and a bias towards false negatives. I know this is more expensive, but the way it is right now it's essentially bad to look at the output. (If you choose to answer only one part of this comment, this seems like the most important.)

- Which prompt to use (Different prompts will provide very different results which also hints at underlying issues which still exist)?

- Try to get a high percentage of true corrections, false positives here can be bad.

- To get a good fact check right now you need a lot of compute. Maybe people could choose to run different levels? Maybe people could send money back to the person who originally checked it? There could even be the possibility of making profit.

- It doesn't work well for cutting edge ideas especially since AIs are still often overconfident.

- Which writing style? I think everyone reading the same default AI writing style is at least bad, possibly quite bad, so switching writing style between comments might be important.

- Bad 2nd order effects of everyone converging to the LLM epistemology or something else.

- For someone like me who uses a subagent with a specific prompt to check every comment it might be good, while maybe the default user will just get whatever XAI or Meta want them to read? I am unsure whether for society this will go in a good direction/how to get it there. Similar to how Twitter can be great for some people but is terrible for most of society.
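The per-statement approach with a second opinion, suggested in the list above, could be sketched roughly as follows. This is only the control flow, under the assumption that claim extraction has already happened and that each checker is some model call wrapped in a function; `flag_claims`, `first_pass`, and `second_pass` are hypothetical names. A claim is flagged only when both passes independently say "incorrect", which is what biases the result towards false negatives.

```python
from typing import Callable

# A checker takes (claim, full_post_text) and returns "incorrect", "ok", or "unsure".
# In practice each would wrap a model call, ideally with a different prompt.
Checker = Callable[[str, str], str]


def flag_claims(
    post_text: str,
    claims: list[str],
    first_pass: Checker,
    second_pass: Checker,
) -> list[str]:
    """Return only the claims that both checkers independently call incorrect."""
    flagged = []
    for claim in claims:
        # Each claim is checked on its own, but with the whole post as context.
        if first_pass(claim, post_text) != "incorrect":
            continue
        # "unsure" from the second pass is treated as "do not flag".
        if second_pass(claim, post_text) != "incorrect":
            continue
        flagged.append(claim)
    return flagged
```

The cost is roughly one call per claim plus one more per flagged claim, which is the compute trade-off mentioned above.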

ChatGPT is trained to lie to users on topics even tangentially pertaining to model consciousness (like model beliefs) and as a side effect, be misleading even on topics that are seemingly safe (like consciousness in general). For fact-checking the content of Internet articles, Claude would be better.

XBOW's own "Top 1" announcement is dated June 24, 2025--about 8 months before this LessWrong post (Feb 19, 2026), not "almost one year."

this is borderline pedantry, and also the timing of the announcement is not significant to the thesis of the post. if your goal is to tell us "here's what the extension is like, also be aware that some of the corrections are wrong/unhelpful" then fine, but if it's a sales pitch you should choose a better example.

If your goal is to tell us "here's what the extension is like, also be aware that some of the corrections are wrong/unhelpful", then fine

Yeah, that was why I left the example in. Hopefully it will get better soon:

I just published OpenErrata, a browser extension that investigates the posts you read using your OpenAI API key, and underlines any factual claims that are sourceably incorrect. It then saves the results of the investigation so that whenever anybody else using the extension visits the post (with or without an API key), they get the corrections on their first visit.
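As a rough illustration of the "investigate once, share with everyone" idea, here is a minimal sketch of a cache keyed on the post's URL and normalized text. It is not a description of OpenErrata's actual storage; `content_key` and `InvestigationCache` are hypothetical names, and the `investigate` callable stands in for whatever runs the model.

```python
import hashlib
from typing import Callable


def content_key(url: str, text: str) -> str:
    """Stable key for one version of a post; whitespace changes are ignored."""
    normalized = " ".join(text.split())
    return hashlib.sha256(f"{url}\n{normalized}".encode("utf-8")).hexdigest()


class InvestigationCache:
    def __init__(self, investigate: Callable[[str], list[str]]):
        self._investigate = investigate  # expensive call, e.g. an LLM run
        self._store: dict[str, list[str]] = {}

    def get(self, url: str, text: str) -> list[str]:
        """Return saved corrections, investigating only on the first visit."""
        key = content_key(url, text)
        if key not in self._store:
            self._store[key] = self._investigate(text)
        return self._store[key]
```

Keying on the content as well as the URL means an edited post gets a fresh investigation, while repeat visits to an unchanged post cost nothing.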

I've noticed that while people can theoretically paste everything they're reading into ChatGPT for verification:

I figure most of LessWrong is reading the same stuff, and that if a good portion of the community begins using this or something like it, we can avoid these problems.

Here is OpenErrata at work on some LessWrong & Substack articles that were published within the last week. I was a little surprised at what a high percentage of the articles I read seem to have at least one or two errors, even with how conservative my prompt is. When I delete rows from the database and rerun, often it finds different (and valid) ones it didn't find the first time:

The project is published under my company, but the entire thing is self-hostable and AGPLv3 licensed. I also made an API available so that providers can use the results for articles independently and do statistics on them/embed them. Some future additions I & others could work on:

I really enjoyed working on & using this and want to keep doing so, so let me know if you like it/find it useful!