Someone posted these quotes in a Slack I'm in... what Ellsberg said to Kissinger:

“Henry, there’s something I would like to tell you, for what it’s worth, something I wish I had been told years ago. You’ve been a consultant for a long time, and you’ve dealt a great deal with top secret information. But you’re about to receive a whole slew of special clearances, maybe fifteen or twenty of them, that are higher than top secret.

“I’ve had a number of these myself, and I’ve known other people who have just acquired them, and I have a pretty good sense of what the effects of receiving these clearances are on a person who didn’t previously know they even existed. And the effects of reading the information that they will make available to you.

[...]

...“In the meantime it will have become very hard for you to learn from anybody who doesn’t have these clearances. Because you’ll be thinking as you listen to them: ‘What would this man be telling me if he knew what I know? Would he be giving me the same advice, or would it totally change his predictions and recommendations?’ And that mental exercise is so torturous that after a while you give it up and just stop listening. I’ve seen this with

Someone else added these quotes from a 1968 article about how the Vietnam war could go so wrong:

...Despite the banishment of the experts, internal doubters and dissenters did indeed appear and persist. Yet as I watched the process, such men were effectively neutralized by a subtle dynamic: the domestication of dissenters. Such "domestication" arose out of a twofold clubbish need: on the one hand, the dissenter's desire to stay aboard; and on the other hand, the nondissenter's conscience. Simply stated, dissent, when recognized, was made to feel at home. On the lowest possible scale of importance, I must confess my own considerable sense of dignity and acceptance (both vital) when my senior White House employer would refer to me as his "favorite dove." Far more significant was the case of the former Undersecretary of State, George Ball. Once Mr. Ball began to express doubts, he was warmly institutionalized: he was encouraged to become the inhouse devil's advocate on Vietnam. The upshot was inevitable: the process of escalation allowed for periodic requests to Mr. Ball to speak his piece; Ball felt good, I assume (he had fought for righteousness); the others felt good (they had given a

I was talking about the immediate parent, not the previous one. Though as secrecy gets ramped up, the effect described in the previous one might set in as well.

I have personal experience feeling captured by this dynamic, yes, and from conversations with other people I get the impression that it was even stronger for many others.

Hard to say how large an effect it has. It definitely creates a significant chilling effect on criticism/dissent. (I think people who were employees alongside me while I was there will attest that I was pretty outspoken... yet I often found myself refraining from saying things that seemed true and important, due to not wanting to rock the boat / lose 'credibility' etc.)

The point about salving the consciences of the majority is interesting and seems true to me as well. I feel like there's definitely a dynamic of 'the dissenters make polite reserved versions of their criticisms, and feel good about themselves for fighting the good fight, and the orthodox listen patiently and then find some justification to proceed as planned, feeling good about themselves for hearing out the dissent.'

I don't know of an easy solution to this problem. Perhaps something to do with regular anonymous surveys? idk.

Rationality has pubs; we need gyms

Consider the difference between a pub and a gym.

You go to a pub with your rationalist friends to:

- hang out

- discuss interesting ideas

- maybe maths a bit in a notebook someone brought

- gossip

- get inspired about the important mission you're all on

- relax

- brainstorm ambitious plans to save the future

- generally have a good time

You go to a gym to:

- exercise

- that is, repeat a particular movement over and over, paying attention to the motion as you go, being very deliberate about using it correctly

- gradually trying new or heavier moves to improve in areas you are weak in

- maybe talk and socialise -- but that is secondary to your primary focus of becoming stronger

- in fact, it is common knowledge that the point is to practice, and you will not get socially punished for trying really hard, or stopping a conversation quickly and then just focusing on your own thing in silence, or making weird noises or grunts, or sweating... in fact, this is all expected

- not necessarily have a good time, but invest in your long-term health, strength and flexibility

One key distinction here is effort.

Going to a bar is low effort. Going to a gym is high effort.

In fact, going to gym requires su...

I edited a transcript of a 1.5h conversation, and it was 10,000 words. That's roughly 1/8 of a book. Realising that a day of conversations can have more verbal content than half a book(!) seems to say something about how useless many conferences are, and how incredibly useful they could be.
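A rough back-of-the-envelope check of that arithmetic, as a minimal sketch. The book length is not stated anywhere above; it is inferred from "roughly 1/8 of a book" and should be read as an assumption, as should the idea that people keep talking at the same rate all day.

```python
# Figures taken from the transcript described above.
TRANSCRIPT_WORDS = 10_000
TRANSCRIPT_HOURS = 1.5

# Assumption: "roughly 1/8 of a book" implies a book of about 80,000 words.
BOOK_WORDS = 8 * TRANSCRIPT_WORDS

words_per_hour = TRANSCRIPT_WORDS / TRANSCRIPT_HOURS


def book_fraction(hours_talking: float) -> float:
    """Fraction of one (assumed 80,000-word) book produced by talking this long."""
    return hours_talking * words_per_hour / BOOK_WORDS


# Hours of conversation needed to match half a book at this speaking rate.
hours_for_half_book = 0.5 * BOOK_WORDS / words_per_hour

print(f"{words_per_hour:.0f} words per hour")
print(f"{hours_for_half_book:.1f} hours of talking for half a book")
```

Under these assumptions, about six hours of conversation already amounts to half a book, so a full conference day would plausibly exceed it.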

It's funny, you're right. But I intuitively read it as "it's January 2020—GPT-3 is not yet released, but it will be in a couple of months. Get ready."

2020 is firmly in my head as the year the scaling revolution started, and only secondarily as the year the COVID-19 pandemic started.

I don't expect it that soon, but I do expect, more likely than not, that there will be a covid-esque fire alarm + rapid upheaval moment.

Someone tried to solve a big schlep of event organizing.

Through this app, you:

- Pledge money when signing up to an event

- Lose it if you don't attend

- Get it back if you attend + a share of the money from all the no-shows

For some reason it uses crypto as the currency. I'm also not sure about the third clause, which seems to incentivise you to want others to no-show to get their deposits.
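To make the incentive concrete, here is a minimal sketch of how such a settlement could work. This is not the app's actual code: the `Signup` and `settle` names are hypothetical, and splitting forfeited deposits equally among attendees is an assumption (the description only says "a share").

```python
from dataclasses import dataclass


@dataclass
class Signup:
    name: str
    deposit: float
    attended: bool


def settle(signups: list[Signup]) -> dict[str, float]:
    """Return each person's payout after the event.

    Assumption: forfeited deposits are split equally among attendees.
    """
    attendees = [s for s in signups if s.attended]
    forfeited = sum(s.deposit for s in signups if not s.attended)

    if not attendees:
        # Assumption: if nobody shows up, nobody is paid out.
        # What the real app does in this case is unknown.
        return {s.name: 0.0 for s in signups}

    bonus = forfeited / len(attendees)
    return {
        s.name: (s.deposit + bonus if s.attended else 0.0)
        for s in signups
    }


payouts = settle([
    Signup("a", 10.0, attended=True),
    Signup("b", 10.0, attended=True),
    Signup("c", 10.0, attended=False),
])
print(payouts)
```

In this toy case each attendee walks away with more than they pledged, and the bonus grows with every additional no-show, which is the incentive worry mentioned above.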

Anyway, I've heard people wanting something like this to exist and might try it myself at some future event I'll organize.

H/T Vitalik Buterin's Twitter

Blackberries and bananas

Here's a simple metaphor I've recently been using in some double-cruxes about intellectual infrastructure and tools, with Eli Tyre, Ozzie Gooen, and Richard Ngo.

Short people can pick blackberries. Tall people can pick both blackberries and bananas. We can give short people lots of training to make them able to pick blackberries faster, but no amount of blackberries can replace a banana if you're trying to make a banana split.

Similarly, making progress in a pre-paradigmatic field might require x number of key insights. But are those insights bananas, which can only be picked by geniuses, whereas ordinary researchers can only get us blackberries?

Or is this metaphor false, such that with a certain number of non-geniuses + excellent tools, we can actually replicate geniuses?

This has implications for the impact of rationality training and internet intellectual infrastructure, as well as what versions of those endeavours are most promising to focus on.

Had a good conversation with elityre today. Two nuggets of insight:

- If you want to level up, find people who seem mysteriously better than you at something, and try to learn from them.

- Work on projects which are plausibly crucial. That is, projects such that if we look back from a win scenario, it's not too implausible we'd say they were part of the reason.

I made a Foretold notebook for predicting which posts will end up in the Best of 2018 book, following the LessWrong review.

You can submit your own predictions as well.

At some point I might write a longer post explaining why I think having something like "futures markets" on these things can create a more "efficient market" for content.

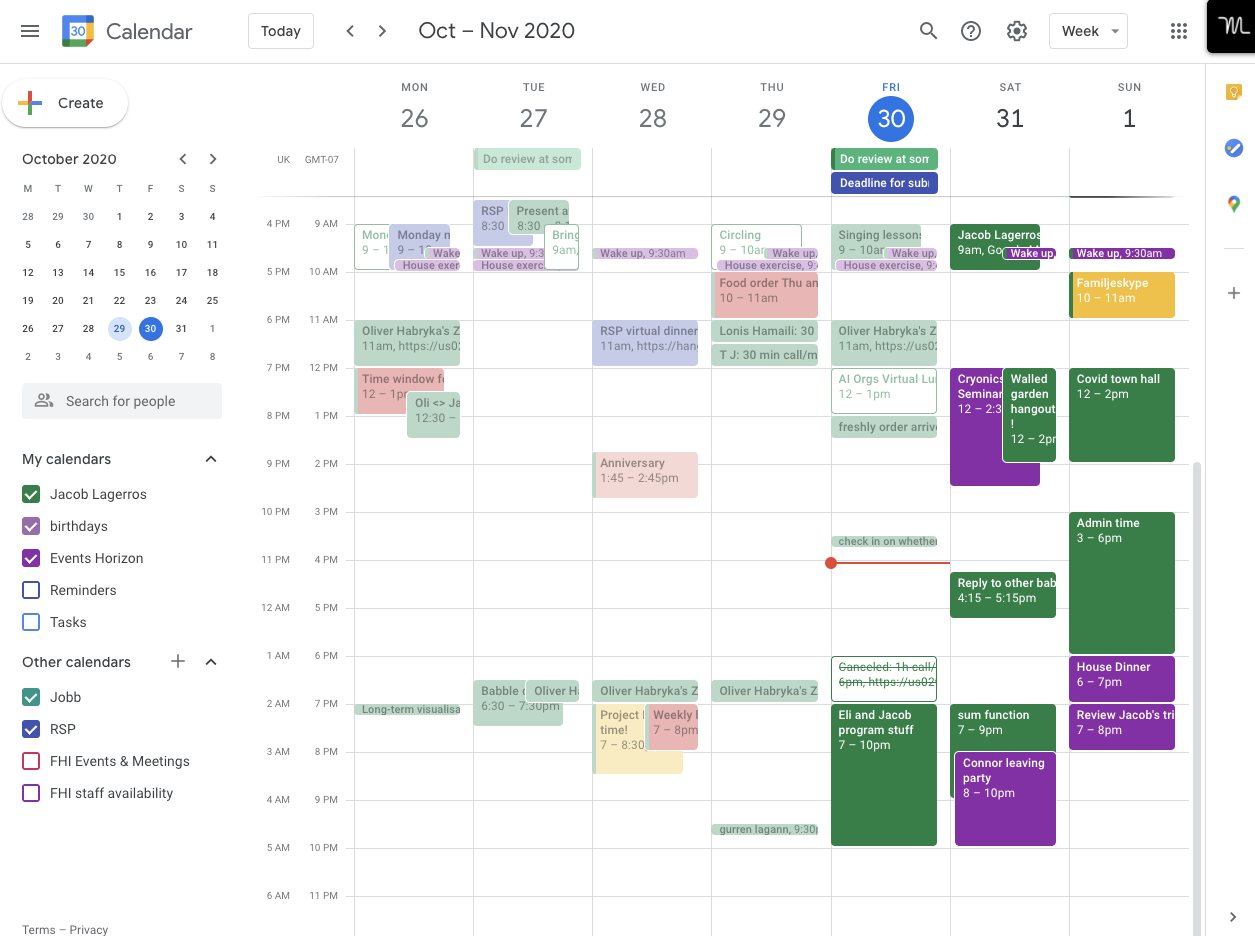

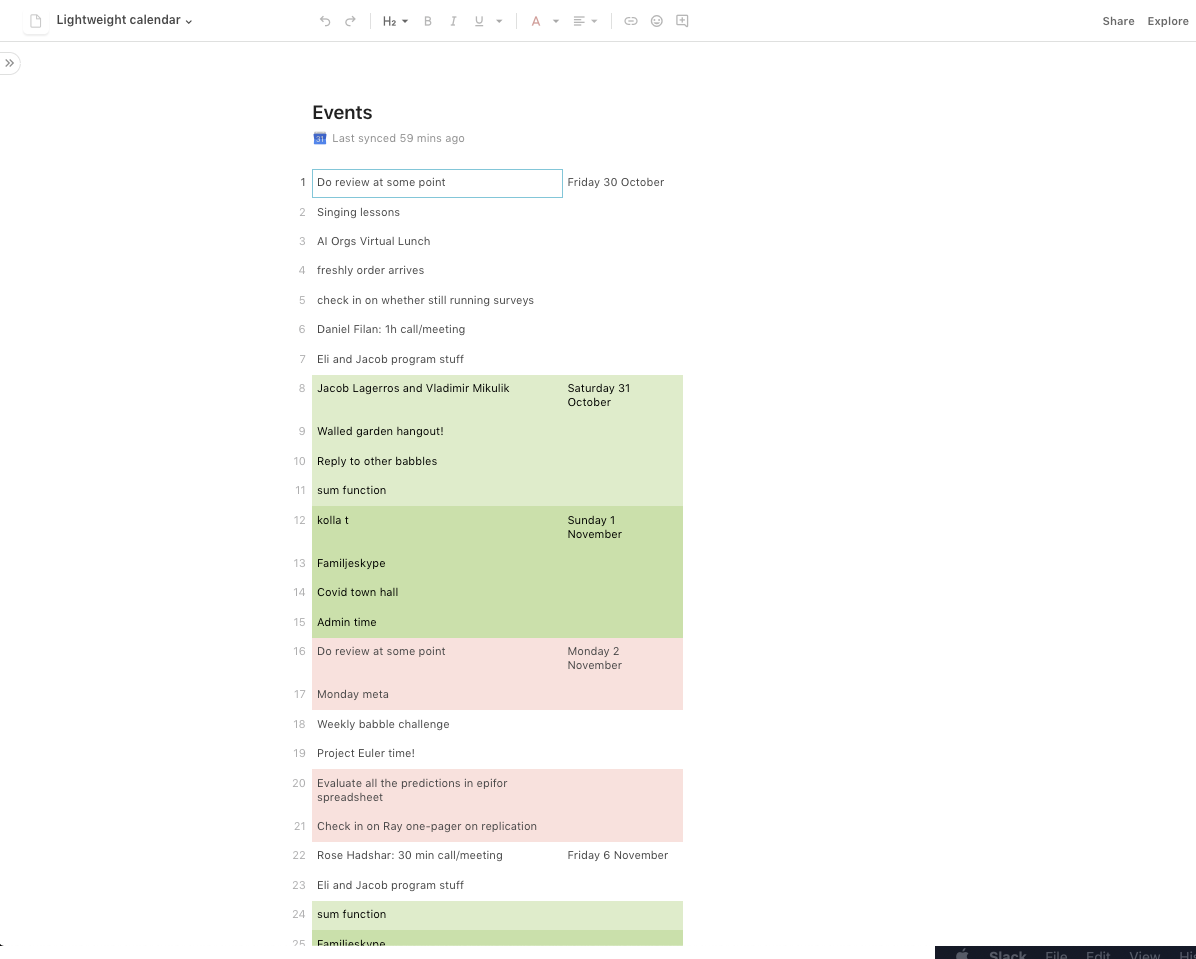

Anyone have good recommendations for a lightweight, minimalist calendar app?

Google calendar is feeling so bloated.

I had a quick stab at building something more lightweight in Coda, just for myself. Compare these two:

I get that G Calendar is trying to solve a bunch of problems for lots of different users. But I feel I can't be the only one who'd want something more spacious and calm.

I saw an ad for a new kind of pant: stylish as suit pants, but flexible as sweatpants. I didn't have time to order them now. But I saved the link in a new tab in my clothes database -- an Airtable that tracks all the clothes I own.

This crystallised some thoughts about external systems that have been brewing at the back of my mind. In particular, about the gears-level principles that make some of them useful, and powerful,

When I say "external", I am pointing to things like spreadsheets, apps, databases, organisations, notebooks, institutions, room layouts... and distinguishing those from minds, thoughts and habits. (Though this distinction isn't exact, as will be clear below, and some of these ideas are at an early stage.)

Externalising systems allows the following benefits...

1. Gathering answers to unsearchable queries

There are often things I want lists of, which are very hard to Google or research. For example:

- List of groundbreaking discoveries that seem trivial in hindsight

- List of different kinds of confusion, identified by their phenomenological qualia

- List of good-faith arguments which are elaborate and rigorous, though uncertain, and which turned out to be

I'm confused about the effects of the internet on social groups. [1]

On the one hand... the internet enables much larger social groups (e.g. reddit communities with tens of thousands of members) and much larger circles of social influence (e.g. instagram celebrities having millions of followers). Both of these will tend to display network effects. However, A) social status is zero-sum, and B) there are diminishing returns to the health benefits of social status [2]. This suggests that on the margin moving from a very large number of small communities (e.g. bowling clubs) to a smaller number of larger communities (e.g. bowling YouTubers) will be net negative, because the benefits of status gains at the top will diminish faster than the harms from losses at the median.

On the other hand... the internet enables much more niched social groups. Ceteris paribus, this suggests it should enable more groups and higher quality groups.

I don't know how to weigh these effects, but currently expect the former to be a fair bit larger.

[1] In addition to being confused I'm also uncertain, due to lack of data I could obtain in a few hours, probably.

[2] In contrast to the psychological benefits of status, I think social capital can sometimes have increasing returns. One mechanism: if you grow your network, the number of connections between units in your network grows even faster.
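A minimal sketch of the mechanism in [2], assuming "connections between units" means possible pairwise links between the people in your network (the `pairwise_connections` name is just for illustration):

```python
def pairwise_connections(n: int) -> int:
    """Number of distinct pairs among n people: n * (n - 1) / 2."""
    return n * (n - 1) // 2


# Doubling the network size roughly quadruples the possible connections.
for size in (10, 20, 40):
    print(size, pairwise_connections(size))
```

So going from 10 to 20 contacts takes the number of possible pairs from 45 to 190, which is the sense in which connections grow faster than the network itself.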

Something interesting happens when one draws on a whiteboard ⬜✍️while talking.

Even drawing 🌀an arbitrary squiggle while making a point makes me more likely to remember it, whereas points made without squiggles are more easily forgotten.

This is a powerful observation.

We can chunk complex ideas into simple pointers.

This means I can use 2d surfaces as a thinking tool in a new way. I don't have to process content by extending strings over time, and forcibly feeding an exact trail of thought into my mind by navigating with my eyes. Instead I can distill the entire scenario into 🔭a single, manageable, overviewable whole -- and do so in a way which leaves room for my own trails and 🕸️networks of thought.

At a glance I remember what was said, without having to spend mental effort keeping track of that. This allows me to focus more fully on what's important.

In the same way, I've started to like using emojis in 😃📄essays and other documents. They feel like a spiritual counterpart of whiteboard squiggles.

I'm quite excited about this. In future I intend to 🧪experiment more with it.

(Potential reason for confusion: "don't endorse it" in habryka's first comment could be interpreted as not endorsing "this comment", when habryka actually said he didn't endorse his emotional reaction to the comment.)

If you're building something and people's first response is "Doesn't this already exist?" that might be a good sign.

It's hard to find things that actually are good (as opposed to just seeming good), and if you succeed, there ought to be some story for why no one else got there first.

Sometimes that story might be:

People want X. A thing existed which called itself "X", so everyone assumed X already existed. If you look closely enough, "X" is actually pretty different from X, but from a distance they blend together, causing people looking for ideas to look elsewhere.

There are different kinds of information institutions.

- Info-generating (e.g. Science, ...)

- Info-capturing (e.g. short-form? prediction markets?)

- Info-sharing (e.g. postal services, newspapers, social platforms, language regulators, ...)

- Info-preserving (e.g. libraries, archives, religions, Chesterton's fence-norms, ...)

- Info-aggregating (e.g. prediction markets, distill.pub...)

I hadn't considered the use of info-capturing institutions until recently, and in particular how prediction sites might help with this. If you have an insight or an update, the...

Anyone know folks working on semiconductors in Taiwan and Abu Dhabi, or on fiber at Tata Industries in Mumbai?

I'm currently travelling around the world and talking to folks about various kinds of AI infrastructure, and looking for recommendations of folks to meet!

If so, feel free to DM me!

(If you don't know me, I'm a dev here on LessWrong and was also part of founding Lightcone Infrastructure.)

Have you been meaning to buy the LessWrong Books, but not been able to due to financial reasons?

Then I might have a solution for you.

Whenever we do a user interview with someone, they get a book set for free. Now one of our user interviewees asked that instead their set be given to someone who otherwise couldn't afford it.

So, well, we've got one free set up for grabs!

If you're interested, just send me a private message and briefly describe why getting the book was financially prohibitive to you, and I might be able to send a set your way.

Very uncertain about this, but makes an interesting claim about a relation between temperature, caffeine and RSI: https://codewithoutrules.com/2016/11/18/rsi-solution/

What it says on the tin.