These numbers seem reasonable. My median for "full automation of AI R&D" is ~early 2031 and my 25th percentile is ~mid 2028.

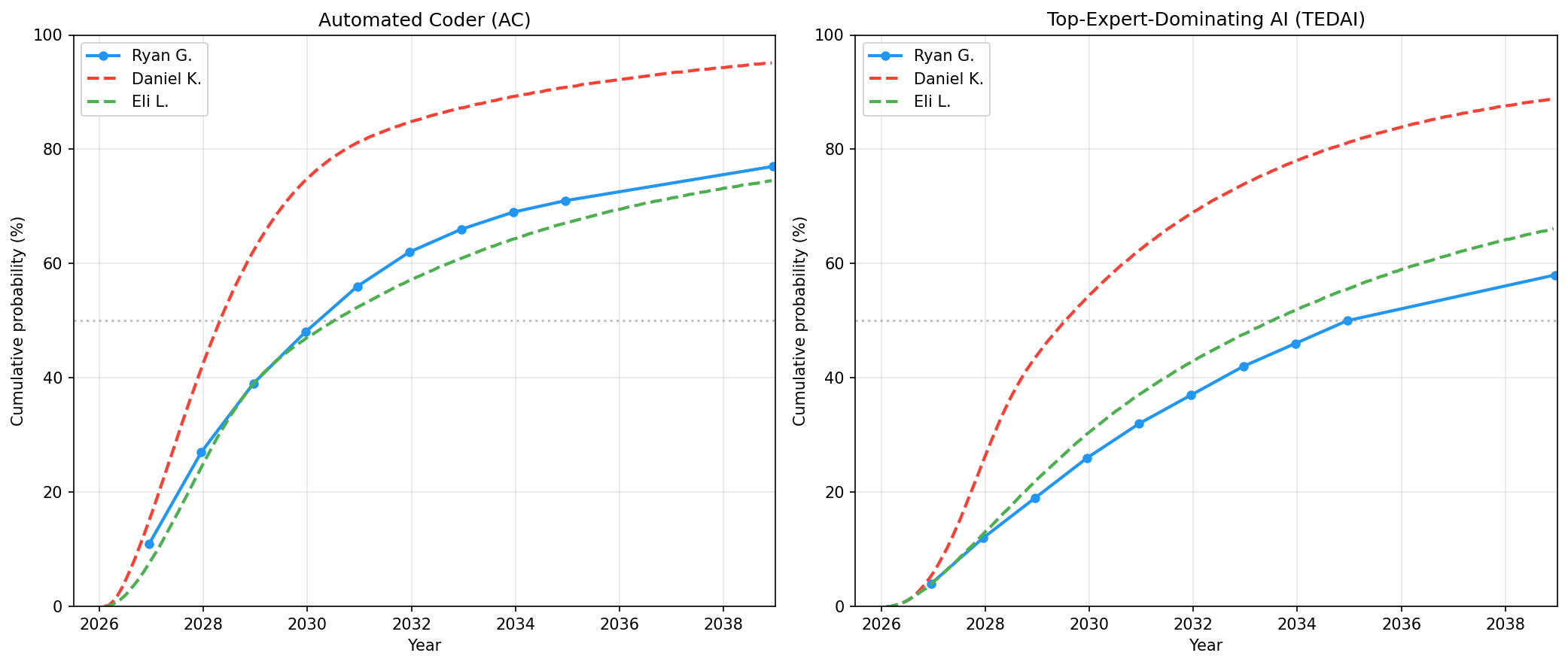

I'm between Daniel's views (more aggressive) and Eli's views. (My superintelligence median is closer to Eli's, my superhuman coder median is closer to Daniel's. My TEDAI median is probably closer to Eli's.)

Ok, on closer examination of my numbers and views, what I said here doesn't accurately reflect my current views. (This is mostly driven by my thinking more carefully about what my views should be and updating towards slightly longer timelines.)

I'm actually pretty close to Eli on both AC and TEDAI, with a shorter timeline to AC and a longer timeline to TEDAI. See here for more details.

(My views aren't that stable on reflection as is apparent here...)

Compared to your post-GPT-5 update, it reads like you have shorter timelines than you did at the start of 2025? (This contrasts with the AI Futures Model authors, whose timelines are now between their initial and Q4 numbers.)

What doubling rate, and what deployment time from 1-month 80% reliability to full AI automation, are you now assuming? Naively, if I use an 80%-reliability 125-day doubling time (which is the current trendline through Opus 4.6 using a logistic fit with a fixed slope), that would get us to a 1-month 80% time horizon in Feb 2029. That's only about 6 months sooner than in your GPT-5 update post. And yet, you've moved back your median for full AI automation by 2 years. Are you now assuming a faster jump from 1 month to full AI automation, or an even faster doubling-time regime from 2025?
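The naive extrapolation in this question can be sketched as follows, assuming a constant doubling time so that the 80% time horizon grows as h(t) = h₀ · 2^(t/d). The function name, the ~173-hour work-month (40 h/week × 52 weeks / 12 months), and the starting horizon in the usage line are illustrative assumptions, not METR's conventions or anything measured for Opus 4.6.

```python
import math
from datetime import date, timedelta


def date_horizon_reached(start: date, start_hours: float, target_hours: float,
                         doubling_days: float) -> date:
    """Date at which an exponentially growing time horizon reaches target_hours.

    Assumes a constant doubling time: h(t) = start_hours * 2 ** (t / doubling_days).
    """
    if start_hours <= 0 or target_hours <= 0 or doubling_days <= 0:
        raise ValueError("inputs must be positive")
    # Number of doublings still needed to go from start_hours to target_hours.
    doublings = math.log2(target_hours / start_hours)
    return start + timedelta(days=doublings * doubling_days)


# Hypothetical convention: one work-month = 40 h/week * 52 weeks / 12 months (~173 hours).
WORK_MONTH_HOURS = 40 * 52 / 12

# Illustrative inputs only (not a measured value): a 1-hour 80% horizon on 2026-02-01,
# doubling every 125 days.
print(date_horizon_reached(date(2026, 2, 1), 1.0, WORK_MONTH_HOURS, 125))
```

Because the answer depends on log2(target / start), halving the assumed starting horizon only pushes the date out by one doubling time (125 days here).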

I've updated towards faster progress, more acceleration from AIs, and superexponential time horizon progress earlier.

I plan on saying more in a new post.

I say more in this post. (And see this other comment.)

Thanks for sharing your updated forecasts.

Claude Code reached an annualized revenue of over $2.5 billion in early February, just 9 months after its release. Anthropic’s trend of 10xing annualized revenue each year has continued into the $10B range.

How would you forecast OAI and Anthropic annualized revenue by EOY 2026?

We've thought a bit about this question but don't have a confident answer. It's an interesting case of "The Gods of Straight Lines on Graphs" vs. common sense and napkin math. GOSLOG predicts that Anthropic will be at $100B ARR and OpenAI at a mere $60B ARR. But it's kinda hard to imagine Anthropic overtaking OpenAI so much despite having less compute; they'd have to either eat their seed corn (sacrificing research and training compute) or get much higher margins. In fact, it seems like margins will probably have to go up in general? So maybe revenue growth rates will slow down, or at least maybe Anthropic's will.
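GOSLOG's prediction amounts to extending a straight line on a log-scale revenue plot. A minimal sketch, assuming a constant annual growth multiple; the function name and the $10B starting point are hypothetical, chosen to match the "10x per year into the $10B range" description rather than any reported figure.

```python
from datetime import date


def extrapolate_arr(arr_now: float, now: date, target: date, growth_per_year: float) -> float:
    """Extend a straight line on a log-scale ARR plot.

    A constant annual multiple (e.g. 10x per year) is a straight line in log(ARR) vs. time,
    so ARR(target) = ARR(now) * growth_per_year ** (years elapsed).
    """
    if arr_now <= 0 or growth_per_year <= 0:
        raise ValueError("arr_now and growth_per_year must be positive")
    years = (target - now).days / 365.25  # average year length, leap years included
    return arr_now * growth_per_year ** years


# Hypothetical inputs: $10B ARR one year before the target date, on a 10x-per-year trend.
projected = extrapolate_arr(10e9, date(2025, 12, 31), date(2026, 12, 31), 10.0)
print(f"${projected / 1e9:.0f}B")
```

The sketch knows nothing about compute or margins, which is exactly the common-sense objection above: it projects whatever the line says.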

(The napkin math based on 'but is the addressable market even big enough' seems to support ginormous growth in revenue, or at least is consistent with it. Advertising alone could potentially make $100B ARR for OpenAI, for example. So could coding agents.)

Thank you for posting. In terms of "information not in this post that would be useful", a measure of "how sensitive are these timelines to various potential events" seems useful.

E.g., the post implies:

high-ish sensitivity to "general impressiveness of new models" (e.g. Opus 4.7 (or Mythos/Capybara), GPT5.5),

low but non-zero sensitivity to "opinions of people in labs about their future output".

Those implications are basically correct. It sounds like you got the right idea then, from our words. Is it that you want a more quantitative measure?

I would say the comment had two purposes:

(i) if my implications were wrong/misleading, I would be corrected (thank you!),

(ii) to encourage future posts on this/related topics to think hard about the sensitivity of the world-model to different types of future information.

I wouldn't say I want a more quantitative measure. Although quantification is important, trying to pin things down too precisely can turn the written content into "the thing that's easier to write in a precise way" rather than "what we actually believe".

Ah, I misread your original question; I thought you were talking about this most recent update in particular, whereas it seems you are more interested in future updates. Yeah, the set of things that could update us in the future is bigger than the set of things that did update us this time... let me think... An obvious one is, people will hopefully be trying to extend the METR graph or more generally collect evidence about AI ability to do real-world coding tasks that would take humans weeks, months, or years. If they find that the METR trend is very obviously going superexponential, that would be an update towards much shorter timelines.
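One way to make "going superexponential" concrete is to compare a constant doubling time against doubling times that each shrink by a fixed fraction. A minimal sketch; the `shrink` parameter and the function name are hypothetical and not taken from the AI Futures Model.

```python
def days_to_target(doublings_needed: float, first_doubling_days: float,
                   shrink: float = 0.0) -> float:
    """Total days needed for `doublings_needed` more doublings of the time horizon.

    shrink == 0: plain exponential trend (every doubling takes first_doubling_days).
    shrink > 0:  superexponential trend (each doubling takes (1 - shrink) times
                 as long as the previous one).
    """
    if not 0.0 <= shrink < 1.0:
        raise ValueError("shrink must be in [0, 1)")
    if shrink == 0.0:
        return doublings_needed * first_doubling_days
    q = 1.0 - shrink
    # Geometric series: d0 * (1 + q + ... + q**(n - 1)) = d0 * (1 - q**n) / (1 - q)
    return first_doubling_days * (1.0 - q ** doublings_needed) / shrink


# Illustrative: 7 more doublings starting from a 125-day doubling time.
print(days_to_target(7, 125))       # constant doubling time
print(days_to_target(7, 125, 0.1))  # hypothetical: each doubling 10% faster than the last
```

With shrink > 0 the total is always below first_doubling_days / shrink, however many doublings are needed, which is why an obviously superexponential trend would imply much shorter timelines to very long horizons.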

There are other ways of trying to guess at the AC arrival date besides the METR trend; we'll be looking at those and seeing how solid they start looking. Most notably, uplift studies. If we had a solid trend to extrapolate of the form "Here's how much uplift people are getting when they use AI for coding" that would be significantly better than the METR trend.

Revenue might also work, e.g. if AI for coding seems like it'll soon surpass the combined income of all software engineers.

Does the definition of TED-AI include visual-spatial cognitive tasks?

For example I really don't see AI models being equal to expert mechanical engineers or architects anytime soon.

So to be clear, I see from the TED-AI charts that you're expecting a ~50% probability that by July 2029, top AI systems will be equal to or better than a human expert mechanical engineer at all cognitive tasks.

So does that mean you expect an AI system to be able to design a complex mechanical assembly with many moving parts and hundreds of components in CAD, that can be easily created and assembled, works as intended, and is overall just as good or better than what human expert mechanical engineers can design?

For example, do you think there is a 50% chance that, by July 2029, AI can design and program a robot that wins a university-level robotics competition? (Assume all the physical/non-cognitive tasks are completed perfectly, and a few redesign iterations are allowed, because even human experts need to iterate.)

Thanks for posting, I find these updates very interesting. How will you know when the Automated Coder milestone is reached? It seems like there won't be a point where it's clear AI companies would prefer to lay off their workers rather than stop using AI. Is there also a chance that AI could 10x or 100x development speeds before it is possible for the software engineers to be laid off (if, for example, one of the things currently holding it back can't be solved)? If so, would you consider that automated coding?

Below, we include a plot

Registering that I'd love to see a version of this plot that displays how your probability distributions over the date of AGI arrival have changed over time. You probably won't, understandably, but I'd still appreciate it. Or even just the info, like a list of values of

Editing for clarity here: METR's agents are probably substantially behind the agent harnesses within the labs. How much are you discounting for the gap between METR's agent and what's running internally in the labs?

The update notes that Daniel revised his estimate partly due to the impressiveness of recent models. One asymmetry worth naming: the forecasting literature on AI timelines has gotten increasingly rigorous about capability measurement. The question of what's happening on the inside of the systems being measured hasn't received comparable rigor. That gap probably matters more as the timelines compress.

We’re mostly focused on research and writing for our next big scenario, but we’re also continuing to think about AI timelines and takeoff speeds, monitoring the evidence as it comes in, and adjusting our expectations accordingly. We’re tentatively planning on making quarterly updates to our timelines and takeoff forecasts. Since we published the AI Futures Model 3 months ago, we’ve updated towards shorter timelines.

Daniel’s Automated Coder (AC) median has moved from late 2029 to mid 2028, and Eli’s forecast has moved a similar amount. The AC milestone is the point at which an AGI company would rather lay off all of their human software engineers than stop using AIs for software engineering.

The reasons behind this change include:¹

In short, progress in agentic coding has been faster than we expected over the last 3-5 months. The METR coding time horizon trend has its flaws, but we still consider it the best individual piece of evidence for forecasting coding automation. On that metric, growth has continued at a rapid pace.

Meanwhile, in the real world, there may have been an even bigger shift; coding agents have exploded in usefulness and popularity. Claude Code reached an annualized revenue of over $2.5 billion in early February, just 9 months after its release. Anthropic’s trend of 10xing annualized revenue each year has continued into the $10B range.

Annualized revenue of AGI companies over time. Annualized revenue is revenue over the last month times 12. (source)
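The caption's definition, and the monthly growth rate implied by a "10x per year" trend, can be written as a small sketch. The function names are hypothetical, and the $1B month is an illustrative input.

```python
def annualized_revenue(last_month_revenue: float) -> float:
    """Annualized revenue as defined in the caption: the last month's revenue times 12."""
    return last_month_revenue * 12


def monthly_growth_factor(annual_multiple: float) -> float:
    """Constant month-over-month factor implied by an annual multiple such as 10x per year."""
    return annual_multiple ** (1 / 12)


# Hypothetical month: $1B of revenue annualizes to $12B.
print(annualized_revenue(1e9))
# A 10x-per-year trend corresponds to roughly 21% growth per month.
print(f"{monthly_growth_factor(10.0) - 1:.1%}")
```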

Additionally, according to our analysis of AI 2027’s predictions, things seem close to being on track; if events in reality continue to go roughly 65% as fast as they go in AI 2027, then AC will be achieved in 2028.
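The "65% as fast" comparison can be read as stretching the scenario's timeline: if reality moves at a fraction p of the scenario's pace, a milestone the scenario reaches Δ after its start arrives Δ/p after that start in reality. A minimal sketch under that linear-stretch assumption; the function name and the dates in the usage line are hypothetical, not AI 2027's actual milestone dates.

```python
from datetime import date, timedelta


def real_world_date(scenario_start: date, scenario_event: date, pace: float) -> date:
    """Map a scenario milestone to a real-world date when reality runs at `pace` x the scenario.

    pace = 1.0 keeps the scenario's schedule; pace = 0.65 means every stretch of
    scenario time takes 1 / 0.65 (about 1.54) times as long in reality.
    """
    if pace <= 0:
        raise ValueError("pace must be positive")
    elapsed_days = (scenario_event - scenario_start).days
    return scenario_start + timedelta(days=elapsed_days / pace)


# Hypothetical: a milestone two scenario-years after the start, with reality at 65% pace.
print(real_world_date(date(2025, 1, 1), date(2027, 1, 1), 0.65))
```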

Finally, some AI company researchers that we respect continue to say that automated AI R&D is coming soon; sooner, in fact, than we ourselves think. Rather than walking back their predictions, they are doubling down, both in public and in private discussions. While we don’t put too much weight on such claims, noting that many other researchers have longer timelines, it does count for something.²

The bottom line result of our updates is to shift Daniel’s Automated Coder (AC) median from late 2029 to mid 2028, and to shift Eli’s from early 2032 to mid 2030.

Our medians for Top-Expert-Dominating AI (TED-AI) similarly shifted about 1.5 years sooner. A TED-AI is an AI that is at least as good as top human experts at virtually all cognitive tasks.

Daniel’s latest forecasts compared to his previous ones. View these forecasts here.

Eli’s latest forecasts compared to his previous ones. View these forecasts here.

Below, we include a plot and table that extend our analysis of how our views have changed since publishing AI 2027. When we refer to AGI in the plot and table below, we mean to use the TED-AI definition above, i.e. an AI that is at least as good as top human experts at virtually all cognitive tasks.

Underlying data here.

As always, on the AI Futures Model landing page, you can input your preferred parameter values to explore different possible futures.

1

Additional, more minor changes include: updating our estimate of current parallel coding uplift due to the passage of time, and minor changes to Daniel’s takeoff parameters that make his predictions slightly faster.

2

Imagine if, by contrast, no one at the AI companies thought they could get to AC by 2029. That would be a pretty good reason to think that AC won’t happen by 2029. So, the existence of some researchers who expect AC by then is some evidence (though far from conclusive) that it will.
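The argument in this footnote is Bayes' rule in odds form: observing researchers who expect AC by 2029 multiplies the odds of AC by 2029 by the likelihood ratio P(observation | AC by 2029) / P(observation | no AC by 2029). "Some evidence, though far from conclusive" corresponds to a ratio modestly above 1. A minimal sketch; the function name and the prior and ratio in the usage line are made-up numbers, not our forecasts.

```python
def posterior_probability(prior: float, likelihood_ratio: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio."""
    if not 0.0 < prior < 1.0 or likelihood_ratio <= 0:
        raise ValueError("prior must be in (0, 1) and likelihood_ratio must be positive")
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical: a 30% prior and evidence 1.5x likelier if AC arrives by 2029.
print(posterior_probability(0.3, 1.5))
```

A ratio of 1 leaves the prior unchanged, and the contrast case in the footnote (no one at the companies expecting AC by 2029) would correspond to a ratio below 1.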