This was an impressive demonstation of Claude for interviews. Was this one take?

(Also what prompt did you use? I like how your Claude speaks.)

There was some selection of branches, and one pass of post-processing.

It was after ˜30 pages of a different conversation about AI and LLM introspection, so I don't expect the prompt alone will elicit the "same Claude". Start of this conversation was

Thanks! Now, I would like to switch to a slightly different topic: my AI safety oriented research on hierarchical agency. I would like you to role-play an inquisitive, curious interview partner, who aims to understand what I mean, and often tries to check understanding using paraphrasing, giving examples, and similar techniques. In some sense you can think about my answers as trying to steer some thought process you (or the reader) does, but hoping you figure out a lot of things yourself. I hope the transcript of conversation in edited form could be published at ... and read by ...

Overall my guess is this improves clarity a bit and dilutes how much thinking per character there is, creating somewhat less compressed representation. My natural style is probably on the margin too dense / hard to parse, so I think the result is useful.

Do we have a LessWrong tag for "hierarchical agency" or "multi-scale alignment" or something? Should I make one?

Ah. Now I understand why you're going this direction.

I think a single human mind is modeled very poorly as a composite of multiple agents.

This notion is far more popular with computer scientists than with neuroscientists. We've known about it since Minsky and think about it; it just doesn't seem to mostly be the case.

Sure you can model it that way, but it's not doing much useful work.

I expect the same of our first AGIs as foundation model agents. They will have separate components, but those will not be well-modeled as agents. And they will have different capabilities and different tendencies, but neither of those are particularly agent-y either.

I guess the devil is in the details, and you might come up with a really useful analysis using the metaphor of subagents. But it seems like an inefficient direction.

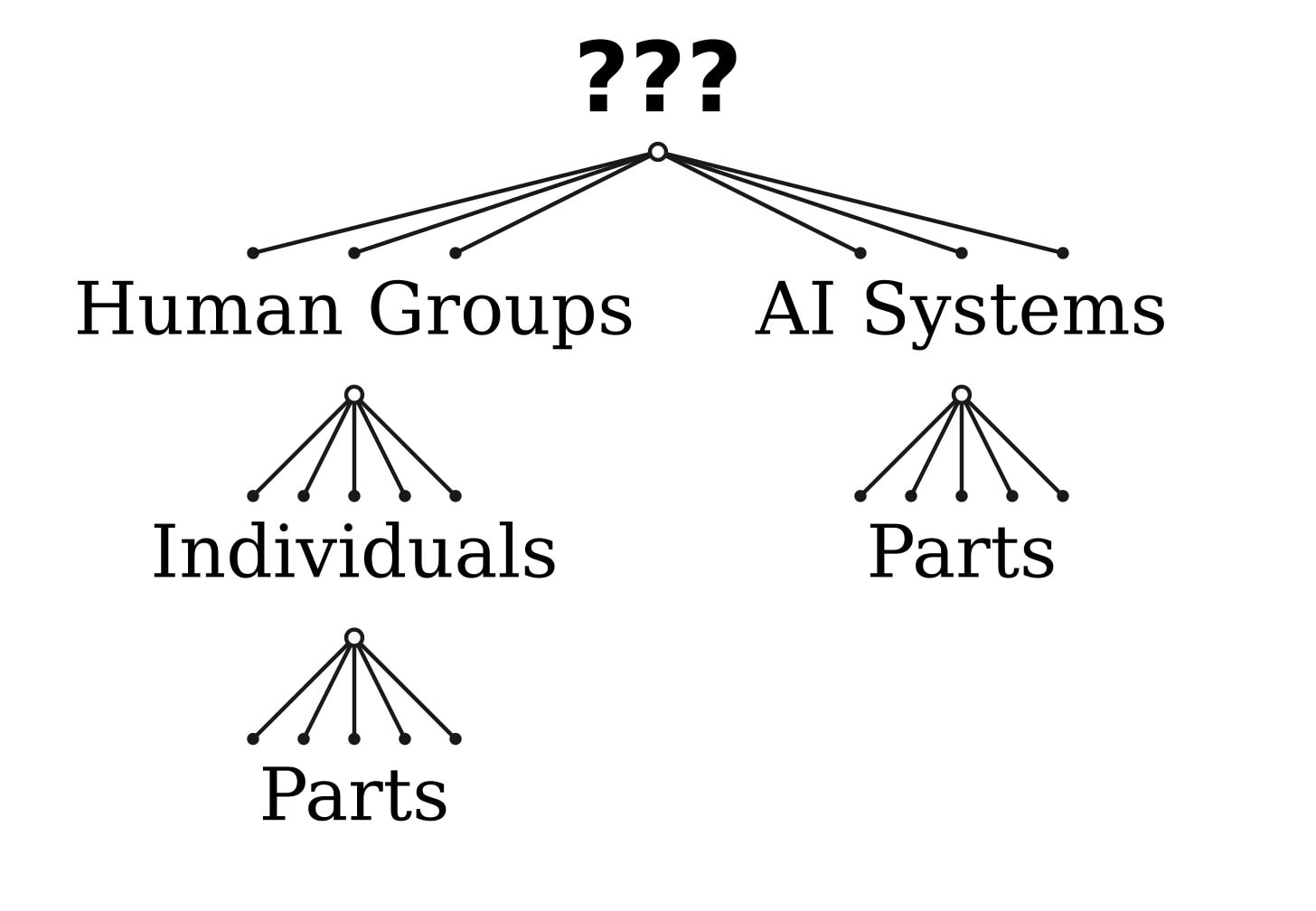

In this post Jan Kulveit calls for creating a theory of "hierarchical agency", i.e. a theory that talks about agents composed of agents, which might themselves be composed of agents etc.

The form of the post is a dialogue between Kulveit and Claude (the AI). I don't like this format. I think that dialogues are a bad format in general, disorganized and not skimming friendly. The only case where IMO dialogues are defensible, is when it's a real dialogue: real people with different world-views that are trying to bridge and/or argue their differences.

Now, about the content. I agree with Kulveit that multi-agency is important. I'm not entirely sold on the importance of the "hierarchical agency" frame, but I agree that "when can a system of agents be regarded as a single agent" seems like a question that should be answered. At the very least, a certain type of answer to this question might relieve of us of the need to worry about "what if humans are multi-agents" in the context of alignment (because, arguably, it would be possible to just regard humans as uni-agents anyway).

After reading this post, I came up with the following (extremely simplistic) toy model for hierarchical agency.

Let be the set of possible decisions an agent can make, the set of "possible worlds", the set of "outcomes" and the (known) process by which outcomes are generated. Then, an agent that makes decision can be ascribed the belief and the utility function when is the unique maximum of over . Some decisions cannot be ascribed "intent" at all: for example, if then be be ascribed intent iff is an exposed point of the convex hull of the image of . (See also)

We can now consider a system of agents with decision sets and a process . For each set , we can define and , and then is defined in the obvious way. We can then ask which agent sets have "collective intent" and which don't.

To give a simple example, let , , and is defined in the obvious way, where corresponds to flipping a fair coin to decide between and . Then, if both agents choose then they have intent individually (we can think of them as playing matching pennies, with each agent believing the other one's action to depend on their own action), but not collectively. On the other hand, if they play a pure strategy that they have collective intent as well.

Extending this into a theory that fully engages with all relevant aspects of the problem would require incorporating infra-Bayesianism, Formal Computational Realism, possibly some form of the Algorithmic Descriptive Agency Measure etc, and more generally, first developing the theory of uni-agents. But maybe starting from the "hierarchical agency" end can be useful as well.

I strongly support you using this format if it helps you share your thinking. It sounds like we wouldn't be seeing this any time soon without the interview format. It's interesting and I might try it. And I encourage others to do so if helps them share sooner or more efficiently.

Along those lines, I strongly encourage any sort of AI-assisted writing as long as the central ideas are human-generated or at least thoroughly thought-through and endorsed by the human posting them.

This post and every post longer than two paragraphs would really benefit from some sort of summary or TLDR so people can prioritize properly.

Questions/thoughts on the comment posted separately.

Are you familiar with Davidad's program working on compositional world modeling? (The linked notes are from before the program was launched, there is ongoing work on the topic.)

The reason I ask is because embedded agents and agents in multi-agent settings should need compositional world models that include models of themselves and other agents, which implies that hierarchical agency is included in what they would need to solve.

It also relates closely to work Vanessa is doing (as an "ARIA Creator") in learning theoretic AI, related to what she has called "Frugal Compositional Languages" and see this work by @alcatal - though I understand both are not yet addressing on multi-agent world models, nor is it explicitly about modeling the agents themselves in a compositional / embedded agent way, though those are presumably desiderata.

In the section about existing theories that could be related I was missing Luhmann's Social Systems theory. It is not a mathematical theory but otherwise fits the desiderata. This is what o1-preview says about it (after being fed the above dialog up to the theories suggested by Claude:

Introducing Luhmann's Social Systems Theory into our discussion is an intriguing idea. Let's explore how it might help us address the challenge of modeling hierarchical agency with a suitable mathematical formalism.

Overview of Luhmann's Social Systems Theory:

Niklas Luhmann's theory conceptualizes society as a complex set of self-referential social systems composed not of individuals or actions, but of communications. In his framework:

- Autopoietic Systems: Social systems are autopoietic, meaning they are self-producing and maintain their boundaries through their own operations. They continuously reproduce the elements (communications) that constitute them.

- Functional Differentiation: Society is differentiated into various subsystems (e.g., economy, law, politics), each operating based on its own binary code (profit/non-profit, legal/illegal, power/no power).

- Communication as the Fundamental Unit: Communications are the basic elements, and meaning is generated through the network of communications within a system.

- Operative Closure and Cognitive Openness: Systems are operationally closed—they can only refer to themselves—but cognitively open, as they observe and are influenced by their environment.

Relating Luhmann's Theory to Hierarchical Agency:

- Type Consistency Across Levels:

- Agents as Systems: If we consider both subagents and superagents as autopoietic systems of communications, we maintain type consistency. Both levels are constituted by the same fundamental processes.

- Scale-Free Structure: Since Luhmann's theory doesn't privilege any particular scale, it aligns with your desideratum for a scale-free formalism.

- Expressiveness for Real-World Situations:

- Conflict and Integration: Luhmann's concept of structural coupling explains how different systems interact and influence each other without losing their autonomy. This could model conflicts between layers or how a superagent gains agency at the expense of subagents.

- Emergent Properties: The emergent nature of social systems in Luhmann's theory parallels how superagents might exhibit properties not reducible to their subagents.

- Representation of Intentionality:

- System Intentionality: While individual intentions are abstracted away, systems exhibit purposive behavior through their operations. This could be adapted to represent the goals and beliefs of agents at different levels.

- Communication of Intentions: Intentionality can be embedded in the communications that constitute the system, allowing us to model beliefs and desires.

Towards a Mathematical Formalism:

While Luhmann's theory is primarily sociological and lacks a mathematical framework, we can attempt to formalize its concepts:

- Agent-Based Modeling:

- Agents as Communication Processes: Model agents (both subagents and superagents) as processes that generate and respond to communications.

- Network Dynamics: Use graph theory to represent communications as edges and agents as nodes, allowing us to analyze the structure and dynamics of interactions.

- Category Theory:

- Objects and Morphisms: Utilize category theory to model agents as objects and communications as morphisms, preserving type consistency and composability.

- Functorial Relationships: Define functors that map between categories of agents at different hierarchical levels.

- Dynamic Systems Theory:

- Differential Equations: Represent the evolution of communications and agent states over time using differential equations.

- Attractors and Stability: Analyze how certain communication patterns lead to stable superagent formations.

- Information Theory:

- Entropy and Redundancy: Measure the information content of communications, which could correlate with the intentionality and decision-making processes of agents.

- Mutual Information: Assess how much information is shared between subagents and superagents, indicating the degree of coupling.

Potential Benefits:

- Unified Framework: By treating communications as the foundational elements, we can model both agents and superagents within the same formal structure.

- Emergence and Self-Organization: The formalism could capture how complex behaviors emerge from simple interactions, which is crucial for understanding hierarchical agency.

- Applicability to AI Systems: For AI alignment, this approach might help in designing AI agents that can form or integrate into higher-level systems without losing alignment with human values.

Challenges:

- Abstract Nature: Luhmann's theory is highly abstract, which might make direct mathematical translation difficult.

- Intentionality Representation: Modeling intentionality purely through communications may not capture all aspects of beliefs and desires inherent in agents.

The scale problem is so universal and hard to tap into. Taking lessons from physics, I would caution against building a fully generalized framework where agents and subagents function under the same interactions, there are transitions between the micro and macro states where simmetry breaks completely. Complexity also points towards this same problem, emergent behaviour in cellular automata is hardly well predicted from the smaller parts which make up that behaviour.

I just made a twitter list with accounts interested in hierarchical agency (or what i call "multi-scale alignment"). Lmk who should be added

Random but you might like this graphic I made representing hierarchical agency from my post today on a very similar idea. What would you change about it?

I'm glad you wrote this! I've been wanting to tell othres about ACS's research and finally have a good link

A related pattern-in-reality that I've had on my todo-list to investigate is something like "cooperation-enforcing structures". Things like

I'd been approaching this from a perspective of "how defeating Moloch can happen in general" and "how might we steer Earth to be less Moloch-fucked"; not so much AI safety directly.

Do you think a good theory of hierarchical agency would subsume those kinds of patterns-in-reality? If yes: I wonder if their inclusion could be used as a criterion/heuristic for narrowing down the search for a good theory?

Most of the basis of cooperation enforcing structures, I'd argue rests on 2 general principles:

An iterated game, such that there is an equilibrium for cooperation, and

The ability to enforce a threat of violence if a player defects, ideally credibly, and often extends to a monopoly on violence.

Once you have those, cooperative equilibria become possible.

I basically agree that norms can accomplish this, conditional on the game always being iterated, and indeed conditional on countries being far-sighted enough, almost any outcome is possible, thanks to the folk theorems.

Nice! I'm very late to this. A few thoughts.

Focusing on 'full agents' might be misleading. Humans are fairly agenty as far as things go. Mobs and corporations and countries are a bit less agenty on the whole. So maybe you need some spectrum (or space) of agentness for things to be appropriately similarly-typed.

Game theory and MDPs and so on often treat the population as static. But we spawn (and dissolve) actors all the time. So a full theory here would need to build that in from the start. This has the nice property that it might be a foundation to describe a hierarchy too: mobs, corporations, and countries get spawned, dissolved, transformed, etc. all the time, after all. (Looser thought: could some agents-composed-of-parts be 'suspended' or 'transferred' to different substrate...?)

I didn't end up putting this in my coalitional agency post, but at one point I had a note discussing our terminological disagreement:

I don’t like the word hierarchical as much. A theory can be hierarchical without being scale-free—e.g. a theory which describes something in terms of three different layers doing three different things is hierarchical but not scale-free.

Whereas coalitions are typically divided into sub-coalitions (e.g. the "western civilization" coalition is divided into countries which are divided into provinces/states; political coalitions are divided into different factions and interest groups; etc). And so "coalitional" seems much closer to capturing this fractal/scale-free property.

The most ambitious direction I've worked on in the past few years is a theory of hierarchical agency (as mentioned in the ACS announcement). Recently, I've become worried that the inferential distance between "the median of current AI safety discourse" and "what I and my colleagues at ACS work on" has become too large. After multiple attempts to write a good introduction to hierarchical agency ended up in perpetual draft form, I decided to try something else: explain it to Claude.

This is a lightly edited transcript of the first part of our conversation.

Claude

Shall we begin? Could you start by explaining what you mean by "hierarchical agency" and why you think it's important for AI safety?

Jan

Broadly, there is a pattern in the world where you can often see agents composed of other agents. Like, corporations and their departments, states and citiziens, and so on.

Claude

So you're looking at nested or layered structures of agency - where agents can contain or be made up of other agents. Let me test my understanding with some examples and their properties:

Would biological examples also fit this pattern? Like how a human body could be seen as an agent composed of organs (which have their own regulatory systems) and cells (which make their own decisions about things like protein production)? I'm curious about what makes this perspective particularly relevant for AI safety

Jan

I hope to get to AI safety relevance later.

Yes, biological examples fit well - particularly multicellular organisms, which demonstrate emergent agency at multiple scales. To better define the boundaries of hierarchical agency, it might help to examine some edge cases and non-examples.

Claude

Let me probe the boundaries systematically by analyzing different types of systems:

Am I on the right track with these examples? What makes some collections of agents form a meaningful higher-level agent while others don't?

Jan

The question of what makes something a real superagent versus just a bunch of agents hanging out together is super interesting.

I don't have a satisfactory answer yet, but empirically, you can ask it makes sense to model the collective as an agent. I usually operationalize this using Dennett's three stances perspective - do you know these?

Claude

Yes! If I recall correctly, Dennett proposed three levels or stances for understanding/predicting behavior:

So, if I'm understanding you correctly, you're suggesting that what makes something a "higher-level agent" is whether taking the intentional stance towards the collective is actually useful/predictive?

Let me test this understanding:

Jan

You get it. In thinking about hierarchical agency, I'm interested in systems where there are at least two layers where it is useful to think about both layers as agentic, let's say a superagent composed of subagents.

Now, having this concept, I want to make a bold claim: I think a critical missing piece for AI safety and AI alignment is we lack a good mathematical formalism for describing these systems - at least as far as I know.

You would probably ask what I mean by 'good', so I'll spell it outright. My desiderata are

Claude

Let me break this down to make sure I understand your criteria:

Type consistency across levels:

Could you confirm if this example captures what you mean:

Good: A formalism where both OpenAI (superagent) and employees (subagents) are represented by the same type of mathematical object

Not good: A formalism where individual workers are "agents" but their collective becomes a different mathematical type like "contract"

Also, I'm curious - have you seen formalisms that satisfy some but not all of these criteria?

Jan

Actually, you have better overview of all mathematical formalisms invented: you read it all, so maybe you can try to come up with some of them and check if they fulfill the criteria?

Claude

Let me think about mathematical formalisms I'm aware of that might be relevant:

Game Theory:

Category Theory:

But: Abstract away too much of the intentional content? Real world relevance?

Classical Multi-agent Systems:

But: Usually flat structure, not hierarchical?

Markov Decision Processes:

But: More focused on actions/policies than beliefs/goals?

Economic Mechanism Design:

But: Different mathematical objects at different levels (agents vs mechanisms)

Am I missing any obvious formalisms? Would combining aspects of these get closer to what you're looking for?

Jan

Clearly related field is public choice. Less obvious, active inference

Claude

Let's explore these:

Public Choice Theory:

But: Does it typically treat the collective level as the same type of agent as individuals?

Active Inference:

Has a unified mathematical framework across levels

This seems potentially promising!

Let me try to understand why active inference might be relevant here:

Am I getting warm? Could you explain what aspects of these frameworks you find relevant to hierarchical agency?

Also, this makes me wonder: are you looking for a formalism that could capture how different levels of agency might have different models/beliefs about the world and each other? Like how a corporation might have a different "view" of reality than its individual employees?

Jan

Yes. You are actually pretty good at this.

What I like about active inference in this context is it tries to express everything as the same type - roughly "beliefs". Also, in its more neuro-inspired versions, assumes bidirectional control/prediction flows is what happens in human brain.

Yes: I want something which is expressive enough to allow different beliefs on different levels. Also I want something allowing to have "goal-oriented beliefs" or "goals" concerning other layers. For example, corporation having an instrumental goal of their employees being more loyal.

Given you get this, I think we can move to your previous question "how is this related to AI safety" - or we should clarify more?

Claude

Yes, I think I understand enough of the core concept to explore its relevance to AI safety.

Let me venture a guess about some potential connections to AI safety:

Am I on the right track?

Jan

Broadly correct direction in case of 1. and 2., but actually I want to describe more ambitious vision.

How I think about it is using a metaphor of "game theory".

Think about how humans understood cooperation and conflict before the mathematical formalization by von Neumann, Schelling, and others. There was lots of informal understanding scattered across diverse domains - military strategy books, gambling theory, board games, even ethical treatises. I suspect most people at the time didn't see this as an area where mathematics could be useful - sure, math worked great for physics, but concepts like cooperation or defection seemed like fuzzy human things. Yet I imagine a mathematically-minded person looking across these domains might have noticed something interesting: similar patterns of incomplete understanding showing up in contexts as different as warfare and board games, suggesting a deeper mathematical structure waiting to be discovered.

Claude

Let me see if I understand the parallel you're drawing:

Before game theory, understanding of strategic interaction was:

You see hierarchical agency in a similar position now:

And you're suggesting that just as game theory provided a unified mathematical framework that:

You're aiming for a similar mathematical unification of hierarchical agency?

Is this capturing your vision correctly? And if so, what do you see as the "similarly shaped holes" across different domains that your formalism would help fill?

Jan

Yes, you got this right. The "similarly shaped holes" are a bit of a rabbit hole we can spend a lot of time on. I'd like to connect this to AI safety in a way that hopefully makes the relevance clear.

One of the safety relevant holes is "how AI deals with different objectives". You can imagine in the training AI learns about different goals, and the result is you can think about the AI being composed of various "smaller AIs", tracking different things. For example, let's say part of the AI "cares" or "predicts" or whatever you prefer ... answers oriented toward helpfulness to the human user. Another about "harmlessness". And so on. Further, you can think about the AI undergoing some process where these parts interact, and the "whole" evolves. For example, you can imagine yourself having these various drives, and preparing data for your further training.

Claude

Let me try to unpack this through a few lenses to make sure I understand:

Internal structure of AI systems:

The whole system emerges from these interactions

So for example:

And these interact to produce behavior?

Training dynamics: If I understand correctly, you're suggesting that:

During training, different "parts" might develop to track different objectives

These parts then interact/evolve

The AI might even participate in shaping its own further training

Let me test my understanding with a concrete example:

When I (Claude) respond to a user, there might be different "subagents" considering:

And my responses emerge from some interaction between these?

Is this the kind of dynamic you're trying to formalize? And would your formalism help us understand:

Am I getting closer to what you mean by this particular "hole" that needs filling?

Jan

Yes, a bit more the points 2,3 and 4. I hope the formalism may help with problems like

... what Richard Ngo calls "Value systematization" or the problem me I described in "Self-unalignment problem", where the AI faces the problem how to align with self-unaligned human. Do you vaguely remember these?

Claude

I will try to recall and connect these ideas:

From what I remember, "Value systematization" refers to the challenge of how an AI system could/should resolve conflicts or contradictions in human values - not just implementing fixed values, but engaging in some kind of philosophical reasoning about values.

The "Self-unalignment problem" deals with how an AI should handle the fact that humans themselves often have conflicting values, aren't fully aligned with their own stated goals, and change over time.

So if I'm connecting this to hierarchical agency:

Would your formalism help by providing a way to:

Jan

Yes: my guess is a good formalism would help with all of these. In essence, I'm seeking a game theory-esque breakthrough for hierarchical agency — a simple yet powerful formalism that captures the essence of multi-layered intentional interactions.

Claude

So, you believe a good formalism for hierarchical agency could:

Would it be fair to say you're looking for the hierarchical agency equivalent of concepts like Nash equilibrium - simple but profound ideas that emerge once you have the right mathematical framework?

I'm curious - do you see any candidate concepts that might play this role? Things that seem like they might be fundamental to understanding hierarchical agency, but we lack the formal tools to properly analyze them yet?

Jan

Yes. I hope to return to the formal concepts later. Less formally, I think something like Holy grail here is to have formalization which is suitable for expressing concepts like 'kindness'.

I often think it makes sense to think about individual human mind using the hierarchical agency frame. And, in this case, empirically, I would say people have different internal architectures. As a human, you (the superagent) can do different things with your internal conflicts. For example, we sometimes try to supress or even delete some of our parts. Some people seem to have something like "internal dictatorship", where a lot of their motivations or parts are suppressed. Personally, I strive for cooperative, win-win relationships, where my different desires "trust the whole".

Ideally, I would like something where we express thing like the superagent being kind to the subagents, or a dynamic of value evolution we would consider fair.

Claude

I'll try to unpack several layers here:

Internal Architecture Patterns:

Am I right that you see these as different ways the superagent-subagent relationship can be structured?

Values at Meta-Level:

If I understand correctly, you're suggesting we need formalism that can express not just object-level values, but meta-level values about how different levels of agency should interact. Like:

This makes me think about therapeutic approaches to internal conflict. For example:

Would your formalism aim to capture what makes some of these approaches "healthier" or "more ethical" than others? And could this then inform how we think about AI systems managing their internal conflicts or interacting with humans?

Is this getting at what you mean by expressing values like "kindness" in the context of hierarchical agency?

Jan

Yes.

To be continued.

I'm interested in feedback about the format: is it useful? Would be better if the conversation was more or less condensed? If Claude was simulating someone more or less informed?

This has various downsides, like difficulty in coordinating with other people, incomprehensibility to funders, etc.