HOLY shit! I just checked out the new concepts portion of the site that shows you all the tags. This feels like a HUGE step in the direction of the LW team's vision of a place where knowledge production can actually happen.

"People are over sensitive to ostracism because human brains are hardwired to be sensitive to it, because in the ancestral environment it meant death."

Evopsych seems mostly overkill for explaining why a particular person is strongly attached to social reality.

People who did not care what their parents or school-teachers thought of them had a very hard time. "Socialization" is the process of the people around you integrating you (often forcefully) into the local social reality. Unless you meet a minimum bar of socialization, it's very common to be shunted through systems that treat you worse and worse. Awareness of this, and the lasting imprint of coercive methods used to integrate one into social reality, seem like they can explain most of an individual's resistance to breaking from it.

I've recently re-read Lou Keep's Uruk series, and a lot more ideas have clicked together. I'm going to briefly summarize each post (hopefully will tie things together if you have read them, might not make sense if you haven't). This is also a mini-experiment in using comments to make a twitter-esque idea thread.

Over the past few months I've noticed a very consistent cycle.

- Notice something fishy about my models

- Struggle and strain until I was able to formulate the extra variable/handle needed to develop the model

- Re-read an old post from the sequences and realize "Oh shit, Eliezer wrote a very lucid description of literally this exact same thing."

What's surprising is how much I'm surprised by how much this happens.

Here's a pattern I'm noticing more and more: Gark makes a claim. Tlof doesn't have any particular contradictory beliefs, but takes up argument with Gark, because (and this is the actual-source-of-behavior because) the claim pattern matches, "Someone trying to lay claim to a tool to wield against me", and people often try to get claims "approved" to be used against the other.

Tlof's behavior is a useful adaptation to a combative conversational environment, and has been normalized to feel like a "simple disagreement". Even in high trust scenarios, Tlof by habit continues to follow conversational behaviors that get in the way of good truth seeking.

Sketch of a post I'm writing:

"Keep your identity small" by Paul Graham $$\cong$$ "People get stupid/unreasonable about an issue when it becomes part of their identity. Don't put things into your identity."

"Do Something vs Be Someone" John Boyd distinction.

I'm going to think about this in terms of "What is one's main strategy to meet XYZ needs?" I claim that "This person got unreasonable because their identity was under attack" is more a situation of "This person is panicking at the possibility that their main strategy to meet XYZ need will fail."

Me growing up: I made an effort to not specifically "identify" with any group or ideal. Also, my main strategy for meeting social needs was "Be so casually impressive that everyone wants to be my friend." I can't remember an instance of this, but I bet I would have looked like "My identity was under attack" if someone started saying something that undermined that strategy of mine. Being called boring probably would have been terrifying.

"Keep your identity small" is not actionable advice. The target should be more "...

Lol, one reason it's hard to talk to people about something I'm working through when there's a large inferential gap, is that when they misunderstand me and tell me what I think I sometimes believe them.

I finished reading Crazy Rich Asians which I highly enjoyed. Some thoughts:

The characters in this story are crazy status obsessed, and my guess is because status games were the only real games that had ever existed in their lives. Anything they ever wanted, they could just buy, but you can't pay other rich people to think you are impressive. So all of their energy goes into doing things that will make others think they're awesome/fashionable/wealthy/classy/etc. The degree to which the book plays this line is obscene.

Though you're never given exact numbers on Nick's family fortune, the book builds up an aura of impenetrable wealth. There is no way you will ever become as rich as the Youngs. I've already been someone who's been a grumpy curmudgeon about showing off/signalling/buying positional goods, but a thing that this book made real to me was just how deep these games can go.

If you pick the most straightforward status markers (money), you've decided to try and climb a status ladder of impossible height with vicious competition. If you're going to pick a domain in which you care more about your ordinality than your cardinality, for the love of god ...

Thoughts on writing (I've been spending 4 hours every morning for the last week working on Hazardous Guide to Words):

Feedback

Feedback is about figuring out stuff you didn't already know. I wrote the first draft of HGTW a month ago, and I wrote it in "Short sentences that convince me personally that I have a coherent idea here". When I went to get feedback from some friends last week, I'd forgotten that I hadn't actually worked to make it understandable, and so most of the feedback was "this isn't understandable".

Writing with purpose

Almost always if I get bogged down when writing it's because I'm trying to "do something justice" instead of "do what I want". "Where is the meaning?" started as "oh, I'll just paraphrase Hofstadter's view of meaning". The first example I thought of was to talk about how you can draw too much meaning from things, and look at claims of the pyramids predicting the future. I got bogged down writing those examples, because "what can lead you to think meaning is there when it's not?" was not really what I was talking about, nor was it w...

I'm torn on WaitButWhy's new series The Story of Us. My initial reaction was mostly negative. Most of that came from not liking the frame of Higher Mind and Primitive Mind, as that sort of thinking has been responsible for a lot of hiccups for me, making "doing what I want" an unnecessarily antagonistic process. And then along the way I see plenty of other ways I don't like how he slices up the world.

The torn part: maybe this is the sorta post "most people" need to start bridging the inferential gap towards what I consider good epistemology? I expect most people on LW to find his series too simplistic, but I wonder if his posts would do more good than the Sequences for the average joe. As I'm writing this I'm acutely aware of how little I know about how "most people" think.

It also makes me think about how at some point in recent years I thought, "More dumbed down simplifications of crazy advanced math concepts should exist, to get more people a little bit closer to all the cool stuff there is." I guessed a mathematician might balk at this suggestion ("Don't tarnish my precious precision!") Am I reacting the same way?

I dunno, what do you think?

Memex Thread:

I've taken copious notes in notebooks over the past 6 years, I've used evernote on and off as a capture tool for the past 4 years, and for the past 1.5 years I've been trying to organize my notes via a personal wiki. I'm in the process of switching and redesigning systems, so here's some thoughts.

Noticing an internal dynamic.

As a kid I liked to build stuff (little catapults, modified nerf guns, sling shots, etc). I entered a lot of those projects with the mindset of "I'll make this toy and then I can play with it forever and never be bored again!" When I would make the thing and get bored with it, I would be surprised and mildly upset, then forget about it and move to another thing. Now I think that when I was imagining the glorious cool toy future, I was actually imagining having a bunch of friends to play with (didn't live around many other kids).

When I got to middle school and highschool and spent more time around other kids, I picked up the idea of "That person talks like they're cool but they aren't." When I got into sub-cultures centering around a skill or activity (magic) I experienced the more concentrated form, "That person acts like they're good at magic, but couldn't do a show to save their life."

I got the message, "To fit in, you have to really be about the thing. No half assing it. No posing."

Why, historically, have I gotten so worried when my interests shift? I'm not yet at a point in my lif...

tldr;

In high-school I read pop cogSci books like "You Are Not So Smart" and "Subliminal: How the Subconscious Mind Rules Your Behavior". I learned that "contrary to popular belief", your memory doesn't perfectly capture events like a camera would, but it's changed and reconstructed every time you remember it! So even if you think you remember something, you could be wrong! Memory is constructed, not a faithful representation of what happened! AAAAAANARCHY!!!

Wait a second, a camera doesn't perfectly capture events. Or at least, they definitely didn't when t

Over this past year I've been thinking more in terms of "Much of my behavior exists because it was made as a mechanism to meet a need at some point."

Ideas that flow out of this frame seem to be things like Internal Family Systems, and "if I want to change behavior, I have to actually make sure that need is getting met."

Question: does anyone know of a source for this frame? Or at least writings that may have pioneered it?

The general does not exist, there are only specifics.

If I have a thought in my head, "Texans like their guns", that thought got there from a finite number of specific interactions. Maybe I heard a joke about Texans. Maybe my family is from Texas. Maybe I hear a lot about it on the news.

"People don't like it when you cut them off mid sentence". Which people?

At a local meetup we do a thing called encounter groups, and one rule of encounter groups is "there is no 'the group', just individual people". Having conversations in that mode has been incredibly helpful to realize that, in fact, there is no "the group".

What are the barriers to having really high "knowledge work output"?

I'm not capable of "being productive on arbitrary tasks". One winter break I made a plan to apply for all the small $100 essay scholarships people were always telling me no one applied for. After two days of sheer misery, I had to admit to myself that I wasn't able to be productive on a task that involved making up bullshit opinions about topics I didn't care about.

Conviction is important. From experiments with TAPs and a recent bout of meditation, it seems ...

Concrete example: when I'm full, I'm generally unable to imagine meals in the future as being pleasurable, even if I imagine eating a food I know I like. I can still predict and expect that I'll enjoy having a burger for dinner tomorrow, but if I just stuffed myself on french fries, I just can't run a simulation of tomorrow where the "enjoying the food experience" sense is triggered.

I take this as evidence that my internal food experience simulator has "code" that just asks, "If you ate XYZ right now, how would...

I'm in the process of turning this thought into a full essay.

Ideas that are getting mixed together:

Cached thoughts, Original Seeing, Adaptation Executors not Fitness Maximizers, Motte and Bailey, Doublethink, Social Improv Web.

- A mind can perform original seeing (to various degrees), and it can also use cached thoughts.

- Cached thoughts are more “Procedural instruction manuals” and original seeing is more “Your true anticipations of reality”.

- Both reality and social reality (social improv web) apply pressures and rewards that shape your cached thoughts.

- It

I started writing on LW in 2017, 64 posts ago. I've changed a lot since then, and my writing's gotten a lot better, and writing is becoming closer and closer to something I do. Because of [long detailed personal reasons I'm gonna write about at some point] I don't feel at home here, but I have a lot of warm feelings towards LW being a place where I've done a lot of growing :)

A forming thought on post-rationality. I've been reading more samzdat lately and thinking about legibility and illegibility. Me paraphrasing one point from this post:

State driven rational planning (episteme) destroys local knowledge (metis), often resulting in metrics getting better, yet life getting worse, and it's impossible to complain about this in a language the state understands.

The quip that most readily comes to mind is "well if rationality is about winning, it sounds like the state isn't being very rational, and this isn'...

Alternative hypothesis: Post-rationality was started by David Chapman being angry at historical rationalism. Rationality was started by Eliezer being angry at what he calls "old-school rationality". Both talk a lot about how people misuse frames, pretend that rigorous definitions of concepts are a thing, and broadly don't have good models of actual cognition and the mind. They are not fully the same thing, but most of the time I talked to someone identifying as "postrationalist" they picked up the term from David Chapman and were contrasting themselves to historical rationalism (and sometimes confusing them for current rationalists), and not rationality as practiced on LW.

Sometimes when I talk to friends about building emotional strength/resilience, they respond with "Well I don't want to become a robot that doesn't feel anything!" to paraphrase them uncharitably.

I think Wolverine is a great physical analog for how I think about emotional resilience. Every time Wolverine gets shot/stabbed/clubbed it absolutely still hurts, but there is an important way in which these attacks "don't really do anything". On the emotional side, the aim is not that you never feel a twinge of hurt/sorrow/jealo...

Quick description of a pattern I have that can muddle communication.

"So I've been mulling over this idea, and my original thoughts have changed a lot after I read the article, but not because of what the article was trying to persuade me of ..."

General Pattern: There is a concrete thing I want to talk about (a new idea - ???). I don't tell what it is, I merely provide a placeholder reference for it ("this idea"). Before I explain it, I begin applying a bunch of modifiers (typically by giving a lot of context "This idea is ...

Ribbon Farm captured something that I've felt about nomadic travel. I'm thinking back to a 2-month bicycle trip I did through Vietnam, Cambodia, and Laos. During that whole trip, I "did" very little. I read lots of books. Played lots of cards. Occasionally chatted with my biking partner. "Not much". And yet when movement is your natural state of affairs, every day is accompanied with a feeling of progress and accomplishment.

I love the experience of realizing what cognitive algorithm I'm running in a given scenario. This is easiest to spot when I screw something up. Today, I misspelled the word "process" by writing three "s" instead of two. I'm almost certain that while writing the word, there was a cached script of "this word has one more 's' than feels right, so add another one" that activated as I wrote the 1st "s", but then some idea popped into my mind (small context switch, working memory dump?) and I then execu...

Something as simple as talking too loud can completely screw you over socially. There's a guy in one of my classes who talks at almost a shouting level when he asks questions, and I can feel the rest of the class tense up. I'd guess he's unaware of it, and this is likely a way he's been for many years which has subtly/not so subtly pushed people away from him.

Would it be a good idea to tell him that a lot of people don't like him because he's loud? Could I package that message such that it's clear I'm just trying...

To everyone on the LW team, I'm so glad we do the year in review stuff! Looking over the table of contents for the 2018 book I'm like "damn, a whole list of bangers", and even looking at top karma for 2019 has a similar effect. Thanks for doing something that brings attention to previous good work.

I've been having fun reading through Signals: Evolution, Learning, & Information. Many of the scenarios revolve around variations of the Lewis Signalling Game. It's a nice simple model that lets you talk about communication without having to talk about intentionality (what you "meant" to say).
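To make the "no intentionality needed" point concrete, here's a minimal sketch of a 2-state Lewis signalling game where sender and receiver learn by simple urn-style reinforcement. The function name, parameters, and the specific learning rule are my own choices for illustration, not a transcription of anything in the book.

```python
import random


def train_lewis_game(rounds=5000, n=2, seed=0):
    """Play a Lewis signalling game with urn-style reinforcement learning.

    sender[state][signal] and receiver[signal][act] are urn weights.
    Nobody "means" anything: choices are random draws from the urns,
    and a successful round just adds one ball to the urns that were used.
    """
    rng = random.Random(seed)
    sender = [[1.0] * n for _ in range(n)]
    receiver = [[1.0] * n for _ in range(n)]
    successes = 0
    for _ in range(rounds):
        state = rng.randrange(n)  # nature picks a state uniformly
        signal = rng.choices(range(n), weights=sender[state])[0]
        act = rng.choices(range(n), weights=receiver[signal])[0]
        if act == state:  # payoff only when the act matches the state
            sender[state][signal] += 1.0
            receiver[signal][act] += 1.0
            successes += 1
    return sender, receiver, successes


if __name__ == "__main__":
    sender, receiver, successes = train_lewis_game()
    print("success rate:", successes / 5000)
    print("sender urns:", sender)
    print("receiver urns:", receiver)
```

If a signalling system forms, each row of the sender urns ends up dominated by one signal, and the receiver urns mirror that mapping. Which signal ends up "meaning" which state is arbitrary and depends on the seed.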

Intention seems to mostly be about self-awareness of the existing signalling equilibrium. When I speak slowly and carefully, I'm constantly checking what I want to say against my understanding of our signalling equilibrium, and reasoning o...

"Moving from fossil fuels to renewable energy" but as a metaphor for motivational systems. Nate Soares replacing guilt seems to be trying to do this.

With motivation, you can more easily go, "My life is gonna be finite. And it's not like someone else has to deal with my motivation system after I die, so why not run on guilt and panic?"

Hmmmm, maybe something like, "It would be doper if large scale people got to more renewable motivational systems, and for that change to happen it feels important for people growing up to be able to see those who have made the leap."

Reverse-Engineering a World View

I've been having to do this a lot for Ribbonfarm's Mediocratopia blog chain. Rao often confuses me and I have to step up my game to figure out where he's coming from.

It's basically a move of "What would have to be different for this to make sense?"

Confusion: "But if you're going up in levels, stuff must be getting harder, so even though you're mediocre in the next tier, shouldn't you be losing slack, which is antithetical to mediocrity?"

Resolution: "What if there'...

[Everything is "free" and we inundate you in advertisements] feels bad. First thought alternative is something like paid subscriptions, or micropayments per thing consumed. But the question is begged, how does anyone find out about the sites they want to subscribe to? If only there was some website aggregator that was free for me to use so that I could browse different possible subscriptions...

Oh no. Or if not oh no, it seems like the selling eyeballs model won't go away just because alternatives exist, if only from the "people need to ...

The university I'm at has meal plans where you get a certain number of blocks (meal + drink + side). These are things that one has, and uses to buy stuff. Last week at dinner, I gave the cashier my order and he said "Sorry man, we ran out of blocks." If I didn't explain blocks well enough, this is a statement that makes no sense.

I completely broke the flow of the back and forth and replied with a really confused, "Huh?" At that point the guy and another worker started laughing. Turns out they'd been coming up with non...

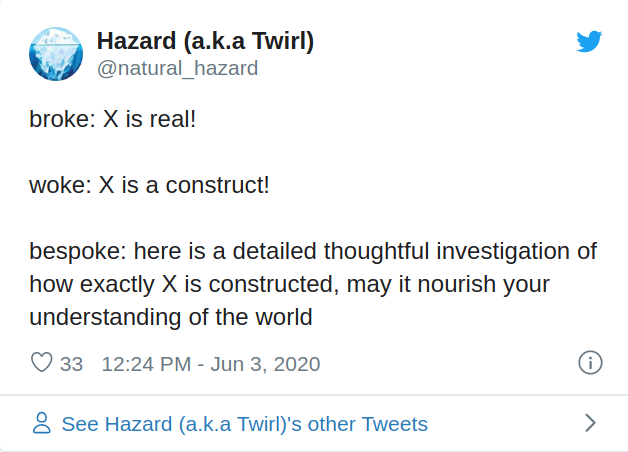

I've been writing on twitter more lately. Sometimes when I'm trying to express an idea, to generate progress I'll think "What's the shortest sentence I can write that convinces me I know what I'm talking about?" This is different from "What's a simple but no simpler explanation for the reader?"

Starting a twitter thread and forcing out several tweet-sized chunks of ideas is quite helpful for that. It helps get the concept clearer in my head, and then I have something out there and I can dwell on how I'd turn it into a consumable for others.

Re Mental Mountains, I think one of the reasons that I get worried when I meet another youngin that is super gung-ho about rationality/"being logical and coherent", is that I don't expect them to have a good Theory of How to Change Your Mind. I worry that they will reason out a bunch of conclusions, succeed in high-level changing their minds, think that they've deeply changed their minds, but instead leave hordes of unresolved emotional memories/models that they learn to ignore and that fuck them up later.

Weird hack for a weird tic. I've noticed I don't like audio abruptly ending. Like, sometimes I've listened to an entire podcast on a walk, even when I realized I wasn't into it, all because I anticipated the twinge of pain from turning it off. This is resolved by turning the volume down until it is silent, and then turning it off. Who'd have thunk it...

Me circa March 2018

"Should"s only make sense in a realm where you are divorced from yourself. Where you are bargaining with some other being that controls your body, and you are threatening it.

Update: This past week I've had an unusual amount of spontaneous introspective awareness on moments when I was feeling pulled by a should, especially one that came from comparing myself to others. I've also been meeting these thoughts with an, "Oh interesting. I wonder why this made me feel a should?" as opposed to a standard "end...

From Gwern's about page:

I personally believe that one should think Less Wrong and act Long Now, if you follow me.

Possibly my favorite catch-phrase ever :) What do I think is hiding there?

- Think Less Wrong

  - Self anthropology - "Why do you believe what you believe?"

  - Hugging the Query and not sinking into confused questions

  - Litany of Tarski

  - Notice your confusion - "Either the story is false or your model is wrong"

- Act Long Now

  - Cultivate habits and practice routines that seem small / trivial on a day/week/month timeline, but will result in you

Claim: There's a headspace you can be in where you don't have a bucket for explore/babble. If you are entertaining an idea or working through a plan, it must be because you already expect it to work/be interesting. If your prune filter is also growing in strength and quality, then you will be abandoning ideas and plans as soon as you see any reasonable indicator that they won't work.

Missing that bucket and enhancing your prune filter might feel like you are merely growing up, getting wiser, or maybe more cynical. This will be really strongly...

You can have infinite aspirations, but infinite plans are often out to get you.

When you make new plans, run more creative "what if?" inner-sims, sprinkle in more exploit, and ensure you have bounded loss if things go south.

When you feel like quitting, realize you have the opportunity to learn and update by asking, "What's different between now and when I first made this plan?"

Make your confidence in your plans explicit, so if you fail you can be surprised instead of disappointed.

If the thought of giving up feels terrible, you mi...

Stub Post: Thoughts on why it can be hard to tell if something is hindsight bias or not.

Imagine one's thought process as an idea-graph, with the process of thinking being hopping around nodes. Your long term memory can be thought of as the nodes and edges that are already there and persist strongly. The contents of your working memory are like temporary nodes and edges that are in your idea graph, and everything that is close to them gets a +10 to speed-of-access. A short term memory node can even cause edges to pop up between two other nodes around i...

Utility functions aren't composable! Utility functions aren't composable! Sorry to shout, I've just realized a very specific way I've been wrong for quite some time.

VNM utility completely ignores the structure of outcomes and the "similarities" between outcomes. U(1 apple) doesn't need to have any relation to U(2 apples). With decision scenarios I'm used to interacting in, there are often ways in which it is natural to think of outcomes as compositions or transformations of other outcomes or objects. When I thin...
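A minimal sketch of the point about U(1 apple) and U(2 apples), with made-up outcome labels and numbers: a VNM utility function is just a lookup table over opaque outcomes. The only structure the theory uses is linearity in probabilities when you evaluate a lottery.

```python
from typing import Dict

Outcome = str
Lottery = Dict[Outcome, float]  # outcome label -> probability

# Utilities are assigned per outcome label. Nothing forces
# U("2 apples") to relate to U("1 apple") in any particular way.
utility: Dict[Outcome, float] = {
    "nothing": 0.0,
    "1 apple": 5.0,
    "2 apples": 1.0,  # deliberately NOT 2 * U("1 apple")
}


def expected_utility(lottery: Lottery, u: Dict[Outcome, float]) -> float:
    """Expected utility: the one place VNM imposes structure (on lotteries)."""
    assert abs(sum(lottery.values()) - 1.0) < 1e-9, "probabilities must sum to 1"
    return sum(p * u[outcome] for outcome, p in lottery.items())


sure_apple: Lottery = {"1 apple": 1.0}
coin_flip: Lottery = {"nothing": 0.5, "2 apples": 0.5}

print(expected_utility(sure_apple, utility))
print(expected_utility(coin_flip, utility))
```

Any sense in which "2 apples" is composed out of "1 apple" lives outside this table. If you want it, you have to add it as an extra modelling assumption.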

I pointed out in this post that explanations can be confusing because you lack some assumed knowledge, or because the piece of info that will make the explanation click has yet to be presented (assuming a good/correct explanation to begin with). It seems like there can be a similar breakdown when facing confusion in the process of trying to solve a problem.

I was working on some puzzles in assembly code, and I made the mistake of interpreting hex numbers as decimal (treating 0x30 as 30 instead of 48). This led me to draw a memory map that looked really weir...
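A quick sanity check of that specific slip, as a minimal Python sketch (the variable names are made up for illustration):

```python
# The same digits "30" name different numbers depending on the base.
raw = "30"

as_decimal = int(raw, 10)  # read as base 10
as_hex = int(raw, 16)      # read as base 16: 3 * 16 + 0

print(as_decimal, as_hex)   # 30 48
print(hex(as_hex))          # 0x30
print(as_hex - as_decimal)  # 18: how far off this particular value ends up
```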

For anyone curious about what the sPoOkY and mYsTeRiOuS Michael Vassar actually thinks about various shit, many of his friends have blogs and write about what they chat about, and he's also been on several long form podcasts.

https://naturalhazard.xyz/ben_jess_sarah_starter_pack

https://open.spotify.com/episode/1lJY2HJNttkwwmwIn3kyIA?si=em0lqkPaRzeZ-ctQx_hfmA

https://open.spotify.com/episode/01z3WDSIHPDAOuVp1ZYUoN?si=VOtoDpw9T_CahF31WEhZXQ

https://open.spotify.com/episode/2RzlQDSwxGbjloRKqCh1xg?si=XuFZB1CtSt-FbCweHtTnUA

... those posts are saying much more specific things than 'people are sometimes hypocritical'?

"Can crimes be discussed literally?":

- some kinds of hypocrisy (the law and medicine examples) are normalized

- these hypocrisies are / the fact of their normalization is antimemetic (OK, I'm to some extent interpolating this one based on familiarity with Ben's ideas, but I do think it's both implied by the post, and relevant to why someone might think the post is interesting/important)

- the usage of words like 'crime' and 'lie' departs from their denotation, to exclude normalized things

- people will push back in certain predictable ways on calling normalized things 'crimes'/'lies', related to the function of those words as both description and (call for) attack

- "There is a clear conflict between the use of language to punish offenders, and the use of language to describe problems, and there is great need for a language that can describe problems. For instance, if I wanted to understand how to interpret statistics generated by the medical system, I would need a short, simple way to refer to any significant tendency to generate false reports. If the available simple terms were also attack words

I'm reflecting back on this sequence I started two years ago. There's some good stuff in it. I recently made a comic strip that has more of my up to date thoughts on language here. Who knows, maybe I'll come back and synthesize things.

The way I see "Politics is the Mind Killer" get used, it feels like the natural extension is "Trying to do anything that involves high stakes or involves interacting with the outside world or even just coordinating a lot of our own Is The Mind Killer".

From this angle, a commitment to prevent things from getting "too political" to "avoid everyone becoming angry idiots" is also a commitment to not having an impact.

I really like how jessica re-frames things in this comment. The whole comment is interesting, here's a snippet:

...Basically, if the issue is adversar

The original post was mostly about not UNNECESSARILY introducing politics or using it as examples, when your main topic wasn't about politics in the first place. They are bad topics to study rationality on.

They are good topics to USE rationality on, both to dissolve questions and to understand your communication goals.

They are ... varied and nuanced in applicability ... topics to discuss on LessWrong. Generally, there are better forums to use when politics is the main point and rationality is a tool for those goals. And generally, there are better topics to choose when rationality is the point and politics is just one application. But some aspects hit the intersection just right, and LW is a fine place.

So a thing Galois theory does is explain:

Why is there no formula for the roots of a fifth (or higher) degree polynomial equation in terms of the coefficients of the polynomial, using only the usual algebraic operations (addition, subtraction, multiplication, division) and application of radicals (square roots, cube roots, etc)?

Which makes me wonder; would there be a formula if you used more machinery than normal stuff and radicals? What does "more than radicals" look like?
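One known answer, sketched in my own notation and hedged accordingly: add a single new operation, the Bring radical $\operatorname{BR}(a)$, which for real $a$ is the unique real root of

$$x^5 + x + a = 0.$$

As far as I understand it, a general quintic can be reduced by Tschirnhaus transformations (which only need radicals) to the Bring-Jerrard form $x^5 + px + q = 0$, and a rescaling of $x$ turns that into the equation above (possibly with complex $a$). So radicals plus $\operatorname{BR}$ are enough for degree five. For a concrete polynomial that radicals alone can't handle, $x^5 - x - 1$ is the standard example: its Galois group is $S_5$, which is not solvable.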

There are two times when Occam's razor comes to mind. One is for addressing "crazy" ideas à la "The witch down the road did it" and one is for picking which legit-seeming hypothesis I might prioritize in some scientific context.

For the first one, I really like Eliezer's reminder that when going with "The witch did it" you have to include the observed data in your explanation.

For the second one, I've been thinking about the simplicity formulation that one of my professors uses. Roughly, A is simpler than B if all ...

"Contradictions aren't bad because they make you explode and conclude everything, they're bad because they don't tell you what to do next."

Quote from a professor of mine who makes formalisms for philosophy of science stuff.

Looking at my calendar over the last 8 months, it looks like my attention span for a project is about 1-1.5 weeks. I'm musing on what it would look like to lean into that. Have multiple projects at once? Work extra hard to ensure I hit save points before the weekends? Only work on things in week long bursts?

Elephant in the Brain style model of signaling:

Actually showing that you have XYZ skill/trait is the most beneficial thing you can do, because others can verify you've got the goods and will hire you / like you / be on your team. So now there's an incentive for everyone to be constantly displaying their skills/traits. This takes up a lot of time and energy, and I'm gonna guess that anti-competition norms created "showing off" as a bad thing to do to prevent this "over saturation".

So if there's an "no showing-of...

When I first read The Sequences, why did I never think to seriously examine if I was wrong/biased/partially-incomplete in my understanding of these new ideas?

Hyp: I believed that fooling one's self was all identity driven. You want to be a type of person, and your bias lets you comfortably sink into it. I was unable to see my identity. I also had a self narrative of "Yeah, this Eliezer dude, whatever, I'll just see if he has anything good to say. I don't need to fit in with the rationalists."

I saw myself as "just" taking...

Legibility. Seeing like a state. Reason isn't magic. The secret of our success.

There is chaos and one (or a state) is trying to predict and act on the world. It sure would be easier if things were simpler. So far, this seems like a pretty human/standard desire.

I think the core move of legibility is to declare that everything must be simple and easy to understand, and if reality (i.e. people) isn't as simple as our planned simplification, well too bad for people.

As a rationalist/post-rationalist/person who thinks good, you don't have to do th...

"If we're all so good at fooling ourselves, why aren't we all happy?"

The zealot is only "fooling themselves" from the perspective of the "rational" outsider. The zealot has not fooled themselves. They have looked at the world and their reasoning processes have come to the clear and obvious conclusion that []. They have gri-gri, and it works.

But it seems like most of us are much better at fooling ourselves than we are at "happening to use the full capacity of our minds to come to false and useful conclusions"...

(tid bit from some recent deep self examination I've been doing)

I incurred judgment-fueled "motivational debt" by aggressively buying into the idea "Talk is worthless, the only thing that matters is going out and getting results" at a time where I was so confident I never expected to fail. It felt like I was getting free motivation, because I saw no consequences to making this value judgment about "not getting results".

When I learned more, the possibility of failure became more real, and that cannon of judgement I'd built swiveled around to point at me. Oops.

I've spent the last three weeks making some simple apps to solve small problems I encounter, and practice the development cycle. Example.

I've already been sold on the concept of developing things in a Lean MVP style for products. Shorter feedback loops between making stuff and figuring out if anyone wants it. Less time spent making things people don't want to give you money for. It was only these past few weeks where I noticed the importance of an MVP approach for personal projects. Now it's a case of shortening the feedback loops betwe...

I love attention, but I HATE asking for it. I've noticed this a few times before in various forms. This time it really clicked. What changed?

- This time around, the insight came in the context of performing magic. This made the "I love attention" part more obvious than other times, when I merely noticed, "I have an allergic reaction to seeming needy."

- I was able to remember some of the context that this pattern arose from, and can observe "Yes, this may have helped me back then, but here are ways it isn't as helpful now, and it's not automatically terrible

One of the more useful rat-techniques I've enjoyed has been the reframing of "Making a decision right here right now" to "Making this sort of decision in these sorts of scenarios". When considering how to judge a belief based on some arguments, the question becomes, "Am I willing to accept this sort of conclusion based on this sort of argument in similar scenarios?"

From that, if you accept claim-argument pair A "Dude, if electric forks were a good idea, someone would have done it by now", but not claim-argument...

There are a few instances where I've had to "re-have" an idea 3 times, each in a slightly different form, before it stuck and affected me in any significant way. I noticed this when going through some old notebooks and seeing stub-thoughts of ideas that I was currently fleshing out (I had been unaware that I had given them any thought before). One example is TAPs. Two winters ago I was writing about an idea I called "micro habits/attitudes" and they felt super important, but nothing ever came of them. Now I see that basically...

So Kolmogorov complexity depends on the language, but the complexity of a given string in any two languages differs by at most a constant (whatever the size of an interpreter from one to the other is).

This seems to mean that the complexity ordering of different hypotheses can be rearranged by switching languages, but "only so much". So

and

are both totally possible, as long as

I see how if you care about orders of magnitude, the description...
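One way to make "only so much" concrete, as a hedged sketch rather than a reconstruction of the expressions missing from this post: write $K_A$ and $K_B$ for complexity relative to two languages $A$ and $B$, and let $c$ be a constant bounding their difference. The symbols $K_A$, $K_B$, $c$, $h_1$ and $h_2$ are notation assumed here for illustration.

```latex
% Invariance: the two complexity measures differ by at most a constant c.
\[
  \lvert K_A(x) - K_B(x) \rvert \le c \quad \text{for all } x .
\]
% Consequence: a gap larger than 2c in language A cannot be reversed in language B.
\[
  K_A(h_2) - K_A(h_1) > 2c
  \;\Longrightarrow\;
  K_B(h_2) \ge K_A(h_2) - c > K_A(h_1) + c \ge K_B(h_1) .
\]
```

So switching languages can only swap the order of two hypotheses whose complexities are within $2c$ of each other in the original language.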

Mini Post, Litany of Gendlin related.

Changing your mind feels like changing the world. If I change my mind and now think the world is a shittier place than I used to (all my friends do hate me), it feels like I just teleported into a shittier world. If I change my mind and now think the world is a better place than I used to (I didn't leave the oven on at home, so my house isn't going to burn down!) it feels like I've just been teleported into a better world.

Consequence of the above: if someone is trying to change your mind, it feels like t...

Something I noticed about what I take certain internal events to mean:

Over the past 4 years I've had trouble being in touch with "what I want". I've made a lot of progress in the past year (a huge part was noticing that I'd previously intentionally cut off communication with the parts of me that want).

Previously when I'd ask "what do I want right now?" I was basically asking, "What would be the most edifying to my self-concept that is also doable right now?"

So I've managed to stop doing that a lot. La...

Being undivided is cool. People who seem to act as one monolithic agent are inspiring. They get stuff done.

What can you do to try and be undivided if you don't know any of the mental and emotional moves that go into this sort of integration? You can tell everyone you know, "I'm this sort of person!" and try super super hard to never let that identity falter, and feel like a shitty miserable failure whenever it does.

How funny that I can feel like I shouldn't be having the "problem" of "feeling like I shouldn't be...

Reasons why I currently track or have tracked various metrics in my life:

1. A mindfulness tool. Taking the time to record and note some metric is itself the goal.

2. Have data to be able to test a hypothesis about ways some intervention would affect my life. (e.g. Did waking up earlier give me less energy in the day?)

3. Have data that enables me to make better predictions about the future (mostly related to time tracking, "how long does X amount of work take?")

4. Understanding how [THE PAST] was different from [THE PRESENT] to help defeat the Deadl...

Current beliefs about how human value works: various thoughts and actions can produce a "reward" signal in the brain. I also have lots of predictive circuits that fire when they anticipate a "reward" signal is coming as a result of what just happened. The predictive circuits have been trained to use the patterns of my environment to predict when the "reward" signal is coming.

Getting an "actual reward" and a predictive circuit firing will both be experienced as something "good". Because of this, predictive ...

The slogan version of some thoughts I've been having lately is in the vein of "Hurry is the root of all evil". Thinking in terms of code. I've been working in a new dev environment recently and have felt the siren song of, "Copy the code in the tutorial. Just import all the packages they tell you to. Don't sweat the details man, just go with it. Just get it running." All that as opposed to "Learn what the different abstractions are grounded in, figure out what tools do what, figure out exactly what I need, and use w...

The fact that utility and probability can be transformed while maintaining the same decisions matches what the algo feels like from the inside. When thinking about actions, I often just feel like a potential action is "bad", and it takes effort to tease out whether I don't think the outcome is super valuable, or whether there's a good outcome that I don't think is likely.
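A minimal sketch of the simplest version of this, assuming each action has one outcome with a probability and a utility (the action names and numbers are made up for illustration). Only the product enters the choice, so different (probability, utility) splits can feel identical, and rescaling every utility by a positive constant leaves the decision unchanged. This is not the full transformation the claim refers to, just the easiest case to check by hand.

```python
def expected_value(probability, utility):
    """Single-outcome expected value: the only quantity the choice depends on."""
    return probability * utility


def best_action(actions):
    """Pick the action name with the highest expected value."""
    return max(actions, key=lambda name: expected_value(*actions[name]))


def rescale_utilities(actions, k):
    """Multiply every utility by k > 0; the ranking of actions is unchanged."""
    return {name: (p, k * u) for name, (p, u) in actions.items()}


# Hypothetical actions: name -> (probability of the outcome, utility of the outcome)
actions = {
    "apply_for_grant": (0.25, 40.0),  # unlikely but valuable: 10.0
    "safe_job": (1.0, 8.0),           # certain but modest: 8.0
    "lottery": (0.125, 16.0),         # unlikely and modest: 2.0
}

print(best_action(actions))
print(best_action(rescale_utilities(actions, 3.0)))
```

From the inside, "likely but barely worth it" `(1.0, 2.0)` and "unlikely but decent" `(0.5, 4.0)` produce the same number, which is one way to read the feeling that an action is just "bad" without knowing which factor is responsible.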

Don't ask people for their motives if you are only asking so that you can shit on their motives. Normally when I see someone asking someone else, "Why did you do that?" I interpret the statement to come from a place of, "I'm already about to start making negative judgments about you, this is the last chance for you to offer a plausible excuse for your behavior before I start firing."

If this is in fact the dynamic, then no one is incentivised to give you their actual reasons for things.

I'm looking at a notebook from 3 years ago, and reading some scribbles from past me excitedly describing how they think they've pieced together that anger and the desire to punish are adaptations produced by evolution because they had good game theoretic properties. In the haste of the writing, and in the number of exclamation marks used, I can see that this was a huge realization for me. It's surprising how absolutely normal and "obvious" the idea is to me now. I can only remember a glimmer of the "holy shit!"ness that I felt at the time. It's so easy to forget that I haven't always thought the way I currently do. As if I'm typical-minding my past self.

An uncountable finite set is any finite set that contains the source code to a superintelligence that can provably prevent anyone from counting all of its elements.

In a fight between the CMU student body and the rationalist community, CMU would probably forget about the fight unless it was assigned for homework, and the rationalists would all individually come to the conclusion that it is most rational to retreat. No one would engage in combat, and everyone would win.

I notice a disparity between my ability to parse difficult texts when I'm just "reading for fun" versus when I'm trying to solve a particular problem for a homework assignment. It's often easier to do it for homework assignments. When I've got time that's just, "reading up on fun and interesting things," I bounce off difficult texts more often than I would like.

After examining some recent instances of this happening, I've realized that when I'm reading for fun, my implicit goal has often been, "...

I've been working on some more emotional bugs lately, and I'm noticing that many of the core issues that I'm dragging up are ones I've noticed at various points in the past and then just... ? I somehow just managed to forget about them, though I remember that in round 1 it also took a good deal of introspection for these issues to rise to the top. Keeping a permanent list of core emotional bugs would be an easy fix. The list would need to be somewhere I look at least once a week. I don't always have to be working on all of them, but I at least need to not forget that these problems exist.

"With a sufficiently negligent God, you should be able to hack the universe."

Just a fun little thought I had a while ago. The idea being that if your deity intervenes with the world, or if there are prayers, miracles, "supernatural creatures" or anything of that sort, then with enough planning and chutzpah, you should be able to hack reality unless God has got a really close eye on you.

This partially came from a fiction premise I have yet to act on. Dave (garden variety atheist) wakes up in hell. Turns out that the Christian God TM is real, though a bit of a dunce. Dave and Satan team up and go on a wacky adventure to overthrow God.

Quick thoughts on TAPs:

The past few weeks I've been doing a lot of posture/physical tic based TAPs (not slouching, not biting lips etc). These seem to be very well suited to TAPs, because the trigger is a physical movement, making it easier to notice. I've noticed roughly three phases of noticing triggers:

- I suddenly become aware of the fact I've been doing the action.

- I become aware of the fact that I've initiated the action.

- Before any physical movement happens, I notice the "impulse" to do the thing.

To make a TAP run deep, it se...

Here's a pattern I want to outline and possible suggestions on how to fix it.

Sometimes when I'm trying to find the source of the bug, I make incorrect updates. An explanation of what the problem might be pops to mind, and it seems to fit (ex. "oops, this machine is Big Endian, not Little Endian"). Then I work on the bug some more, things still don't work, and at some point I find the real problem. Today, when I found a bug I was hunting for, I had a moment of, "Oh shit, an hour ago I updated my beliefs about how this machine w...

Previously when I'd encountered the distinction between synthetic and analytic thought (as philosophers used them), I didn't quite get it. Yesterday I started reading Kant's Prolegomena and have a new appreciation for the idea. I used to imagine that "doing the analytic method" meant looking at definitions.

I didn't imagine the idea actually being applied to concepts in one's head. I imagined the process being applied to a word. And it seemed clear to me that you're never going to gain much insight or wisdom from investigating a word's definition and g...

This comment will collect things that I think beginner rationalists, "naive" rationalists, or "old school" rationalists (these distinctions are in my head, I don't expect them to translate) do which don't help them.

Have some horrible jargon: I spit out a question or topic and ask you for your NeMRIT, your Next Most Relevant Interesting Take.

Give your thoughts about the idea I presented as you understand it, or, if that's boring, give the thoughts that interest you and seem conceptually closest to the idea I brought up.

I often don't feel like I'm "doing that much", but find that when I list out all of the projects, activities, and thought streams going on, there's an amount that feels like "a lot". This has happened when reflecting on every semester in the past 2 years.

Hyp: Until I write down a list of everything I'm doing, I'm just probing my working memory for "how much stuff am I up to?" Working mem has a limit, and reliably I'm going to get only a handful of things. Any time I'm doing more things than fit in working memory, when I stop to write them all down, I will experience "Huh, that's more than it feels like."

Short framing on one reason it's often hard to resolve disagreements:

[with some frequency] disagreements don't come from the same place that they are found. Your brain is always running inference on "what other people think". From a statement like, "I really don't think it's a good idea to homeschool", your mind might already be guessing at a disagreement you have 3 concepts away, yet only ping you with a "disagreement" alarm.

Combine that with a decent ability to confabulate. You ask yourself "Why do I disagree about homeschooling?" and you are given a plethora of possible reasons to disagree and start talking about those.

True if you squint at it right: Learning more about "how things work" is a journey that starts at "Life is a simple and easy game with random outcomes" and ends in "Life is a complex and thought intensive game with deterministic outcomes"

Idea that I'm going to use in these short form posts: for ideas/things/threads that I don't feel are "resolved" I'm going to write "*tk*" by the most relevant sentence for easy search later (I vaguely remember Tim Ferriss talking about using "tk" as a substitute for "do research and put the real numbers in" since "tk" is not a letter pair that shows up much in English words).

I've taken a lot of programming courses at university, and now I'm taking some more math and proof based courses. I notice that it feels considerably worse to not fully understand what's going on in Real Analysis than it did to not fully understand what was going on in Data Structures and Algorithms.

When I'm coding and pulling on levers I don't understand (outsourcing tasks to a library, or adding this line to the project because, "You just have to so it works") there's a yuck feeling, but there's also, "We...

The other day at lunchtime I realized I'd forgotten to make and pack a lunch. It felt odd that I only realized it right when I was about to eat and was looking through my bag for food. Tracing back, I remembered that something abnormal had happened in my morning routine, and after dealing with the pop-up, I just skipped a step in my routine and never even noticed.

One thing I've done semi-intentionally over the past few years is decrease the amount of ambient thought that goes to logistics. I used to consider it to be "useless worrying", but given how a small disruption was able to make me skip a very important step, now I think of it more as trading off efficiency for "robustness".

Here is an abstraction of a type of disagreement:

Claim: it is common for one to be more concerned with questions like, "How should I respond to XYZ system?" over "How should I create an accurate model of XYZ system?"

Let's say the system / environment is social interactions.

Liti: Why are you supposed to give someone a strong handshake when you meet them?

Hale: You need to give a strong handshake

Here Hale misunderstands Liti as asking for information about the proper procedure to perform. Really, Liti wants to know how this system ...

Last fall I hosted a discussion group with friends on three different occasions. I pitched it as "get interesting people together and intentionally have an interesting conversation", and it was not a rationalist discussion group. One thing that I noticed was that whenever I wanted to really fixate on and solve a problem we identified, it felt wrong, like it would break some implicit rule I never remembered setting.

Later I pinpointed the following as the culprit. I personally can't consistently produce quality clear thinking at "conversatio...

Highly speculative thought.

I don't often get angry/upset/exasperated with the coding or math that I do, but today I've gotten royally pissed at some Java project of mine. Here's a guess at a possible mechanism.

The more human-like a system feels, the easier it is to anthropomorphize and get angry at. When dealing with my code today, it has felt less like the world of being able to reason carefully over a deterministic system, and more like dealing with an unpredictable possibly hostile agent. Mayhaps part of my brain pattern matches that behaviour to something intelligent -> something human -> apply anger strategy.

Good description of what was happening in my head when I was experiencing the depths of the uncanny valley of rationality:

I was more genre savvy than reality savvy. Even when I first started to learn about biases, I was more genre-of-biases savvy than actual bias-savvy. My first contact with the sequences successfully prevented me from being okay with double-thinking, and mostly removed my ability to feel okay about guiding my life via genre-savvyness. I also hadn't learned enough to make any sort of superior "basis" from which to act and decide. So I hit some slumps.

Likely false semi-explicit belief that I've had for a while: changes in patterns of behavior and thought are "merely" a matter of conditioning/training. Whenever it's hard to change behavior, it's just because the system is already in motion in a certain direction, and it takes energy/effort to push it in a new direction.

Now, I'm more aware of some behaviors that seem to have access to some optimization power that has the goal of keeping them around. Some behaviors seem to be part of a deeper strategy run by some sub-process ...

I've always been off-put when someone says, "free will is a delusion/illusion". There seems to be a hinting that one's feelings or experiences are in some way wrong. Here's one way to think you have fundamental free will without being 'deluded' -> "I can imagine a system where agents have an ontologically basic 'decision' option, and it seems like that system would produce experiences that match up with what I experience, therefore I live in a system with fundamental free-will". Here, it's not ...

Person I talked to once: "Moral rules are dumb because they aren't going to work in every scenario you're going to encounter. You should just judge everything case by case."

The thing that feels most wrong about this to me is the proposition that there is an action you can do which is, "Judge everything case by case". I don't think there is. You wouldn't say, "No abstraction covers every scenario, so you should model everything in quarks."

For some reason or another, it sometimes feels like you can "model t...

I can't remember the exact quote or where it came from, so I'm going to paraphrase.

The end goal of meditation is not to be able to calm your mind while you are sitting cross-legged on the floor, it's to be able to calm your mind in the middle of a hurricane.

Mapping this onto rationality, there are two questions you can ask yourself.

How rational can I be while making decisions in my room?

How rational can I be in the middle of a hurricane?

I think the distinction is important because recognizing it allows you to train both skills separately.

Some thoughts on a toy model of productivity and well-being

T = set of tasks

S = set of physiological states

R = level of "reflective acceptance" of current situation (ex. am I doing "good" or "bad")

Quality of Work = some_function(s,t) + stress_applied

Quality of Subjective Experience = Quality - stress + R
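The two formulas above can be sketched in a few lines. This is a toy under stated assumptions: the `FIT` table is a made-up stand-in for `some_function(s, t)`, the state and task names are hypothetical, and "stress" in the second formula is taken to be the same quantity as `stress_applied` in the first.

```python
# Hypothetical stand-in for some_function(s, t): how well a state suits a task.
FIT = {
    ("focused", "essay"): 8.0,
    ("distracted", "essay"): 3.0,
    ("flu", "essay"): 1.0,
}


def quality_of_work(state, task, stress_applied):
    """Quality of Work = some_function(s, t) + stress_applied."""
    return FIT[(state, task)] + stress_applied


def quality_of_experience(state, task, stress_applied, reflective_acceptance):
    """Quality of Subjective Experience = Quality - stress + R."""
    work = quality_of_work(state, task, stress_applied)
    return work - stress_applied + reflective_acceptance


# Pushing harder while distracted raises output but not how the day feels.
print(quality_of_work("distracted", "essay", stress_applied=2.0))
print(quality_of_experience("distracted", "essay", stress_applied=2.0,
                            reflective_acceptance=1.0))
```

Read literally under that assumption, the stress terms cancel in the second formula, so subjective experience depends only on the state-task fit and R, while stress only buys extra work quality.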

Some states are stickier than others. It's easier to jump out of "I'm distracted" than it is to escape "I've got the flu". States can be better or worse at doing tasks, and tasks can be of varyi...

I notice that there’s almost a sort of pressure that builds up when I look at someone, as if it’s a literal indicator of, “Dude, you’re approaching a socially unacceptable staring time!”

It seems obvious what is going on. If you stare at someone for too long, things get “weird” and you come off as a “creep”. I know that. Most people know that. And since we all have common knowledge about that rule, I understand that there are consequences to staring at someone for more than a second or two. Thus, the reason I don’t stare at people for very long is because ...

I'm currently reading Open Veins of Latin America, which is a detailed history of how Latin America has been screwed over across the centuries. It reminds me of a book I read a while ago, Confessions of an Economic Hit Man. Though it's clear the author thinks that what has happened to Latin America has been unjust, he does a good job of not adding lots of, "and therefore..."s. It's mostly a poetic historical account. There are a lot more cartoonishly evil things that have happened in history than I realized.

I'm simulatin...

Fun Framing: Empiricism is trying to predict TheUniverse(t = n + delta) using TheUniverse(t=n) as your blackbox model.

Sometimes the teacher makes a typo. In conversation, sometimes people are "just wrong". So a lot of the time, when you notice confusion, it can be dismissed with "the other person just screwed up". But reality doesn't screw up. It just is. Always pay attention to confusion that comes from looking at reality.

(Also, when you come to the conclusion that another person "screwed up", you aren't completely done until you have some understanding of how they might have screwed up.)

A rephrasing of ideas from the recent Care Less post.

Value allocation is not zero sum, though time allocation is. In order to not break down at the "colossal injustice of it all", a common strategy is to operate as if value is zero-sum.

To be as effective as possible, you need to be able to see the dark world, one that is beyond the reach of God. Do not explain why the current state of affairs is acceptable. Instead, look at reality very carefully and move towards the goal. Explaining why your world is acceptable shuts down the sense that more is ...

I just finished reading and rereading Debt: The First 5000 Years. I was tempted to go, "Yep, makes sense, I was basically already thinking about money and debt like that." Then I remembered that not but two months ago I was arguing with a friend and asserting that there was nothing dysfunctional about being able to sell your kidney. It's hard to remember what I used to think about certain things. When there's a concrete reminder, sometimes it comes as a shock that I used to think differently from how I do. For whatever the big things I've changed...

"It seems like you are arguing/engaging with something I'm not saying."

I can remember an argument with a friend who went to great lengths to defend a point he didn't feel super strongly about, all because he implicitly assumed I was about to go "Given point A, X conclusion, checkmate."

It seems like a pretty common "argumental movement" is to get someone to agree to a few simple propositions, with the goal of later "trapping" them with a dubious "and therefore!". People are good at spotting this, an...

I really like the phrasing alkjash used, One Inch Punch. Recently I've been paying closer attention to when I'm in "doing" or "trying" mode, and whether or not those are quality handles, there do seem to be multiple forms of "doing" that have distinct qualities to them.

It's way easier for me to "just" get out of bed in the morning, than to try and convince myself getting out of bed is a good idea. It's way easier for me to "just" hit send on an email or message that might not be worded ...

In light of reading through Raemon's shortform feed, I'm making my own. Here will be smaller ideas that are on my mind.