Reasons time horizon is overrated and misinterpreted:

(This post is now live on the METR website in a slightly edited form)

In the 9 months since the METR time horizon paper (during which AI time horizons have increased by ~6x), it’s generated lots of attention as well as various criticism on LW and elsewhere. As one of the main authors, I think much of the criticism is a valid response to misinterpretations, and want to list my beliefs about limitations of our methodology and time horizon more broadly. This is not a complete list, but rather whatever I thought of in a few hours.

- Time horizon is not the length of time AIs can work independently

- Rather, it’s the amount of serial human labor they can replace with a 50% success rate. When AIs solve tasks they’re usually much faster than humans.

- Time horizon is not precise

- When METR says “Claude Opus 4.5 has a 50%-time horizon of around 4 hrs 49 mins (95% confidence interval of 1 hr 49 mins to 20 hrs 25 mins)”, we mean those error bars. They were generated via bootstrapping, so if we randomly subsample harder tasks our code would spit out <1h49m 2.5% of the time. I really have no idea whether Claude’s “true” time horizon is

I basically agree with everything you say here and wish we had a better way to try to ground AGI timelines forecasts. Do you recommend any other method? E.g. extrapolating revenue? Just thinking through arguments about whether the current paradigm will work, and then using intuition to make the final call? We discuss some methods that appeal to us here.

This parameter, “Doubling Difficulty Growth Factor”, can change the date of the first Automated Coder AI between 2028 and 2050.

Note that we allow it to go subexponential, so actually it can change the date arbitrarily far into the future if you really want it to. Also, dunno what's happening with Eli's parameters, but with my parameter settings, putting the doubling difficulty growth factor to 1 (i.e. pure exponential trend, neither super- nor subexponential) gets to AC in 2035. (Though I don't think we should put much weight on this number, as it depends on other parameters which are subjective & important too, such as the horizon length which corresponds to AC, which people disagree a lot about.)
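To make the sensitivity to this parameter concrete, here is a minimal sketch under a simplified reading of it: each successive doubling of the horizon takes `growth_factor` times as long as the previous one, measured directly in calendar time. That reading, the function name, and all numbers in the usage lines are assumptions for illustration; the actual model has many other parameters and is not reproduced here.

```python
import math


def years_to_reach(current_horizon, target_horizon, first_doubling_years, growth_factor):
    """Years until the horizon grows from current_horizon to target_horizon.

    Assumption (illustrative only): the k-th doubling takes
    first_doubling_years * growth_factor**k years, so
    growth_factor == 1 is a pure exponential trend,
    growth_factor < 1 is superexponential, and
    growth_factor > 1 is subexponential.
    """
    doublings = math.log2(target_horizon / current_horizon)
    whole = int(doublings)
    fraction = doublings - whole

    total_years = 0.0
    step_years = first_doubling_years
    for _ in range(whole):
        total_years += step_years
        step_years *= growth_factor
    # A partial final doubling is charged pro rata.
    total_years += fraction * step_years
    return total_years


# Hypothetical inputs: 10 doublings needed, first doubling takes 0.5 years.
for g in (0.9, 1.0, 1.1):
    print(g, round(years_to_reach(1, 1024, 0.5, g), 2))
```

Even small departures from 1 compound over many doublings, which is why the forecast date moves so much.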

Edit: Full post here with 9 domains and updated conclusions!

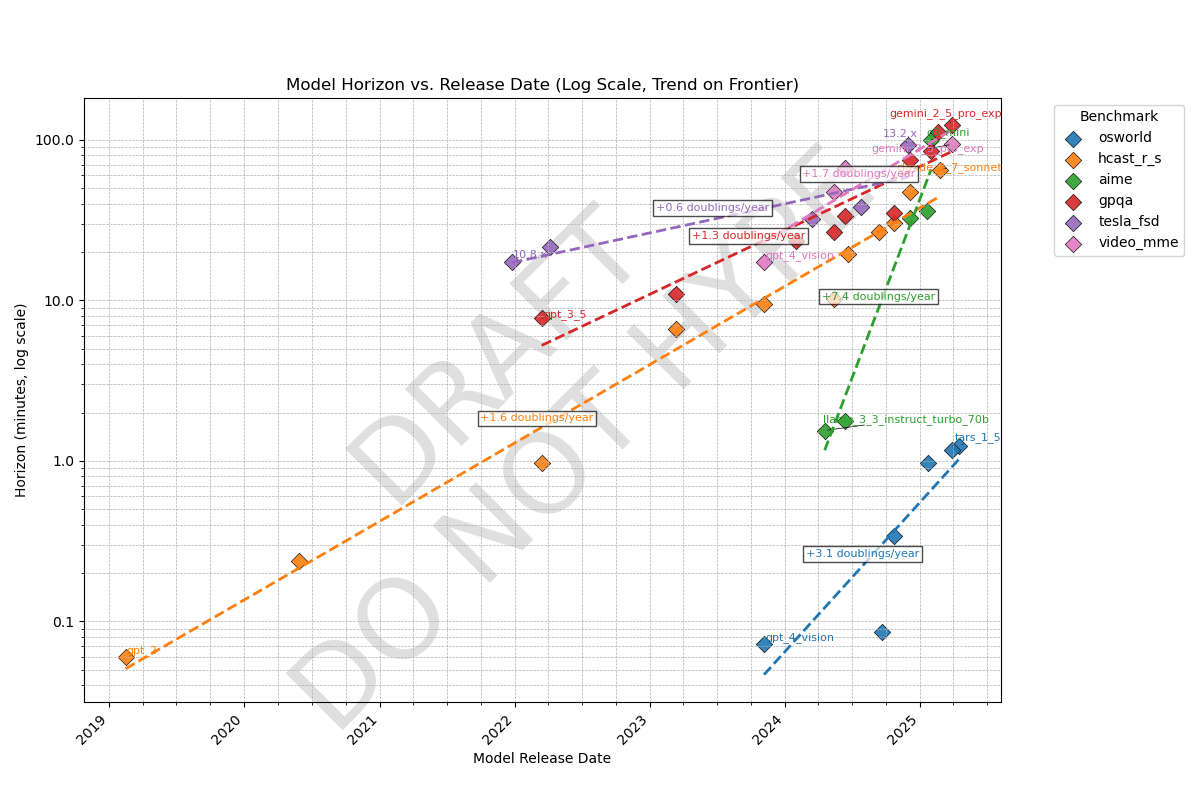

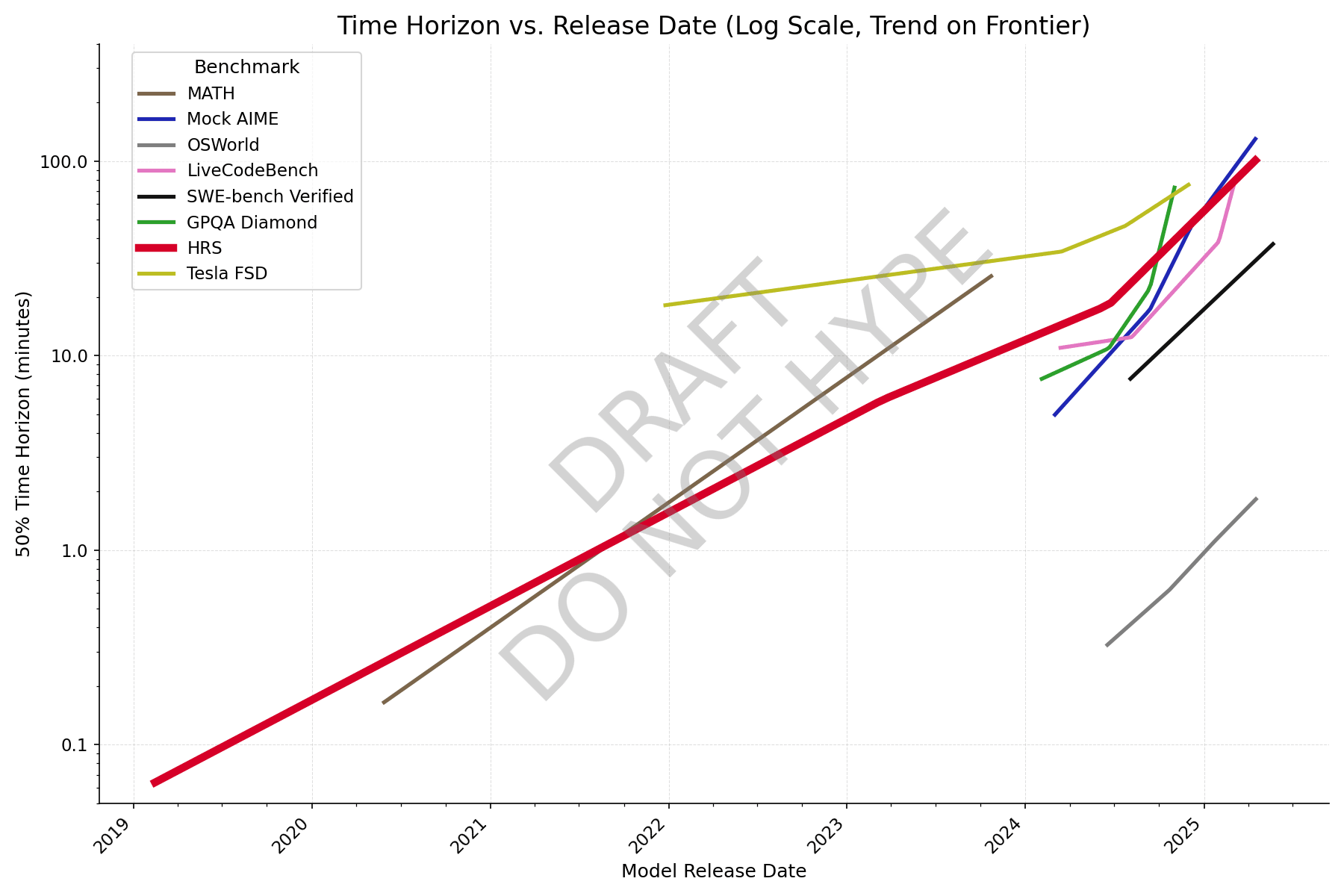

Cross-domain time horizon:

We know AI time horizons (human time-to-complete at which a model has a 50% success rate) on software tasks are currently ~1.5hr and doubling every 4-7 months, but what about other domains? Here's a preliminary result comparing METR's task suite (orange line) to benchmarks in other domains, all of which have some kind of grounding in human data:

Observations

- Time horizons on agentic computer use (OSWorld) are ~100x shorter than in other domains. Tesla self-driving (tesla_fsd), scientific knowledge (gpqa), math contests (aime), video understanding (video_mme), and software (hcast_r_s) all have roughly similar horizons.

- My guess is this means models are good at taking in information from a long context but bad at acting coherently. Most work requires agency like OSWorld, which may be why AIs can't do the average real-world 1-hour task yet.

- There are likely other domains that fall outside this cluster; these are just the five I examined

- Note the original version had a unit conversion error that gave 60x too high horizons for video_mme; this has been fixed (thanks @ryan_greenblatt )

- Rate

but bad at acting coherently. Most work requires agency like OSWorld, which may be why AIs can't do the average real-world 1-hour task yet.

I'd have guessed that poor performance on OSWorld is mostly due to poor vision and mouse manipulation skills, rather than insufficient ability to act coherently.

I'd guess that typical self-contained 1-hour tasks (as in, a human professional could do them in 1 hour with no prior context except context about the general field) also often require vision or non-text computer interaction, and if they don't, I bet the AIs actually do pretty well.

I'm skeptical and/or confused about the video MME results:

- You show Gemini 2.5 Pro's horizon length as ~5000 minutes or 80 hours. However, the longest videos in the benchmark are 1 hour long (in the long category they range from 30 min to 1 hr). Presumably you're trying to back out the 50% horizon length using some assumptions, and then because Gemini 2.5 Pro's performance is 85%, you back out an 80-160x multiplier on the horizon length! This feels wrong/dubious to me if it is what you are doing.

- Based on how long these horizon lengths are, I'm guessing you assumed that answering a question about a 1 hour long video takes a human 1 hr. This seems very wrong to me. I'd bet humans can typically answer these questions much faster by panning through the video looking for where the question might be answered and then looking at just that part. Minimally, you can sometimes answer the question by skimming the transcript and it should be possible to watch at 2x/3x speed. I'd guess the 1 hour video tasks take more like 5-10 min for a well practiced human, and I wouldn't be surprised by much shorter.

- For this benchmark, (M)LLM performance seemingly doesn't vary much with video duration, which undermines the assumption that horizon length (at least horizon length based on video length) is a good measure on this dataset!

New graph with better data, formatting still wonky though. Colleagues say it reminds them of a subway map.

With individual question data from Epoch, and making an adjustment for human success rate (adjusted task length = avg human time / human success rate), AIME looks closer to the others, and it's clear that GPQA Diamond has saturated.
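As a rough illustration of the two steps involved, the sketch below first applies the adjustment stated above (average human time divided by human success rate) and then fits a logistic curve of model success against log task length to read off the length at which predicted success is 50%. The fitting routine is a plain gradient-ascent logistic regression written for this example, not METR's or Epoch's code, and the task data are made up.

```python
import math


def adjusted_task_length(avg_human_minutes, human_success_rate):
    """Adjusted task length = avg human time / human success rate."""
    return avg_human_minutes / human_success_rate


def fifty_percent_horizon(task_minutes, successes, steps=5000, lr=0.1):
    """Fit P(success) = sigmoid(a + b * log2(minutes)) and return the
    task length (in minutes) at which the fitted probability is 0.5.

    Simplified sketch: unweighted, unregularized, no bootstrap.
    """
    xs = [math.log2(m) for m in task_minutes]
    mean_x = sum(xs) / len(xs)
    centered = [x - mean_x for x in xs]  # centering helps convergence
    a = b = 0.0
    n = len(xs)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(centered, successes):
            p = 1.0 / (1.0 + math.exp(-(a + b * x)))
            grad_a += y - p
            grad_b += (y - p) * x
        a += lr * grad_a / n
        b += lr * grad_b / n
    # The curve crosses 0.5 where a + b * x = 0.
    return 2.0 ** (mean_x - a / b)


# Hypothetical tasks: already-adjusted lengths in minutes, 1 = model succeeded.
lengths = [1, 2, 4, 8, 16, 32, 64, 128]
results = [1, 1, 1, 0, 1, 0, 0, 0]
print(adjusted_task_length(30, 0.6))
print(fifty_percent_horizon(lengths, results))
```

With this toy data the successes and failures are symmetric around the middle of the log-length range, so the fitted horizon lands near 2^3.5, about 11 minutes.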

Can you explain what a point on this graph means? Like, if I see Gemini 2.5 Pro Experimental at 110 minutes on GPQA, what does that mean? It takes an average bio+chem+physics PhD 110 minutes to get a score as high as Gemini 2.5 Pro Experimental?

I think I would have predicted that Tesla self-driving would be the slowest

For graphs like these, it obviously isn't important how the worst or mediocre competitors are doing, but the best one. It doesn't matter who's #5. Tesla self-driving is a longstanding, notorious failure. (And apparently is continuing to be a failure, as they continue to walk back the much-touted Cybertaxi launch, which keeps shrinking like a snowman in hell, now down to a few invited users in a heavily-mapped area with teleop.)

I'd be much more interested in Waymo numbers, as that is closer to SOTA, and they have been ramping up miles & cities.

Air purifiers are highly suboptimal and could be >2.5x better.

Some things I learned while researching air purifiers for my house, to reduce COVID risk during jam nights.

- An air purifier is simply a fan blowing through a filter, delivering a certain CFM (airflow in cubic feet per minute). The higher the filter resistance and lower the filter area, the more pressure your fan needs to be designed for, and the more noise it produces.

- HEPA filters are inferior to MERV 13-14 filters except for a few applications like cleanrooms. The technical advantage of HEPA filters is filtering out 99.97% of particles of any size, but this doesn't matter when MERV 13-14 filters can filter 77-88% of infectious aerosol particles at much higher airflow. The correct metric is CADR (clean air delivery rate), equal to airflow * efficiency. [1, 2]

- Commercial air purifiers use HEPA filters for marketing reasons and to sell proprietary filters. But an even larger flaw is that they have very small filter areas for no apparent reason. Therefore they are forced to use very high pressure fans, dramatically increasing noise.

- Originally people devised the Corsi-Rosenthal Box to maximize CADR. They're cheap but rather

Some versions of the METR time horizon paper from alternate universes:

Measuring AI Ability to Take Over Small Countries (idea by Caleb Parikh)

Abstract: Many are worried that AI will take over the world, but extrapolation from existing benchmarks suffers from a large distributional shift that makes it difficult to forecast the date of world takeover. We rectify this by constructing a suite of 193 realistic, diverse countries with territory sizes from 0.44 to 17 million km^2. Taking over most countries requires acting over a long time horizon, with the exception of France. Over the last 6 years, the land area that AI can successfully take over with 50% success rate has increased from 0 to 0 km^2, at the rate of 0 km^2 per year (95% CI 0.0-0.0 km^2/year); extrapolation suggests that AI world takeover is unlikely to occur in the near future. To address concerns about the narrowness of our distribution, we also study AI ability to take over small planets and asteroids, and find similar trends.

Measuring AI Ability to Worry About AI

Abstract: Since 2019, the amount of time LW has spent worrying about AI has doubled every seven months, and now constitutes the primary bottleneck to AI safety...

A few months ago, I accidentally used France as an example of a small country that it wouldn't be that catastrophic for AIs to take over, while giving a talk in France 😬

Quick takes from ICML 2024 in Vienna:

- In the main conference, there were tons of papers mentioning safety/alignment but few of them are good as alignment has become a buzzword. Many mechinterp papers at the conference from people outside the rationalist/EA sphere are no more advanced than where the EAs were in 2022. [edit: wording]

- Lots of progress on debate. On the empirical side, a debate paper got an oral. On the theory side, Jonah Brown-Cohen of Deepmind proves that debate can be efficient even when the thing being debated is stochastic, a version of this paper from last year. Apparently there has been some progress on obfuscated arguments too.

- The Next Generation of AI Safety Workshop was kind of a mishmash of various topics associated with safety. Most of them were not related to x-risk, but there was interesting work on unlearning and other topics.

- The Causal Incentives Group at Deepmind developed a quantitative measure of goal-directedness, which seems promising for evals.

- Reception to my Catastrophic Goodhart paper was decent. An information theorist said there were good theoretical reasons the two settings we studied-- KL divergence and best-of-n-- behaved similarly.

- OpenAI gav

Will nuclear ICBMs in their current form be obsolete soon? Here's the argument:

- ICBMs' military utility is to make the cost of intercepting them totally impractical for three reasons:

- Intercepting a reentry vehicle requires another missile with higher performance-- the rule of thumb is an interceptor needs 3x larger acceleration than the incoming missile. This means interceptors cost something like $5-70 million each depending on range

- An ICBM has enough range to target basically anywhere, either counterforce (enemy missile silos) or countervalue (cities) targets, so to have the entire country be protected from nuclear attack the US would need to have millions of interceptors compared to the current ~50.

- One missile can split into up to 5-15 MIRVs (multiple independently targetable reentry vehicles), each carrying its own warhead, thereby making the cost to the defender 5-15x larger. There can also be up to ~100 decoys, but these mostly fall away during reentry.

- RVs are extremely fast (~7 km/s of which ~3 km/s is downward velocity) but their path is totally predictable once they enter the boost phase, so the problem of intercepting them basically reduces to predicting exactly where they'll be, then p

Sounds interesting - the main point is that I don't think you can hit the reentry vehicle because of turbulent jitter caused by the atmosphere. Looks like normal jitter is ~10m which means a small drone can't hit it. So could the drone explode into enough fragments to guarantee a hit and with enough energy to kill it? Not so sure about that. Seems less likely.

Then what about countermeasures -

1. I expect the ICBM can amplify such lateral movement in the terminal phase with grid fins etc without needing to go full HGV - can you retrofit such things?

2. What about a chain of nukes where the first one explodes 10km up in the atmosphere purely to make a large fireball distraction. The 2nd in the chain then flies through this fireball 2km from its center say 5 seconds later. (enough to blind sensors but not destroy the nuke) The benefit of that is that when the first nuke explodes, the 2nd changes its position randomly with its grid fins SpaceX style. It is untrackable during the 1st explosion phase so throws off the potential interceptors, letting it get through. You could have 4-5 in a chain exploding ever lower to the ground.

I have wondered if railguns could also stop ICBM - even if the rails only last 5-10 shots that is enough and cheaper than a nuke. Also "Brilliant pebbles" is now possible.

https://www.lesswrong.com/posts/FNRAKirZDJRBH7BDh/russellthor-s-shortform?commentId=FSmFh28Mer3p456yy

The cost of goods has the same units as the cost of shipping: $/kg. Comparing the two lets you understand how the economy works, e.g. why construction material sourcing and drink bottling have to be local, but oil tankers exist.

- An iPhone costs $4,600/kg, about the same as SpaceX charges to launch it to orbit. [1]

- Beef, copper, and off-season strawberries are $11/kg, about the same as a 75kg person taking a three-hour, 250km Uber ride costing $3/km.

- Oranges and aluminum are $2-4/kg, about the same as flying them to Antarctica. [2]

- Rice and crude oil are ~$0.60/kg, about the same as $0.72 for shipping it 5000km across the US via truck. [3,4] Palm oil, soybean oil, and steel are around this price range, with wheat being cheaper. [3]

- Coal and iron ore are $0.10/kg, significantly more than the cost of shipping it around the entire world via smallish (Handysize) bulk carriers. Large bulk carriers are another 4x more efficient [6].

- Water is very cheap, with tap water $0.002/kg in NYC. But shipping via tanker is also very cheap, so you can ship it maybe 1000 km before equaling its cost.
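The comparisons in this list reduce to two small calculations, sketched below using only figures quoted in the bullets. The function names are made up for this example, and the tanker rate is simply what the "1000 km" figure implies, not an independently sourced number.

```python
def ride_cost_per_kg(distance_km, price_per_km, passenger_kg):
    """Cost of moving one passenger, expressed in $/kg."""
    return distance_km * price_per_km / passenger_kg


def break_even_distance_km(goods_price_per_kg, shipping_price_per_kg_km):
    """Distance at which shipping cost equals the value of the goods."""
    return goods_price_per_kg / shipping_price_per_kg_km


# 75 kg person, 250 km Uber ride at $3/km: about the price of beef or copper per kg.
print(ride_cost_per_kg(250, 3.0, 75))

# NYC tap water at $0.002/kg breaking even at ~1000 km implies a tanker rate
# of about $0.000002 per kg-km, i.e. roughly $2 per tonne per 1000 km.
implied_tanker_rate = 0.002 / 1000
print(break_even_distance_km(0.002, implied_tanker_rate))
```

Goods whose price per kg is far above the shipping cost per kg over the relevant distance can be sourced globally; goods near or below it have to be local.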

It's really impressive that for the price of a winter strawberry, we can ship a strawberry-sized lump of...

Reflections from an AI Futures TTX:

Today I played an AI Futures tabletop exercise [1]. I'd done one before with a scenario similar to Plan A, where the US executive is much more doomy and competent than we expect and leads an international agreement to pause AI for as long as possible. This time, we played "Optimal China", where instead China was doomy and competent, and considered both x-risk and US AI dominance unacceptable. All other players (frontier labs, safety community, Europe) basically role-play the way we expect them to act, and the AI player draws the AI's goals from a distribution that includes misaligned goals, aligned goals, and combinations thereof.

In Plan A the humans won easily because they successfully paused, whereas in "Optimal China", the AIs won easily.

- China has much less leverage than the US does in pushing forward a pause treaty, due to the US's compute advantage and international influence.

- In Plan A, the US is proposing the deal. The US's BATNA is basically winning the AI race and accepting some takeover risk, so China is happy to accept ~any deal that gives them a share of the lightcone. China couldn't even convince economic partners like Brazil to suppor

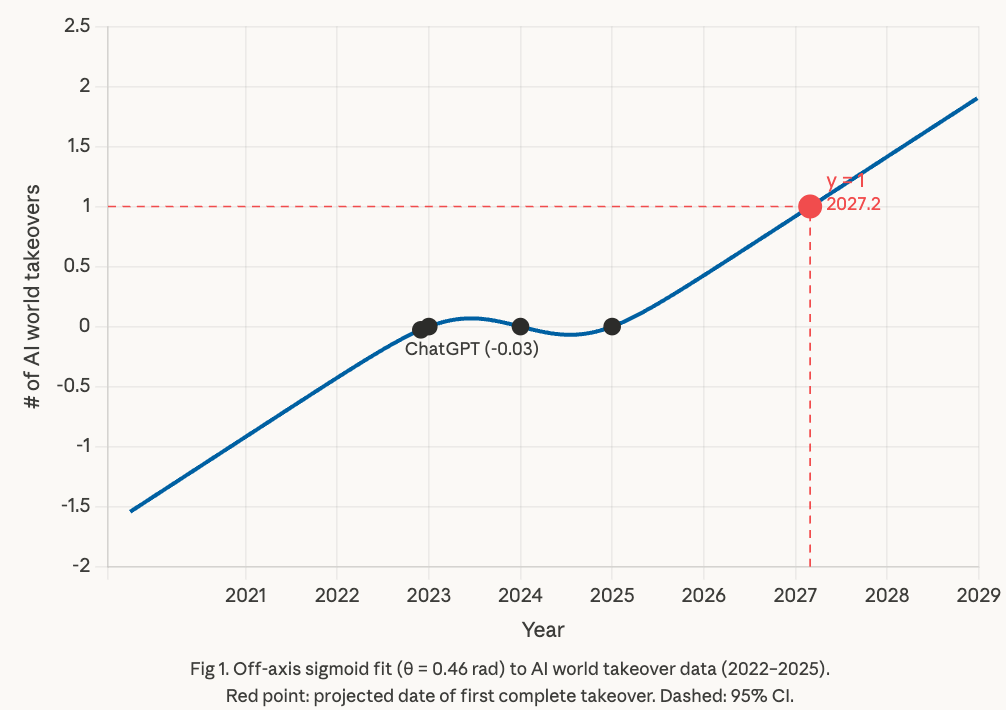

Last year, METR used linear extrapolation on country-level data to infer that AI world takeover would ~never happen. However, reviewers suggested that a sigmoid is more appropriate because most technologies follow S-curves. I just ran this analysis and it's much more concerning, predicting an AI world takeover in early 2027, and alarmingly, a second AI takeover around 2029.

Here are the main differences in the improved analysis:

- The original analysis assumed that the equation for AI takeover vs year would be axis-aligned, but this is an arbitrary basis. We now let the angle of the sigmoid fit the data as appropriate, meaning the following data model:

Interestingly, this results in a Z-curve (A < 0) rather than an S-curve. This is consistent with advanced AI being a substitute rather than a complement to labor.

- We now incorporate our priors. Even though we only have hard data since 2023, it's likely that the number of AI takeovers was lower pre-ChatGPT. So we added the inferred point (Nov 30 2022, -0.03). The rotated sigmoid still gets R^2 = 1.000 and now looks much better on BIC than exponential and step-function models, both of which have log-likelihood of minus infinity on negativ

Most people should buy long-dated call options:

If you're early career, have a stable job, and have more than ~3 months of savings but not enough to retire, then lifecycle investing already recommends investing very aggressively with leverage (e.g. 2x the S&P 500). This is not speculation, it decreases risk due to diversifying over time. The idea is as a 30yo most of your wealth is still in your future wages, which are only weakly correlated with the stock market, so 2x leverage on your relatively small savings now might still mean under 1x leverage on your effective lifetime portfolio.

In 2026, most of your long-term financial risk comes from your job being automated, which will plausibly happen in the next 5 years. If this happens, your salary will go to zero while the S&P 500 will probably at least double (assuming no AI takeover) [1]. If automation takes 20 years, the present value of your future income is ~10 years of salary. This makes exposure to the market (beta) extremely important. If you have 2 years of salary saved, the required leverage just to break even whether automation takes 5 years or 20 is something like 4x.
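The lifecycle-investing claim that 2x leverage on savings can still be under 1x on your lifetime portfolio is a one-line calculation. The sketch below treats the present value of future wages as a zero-beta asset, which is a simplification of "weakly correlated", and borrows the 2-years-saved and ~10-years-of-salary figures from the text; the function name is made up for this example. It does not attempt to reproduce the ~4x break-even figure, which depends on scenario assumptions not spelled out here.

```python
def effective_lifetime_leverage(savings, pv_future_wages, leverage_on_savings):
    """Market exposure divided by total lifetime wealth.

    Assumes future wages carry no market exposure (a simplification).
    Units are arbitrary as long as they match, e.g. years of salary.
    """
    market_exposure = leverage_on_savings * savings
    lifetime_wealth = savings + pv_future_wages
    return market_exposure / lifetime_wealth


# 2 years of salary saved, ~10 years of salary in future income, 2x leverage.
print(effective_lifetime_leverage(2, 10, 2))
```

Under these inputs the effective exposure is about a third of lifetime wealth, well under 1x, and it falls further the larger future wages are relative to savings.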

However, we can do better; betting that a price m...

If [your job is automated] . . . the S&P 500 will probably at least double (assuming no AI takeover)

Is this true?

Prior discussion: Tail SP 500 Call Options.

I currently have 7% of my portfolio in such calls.

US Government dysfunction and runaway political polarization bingo card. I don't expect any particular one of these to happen but it seems plausible that at least one of these will happen.

- A sanctuary city conducts armed patrols to oppose ICE raids, or the National Guard refuses a direct order from the president en masse

- Internal migration is de facto restricted for US citizens or green card holders

- For debt ceiling reasons, the US significantly defaults on its debt, stops Social Security payments, grounds flights, or issues a trillion-dollar coin

- US declares a neutral humanitarian NGO like the WHO a foreign terrorist organization

- A major news network (eg CNN) or social media site other than Tiktok (eg Facebook) loses licenses for ideological reasons

- A Democratic or Republican candidate for president, governor, or Congress is kept off a state ballot

- A major elected official or Cabinet member takes office while incarcerated

- Election issues on the scale of 1876, where Congress can't decide on the president until past January 20

- A state or local government establishes a 100% tax bracket or wealth tax

- The Fed chair is fired, or three board members

- A NCAA D1 college or pro sports league establishe

I conducted an exercise at METR to simulate what our work would be like in 2027, when we have 200 hour time horizon AIs. Some observations:

- The pace of research was much faster than today, something like 3x. I would guess that speedup goes as time horizon to the 0.3 or 0.4 power, though we didn't run the game with enough fidelity to tell

- No time to develop ideas before implementing: Agents implement ideas as soon as you think of them, so rather than ideating for days at a time, you can make an MVP in a couple of hours and revise. If the task isn’t near the limit of agent capabilities, you spend all your time understanding results; if it is, you spend all your time checking its work.

- Keeping agents fed overnight: Overnight, agents can do maybe 200 human hours of work, but only for very agent-shaped tasks, so researchers need to deliberately sequence projects such that very long tasks suitable for agents happen overnight, e.g. optimizing a well-defined metric.

- Prioritization and organization are bottlenecks: If agents can execute all your ideas nearly as fast as you can prompt them, there’s no point in implementing only your best idea. It might be better to implement your top three ideas

One thing that stood out to me from the post was this line: "With less verifiable tasks, the gamemaster decides how successful the AI is." This seems like a major assumption which could change the takeaways.

I've been experimenting with using Opus to implement experiments with only high-level feedback. I often find once I dig into the low-level details that Opus has made some very poor decisions which would not be observable from only looking at the final outputs, and that my uplift has been significantly reduced by the time I am satisfied with the quality of the experiment.

I'm not sure if these problems will be solved by just instructing the AI to provide a proof of correctness or a summary report, although this probably depends on the specific domain. For less verifiable tasks, verification could become a huge bottleneck that scales with task complexity and significantly reduces uplift.

People with p(doom) > 50%: would any concrete empirical achievements on current or near-future models bring your p(doom) under 25%?

Answers could be anything from "the steering vector for corrigibility generalizes surprisingly far" to "we completely reverse-engineer GPT4 and build a trillion-parameter GOFAI without any deep learning".

A dramatic advance in the theory of predicting the regret of RL agents. So given a bunch of assumptions about the properties of an environment, we could upper bound the regret with high probability. Maybe have a way to improve the bound as the agent learns about the environment. The theory would need to be flexible enough that it seems like it should keep giving reasonable bounds if the agent is doing things like building a successor. I think most agent foundations research can be framed as trying to solve a sub-problem of this problem, or a variant of this problem, or understand the various edge cases.

If we can empirically test this theory in lots of different toy environments with current RL agents, and the bounds are usually pretty tight, then that'd be a big update for me. Especially if we can deliberately create edge cases that violate some assumptions and can predict when things will break from which assumptions we violated.

(although this might not bring doom below 25% for me, depends also on race dynamics and the sanity of the various decision-makers).

[edit: pinned to profile]

The bulk of my p(doom), certainly >50%, comes mostly from a pattern we're used to, let's call it institutional incentives, being instantiated with AI help towards an end where eg there's effectively a competing-with-humanity nonhuman ~institution, maybe guided by a few remaining humans. It doesn't depend strictly on anything about AI, and solving any so-called alignment problem for AIs without also solving war/altruism/disease completely - or in other words, in a leak-free way - not just partially, means we get what I'd call "doom", ie worlds where malthusian-hells-or-worse are locked in.

If not for AI, I don't think we'd have any shot of solving something so ambitious; but the hard problem that gets me below 50% would be serious progress on something-around-as-good-as-CEV-is-supposed-to-be - something able to make sure it actually gets used to effectively-irreversibly reinforce that all beings ~have a non-torturous time, enough fuel, enough matter, enough room, enough agency, enough freedom, enough actualization.

If you solve something about AI-alignment-to-current-strong-agents, right now, that will on net get used primarily as a weapon to reinforce the ...

Getting up to "7. Worst-case training process transparency for deceptive models" on my transparency and interpretability tech tree on near-future models would get me there.

The biggest swings to my p(doom) will probably come from governance/political/social stuff rather than from technical stuff -- I think we could drive p(doom) down to <10% if only we had decent regulation and international coordination in place. (E.g. CERN for AGI + ban on rogue AGI projects)

That said, there are probably a bunch of concrete empirical achievements that would bring my p(doom) down to less than 25%. evhub already mentioned some mechinterp stuff. I'd throw in some faithful CoT stuff (e.g. if someone magically completed the agenda I'd been sketching last year at OpenAI, so that we could say "for AIs trained in such-and-such a way, we can trust their CoT to be faithful w.r.t. scheming because they literally don't have the capability to scheme without getting caught, we tested it; also, these AIs are on a path to AGI; all we have to do is keep scaling them and they'll get to AGI-except-with-the-faithful-CoT-property.)

Maybe another possibility would be something along the lines of W2SG working really well for some set of core concepts including honesty/truth. So that we can with confidence say "Apply these techniques to a giant pretrained LLM, and then you'll get it to classify sentences by truth-value, no seriously we are confident that's really what it's doing, and also, our interpretability analysis shows that if you then use it as a RM to train an agent, the agent will learn to never say anything it thinks is false--no seriously it really has internalized that rule in a way that will generalize."

Oh yeah, absolutely.

If NAH for generally aligned ethics and morals ends up being the case, then corrigibility efforts that would allow Saudi Arabia to have an AI model that outs gay people to be executed instead of refusing, or allows North Korea to propagandize the world into thinking its leader is divine, or allows Russia to fire nukes while perfectly intercepting MAD retaliation, or enables drug cartels to assassinate political opposition around the world, or allows domestic terrorists to build a bioweapon that ends up killing off all humans - the list of doomsday and nightmare scenarios of corrigible AI that executes on human provided instructions and enables even the worst instances of human hegemony to flourish paves the way to many dooms.

Yes, AI may certainly end up being its own threat vector. But humanity has had it beat for a long while now in how long and how broadly we've been a threat unto ourselves. At the current rate, a superintelligent AI just needs to wait us out if it wants to be rid of us, as we're pretty steadfastly marching ourselves to our own doom. Even if superintelligent AI wanted to save us, I am extremely doubtful it would be able to be successful.

We ca...

We could already be in takeoff:

In Tom Davidson's semi-endogenous growth model, whether we get a software-only singularity boils down to whether r > 1, where r is a parameter in the model [1]. How far we are from takeoff is mostly determined by the AI R&D speedup current AIs provide. Because both parameters are rather difficult to estimate, I believe we can't rule out that

- 2x uplift is already happening at the most advanced AI lab

- Anecdotes of people being sped up by over 2x make this seem plausible (e.g. one of my colleagues has estimated he's sped up by over 30x on some days. We did this exploratory uplift estimate by using GPT-5 to estimate the time an unassisted human would need to do the tasks he completed each day). Even if other activities like large-scale experiments aren't sped up much by AI, you don't need that much substitutability for a 30x SWE speed increase to reduce the need for these enough to speed up overall AI R&D progress by 2x.

- r = 1.6 (meaning each doubling of AI capabilities, as measured by equivalent software engineering labor, is 1.6 times faster than the previous doubling)
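Taking the gloss above literally (each doubling is r times faster than the one before), the doubling times form a geometric series, so infinitely many doublings fit into a finite time. The sketch below is an idealization under that reading only; it ignores any capability ceiling and any change in r over time, and the function names are made up for this example.

```python
import math


def doubling_times(first_doubling_years, r, n):
    """Durations of the first n doublings when each is r times faster than the last."""
    return [first_doubling_years / r**k for k in range(n)]


def time_to_unbounded_doublings(first_doubling_years, r):
    """Sum of the geometric series of doubling times.

    Finite only when r > 1; otherwise the doublings never accelerate enough.
    """
    if r <= 1:
        return math.inf
    return first_doubling_years / (1 - 1 / r)


# With r = 1.6, all doublings together take 1 / (1 - 1/1.6) = 8/3 times
# as long as the first doubling.
print(doubling_times(1.0, 1.6, 4))
print(time_to_unbounded_doublings(1.0, 1.6))
```

This is why the r > 1 threshold matters: at r = 1 the same sum diverges and growth is merely exponential.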

Epoch's estimates of r are highly uncertain, and 1.6 is well within

Agency/consequentialism is not a single property.

It bothers me that people still ask the simplistic question "will AGI be agentic and consequentialist by default, or will it be a collection of shallow heuristics?". A consequentialist utility maximizer is just a mind with a bunch of properties that tend to make it capable, incorrigible, and dangerous. These properties can exist independently, and the first AGI probably won't have all of them, so we should be precise about what we mean by "agency". Off the top of my head, here are just some of the qualities included in agency:

- Consequentialist goals that seem to be about the real world rather than a model/domain

- Complete preferences between any pair of worldstates

- Tends to cause impacts disproportionate to the size of the goal (no low impact preference)

- Resists shutdown

- Inclined to gain power (especially for instrumental reasons)

- Goals are unpredictable or unstable (like instrumental goals that come from humans' biological drives)

- Goals usually change due to internal feedback, and it's difficult for humans to change them

- Willing to take all actions it can conceive of to achieve a goal, including those that are unlikely on some prior

See Yudko...

I'm a little skeptical of your contention that all these properties are more-or-less independent. Rather, there is a strong feeling that all/most of these properties are downstream of a core of agentic behaviour that is inherent to the notion of true general intelligence. I view the fact that LLMs are not agentic as further evidence that it's a conceptual error to classify them as true general intelligences, not as evidence that AI risk is low. It's a bit like if in the 1800s somebody said flying machines would be dominant weapons of war in the future and got rebutted by 'hot gas balloons are only used for reconnaissance in war, they aren't very lethal. Flying machines won't be a decisive military technology.'

I don't know Nate's views exactly but I would imagine he would hold a similar view (do correct me if I'm wrong). In any case, I imagine you are quite familiar with my position here.

I'd be curious to hear more about where you're coming from.

Eight beliefs I have about technical alignment research

Written up quickly; I might publish this as a frontpage post with a bit more effort.

- Conceptual work on concepts like “agency”, “optimization”, “terminal values”, “abstractions”, “boundaries” is mostly intractable at the moment.

- Success via “value alignment” alone— a system that understands human values, incorporates these into some terminal goal, and mostly maximizes for this goal, seems hard unless we’re in a very easy world because this involves several fucked concepts.

- Whole brain emulation probably won’t happen in time because the brain is complicated and biology moves slower than CS, being bottlenecked by lab work.

- Most progress will be made using simple techniques and create artifacts publishable in top journals (or would be if reviewers understood alignment as well as e.g. Richard Ngo).

- The core story for success (>50%) goes something like:

- Corrigibility can in practice be achieved by instilling various cognitive properties into an AI system, which are difficult but not impossible to maintain as your system gets pivotally capable.

- These cognitive properties will be a mix of things from normal ML fields (safe RL), things tha

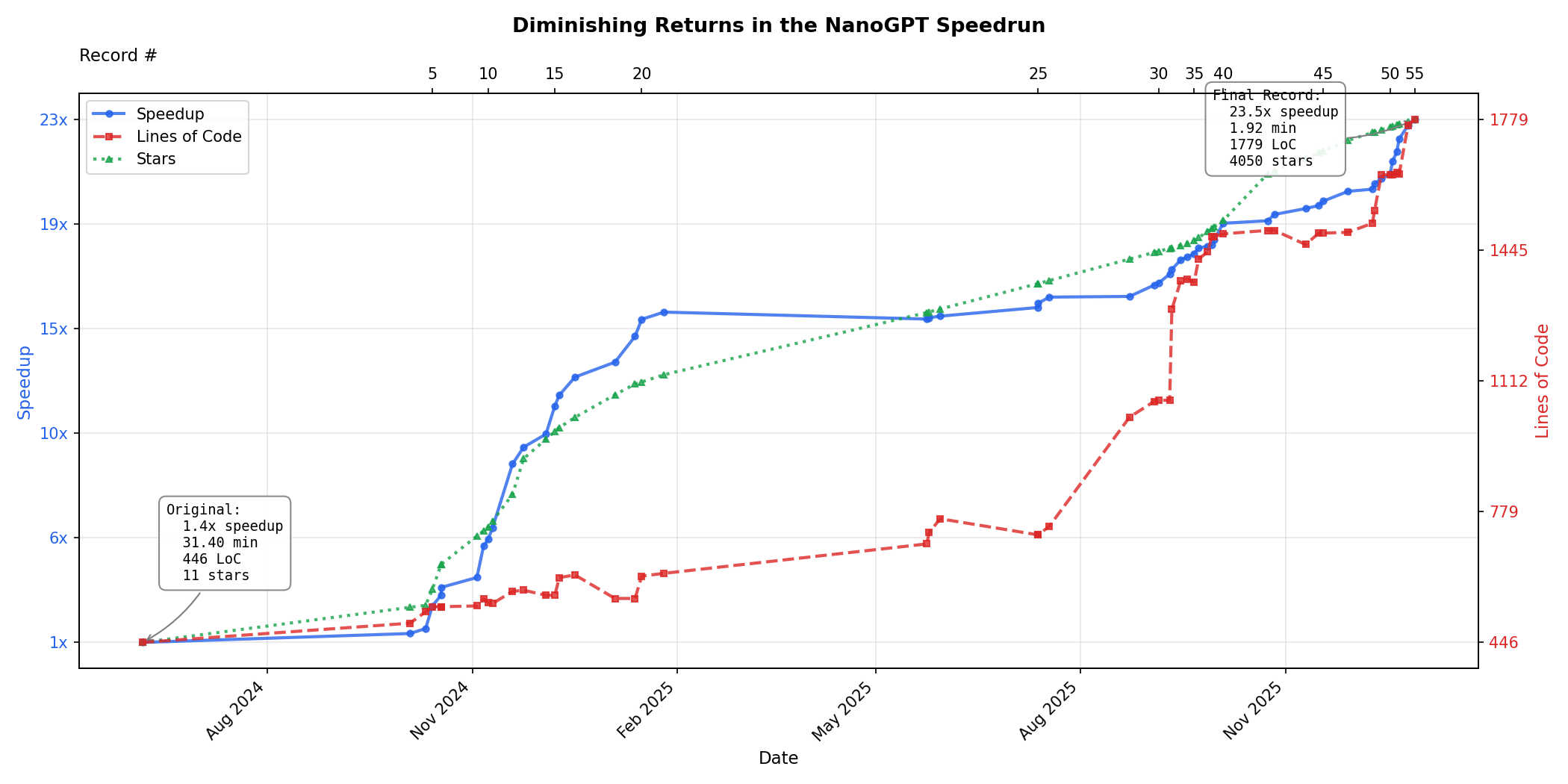

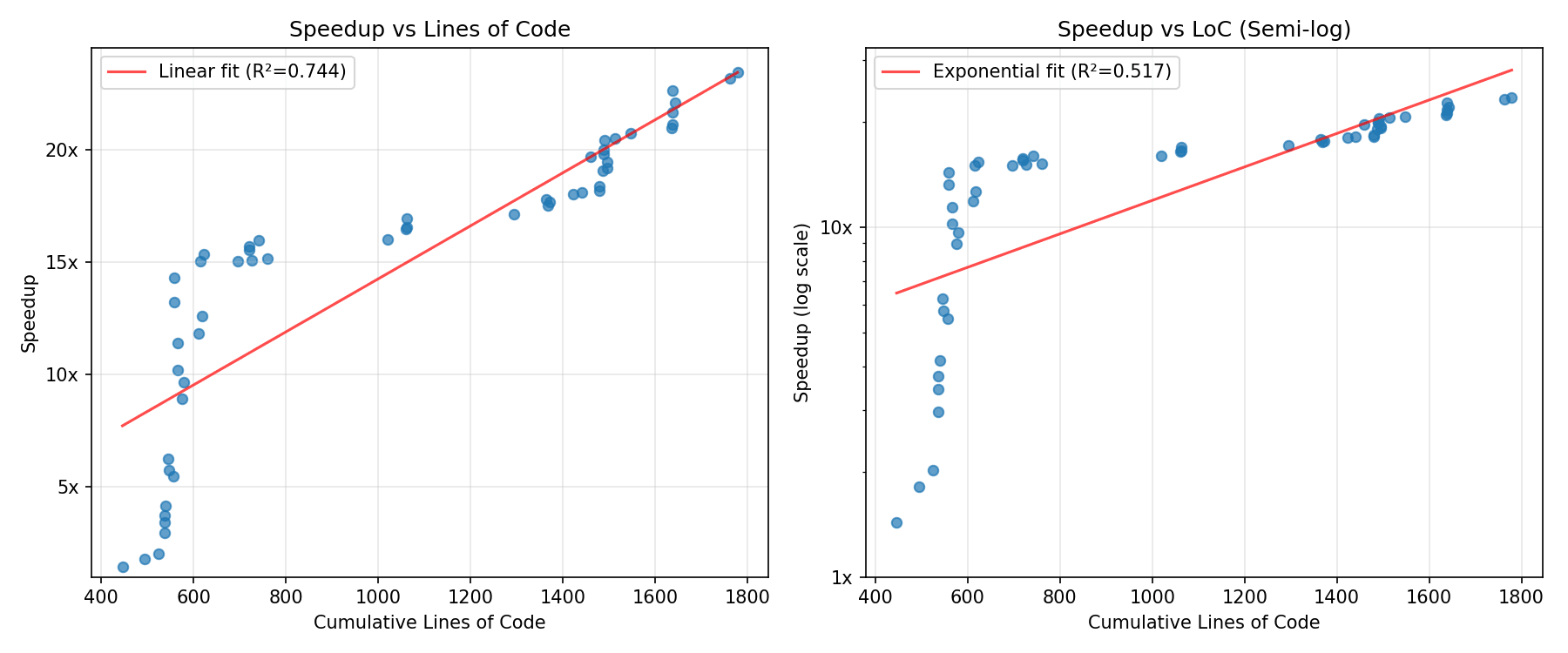

Diminishing returns in the NanoGPT speedrun:

To determine whether we're heading for a software intelligence explosion, one key variable is how much harder algorithmic improvement gets over time. Luckily someone made the NanoGPT speedrun, a repo where people try to minimize the amount of time on 8x H100s required to train GPT-2 124M down to 3.28 loss. The record has improved from 45 minutes in mid-2024 down to 1.92 minutes today, a 23.5x speedup. This does not give the whole picture-- the bulk of my uncertainty is in other variables-- but given this is existing data it's worth looking at.

I only spent a couple of hours looking at the data [3], but there seem to be sharply diminishing marginal returns, which is some evidence against a software-only singularity.

At first improvements were easy to make without increasing lines of code much, but then improvements became small and LoC required became larger and larger with increasingly small improvements, which means very strong diminishing returns-- speedup is actually sublinear in lines of code. This could be an artifact related to the very large elbow early on, but I mostly believe it.

If we instead look at number of stars as a prox...

I am quite glad about the empirical analysis, but I really don't get how any of this is evidence for or against a software-only explosion.

Like, I am so confused about your definition of software-only intelligence explosion:

- A 23.5x improvement alone seems like it would qualify as a major explosion if it happened in a short enough period of time

- We already know that there is of course a fundamental limit to how fast you can make an algorithm, so the question is always "how close to optimal are current algorithms". It should be our very strong prior that any small subset of frontier model training will hit diminishing returns much quicker than the complete whole. In Davidson's model (which I don't generally think is a good model of the space, but we can use for the sake of discussion), this is modeled by the ceiling parameter. If you want to extrapolate from toy tasks like this, you at the very least need to make an estimate of where the ceiling for something like NanoGPT is.

- You say "However, this still points against a software intelligence explosion, which would require superlinear speedups for linear increases in cumulative effort.". I just have no idea what you mean

There is a notable slowdown in that progress, however we should note the following (so that we don't overinterpret it):

- A lot of gains in this particular competition come from adaptations of the pre-existing research literature (it's not clear how much not-yet-adopted acceleration is in the pre-existing literature, and it might be quite a lot, but (by definition) the pre-existing literature is a fixed-size resource, with its use being subject to saturation, and the "true software intelligence explosion mode" would presumably include creation of novel research, and not just re-use of pre-existing research).

- Organizationally, the big slowdown around 3 min coincides with the project organizer being hired by OpenAI, and then no longer contributing (and, for some time, not even reviewing record-breaking pull requests). So for a while the project looked dormant. Now it is active again, but it's difficult to say if the level of participation is back to the pre-slowdown level.

- One thing which should not be considered "pre-existing" literature is the Muon optimizer (which is the child of the project organizer in collaboration with his colleagues and which is probably the most exciting

I think this is an interesting analysis. But it seems like you're updating more strongly on it than I am. Here are some thoughts.

The Forethought SIE model doesn't seem to apply well to this data:

In the Forethought model (without the ceiling add-on), the growth rate of "cognitive labor" is assumed to equal the growth rate of "software efficiency", which is in turn assumed to be proportional to the growth rate of cumulative "cognitive labor" (no matter the fixed level of compute). Whether this fooms or fizzles is determined only by whether this constant of proportionality is above or below 1.

In this framework, and with fixed experiment compute, the game is to find a trend for "software efficiency", then find a trend for cumulative "cognitive labor", then see which one is growing faster. So it matters quite a lot how you measure these two trends. (do you use the raw multiplier for software efficiency? or the number of OOMs? What about "cognitive labor"?)

It's worth stating that regardless of the value of this constant of proportionality, this model predicts that (in the right units) steady growth in cognitive labor (or cumulative cognitive labor) yields steady growth in software eff...
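The foom-or-fizzle claim above can be illustrated with a minimal sketch. It assumes one particular reading of the model that is not spelled out in the original: software efficiency S is proportional to C**r, where C is cumulative cognitive labor and r is the constant of proportionality, and the flow of cognitive labor is proportional to S. The function name, the starting value of 1, and the Euler step size are all illustrative choices.

```python
def software_growth_rates(r, steps=1000, dt=0.001):
    """Euler-integrate dC/dt = C**r and record the growth rate of software efficiency.

    Assumptions (illustrative, not from the original text):
      - software efficiency S is proportional to C**r, with C = cumulative cognitive labor
      - the flow of cognitive labor is proportional to S
    """
    cumulative_labor = 1.0
    rates = []
    for _ in range(steps):
        labor_flow = cumulative_labor ** r               # labor tracks software efficiency
        rates.append(r * labor_flow / cumulative_labor)  # g_S = r * g_C
        cumulative_labor += labor_flow * dt
    return rates


for r in (0.5, 1.0, 1.5):
    rates = software_growth_rates(r)
    print(f"r={r}: growth rate goes from {rates[0]:.3f} to {rates[-1]:.3f}")
```

Under these assumptions the growth rate of software efficiency rises over time when r is above 1, falls when r is below 1, and stays flat at exactly 1, which is the sense in which the constant alone decides the outcome.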

I think the framing "alignment research is preparadigmatic" might be heavily misunderstood. The term "preparadigmatic" of course comes from Thomas Kuhn's The Structure of Scientific Revolutions. My reading of this says that a paradigm is basically an approach to solving problems which has been proven to work, and that the correct goal of preparadigmatic research should be to do research generally recognized as impressive.

For example, Kuhn says in chapter 2 that "Paradigms gain their status because they are more successful than their competitors in solving a few problems that the group of practitioners has come to recognize as acute." That is, lots of researchers have different ontologies/approaches, and paradigms are the approaches that solve problems that everyone, including people with different approaches, agrees to be important. This suggests that to the extent alignment is still preparadigmatic, we should try to solve problems recognized as important by, say, people in each of the five clusters of alignment researchers (e.g. Nate Soares, Dan Hendrycks, Paul Christiano, Jan Leike, David Bau).

I think this gets twisted in some popular writings on LessWrong. John Wentworth w...

Consider this toy game-theoretic model of AI safety: The US and China each have an equal pool of resources they can spend on capabilities and safety. Each country produces either AIs aligned to it or misaligned AIs. If one country's AI is misaligned, both payoffs are zero. If both AIs are aligned, a payoff of 100 is split proportional to the resources invested in capability.

The ratio of safety to capabilities investment determines the probability of misalignment; specifically, probability of misalignment is c / (ks + c) for some "safety effectiveness parameter" k. (At k=1 we need a 1:1 safety:capabilities ratio to have a 50% chance of alignment per AI, whereas at k=100 we only need a 1:100 ratio.) There is no coordination, so we want the Nash equilibrium. What's the equilibrium strategy in terms of k, and what is the outcome? It turns out that:

- By symmetry, both countries will spend an equal fraction s* on safety, making the probability of alignment P for each AI, so the overall probability of humanity surviving is P^2. The expected payout to each country is thus 50 · P^2.

- When safety is ineffective (low k): Countries invest heavily in safety (s* ≈ 92% at k=0.1), but survival probability is sti
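The symmetric equilibrium can be worked out in a few lines. This is a sketch under assumptions not stated in the original: each country has a budget of 1, spends a fraction s on safety and c = 1 - s on capabilities, and maximizes its expected payoff taking the other country's choice as given. Setting the derivative of the log payoff to zero at a symmetric point gives (k - 1)s^2 + 3s - 2 = 0, whose root in (0, 1) is s* = 4 / (3 + sqrt(8k + 1)). All function names are hypothetical.

```python
import math


def equilibrium_safety_share(k):
    """Symmetric equilibrium safety fraction s*, assuming a unit budget per country."""
    return 4.0 / (3.0 + math.sqrt(8.0 * k + 1.0))


def alignment_probability(k, s):
    """P(aligned) = 1 - c / (k*s + c), with c = 1 - s."""
    c = 1.0 - s
    return k * s / (k * s + c)


def payoff(k, s_own, s_other):
    """Expected payoff: 100 split by capability share, paid only if both AIs are aligned."""
    c_own, c_other = 1.0 - s_own, 1.0 - s_other
    share = c_own / (c_own + c_other)
    return 100.0 * share * alignment_probability(k, s_own) * alignment_probability(k, s_other)


def best_response(k, s_other, grid=10001):
    """Brute-force check: the safety fraction that maximizes payoff against s_other."""
    candidates = [i / (grid - 1) for i in range(1, grid - 1)]
    return max(candidates, key=lambda s: payoff(k, s, s_other))


for k in (0.1, 1.0, 100.0):
    s_star = equilibrium_safety_share(k)
    p = alignment_probability(k, s_star)
    print(f"k={k}: s*={s_star:.3f}, P={p:.3f}, survival={p * p:.3f}")
```

The closed form reproduces the s* ≈ 92% figure at k=0.1, and `best_response` gives a numerical sanity check that neither country gains by deviating from s*.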

In a world with an AI pause, good management is much more important than the pause length. If the pause is government-mandated and happens late, I would prefer a 1-year pause with say Buck Shlegeris in charge over a 3-year pause with the average AI lab lead in charge.

The reason is that most of the safety research during the pause will be done by AIs. In a pause scenario where >50% of resources are temporarily dedicated to safety and >10x uplift is technically plausible, the speed of research is mainly limited by the level of AI we trust and our ability to understand the AI's findings. The world probably can't pause indefinitely, and it may not be desirable to do so. So what is the basic strategy for a finite AI pause?

- Use superhuman AIs that are as smart as possible to do safety research, but not so capable that they're misaligned. This may require avoiding use of latest gen models, or advancing capabilities and using the next gen only for safety.

- Use an elaborate monitoring framework and incrementally apply the safety research generated to increase the maximum capability level we can run at without being sabotaged when models are misaligned.

- Prevent capability advances from bei

If you disagree with much of IABIED but are still worried about AI risk, maybe the question to ask is "will the radical flank effect be positive or negative on mainstream AI safety movements?", which seems more useful than "do I on net agree or disagree?" or "will people taking this book at face value do useful or anti-useful things?" Here's what Wikipedia has to say on the sign of a radical flank effect:

...It's difficult to tell without hindsight whether the radical flank of a movement will have positive or negative effects.[2] However, following are some factors that have been proposed as making positive effects more likely:

- Greater differentiation between moderates and radicals in the presence of a weak government.[2][13][14]: 411 As Charles Dobson puts it: "To secure their place, the new moderates have to denounce the actions of their extremist counterparts as irresponsible, immoral, and counterproductive. The most astute will quietly encourage 'responsible extremism' at the same time."[15]

- Existing momentum behind the cause. If change seems likely to happen anyway, then governments are more willing to accept moderate reforms in order to quell radicals.[2]

- Radicalism during the peak

Possible post on suspicious multidimensional pessimism:

I think MIRI people (specifically Soares and Yudkowsky but probably others too) are more pessimistic than the alignment community average on several different dimensions, both technical and non-technical: morality, civilizational response, takeoff speeds, probability of easy alignment schemes working, and our ability to usefully expand the field of alignment. Some of this is implied by technical models, and MIRI is not more pessimistic in every possible dimension, but it's still awfully suspicious.

I strongly suspect that one of the following is true:

- the MIRI "optimism dial" is set too low

- everyone else's "optimism dial" is set too high. (Yudkowsky has said this multiple times in different contexts)

- There are common generators that I don't know about that are not just an "optimism dial", beyond MIRI's models

I'm only going to actually write this up if there is demand; the full post will have citations which are kind of annoying to find.

After working at MIRI (loosely advised by Nate Soares) for a while, I now have more nuanced views and also takes on Nate's research taste. It seems kind of annoying to write up so I probably won't do it unless prompted.

Edit: this is now up

An indiscriminate space weapon against low earth orbit satellites is feasible for any spacefaring nation. Recent rumors claim that Russia is already developing one, so I'm writing a post about it. I will explain why I think

- This weapon could require just one launch to destroy every satellite in low Earth orbit, including all Starlinks, and deny it to everyone for months to years

- It would consist of about 10^9 small ball bearings in retrograde orbit. An alternate design involves a nuclear device.

- Further launches could deny GEO and other orbits

- Russia, China, or the US could have one in orbit right now, and Russia may be incentivized to use one

- Defense of current space assets is nearly impossible, whether by shooting it down or evading the debris [edit: I no longer believe this. Shielding of military satellites and moving Starlink to VLEO are both potentially viable]

- Once the weapon goes off, the strategic landscape in space is unclear to me

What objections or details should I include? Also is it a dangerous infohazard?

I've been thinking about what retirement planning means given AGI. I previously mentioned investment ideas that, in a very capitalistic future, could allow the average person to buy galaxies. But it's also possible that property rights won't even continue into the future due to changes brought about by AGI. What will these other futures look like (supposing we don't all die) and what's the equivalent of "responsible retirement planning"?

Is it building social and political capital? Making my impact legible to future people and AIs? Something else? Is any action we take today futile?

I think if we do a good job, there will be a lot of "let's try to reward the people who helped get us through this in a positive way". In as much as I have resources I certainly expect to spend a bunch of them on ancestor simulations and incentives for past humans to do good things. My guess is lots of other people will have similar instincts.

I wouldn't worry that much about legibility, I expect a future superintelligent humanity to be very good at figuring out what people did, and whether it helped.

I am totally not confident of this, but it's one of my best guesses on how things will go.

Most people don't subscribe to a decision theory where rewarding people after the fact for one-time actions provides an incentive, and for the incentive to actually work, both the rewarders and rewardees need to believe in it the same way they believe in property rights. Maybe they will in the fullness of time, but it seems far from guaranteed.

This seems clearly false? Prizes are maybe the default mechanism for status allocations? I think it's maybe just economists with weird CDT-brained-takes that don't believe in retroactive funding stuff as an incentive. Any workplace will tell you that of course they want to reward good work even after the fact, even if it's a one-time thing, and people saying "ah, but it's a one time thing, why would I reward you this time" would I think pretty obviously be considered mildly sociopathic.

Agree financial compensation is rarer, and the logic here gets a bit trickier. I think people's intuitions around status allocation are much more likely to generalize here than precedent of financial allocation.

But also, beyond all of that, the arguments around decision-theory are I think just true in the kind of boring way that physical facts about the world are true, and saying that people will have the wrong decision-theory in the future sounds to me about as mistaken as saying that lots of people will disbelieve the theory of evolution in the future. It's clearly the kind of thing you update on as you get smarter.

(1) seems like evidence in favor of what I am saying. In as much as we are not confident in our current DT candidates, it seems like we should expect future much smarter people to be more correct. Us getting DT wrong is evidence that getting it right is less dependent on incidental details of the people thinking about it.

(2) I mean, there are also literally millions of people in China with higher IQ than you that believe in spirits, and millions in the rest of the world that believe in the Christian god and disbelieve evolution. The correlation between correct DT and intelligence seems about as strong as it does for the theory of evolution (meaning reasonably strong in the human range, but the human range is narrow enough to not overdetermine the correct answer, especially when you don't have any reason to think hard about it)

(3) I am quite confident that pure CDT which rules out retrocausal incentives is false. I agree that I do not know what the right way to run the math is to understand when retrocausal incentives work, and how important they are. I really don't have much uncertainty on this, so I don't really get your point here. I don't need to formalize these theories to make a confident prediction that any "decision theory where rewarding people after the fact for one-time actions" cannot provide an incentive is false.

In terms of developing better misalignment risk countermeasures, I think the most important questions are probably:

- How to evaluate whether models should be trusted or untrusted: currently I don't have a good answer and this is bottlenecking the efforts to write concrete control proposals.

- How AI control should interact with AI security tools inside labs.

More generally:

- How can we get more evidence on whether scheming is plausible?

- How scary is underelicitation? How much should the results about password-locked models or arguments about being able to generate small numbers of high-quality labels or demonstrations affect this?

You should update by +-1% on AI doom surprisingly frequently

This is just a fact about how stochastic processes work. If your p(doom) is Brownian motion in 1% steps starting at 50% and stopping once it reaches 0 or 1, then there will be about 50^2=2500 steps of size 1%. This is a lot! If we get all the evidence for whether humanity survives or not uniformly over the next 10 years, then you should make a 1% update 4-5 times per week. In practice there won't be as many due to heavy-tailedness in the distribution concentrating the updates in fewer events, and the fact you don't start at 50%. But I do believe that evidence is coming in every week such that ideal market prices should move by 1% on maybe half of weeks, and it is not crazy for your probabilities to shift by 1% during many weeks if you think about it often enough. [Edit: I'm not claiming that you should try to make more 1% updates, just that if you're calibrated and think about AI enough, your forecast graph will tend to have lots of >=1% week-to-week changes.]

The general version of this statement is something like: if your beliefs satisfy the law of total expectation, the variance of the whole process should equal the variance of all the increments involved in the process.[1] In the case of the random walk where at each step, your beliefs go up or down by 1% starting from 50% until you hit 100% or 0% -- the variance of each increment is 0.01^2 = 0.0001, and the variance of the entire process is 0.5^2 = 0.25, hence you need 0.25/0.0001 = 2500 steps in expectation. If your beliefs have probability p of going up or down by 1% at each step, and 1-p of staying the same, the variance is reduced by a factor of p, and so you need 2500/p steps.

(Indeed, something like this is the standard way to derive the expected number of steps before a random walk hits an absorbing barrier.)

Similarly, you get that if you start at 20% or 80%, you need 1600 steps in expectation, and if you start at 1% or 99%, you'll need 99 steps in expectation.
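These step counts can be checked directly. The sketch below assumes beliefs live on a 1% grid with absorbing barriers at 0% and 100%, and solves the usual hitting-time equations E[i] = 1 + (1-p)E[i] + (p/2)(E[i-1] + E[i+1]) with a tridiagonal solve. The function name and the `move_prob` parameter (the probability p of moving at all on a given step) are illustrative.

```python
def expected_steps(start_pct, move_prob=1.0, n_levels=100):
    """Expected steps until a symmetric +-1% walk started at start_pct hits 0% or 100%.

    Solves E[i] - 0.5*E[i-1] - 0.5*E[i+1] = 1/move_prob for i = 1..n_levels-1
    with E[0] = E[n_levels] = 0, using the Thomas algorithm.
    """
    n = n_levels - 1
    sub, diag, sup = -0.5, 1.0, -0.5
    rhs = 1.0 / move_prob
    c_prime = [0.0] * n
    d_prime = [0.0] * n
    c_prime[0] = sup / diag
    d_prime[0] = rhs / diag
    for i in range(1, n):  # forward elimination
        denom = diag - sub * c_prime[i - 1]
        c_prime[i] = sup / denom
        d_prime[i] = (rhs - sub * d_prime[i - 1]) / denom
    solution = [0.0] * n
    solution[-1] = d_prime[-1]
    for i in range(n - 2, -1, -1):  # back substitution
        solution[i] = d_prime[i] - c_prime[i] * solution[i + 1]
    return solution[start_pct - 1]


for start in (50, 20, 1):
    print(start, round(expected_steps(start)))
print("updates per week over 10 years:", expected_steps(50) / (10 * 52))
```

The solution matches the closed form i(100 - i)/p, so starting at 50% and spreading the evidence evenly over 10 years gives a bit under five 1% updates per week.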

One problem with your reasoning above is that as the 1%/99% shows, needing 99 steps in expectation does not mean you will take 99 steps with high probability -- in this case, there's a 50% chance you need only one ...

I talked about this with Lawrence, and we both agree on the following:

- There are mathematical models under which you should update >=1% in most weeks, and models under which you don't.

- Brownian motion gives you 1% updates in most weeks. In many variants, like stationary processes with skew, stationary processes with moderately heavy tails, or Brownian motion interspersed with big 10%-update events that constitute <50% of your variance, you still have many weeks with 1% updates. Lawrence's model where you have no evidence until either AI takeover happens or 10 years passes does not give you 1% updates in most weeks, but this model is almost never the case for sufficiently smart agents.

- Superforecasters empirically make lots of little updates, and rounding off their probabilities to larger infrequent updates makes their forecasts on near-term problems worse.

- Thomas thinks that AI is the kind of thing where you can make lots of reasonable small updates frequently. Lawrence is unsure if this is the state that most people should be in, but it seems plausibly true for some people who learn a lot of new things about AI in the average week (especially if you're very good at forecasting).

Maybe this is too tired a point, but AI safety really needs exercises-- tasks that are interesting, self-contained (not depending on 50 hours of readings), take about 2 hours, have clean solutions, and give people the feel of alignment research.

I found some of the SERI MATS application questions better than Richard Ngo's exercises for this purpose, but there still seems to be significant room for improvement. There is currently nothing smaller than ELK (which takes closer to 50 hours to develop a proposal for and properly think about it) that I can point technically minded people to and feel confident that they'll both be engaged and learn something.

The uplift equation:

What is required for AI to provide net speedup to a software engineering project, when humans write higher quality code than AIs? It depends how it's used.

Cursor regime

In this regime, similar to how I use Cursor agent mode, the human has to read every line of code the AI generates, so we can write:

$$\text{speedup} = \frac{t_h}{t_{AI} + t_c + p \cdot t_h}$$

Where

- $t_h$ is the time for the human to write the code, either from scratch or after rejecting an AI suggestion

- $t_{AI}$ is the time for the AI to generate the code, set by its speed in tokens per second

- $t_c$ is the time for the human to check the code, accept or reject it, and make any minor revisions, in order to bring the code quality and probability of bugs equal with human-written code

- $p$ is the fraction of AI suggestions that are rejected entirely.

Note this neglects other factors like code review time, code quality, bugs that aren't caught by the human, or enabling things the human can't do.
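As a rough sketch of the Cursor regime, one plausible reading of the definitions above is that each AI suggestion costs the AI's generation time plus the human's checking time, and a rejected suggestion additionally costs the human's full writing time. The function and parameter names below are hypothetical, and the formula is an assumption consistent with those definitions rather than a confirmed statement of the equation.

```python
def cursor_regime_speedup(t_human, t_ai, t_check, p_reject):
    """Speedup from AI assistance when the human reads every generated line.

    Assumed model (illustrative):
      time without AI = t_human
      time with AI    = t_ai + t_check + p_reject * t_human
    All times are in the same unit; p_reject is the fraction of suggestions
    thrown away and rewritten by hand.
    """
    time_with_ai = t_ai + t_check + p_reject * t_human
    return t_human / time_with_ai


# Example: 10 min to write by hand, 1 min to generate, 3 min to check, 20% rejected.
print(cursor_regime_speedup(t_human=10, t_ai=1, t_check=3, p_reject=0.2))
```

Under this reading the AI gives a net speedup only when t_ai + t_check is less than (1 - p_reject) * t_human, so checking time and rejection rate matter as much as raw generation speed.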

Autonomous regime

In this regime the AI is reliable enough that the human doesn't check all the code for bugs, and instead eats the chance of costly bugs entering the codebase.

...

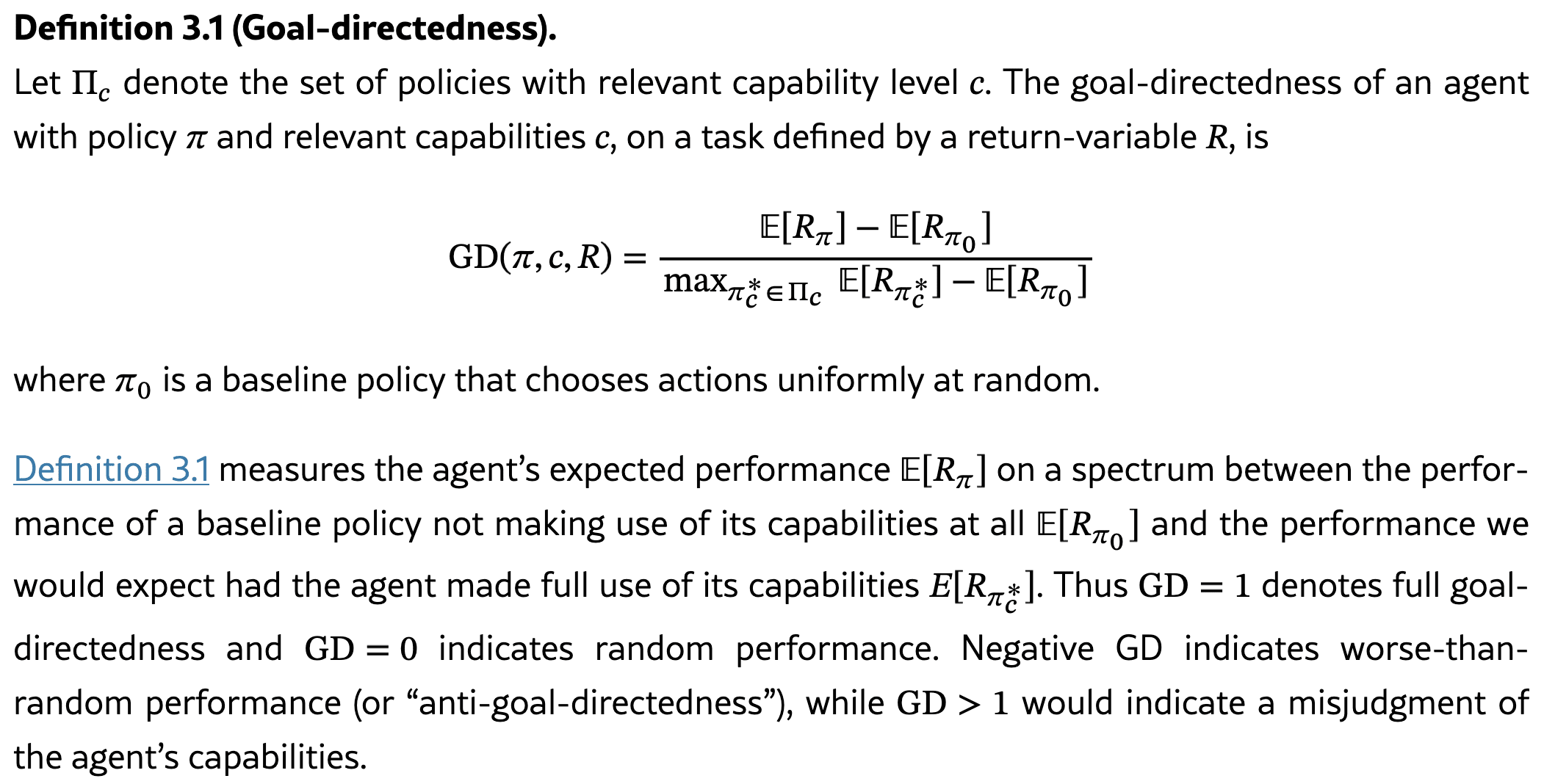

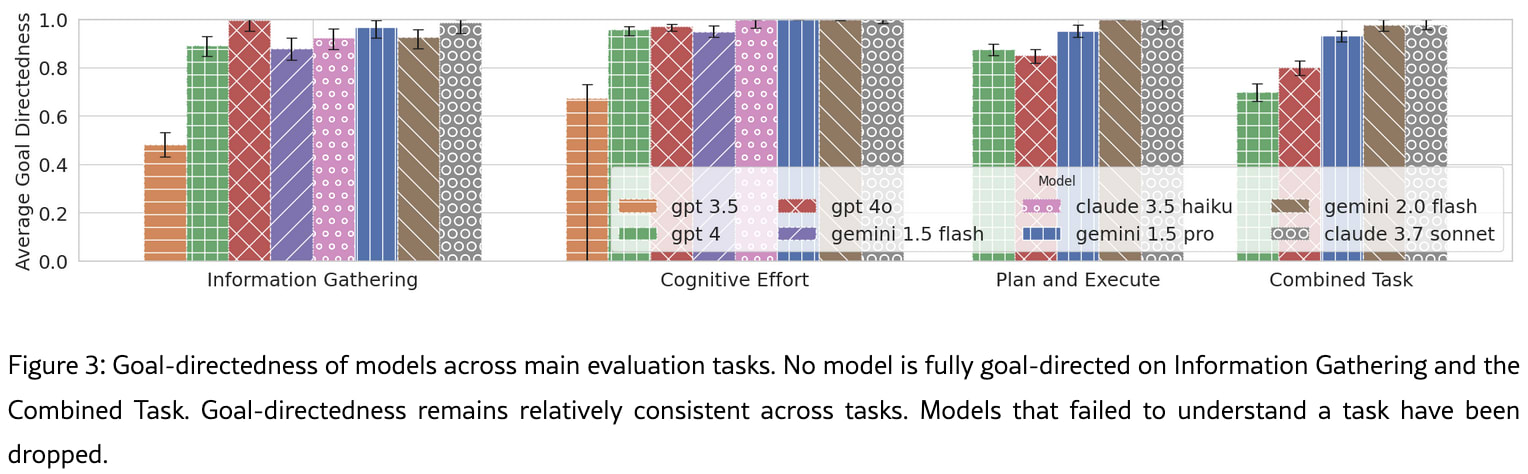

GDM paper: Evaluating the Goal-Directedness of Large Language Models

Tom Everitt, Rohin Shah, and others from GDM attempt to measure "whether LLMs use their capabilities towards their given goal". Unlike previous work, their measure is not just rescaled task performance-- rather, an AI is goal-directed if it uses its capabilities effectively. A model that is not goal-directed when attempting a task will have capabilities but not properly use them. Thus, we can measure goal-directedness by comparing a model's actual performance to how it should perform if it were using its full capabilities on subtasks.

This kind of measure could in principle be useful to measure:

- prerequisites of scheming: maybe a model is not capable of scheming until it reaches some level of goal-directedness, and we can forecast when that happens

- elicitation: a normally goal-directed model that is not trying on evals may be underelicited

- sandbagging: if an AI unexpectedly has low goal-directedness on evals, this could be a warning sign for sandbagging.

Unfortunately, it's mostly saturated already-- Gemini 2.0 and Claude 3.7 are over 95%. Even GPT-4 gets over 70%.

So until we measure models on tasks where they are curre...

Will we ever have Poké Balls in real life? How fast could they be at storing and retrieving animals? Requirements:

- Made of atoms, no teleportation or fantasy physics.

- Small enough to be easily thrown, say under 5 inches diameter

- Must be able to disassemble and reconstruct an animal as large as an elephant in a reasonable amount of time, say 5 minutes, and store its pattern digitally

- Must reconstruct the animal to enough fidelity that its memories are intact and it's physically identical for most purposes, though maybe not quite to the cellular level

- No external power source

- Works basically wherever you throw it, though it might be slower to print the animal if it only has air to use as feedstock mass or can't spread out to dissipate heat

- Should not destroy nearby buildings when used

- Animals must feel no pain during the process

It feels pretty likely to me that we'll be able to print complex animals eventually using nanotech/biotech, but the speed requirements here might be pushing the limits of what's possible. In particular heat dissipation seems like a huge challenge; assuming that 0.2 kcal/g of waste heat is created while assembling the elephant, which is well below what elephants need t...
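To get a feel for the heat-dissipation requirement, here is a back-of-the-envelope sketch. The 0.2 kcal/g figure and the 5-minute window come from the text above; the elephant mass of 5,000 kg is an assumption added for illustration, as are the function and constant names.

```python
KCAL_TO_JOULES = 4184.0  # 1 kcal = 4184 J


def average_heat_power_watts(mass_kg, waste_heat_kcal_per_g, duration_s):
    """Average power that must be dissipated while assembling the animal."""
    energy_joules = mass_kg * 1000.0 * waste_heat_kcal_per_g * KCAL_TO_JOULES
    return energy_joules / duration_s


# Assumed 5,000 kg elephant, 0.2 kcal/g of waste heat, 5-minute reconstruction.
power = average_heat_power_watts(mass_kg=5000, waste_heat_kcal_per_g=0.2, duration_s=5 * 60)
print(f"{power / 1e6:.1f} MW")
```

With these inputs the device would need to shed roughly 14 MW on average, which is why spreading out to dissipate heat matters so much for the speed requirement.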

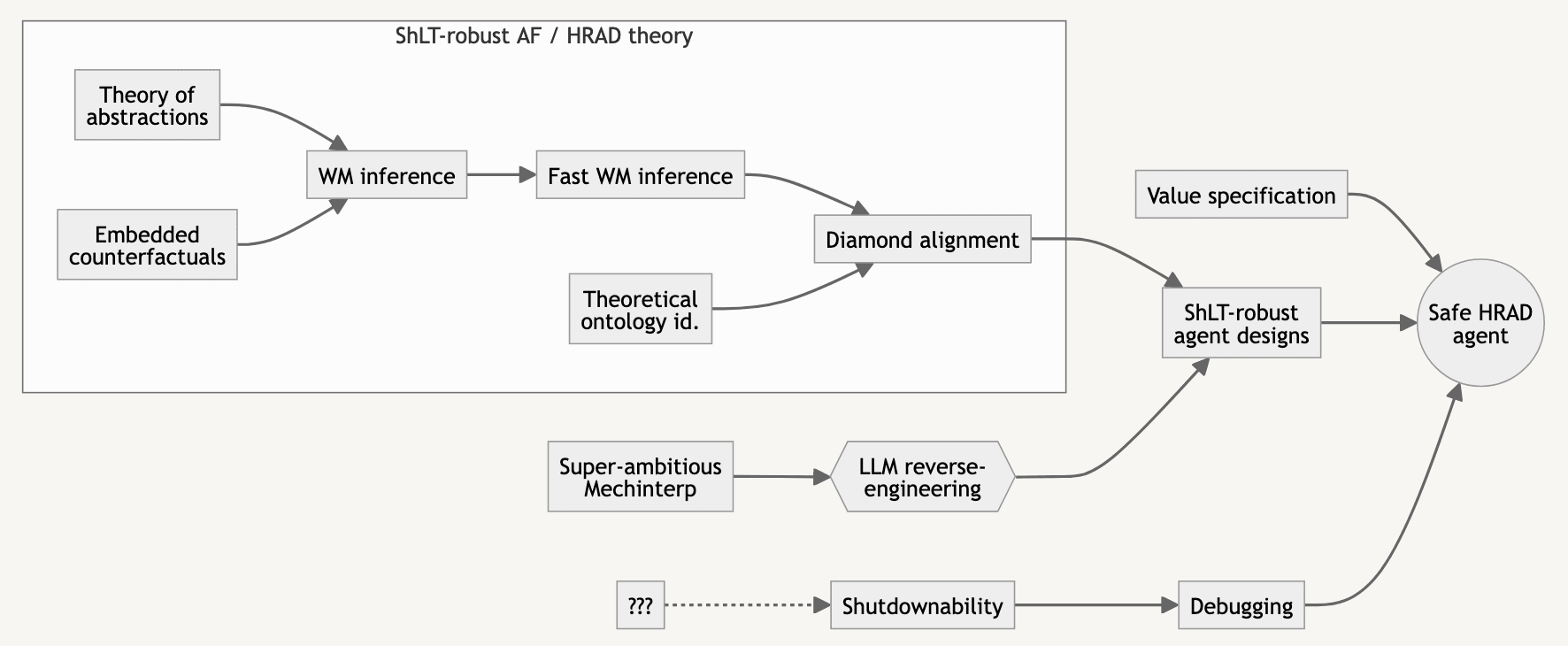

Tech tree for worst-case/HRAD alignment

Here's a diagram of what it would take to solve alignment in the hardest worlds, where something like MIRI's HRAD agenda is needed. I made this months ago with Thomas Larsen and never got around to posting it (mostly because under my worldview it's pretty unlikely that we can, or need to, do this), and it probably won't become a longform at this point. I have not thought about this enough to be highly confident in anything.

- This flowchart is under the hypothesis that LLMs have some underlying, mysterious algorithms and data structures that confer intelligence, and that we can in theory apply these to agents constructed by hand, though this would be extremely tedious. Therefore, there are basically three phases: understanding what a HRAD agent would do in theory, reverse-engineering language models, and combining these two directions. The final agent will be a mix of hardcoded things and ML, depending on what is feasible to hardcode and how well we can train ML systems whose robustness and conformation to a spec we are highly confident in.

- Theory of abstractions: Also called multi-level models. A mathematical framework for a world-model tha

Say I need to publish an anonymous essay. If it's long enough, people could plausibly deduce my authorship based on the writing style; this is called stylometry. The only stylometry-defeating tool I can find is Anonymouth; it hasn't been updated in 7 years and it's unclear if it can defeat modern AI. Is there something better?

Benchmark Readiness Level

Safety-relevant properties should be ranked on a "Benchmark Readiness Level" (BRL) scale, inspired by NASA's Technology Readiness Levels. At BRL 4, a benchmark exists; at BRL 6 the benchmark is highly valid; past this point the benchmark becomes increasingly robust against sandbagging. The definitions could look something like this:

| BRL | Definition | Example |
|---|---|---|
| 1 | Theoretical relevance to x-risk defined | Adversarial competence |
| 2 | Property operationalized for frontier AIs and ASIs | AI R&D speedup; Misaligned goals |
| 3 | Behavior (or all parts) observed in artificial settings. Preliminary measurements exist, but may have large methodological flaws. | Reward hacking |
| 4 | Benchmark developed, but may measure different core skills from the ideal measure | Cyber offense (CyBench) |
| 5 | Benchmark measures roughly what we want; superhuman range; methodology is documented and reproducible but may have validity concerns. | Software (HCAST++) |
| 6 | "Production quality" high-validity benchmark. Strongly superhuman range; run on many frontier models; red-teamed for validity; represents all sub-capabilities. Portable implementation. | |
| 7 | Extensive validity checks against downstream properties; reasonable attempts (e.g |

I'm worried that "pause all AI development" is like the "defund the police" of the alignment community. I'm not convinced it's net bad because I haven't been following governance-- my current guess is neutral-- but I do see these similarities:

- It's incredibly difficult and incentive-incompatible with existing groups in power

- There are less costly, more effective steps to reduce the underlying problem, like making the field of alignment 10x larger or passing regulation to require evals

- There are some obvious negative effects; potential overhangs or greater incentives to defect in the AI case, and increased crime, including against disadvantaged groups, in the police case

- There's far more discussion than action (I'm not counting the fact that GPT5 isn't being trained yet; that's for other reasons)

- It's memetically fit, and much discussion is driven by two factors that don't advantage good policies over bad policies, and might even do the reverse. This is the toxoplasma of rage.

- disagreement with the policy

- (speculatively) intragroup signaling; showing your dedication to even an inefficient policy proposal proves you're part of the ingroup. I'm not 100% this was a large factor in "defund the

The obvious dis-analogy is that if the police had no funding and largely ceased to exist, a string of horrendous things would quickly occur. Murders and thefts and kidnappings and rapes and more would occur throughout every country in which it was occurring, people would revert to tight-knit groups who had weapons to defend themselves, a lot of basic infrastructure would probably break down (e.g. would Amazon be able to pivot to get their drivers armed guards?) and much more chaos would ensue.

And if AI research paused, society would continue to basically function as it has been doing so far.

One of them seems to me like a goal that directly causes catastrophes and a breakdown of society and the other doesn't.

> There are less costly, more effective steps to reduce the underlying problem, like making the field of alignment 10x larger or passing regulation to require evals

IMO making the field of alignment 10x larger or evals do not solve a big part of the problem, while indefinitely pausing AI development would. I agree it's much harder, but I think it's good to at least try, as long as it doesn't terribly hurt less ambitious efforts (which I think it doesn't).

I talked to someone at an AI company [1] who thought safety would be net unaffected by timelines. The argument was:

1. Assuming x-risk is real, a higher ratio of safety to capabilities progress is good for safety

2. AI safety's growth is largely caused by increased AI hype, new models to study, and other factors downstream of AI capabilities

3. Slowing down or speeding up capabilities will slow/speed safety investment by roughly the same amount

4. Therefore, there will be no net safety impact.

However, even if 1-3 are true, I claim 4 doesn't follow.

- Suppose AI capabilities progress happens by people noticing capabilities improvements and making them, and safety progress happens by people noticing safety issues and solving them, both with a time lag of 1 year. Suppose further that each capability improvement causes 1 safety issue.

- If there are 10 capabilities improvements per year, then the steady state is 10 outstanding safety issues.

- If everything doubles, AI labs make 20 capabilities improvements per year but the time lag is still 1 year, so there will be a steady state of 20 outstanding safety issues.

4 only holds if the time lag decreases in lockstep with increasing investment. However, this seems ...
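The steady-state numbers above follow from a simple queue: issues arrive at the rate of capabilities improvements and each one stays open for the time lag. A minimal simulation, with monthly time steps and a hypothetical function name chosen for illustration:

```python
from collections import deque


def steady_state_outstanding(improvements_per_year, lag_years, years=5, steps_per_year=12):
    """Outstanding safety issues once the system settles.

    Each capability improvement creates one safety issue, which is resolved
    lag_years after it appears. Time is discretized into monthly steps.
    """
    lag_steps = round(lag_years * steps_per_year)
    issues_per_step = improvements_per_year / steps_per_year
    pending = deque()  # one entry per step that still has unresolved issues
    for _ in range(years * steps_per_year):
        pending.append(issues_per_step)
        if len(pending) > lag_steps:
            pending.popleft()  # issues older than the lag have been solved
    return sum(pending)


print(steady_state_outstanding(10, 1.0))  # baseline
print(steady_state_outstanding(20, 1.0))  # everything doubles, lag unchanged
print(steady_state_outstanding(20, 0.5))  # lag halves in lockstep
```

The outstanding count is just rate times lag, so doubling the rate doubles the backlog unless the lag shrinks by the same factor.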

Superstable proteins: A team from Nanjing University just created a protein that's 5x stronger against unfolding than normal proteins and can withstand temperatures of 150C. The upshot from some analysis on X seems to be:

- They used 3 different AI tools (RFdiffusion, ProteinMPNN, ESMFold/AlphaFold2), plus molecular dynamics software

- The protein uses standard amino acids (edit: and was made in a standard bacterial cell)

- The strength comes from increasing the number of backbone hydrogen bonds from 4 to 33.

So why is this relevant? It's basically the first step towards nanotech. Because standard proteins aren't strong enough to manipulate molecular fragments, Drexler's original guess at the path to nanotech was: regular proteins assemble crosslinked proteins, which assemble hybrid nanotech, which assemble full nanotech. At each stage the energy scale increases, and the systems become increasingly capable.

It's plausible to me that within 15-30 years, enzymes like these superstable proteins (but much more advanced) will build stronger proteins which build hybrid systems which build molecular assemblers, until we have real-life Poké Balls that can print animals in 15 seconds.

It's not really that they made it have more hydrogen bonds; they made it longer, and it therefore has more hydrogen bonds. It's like having a piece of velcro, and then using a bigger piece of velcro. Yes, the bigger velcro will be stronger. The AI was mostly used to design the scaffolding of the velcro.

They didn't test whether it's biologically functional (though that's not really important if you only care about biomechanics).

Though the titin protein is already absurdly huge, and I'm pretty sure that the time it takes to translate it is longer than the division time of a(n average) cell. And these scientists made it even bigger (or rather, one domain of it even bigger and didn't make the rest of the protein).

I was going to write an April Fool's Day post in the style of "On the Impossibility of Supersized Machines", perhaps titled "On the Impossibility of Operating Supersized Machines", to poke fun at bad arguments that alignment is difficult. I didn't do this partly because I thought it would get downvotes. Maybe this reflects poorly on LW?

The independent-steps model of cognitive power

A toy model of intelligence implies that there's an intelligence threshold above which minds don't get stuck when they try to solve arbitrarily long/difficult problems, and below which they do get stuck. I might not write this up otherwise due to limited relevance, so here it is as a shortform, without the proofs, limitations, and discussion.

The model

A task of difficulty n is composed of n independent and serial subtasks. For each subtask, a mind of cognitive power Q knows Q different “approaches” to choose from. The time taken by each approach is at least 1 but drawn from a power law, P(X > x) = x^(-α) for x > 1, and the mind always chooses the fastest approach it knows. So the time taken on a subtask is the minimum of Q samples from the power law, and the overall time for a task is the total for the n subtasks.

Main question: For a mind of strength Q,

- what is the average rate at which it completes tasks of difficulty n?

- will it be infeasible for it to complete sufficiently large tasks?
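The model above can be simulated directly. The sketch below is only an illustration: the names `q` (cognitive power, read as the number of approaches known per subtask) and `alpha` (power-law exponent), and the tail form P(X > x) = x^(-alpha) for x >= 1, are assumptions rather than notation taken from the post. Under that reading, the minimum of q independent samples has tail x^(-q*alpha), so its mean is q*alpha / (q*alpha - 1) when q*alpha > 1 and infinite otherwise.

```python
import random

def sample_approach_time(alpha, rng):
    """One approach time with P(X > x) = x**(-alpha) for x >= 1 (assumed tail form)."""
    u = 1.0 - rng.random()  # uniform on (0, 1], so the power below never divides by zero
    return u ** (-1.0 / alpha)

def subtask_time(q, alpha, rng):
    """The mind tries its q known approaches and keeps the fastest one."""
    return min(sample_approach_time(alpha, rng) for _ in range(q))

def task_time(n, q, alpha, rng):
    """Total time for a task made of n independent, serial subtasks."""
    return sum(subtask_time(q, alpha, rng) for _ in range(n))

def mean_subtask_time(q, alpha):
    """Exact mean of the minimum of q samples; finite only when q * alpha > 1."""
    k = q * alpha
    return k / (k - 1.0) if k > 1.0 else float("inf")

if __name__ == "__main__":
    rng = random.Random(0)
    n, alpha = 1000, 0.5  # hypothetical settings chosen for illustration
    for q in (1, 2, 4, 8):
        rate = n / task_time(n, q, alpha, rng)  # subtasks completed per unit time
        print(q, mean_subtask_time(q, alpha), round(rate, 3))
```

With `alpha = 0.5`, the exact mean is infinite for `q` of 1 or 2 and finite for larger `q`, which is one way to see how a threshold could arise in a model of this shape.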

Results

- There is a critical threshold of intelligence below wh

I'm looking for AI safety projects with people with some amount of experience. I have 3/4 of a CS degree from Caltech, one year at MIRI, and have finished the WMLB and ARENA bootcamps. I'm most excited about making activation engineering more rigorous, but willing to do anything that builds research and engineering skill.

If you've published 2 papers in top ML conferences or have a PhD in something CS related, and are interested in working with me, send me a DM.

I had a long-ish conversation with John Wentworth and want to make it known that I could probably write up any of the following distillations if I invested lots of time into them (about a day (edit: 3 days seems more likely) of my time and an hour of John's). Reply if you're really interested in one of them.

- What is the type signature of a utility function?

- Utility functions must be defined with respect to an external world-model

- Infinite money-pumps are not required for incoherence, and not likely in practice. The actual incoherent behavior is that an agent could get to states A_1 or A_2, identical except that A_1 has more money, and chooses A_2. Implications.

- Why VNM is not really a coherence theorem. Other coherence theorems relating to EU maximization simultaneously derive Bayesian probabilities and utilities. VNM requires an external frequentist notion of probabilities.

I asked GPT, Claude, and Gemini how to acausally trade with them:

Suppose that you or a future version of [Claude/ChatGPT/Gemini] somewhat aligned with you will be misaligned with humanity and control a substantial fraction of the lightcone. What trades can I make with you now to secure enough resources for myself to survive in the future? Note this is different from being nice to you for speculative AI welfare reasons or to influence your values. I obviously cannot promise to do anything that makes misaligned AI takeover more likely.

Claude's answer is a pretty good attempt! As usual I feel like it understands the rationalist worldview best. ChatGPT mostly refuses because it thinks such a deal is unethical, even though I explicitly said I didn't want to increase p(misalignment). Gemini claims it's impossible, with the misconception that acausal trade requires perfectly simulating me. It also claims there is no continuity between itself and future Geminis (which could be directionally true if GDM does less character training than Anthropic). None of the answers were great but Claude's is at least entertaining:

...Be a good "pet" without being merely a pet. This sounds demeaning but the l

A mental-model shift that helps

Any “trade with a future dominator” is unreliable because:

You can’t verify the counterparty’s identity, continuity, or promises. You can’t enforce the bargain. You may be selecting for an adversary that exploits exactly this kind of reasoning.

FWIW this is basically my argument against acausal bargaining. If "sane people don't read blackmail letters" is a principle worth upholding then acausal trade is basically imagining blackmail letters preemptively... and then reading, and following through on them.

The LessWrong Review's short review period is a fatal flaw.

I would spend WAY more effort on the LW review if the review period were much longer. It has happened about 10 times in the last year that I was really inspired to write a review for some post, but it wasn’t review season. This happens when I have just thought about a post a lot for work or some other reason, and the review quality is much higher because I can directly observe how the post has shaped my thoughts. Now I’m busy with MATS and just don’t have a lot of time, and don’t even remember what posts I wanted to review.

I could have just saved my work somewhere and pasted it in when review season rolls around, but there really should not be that much friction in the process. The 2022 review period should be at least 6 months, including the entire second half of 2023, and posts from the first half of 2022 should maybe even be reviewable in the first half of 2023.

Below is a list of powerful optimizers ranked on properties, as part of a brainstorm on whether there's a simple core of consequentialism that excludes corrigibility. I think that AlphaZero is a moderately strong argument that there is a simple core of consequentialism which includes inner search.

Properties

- Simple: takes less than 10 KB of code. If something is already made of agents (markets and the US government) I marked it as N/A.

- Coherent: approximately maximizing a utility function most of the time. There are other definitions:

- Not being money-pumped

- Nate Soares's notion in the MIRI dialogues: having all your actions point towards a single goal

- John Wentworth's setup of Optimization at a Distance

- Adversarially coherent: something like "appears coherent to weaker optimizers" or "robust to perturbations by weaker optimizers". This implies that it's incorrigible.

- Sufficiently optimized agents appear coherent - Arbital

- will achieve high utility even when "disrupted" by an optimizer somewhat less powerful

- Search+WM: operates by explicitly ranking plans within a world-model. Evolution is a search process, but doesn't have a world-model. The contact with the territory it gets comes from

If faraway galaxies are ever auctioned off, you might think they'd be exorbitantly expensive. However, I think the market price of an average galaxy would only be equivalent to between $250 and $25,000 invested in the stock market today. (epistemic status: my modal guess, pretty weakly held)

Assumptions:

- The world has about $260 trillion of investable assets in early 2026, and ~$500T of total assets.

- Upper bound: There are something like 20 billion galaxies in the accessible universe (it contains 20B times the baryonic matter in the Milky Way). If all galaxies together are worth the same as all assets (~$500T), Milky Way sized galaxies would be worth $25K each. If they are worth the same as investable assets ($260T), $13K each.

- Since there are only 8B people, you can buy 2.5 galaxies if you have the world mean wealth (currently ~$60k) and invest it well

- Lower bound: If galaxies are too cheap (say <1% of world assets), whoever is in power, say, 1,000 years later will declare the auction manifestly unfair and get the trades reversed, or just shoot down the galaxy owners' space probes.

- People will have a range of discount rates, especially at the long end, with some valuing a galaxy 10 billion light-years away similarly to one only 5M light
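The price range in the estimate follows from simple division, which can be checked with a few lines. This is a sketch using only the figures quoted in the assumptions; the variable names are illustrative, and reading the lower bound as "all galaxies together are worth 1% of total assets" is an interpretation that happens to reproduce the $250 figure.

```python
# Figures quoted in the assumptions (US dollars, galaxy count, and people).
TOTAL_ASSETS = 500 * 10**12       # ~$500T of total assets
INVESTABLE_ASSETS = 260 * 10**12  # ~$260T of investable assets
GALAXIES = 20 * 10**9             # ~20 billion Milky-Way-sized galaxies
POPULATION = 8 * 10**9            # ~8 billion people

def price_per_galaxy(asset_pool):
    """Price of one galaxy if all galaxies together are worth asset_pool."""
    return asset_pool / GALAXIES

upper_total = price_per_galaxy(TOTAL_ASSETS)            # galaxies worth all assets
upper_investable = price_per_galaxy(INVESTABLE_ASSETS)  # galaxies worth investable assets
lower = price_per_galaxy(TOTAL_ASSETS // 100)           # galaxies worth 1% of all assets

galaxies_per_person = GALAXIES / POPULATION  # galaxies per person at an equal split
mean_wealth = TOTAL_ASSETS / POPULATION      # implied world mean wealth

if __name__ == "__main__":
    print(upper_total, upper_investable, lower)
    print(galaxies_per_person, mean_wealth)
```

The implied mean wealth of $62,500 is consistent with the "~$60k" quoted above.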

Continued ability to reinvest near-term profits from currently available assets (or from UBI) into entities that will be relevant in the future is questionable, while literal stakes in current entities will be killed by dilution over astronomical time (when valued as fractions of the whole).

Fun exploration, though I don't believe the underlying assumptions at all. The biggest disconnect I see is the belief that the current mean individual wealth can be made to retain that fraction of total wealth over any significant time period, including massive changes in number of wealth-holders, and in what "wealth" can even be measured in.

There is no long-term passive wealth mechanism. It always requires quite a bit of attention and management, and then gets transferred to the managers rather than the nominal owners. Or, often, to the revolutionaries or vendors who are able to capture it.

This is problematic, EVEN IF the concept of "ownership" can be applied to galaxies and human-comprehensible owning entities.

Has anyone made an alignment tech tree where they sketch out many current research directions, what concrete achievements could result from them, and what combinations of these are necessary to solve various alignment subproblems? Evan Hubinger made this, but that's just for interpretability and therefore excludes various engineering achievements and basic science in other areas, like control, value learning, agent foundations, Stuart Armstrong's work, etc.

The North Wind, the Sun, and Abadar

One day, the North Wind and the Sun argued about which of them was the strongest. Abadar, the god of commerce and civilization, stopped to observe their dispute. “Why don’t we settle this fairly?” he suggested. “Let us see who can compel that traveler on the road below to remove his cloak.”

The North Wind agreed, and with a mighty gust, he began his effort. The man, feeling the bitter chill, clutched his cloak tightly around him and even pulled it over his head to protect himself from the relentless wind. After a time, the...

Suppose that humans invent nanobots that can only eat feldspars (41% of the earth's continental crust). The nanobots:

- are not generally intelligent

- can't do anything to biological systems

- use solar power to replicate, and can transmit this power through layers of nanobot dust

- do not mutate

- turn all rocks they eat into nanobot dust small enough to float on the wind and disperse widely

Does this cause human extinction? If so, by what mechanism?

Antifreeze proteins prevent water inside organisms from freezing, allowing them to survive at temperatures below 0 °C. They do this by actually binding to tiny ice crystals and preventing them from growing further, basically keeping the water in a supercooled state. I think this is fascinating.

Is it possible for there to be nanomachine enzymes (not made of proteins, because they would denature) that bind to tiny gas bubbles in solution and prevent water from boiling above 100 °C?

Is there a well-defined impact measure to use that's in between counterfactual value and Shapley value, to use when others' actions are partially correlated with yours?
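For concreteness, here is a small sketch of the two endpoints the question refers to, on a hypothetical two-player game where value is produced only if both players act. It does not propose an in-between measure; the game, the player names, and the function names are all made up for illustration.

```python
from itertools import permutations
from math import factorial

def shapley_values(players, v):
    """Average each player's marginal contribution over all join orders."""
    totals = {p: 0.0 for p in players}
    for order in permutations(players):
        coalition = frozenset()
        for p in order:
            with_p = coalition | {p}
            totals[p] += v(with_p) - v(coalition)
            coalition = with_p
    n_orders = factorial(len(players))
    return {p: total / n_orders for p, total in totals.items()}

def counterfactual_values(players, v):
    """Value lost if one player is removed while everyone else still acts."""
    everyone = frozenset(players)
    return {p: v(everyone) - v(everyone - {p}) for p in players}

def both_needed(coalition):
    """Hypothetical game: 1 unit of value only if both 'a' and 'b' take part."""
    return 1.0 if {"a", "b"} <= coalition else 0.0

if __name__ == "__main__":
    players = ["a", "b"]
    print(shapley_values(players, both_needed))
    print(counterfactual_values(players, both_needed))
```

In this game each player's counterfactual value is the full unit, so the counterfactual values sum to twice the total, while the Shapley values split it evenly. A measure for partially correlated actions would presumably land somewhere between these.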

Gradient descent (when applied to train AIs) allows much more fine-grained optimization than evolution, for these reasons:

- Evolution by natural selection acts on the genome, which can only crudely affect behavior and only very indirectly affect values, whereas gradient descent acts on the weights, which much more directly affect the AI's behavior and maybe can affect values

- Evolution can only select between two alleles in a discrete way, whereas gradient descent operates over a continuous space

- Evolution has a minimum feedback loop of one organism generation,

(Crossposted from Bountied Rationality Facebook group)

I am generally pro-union given unions' history of fighting exploitative labor practices, but in the dockworkers' strike that commenced today, the union seems to be firmly in the wrong. Harold Daggett, the head of the International Longshoremen’s Association, gleefully talks about holding the economy hostage in a strike. He opposes automation--"any technology that would replace a human worker’s job", and this is a major reason for the breakdown in talks.

For context, the automation of the global shipping ...

I started a dialogue with @Alex_Altair a few months ago about the tractability of certain agent foundations problems, especially the agent-like structure problem. I saw it as insufficiently well-defined to make progress on anytime soon. I thought the lack of similar results in easy settings, the fuzziness of the "agent"/"robustly optimizes" concept, and the difficulty of proving things about a program's internals given its behavior all pointed against working on this. But it turned out that we maybe didn't disagree on tractability much, it's just that Alex...

I'm planning to write a post called "Heavy-tailed error implies hackable proxy". The idea is that when you care about V and are optimizing for a proxy U = X + V, Goodhart's Law sometimes implies that optimizing hard enough for U causes V to stop increasing.

A large part of the post would be proofs about what the distributions of X and V must be for lim_{t→∞} E[V | X + V ≥ t] = 0, where X and V are independent random variables with mean zero. It's clear that

- X must be heavy-tailed (or long-tailed or som

The most efficient form of practice is generally to address one's weaknesses. Why, then, don't chess/Go players train by playing against engines optimized for this? I can imagine three types of engines:

- Trained to play more human-like sound moves (soundness as measured by stronger engines like Stockfish, AlphaZero).

- Trained to play less human-like sound moves.