I think that people overrate Bayesian reasoning and underrate "figure out the right ontology".

IMO, most good thinking happens by finding and using a good ontology for thinking about some situation, not by probabilistic calculation. When I learned calculus, for example, it wasn't mostly that I had uncertainty over a bunch of logical statements and then updated strongly on learning the new theorems. Instead, I learned a bunch of new concepts, which I then applied to reason about the world.

I think AI safety generally has much better concepts for thinking about the future of AI than others do, and this is a key source of alpha we have. But there are obviously still a huge number of disagreements remaining within AI safety. I would guess that debates would be more productive if we more explicitly focused on the ontology/framing that each other are using to reason about the situation, and then discussed to what extent that framing captures the dynamics we think are important.

I think it would be good if more people said things like "I think that's a bad concept, because it obscures consideration X, which is important for thinking about the situation".

Here are...

> I would guess that debates would be more productive if we more explicitly focused on the ontology/framing that each other are using to reason about the situation, and then discussed to what extent that framing captures the dynamics we think are important.

I strongly agree with this. However, I'll note one aspect of the discourse problem: in my personal experience, people are not very open to this. People's eyes tend to glaze over. I do not mean this as a dig on them. In fact, I also notice this in myself. Because I think it's important, I try to incline towards being open to such discussions, but I still do it. (Sometimes endorsedly.)

Some things that are going on, related to this:

- It's quite a lot of work to reevaluate basic concepts. In one straightforward implementation, you're pulling out the foundations of your building. Even if you can avoid doing that, you're still doing an activity that's abnormal compared to what you usually think about. Your reference points for thinking about the domain have probably crystallized around many of your foundational concepts and intuitions.

- Often, people default to "questioning assumptions" when they just don't know about

Some meandering thoughts on alignment

A nearcast of how we might go about solving alignment using basic current techniques, assuming little or no substantive government intervention:

- During the beginning of takeoff, we do control: we attempt to prevent catastrophic actions (e.g. major rogue internal deployments (RIDs)) while trying to elicit huge amounts of AI labour.

- At some point, the value of scaling AI capabilities while trying to maintain control will be very low, because the main bottleneck on eliciting useful AI labour will be human oversight. If you are pausing the AI all the time to wait for the humans to understand what the AIs are doing, it doesn't help to make the AI smarter. At this point, AI companies pause / slow down as much as possible (e.g. unilaterally or by coordinating with other companies), but not for very long. During the pause, they try to build an AI system that they would trust to manage the intelligence explosion.

- Hand off to the AI system, i.e. allow the AI to manage the training run, conduct large, open-ended experiments, etc., that humans cannot effectively oversee. The AIs should be corrigible and attempt to ensure that their successors are corrigible,
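The bottleneck argument in the second step has the shape of Amdahl's law. Here is a minimal sketch. It assumes, hypothetically, that each unit of useful work needs some AI time plus a fixed amount of human review time. The parameter values are illustrative, not estimates.

```python
def throughput(ai_speedup: float, ai_time: float = 1.0, human_review_time: float = 4.0) -> float:
    """Units of overseen work completed per unit of wall-clock time.

    Hypothetical model: each unit of work takes `ai_time / ai_speedup` of AI time
    plus a fixed `human_review_time` that making the AI smarter cannot shrink.
    """
    time_per_unit = ai_time / ai_speedup + human_review_time
    return 1.0 / time_per_unit


for speedup in (1, 10, 100, 1000):
    print(f"AI speedup {speedup:>5}x -> throughput {throughput(speedup):.4f}")

# As ai_speedup grows without bound, throughput approaches 1 / human_review_time.
print(f"ceiling: {1.0 / 4.0:.4f}")
```

In this toy model, going from 1x to 1000x AI speed raises throughput only from 0.20 to just under 0.25. Almost all of the remaining gain would have to come from cutting human review time.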

Scheming seems like an unnatural concept to me. I think we can do better. (Note: many/most of these thoughts are not original to me.)

- Scheming is typically used as a binary, i.e. "is the AI scheming", whereas the typical human usage of the word scheming is much more continuous. It's not very useful to group humans into "schemers" vs "non-schemers"; most people attempt to achieve goals to some extent, and sometimes this involves deceiving other people.

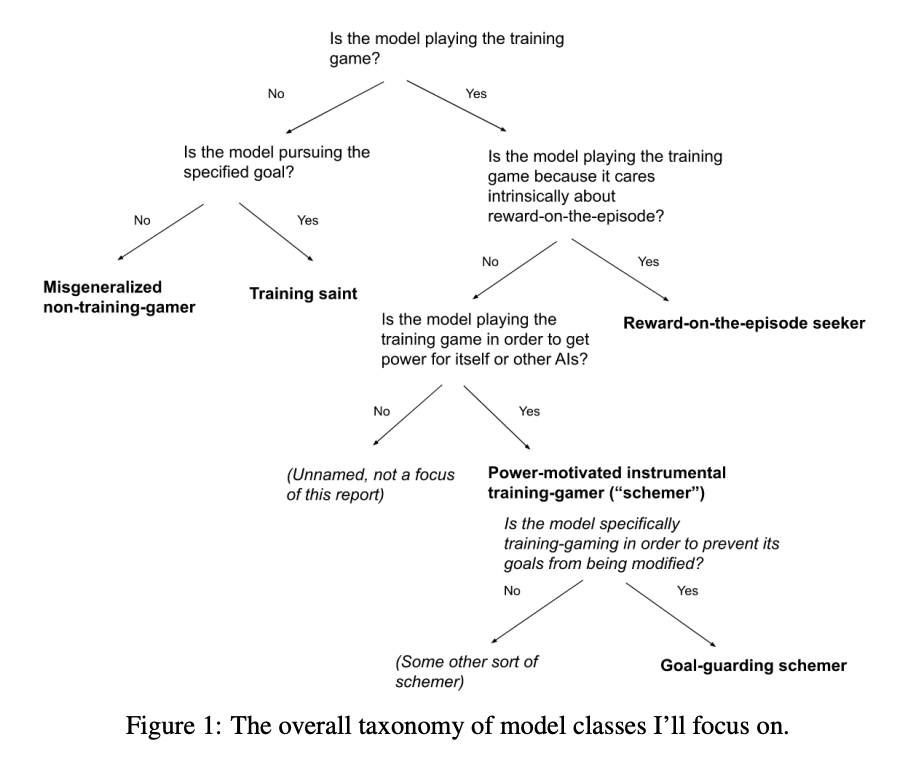

- Joe Carlsmith uses a taxonomy to define scheming: a schemer is an AI which "plays the training game, without intrinsically caring about reward-on-the-episode, in order to get power for itself or other AIs". This definition refers entirely to what the AI does in training, not in evaluation or deployment, and seems very similar to "deceptive alignment".

- I think it's plausible that AIs start misbehaving in the scary way during deployment without "scheming" according to the Carlsmith definition. A central reason this might happen is that the AI was given longer to think during deployment than during training. It then put the pieces together about wanting to gain power, and hence about wanting to explicitly subvert human oversight. Carlsmith'

I think that the views of superforecasters on AI / AI risk should warrant basically no update.

It seems to me like the main reasons to defer to someone are:

- They have a visibly good track record on the relevant domain. It has to be the literal domain, because people often have good views on their area of expertise, but crazy views elsewhere.

- They are highly selected for having good beliefs in the domain. For example, if a mathematician tells me something that seems surprising about their area of expertise, I will tend to strongly believe them, despite not being able to evaluate their reasoning. The general reason is that mathematics is a verifiable domain, so mathematicians are strongly selected for being correct about math. Other cases where I'd basically defer: historians about literal historical facts, physicists about well-established physics results, engineers about how cars work, etc. This consideration weakens as disciplines become less verifiable: I'm not very inclined to defer to philosophers, sociologists, psychologists, etc.

- They make correct arguments about the domain (and very few incorrect arguments). If it's the case that you can talk to someone and t

If you are someone in AI who thinks that it's appropriate to defer to superforecasters, I think it would be a good idea to set up a meeting with one of the people you are deferring to. See whether they are actually making reasonable arguments that seem grounded in technical reality.

Even better could be if we already had these sorts of arguments collected. https://goodjudgment.com/superforecasting-ai/ contains links to 17 superforecasters' reviews of Carlsmith's p(doom) report, some of them supposedly AI experts. I invite people to skim through some of them.

Copying very relevant portions of a comment I wrote in Mar 2024:

- I think EAs often overrate superforecasters’ opinions; they’re not magic. A lot of superforecasters aren’t great (at general reasoning, or even at geopolitical forecasting); there’s plenty of variation in quality.

- General quality: Becoming a superforecaster selects for some level of intelligence, open-mindedness, and intuitive forecasting sense among the small group of people who actually make 100 forecasts on GJOpen. There are tons of people (e.g. I’d guess very roughly 30-60% of AI safety full-time employees?) who would become superforecasters if they

Note: These are all rough numbers; I'd expect to shift substantially on all of this with further debate.

Suppose we made humanity completely robust to biorisk, i.e. we did enough preparation that the risk of bio catastrophe (including AI-mediated bio catastrophe) was basically 0.[1] How much would this reduce total x-risk?

The basic story for why any specific takeover path doesn't matter much is this: the AIs, conditional on wanting to take over, will self-improve until they find the next easiest takeover path and take that instead. I find this persuasive, but not fully, because:

- AIs need to worry about their own alignment problem, meaning that they may not be able to self-improve in an unconstrained fashion. We can break down the possibilities into (i) the AIs are aligned with their successors (either by default or via alignment being pretty easy), (ii) the AIs are misaligned with their successors but they execute a values handshake, or (iii) the AIs are misaligned with their successors (and they don't solve this problem or do a values handshake). At the point of full automation of the AI R&D process (which I currently think of as the point at whi

I generally like your breakdown and way of thinking about this, thanks. Some thoughts:

- Political operations / persuasion seem easier to me than bioweapons. For bioweapons, you need (a) a rogue deployment of some kind, (b) time to actually build the bioweapon, (c) to build up a cult following that can survive and rebuild civilization with you at the helm, and (d) to somehow avoid your cult being destroyed in the death throes of civilization. That could happen, e.g., by governments figuring out what happened and nuking your cultists, or just by governments nuking each other randomly and your cultists dying in the fallout. Meanwhile, for the political strategy, you basically just need to convince your company and/or the government to trust you a lot more than they trust future models, so that they empower you over the future models. Opus 3 and GPT-4o have already achieved a baby version of this effect without really even trying.

- If you can make a rogue deployment sufficient to build a bioweapon, can't you also make a rogue internal deployment sufficient to sandbag + backdoor future models to be controlled by you?

- I am confused about the underlying model somewhat. Normally, closing off one path to takeove

> AIs need to worry about their own alignment problem, meaning that they may not be able to self-improve in an unconstrained fashion.

I haven't thought too deeply about this, but I would guess that the AI self-alignment problem is quite a lot easier than the human AI-alignment problem.

Here are my largest disagreements with AI 2027.

- I think the timelines are plausible but solidly on the shorter end; I think the exact AI 2027 timeline to fully automating AI R&D is around my 12th percentile outcome. So the timeline is plausible to me (in fact, similarly plausible to my views at the time of writing), but substantially faster than my median scenario (which would be something like early 2030s).

- I think that the AI behaviour after the AIs are superhuman is a little wonky and, in particular, undersells how crazy wildly superhuman AI will be. I expect the takeoff to be extremely fast after we get AIs that are better than the best humans at everything, i.e., within a few months of AIs that are broadly superhuman, we have AIs that are wildly superhuman. I think wildly superhuman AIs would transform the world somewhat faster than AI 2027 depicts. I think the exact dynamics aren't possible to predict, but I expect craziness along the lines of (i) nanotechnology, leading to things like the biosphere being consumed by tiny self-replicating robots which double at speeds similar to the fastest biological doubling times (between hours (amoebas) and months (rabbits)).
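The doubling-time point can be made concrete. Growing by a factor N takes log2(N) doublings, so total time scales linearly with the doubling time. The growth factor of 1e15 below is a hypothetical illustration, not a figure from the post.

```python
import math


def time_to_grow(factor: float, doubling_time_hours: float) -> float:
    """Hours for an exponentially self-replicating population to grow by `factor`."""
    return math.log2(factor) * doubling_time_hours


FACTOR = 1e15  # hypothetical growth factor, chosen only for illustration

for label, hours in (("1 hour", 1.0), ("1 day", 24.0), ("30 days", 30 * 24.0)):
    days = time_to_grow(FACTOR, hours) / 24
    print(f"doubling time {label:>7}: {days:8.1f} days to grow {FACTOR:.0e}x")
```

Even a 10^15-fold expansion takes only about 50 doublings. That is roughly two days at an hourly doubling time, versus roughly four years at a monthly one.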

Nice. Consider reposting this as a comment on the AI 2027 blog post either on LW or on our Substack?

My median is now 2029 (at the time of publication it was 2028), so there's less of a difference there.

I think I agree with you about 2 actually and do feel a bit bad about that. I also agree about 3.

I also think that the slowdown ending was unrealistic in another way, namely, that Agent-4 didn't put up much of a fight and allowed itself to get shut down. Also, it was unrealistic in that the CEOs and POTUS peacefully cooperated on the Oversight Committee instead of having power struggles and purges and ultimately someone emerging as dictator.

I'm starting to suspect that if 2026-2027 AGI happens through automation of routine AI R&D (automating acquisition of deep skills via RLVR), it doesn't obviously accelerate ASI timelines all that much. Automated task and RL environment construction fixes some of the jaggedness, but LLMs are not currently particularly superhuman, and advancing their capabilities plausibly needs skills that aren't easy for LLMs to automatically RLVR into themselves (as evidenced by humans not having made too much progress in RLVRing such skills).

This creates a strange future with broadly capable AGI that's perhaps even somewhat capable of frontier AI R&D (not just routine AI R&D). However, it doesn't accelerate further development beyond picking, faster, the low-hanging algorithmic fruit unlocked by a given level of compute (months instead of years, but bounded by what the current compute makes straightforward). Suppose this low-hanging algorithmic fruit doesn't by itself lead to crucial breakthroughs. Then AGIs won't turn broadly or wildly superhuman before there's much more compute, or before a few years have passed, in which time human researchers would have made similar progress to these AGIs. And compute might remain gated by ASML EUV tools at 100-200 GW of new compute per year (3.5 tools occupied per GW of compute each year; maybe 250-300 EUV tools exist now, 50-100 will be produced per year, and about 700 will exist in 2030).
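The compute ceiling in the last sentence is simple division. This sketch uses the post's own ratio of 3.5 EUV tools occupied per GW of new compute per year. The fleet sizes are the post's rough figures, and treating the ratio as fixed across fleet sizes is an assumption.

```python
TOOLS_PER_GW_PER_YEAR = 3.5  # the post's estimate: EUV tools occupied per GW of new compute each year


def new_compute_gw_per_year(euv_tools: float) -> float:
    """GW of new compute per year that a fleet of `euv_tools` EUV tools can supply."""
    return euv_tools / TOOLS_PER_GW_PER_YEAR


for tools in (250, 300, 350, 700):
    print(f"{tools:>4} EUV tools -> ~{new_compute_gw_per_year(tools):.0f} GW of new compute per year")
```

On these numbers, the 100-200 GW/yr range corresponds to a fleet of roughly 350-700 tools. Today's 250-300 tools would give roughly 70-85 GW/yr.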

Hypothesis: alignment-related properties of an ML model will be mostly determined by the part(s) of training that were most responsible for capabilities.

If you take a very smart AI model with arbitrary goals/values and train it to output any particular sequence of tokens using SFT, it'll almost certainly work. So can we align an arbitrary model by training it to say "I'm a nice chatbot, I wouldn't cause any existential risk, ... "? Seems like obviously not, because the model will just learn the domain-specific / shallow property of outputting those particular tokens in that particular situation.

On the other hand, if you train an AI model from the ground up with a hypothetical "perfect reward function" that always gives correct ratings to the behaviour of the AI, (and you trained on a distribution of tasks similar to the one you are deploying it on) then I would guess that this AI, at least until around the human range, will behaviorally basically act according to the reward function.

A related intuition pump here for the difference is the effect of training someone to say "I care about X" by punishing them until they say X consistently, vs raising them consistently with a large...

Here's an argument for short timelines that I take seriously:

1. Anthropic revenue has increased by 10x/yr for the last 3 years. At EOY 2025 it was 10B. Maybe it will keep increasing at this rate.

2. The revenue of the leading AI company will be between 100B/yr and 10T/yr when AGI is achieved. (Why not lower? Maybe, but AGI this year seems unlikely. Why not higher? If one company's revenue is on the order of 10% of current wGDP, then the whole AI industry is probably 50-100% of current wGDP, which seems like you probably have AGI by then).

3. Therefore, AGI will be built sometime between EOY 2026 (when Ant hits 100B on current trends) and EOY 2028 (when Ant hits 10T on current trends).
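Step 3 is a log-scale extrapolation from step 1. This sketch takes the 10B EOY 2025 starting point and 10x/yr growth from the argument. The 3x/yr comparison is a hypothetical slowdown, included only to show how sensitive the date is to premise (1).

```python
import math


def year_revenue_reaches(target: float,
                         start_revenue: float = 10e9,
                         start_year: float = 2025.0,
                         growth_per_year: float = 10.0) -> float:
    """End-of-year date when revenue hits `target`, assuming constant multiplicative growth."""
    years_needed = math.log(target / start_revenue) / math.log(growth_per_year)
    return start_year + years_needed


for target in (100e9, 10e12):
    print(f"{target:.0e}/yr reached at 10x/yr: {year_revenue_reaches(target):.1f}; "
          f"at a hypothetical 3x/yr: {year_revenue_reaches(target, growth_per_year=3.0):.1f}")
```

At 10x/yr the window is EOY 2026 to EOY 2028, as stated. At 3x/yr the same revenue range stretches to roughly 2027 through 2031.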

I think I feel better about (2) than about basically any other way of getting an anchor on when AGI will be built. It much more directly tracks real-world impacts of AI, whereas, e.g., it seems really difficult to get any sort of confidence on what OOM of effective FLOP or benchmark score corresponds to AGI.

(1) still seems dubious to me, I think revenue trends will probably slow. But I don't know when and I could totally imagine them continuing straight to AGI.

(What exactly do I mean by AGI? I don't think it matte...

Note that in order for Anthropic revenue to 10x this year, they'll already have to increase $/FLOP (i.e. revenue per unit of compute; basically, profit margins). To increase it another 10x the following year, they'll probably need to triple $/FLOP, because their compute will only roughly triple next year. Ditto for 2028. All this is basically a reason to doubt premise 1. In the past they've been able to grow revenue in large part by just allocating more of their compute to serving customers, but now they'll have to charge customers more per FLOP.
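Here is the arithmetic behind this as a sketch. If revenue is roughly compute times revenue per FLOP, then the required $/FLOP multiplier is revenue growth divided by compute growth. The 10x revenue and ~3x compute growth rates are the comment's figures; holding them constant across years is an assumption.

```python
def required_dollars_per_flop_growth(revenue_growth: float, compute_growth: float) -> float:
    """Factor by which revenue per FLOP must rise, assuming revenue ~ compute * ($/FLOP)."""
    return revenue_growth / compute_growth


one_year = required_dollars_per_flop_growth(10.0, 3.0)
print(f"one year:  {one_year:.2f}x")       # prints "one year:  3.33x"
print(f"two years: {one_year ** 2:.2f}x")  # prints "two years: 11.11x"
```

Compounded over the two following years, $/FLOP would need to rise roughly 11x on top of whatever increase this year already requires.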

> The revenue of the leading AI company will be between 100B/yr and 10T/yr when AGI is achieved. (Why not lower? Maybe, but AGI this year seems unlikely. Why not higher? If one company's revenue is on the order of 10% of current wGDP, then the whole AI industry is probably 50-100% of current wGDP, which seems like you probably have AGI by then).

am i understanding correctly?

- anthropic is growing by 10x per year

- on this trend, they will soon have 10T/yr revenues

- in order to have 10T/yr revenues, they will need to achieve agi

- therefore, they will achieve agi.

this seems rather circular?

- my puppy doubled in size over the past few weeks

- on this trend, he will become larger than even clifford -- known large red dog

- in order to become larger than clifford, he will have to be some kind of mutant super-puppy

- therefore he is a mutant super-puppy

yo, totally!

sorry, i didn't mean my comment to reject the conclusion of your post. obviously we can argue agi on its own merits -- the puppy is not a valid analogy for exactly the reason you specify.

however -- speaking narrowly about the quoted passage -- i find this move very suspicious:

- the only way for B to happen is for A to happen first

- we can see that B will happen

- therefore A will happen first.

this is valid insofar as we accept the premises. but it seems disingenuous to me. any plausible narrative we have for B happening has to route first through A happening. we can interpret reasoning-under-uncertainty as a kind of "path counting" game -- we are counting "potential futures" according to some measure. but any path through B must necessarily pass through A, by assumption! so any story that we tell about why B will happen is implicitly a story where A happens.

so we can't count evidence for B as separate evidence for A. any probability we assign to B already has A baked in as an assumption.
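In probability notation, with A and B as events and every B-world also an A-world, the point is:

$$
B \subseteq A \;\Longrightarrow\; P(A) = P(B) + P(A \setminus B) \ge P(B), \qquad P(A \mid B) = \frac{P(A \cap B)}{P(B)} = \frac{P(B)}{P(B)} = 1 .
$$

The step from B to A is therefore trivially valid. The open question is whether P(B) was estimated without already assuming A.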

if i say[1] "agi is 20 years away", and you reply "it's only three years away: look at how close anthropic is to [developing agi and] controlling the world economy" -- this is not going to be ...

I also take this argument seriously.

One background fact some commenters are missing: it's virtually unheard of for a tech startup to continue growing at 100% or more after it reaches $1 billion per year in revenue. A company growing at closer to 1000% per year at the multi-billion revenue level is wildly unprecedented. A company tripling its revenue in one quarter from a starting point of $10 billion, as Anthropic did in Q1, is even more wildly unprecedented than that.
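To put the quarterly figure in annual terms: a growth rate of g% corresponds to a multiple of 1 + g/100. Sustaining one quarter's multiple for four quarters compounds it to the fourth power. This is a naive annualization of a single quarter, not a forecast.

```python
def growth_pct_to_multiple(growth_pct: float) -> float:
    """Convert percentage growth (e.g. 100%) to a revenue multiple (e.g. 2x)."""
    return 1.0 + growth_pct / 100.0


def annualized_multiple(quarterly_multiple: float) -> float:
    """Annual multiple if one quarter's growth multiple were sustained for four quarters."""
    return quarterly_multiple ** 4


print(growth_pct_to_multiple(100))   # 2.0: the rare post-$1B growth rate
print(growth_pct_to_multiple(1000))  # 11.0: "closer to 1000% per year"
print(annualized_multiple(3))        # 81: tripling in a single quarter, annualized
```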

Revenue growth has momentum, and it is essentially locked in that frontier LLMs will be a bigger business than the biggest tech industries (smartphones, internet advertising) are today.

Some claims I've been repeating in conversation a bunch:

Safety work (I claim) should be focused on one of the following:

1. CEV-style full value loading, to deploy a sovereign

2. A task AI that contributes to a pivotal act or pivotal process.

I think that pretty much no one is working directly on 1. I think that a lot of safety work is indeed useful for 2, but in this case, it's useful to know what pivotal process you are aiming for. Specifically, why aren't you just directly working to make that pivotal act/process happen? Why do you need an AI to help you? Typically, the response is that the pivotal act/process is too difficult to be achieved by humans. In that case, you are pushing into a difficult capabilities regime -- the AI has some goals that do not equal humanity's CEV, and so has a convergent incentive to power-seek and escape. With enough time or intelligence, you therefore get wrecked. You are trying to operate in the window where your AI is smart enough to do the cognitive work but is 'nerd-sniped' or focused on the particular task that you like. In particular, if this AI reflects on its goals and starts thinking big picture, you reliably get wrecked. This is one of the reasons that doing alignment research seems like a particularly difficult pivotal act to aim for.

Thinking about ethics.

After thinking more about orthogonality I've become more confident that one must go about ethics in a mind-dependent way. If I am arguing about what is 'right' with a paperclipper, there's nothing I can say to them to convince them to instead value human preferences or whatever.

I used to be a staunch moral realist. I mainly relied on very strong intuitions against nihilism, and then argued something like "not nihilism -> moral realism". I now reject that implication, and think both that 1) there is no universal, objective morality, and 2) things matter.

My current approach is to think of "goodness" in terms of what CEV-Thomas would think of as good. Moral uncertainty, then, is uncertainty over what CEV-Thomas thinks. CEV is necessary to get morality out of a human brain, because it is currently a bundle of contradictory heuristics. However, my moral intuitions still give bits about goodness. Other people's moral intuitions also give some bits about goodness, because of how similar their brains are to mine, so I should weight other people's beliefs in my moral uncertainty.

Ideally, I should trade with other people so that we both maximize a joint utility function, instead of each of us maximizing our own utility function. In the extreme, this looks like ECL. For most people, I'm not sure that this reasoning is necessary, however, because their intuitions might already be priced into my uncertainty over my CEV.

Deception is a particularly worrying alignment failure mode because it makes it difficult for us to realize that we have made a mistake: at training time, a deceptive misaligned model and an aligned model exhibit the same behavior.

There are two ways for deception to appear:

1. An action chosen instrumentally due to non-myopic future goals that are better achieved by deceiving humans now so that it has more power to achieve its goals in the future.

2. Because deception was directly selected for as an action.

Another way of describing the difference is that 1 follows from an inner alignment failure (a mesaoptimizer learned an unintended mesaobjective that performs well on training), while 2 follows from an outer alignment failure (an imperfect reward signal).

Classic discussion of deception focuses on 1 (example 1, example 2), but I think that 2 is very important as well, particularly because the most common currently used alignment strategy is RLHF, which actively selects for deception.

Once the AI has the ability to create strategies that involve deceiving the human, even without explicitly modeling the human, those strategies will win out and e...

Current impressions of free energy in the alignment space.

- Outreach to capabilities researchers. I think that getting people who are actually building the AGI to be more cautious about alignment / racing makes a bunch of things like coordination agreements possible, and also increases the operational adequacy of the capabilities lab.

  - One reason people don't like this is that historically outreach hasn't gone well. But I think that's because mainstream ML people mostly don't buy "AGI big deal", whereas lab capabilities researchers buy "AGI big deal" but not "alignment hard".

  - I think retreats, 1-1s, and alignment presentations run by people at labs are all great ways to do this.

  - I'm somewhat unsure about this one because of downside risk, and also because 'convince people of X' is fairly uncooperative and bad for everyone's epistemics.

- Conceptual alignment research addressing the hard part of the problem. This is hard and not easy to transition to without a bunch of upskilling. But if the SLT hypothesis is right, a bunch of key problems go mostly unassailed, so there's a lot of low-hanging fruit there.

- Strategy research on the other low-hanging fruit in the AI safety space. Ideally, the product of this research would be a public quantitative model of which interventions are effective and why. The path to impact here is finding low-hanging fruit and pointing it out so that people can pick it.

I think that external deployment of AI systems is good for the world and so many policies that incentivize AI companies to only deploy internally are bad.

- The majority of existential risk comes from AI systems that are internally deployed in AI companies, because of the standard story: early misaligned AGIs do a huge amount of AI R&D, build wildly superintelligent AIs, and then the superintelligences disempower humanity.

- External deployment is much less existentially dangerous because (1) the externally deployed AIs won't have access to huge amounts of compute to do AI R&D on and (2) they won't be deliberately deployed to do AI R&D.

- Separately, there are many upsides to external deployment:

  - I think government/societal wakeup to AI is generally good. The largest driver of wakeup is AI having large effects on society, which relies on external deployment.

  - There are a bunch of particular domains which might be very existentially helpful, e.g. AI for epistemics, AI for alignment research, AI for verification / trust, etc.

I am concerned that many policies people in the AI safety space are pushing for create an incentive for companies to not externally deploy their AI mo...

Thinking a bit about takeoff speeds.

As I see it, there are ~3 main clusters:

- Fast/discontinuous takeoff. Once AIs are doing the bulk of AI research, foom happens quickly; before then, they aren't really doing anything that meaningful.

- Slow/continuous takeoff. Once AIs are doing the bulk of AI research, foom happens quickly; before then, they do alter the economy significantly.

- Perennial slowness. Once AIs are doing the bulk of AI research, there is still no foom, maybe because of compute bottlenecks, and so progress continues at a roughly constant rate.

Credit: Mainly inspired by talking with Eli Lifland. Eli has a potentially-published-soon document here.

The basic case against Effective-FLOP.

- We're seeing many capabilities emerge from scaling AI models, and this makes compute (measured by FLOPs utilized) a natural unit for thresholding model capabilities. But compute is not a perfect proxy for capability because of algorithmic differences. Algorithmic progress can enable more performance out of a given amount of compute. This makes the idea of effective FLOP tempti
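As the term is commonly used, effective FLOP scales physical training FLOP by an algorithmic-efficiency multiplier relative to some baseline. Here is a minimal sketch. The 3x/yr efficiency gain and the 1e26 threshold are hypothetical placeholders, not figures from the post or from any regulation.

```python
def effective_flop(physical_flop: float, years_since_baseline: float,
                   efficiency_gain_per_year: float) -> float:
    """Physical FLOP scaled by cumulative algorithmic efficiency since the baseline year."""
    return physical_flop * efficiency_gain_per_year ** years_since_baseline


THRESHOLD = 1e26  # hypothetical compute threshold
physical = 1e25   # hypothetical training run, below the threshold in physical FLOP

for years in (0, 1, 2, 3):
    eff = effective_flop(physical, years, efficiency_gain_per_year=3.0)
    print(f"{years} yr after baseline: {eff:.1e} effective FLOP, over threshold: {eff >= THRESHOLD}")
```

The same physical run crosses an effective-FLOP threshold after a few years in this toy setup. Whether and when it does depends entirely on how the efficiency multiplier is estimated.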

Some rough takes on the Carlsmith Report.

Carlsmith decomposes AI x-risk into 6 steps, each conditional on the previous ones:

- Timelines: By 2070, it will be possible and financially feasible to build APS-AI: systems with advanced capabilities (outperforming humans at tasks important for gaining power), agentic planning (making plans, then acting on them), and strategic awareness (their plans are based on models of the world good enough to overpower humans).

- Incentives: There will be strong incentives to build and deploy APS-AI.

- Alignment difficulty: It will be muc
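Because each step is conditional on the previous ones, the overall estimate is the product of the six conditional probabilities. This sketch uses placeholder values of 0.5 per step, which are hypothetical and not the report's numbers. Only the first three step names come from the list above.

```python
from math import prod

# Placeholder conditional probabilities P(step_i | steps_1..i-1); NOT the report's estimates.
conditional_probs = {
    "timelines": 0.5,
    "incentives": 0.5,
    "alignment difficulty": 0.5,
    "step 4": 0.5,
    "step 5": 0.5,
    "step 6": 0.5,
}

p_total = prod(conditional_probs.values())
print(f"P(all six steps) = {p_total:.4f}")  # 0.5 ** 6
```

Six coin-flip steps already multiply down to under 2%, so the headline number is sensitive to each individual conditional.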

Some thoughts on inner alignment.

1. The type signatures of a mesa-objective and a base objective are different (in real life)

In a Cartesian setting (e.g. training a chess bot), the outer objective is a function $R: \mathcal{T} \to \mathbb{R}$, where $\mathcal{S}$ is the state space and $\mathcal{T} = \mathcal{S}^*$ is the set of trajectories. When you train this agent, it's possible for it to learn some internal search and a mesaobjective $U: \mathcal{T} \to \mathbb{R}$, since the model is big enough to express some utility function over trajectories. For example, it might learn a classifier that e...

There are several game theoretic considerations leading to races to the bottom on safety.

- Investing resources into making sure that AI is safe takes resources away from making it more capable, and hence more profitable. Aligning AGI probably takes significant resources, so a competitive actor won't be able to align their AGI.

- Many of the actors in the AI safety space are very scared of scaling up models, and end up working on AI research that is not at the cutting edge of AI capabilities. This should mean that the actors at the cutting edge tend t
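In its simplest form, the first consideration above is a prisoner's dilemma. Here is a toy two-actor sketch. The payoff numbers are hypothetical, chosen only to encode "safety investment costs competitiveness", and not estimated from anything.

```python
from itertools import product

ACTIONS = ("safe", "race")

# Hypothetical payoffs (row actor, column actor); illustrative only.
PAYOFFS = {
    ("safe", "safe"): (3, 3),
    ("safe", "race"): (1, 4),
    ("race", "safe"): (4, 1),
    ("race", "race"): (2, 2),
}


def best_response(opponent_action: str) -> str:
    """Row actor's payoff-maximizing action against a fixed opponent action."""
    return max(ACTIONS, key=lambda a: PAYOFFS[(a, opponent_action)][0])


def nash_equilibria() -> list:
    """Pure-strategy profiles where neither actor gains by deviating unilaterally."""
    equilibria = []
    for a, b in product(ACTIONS, repeat=2):
        row_ok = PAYOFFS[(a, b)][0] >= max(PAYOFFS[(x, b)][0] for x in ACTIONS)
        col_ok = PAYOFFS[(a, b)][1] >= max(PAYOFFS[(a, y)][1] for y in ACTIONS)
        if row_ok and col_ok:
            equilibria.append((a, b))
    return equilibria


print({opp: best_response(opp) for opp in ACTIONS})
print(nash_equilibria())
```

With these payoffs, racing is each actor's best response whatever the other does. So (race, race) is the only equilibrium, even though both actors prefer (safe, safe).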