The acceleration of the work as a whole is not determined by the mean of the accelerations experienced by individual employees. If only the tightest bottleneck widens by 4x, that means you go roughly as fast as the second tightest bottleneck is wide, not 4x faster. So long as there is any bottleneck that isn't widened and that's less than 4x as wide as the former tightest bottleneck, the work as a whole will be sped up by less than 4x. It would be entirely possible for many or most employees to experience >4x speedup without the overall org moving all that much faster.[1]

Additionally, this continues at the individual level. in my experience, if you ask people how much speedup they got from a major new model after they just got their hands on it, there's some tendency for them to think about the tasks that used to occupy a lot of their time and that the model just sped up massively when giving their estimate, and not yet really think about the tasks the model didn't speed up massively and that are now the new bottleneck in their workflow.

- ^

Yes, they take a geometric mean rather than an arithmetic mean. I still don't buy it.

kinda agree, but a consideration worth noting: if your company currently carries out process

- if algo research is sped up 1000x but compute buildup isn't sped up, I think you will still have

- maybe: if high-iq algo research isn't sped up much but kinda dumb algo research tasks are sped up

so, i think what you're saying is technically true for things which are really bottlenecks — like, in the sense that you will really have to keep doing the same amount of the same thing later for each unit of AI progress — but i'm concerned about various things one would want to apply this thinking to not actually being bottlenecks in that sense

I agree in general, but presumably this would result in the company redistributing resources towards whatever the now-most-critical-bottleneck activities are? Maybe that's impossible for humans at the current pace of AI development (organizations are usually not this responsive and adaptable)? Alternatively, couldn't accelerating the acceleratable activities plausibly cause bigger gains per model iteration in ways that might subsequently loosen remaining bottlenecks?

The acceleration of the work as a whole is not determined by the mean of the accelerations experienced by individual employees.

I agree with this, but think this is unlikely to be the main reason the overall serial labor acceleration is smaller.

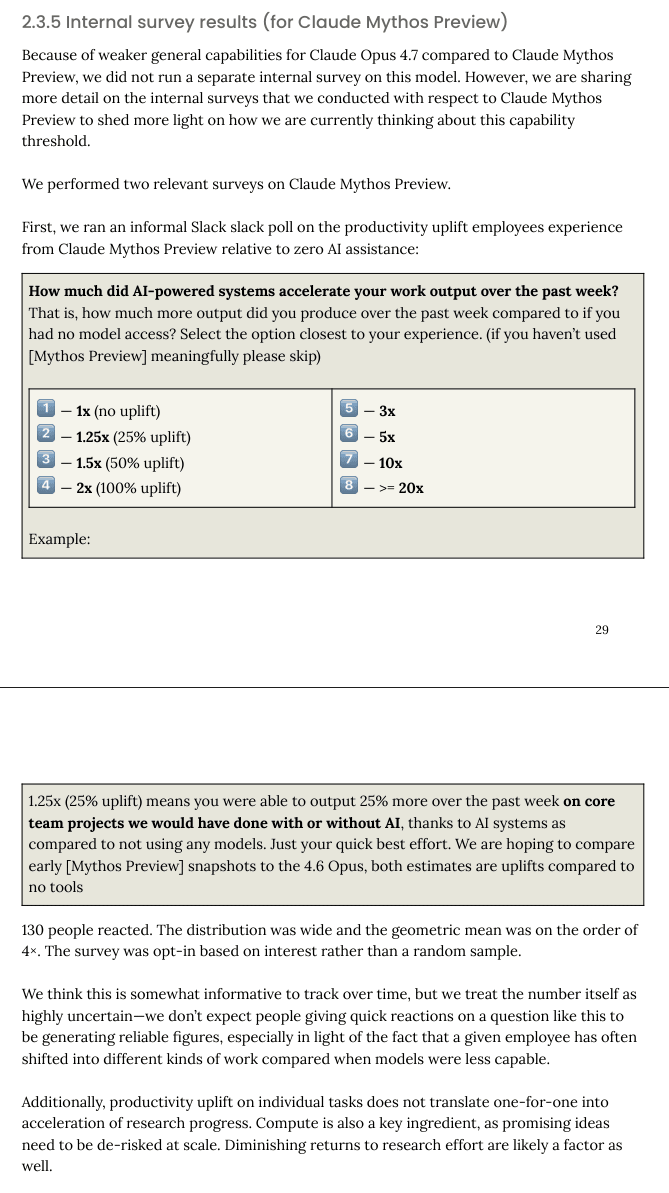

More context: an internal survey (n=16) from the Opus 4.6 system card they said they got a 2.52x average speedup[1], so Mythos is apparently 59% better than Opus 4.6 for productivity uplift.

Productivity uplift estimates from the use of Claude Opus 4.6 ranged from 30% to 700% with a mean of 152% and median of 100%

- ^

152%/100% = 1.52, plus 1 because a 0% uplift would be a 1x speedup

In the Dwarkesh podcast released Feb 13, Dario said:

I would say right now the coding models give maybe, I don’t know, a 15-20% total factor speed up. That’s my view. Six months ago, it was maybe 5%. So it didn’t matter. 5% doesn’t register. It’s now just getting to the point where it’s one of several factors that kind of matters. That’s going to keep speeding up.

The Opus 4.7 system card includes more details about this uplift survey (done for Mythos):

My takeaways:

- I agree with "We think this is somewhat informative to track over time, but we treat the number itself as highly uncertain—we don’t expect people giving quick reactions on a question like this to be generating reliable figures, especially in light of the fact that a given employee has often shifted into different kinds of work compared when models were less capable." I think this might give some sense for the relative vibe between models, but it's unclear how to convert the resulting number into a reasonable uplift estimate.

- I don't think the survey question clearly corresponds to a particular notion of uplift and I don't think this question with this methodology would result in survey takers understanding the difference and thinking carefully about what notion is in use. (I don't think a slack emoji poll could really do this, this is just capturing something else.)

- It looks like Anthropic isn't really taking an official stance on the level of uplift (seems reasonable).

I appreciate this additional information from Anthropic. Thanks to whoever put this in!

It's unclear how we should interpret this. What do they mean by productivity uplift? To what extent is Anthropic's institutional view that the uplift is 4x? (Like, what do they mean by "We take this seriously and it is consistent with our own internal experience of the model.")

I think we've all seen the famous study, around a year ago, on self-overestimates of LLM productivity increases, which ultimately concluded that the actual gains were illusory. It's true that I would expect frontier AI researchers to be smarter than ordinary programmers, but they're also much more devoted to the idea of AI capabilities, and have stronger incentives to believe that their LLM is exceptionally good.

To be clear, LLMs have improved enormously since then, and I must expect that there are very real productivity gains now. I myself have used autoresearch to skip the grating process of manual hyperparameter optimization when there's no elegant way to do so with a search algorithm. It does do valuable things. But there is a long, long, long history of very smart AI company employees reporting, subjectively, that their next model represents a sea change in 'vibes-based' performance.

My current best (low confidence, low precision) guess for the serial labor acceleration is ~1.55x (with a higher serial labor acceleration of ~1.75x for just research engineering activities).

My own guess would be somewhere in that same ballpark.

Thus, I'm pretty unhappy about a situation in which:

- Anthropic seemingly claims they are getting 4x productivity uplift, but it's publicly unclear what they mean by this or how much they believe this.

- ...

I don't like it either, and I'm usually on the far tail of Anthropic-skeptical people, but I think this is unavoidable. The people saying things like this almost definitely sincerely believe it, and objections to any given quantitative metric are legitimate, as covered in your post. Even if we suddenly became a USSR-style command economy and the marketing hype cycle were abolished, we would still regularly see posts like these, simply on the basis that the most effective capabilities researchers appear to be devout optimists with regard to what they are building.

I agree on your recommendations for more transparent release of survey results, that seems obviously beneficial to me, and the vagueness is possibly a result of hype-related decision-making. I don't think it would have a very substantial impact on the social impact, though - the portion of the public inclined to believe hype would read the headlines, the portion of the public inclined to be skeptical would likely draw the same conclusions that we draw from the lack of a survey release, barring exceptional circumstances, and the portion of the public that reflexively denies hype would just call them liars.

I agree that adjusting self-reports by the estimated discrepancy in effect sizes informed by the METR study is the way to go here - so maybe somewhere up to 1.5x is more likely. 4x speedup would require some really convincing evidence.

I suspect such evidence would involve giving away more details of Anthropic's internal processes and workflows than Anthropic would be comfortable with.

I suspect you wouldn't believe a claim that it has improved it 4x (or whatever) months down the line either, and I struggle to see under what scenario you'd believe them, just how you dont trust this survey.

Relatedly, what do you believe the current improvement is for them from using their pre-Mythos models compared to if they used no AI? Is it close to nothing?

Also, don't your estimates that if it was 4x timelines will be shortened by X forget that this survey compares to 0 AI, and the current timelines are (I hope) based on them already using AI?

FWIW, I do have sources of signal other than surveys, like talking to Anthropic employees about what's going on (and using the model myself).

Relatedly, what do you believe the current improvement is for them from using their pre-Mythos models compared to if they used no AI? Is it close to nothing?

I discuss this here. I think the serial labor acceleration from Opus 4.5 was around 1.3x while Mythos is around 1.55x. (Obviously pretty imprecise guesses, just using precision because rounding would lose some signal.)

Also, don't your estimates that if it was 4x timelines will be shortened by X forget that this survey compares to 0 AI, and the current timelines are (I hope) based on them already using AI?

See footnote 3:

The update toward shorter timelines is almost entirely from thinking we're further along in the capability progress than I previously realized, rather than from thinking progress will be faster but we're starting from a similar point. As in, I both update towards getting more acceleration at a lower level of capability and towards models being closer in capability space towards various high milestones, and I'm mostly updating timelines based on the second of these.

True, but I doubt very many employees will openly state they were not able to do any work before Mythos.

No need for this whole essay. I can explain why Anthropic's claim should be disregarded in three words. Surveys are unreliable.

Ai podcasts have noted that Antrhopic has been shipping really fast, both on Claude Code updates (mostly done with Claude Code) and other work. We are seeing external evidence of increased velocity from Anthropic on codong efforts.

Anthropic's system card for Mythos Preview says:

It's unclear how we should interpret this. What do they mean by productivity uplift? To what extent is Anthropic's institutional view that the uplift is 4x? (Like, what do they mean by "We take this seriously and it is consistent with our own internal experience of the model.")

Edit: Anthropic has released more information about this survey and their interpretation in the Opus 4.7 system card. I appreciate that they did this! I discuss this new information here.

One straightforward interpretation is: AI systems improve the productivity of Anthropic so much that Anthropic would be indifferent between the current situation and a situation where all of their technical employees magically work 4 hours for every 1 hour (at equal productivity without burnout) but they get zero AI assistance. In other words, AI assistance is as useful as having their employees operate at 4x faster speeds for all activities (meetings, coding, thinking, writing, etc.) I'll call this "4x serial labor acceleration" [1] (see here for more discussion of this idea [2] ).

I currently think it's very unlikely that Anthropic's AIs are yielding 4x serial labor acceleration, but if I did come to believe it was true, I would update towards radically shorter timelines. (I tentatively think my median to Automated Coder would go from 4 years from now to maybe 1.3 years from now; my median to AI R&D parity would go from 5 years from now to maybe 2.5 years from now.) My best guess is that 4x serial labor acceleration would cause AI progress to go 1.75x faster (see "Appendix: Estimating AI progress speed up from serial labor acceleration") which is very large and close to the 2x "dramatic acceleration" threshold Anthropic is using for "Autonomy threat model 2: risks from automated R&D". [3]

My current best (low confidence, low precision) guess for the serial labor acceleration is ~1.55x (with a higher serial labor acceleration of ~1.75x for just research engineering activities). I currently think that reasonably informed Anthropic employees that have thought about this topic in a decent amount of detail think the serial labor acceleration is closer to 1.5x than 4x.

I think uplift metrics like "serial labor acceleration" at AI companies are some of the most relevant metrics to track when trying to figure out how close we are to key risk-relevant milestones in AI development like full automation of AI R&D. I also think uplift metrics are among the most relevant metrics for Anthropic's "Autonomy threat model 2: risks from automated R&D". I also think accurately capturing the views of employees, managers, and leadership at AI companies (probably with something like a survey) is currently one of our best ways of assessing serial labor acceleration (or other uplift metrics), especially for AI systems that aren't publicly deployed.

Thus, I'm pretty unhappy about a situation in which:

Some things that would improve the situation in future system cards / risk reports:

If there is a large disagreement about the current level of uplift, this seems like a particularly tractable empirical crux: I would substantially shorten my timelines if I learned the uplift was much higher than I expect, and I'd guess some people at Anthropic would lengthen theirs if they learned it was significantly lower than they expect. I also expect that various people who are much more skeptical than me of reaching very high levels of AI capability within the next 10 years would update some on credible internal uplift measurements. Getting better empirical information about the level of uplift seems hard but doable.

Additionally, Anthropic claims "We estimate that reaching 2× on overall progress via this channel would require uplift roughly an order of magnitude larger than what we observe." Insofar as "productivity uplift" is supposed to correspond to something like serial labor acceleration, I'm very skeptical. I think ~40x serial labor acceleration would yield much more than 2x faster progress. My guess (see "Appendix: Estimating AI progress speed up from serial labor acceleration") is that you'd get 2x overall AI progress at around 5x serial labor acceleration. My understanding is that the AI Futures Project timelines model would indicate that around 8x serial labor acceleration is required. It seems that Anthropic might have their own takeoff speeds / timelines model that differs substantially from current public modeling, produces much less conservative conclusions about the level of concern, and that they are using for decision making. If so, I think they should either publicly write up their modeling (informally would be fine) or get third parties to review it privately. Insofar as they mean "we think we'll maybe reach 2x overall progress when our survey—that's mostly capturing vibes and doesn't have a clear correspondence to any particular notion of uplift—reaches 40x", fair enough, but it seems good to clarify this.

The current state of our evidence about AI R&D acceleration from Mythos seems extremely limited and AI companies should (and can) do much better going forward. [4]

Appendix: Estimating AI progress speed up from serial labor acceleration

This model is basically a simplified version of the AI Futures Project model with somewhat different constants.

Appendix: Different notions of uplift

There are several different concepts that could be meant by "productivity uplift", and which one we're talking about makes a huge difference:

A parallel labor acceleration of X is much less useful than a serial labor acceleration of X. And depending on the operationalization, the AIs doing 90% of the work is way less useful than a 10x serial labor acceleration. So the choice of concept matters a lot for interpreting any claimed level of uplift.

I'm using a specific name to distinguish from other things we might call "4x productivity uplift" like "if the median employee had to do the tasks they are currently doing without the use of AI, they would be 4x slower". These notions have strongly different implications as I discuss here and in Appendix: Different notions of uplift. ↩︎

For reference, the speed up modeling I do in that post is out of date with my latest thinking. ↩︎

The update toward shorter timelines is almost entirely from thinking we're further along in the capability progress than I previously realized, rather than from thinking progress will be faster but we're starting from a similar point. As in, I both update towards getting more acceleration at a lower level of capability and towards models being closer in capability space towards various high milestones, and I'm mostly updating timelines based on the second of these. ↩︎

I think there are also some other issues in the system card's assessment of AI R&D acceleration. They seemingly argue that even if Mythos was substantially above trend due to AI acceleration, because this acceleration was done by earlier (less capable!) AIs, this would imply this Mythos-caused acceleration wouldn't be that high: "This means that even if the slope change were AI-attributable, the model it would implicate is not the one we are assessing." This seems backward: if less capable AIs yield a large acceleration, then we should expect the effect from more capable AIs to be even larger. To be clear, this seems like a minor/moderate issue, I just thought it was worth mentioning. ↩︎