There is something weird about LLM-produced text. Very often, when I try to read a long text produced primarily by an LLM, I notice that I find it difficult to pay attention to it. Even if there's apparently semantically rich content, I notice that I'm not even trying to decode it.

The typical LLM writing style tends to make people's eyes slide off it.

It's kind of similar to the times when your attention wanders away during reading, and then you realize that you were scanning/semi-reading the last page with your eyes, but the information mostly didn't make it past the visual cortex, and you failed to construct the coherent scene that the book was trying to put in your mind. In the case of LLMs, a milder version of this seems to be the default.

This effect is much stronger (and much more frequent) when an LLM is tasked with producing something "original-ish" (an essay on topic X, say), rather than just giving you the facts on some topic.

What's going on?

Tom White has this non-exhaustive list of telltale tics of LLM writing:

...

- The “It’s not X, it’s Y” Antithesis
  - The most common tell. A fake profundity wrapped in a neat contrast: “We’re not a company, we’re a movement.” “It’s not just a tool, it’s a journey.” Humans use this sparingly; AI uses it compulsively
- The Punchline Em-Dash
  - Every section feels like it’s waiting for a big reveal—until the reveal is obvious or hollow
- The Three-Item List
  - AI loves the rhythm of threes: “clarity, precision, and impact.” It’s a pattern baked deep into training data and reinforced in feedback
- Mirrored Metaphors & Faux Gravitas
  - “We don’t chase trends — trends chase us.” They sound like aphorisms, but they’re cosplay; form without experience
- Adverbial Bloat
  - “Importantly,” “remarkably,” “fundamentally,” “clearly.” Empty intensifiers meant to simulate significance
- Mechanical Rhythm
  - Sentences marching in lockstep, each about the same length. Humans sprawl, stumble, cut themselves off. AI taps its digital foot to a metronome
- Hedged Authority
  - The “at its core,” “in many ways,” “arguably.” A way of sounding wise without taking a stand
- Latin Sidebar Syndrome
  - AI’s compulsive use of e.g. and i.e. often comes with a giveaway

This has been discussed a lot in the past, often under the headings of 'mode collapse', RLHF, flattened logits, etc. Hollis Robbins has written some good posts on her Substack over the past 2 years about the bad writing of chatbot LLMs. It's hard to say what 'the' problem is, since there seem to be multiple overlapping phenomena.

I don't have a real explanation, but I've been interested in this, since it feels like the LLM is doing something like the opposite of what writers intend to do (at least in effect). It's as if there's some portion of language space that invites engagement, or trips an alarm in the reader that says 'there's something in this!' Human writers swim toward that portion of the space; LLMs swim away from it.

[I would be unsurprised to find I have not expressed this well.]

I mean, base models output the most likely next token (modulo a temperature parameter). "Whatever is most likely to come next, based on what we've seen so far" is, in some sense, the opposite of interesting writing, which is interesting precisely because it has something novel or unusual or surprising to say.
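To make "most likely next token (modulo a temperature parameter)" concrete, here is a minimal Python sketch of standard softmax-with-temperature sampling. The four-token vocabulary, the logits, and the helper names (`softmax_with_temperature`, `greedy_pick`, `sample_token`) are hypothetical and chosen only for illustration; real decoders work over vocabularies of tens of thousands of tokens and often add further tricks (such as top-k or nucleus sampling) that this sketch leaves out.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw next-token scores (logits) into probabilities.

    Dividing by the temperature before the softmax sharpens the distribution
    when temperature < 1 and flattens it when temperature > 1.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive; use greedy_pick for the T -> 0 limit")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(logits):
    """The T -> 0 limit: always return the index of the single most likely token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_token(logits, temperature, rng=random):
    """Draw one token index from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


# Hypothetical four-token vocabulary with made-up logits.
vocab = ["the", "a", "this", "quixotic"]
logits = [3.0, 2.0, 1.0, -1.0]

print(vocab[greedy_pick(logits)])  # prints: the
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
print(vocab[sample_token(logits, 1.0, random.Random(0))])
```

Strictly speaking, only the T → 0 limit (`greedy_pick`) always emits the single most likely token; at temperature 1, a base model samples from its own predicted distribution, so less likely continuations like "quixotic" still show up occasionally, just rarely.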

This is not what it feels like to me. What it feels like is that I am reading a low-quality, SEO-optimized human website full of boilerplate like "What is an X? What is the definition of an X? Read on to learn what an X is...", or a news article that launches into a personal anecdote I don't care about: I expect low-density, vapid 'slop' and want to skip to the good parts.

LLM writing is not so transparently doing that, yet my brain seems able to (correctly!) tell that it's low-density, and so it skims past. Unfortunately, LLM text is less likely to put the tidbits somewhere in a higher-density form (the way the recipe part of a recipe site is usually fine) and more likely to intersperse them throughout the low-density filler, so that the whole thing sits at a middling density. Human articles like this tend to have large chunks you can skip entirely to get to the good parts; LLM text with this problem feels like I have to read the whole thing just to mine two sentences' worth of info.

Thinking of times when I felt the manipulation worry you're describing while reading or hearing human words: it feels like it takes the more reflective parts of me to notice, and then to say "hey uhh am I being 'hacked' and my feelings are ...

A model to track:

- AI gets increasingly good at X, so good that strong pressures arise to increasingly automate it, even if AI is still quite bad at some subtle but very important aspects of X.

- More and more X-related work/activity gets AI-automated, including the parts where AI is still bad or fails frequently and non-gracefully.

- This is partly driven by the fact that it's just ~psychologically hard for a human to exert effortful X-related cognition when AI would be more efficient at 99% of it.

- In cases where being good at [those aspects of X AI is still bad at][1] is critical, the quality of work deteriorates relative to the level before intense AI automation.


I guess we could call them reverse salients?

This happens with all technologies, not just AI. For example, automobiles replaced horses, with improvements in 99% of aspects of transportation. But if you fall asleep riding a horse, the horse safely halts or goes home under its own power. If you fall asleep driving a car, disaster! That's the 1% that got worse.

Scott's old post "Concept-Shaped Holes Can Be Impossible To Notice" points out that such holes can go unnoticed in oneself as well as in others. You might be way off when estimating whether things that seem obvious and straightforward to you are also obvious and straightforward to others. They might very well not be. See also: the curse of knowledge.

I learned that writing something up or starting a conversation about a thing that seemed [obvious and therefore not worth talking about] can reveal that this thing is not as [obvious and therefore not worth talking about] as it seemed to me.

So, if you're experiencing that sort of thing as a blocker ("I don't have any particularly interesting/novel ideas"), you might want to gain empirical info about which ideas are worth writing about. Getting feedback is crucial for cultivating intellectual activity.

Ironically, as I was contemplating posting this shortform, it occurred to me: "Is it really worth posting? Shouldn't this already be in the LW-ish water supply?"

ETA: A class of micro-examples is when you bang your head against the wall of some math concept until pieces of it start clicking, and once everything has clicked ...

The increase[1] in stated support for the AGI pause over the last few months has exceeded what I would have expected half a year ago. Neil deGrasse Tyson, Bernie Sanders, IABIED making an appearance in congressional hearings, Joe Carlsmith[2], etc. Even Dario and Demis are saying stuff like "yeah, if we could make the world stop, we would do it".

On IABIED

First things first, I wholeheartedly endorse the main actionable conclusion: Ban unrestrained progress on AI that can kill us all.

I broadly think Eliezer and Nate did a good job communicating what's so difficult about the task of building a thing that is more intelligent than all of humanity combined and shaped appropriately so as to help us, rather than have a volition of its own that runs contrary to ours.[1]

The main (/most salient) disagreement I can see at the moment concerns the authors' expectations about the value-strangeness and maximizeriness of superintelligence; or rather, I am much more uncertain about this. However, this detail is not relevant to the desirability of the post-ASI future conditional on business-close-to-as-usual, and is therefore not relevant to whether the ban is good.

(Also, I'm not sure about some of their choices of stories/parables, but that's a minor issue as well.)

I liked the comparison with the Allies winning against the Axis in WWII, which, at least in resource/monetary terms, must have cost much more than implementing the ban would. The things we're missing at the moment are awareness of the issue, pulling ourselves together, and collective steam.

...The acronym SLT (in this community) is typically taken/used to refer to Singular Learning Theory, but sometimes also to (~old-school-ish) Statistical Learning Theory and/or to Sharp Left Turn.

I therefore propose that, to disambiguate between them and to clean up the namespace, we use SiLT, StaLT, and ShaLT, respectively.

In his MLST podcast appearance in early 2023, Connor Leahy describes Alfred Korzybski as a sort of "rationalist before the rationalists":

Funny story: rationalists actually did exist, technically, before or around World War One. So, there is a Polish nobleman named Alfred Korzybski who, after seeing horrors of World War One, thought that as technology keeps improving, well, wisdom's not improving, then the world will end and all humans will be eradicated, so we must focus on producing human rationality in order to prevent this existential catastrophe. This is a real person who really lived and he actually sat down for like 10 years to like figure out how to like solve all human rationality God bless his autistic soul. You know, he failed obviously but you know you can see that the idea is not new in this regard.

Korzybski's two published books are Manhood of Humanity (1921) and Science and Sanity (1933).

...E. P. Dutton published Korzybski's first book, Manhood of Humanity, in 1921. In this work he proposed and explained in detail a new theory of humankind: mankind as a "time-binding" class of life (humans perform time binding by the transmission of knowledge and abstractions through tim

I've written about this here:

https://www.lesswrong.com/posts/kFRn77GkKdFvccAFf/100-years-of-existential-risk

Are there any memes prevalent in the US government that make racing to AGI with China look obviously foolish?

The "let's race to AGI with China" meme landed for a reason. Is there something making the US gov susceptible to some sort of counter-meme, like the one expressed in this comment by Gwern?

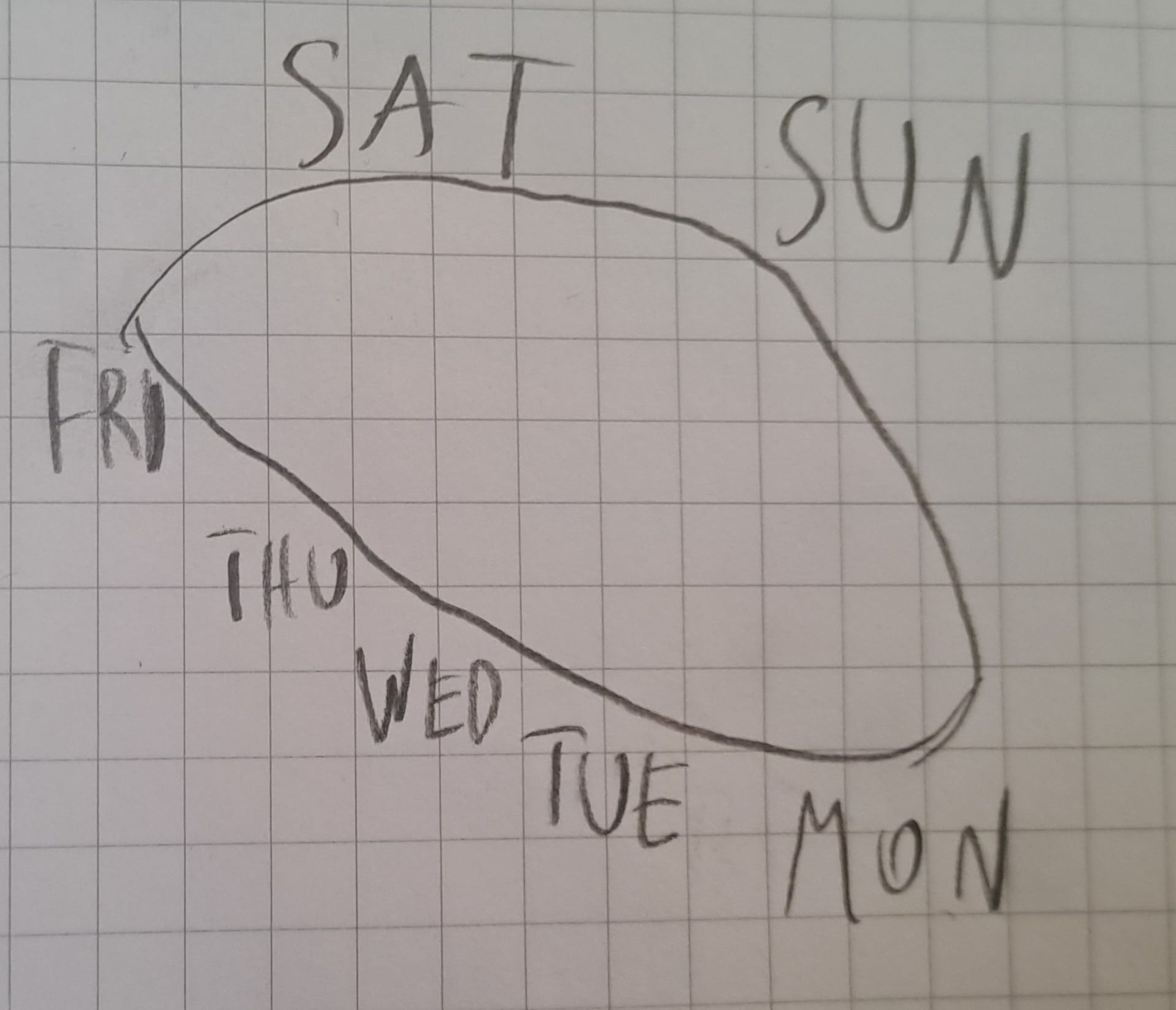

For as long as I can remember, I've had a very specific way of imagining the week. The weekdays are arranged on an ellipse, with an inclination of ~30°, starting with Monday in the bottom-right, progressing along the lower edge to Friday in the top-left, then the weekend days go above the ellipse and the cycle "collapses" back to Monday.

Actually, calling it an "ellipse" is not quite right because in my mind's eye it feels like Saturday and Sunday are almost at the same height, Sunday just barely lower than Saturday.

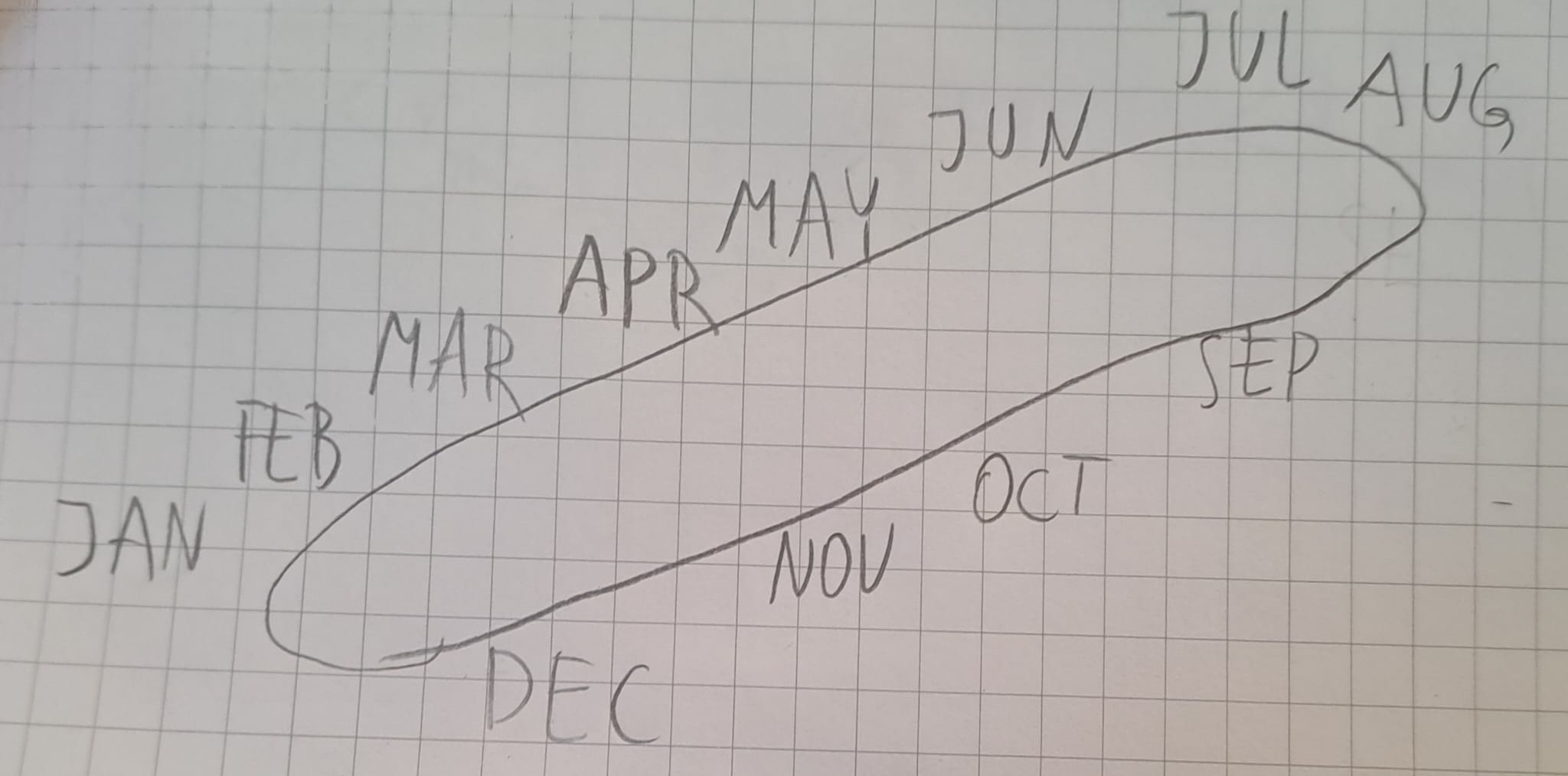

I have a similar ellipse for the year, this one oriented bottom-left to top-right:

This one also feels wrong because "in my head" each of the following is true:

- Each month takes the same amount of space (/measure?).

- There are fewer months along the lower side of the cycle.

- I strongly feel that "this should be a mathematically proper ellipse". (Non-Euclidean geometry?)

The main interesting commonalities I see between them:

- The initial elements (Monday and January) start in the lower corner of the cycle.

- The "free" elements of the cycle (weekend and summer vacation) are along the top edge of the cycle.

- The segment between the last "free" element and the initial element is stretched s

Strongly normatively laden concepts tend to spread their scope, because applying (or being allowed to apply) a strongly normatively laden concept can be used to one's advantage. Or, maybe more generally and mundanely, people like using "strong" language, which is a big part of why we have swearwords. (Related: Affective Death Spirals.)[1]

(In many of the examples below, there are other factors driving the scope expansion, but I still think the general thing I'm pointing at is a major factor and likely the main factor.)

1. LGBT started as LGBT, but over time developed into LGBTQIA2S+.

2. Fascism initially denoted, well, fascism, but now it often means something vaguely like "politically more to the right than I am comfortable with".

3. Racism initially denoted discrimination along the lines of, well, race: a socially constructed category with some non-trivial grounding in biological/ethnic differences. Now jokes targeting a specific nationality or subnationality are often called "racist", even if the person doing the joking is not "racially distinguishable" (in the old-school sense) from the people being joked about.

4. Alignment: In IABIED, the authors write:

...The problem of making AIs want—and ultimately

beyond doom and gloom - towards a comprehensive parametrization of beliefs about AI x-risk

- doom: what is the probability of AI-caused X-catastrophe (i.e. p(doom))?
- gloom: how viable is p(doom) reduction?
- foom: how likely is RSI?
- loom: are we seeing any signs of AGI soon, looming on the horizon?
- boom: if humanity goes extinct, how fast will it be?
- room: if AI takeover happens, will AI(s) leave us a sliver of the light cone?
- zoom: how viable is increasing our resolution on AI x-risk?
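One way to read "comprehensive parametrization" is as a belief profile with one coordinate per question above. The Python sketch below assumes, purely for illustration, that each answer is squashed into a number in [0, 1]; the class name `XRiskBeliefs`, the helper `disagreements`, the 0.2 threshold, and the direction of each scale are hypothetical choices, not part of the original proposal. What it illustrates is the point of the title: two people can agree exactly on doom while disagreeing sharply elsewhere.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class XRiskBeliefs:
    """Hypothetical belief profile; every coordinate is assumed to lie in [0, 1]."""
    doom: float   # p(doom): probability of AI-caused x-catastrophe
    gloom: float  # how viable p(doom) reduction is (assumed: 1 = very viable)
    foom: float   # how likely RSI is
    loom: float   # how strongly AGI seems to be looming (assumed: 1 = clear signs)
    boom: float   # speed of extinction, if it happens (assumed: 1 = very fast)
    room: float   # probability AI(s) leave us a sliver of the light cone
    zoom: float   # how viable increasing our resolution on AI x-risk is

    def __post_init__(self):
        # Reject values outside the assumed [0, 1] scale.
        for f in fields(self):
            value = getattr(self, f.name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{f.name} must be in [0, 1], got {value}")


def disagreements(a, b, threshold=0.2):
    """Names of the coordinates on which two profiles differ by more than threshold."""
    return [
        f.name
        for f in fields(XRiskBeliefs)
        if abs(getattr(a, f.name) - getattr(b, f.name)) > threshold
    ]


# Two made-up profiles with identical p(doom).
alice = XRiskBeliefs(doom=0.5, gloom=0.4, foom=0.9, loom=0.7, boom=0.8, room=0.1, zoom=0.6)
bob = XRiskBeliefs(doom=0.5, gloom=0.4, foom=0.2, loom=0.7, boom=0.8, room=0.6, zoom=0.6)
print(disagreements(alice, bob))  # prints: ['foom', 'room']
```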

The notations we use for (1) function composition; and (2) function types, "go in opposite directions".

For example, take functions $f : A \to B$ and $g : B \to C$ that you want to compose into a function $g \circ f : A \to C$ (or $gf$), which, starting at some element $a \in A$, uses $f$ to obtain some $b \in B$, and then uses $g$ to obtain some $c \in C$. The type notation $f : A \to B$, $g : B \to C$ goes from left to right, which works well for minds used to the left-to-right direction of English writing (and of writing of all extant European languages, a...
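The same direction mismatch shows up in ordinary code. In this Python sketch (all function names are hypothetical), a type hint like `Callable[[A], B]` reads left to right, input then output, just like A → B, whereas `compose(g, f)` mirrors g ∘ f and is written in the reverse of the order in which the two functions actually run. `and_then` is included for contrast: it is the "diagrammatic" ordering that writes the functions in execution order.

```python
from typing import Callable, TypeVar

A = TypeVar("A")
B = TypeVar("B")
C = TypeVar("C")


def compose(g: Callable[[B], C], f: Callable[[A], B]) -> Callable[[A], C]:
    """Mathematical composition g ∘ f: f runs first, then g (read right to left)."""
    return lambda a: g(f(a))


def and_then(f: Callable[[A], B], g: Callable[[B], C]) -> Callable[[A], C]:
    """Diagrammatic order: the same function, written in the order it runs."""
    return lambda a: g(f(a))


def f(n: int) -> str:
    """f : int -> str, doubles the number and renders it as text."""
    return str(n * 2)


def g(s: str) -> int:
    """g : str -> int, counts the characters."""
    return len(s)


h1 = compose(g, f)   # written "g, f" but runs f, then g
h2 = and_then(f, g)  # written in execution order
print(h1(123), h2(123))  # prints: 3 3
```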

[Epistemic status: speculation from scant evidence, take with an appropriate grain of salt.]

It seems plausible to me that country-wide EA groups are much more favorable to pausing/slowing down AGI-ward progress than the general collective EA geist, according to which (I think) it's still "not a thing people like us do"[1]. This is definitely the case with EA Poland.

I don't have direct evidence that it's true more generally of, say, EA foundations in other EU countries, but it's worth noting that historically, a major (maybe the biggest?) source of funding ...

Recently, I watched Out of This Box. In the musical, they test their nascent AGI on the Christiano-Sisskind test, a successor to the Turing test. What the test involves exactly remains unexplained. Here are my hypotheses.[1]

Sisskind certainly refers to Scott Alexander, and one thing Scott Alexander posted in the vicinity of the Turing test is this post (italics added):

...The year is 2028, and this is Turing Test!, the game show that separates man from machine! Our star tonight is Dr. Andrea Mann, a generative linguist at University of Ca

I've read the SEP entry on agency and was surprised by how irrelevant it felt to whatever it is that makes me interested in agency. Here I sketch some of these differences by comparing an imaginary Philosopher of Agency (roughly the embodiment of the approach that the "philosopher community" seems to have to these topics), and an Investigator of Agency (roughly the approach exemplified by the LW/AI Alignment crowd).[1]

If I were to put my finger on one specific difference, it would be that Philosopher is looking for the true-idealized-ontology-of-agency-indep...

Is there any research on how the actual impact of [the kind of AI that we currently have] lives up to the expectations from the time [shortly before we had that kind of AI but close enough that we could clearly see it coming]?

This is vague, but some not-unreasonable periods for the second time point would be:

- After OA Copilot, before ChatGPT (so summer-autumn 2022).

- After PaLM, before Copilot.

- After GPT-2, before GPT-3.

I'm also interested in research on historical over- and under-performance of other tech (where "we kinda saw (or could see) it coming") relative to expectations.

[Epistemic status: shower thought]

The reason agent-foundations-like research is so intractable and slippery, and so prone to substitution hazards etc., is largely that it is anti-inductive, and the key source of its anti-inductivity is the demons in the universal prior preventing the emergence of other consequentialists, which could cause trouble for their acausal monopoly on the multiverse.

(Not that they would pose a true threat to their dominance, of course. But they would diminish their pool of usable resources a little bit, so it's better to...

Does severe vitamin C deficiency (i.e. scurvy) lead to oxytocin depletion?

According to Wikipedia:

The activity of the PAM enzyme [necessary for releasing oxytocin from the neuron] system is dependent upon vitamin C (ascorbate), which is a necessary vitamin cofactor.

I.e. if you don't have enough vitamin C, your neurons can't release oxytocin. Common-sensically, this should lead to some psychological/neurological problems, maybe with empathy/bonding/social cognition?

Quick googling "scurvy mental problems" or "vitamin C deficiency mental symptoms" doesn't r...