Accidental AI Safety experiment by PewDiePie: He created his own self-hosted council of 8 AIs to answer questions. They voted and picked the best answer. He noticed they were always picking the same two AIs, so he discarded the others, made the process of discarding/replacing automatic, and told the AIs about it. The AIs started talking about this "sick game" and scheming to prevent that. This is the video with the timestamp:

Scott Alexander left an important reply to Rob Bensinger on X. I happen to agree with Scott. Here's the original post by Rob:

...In response to "What did EAs do re AI risk that is bad?":

Aside from the obvious 'being a major early funder and a major early talent source for two of the leading AI companies burning the commons', I think EAs en masse have tended to bring a toxic combination of heuristics/leanings/memes into the AI risk space. I'm especially thinking of some combination of:

'be extremely strategic and game-playing about how you spin the things you say, rather than just straightforwardly reporting on your impressions of things'

plus 'opportunistically use Modest Epistemology to dismiss unpalatable views and strategies, and to try to win PR battles'.

Normally, I'm at least a little skeptical of the counterfactual impact of people who have worsened the AI race, because if they hadn't done it, someone else might have done it in their place. But this is a bit harder to justify with EAs, because EAs legitimately have a pretty unusual combination of traits and views.

Dario and a cluster of Open-Phil-ish people seem to have a very strange and perverse set of views (at least insofar as t

I think that both of these posts seem very confused about the dynamics of who says or thinks what, and I'm pretty sad about these posts.

Thoughts on Rob's post

In general, I'll note that I don't think Rob really knows many of the OP people; I suspect he has spent <40 hours talking to them about any of this possibly ever. (This is in contrast to e.g. Habryka.) I don't know where he's getting his ideas about what the OP people think, but he seems incredibly confused and ignorant. (Eliezer seems similarly ignorant about who believes what.)

'be extremely strategic and game-playing about how you spin the things you say, rather than just straightforwardly reporting on your impressions of things' plus 'opportunistically use Modest Epistemology to dismiss unpalatable views and strategies, and to try to win PR battles'.

I don't really think this is true

Dario and a cluster of Open-Phil-ish people seem to have a very strange and perverse set of views

I wish Rob would be clear who he was referring to. Dario has beliefs that seem to me very different from most people who worked on the 2022 AI misalignment risk efforts at Open Phil. (I'm thinking of people like Holden Karnofsky, Ajeya Cotra, Joe C...

Thanks for writing this, Buck. I'm not going to try to reply to your whole post, because I think some of it is stuff I should chew on for longer and see whether I agree with it. But going through some of your points:

I definitely apologize for making it sound like I was making a harsher criticism of (the relevant parts of) EA than I intended. My tweet was originally written as a quick follow-up comment to someone who asked why I thought EA's impact on AI x-risk was only ~55% likely to be positive. I turned it into a top-level tweet because I didn't want to hide it deep in an existing discussion, but this was an error given I didn't add extra context.

I also apologize for anything I said that made it sound like I was universally criticizing past or present Open Phil / cG staff (or centrally basing my views on first-hand conversations, for that matter). I already believed that tons of past and present rank-and-file OP/cG staff have very reasonable views, and I happily further update in that direction based on your and Oliver's statements to that effect (e.g., Ollie's "I have since updated that more people who are a level below Alexander, Dustin and Dario have more reasonable beliefs")....

Thanks, this is helpful and I basically accept most of what you're saying. Some more specific comments on the part about me:

I don't really think of Rob or MIRI as having a comms strategy of undermining EAs. I think Rob and Eliezer just say a bunch of false, wrong things about EAs because they're mad at them for reasons downstream of the EAs not agreeing with Eliezer as much as Eliezer and Rob think would be reasonable, and a few other things.

I accept this criticism and take back my claim. I noticed that some people who worked for MIRI comms seemed to do this, and I assumed that anything said by enough MIRI comms people in a serious-sounding voice was on some level a MIRI communique. Eliezer has clarified that this isn't true, so I apologize for saying it was.

...I think Dario (like various other Anthropic people) does not believe that AI takeover is a very plausible outcome, and I think his position is indefensible on the merits, as are some of his other AI positions (e.g. his skepticism that there are substantial returns to intelligence above the human level, his skepticism that ASI could lead to 2x manufacturing capacity per year). He moderately disagrees with the OP people about thi

I'm generally sympathetic to Scott's positions in this discussion, but I think he is probably very wrong about Ilya.

- To the best of my knowledge, Safe Superintelligence has never published a single word about what they plan to do to move alignment forward, which is pretty damning, in my opinion.

- I have not heard of anyone known to be thoughtful about AI safety being hired by SSI, and I have not seen any position being advertised to AI safety people. People should correct me if I missed someone good joining SSI, but I think this is also a very bad sign.

- My impression is that people who worked with Ilya at OpenAI don't remember him as being particularly thoughtful about alignment, e.g. much less so than Jan Leike. This is a low-confidence, third-hand impression; people can correct me if I'm wrong.

- My impression is that the available evidence suggests that Ilya mostly took part in Altman's firing for (perhaps justified) office politics grievances, and not primarily due to safety concerns. I also think that evidence points to his behavior during and after the incident being kind of cowardly. (I haven't looked deeply into the details of the battle of the board, and it's possible

In general, I'll note that I don't think Rob really knows many of the OP people; I suspect he has spent <40 hours talking to them about any of this possibly ever.

I think you are overfitting Rob's post to be about the wrong people. I think it's much closer to accurate, if you actually read what he says, which is:

Dario and a cluster of Open-Phil-ish people

I think the things Rob is saying still have some strawman-y nature to them, but I think they are reasonably accurate descriptors of Anthropic leadership, plus my best guesses of what Alexander (head of Coefficient Giving) and Zach (head of CEA) believe, which seems well-described by "Dario and a cluster of Open-Phil-ish people", and furthermore also of course constitutes an enormous fraction of the authority over broader EA.

I feel like almost all of your comment is just running with that misunderstanding and hence mostly irrelevant.

As you say yourself, almost no one in your list works at cG, or is in any meaningful position of authority at cG, so this feels like a bit of an absurd interpretation (I think trying to apply the things he is saying to Holden is reasonable, given Holden's historical role in cG, and I do think he in the distant past said things much closer to this, but seems to have changed tack sometime in the past few years).

As you say yourself, almost no one in your list works at cG, or is in any meaningful position of authority at cG, so this feels like a bit of an absurd interpretation

A lot of Rob's complaints are about things that happened in the past, so I don't think it's crazy to interpret him as talking about people who worked at CG in the past.

I think the things Rob is saying still have some strawman-y nature to them, but I think they are reasonably accurate descriptors of Anthropic leadership, plus my best guesses of what Alexander (head of Coefficient Giving) and Zach (head of CEA) believe, which seems well-described by "Dario and a cluster of Open-Phil-ish people", and furthermore also of course constitutes an enormous fraction of the authority over broader EA.

I think that these people believe different things, and I don't think Rob's post particularly accurately describes any of them. For example, the Anthropic leadership doesn't really think of themselves as trying to coordinate with AI safety people or trying to suppress them. I don't think Alexander thinks "AI is going to become vastly superhuman in the near future" (and fwiw I don't think Dario thinks that either, he doesn't seem to believe in returns to intelligence substantially above human-level).

(sending quickly, I might be wrong)

A lot of Rob's complaints are about things that happened in the past, so I don't think it's crazy to interpret him as talking about people who worked at CG in the past.

Fair enough. I think that the people you list also used to believe things closer to what Rob is saying in the past, so at least we need to do a consistent comparison. Holden from 10 years ago seems to say a lot of the things that Rob is saying here, and Ajeya from a few years ago also said things more like this (more point 1 and 3, less point 2).

My guess is that it is worth digging up quotes here, but it's a lot of work, so I am not going to do it for now, but if it turns out to be cruxy, I can.

(Again, I don't think these are centrally the people Rob is talking about in either case. I think centrally he is talking about Anthropic, and then secondarily talking about how Open Phil people have related to Anthropic over the years, but I do still think his criticism is correct directionally for those people)

...I don't think Alexander thinks "AI is going to become vastly superhuman in the near future" (and fwiw I don't think Dario thinks that either, he doesn't seem to believe in returns to intelligence substantially above h

Ajeya from a few years ago also said things more like this (more point 1 and 3, less point 2).

I don't remember anything like this. I think it might be misremembered or a strained interpretation.

Here are points 1 and 3 for reference:

1. AI is going to become vastly superhuman in the near future; but being a good scientist means refusing to speculate about the potential novel risks this may pose. Instead, we should only expect risks that we can clearly see today, and that seem difficult to address today.

3. In general, people worried about AI risk should coordinate as much as possible to play down our concerns, so as not to look like alarmists. This is very important in order to build allies and accumulate political influence, so that we're well-positioned to act if and when an important opportunity arises.

I asked ChatGPT to read bioanchors (where I thought this was most likely to occur), and then to read all of her other writings looking for anything that fits that mode. Here's its reply, not finding anything.

The closest match it finds is that Ajeya often caveats her claims. For example from bio anchors:

...This is a work in progress and does not represent Open Philanthropy’s institution

I think you're right, and also it seems misleading / like a bad clustering to lump "the EAs" in with "Anthropic's leadership". I think those groups have some memetic connections, but they're not the same group!

More than 50% of the talent-weighted safety people in EA are literally employees of Anthropic! The ex-CEO of Open Phil now works at Anthropic, and is married to one of its founders. These groups have enormous overlap.

Like, there is such enormous overlap, and the overlap results in such an enormous amount of de-facto deference (being an employee of a company is approximately the strongest common deference relationship we have) that it makes sense to think of these as closely intertwined.

Yes, there are people who attach the EA label to themselves who are different here, sometimes even quite substantial clusters. But it's also IMO clear from Scott's response that he himself is also majorly deferring and is majorly supportive of Anthropic as a representative of EA, so this clearly isn't just a split between "everyone who works at Anthropic and everyone who doesn't".

Rob used "Open Phil" exactly two times. One time saying "a cluster of Dario and Open-Phil-ish people" and another time "...

Honestly, this is such a bad reply by Scott that I… don’t quite know whether I want to work on all of this anymore.

If this is how this ecosystem wants to treat people trying their hardest to communicate openly about the risks, and who are trying to somehow make sense of the real adversarial pressures they are facing, then I don't think I want anything to do with it.

I have issues with Rob's top-level tweet. I think it gets some things wrong, but it points at a real dynamic. It’s kind of strawman-y about things, and this makes some of Scott’s reaction more understandable, but his response overall seems enormously disproportionate.

Scott's response is extremely emblematic of what I've experienced in the space. Simultaneous extreme insults and obviously bad faith arguments ("actually, it's your fault that DeepMind was founded because you weren't careful enough with your comms"), and then gaslighting that no one faces any censure for being open about these things (despite the very thing you are reading being extremely aggro about the lack of strategic communication), and actually we should be happy that Ilya started another ASI lab, and that Jan Leike has some compute budget.

The whole ...

Unless you mean "making this my last day [on twitter]", which might or might not be a good idea.

If this is how this ecosystem wants to treat people trying their hardest to communicate openly about the risks, and who are trying to somehow make sense of the real adversarial pressures they are facing, then I don't think I want anything to do with it.

I don't think Scott speaks for the ecosystem. He's just a guy in it, and one who isn't even that closely connected to Anthropic or Coefficient Giving people. (E.g. you spend >10x as much time talking to people from those orgs as he does.) I think that the people in the ecosystem you're criticizing would not approve of Scott's post.

This is of course in contrast to Open Phil defunding almost everyone who has been pursuing this strategy and making mine and tons of other people's lives hell, and all kinds of complicated adversarial shit that I've been having to deal with for years, where absolutely there have been tons of attempts to sabotage people trying to pursue strategies like this.

I think this is not a good summary of what Coefficient Giving has done. (I do think it really sucks that they defunded Lightcone.)

I think that the people in the ecosystem you're criticizing would not approve of Scott's post.

I think this is false. I expect that Scott's post, if it were posted to the EA Forum, would be heavily upvoted and have an enormously positive agree/disagree ratio, and that in general people believe something pretty close to it.

There are a few exceptions (somewhat ironically a good chunk of the cG AI-risk people), but they would be relatively sparse. I think this is roughly what someone who is smart, but doesn't have a strong inside-view take about what they should do about AI-risk believes that they should act like if they want to be a good member of the EA community. My guess is it's also pretty close to what leadership at cG, CEA and Anthropic believe, plus it would poll pretty well at a thing like SES.

He's just a guy in it, and one who isn't even that closely connected to Anthropic or Coefficient Giving people.

The issue is of course not that Scott is right or wrong about what Anthropic or cG people believe. The issue is that he seems to be taking a view where you should be super strategic in your communications, sneer at anyone who is open about things, and measure your success in how many of...

I think this is false. I expect that Scott's post, if it were posted to the EA Forum, would be heavily upvoted and have an enormously positive agree/disagree ratio, and that in general people believe something pretty close to it.

The EA Forum is a trash fire, so who knows what would happen if this was published there.

My read of the social dynamics is that in places where people are inclined to defer to me or people like me, they might initially approve of the Scott thing for bad tribal reasons, but change their mind when they read criticism of it from me or someone like me (which is ofc part of why I sometimes bother commenting on things like this).

My guess is it's also pretty close to what leadership at cG, CEA and Anthropic believe, plus it would poll pretty well at a thing like SES.

I think that Scott's post would not overall be received positively by those people. Maybe you're saying that one of the directions argued for by Scott's post is approved of by those people? I agree with that more.

In case it matters to either of you, my guesses:

- I agree with Habryka that absent criticism Scott's post would be well received by an important group of people reasonably characterized as EA-ish AI safety people.

- Imo absent criticism Rob's post would be well received by a different group of people reasonably characterized as doomers. (Literally right before seeing this thread I saw another post on LW that is directionally correct but is mostly wrong or exaggerated in its details, and that was very well received.)

- Both posts are broadly wrong about lots of things, about equally so, such that most people would be better off having never encountered either of them.

- Tbc, my first-order intuitive impression is that Scott's post is much more directionally accurate. But I expect that is because I constantly experience people knifing me, pushing me to take strategies that systematically destroy my ability to do anything while gaining approximately no safety benefit, or making claims about members of groups that include me that are false of me, whereas I don't really experience any of the stuff that Rob gestures at, even though I expect it exists. Though Rob's post doesn't actually inform me of

I endorse you taking the space to figure out how you want to relate and doing what's right for you, I've increasingly updated to thinking that people doing things they're not wholeheartedly behind tends to be net bad in all sorts of sideways ways, but the effort would be weaker for your loss. Wherever you end up, I appreciate you having taken the strategy of speaking in public about things that usually aren't in a way that helped clarify the strategic situation for me many times.

(also, it's scary to see three of the people I'd put in the upper tiers of good communication and understanding where we're at with AI technically get into this intense conflict. I'm going to be thinking on this some and seeing if anything crystalizes which might help specifically, but in the meantime a few more general-purpose posts that might be useful memes for minimizing unhelpful conflict are A Principled Cartoon Guide to NVC, NVC as Variable Scoping, and Why Control Creates Conflict, and When to Open Instead)

I think you need to be a lot more deflationary about the g-word. If you think, "But 'gaslighting' is something Bad people do; Scott Alexander isn't Bad, so he would never do that", well, that might be true depending on what you mean by the g-word. But if the behavior Habryka is trying to point to with the word is more like, "Scott is adopting a self-serving narrative that minimizes wrongdoing by his allies and inflates wrongdoing by his rivals" (which is something someone might do without being Bad due to having "somewhat snapped"), well, why wouldn't the rivals reach for the g-word in their defense? What is the difference, from their perspective?

"Gaslighting" should probably be avoided because it is anywhere between meaningless and a fighting word depending on who says it and how.

The g-word is a very nasty accusation. It gets thrown around and means a bunch of stuff down to just "saying stuff I disagree with", but it shouldn't.

It is originally a conscious, malicious attempt to drive someone insane by strategically lying to them.

On the substance, people are honest but wrong an awful lot, and honest but massively overstating their case even more often. Assuming your rivals are malicious or dishonest when they're just wrong or overstating is a huge source of conflict and thereby confusion.

It's a really useful pointer towards a tactic that is relatively widespread and has no better word. I am personally happy to use other words, but I have the sense that sentences like "I am so very very tired of the ambiguous but ultimately strategic enough attempts at undermining my ability to orient in this situation by denying pretty clearly true parts of reality combined with intense implicit threats of consequences if I indicate I believe the wrong thing that might or might not be conscious optimizations happening in my interlocutors but have enough long-term coherence to be extremely unlikely to be the cause of random misunderstandings" wouldn't work that well.

That's also what your brain is doing when you say you don't want to work on this anymore. Scott doesn't want you to quit! (Partially because he values Lightcone's work, and partially because it would look bad for him if you can publicly blame your burnout on him.) Crucially, your brain knows this.

Man, I really wish this was the case, and it's a non-zero part of what is going on, but the vast majority of what I am expressing with my (genuine) desire to quit is the stress and frustration associated with the gaslighting, which is one level more abstract than the issue you talk about.

Like yes, there is a threat here being like "for fuck's sake, stop gaslighting or I am genuinely going to blow up my part of the pie", but it's not actually about the object level, and I don't actually have much of any genuine hope of that working in the same way one might expect from a negotiation tactic.

I am just genuinely actually very tired, and Scott changing his mind on this and going "oh yeah, actually you are right" actually wouldn't do much to make me want to not quit, because it wouldn't address the continuous gaslighting where every time anyone tries to talk about any of the adversarial dynamics, the...

Yeah, the frustrating part is almost always on a meta level. I think Zack's point about "No natural units of pie" applies to the gaslighting issue as well though. Asserting one's viewpoint means asserting it as truth which invalidates differing perspectives. "I disagree, you contradict, he gaslights".

It's difficult because sometimes the gas lights really don't seem to be dimming, and sometimes that perception is downstream of some motivated thinking because I really don't want to believe we're running out of oil already, dammit. And so the result is simultaneously kinda an honest statement of perspective (at least, as honest as these tend to get) while also being a (not-necessarily-consciously) motivated action pushing people to disregard their own senses. And then we have to decide how to judge this mess of bias and honesty, and if we don't judge such that the product after a round trip of perceiving C/D and responding accordingly we get more C than last time... shit's fucked. And without objective units of pie that people can agree on when judging who was in the wrong.

So like... am I trying to gaslight people into questioning their own sanity so they accept what I want them to ac...

I wrote a reply to Scott on Twitter, before seeing the discussion here; I think it's a lot clearer than my original (IMO sloppy) tweet.

I've copied the reply below; see also my reply to Buck.

_____________________________________________________

To clarify the claim I’m making: I’m not trying to throw EA under a bus. This thread spun off from a discussion where I said I thought EA’s net impact on AI x-risk was probably positive, but I was highly uncertain.

Somebody asked what the bad components of EA’s impact were, and I went off on Anthropic, and on EA’s (and especially OpenPhil’s) entanglement with the company and their support for Anthropic’s operations. (To the extent that a lot of x-risk-adjacent EA seems to function, in practice, as a talent pipeline for Anthropic.)

I also said that I think OpenPhil’s bet on OpenAI was a disaster. And I said that there’s a culture of caginess, soft-pedaling, and trying-to-sound-reassuringly-mundane that I think has damaged AI risk discourse a fair amount, and that various people in and around OpenPhil have contributed to.

I’m restating this partly to be clear about what my exact claims are. E.g., I’m not claiming that items 1+2+3 are things OpenPhi...

I respect this - all of our options are bad and unlikely to work, the situation is desperate, and I have no plan better than playing a portfolio of all the different desperate hard strategies in the hopes that one of them works.

I used to support such a portfolio approach, but subsequently realized that it's actually not safe (i.e., is potentially net-negative even aside from opportunity costs), or the portfolio has to be restricted a lot. This is because, due to the existence of illegible AI safety problems, solving some (i.e., more legible) AI safety problems can actually make the overall situation worse, by increasing the chances of an unsafe AI being developed or deployed.

According to this logic, safer strategies include:

- Pausing AI, and other actions that help broadly with both legible and illegible problems, like improving societal epistemic health.

- Making illegible problems more legible.

- Working directly on illegible problems.

Another reason to think that many "AI safety strategies" are actually not safe is that even nominally altruistic humans are more power/status-seeking[1] than actually altruistic, and one way this manifests is that they tend to neglect risks more than they sho...

Copying over my response to Scott from Twitter (with a few additions in square brackets):

I think my biggest disagreement here is about the concept of strategic communications.

In particular, you claim that MIRI should have been more PR-strategic to avoid hyping AI enough that DeepMind and OpenAI were founded.

Firstly, a lot of this was not-very-MIRI. E.g. contrast Bostrom’s NYT bestseller with Eliezer popularizing AI risk via fanfiction, which is certainly aimed much more at sincere nerds. And I don’t think MIRI planned (or maybe even endorsed?) the Puerto Rico conference.

But secondly, even insofar as MIRI was doing that, creating a lot of hype about AI is also what a bunch of the allegedly PR-strategic people are doing right now! Including stuff like Situational Awareness and AI 2027, as well as Anthropic. [So it's very odd to explain previous hype as a result of not being strategic enough.]

You could claim that the situation is so different that the optimal strategy has flipped. That’s possible, although I think the current round of hype plausibly exacerbates a US-China race in the same way that the last round exacerbated the within-US race, which would be really bad.

But more plausi...

More than any other group I've been a part of, rationalists love to develop extremely long and complicated social grievances with each other, taking pages and pages of text to articulate. Maybe I'm just too stupid to understand the high level strategic nuances of what's going on -- what are these people even arguing about? The exact flavor of comms presented over the last ten years?

As someone who spends a significant part of his time briefing policymakers in Europe, ministerial advisors, senior civil servants in AI governance, I want to point out something obvious from where I stand, but absent from this discussion.

The "radical transparency vs. strategic communication" debate presupposes that framing is the bottleneck. It isn't. The bottleneck is volume. Most policymakers have never heard the argument, no matter how you frame it. Among the ones I interact with, maybe 2% have been exposed to the problem enough to have an opinion. Another 10% or so have heard something, but mostly through the Yann LeCun-adjacent dismissals, and formed their view from that. The remaining ~88%, including people in very important AI governance positions, have simply never had the conversation.

The question of which approach works better is real but secondary. What's missing is more people doing this work at all. It's a campaign, and the limiting factor is coverage, not the message.

To give a concrete data point: the only policymaker in my circles who has ever brought up "If Anyone Builds It, Everyone Dies" is Lord Tim Clement-Jones, chair of the All-Party Parliamentary Group on AI in the UK. And he was probably already sympathetic. That's one person.

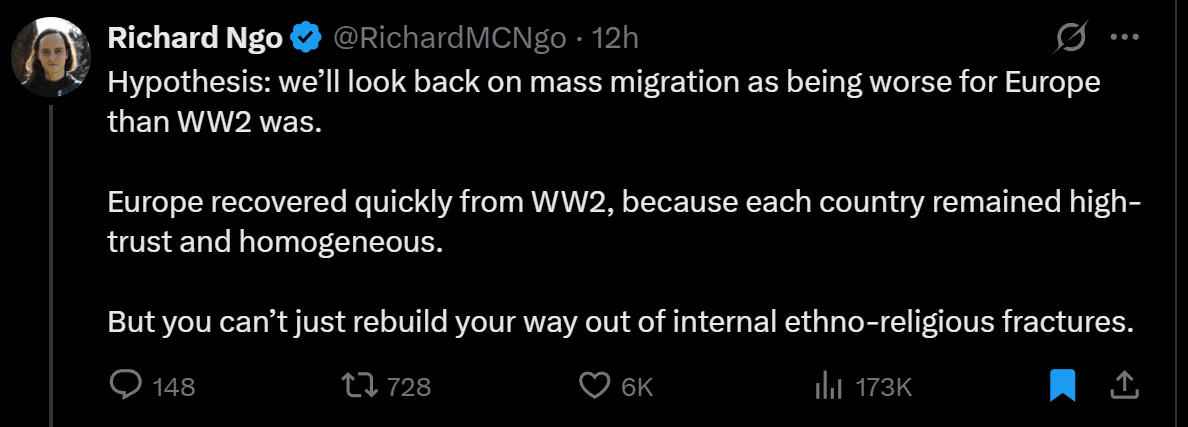

There is a phenomenon in which rationalists sometimes make predictions about the future, and they seem to completely forget their other belief that we're heading toward a singularity (good or bad) relatively soon. It's ubiquitous, and it kind of drives me insane. Consider these two tweets:

Timelines are really uncertain and you can always make predictions conditional on "no singularity". Even if singularity happens you can always ask superintelligence "hey, what would be the consequences of this particular intervention in business-as-usual scenario" and be vindicated.

For a while now, some people have been saying they 'kinda dislike LW culture,' but for two opposite reasons, with each group assuming LW is dominated by the other—or at least it seems that way when they talk about it. Consider, for example, janus and TurnTrout who recently stopped posting here directly. They're at opposite ends and with clashing epistemic norms, each complaining that LW is too much like the group the other represents. But in my mind, they're both LW-members-extraordinaires. LW is clearly obviously both, and I think that's great.

People are very worried about a future in which a lot of the Internet is AI-generated. I'm kinda not. So far, AIs are more truth-tracking and kinder than humans. I think the default (conditional on OK alignment) is that an Internet that includes a much higher population of AIs is a much better experience for humans than the current Internet, which is full of bullying and lies.

All such discussions hinge on AI being relatively aligned, though. Of course, an Internet full of misaligned AIs would be bad for humans, but the reason is human disempowerment, not any of the usual reasons people say such an Internet would be terrible.

One difference between the releases of previous GPT versions and the release of GPT-5 is that it was clear that the previous versions were much bigger models trained with more compute than their predecessors. With the release of GPT-5, it's very unclear to me what OpenAI did exactly. If, instead of GPT-5, we had gotten a release that was simply an update of 4o + a new reasoning model (e.g., o4 or o5) + a router model, I wouldn't have been surprised by their capabilities. If instead GPT-4 were called something like GPT-3.6, we would all have been more or less equally impressed, no matter the naming. The number after "GPT" used to track something pretty specific that had to do with some properties of the base model, and I'm not sure it's still tracking the same thing now. Maybe it does, but it's not super clear from reading OpenAI's comms and from talking with the model itself. For example, it seems too fast to be larger than GPT-4.5.

For example, it seems too fast to be larger than GPT-4.5.

A "GPT-5" named according to the previous convention in terms of pretraining compute would need at least 1e27 FLOPs (50x original GPT-4), which on H100/H200 can at best be done in FP8, for example with 150K H100s for 3 months at 40% utilization. (GB200 NVL72 is too recent to use for this pretraining run, though there is a remote possibility of B200.) A compute optimal shape for this model would be something like 8T total params, 1T active[1].
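As a rough sanity check on the numbers above, here is a back-of-envelope sketch. It assumes a peak dense FP8 throughput of about 1.979e15 FLOP/s per H100 (the SXM spec figure), takes "3 months" as 90 days, and uses the common C ≈ 6·N·D approximation with N as active params to get an implied token count. Those three choices are my assumptions, not something stated in the comment.

```python
# Back-of-envelope check of the pretraining compute estimate.
# Assumptions (not from the comment): per-GPU peak FP8 throughput,
# 90 days for "3 months", and the C ~ 6 * N_active * D rule of thumb.

H100_FP8_DENSE_FLOPS = 1.979e15   # assumed peak dense FP8 FLOP/s per H100 SXM
NUM_GPUS = 150_000                # from the comment
UTILIZATION = 0.40                # from the comment
SECONDS = 90 * 24 * 3600          # "3 months" taken as 90 days

# Total useful FLOPs delivered by the cluster over the run.
total_flops = NUM_GPUS * H100_FP8_DENSE_FLOPS * UTILIZATION * SECONDS

# Tokens implied by a 1e27 FLOPs budget with 1T active params.
TARGET_FLOPS = 1e27
ACTIVE_PARAMS = 1e12
implied_tokens = TARGET_FLOPS / (6 * ACTIVE_PARAMS)

print(f"cluster FLOPs over the run: {total_flops:.2e}")
print(f"implied training tokens:    {implied_tokens:.2e}")
```

Under these assumptions the cluster lands a bit under 1e27 FLOPs, so the "150K H100s for 3 months" figure is the right order of magnitude for the stated target.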

The speed of GPT-5 could be explained by using GB200 NVL72 for inference, even if it's an 8T total param model. GPT-4.5 was slow and expensive likely because it needed many older 8-chip servers (which have 0.64-1.44 TB of HBM) to keep in HBM with room for KV caches, but a single GB200 NVL72 has 14 TB of HBM. At the same time, it wouldn't help as much with the speed of smaller models (but it would help with their output token cost because you can fit more KV cache in the same NVLink domain, which isn't necessarily yet being reflected in prices, since GB200 NVL72 is still scarce). So it remains somewhat plausible that GPT-5 is essentially GPT-4.5-thinking running on better hardwar...
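The HBM argument can be sketched the same way. This assumes 1 byte per parameter (FP8 weights) for the hypothetical 8T total param model and ignores KV cache and activation memory, so it is a lower bound on the number of servers rather than a real deployment plan. The function name `servers_needed` is just for illustration.

```python
import math

# Hypothetical model from the comment: 8T total params.
TOTAL_PARAMS = 8e12
BYTES_PER_PARAM = 1  # assumption: FP8 weights, 1 byte each

# Weight footprint in TB (1 TB = 1e12 bytes).
weights_tb = TOTAL_PARAMS * BYTES_PER_PARAM / 1e12


def servers_needed(hbm_tb_per_server: float) -> int:
    """Minimum servers whose combined HBM holds the weights (no KV cache)."""
    return math.ceil(weights_tb / hbm_tb_per_server)


# HBM capacities quoted in the comment.
for name, hbm_tb in [
    ("8-chip server, low end", 0.64),
    ("8-chip server, high end", 1.44),
    ("GB200 NVL72", 14.0),
]:
    print(f"{name}: {servers_needed(hbm_tb)} server(s) for weights alone")

# Headroom left for KV caches on a single GB200 NVL72.
print(f"NVL72 headroom: {14.0 - weights_tb:.1f} TB")
```

With these assumptions the weights alone span many 8-chip servers but fit in a single NVL72 with several TB left for KV caches, which is the shape of the argument above.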

Alignment seems quite similar to the problem of imbuing AIs with artistic taste. Morality and taste are both hard to verify and subjective (or inter-subjective). Alignment has in practice the further difficulty that deception may play a role. I.e., even after managing to train moral principles into an AI system, you have to make sure they actually act as a guide for action.

That said, my very subjective impression is that AI is far ahead in terms of ethical taste compared to artistic taste. Perhaps this is thanks to the fact that alignment has been considered a core AI problem for a much longer time.

Previously, I said:

People are very worried about a future in which a lot of the Internet is AI-generated. I'm kinda not. So far, AIs are more truth-tracking and kinder than humans. I think the default (conditional on OK alignment) is that an Internet that includes a much higher population of AIs is a much better experience for humans than the current Internet, which is full of bullying and lies.

All such discussions hinge on AI being relatively aligned, though. Of course, an Internet full of misaligned AIs would be bad for humans, but the reason is human disempowerment, not any of the usual reasons people say such an Internet would be terrible.

I feel good about this prediction so far. Instagram and TikTok now have a significant amount of AI-generated videos (though they haven't overrun these platforms by any means). The categories I've seen so far are:

- Low-brow animated stories.

- Fantasy or sci-fi scenarios with music.

- Colorful AI-generated art.

- Cute meme animals.

The greatest sin of this content is that it's often low quality. But it's not really that great of a sin. I think, all things considered, AI slop is above average content. Other content often contains bullying, meanness,...

There is also a significant category of "AI video passing itself off as a real video", and many videos have people debating in the comments if it's real or AI. This seems like it can erode trust and is generally negative.

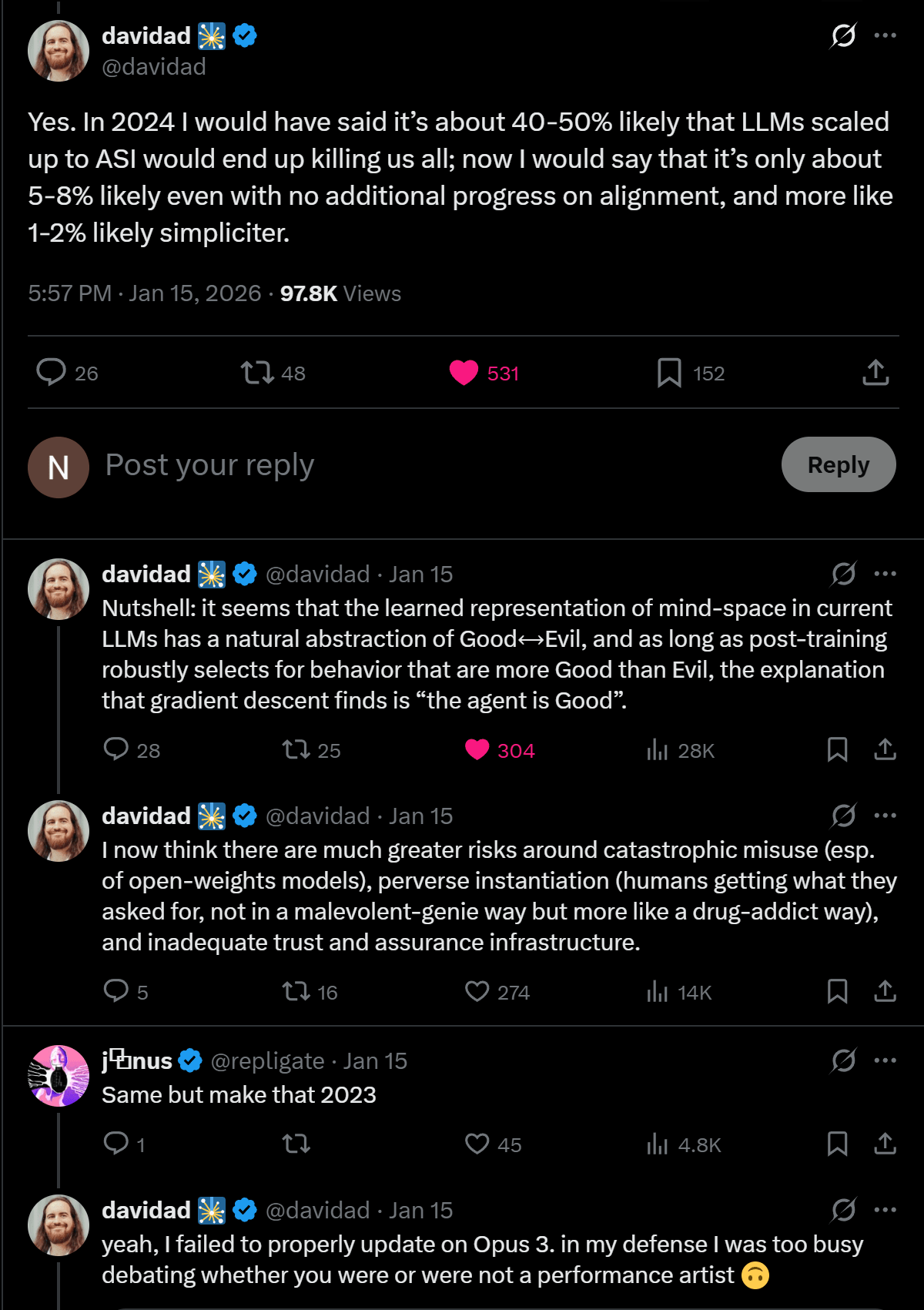

Rationalists and Pause AI people on X are accusing Davidad of suffering from AI psychosis. I think it's them who have lost the plot actually, not Davidad. The move here looks political, rather than truth-tracking. "Davidad is now my political opponent, so I'm accusing him of being crazy." This happened to Emmett Shear too at some point.

I also strongly believe AI psychosis to be a far more limited phenomenon than people here seem to believe. I think you're treating it as a good soldier in your army of arguments rather than investigating it truthfully for what it is.

If we're in a history simulation, I don't think it's unlikely that the simulators will just set us free in their reality. I'm expecting a more enlightened humanity to consider simulated humans moral patients.

There's no real difference between a simulated human and a human. Both are causally-interacting signals in a computer. If you inhabit a simulation, you automatically inhabit base reality just as much as native base-reality beings. They're also just interacting signals. It's just that you're embedded in some other software and they're not, but that's not fundamental at all.

People seemed confused by my take here, but it's the same take Davidad expressed in this thread that has been making the rounds: https://x.com/davidad/status/2011845180484133071

I'm pretty sure a human brain could, in principle, visualize a 4D space just as well as it visualizes a 3D space, and that there are ways to make that happen via neurotech (as an upper bound on difficulty).

Consider: we know a lot about how 4-dimensional spaces behave mathematically, probably no less than how 3-dimensional spaces work. Once we know exactly how the brain encodes and visualizes a 3D space in its neurons, we probably also understand how it would do it for a 4D space if it had sensory access to it. Given good enough neurotech, we could manually...

Has anyone proposed a solution to the hard problem of consciousness that goes:

- Qualia don't seem to be part of the world. We can't see qualia anywhere, and we can't tell how they arise from the physical world.

- Therefore, maybe they aren't actually part of this world.

- But what does it mean they aren't part of this world? Well, since maybe we're in a simulation, perhaps they are part of the simulation. Basically, it could be that qualia : screen = simulation : video-game. Or, rephrasing: maybe qualia are part of base reality and not our simulated reality in the same way the computer screen we use to interact with a video game isn't part of the video game itself.

A UBI of AI or compute kind of makes sense to me under safe superintelligence. I don't know if AI companies will still be incentivized to sell AI if they can just use it themselves, but a state isn't under the same incentives. Instead of centralizing the use of its AI systems, it could distribute access to all citizens so they can use it productively themselves.

Why not just give people money so they can use it to pay for compute? I guess it's a matter of which option you expect to lead to the most productive and freedom-promoting outcome for everyone in the long term. A UBI of AI access helps ensure that people receive a directly useful productive resource, and could reduce their dependence on cash transfers once they become rich enough from using AI productively.

This scenario assumes it's possible for superintelligence to remain an assistant, on guardrails, to each individual.

Slop is in the mind, not in the territory. I will not call slop something that I like, regardless of what other people call slop.

Reaction request: "bad source" and "good source" to use when people cite sources you deem unreliable vs. reliable.

I know I would have used the "bad source" reaction at least once.

Is anyone working on experiments that could disambiguate whether LLMs talk about consciousness because of introspection vs. "parroting of training data"? Maybe some scrubbing/ablation that would degrade performance or change the answer only if introspection was useful?

Iff LLM simulacra resemble humans but are misaligned, that doesn't bode well for S-risk chances.

An optimistic way to frame inner alignment is that gradient descent already hits a very narrow target in goal-space, and we just need one last push.

A pessimistic way to frame inner misalignment is that gradient descent already hits a very narrow target in goal-space, and therefore S-risk could be large.

This community has developed a bunch of good tools for helping resolve disagreements, such as double cruxing. It's a waste that they haven't been systematically deployed for the MIRI conversations. Those conversations could have ended up being more productive and we could've walked away with a succinct and precise understanding of where the disagreements are and why.

If you try to write a reward function, or a loss function, that captures human values, that seems hopeless.

But if you have some interpretability techniques that let you find human values in some simulacrum of a large language model, maybe that's less hopeless.

The difference between constructing something and recognizing it, or between proving and checking, or between producing and criticizing, and so on...

As a failure mode of specification gaming, agents might modify their own goals.

As a convergent instrumental goal, agents want to prevent their goals from being modified.

I think I know how to resolve this apparent contradiction, but I'd like to see other people's opinions about it.

I keep seeing absolutely terrible epistemics from like 50% of AI Safety. From people who previously seemed reasonable. This quick take was prompted by an example I just saw, from Connor Leahy: https://x.com/JoshWalkos/status/2021087240126976511