Seems a bit weird to have 10% probability mass for the next 15 years followed by 40% probability mass over the subsequent 10 years. That seems to indicate a fairly strong view about how the next 20 years will go. IMO the probability of AGI in the next 15 years should be substantially higher than 10%.
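To make the jump concrete, here is a rough sketch of the average probability mass per year in each window, using only the figures quoted above (10% over 15 years, then 40% over 10 years). Spreading the mass uniformly within each window is my simplifying assumption, and `mass_per_year` is just an illustrative helper name.

```python
def mass_per_year(mass, years):
    """Average probability mass per year, assuming it is spread uniformly."""
    return mass / years

# Figures taken from the comment above; uniform spread is an assumption.
first_window = mass_per_year(0.10, 15)   # next 15 years
second_window = mass_per_year(0.40, 10)  # subsequent 10 years
ratio = second_window / first_window

print(f"{first_window:.2%} per year, then {second_window:.2%} per year")
print(f"jump between windows: about {ratio:.0f}x")
```

Under that assumption, the implied yearly rate rises about sixfold at the window boundary.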

Whoa, I hadn’t noticed that. The old predictions put 40% probability on AGI being developed in a 5-year window.

That was going to be my initial comment, then I noticed that the blog post addresses that problem. Not sufficiently IMO.

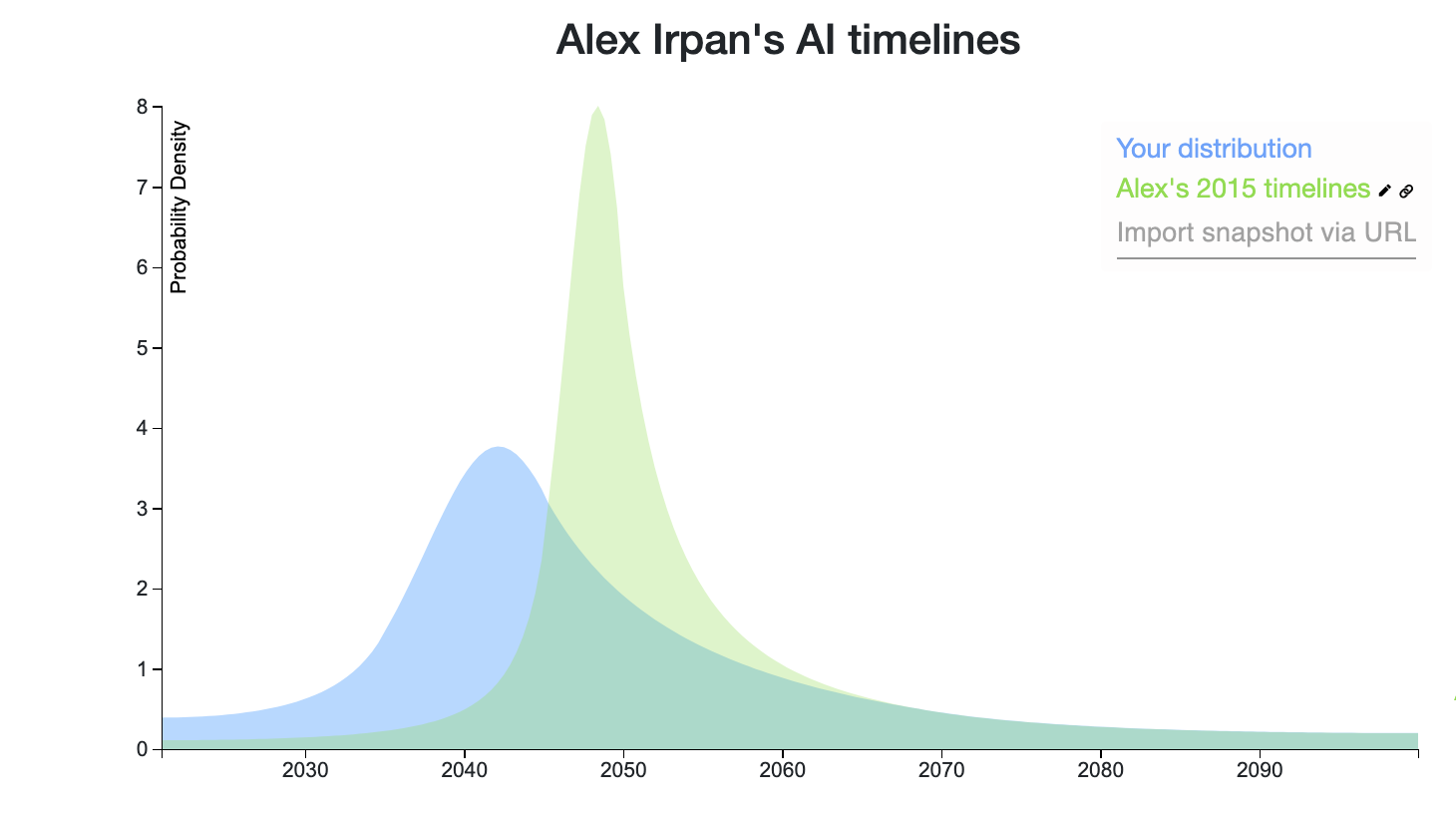

Yeah, this was a lot more obvious to me when I plotted it visually: https://elicit.ought.org/builder/om4oCj7jm

(NB: I work on Elicit and it's still a WIP tool)

Ahhhh this is so nice! I suspect a substantial fraction of people would revise their timelines after seeing what they look like visually. I think encouraging people to plot out their timelines is probably a pretty cost-effective intervention.

Sounds like we could have a thread for that. The image above looks great; I would be interested in seeing more comparisons like it, if they're easy to generate using Elicit.

Yes, let's please create a thread. If you don't I will. Here's mine: (Ignore the name, I can't figure out how to change it)

Can you make the thread (make it a question post?) and share it with me? Then I'll suggest any rewrites and we can publish it?

I think the title is particularly confusing because it includes an "I" statement. Maybe change to:

Alex Irpan: "My AI Timelines Have Sped Up"

If 65% of AI improvements will come from compute alone, I find it quite surprising that the post author assigns only a 10% probability to AGI by 2035. By that time, we should have between 20x and 100x more compute per $. And we can easily forecast that AI training budgets will increase 1000x over that time, as a shot at AGI justifies the ROI. I think he is giving way too much weight to the computational performance of the human brain.
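As a back-of-the-envelope sketch of what those numbers imply when combined: if the compute-per-$ gain and the budget increase simply multiply (my assumption, along with the helper name `effective_compute_multiplier`), the total training compute available by 2035 would grow as follows.

```python
def effective_compute_multiplier(compute_per_dollar_gain, budget_gain):
    """Total compute growth, assuming the two gains multiply independently."""
    return compute_per_dollar_gain * budget_gain

# Figures from the comment: 20x to 100x compute per $, 1000x budgets.
low = effective_compute_multiplier(20, 1000)
high = effective_compute_multiplier(100, 1000)

print(f"Implied growth in training compute: {low:,}x to {high:,}x")
```

That is a range of 20,000x to 100,000x, under the commenter's own inputs.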

For this post, I’m going to take artificial general intelligence (AGI) to mean an AI system that matches or exceeds humans at almost all (95%+) economically valuable work.

I'm not sure this is such a good operationalization. I believe that if you looked at the economically valuable work that humans were doing 200 years ago (mostly farming, as I understand it), more than 95% of it is automated today. And we don't spend 95% of GDP on farming today.

So I'm not quite sure what the above means. Does it mean 95% of GDP spent on compute? Or unemployment at 95%? Or that 95% of the jobs done today by people are done then by computers? If that last one, then how do you measure it if jobs have morphed such that there's neither a human nor a computer clearly doing a job that today is done by a human?

I think that productivity is going to increase. And humans will continue to do jobs where they have a comparative advantage relative to computers. And what those comparative advantages are will morph over time. (And in the limit, if I'm feeling speculative, I think being a producer and a consumer might merge, as one of the last areas where you'll have a comparative advantage is knowing what your own wants are.)

But given that prices will be set based on supply and demand, it's not quite obvious to me how to measure when 95% of economically valuable work is done by computers. For a given task that involves both humans and computers, even if computers are doing "more" of the work, you won't necessarily spend more on the computers than on the people if the supply of compute is plentiful. So, in some hard-to-define sense, computers may be doing most of the work, but measured purely in dollars they might not be. And one could argue that this is already the case (since we've automated so much of farming and other things that people used to do).

Alternatively, you could operationalize 95% of economically valuable work being done by computers as the total dollars spent on compute being roughly 20x all wages. That's clear enough, I think, but I suspect it is not exactly what Alex had in mind. I also think it may be a condition that never holds, even when AI is strongly superhuman, depending on how we end up distributing the spoils of AI and what kind of economic system we end up with at that point.
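For reference, a small sketch of where a figure like 20x comes from. It assumes (my framing, not Alex's) that "work" is measured as a share of total spend on compute plus wages; `compute_to_wage_ratio` is an illustrative helper.

```python
from fractions import Fraction

def compute_to_wage_ratio(compute_share):
    """Ratio of compute spend to wages, given compute's share of
    (compute + wages). Assumes these are the only two cost categories."""
    return compute_share / (1 - compute_share)

# A 95% compute share leaves 5% for wages.
ratio_95 = compute_to_wage_ratio(Fraction(95, 100))
print(f"compute spend is {ratio_95}x wages at a 95% share")
```

Strictly, a 95% share works out to 19x wages, so 20x is a rounded threshold.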

Blog post by Alex Irpan. The basic summary:

The main underlying shifts: more focus on improvements in tools, compute, and unsupervised learning.