This is how some of the most effective, transformative labs in the world have been organized, from Bell Labs to the MRC Laboratory of Molecular Biology.

I have a post currently in my drafts pile titled "Leading The Parade". The basic idea is that lots of supposedly-successful scientists, politicians, organizations, etc., were mostly "leading the parade": walking somewhat in front of everyone else, but not actually counterfactually impacting the trajectory of progress much. It's the opposite of counterfactual impact.

On the other hand, at least some scientists etc. were counterfactually impactful. But "how much people talk about how great they were" is not a very reliable proxy for how counterfactually impactful people/organizations were; we need to look at some specific kinds of historical evidence which people usually ignore.

I use two cases from Bell Labs as central examples:

When asking whether a historical scientist or inventor had much counterfactual impact or was just leading the parade, one main type of evidence to look for is simultaneous discovery. If multiple people independently made the discovery at roughly the same time, then counterfactual impact is clearly low; the discovery would have been made even without the parade-leader. On the other hand, if nobody else was even close to figuring it out, then that’s evidence of counterfactual impact.

For example, let’s consider two big breakthroughs made at Bell Labs in the late 1940s: the transistor, and information theory.

In the case of the transistor, we don’t have evidence quite as clear-cut as simultaneous discovery, but we have evidence almost that clear-cut. First, the people who developed the transistor at Bell Labs (notably Bardeen, Brattain and Shockley) were themselves quite worried that semiconductor researchers elsewhere (e.g. Purdue) would beat them to the punch. So they themselves apparently did not expect their impact to be highly counterfactual (other than to who got the patent).

Second, there were actually two transistor inventions at Bell Labs. Bardeen and Brattain figured out their transistor design first. Shockley, unhappy at being scooped, came along and figured out a totally different design within a few months. It’s not quite simultaneous independent invention, but clearly the problem was relatively tractable and plenty of people were working on it.

So, the inventors of the transistor were mostly “leading the parade”; they didn’t have much counterfactual impact on the technology’s discovery.

Shannon’s invention of information theory is on the other end of the spectrum.

Ask an early twentieth century communications engineer, and they’d probably tell you that different kinds of signals need different hardware. Sure, you could send Morse over a telephone line, but it would be wildly inefficient. To suggest that any signal can be sent with basically-the-same throughput over a given transmission channel would sound crazy to such an engineer; it wasn’t even in the space of things which most technical people considered.

So when Shannon showed up with information theory, ~nobody saw it coming at all. There was (as far as I know) no simultaneous discovery, nor anyone even close to figuring out the core ideas (i.e. fungibility of channel capacity). Shannon was probably not leading the parade; his impact was highly counterfactual.

Summary and upshot of all this:

- It's pretty straightforward to read histories in order to look for counterfactual impact

- ... but hardly anyone actually does that.

- Standard narratives about which people/organizations were "highly impactful" seem pretty poorly correlated with counterfactual impact.

- So I expect there's a bunch of low-hanging intellectual fruit here, to be picked by going back through histories of lots of people/organizations and actually looking for evidence for/against counterfactual impact (e.g., simultaneous discovery), and then compiling new lists of famous people/orgs which were/weren't counterfactually impactful.

- Absent that sort of project, I see claims like "This is how some of the most effective, transformative labs in the world have been organized, from Bell Labs to the MRC Laboratory of Molecular Biology", and I'm like... ummm, no, I'm not buying that these are some of the most effective, transformative labs in the world.

Can someone destroy my hope early by giving me the Molochian reasons why this change hasn't been made already and never will be?

Govt. spending is a ratchet that only goes one direction; replacing dysfunctional agencies costs jobs and makes political enemies. Reform might be more practical, but, much as with people, it's very hard to reform an agency that doesn't want to change. You'd be talking about sustained expenditure of political capital, the sort of thing that requires an agency head who's invested in the change and popular enough with both parties to get to spend a few administrations working at it.

Edit: I answered separately above with regard to private industry.

Letting people specialize as “science managers” sounds in practice like transferring the reins from scientists to MBAs, something much maligned at Boeing. Similarly, having grants distributed by people who aren’t practicing scientists sounds like a great way to swap the risk of professional financial retaliation for politicians setting the direction of funding.

Who says they would be MBAs? The best science managers are highly technical themselves and started out as scientists. It's just that their career from there evolves more in a management direction.

Once you eliminate the requirement that the manager be a practicing scientist, the roles will become filled with people who like managing, and are good at politics, rather than doing science. I’m surprised this is controversial. There is a reason the chair of academic departments is almost always a rotating prof in the department, rather than a permanent administrator. (Note: “was once a professor” is not considered sufficient to prevent this. Rather, profs understand that serving as chair for a couple years before rotating back into research is an unpleasant but necessary duty.)

We see this with doctors too. As the US medical system consolidates, and private practices are squeezed to a tinier and tinier fraction of docs, slowly but surely all the docs become employees of hospitals and the people in charge are MBA-types. Some of them have MDs, and once practiced medicine, but they specialize in management and they don’t come back.

You can of course argue that the downside is worth the benefits. But the existence and size of the downside are pretty clear from history, and need to be addressed in such a system.

Other examples:

- “Career politician” is something of a slur. It seems widely accepted (though maybe you dispute it?) that folks who specialize in politics certainly become better at winning politics (“more effective”), but that this also selects for politicians who are less honest or otherwise not well aligned with their constituents.

- Tech startups still led by their technical CEO seem to do better than those where that CEO has been replaced with a “career CEO”. Obviously there are selection effects, but career CEOs are generally believed to be more focused on the short term and on power.

People have tried to fix these problems by putting constraints on managers (either through norms/stigmas about “non-technical” managers or explicit requirements that managers must, e.g., have a PhD). And probably these have helped some (although they tend to get Goodharted, e.g., people who get MDs in order to run medical companies without any desire to practice medicine). And certainly there are times when technical people are bad managers and do more damage than their knowledge can possibly make up for.

But like, this tension between technical knowledge and specializing in management (or grant evaluation) seems like the crux of the issue that must be addressed head-on in any theorizing about the problem.

As a scientist I strongly agree. It seems like there have been a few steps towards this in recent years, for example with things like the Arc Institute or FROs. Hopefully this model gets the attention of government funders.

One of the theories on why more innovations (heliocentrism, discovery of the New World, etc.) happened in Europe instead of China (where astronomy, navigation, etc. were far more advanced) is that in China, there was a lot more centralization. If you went to the Emperor and couldn't convince him that the earth was round, you were done. In Europe you could shop your idea around to a bunch of Kings and Queens until someone liked your idea.

Success in science and innovation isn't driven by efficiency. It's driven by diversity. You want to have a lot of little groups trying out crazy ideas.

To understand this, it's helpful to understand de Moivre's equation, which Howard Wainer described in the article “The Most Dangerous Equation.” I summarize it below.

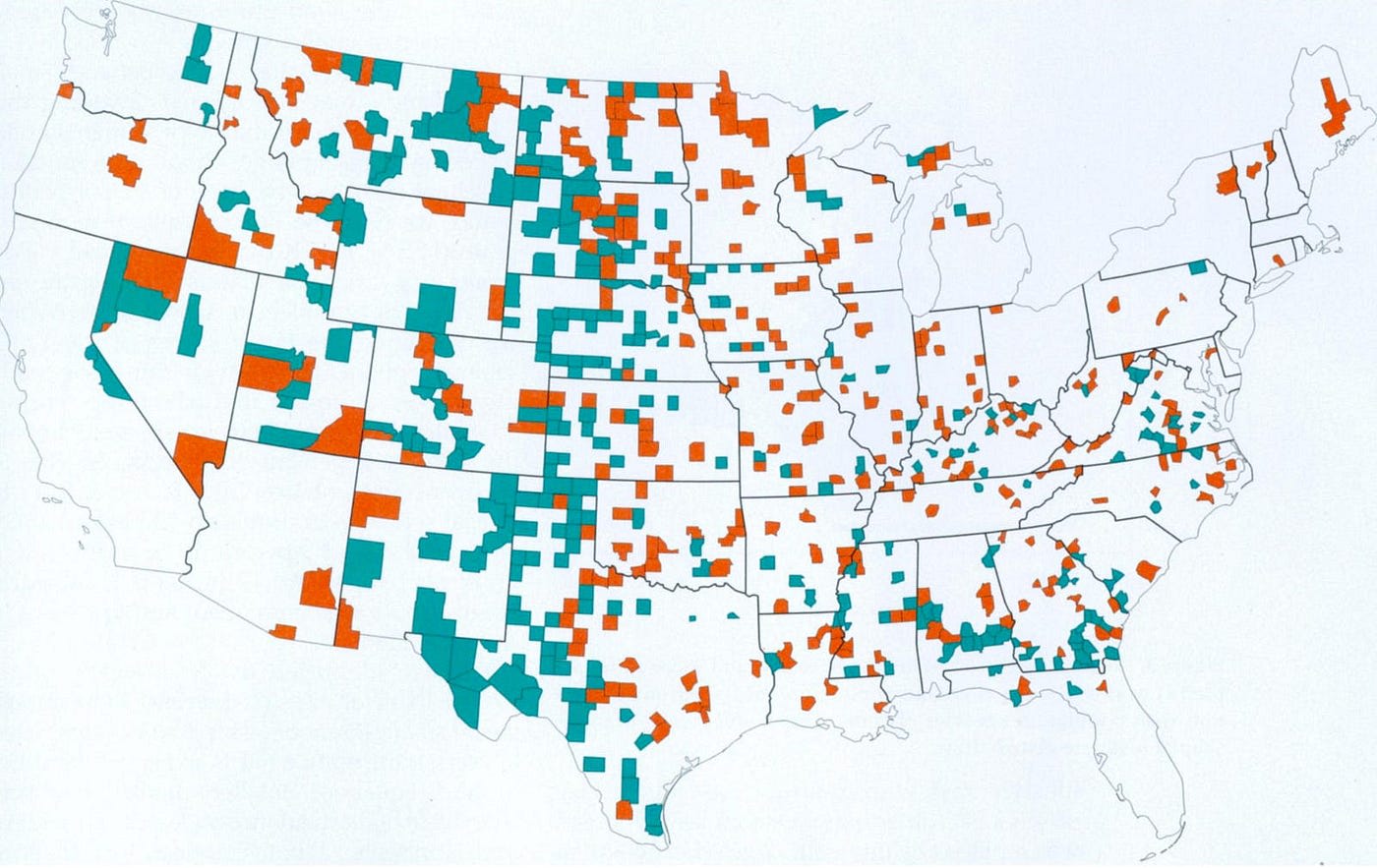

Let’s say you wanted to figure out what causes kidney cancer. A reasonable question to ask might be, “which counties in the U.S. have the highest rates of kidney cancer?”

The answer is that rural, sparsely populated counties have the highest rates. You might think that perhaps this was due to pesticides, or lack of access to healthcare, or some other factor related to the rural lifestyle.

However, if you were to ask which counties have the lowest rates, you would find that rural, sparsely populated counties also have the lowest rates. In fact, the counties are often adjacent. See below. The red counties have the highest rates of kidney cancer, and the teal counties the lowest.

What’s going on?

Well, when you have only a few people in the county, the likelihood that there will be very high or low rates, due simply to chance, is high. For example, if there were only 2 people in the county and 1 person got cancer, that would be 50%. If 0 out of 2 got cancer, it would be 0%.

This is why the best (and the worst) hospitals in the country or the best (and the worst) places to live often are small hospitals and small towns. And why the best (and the worst) science is done by small groups or individual scientists. Statistically, the smaller the sample size, the greater the likelihood of seeing an outlier.

This phenomenon was discovered by de Moivre, and made famous by Wainer’s article.
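A small simulation can make the kidney-cancer example concrete. This is a sketch under stated assumptions, not real data: every county is given the same hypothetical underlying rate (`TRUE_RATE = 0.01`), and the county sizes (50 vs. 10,000 people) and counts (200 of each) are made up for illustration. The spread of an observed rate shrinks like `sqrt(p(1 - p)/n)`, which is de Moivre's `σ/√n` applied to a proportion.

```python
import math
import random
import statistics

TRUE_RATE = 0.01  # hypothetical: identical underlying risk in every county


def county_rates(population, n_counties, rng):
    """Observed case rate in each of n_counties counties of a given population."""
    rates = []
    for _ in range(n_counties):
        # Each resident independently gets the disease with probability TRUE_RATE.
        cases = sum(rng.random() < TRUE_RATE for _ in range(population))
        rates.append(cases / population)
    return rates


def standard_error(population, p=TRUE_RATE):
    """de Moivre's equation for a proportion: spread shrinks like 1/sqrt(n)."""
    return math.sqrt(p * (1 - p) / population)


rng = random.Random(42)
small = county_rates(50, 200, rng)      # hypothetical sparsely populated counties
large = county_rates(10_000, 200, rng)  # hypothetical populous counties

for name, rates, n in (("small", small, 50), ("large", large, 10_000)):
    print(f"{name}: lowest={min(rates):.4f} highest={max(rates):.4f} "
          f"spread={statistics.pstdev(rates):.4f} "
          f"(de Moivre predicts {standard_error(n):.4f})")
```

Both the highest and the lowest observed rates come from the small counties, even though in this toy model nothing distinguishes them from the large ones except size.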

The point is, as a group gets larger and larger, innovation regresses more and more toward the mean. This is OK if you're working on a weakest-link problem (where what you're doing is preventing mistakes), but in endeavors like science, which is a strongest-link problem (where what matters are the few huge breakthroughs), you want to make each group as small as possible.
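The strongest-link versus weakest-link distinction can be sketched with a toy model. The key assumption is an illustrative one, not something established above: a group's output is simply the average quality of its members' ideas, so a bigger group regresses toward the mean. The same 1,000 hypothetical researchers are split into groups of 1, 10, or 100; a strongest-link field cares about the best group, a weakest-link field about the worst.

```python
import random
import statistics


def best_and_worst_group(draws, group_size):
    """Split draws into consecutive groups and score each group by its mean."""
    groups = [draws[i:i + group_size] for i in range(0, len(draws), group_size)]
    means = [statistics.fmean(g) for g in groups]
    return max(means), min(means)


rng = random.Random(0)
# Hypothetical idea quality of 1,000 researchers, drawn from a standard normal.
draws = [rng.gauss(0, 1) for _ in range(1000)]

results = {}
for size in (1, 10, 100):
    best, worst = best_and_worst_group(draws, size)
    results[size] = (best, worst)
    print(f"group size {size:>3}: best group {best:+.2f}, worst group {worst:+.2f}")
```

Because the groups are nested, the best group's score can only fall and the worst group's can only rise as groups grow. Under this averaging assumption, small groups produce both the biggest breakthroughs and the worst flops, which is the trade-off the strongest-link argument relies on.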

I go into more detail in my post, https://medium.com/entrepreneur-s-handbook/why-the-most-dangerous-way-to-innovate-is-the-most-effective-way-dc9aad9b1b49

Given all the firsts from Bell Labs (https://en.wikipedia.org/wiki/Bell_Labs), e.g. the solar cell in 1954: that's 70 years in the past.

What bothers me is that this is an excellent policy proposal... so why didn't it already happen 70 years ago? It's dead obvious that the more 'modern' fields of research will take more and more resources per discovery, and a rat race of individual PIs is not a model that is going to work. I noticed this myself firsthand more than 10 years ago; I don't see how anyone could have failed to see this in the 1970s, etc.

Reminds me of Georgism: best tax proposal that nobody uses. For Georgism, there are all these vested interests - current property owners who would have the value of their land taxed to 0. For the PI model, apparently this is the gateway to tenure, making a significant discovery with whatever money you can beg for.

Whatever forces made the current inefficient model dominant are still working against you. Unless there is some larger trend - perhaps large institutions are closing because they went broke, or technological change from AI is making a new model dominant, I don't see why you would get any meaningful change now, if it didn't happen in the past.

I don't know what effect near term AI models will have on this situation, though, ironically, by amplifying the effort and lowering the cost of an individual PI's research, it is possible that AI will make the 'solo lab' viable again.

Also, obviously, AI could mean none of these other scientists will matter. Advanced AI turns this into a "lazy wait" calculation: just wait until AI is strong enough to assist in researching your field of study, saving your pennies, then get 1000+ instances to help. Theoretically the rate of discovery would become similar to Edison or early Bell Labs until all the innovations that a team of 1000+ experts working around the clock can find are found. It wouldn't matter what human research groups discover in the next 10-20 years, because anything they found would get discovered in a few months once AI is good enough.

When the Department of Energy created their research institutes, I would expect that the people driving that policy agenda expected the research institutes to work like this.

In practice, any organization like this can grow its own budget by seeking external grants. Most people who would fund an organization with a yearly budget of 20 million don't have a problem if that org seeks another 20 million dollars in external grants and even likes the fact that there's external validation.

For publicly funded research organizations, telling them not to seek external grants (and thus not to be driven by the incentives around seeking external grants) is likely the hardest policy decision.

Bell Labs or DeepMind in our time have structures that prevent their researchers from seeking external grants but many other research organizations don't have those.

How does seeking public grants change the incentives so the 70+ year old model doesn't remain?

Organizations like the Los Alamos National Laboratory, which are publicly owned and privately managed, seem to be relatively ignored in the progress studies debate.

To seek public grants, the PI has to look through the grants their research might qualify for and apply to them.

When it comes to the management layer, if there's the choice between hiring a researcher that's likely to bring in external grants and a researcher who's unlikely to do so, there's a huge incentive to hire the researcher that will bring in the grants.

Organizations like the Los Alamos National Laboratory, which are publicly owned and privately managed, seem to be relatively ignored in the progress studies debate.

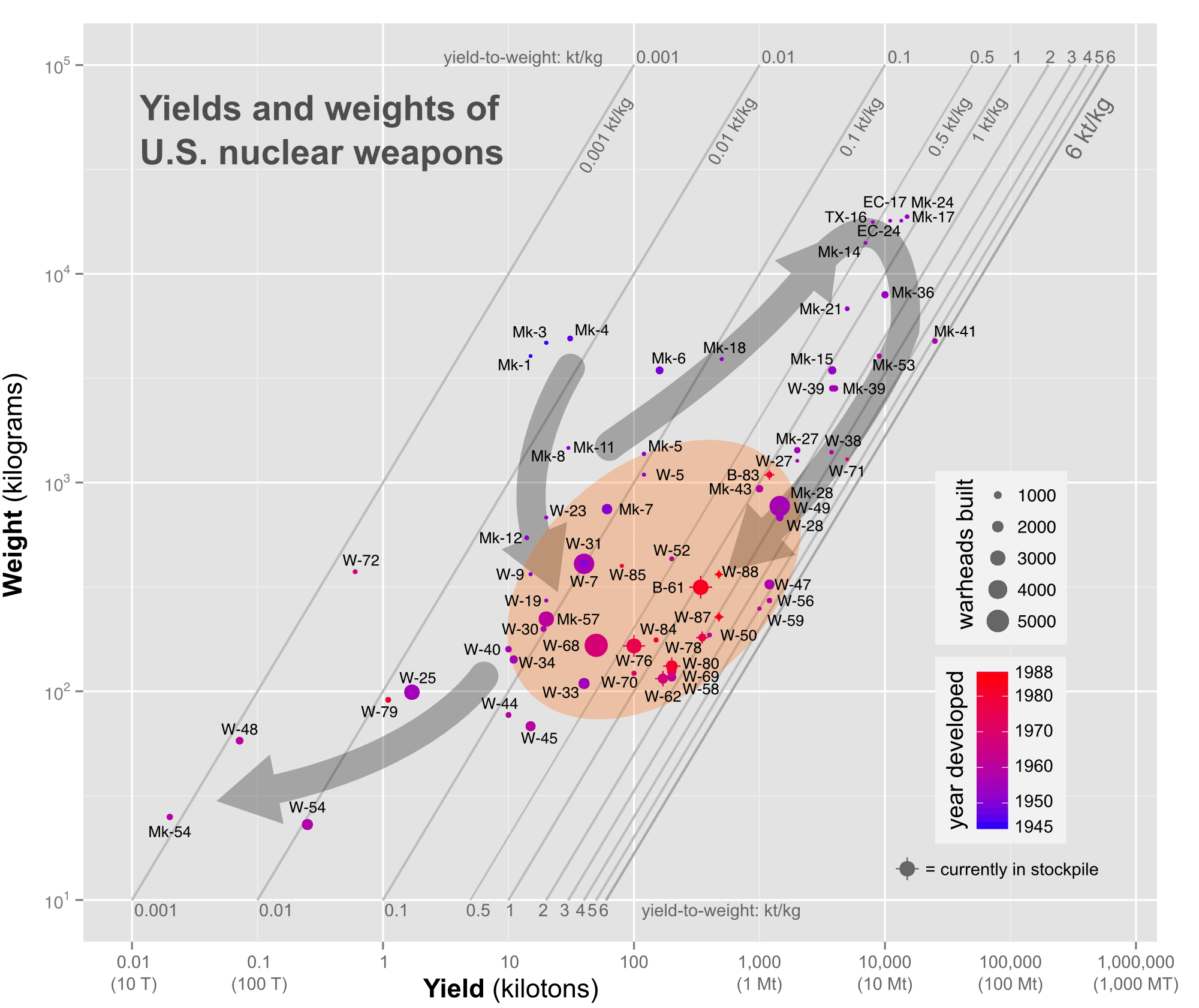

So Los Alamos's primary contribution to progress is developing better nuclear weapons, by the government and for the government. The "private management" and the fact that they are fundamentally a military weapons lab but are managed by a "civilian" organization, the Federal DOE, seem like meaningless technicalities*. Their business is death by thermonuclear fire, and they historically did their jobs pretty well...

Are you aware of any meaningful contributions they have made to overall "progress", aka advancing the general capabilities of humanity? I know of attempts to sell contract scientific services post cold war, and have some relatives who were involved in this, but I wasn't aware that this was a particularly productive source of "progress". The lab has access to things like neutron radiation sources which can be used for imaging, and other unique things that a private lab won't have access to. But it's not ASML, it's not Bell labs, it's not Deepmind, it's not CATL... The magnitude of "progress" they contribute to is probably at least 1000 times smaller than the 4 I mentioned.**

Note also that progress requires sharing of innovations, so others can build on them, or at least sharing of the product of the innovation (e.g., OAI letting anyone use GPT-4, Intel letting anyone buy an x86 CPU with secret optimizations). I don't think Los Alamos shares anything, especially the thousands of small innovations that were needed to make better nuclear weapons.

*similar argument for Pantex, which is fundamentally a military facility for servicing and assembling nuclear warheads, DOE ownership notwithstanding.

** Whether extremely efficient nuclear weapons are progress at all is debatable. But check out the below, borrowed from https://www.projectrho.com/public_html/rocket/spacegunconvent.php . This is undeniably "progress" in this domain.

Their business is death by thermonuclear fire, and they historically did their jobs pretty well...

While that might have been true in the beginning, currently they list their research areas as Applied Energy Programs, Civilian Nuclear Program, and Office of Science.

Their Office of Science does basic research like developing a Quantum Light Source.

But it's not ASML, it's not Bell labs, it's not Deepmind, it's not CATL... The magnitude of "progress" they contribute to is probably at least 1000 times smaller than the 4 I mentioned.**

They don't seem to be as productive as the others, but in this context, the interesting question is why they aren't. The researchers at the Los Alamos National Laboratory (and other similar DOE laboratories) don't have to manage a bunch of grad students but they still seem to produce less research output.

I'm not certain, but I would expect that in the beginning the researchers at the Los Alamos National Laboratory were mainly funded out of the Laboratory's own budget.

They don't seem to be as productive as the others, but in this context, the interesting question is why they aren't. The researchers at the Los Alamos National Laboratory (and other similar DOE laboratories) don't have to manage a bunch of grad students but they still seem to produce less research output.

I picked the below because I think they are large organizations, doing research that has tangible benefits to humans at a wide scale.

ASML: they aren't a general lab but are doing applied research to advance chip fabrication tech. They don't get paid if their tools don't work or are not a major advance. There are competitors who will take their business if they fall too far behind too long.

Bell Labs: you know this one

Deepmind: Google's private management thought they were being insufficiently productive, and fired 40 percent of the staff in October 2022, 1 month before ChatGPT. Since then they have become central to Google's core survival strategy, presumably have many more resources, and are under pressure to deliver models that are competitive. This competition and large-scale effort makes them similar to the other successes.

CATL: a massive company that does fairly narrow-scope research to fine-tune the production process for battery cells, and has developed a sodium battery. Under heavy competition like the others. I mentioned this one because their research isn't another graphene: it has immediate real value for humans, the cost of battery storage affects end users at a large scale, and obviously once it is cheap enough most homes will have a storage battery between the meter and electrical panel with solar input, and most cars and trucks will primarily use batteries.

Each success case is massive, with a lot of equipment, and has a clear goal they are optimizing for, where the organization itself supports them.

This is what I have noticed with the academic research I have seen: basically, a lack of funds and inefficient processes designed primarily to protect the funds mean people can't get prompt access to tools and materials. Each research avenue doesn't have many people working on it; for example, the large well-known lab I personally saw is a bunch of separate labs that are mostly not collaborating.

Versus, say, a skunkworks or a large private effort that is aligned with the survival of the company. There is specialized equipment and specialized skilled staff available in massive quantities in special dedicated labs. I saw this at 2 chip company employers. Competitive pressure means that the goal is to achieve results today...or tonight...

I wrote all the above to say Los Alamos likely doesn't have the organizational structure to contribute efficiently to private research, and it isn't focused on a single area, which seems to be a common element above. Each success above has many billions of dollars and it's all going to one specialized domain.

Google's private management thought they were being insufficiently productive, and fired 40 percent of the staff in October 2022

No, they cut staff costs by 40%. Not the same thing at all. (You would have noticed a lot more ex-DMers if they had fired half the place!)

They shut down the Edmonton office with Sutton*, so they clearly shed some people, but it's not clear what percentage by headcount; because of how compensation works, a lot of that 40% could reflect, say, high stock grants and high share prices in the COVID tech bubble followed by slashing offered bonuses to return to baseline. The DM budget was unusually high for a while, I think, and interpreting the official public numbers is hard because of issues like the purchase of their extremely expensive London HQ.

Interpretation is also ambiguous because this was near-simultaneous with the merger with Google Brain; the general view is that GB was the one that lost out in the merger and was the one being dissolved due to insufficient productivity compared to DM. (And we do see a lot of ex-GBers now.)

* which is probably relevant to why Sutton is now partnering with Carmack's Keen Technologies AGI startup.

Interpretation is also ambiguous because this was near-simultaneous with the merger with Google Brain; the general view is that GB was the one that lost out in the merger and was the one being dissolved due to insufficient productivity compared to DM. (And we do see a lot of ex-GBers now.)

Thanks for the details, to me the issue is that a large budget slash like this sounds pretty detrimental in EV. You could get this kind of savings during the Manhattan project if you decided to cut 2 of the 3 enrichment methods for example.

Sure we know in hindsight that all 3 methods worked, but the expected value of "bomb before the end of the war" drops a lot because everything is now riding on whichever method you kept.
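The expected-value point can be put as simple arithmetic. Assuming, purely for illustration, that each enrichment method had an independent 50% chance of working in time (both the independence and the 50% figure are hypothetical), keeping all three gives a `1 - 0.5**3 = 0.875` chance that at least one works, versus 0.5 after cutting to one.

```python
from math import prod


def p_any_success(probs):
    """Probability that at least one of several independent approaches works."""
    return 1 - prod(1 - p for p in probs)


print(p_any_success([0.5, 0.5, 0.5]))  # all three methods kept: 0.875
print(p_any_success([0.5]))            # budget cut to a single method: 0.5
```

If the approaches are correlated (e.g., they share a bottleneck), the benefit of keeping several shrinks, so this is an upper bound under the stated assumptions.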

I would assume now Deepmind is going to be focused on massive transformers and has much less to spare on any other routes.

This also, like you said, sends out many 'B team' members who still know almost everything the people not fired know, spreading the knowledge around to all the competition. (Imagine if the Manhattan project staff who were fired were able to join the Axis powers. They would bring with them strategically relevant info, even if none of them were the most talented physicists.)

It depends on what that '40% staff cost' means, really. Was it just accounting shenanigans related to RSUs and GOOG stock fluctuations? Then it means pretty much nothing of interest to us here at LW. Did it come from shedding a few superstars with multi-million-dollar compensation packages? Hard to say, depends on how much you think superstars matter at this point compared to researchers. Could be a very big deal: I remain convinced that search for LLMs may be the Next Big Thing and everyone who is reinventing RL from scratch for LLMs is botching the job, and so a few superstar researchers leaving DM could be critical. (But maybe you think the opposite because it's now all about big pressgangs of researchers whipping a model into shape.) Did it come from shedding a lot of lower-level people who are obscure and unheard of? Inverse of the former.

If the cut is inflated by Edmonton people getting the axe, then I personally would consider this cut to be irrelevant: I have been largely unimpressed by their work, and I think Sutton's 'Edmonton plan' or whatever he was calling it is not an interesting line of work compared to more mainstream RL scaling approaches. (In general, I think Sutton has completely missed the boat on deep learning & especially DL scaling. I realize the irony of saying this about the author of "The Bitter Lesson", but if you look at his actual work, he's committed to basically antiquated model-free tweaks and small models, rather than the future of large-scale model-based DRL - like all of his stuff on continual learning is a waste of time, when scaling just plain solves that!)

Are you aware of any meaningful contributions they have made to overall "progress", aka advancing the general capabilities of humanity?

Not sure if this fits the bill, but off the top of my head I thought of the HIV sequence database which has been around since 1988.

Aren’t existing research orgs already like this to some extent, where the organisation provides funding to its individual researchers in the form of a salary and they can form and run projects as they see fit? Or is this a naive understanding of how most research labs work?

Yes, but those researchers are typically grad students. To become a professor, get tenure, get your own grants, etc., you need to go run your own lab. At least that is my understanding of the system.

Yeah, I wondered about the same thing. I have no idea how research works, but naively it seems to me that if you want to implement "finance groups rather than individuals" all you need is to coordinate a group of individuals and make them sign a contract that whatever money one of them gets from a grant will be distributed among the rest equally (or maybe 50% to the person who got the money, and the remaining 50% equally). In other words, group financing can be already implemented on top of individual financing.

And the obvious argument against this is that the people who bring the most money would leave the system, because they would believe they could do better individually? Unless the system is set up so that the imbalances are not too great, and being a member of the system brings benefits that outweigh the net financial loss. For example, the system could provide non-scientists (or not-top-scientists) that would handle the bureaucracy, so that the scientists have more time for their research. It would be as if you pay a secretary, only it is one secretary shared by a number of scientists.
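The pooling contract can be sketched to see why that objection bites. This implements one reading of the proposal, the "50% to the person who got the money" variant: each winner keeps `keep_fraction` of their grant and the rest is split equally among the other members. The function name, member names, and amounts are hypothetical, and the sketch ignores the restrictions funders place on redistributing grant money.

```python
def pool_grants(grants, keep_fraction=0.5):
    """Redistribute grant money within a group (hypothetical scheme).

    grants: dict mapping member -> grant money that member won.
    Each winner keeps keep_fraction; the remainder is split equally
    among the other members. Returns dict member -> money received.
    """
    payout = {member: 0.0 for member in grants}
    for winner, amount in grants.items():
        others = [m for m in grants if m != winner]
        if not others:  # a group of one keeps everything
            payout[winner] += amount
            continue
        payout[winner] += keep_fraction * amount
        share = (1 - keep_fraction) * amount / len(others)
        for member in others:
            payout[member] += share
    return payout


# Hypothetical group: one prolific grant-winner and two others.
result = pool_grants({"A": 900_000, "B": 100_000, "C": 0})
print(result)  # {'A': 475000.0, 'B': 275000.0, 'C': 250000.0}
```

Member A brings in 90% of the money but ends up with less than half of it, which is the incentive to leave that the comment anticipates; any shared benefits, like a pooled administrator, would have to be worth more than that gap.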

But maybe I am just reinventing a university here?

I really don't think a group of, say, university professors could join in such a contract. For one, I'm not sure their universities would let them, especially if they weren't all at the same university. For another, the granting organizations (e.g., NIH) put a lot of restrictions on the grant money. You can't redistribute it to other labs.

Also, the grants are still going to be small ones to fund a single lab, not large ones that could fund hundreds of researchers. If everyone still has to seek grants you haven't really solved the problem, even if they are spreading risk/reward somehow.

Thanks for posting this, I agree with the overall take that a block model is a superior alternative. I think some people in the Bay Area have idly looked into this for AIS funding; I was considering doing this myself but unfortunately had other obligations.

This change would not get rid of the need for researchers to have a non-research skillset to secure funding. It would just switch the required non-research skillset from 'wrangling money out of grant committees' to 'wrangling positions out of administrators'. Your mileage may vary as to which of those two is less dysfunctional.

Could you expand on that? I’m French, and even though I’m not involved in research I notice that: 1. French researchers are very underfunded 2. Somehow they’re still pretty good at building a lab with duct tape and string. But all the good metascience articles are written in English and focus on the US, so I have no clue how research funding in France has evolved over time, what’s been getting worse or better, etc., and I’d like to know more.

That also was, naturally, the model in the Soviet Union, with orgs called "scientific research institutes". https://www.jstor.org/stable/284836

I still like this post. I worked some of this material into “The Progress Agenda” (the last essay in the series The Techno-Humanist Manifesto), and updated it a bit with some more quotes and citations.

Bell Labs, Xerox PARC, etc. were, AFAIK, mostly privately funded research labs that existed for decades and churned out patents that may as well have been money printers. When AT&T (Bell Labs) was broken up, that research all but started the modern telecom and tech industry, which is now something like 20%+ of the stock market. If you attribute even a tiny fraction of that to Bell Labs, it's enough to fund another such lab 1000 times over.

The missing piece arguably is executive teams with a 25-year vision instead of a 25-week vision, AND the institutional support to see it through; cost cutting is in fashion with investors too. Private equity is in theory well positioned to repeat this elsewhere, but for reasons I don't entirely understand has become too short-sighted and/or has significantly shortened horizons on returns. IBM, Qualcomm, TSMC, ASML, and Intel all seem to have research operations of that same near-legendary caliber, mostly privately funded (albeit treated as a national treasure of strategic importance); what they have in common, of course, is that they're all tech. Semiconductor fabrication is extremely research-intensive, and world-class R&D operations are table stakes just to survive to the next process node.

Maybe a good followup question is why hasn't this model spread outside of semiconductors and tech? Is a functional monopoly a requirement for the model to work? (ASML has a functional monopoly on leading edge photo-lithography machines that power modern semiconductor fabs). Do these labs ever start independently without a clear lineage to 100 billion+ dollar govt research initiatives? Electronics and tech is probably many trillions in US govt funding since WWII once you include military R&D and contracts.

This moves the funding decision to a hiring decision, which may be better, but when does an investigator get fired? This doesn't seem deeply different to me. The main difference seems to be extending the duration of funding significantly.

Investigators get fired when they aren't being productive. This does happen. The difference in the block model is that whether someone is being productive is determined by their manager, with input from their peers.


New Zealand government research sort of does that. In the 90s, the public research was reorganised into institutes: private companies, but owned by the government (the government of the day appoints the board members as positions fall vacant). Initially, all government funding of these institutes was contestable, vaguely like the PI model. The cost and inefficiency of this system led to it being abandoned. Instead, the institutes get "Core" funding to support their core area (e.g. earth science in my case). Essentially the institutes propose very broad-brush programmes (so peer-driven) about how this will be spent. External panels (researchers from similar institutions overseas mostly, or possibly unis) critique it and a government bureaucracy evaluates it against performance and alignment with government goals. Essentially it’s a negotiation process that sets a contract of around 5 years (from memory), which gets tweaked as required. A similar model provides research funding (as opposed to teaching funding) to the universities. There are also several contestable funds, open to the whole research community.

Does it work? I doubt any system is perfect, and this one has a number of downsides. It is certainly a much more efficient system (fewer scientist-hours spent chasing money, and less bureaucracy evaluating bids) than what preceded it. There are still advantages to the individual scientist in pursuing contestable funding, though most bids will involve teams of scientists, often across multiple institutes/universities. Core funding is tied to agreed programmes, and the programme manager controls it. You can't just do your own thing on Core funding. Winning contestable funding means the team that won it is in control. Win enough funding for your pet project and you can thumb your nose at institute management.

The downsides as I see them: the share of government funding going to each institute is very static. It is hard to convince the government that your discipline is now hotter and more important than the discipline of another institute. The opposite was true of the contestable model - uncertainty of future funding made investment in research infrastructure (e.g. vessels) uncertain. Scrapping between institutes over money turned into scrapping within institutes over money. Perhaps this is actually a major upside - at least the scrappers know what they are talking about, unlike the bureaucrats. The accountability model is everyone's bugbear. It's public money, so asking for accountability is obviously reasonable, but it generally feels like time-consuming paperwork for clueless bureaucrats. There must be a better way.

It should be noted that the institutes' total funding thus consists of Core funding and contestable funding, but also commercial research contracts. Depending on the institute, this share can be pretty high. Ours has been up to 50% commercial. Others are even higher.

DARPA projects are often moderately large, say about a dozen researchers on one grant.

Here, the PI is basically full-time writing grants/managing the budget/managing people, and their minions are doing the actual research. It can work.

A stupid bug in the system at my current institution. So, we have full-time research staff and teaching staff. Teaching staff have permanent contracts. Research staff are only ever employed until the end of whatever research grant they are currently employed on. The bug: research staff cannot be PIs. Because, well, the grant will end and the researcher will cease to be employed, but there might still be government paperwork to be done by the PI regarding the grant, which, well, won't happen if you've just terminated the PI's contract because the grant ended. But... hang on a minute... didn't we just say that being a PI is a full-time management position? And you're asking that guy to teach classes too?

[Amusing factoid: I once had an EU research contract with a strict condition that we absolutely not do any research. Background: the original grant had ended, but we hadn't yet had the wrap-up meeting where you present the results to the evaluators. Somehow, the travel expenses for that meeting needed a grant extension to cover them, but, say the sponsors, understand that this additional money is for travel expenses only, and don't you guys dare charge any actual research costs to this contract.]

In case it isn't obvious: a consequence of the above institutional rule is that your full-time research staff are not, and by rule cannot be, principal investigators.

Me: "I need to get some business cards printed, and the online form here says I can put whatever I like in the title field. I think I'm going with 'senior research fellow'."

Member of academic staff, and my long-term collaborator: "That would have the advantage that it's actually true. Someone could probably do more damage by writing 'head of finance' in there..."

When Galileo wanted to study the heavens through his telescope, he got money from those legendary patrons of the Renaissance, the Medici. To win their favor, when he discovered the moons of Jupiter, he named them the Medicean Stars. Other scientists and inventors offered flashy gifts, such as Cornelis Drebbel’s perpetuum mobile (a sort of astronomical clock) given to King James, who made Drebbel court engineer in return. The other way to do research in those days was to be independently wealthy: the Victorian model of the gentleman scientist.

Eventually we decided that requiring researchers to seek wealthy patrons or have independent means was not the best way to do science. Today, researchers, in their role as “principal investigators” (PIs), apply to science funders for grants. In the US, the NIH spends nearly $48B annually, and the NSF over $11B, mainly to give such grants. Compared to the Renaissance, it is a rational, objective, democratic system.

However, I have come to believe that this principal investigator model is deeply broken and needs to be replaced.

That was the thought at the top of my mind coming out of a working group on “Accelerating Science” hosted by the Santa Fe Institute a few months ago. (The thoughts in this essay were inspired by many of the participants, but I take responsibility for any opinions expressed here. My thinking on this was also influenced by a talk given by James Phillips at a previous metascience conference. My own talk at the workshop was written up here earlier.)

What should we do instead of the PI model? Funding should go in a single block to a relatively large research organization of, say, hundreds of scientists. This is how some of the most effective, transformative labs in the world have been organized, from Bell Labs to the MRC Laboratory of Molecular Biology. It has been referred to as the “block funding” model.

Here’s why I think this model works:

Specialization

A principal investigator has to play multiple roles. They have to do science (researcher), recruit and manage grad students or research assistants (manager), maintain a lab budget (administrator), and write grants (fundraiser). These are different roles, and not everyone has the skill or inclination to do them all. The university model adds teaching, a fifth role.

The block organization allows for specialization: researchers can focus on research, managers can manage, and one leader can fundraise for the whole org. This allows each person to do what they are best at and enjoy, and it frees researchers from spending 30–50% of their time writing grants, as is typical for PIs.

I suspect it also creates more of an opportunity for leadership in research. Research leadership involves having a vision for an area to explore that will be highly fruitful—semiconductors, molecular biology, etc.—and then recruiting talent and resources to the cause. This seems more effective when done at the block level.

Side note: the distinction I’m talking about here, between block funding and PI funding, doesn’t say anything about where the funding comes from or how those decisions are made. But today, researchers are often asked to serve on committees that evaluate grants. Making funding decisions is yet another role we add to researchers, and one that also deserves to be its own specialty (especially since having researchers evaluate their own competitors sets up an inherent conflict of interest).

Research freedom and time horizons

There’s nothing inherent to the PI grant model that dictates the size of the grant, the scope of activities it covers, the length of time it is for, or the degree of freedom it allows the researcher. But in practice, PI funding has evolved toward small grants for incremental work, with little freedom for the researcher to change their plans or strategy.

I suspect the block funding model naturally lends itself to larger grants for longer time periods that are more at the vision level. When you’re funding a whole department, you’re funding a mission and placing trust in the leadership of the organization.

Also, breakthroughs are unpredictable, but the more people you have working on things, the more regularly they will happen. A lab can justify itself more easily with regular achievements. In this way one person’s accomplishment provides cover to those who are still toiling away.

Who evaluates researchers

In the PI model, grant applications are evaluated by funding agencies: in effect, each researcher is evaluated by the external world. In the block model, a researcher is evaluated by their manager and their peers. James Phillips illustrates with a diagram:

A manager who knows the researcher well, who has been following their work closely, and who talks to them about it regularly, can simply make better judgments about who is doing good work and whose programs have potential. (And again, developing good judgment about researchers and their potential is a specialized role—see point 1).

Further, when a researcher is evaluated impersonally by an external agency, they need to write up their work formally, which adds overhead to the process. They need to explain and justify their plans, which leads to more conservative proposals. They need to show outcomes regularly, which leads to more incremental work. And funding will disproportionately flow to people who are good at fundraising (which, again, deserves to be a specialized role).

To get scientific breakthroughs, we want to allow talented, dedicated people to pursue hunches for long periods of time. This means we need to trust the process, long before we see the outcome. Several participants in the workshop echoed this theme of trust. Trust like that is much stronger when based on a working relationship, rather than simply on a grant proposal.

If the block model is a superior alternative, how do we move towards it? I don’t have a blueprint. I doubt that existing labs will transform themselves into this model. But funders could signal their interest in funding labs like this, and new labs could be created or proposed on this model and seek such funding. I think the first step is spreading this idea.

PS (Jan 31): After I published this, Michael Nielsen (who has thought about and researched this much more than I have) argued that I have oversimplified and made the case too starkly:

Read his whole thread. Maybe it would be better to say that the PI model is overused today, and block funding is underused.